Validating Quantum Chemistry Results: A Practical Framework for Benchmarking Against Classical Methods

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive framework for validating quantum chemistry computations against established classical methods. Covering foundational principles, current methodological approaches, and optimization strategies, it addresses the critical challenge of verification and validation (V&V) in an evolving computational landscape. The content synthesizes recent insights on overcoming barren plateaus, leveraging hybrid quantum-classical algorithms, and establishing robust benchmarking protocols to assess the practical utility and accuracy of quantum simulations for real-world chemical and biomedical problems.

The Critical Need for Validation in Quantum Chemistry

Defining Verification and Validation (V&V) for Computational Chemistry

For researchers, scientists, and drug development professionals, computational chemistry is an indispensable tool for in-silico discovery and analysis [1]. The credibility of these simulations is paramount, particularly with the emergence of novel computational paradigms like quantum computing. Establishing this credibility relies on two critical, distinct processes: verification and validation (V&V) [2].

Verification is the process of determining that a computational model is implemented correctly, ensuring it accurately represents the conceptual mathematical model and its solution. In essence, it asks, "Are we solving the equations right?" [2]. In contrast, validation is the process of assessing a computational model's accuracy by comparing its predictions to experimental data, the recognized "gold standard." It asks, "Are we solving the right equations?" and determines if the model correctly represents the underlying physical reality [3] [2].

This guide provides an objective comparison of classical and quantum computational methods through the lens of V&V, framing it within the broader thesis of establishing confidence in computational chemistry results.

Core Concepts of V&V in Computational Chemistry

Defining Verification and Validation

In computational chemistry, the distinction between verification and validation is foundational. The table below summarizes their key differences.

Table 1: Fundamental Differences Between Verification and Validation

| Aspect | Verification | Validation |
|---|---|---|
| Core Question | "Did we build the model correctly?" [4] [5] | "Did we build the correct model?" [4] [5] |
| Primary Focus | Checking the programming, mathematics, and numerical implementation of the conceptual model [3] [2]. | Assessing the model's agreement with physical reality and the underlying science [3] [2]. |
| Basis of Comparison | Comparison to known analytical solutions or benchmark problems [2]. | Comparison to high-quality experimental data [2]. |
| Primary Goal | Ensure the model is error-free in its implementation [4]. | Ensure the model is an accurate representation of the real-world process [4]. |
| Typical Methods | Code reviews, grid convergence studies, checking conservation laws [3] [2]. | Systematic comparison of simulation outputs with experimental measurements [6] [2]. |
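
As a concrete illustration of verification against a known analytical solution, the sketch below runs a small grid convergence study. It integrates the radial probability density of the hydrogen 1s orbital (in atomic units), whose exact integral is 1, with a trapezoidal rule on successively finer grids. The function names, grid sizes, and cutoff radius are illustrative choices, not part of any particular code base.

```python
import math

def radial_density_1s(r):
    """Radial probability density 4*pi*r^2*|psi_1s|^2 of hydrogen (atomic units)."""
    return 4.0 * r * r * math.exp(-2.0 * r)

def trapezoid(f, a, b, n):
    """Composite trapezoidal rule with n equal intervals on [a, b]."""
    h = (b - a) / n
    total = 0.5 * (f(a) + f(b))
    for i in range(1, n):
        total += f(a + i * h)
    return total * h

def grid_convergence(grid_sizes, r_max=30.0, exact=1.0):
    """Absolute error of the numerical norm for each grid size."""
    return [abs(trapezoid(radial_density_1s, 0.0, r_max, n) - exact)
            for n in grid_sizes]

grid_sizes = [100, 200, 400, 800]
errors = grid_convergence(grid_sizes)
for n, err in zip(grid_sizes, errors):
    print(f"n={n:4d}  |error|={err:.2e}")
```

If the error does not shrink as the grid is refined, the discrepancy points to an implementation problem rather than to a deficiency of the physical model, which is exactly the question verification is meant to answer.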

The V&V Workflow

The process of verification and validation typically follows a logical sequence, beginning with the conceptual model and culminating in a validated computational tool. Verification comes first and confirms that the implementation solves the model's equations correctly; validation then compares the verified model's predictions with experimental data.

V&V Across Computational Methodologies

The requirements and challenges for V&V vary significantly across different computational chemistry methods, from well-established classical algorithms to emerging quantum approaches.

Classical Computational Chemistry Methods

Classical methods form the backbone of contemporary computational chemistry. Each method has distinct characteristics that influence how V&V is performed.

Table 2: Common Classical Computational Chemistry Methods and V&V Considerations

| Method | Typical Time Complexity | Key Characteristics | V&V Focus |
|---|---|---|---|
| Density Functional Theory (DFT) | O(N³) to O(N⁴) [1] | Uses electronic density; requires approximation of exchange-correlation functional [1]. | Validation is critical due to functional approximations; verification of numerical integration and basis sets [7]. |
| Hartree-Fock (HF) | O(N⁴) [1] | Lacks electron correlation; often a starting point for more accurate methods [1]. | Verification of integral computations; validation shows limitations for correlated systems. |
| Møller-Plesset 2nd Order (MP2) | O(N⁵) [1] | Includes electron correlation via perturbation theory [1]. | Verification of post-Hartree-Fock implementation; validation against experimental correlation energies. |
| Coupled Cluster (CCSD, CCSD(T)) | O(N⁶) to O(N⁷) [1] | High-accuracy method, considered the "gold standard" for many problems [1]. | Verification of iterative solver convergence; rigorous validation against benchmark experimental data. |
| Full Configuration Interaction (FCI) | O*(4^N) [1] | Theoretically exact solution within a given basis set, but computationally prohibitive [1]. | Used as a numerical benchmark for verifying other quantum chemistry codes for small systems. |
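
To make the scaling column concrete, the following sketch computes how much more expensive each method becomes when the system size N grows. The exponents are taken from the table above (with DFT at its lower bound), prefactors are ignored, and the function names are illustrative.

```python
# Scaling exponents from the table above; DFT uses the lower bound O(N^3).
SCALING_EXPONENTS = {"DFT": 3, "HF": 4, "MP2": 5, "CCSD": 6, "CCSD(T)": 7}

def polynomial_cost_ratio(exponent, size_factor):
    """Cost growth of an O(N^exponent) method when N grows by size_factor."""
    return size_factor ** exponent

def fci_cost_ratio(n_small, n_large):
    """Cost growth for O*(4^N) scaling, ignoring polynomial prefactors."""
    return 4 ** (n_large - n_small)

for method, exponent in SCALING_EXPONENTS.items():
    ratio = polynomial_cost_ratio(exponent, 2)
    print(f"{method:8s} doubling N multiplies cost by {ratio}")
print(f"FCI      going from N=10 to N=20 multiplies cost by {fci_cost_ratio(10, 20)}")
```

Doubling N costs a factor of 128 for CCSD(T) but roughly a million for FCI over the same step from 10 to 20, which is why FCI serves as a verification benchmark only for very small systems.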

Quantum Computational Chemistry Methods

Quantum computers leverage quantum mechanics to simulate chemical systems, offering potential exponential speedups for certain problems [8]. The V&V of these emerging methods presents unique challenges.

Table 3: Emerging Quantum Computational Chemistry Methods

| Method | Key Principle | V&V Challenges |
|---|---|---|
| Quantum Phase Estimation (QPE) | Uses quantum Fourier transform to obtain energy eigenvalues; can achieve high precision [1] [8]. | Verification requires checking quantum circuit compilation and error mitigation. Validation is needed to confirm the prepared state is the true ground state [8]. |
| Variational Quantum Eigensolver (VQE) | Hybrid quantum-classical algorithm; uses a variational principle to find ground state energy [8]. | Verification involves ensuring the classical optimizer and quantum circuit work correctly. Validation is complex due to noise in current quantum hardware and approximations in the ansatz [8]. |
| Qubitization | A technique for encoding Hamiltonian dynamics into quantum circuits more efficiently [8]. | Verification focuses on the correctness of the Hamiltonian embedding. Validation requires comparison to classical results for known systems. |

Comparative Analysis: Classical vs. Quantum

An objective comparison between classical and quantum computational methods must consider not only theoretical scaling but also current practical limitations and the role of V&V in establishing credibility.

Table 4: Objective Comparison of Classical vs. Quantum Computational Chemistry

| Criterion | High-Accuracy Classical (e.g., CCSD(T), FCI) | Noisy Intermediate-Scale Quantum (NISQ) Algorithms (e.g., VQE) | Fault-Tolerant Quantum (e.g., QPE) |
|---|---|---|---|
| Theoretical Scaling | O(N⁶) to O*(4^N) [1] | Polynomial in N and M, but number of measurements scales as O(M⁴/ε²) to O(M⁶/ε²) [8] | O(N²/ε) to O(N⁵/ε) for plane-wave basis sets [1] [8] |
| Current System Size Limit | Tens to hundreds of atoms (basis functions), depending on method and resources [1]. | Small molecules (few atoms) due to limited qubit counts and noise [8]. | Not yet realized; requires large-scale fault-tolerant computers. |
| Key V&V Challenge | Managing computational cost for large systems; approximations in density functionals [1] [7]. | Distinguishing algorithmic results from hardware noise; limited qubit fidelity and connectivity [8]. | Preparing correct initial state; managing coherent evolution time and error correction overhead [8]. |
| Primary Validation Target | Experimental thermochemical data, reaction rates, spectroscopic constants [1]. | Agreement with classical high-accuracy methods (e.g., FCI) for small, tractable systems [8]. | Surpassing the accuracy of CCSD(T), and delivering FCI-quality results for systems where FCI is intractable [1]. |
| Projected Advantage Timeline | N/A (Current standard) | N/A (Currently in research/development) | Could surpass highly accurate classical methods for small molecules in the next decade [1]. |
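
The 1/ε² factor in the NISQ measurement scaling comes from sampling statistics: the standard error of an averaged measurement falls as the square root of the number of shots. The sketch below estimates the shots needed for a target precision ε. The per-shot variance is a hypothetical input, and the constant for chemical accuracy (about 1 kcal/mol, or roughly 1.6 millihartree) is added here for illustration.

```python
import math

CHEMICAL_ACCURACY_HARTREE = 1.6e-3  # roughly 1 kcal/mol

def shots_required(variance, epsilon):
    """Shots needed so the standard error sqrt(variance / shots) is at most epsilon."""
    if variance < 0 or epsilon <= 0:
        raise ValueError("variance must be >= 0 and epsilon must be > 0")
    return math.ceil(variance / epsilon ** 2)

# Hypothetical per-shot variance of 1.0 Hartree^2, chosen only for illustration.
for eps in (1e-2, CHEMICAL_ACCURACY_HARTREE, 1e-4):
    print(f"epsilon={eps:.1e} Ha -> about {shots_required(1.0, eps):,} shots")
```

Halving the target error quadruples the shot count. That is why validating NISQ results to chemical accuracy is expensive even for small molecules.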

Experimental Protocols for V&V

A Standard Verification Protocol for Electronic Structure Codes

- Define Benchmark Systems: Select a set of small, well-understood molecules (e.g., H₂O, N₂, CO) where highly accurate or exact results are obtainable.

- Establish Reference Values: For the benchmark systems, compute energies and properties using a trusted, independently developed code or, for very small systems, FCI calculations.

- Perform Convergence Testing: Systematically vary numerical parameters (e.g., basis set size, grid density for integrals, SCF convergence threshold) to ensure the results converge to a stable value.

- Check Physical Constraints: Verify that the computed results obey physical laws, such as the virial theorem, and display the correct molecular symmetry.

- Cross-Code Comparison: Execute calculations on the benchmark systems using multiple electronic structure codes to identify discrepancies arising from implementation differences [7].
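
The convergence-testing and cross-code steps above can be sketched as two small checks. The code names and energies below are synthetic placeholders rather than computed results, and the tolerances are arbitrary illustrative choices.

```python
def is_converged(values, tol):
    """True if the last two values of a refinement sequence differ by less than tol."""
    return len(values) >= 2 and abs(values[-1] - values[-2]) < tol

def cross_code_discrepancies(results_by_code, tol):
    """Return (code_a, code_b, difference) for every pair that disagrees by more than tol."""
    names = sorted(results_by_code)
    flagged = []
    for i, a in enumerate(names):
        for b in names[i + 1:]:
            diff = abs(results_by_code[a] - results_by_code[b])
            if diff > tol:
                flagged.append((a, b, diff))
    return flagged

# Synthetic placeholder energies for successively larger basis sets (not real data).
energies_by_basis = [-1.00, -1.10, -1.1300, -1.1300004]
print("converged:", is_converged(energies_by_basis, tol=1e-6))

# Synthetic placeholder results from three hypothetical codes (not real data).
results = {"code_a": -1.1300004, "code_b": -1.1300005, "code_c": -1.1290000}
for a, b, diff in cross_code_discrepancies(results, tol=1e-5):
    print(f"{a} vs {b}: differ by {diff:.2e}")
```

A flagged pair does not say which code is wrong. It only marks where implementation details such as integration grids or convergence thresholds deserve a closer look.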

A Standard Validation Protocol for a Novel Quantum Chemistry Method

- Select a Validation Dataset: Choose a curated, publicly available dataset of reference molecular properties, either a compilation of high-quality experimental measurements or a benchmark set such as the GMTKN55 database for thermochemistry, whose reference values come largely from high-level theoretical calculations. This dataset should contain molecules not used in the method's parameterization.

- Compute Properties: Use the novel computational method to predict the target properties (e.g., atomization energies, reaction barrier heights) for all molecules in the validation dataset.

- Statistical Comparison: Calculate statistical measures of accuracy, such as the mean absolute error (MAE) and root-mean-square error (RMSE), between the computed results and the experimental reference data.

- Benchmark Against Established Methods: Compare the statistical performance of the novel method against well-established methods (e.g., DFT with various functionals, MP2, CCSD(T)) on the same dataset.

- Report and Analyze Discrepancies: Document all results and provide chemical insight into cases where the novel method shows significant deviation from experiment, analyzing whether the error is systematic [2].
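
The statistical comparison and benchmarking steps above reduce to a few lines of arithmetic. The sketch below computes MAE and RMSE and ranks methods by MAE. The method names and numbers are synthetic placeholders, not results from any dataset.

```python
import math

def mean_absolute_error(predicted, reference):
    """Average of |predicted - reference| over all entries."""
    return sum(abs(p - r) for p, r in zip(predicted, reference)) / len(reference)

def root_mean_square_error(predicted, reference):
    """Square root of the mean squared deviation; penalizes outliers more than MAE."""
    return math.sqrt(
        sum((p - r) ** 2 for p, r in zip(predicted, reference)) / len(reference)
    )

def rank_methods(predictions_by_method, reference):
    """Return (method, MAE, RMSE) tuples sorted from lowest to highest MAE."""
    rows = [
        (name, mean_absolute_error(vals, reference), root_mean_square_error(vals, reference))
        for name, vals in predictions_by_method.items()
    ]
    return sorted(rows, key=lambda row: row[1])

# Synthetic placeholder values (not real data), all in the same energy unit.
reference = [10.0, 20.0, 30.0]
predictions = {
    "method_a": [10.5, 19.5, 30.5],
    "method_b": [12.0, 20.0, 29.0],
}
for name, mae, rmse in rank_methods(predictions, reference):
    print(f"{name}: MAE={mae:.3f}  RMSE={rmse:.3f}")
```

Reporting both metrics is useful because an RMSE much larger than the MAE signals that a few outliers, rather than a uniform bias, dominate the error.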

The table below details essential "research reagents" (the core computational tools and concepts) required for conducting V&V in computational chemistry.

Table 5: Essential Research Reagents for V&V in Computational Chemistry

| Item | Function in V&V |
|---|---|
| Benchmark Molecular Datasets | Curated collections of molecular structures and associated high-quality experimental data (e.g., energies, spectra) used as a ground truth for validation [7]. |
| Reference-Quality Classical Codes | Established, well-verified software (e.g., for FCI, CCSD(T)) that provides reliable benchmark results for verifying new implementations or quantum algorithms [7]. |
| Electronic Structure Code | Software implementing the computational method (e.g., DFT, Coupled Cluster) whose results are being verified and validated. |
| Error Metrics | Quantitative measures (e.g., Mean Absolute Error, RMSE) used to objectively assess the difference between computed results and experimental data during validation [2]. |
| Quantum Hardware / Simulator | Physical quantum processors or high-performance classical simulators used to run quantum algorithms like VQE and QPE, requiring their own V&V [8]. |
| Pseudopotentials / Basis Sets | Standardized approximations for atomic core electrons and mathematical sets of functions used to represent molecular orbitals; their quality must be verified and their choice validated [7]. |

Verification and Validation are the twin pillars supporting reliable computational chemistry. For the foreseeable future, classical computers will remain the primary tool for most chemical applications, particularly for larger molecules [1]. The rigorous V&V framework established for classical methods provides the essential foundation for evaluating emerging quantum computational chemistry approaches. The path to quantum advantage in chemistry will be paved not just by demonstrating superior algorithmic scaling, but by conclusively demonstrating, through robust validation, that these new methods can deliver more accurate or cost-effective solutions to chemically significant problems than the best classical alternatives [1].

Why Quantum Computations Are Not Useful Without Efficient Verification

The promise of quantum computing to revolutionize fields like drug discovery and materials science is tempered by a fundamental challenge: the inherent susceptibility of quantum processors to errors. Without robust, efficient methods to verify their results, the unparalleled computational power of quantum systems remains an untrustworthy novelty. This is especially critical in quantum chemistry, where the accuracy of molecular simulations directly impacts scientific and commercial decisions. This guide compares the current landscape of quantum verification methods, framing them within essential research that validates quantum results against established classical computational methods.

Classical vs. Quantum Computing: A Verification Paradox

Classical computers are reliable because error correction is a mature field, and results can be easily replicated and verified. In contrast, quantum computers are fundamentally fragile. Their basic units of information, qubits, are highly sensitive to environmental noise such as vibrations or temperature changes, which can cause computational errors or a complete loss of their quantum state (decoherence) [9]. This inherent instability creates a verification paradox: the results from a quantum computer are only valuable if they can be trusted, yet the systems best suited to verify these results—classical computers—often lack the computational power to simulate the quantum process efficiently [9].

The table below summarizes the core differences that make verification a trivial task for classical computing and a monumental challenge for quantum computing.

| Feature | Classical Computing | Quantum Computing |
|---|---|---|
| Basic Unit | Bit (0 or 1) | Qubit (0, 1, or any superposition) |
| Error Correction | Mature and highly effective [9] | Nascent and extraordinarily difficult [9] |
| Result Verification | Straightforward replication and check | Often classically intractable for complex circuits [9] |
| Key Vulnerability | Hardware failure (rare) | Environmental noise and decoherence [9] |
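
One reason classical verification becomes intractable is memory: a dense state-vector simulation stores 2^n complex amplitudes for n qubits. The sketch below estimates that footprint, assuming double-precision complex amplitudes (16 bytes each). The helper names are illustrative.

```python
def statevector_bytes(n_qubits, bytes_per_amplitude=16):
    """Memory for a dense state vector: 2**n amplitudes (complex128 = 16 bytes each)."""
    return (2 ** n_qubits) * bytes_per_amplitude

def human_readable(n_bytes):
    """Format a byte count using binary units."""
    units = ["B", "KiB", "MiB", "GiB", "TiB", "PiB", "EiB"]
    value = float(n_bytes)
    for unit in units:
        if value < 1024 or unit == units[-1]:
            return f"{value:.1f} {unit}"
        value /= 1024

for n in (20, 30, 40, 50):
    print(f"{n} qubits: {human_readable(statevector_bytes(n))}")
```

Each added qubit doubles the requirement. Lower-precision or compressed amplitude encodings reduce the constant factor but not the 2^n growth.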

Comparative Analysis of Quantum Verification Methods

Researchers have developed several strategies to tackle the verification problem, each with distinct advantages, limitations, and applicability to quantum chemistry. The following table provides a structured comparison of the primary approaches.

| Verification Method | Core Principle | Typical Experimental Platform | Key Advantage | Key Limitation |
|---|---|---|---|---|
| Classical Simulation of Error-Corrected Circuits [9] | Uses advanced algorithms to simulate specific error-corrected quantum computations on classical computers. | Software-based simulation on HPC clusters. | Enables validation of fault-tolerant computations crucial for building robust systems [9]. | Currently limited to specific quantum error-correcting codes (e.g., GKP bosonic codes) [9]. |
| Blind Quantum Computing [10] [11] | A verifier with minimal quantum resources (can prepare single qubits) interactively tests a more powerful quantum computer without knowing the computation itself. | Photonic qubits [10] [11]. | Provides information-theoretic security and is platform-independent [10] [11]. | Requires a verifier with some quantum capabilities; can introduce overhead. |
| Hybrid Quantum-Classical Benchmarks | Runs computations on both quantum and classical hardware to compare results, often using simplified problems where classical verification is possible. | Trapped-ion quantum computers (e.g., IonQ), classical CPUs/GPUs [12]. | Practical for near-term applications; provides a direct performance benchmark [12]. | Limited to problems that are not classically intractable; does not verify full quantum advantage. |
| Quantum Algorithm Validation via Classical HPC | Uses high-performance classical simulators (e.g., GPU-based) to validate the performance and output of quantum algorithms before running them on quantum hardware. | NVIDIA CUDA-Q on H200/GH200 Superchips [13]. | Drastically accelerates development cycles (e.g., 73x faster) and reduces costs [13]. | Is a simulation of the algorithm, not a verification of the physical quantum computer's output. |

Experimental Performance Data

Experimental data supports the theoretical value of these methods. The table below summarizes quantitative results from recent verification experiments, highlighting the performance gaps and validation successes.

| Experiment Focus | Verification Method Used | Key Quantitative Result | Implication for Verification |
|---|---|---|---|
| Simulating GKP Codes [9] | Classical simulation of error-corrected circuits. | First method to accurately simulate quantum computations with GKP codes on a classical computer [9]. | Provides a classical benchmark for a code widely used for error correction, enabling better testing of quantum hardware. |
| Atomic Force Calculation [12] | Hybrid Quantum-Classical Benchmark (QC-AFQMC algorithm). | Quantum-derived atomic forces were more accurate than those from classical methods [12]. | Demonstrates a verifiable, tangible quantum advantage for a specific chemistry simulation task. |
| Quantum AI for Drug Discovery [13] | Quantum Algorithm Validation on NVIDIA CUDA-Q. | Algorithm execution was 60-73x faster on the quantum simulator than on traditional CPU-based methods [13]. | Enables efficient pre-hardware validation of quantum algorithms, ensuring they are optimized before costly quantum computer time is used. |
| Timeline for Quantum Advantage [14] | Theoretical and empirical comparison framework. | Suggests quantum phase estimation may surpass high-accuracy classical methods for small molecules in the coming decade, but classical methods remain superior for larger molecules for much longer [14]. | Provides a critical timeline for when verification of quantum chemistry results will become most critical, as quantum computers begin to outperform classical ones. |

Detailed Experimental Protocols for Verification

To implement these verification strategies, researchers rely on specific, rigorous experimental protocols.

Protocol for Blind Verification of Quantum Computation

This protocol, demonstrated by Barz et al., allows a limited verifier to check a more powerful quantum computer [10] [11].

Methodology:

- Verifier Preparation: The verifier prepares a series of single qubits in specific states. Each qubit is randomly chosen to be in one of several states, forming a secret "trappification" pattern.

- Transmission to Quantum Computer: These qubits are sent to the untrusted quantum computer for processing.

- Measurement-Based Computation: The quantum computer executes a measurement-based quantum computation (MBQC) on the provided qubits. The pattern of measurements is dictated by the computation the verifier wants to run, but the specific angles are concealed by the initial random choices.

- Result Submission & Verification: The quantum computer returns the computation results to the verifier. Using its knowledge of the secret initial states, the verifier can check a subset of the results ("trap" qubits). If the trap results are correct, it guarantees with high probability that the entire computation was performed faithfully [10] [11].
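
The statistical intuition behind trap qubits can be sketched with a deliberately simplified toy model. It assumes that a faulty or dishonest server corrupts each qubit independently with a fixed probability, and that any corrupted trap is detected. This is not the security analysis of the actual protocol; the model and function names are assumptions made for illustration.

```python
import math

def detection_probability(n_traps, corruption_rate):
    """Probability that at least one of n_traps is corrupted (toy independent-error model)."""
    return 1.0 - (1.0 - corruption_rate) ** n_traps

def traps_needed(corruption_rate, target_confidence):
    """Smallest number of traps whose detection probability reaches target_confidence."""
    if not (0.0 < corruption_rate < 1.0 and 0.0 < target_confidence < 1.0):
        raise ValueError("rates must lie strictly between 0 and 1")
    return math.ceil(math.log(1.0 - target_confidence) / math.log(1.0 - corruption_rate))

for rate in (0.5, 0.1, 0.01):
    n = traps_needed(rate, 0.99)
    print(f"corruption rate {rate}: {n} traps give "
          f"{detection_probability(n, rate):.4f} detection probability")
```

In this toy model, catching rarer corruption with the same confidence needs proportionally more traps, which hints at where the overhead of interactive verification comes from.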

Diagram of the blind quantum computation verification protocol, where a verifier uses "trap" qubits to test a more powerful quantum computer.

Protocol for Hybrid Quantum-Classical Force Calculation

IonQ's demonstration of accurate atomic force calculation illustrates a benchmark-based verification method [12].

Methodology:

- System Selection: A specific chemical system is chosen where atomic forces are critical, such as a molecule relevant to carbon capture.

- Classical Baseline: Established classical computational chemistry methods (e.g., Density Functional Theory) are used to calculate the forces, providing a benchmark.

- Quantum Computation: The Quantum-Classical Auxiliary-Field Quantum Monte Carlo (QC-AFQMC) algorithm is run on a trapped-ion quantum computer (e.g., IonQ Forte) to compute the same forces.

- Comparative Analysis: The forces and resulting reaction pathways calculated by the quantum and classical methods are compared. The quantum computation is considered verified for this specific task if its results are more accurate (e.g., better matching known experimental data or high-level theoretical calculations) than the classical baseline [12].
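
A force is the negative gradient of the energy, so comparing forces from two methods comes down to comparing energy derivatives against a trusted reference. The sketch below uses a one-dimensional Morse potential as a stand-in reference, a central finite difference as one force estimate, and a harmonic approximation as a cruder one. The potential, its parameters, and all function names are hypothetical illustrations and have nothing to do with the QC-AFQMC algorithm itself.

```python
import math

# Hypothetical Morse parameters in arbitrary units, chosen only for illustration.
D_E, A, R_E = 0.17, 1.0, 1.4

def morse_energy(r):
    """Morse potential energy V(r) = D_E * (1 - exp(-A * (r - R_E)))**2."""
    return D_E * (1.0 - math.exp(-A * (r - R_E))) ** 2

def morse_force(r):
    """Analytic reference force, F = -dV/dr."""
    x = math.exp(-A * (r - R_E))
    return -2.0 * D_E * A * (1.0 - x) * x

def finite_difference_force(energy_fn, r, h=1e-4):
    """Central-difference estimate of the force from energies alone."""
    return -(energy_fn(r + h) - energy_fn(r - h)) / (2.0 * h)

def harmonic_force(r):
    """Force from the harmonic approximation to the same well (a cruder model)."""
    return -2.0 * D_E * A ** 2 * (r - R_E)

def max_abs_deviation(forces, reference):
    """Largest absolute force error over all geometries."""
    return max(abs(f - g) for f, g in zip(forces, reference))

geometries = [1.2, 1.4, 1.6, 1.8]
reference = [morse_force(r) for r in geometries]
fd = [finite_difference_force(morse_energy, r) for r in geometries]
harmonic = [harmonic_force(r) for r in geometries]
print(f"finite difference: max deviation {max_abs_deviation(fd, reference):.2e}")
print(f"harmonic model:    max deviation {max_abs_deviation(harmonic, reference):.2e}")
```

The same deviation metric applies when the two force sets come from a quantum algorithm and a classical baseline, provided both are evaluated at identical geometries against the same reference.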

The Scientist's Toolkit: Essential Reagents for Quantum Verification

The following table details key computational tools and concepts essential for researchers working on verifying quantum computations in chemistry.

| Research Reagent / Tool | Function in Verification |
|---|---|
| Gottesman-Kitaev-Preskill (GKP) Code [9] | A bosonic error-correcting code that makes quantum information more resilient to noise. New algorithms allow its simulation on classical computers, providing a vital verification benchmark [9]. |
| Variational Quantum Eigensolver (VQE) [15] | A hybrid algorithm that uses a quantum computer to prepare a molecular trial wavefunction and a classical computer to optimize it. Its results are often verified against classical methods like Full Configuration Interaction. |
| CUDA-Q Platform [13] | An open-source software platform for simulating quantum algorithms on NVIDIA GPUs. It allows for rapid validation of quantum algorithm performance and correctness before deployment on physical quantum hardware [13]. |
| Unitary Coupled-Cluster (UCC) Ansatz [15] | A specific parameterization for a quantum circuit that is used in algorithms like VQE to prepare accurate molecular wavefunctions. Its choice is critical for the accuracy and verifiability of the result. |
| pUCCD-DNN Method [15] | A hybrid method combining a paired UCC ansatz with a Deep Neural Network (DNN) optimizer. The DNN learns from past optimizations, improving efficiency and compensating for noisy quantum hardware, leading to more reliable, verifiable results [15]. |

Workflow of a hybrid quantum-classical algorithm used in computational chemistry, where a classical optimizer verifies and refines quantum results.

The journey toward useful quantum computation is inextricably linked to the development of efficient verification. As the data shows, no single method is a panacea; instead, a multi-faceted approach is emerging. This includes classically simulating advanced error-correcting codes, using interactive protocols like blind quantum computing for security-critical tasks, and heavily relying on hybrid quantum-classical benchmarks and high-performance simulator validation for near-term practical applications. For researchers in quantum chemistry, the message is clear: rigorous verification against classical methods is not a secondary concern but the very foundation upon which reliable and impactful scientific discovery will be built.

For researchers in computational chemistry and drug development, the promise of quantum computing has always been tempered by a fundamental question: can it produce verifiably more accurate results than established classical methods? The transition from theoretical potential to practical application represents the central challenge in the field today. As quantum hardware evolves from experimental curiosities to tools capable of utility-scale computations, the scientific community requires rigorous, objective comparisons to validate claims of quantum advantage. This guide provides a systematic comparison of emerging quantum computational approaches against classical benchmarks, focusing specifically on validation methodologies essential for research scientists demanding reproducible, chemically accurate results. The following analysis synthesizes the most current experimental data and performance metrics to equip professionals with the analytical framework needed to assess this rapidly evolving landscape.

Comparative Performance: Quantum vs. Classical Computational Methods

Quantitative Performance Benchmarks

Table 1: Comparative Analysis of Computational Chemistry Methods

| Method | Key Principle | Reported Accuracy | Computational Scaling | Current System Size Limits |
|---|---|---|---|---|
| pUCCD-DNN (Quantum-Classical) | Hybrid quantum simulation with deep neural network optimization | Two orders of magnitude reduction in mean absolute error vs. non-DNN pUCCD [15] | Dependent on quantum circuit depth & classical NN training | Small test molecules; demonstrated cyclobutadiene isomerization [15] |
| Classical DFT | Electron density determines system energy | Limited by the approximate exchange-correlation functional [15] | O(N³) in practice | Large systems (1000s of atoms) |
| Full Configuration Interaction (FCI) - Classical | Exact solution of electronic Schrödinger equation within a given basis set | Highest accuracy (benchmark) | Exponential | Very small molecules only, due to exponential computational cost [16] |
| Coupled Cluster (CCSD(T)) - Classical | Includes single, double, and perturbative triple excitations | Near-FCI accuracy for many systems | O(N⁷) | Medium-sized molecules [16] |
| QC-AFQMC (IonQ) | Quantum-Classical Auxiliary-Field Quantum Monte Carlo | More accurate than classical methods for force calculations [12] | Dependent on quantum resources | Complex chemical systems; demonstrated for carbon capture [12] |

Table 2: Quantum Hardware Performance Metrics (2025)

| Platform/Processor | Qubit Count | Key Performance Metrics | Reported Chemistry Applications |
|---|---|---|---|
| IBM Quantum Nighthawk | 120 qubits | 30% more complex circuits; target of 5,000 two-qubit gates by end of 2025 [17] [18] | Observable estimation, variational algorithms [18] |
| Google Willow | 105 physical qubits | Exponential error reduction; completed benchmark in ~5 minutes vs. 10²⁵ years classically [19] | Molecular geometry calculations, "molecular ruler" [19] |
| IonQ Forte | 36 qubits (utility-scale) | Outperformed classical HPC by 12% in medical device simulation [19] | Atomic-level force calculations for carbon capture [12] |
| JUPITER Supercomputer (Simulation) | 50 qubits (simulated) | Required ~2 petabytes memory [20] | Quantum algorithm testing and validation (VQE, QAOA) [20] |

Analysis of Comparative Data

The performance data reveals a nuanced landscape where quantum and classical methods each hold distinct advantages. For small molecular systems, hybrid quantum-classical approaches like pUCCD-DNN demonstrate remarkable accuracy improvements, reducing mean absolute error by two orders of magnitude compared to traditional pUCCD methods [15]. This suggests that for targeted applications, quantum methods are beginning to deliver on their promise of enhanced accuracy.

However, classical methods maintain significant advantages in scalability and accessibility. Methods like DFT and Coupled Cluster can be applied to substantially larger molecular systems than current quantum approaches can handle [16]. The timeline for widespread quantum advantage is long: one comprehensive analysis suggests that classical methods will likely maintain dominance for large molecule calculations for approximately the next two decades, while quantum advantage may emerge sooner for highly accurate simulations of smaller molecules (tens to hundreds of atoms) [16].

Experimental Protocols for Method Validation

Protocol 1: Variational Quantum Eigensolver with Neural Network Optimization (pUCCD-DNN)

Objective: To compute molecular ground state energies with higher accuracy than standalone quantum or classical methods by integrating quantum simulation with deep neural networks.

Workflow:

- Initial Wavefunction Preparation: A paired Unitary Coupled-Cluster with Double Excitations (pUCCD) ansatz prepares the trial wavefunction on a quantum computer, representing it as a unitary operator (the exponential of an anti-Hermitian cluster operator) acting on an initial reference state [15].

- Quantum Execution: The parameterized quantum circuit is executed to generate the trial wavefunction and measure the system's energy.

- Neural Network Optimization: Unlike traditional "memoryless" optimizers, a Deep Neural Network (DNN) trains on system data from the current wavefunction and global parameters. Crucially, the DNN learns from past optimizations of other molecules, improving efficiency and reducing required quantum hardware calls [15].

- Parameter Update: The DNN outputs an optimized set of parameters for the unitary operator.

- Iteration: The quantum execution, neural network optimization, and parameter update steps repeat until energy convergence is achieved, using significantly fewer quantum measurements than traditional approaches due to the DNN's learning capability.

Validation: Benchmarking involves comparing calculated molecular energies against Full Configuration Interaction (FCI) results, the most accurate but computationally expensive classical method. The pUCCD-DNN approach has demonstrated a close match to FCI predictions in tests such as the isomerization of cyclobutadiene [15].
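
The structure of this variational loop, and of its validation against an exact reference, can be illustrated with a toy problem small enough to solve by hand. The sketch below is not the pUCCD-DNN method. It assumes a hypothetical 2x2 real symmetric Hamiltonian, a one-parameter trial state, and a plain gradient-descent optimizer in place of the neural network. The energy is evaluated exactly instead of being sampled on quantum hardware, and exact diagonalization plays the role of FCI.

```python
import math

# Hypothetical 2x2 real symmetric Hamiltonian [[H_A, H_B], [H_B, H_D]], arbitrary units.
H_A, H_B, H_D = -1.0, 0.3, 0.5

def energy(theta):
    """Expectation value <psi|H|psi> for the trial state (cos(theta), sin(theta))."""
    c, s = math.cos(theta), math.sin(theta)
    return H_A * c * c + H_D * s * s + 2.0 * H_B * c * s

def gradient(theta):
    """Exact dE/dtheta from two shifted energy evaluations.

    The identity holds here because E(theta) is sinusoidal in 2*theta.
    """
    return energy(theta + math.pi / 4) - energy(theta - math.pi / 4)

def exact_ground_energy():
    """Lowest eigenvalue of the 2x2 Hamiltonian (the exact reference)."""
    mean = 0.5 * (H_A + H_D)
    return mean - math.hypot(0.5 * (H_A - H_D), H_B)

def run_variational_loop(theta=0.1, learning_rate=0.2, max_steps=500, tol=1e-12):
    """Gradient descent on theta until the gradient is below tol."""
    for _ in range(max_steps):
        g = gradient(theta)
        if abs(g) < tol:
            break
        theta -= learning_rate * g
    return theta, energy(theta)

theta_opt, e_var = run_variational_loop()
e_exact = exact_ground_energy()
print(f"variational energy: {e_var:.10f}")
print(f"exact energy:       {e_exact:.10f}")
print(f"absolute error:     {abs(e_var - e_exact):.2e}")
```

Two checks mirror the validation described above: the variational energy should never fall below the exact value, and the gap between them measures how well the ansatz and optimizer perform.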

Protocol 2: Quantum Advantage Validation Framework

Objective: To rigorously validate claims of quantum advantage through community-driven benchmarking and comparison against state-of-the-art classical methods.

Workflow:

- Candidate Identification: Identify potential advantage experiments across three categories: observable estimation, variational algorithms, and problems with efficient classical verification [18].

- Quantum Implementation: Execute the candidate algorithm on current quantum hardware (e.g., IBM Nighthawk, Google Willow).

- Classical Baselines: Run the same problem using leading classical methods (e.g., GPU-accelerated simulations, specialized HPC algorithms).

- Performance Comparison: Evaluate results against multiple criteria: computational efficiency, cost-effectiveness, and accuracy [18].

- Community Verification: Contribute results to an open, community-led quantum advantage tracker (e.g., IBM/Algorithmiq/Flatiron initiative) for independent verification [17] [18].

Validation: A computation is considered validated only when it demonstrates a clear separation from classical methods that has been rigorously verified by the broader scientific community, moving beyond theoretical potential to empirically demonstrable advantage [18].

Experimental Workflow Visualization

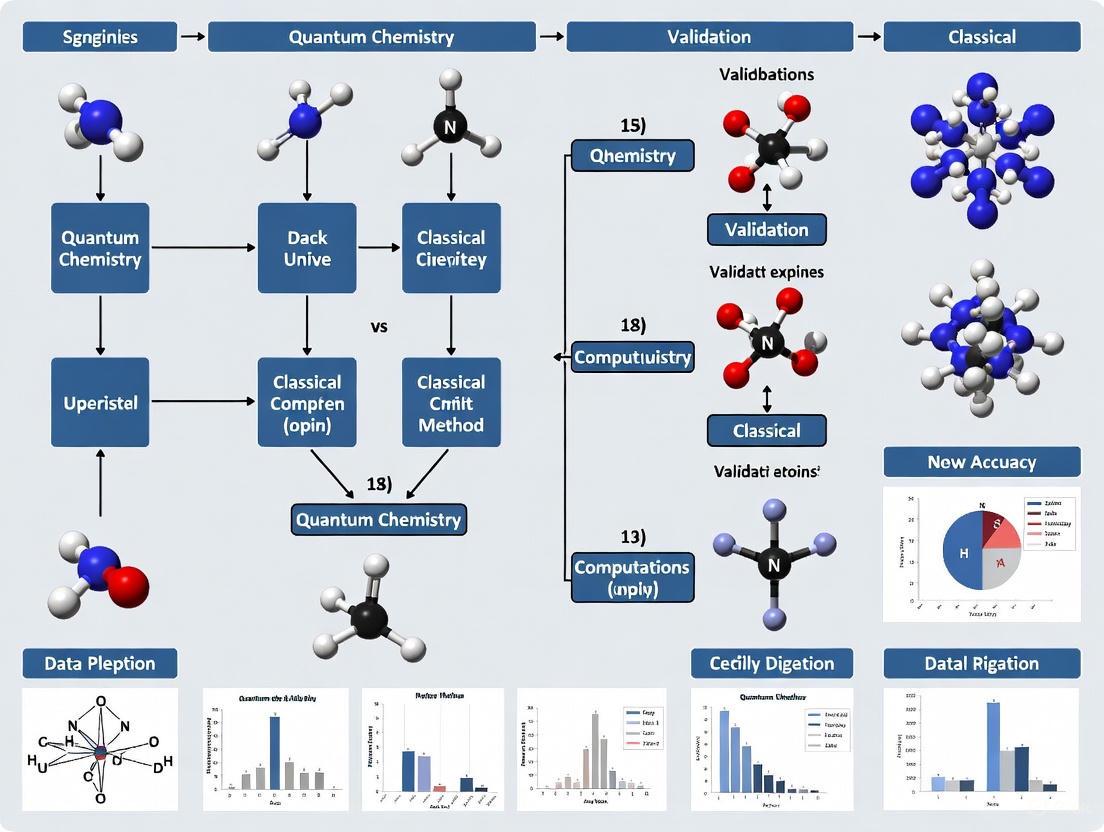

Diagram 1: Method validation workflow for comparing quantum and classical computational chemistry approaches.

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 3: Essential Tools for Quantum Computational Chemistry Research

| Tool/Platform | Type | Primary Function | Access Method |
|---|---|---|---|
| Qiskit SDK | Quantum Software Development Kit | Open-source Python/C++ framework for quantum circuit design, optimization, and execution [17] [18] | Python Package / C API |
| IBM Quantum Nighthawk | Quantum Processing Unit (QPU) | 120-qubit processor with square lattice topology for increased circuit complexity (30% more than previous gen) [17] [18] | Cloud access via IBM Quantum Platform |
| IBM Quantum Heron | Quantum Processing Unit (QPU) | 133-156 qubit processor with lowest median two-qubit gate errors (<1/1000 for 57 couplings) [18] | Cloud access via IBM Quantum Platform |
| Jülich Universal Quantum Computer Simulator (JUQCS-50) | Quantum Simulator | High-performance simulator for 50-qubit universal quantum computers; validates algorithms before hardware deployment [20] | JUNIQ infrastructure access |
| Quantum Advantage Tracker | Validation Framework | Community-driven platform for systematically monitoring and verifying quantum advantage claims [18] | Open community resource |
| pUCCD-DNN Framework | Hybrid Algorithm | Combines quantum simulation with deep neural network optimization for enhanced accuracy [15] | Research implementation |
| QC-AFQMC Algorithm | Quantum-Classical Algorithm | Quantum-Classical Auxiliary-Field Quantum Monte Carlo for accurate atomic-level force calculations [12] | Vendor-specific implementation (IonQ) |

Roadmap and Future Projections

Hardware and Algorithm Development Trajectory

The quantum computing industry has established concrete roadmaps with specific milestones for achieving and extending quantum advantage. IBM's roadmap targets demonstrated quantum advantage by the end of 2026, with fault-tolerant quantum computing by 2029 [17] [18]. The company projects successive generations of the Nighthawk processor will deliver increasing circuit complexity, from 5,000 two-qubit gates by end of 2025 to 15,000 gates by 2028 [17].

Error correction has emerged as the critical enabling technology, with Google's Willow chip demonstrating exponential error reduction as qubit counts increase [19]. IBM has achieved a 10x speedup in quantum error correction decoding, completing this milestone a year ahead of schedule [17]. These advancements in error management are essential for achieving the stability required for chemically accurate computations.

Application-Specific Projections

Different chemical applications will reach quantum advantage at varying timescales. Materials science problems involving strongly interacting electrons and lattice models appear closest to achieving quantum advantage, while quantum chemistry problems have seen algorithm requirements drop fastest as encoding techniques improve [19]. A comprehensive analysis suggests economic advantage (where quantum computations are cost-effective) will likely emerge in the mid-2030s, following technical advantage by several years [16].

The National Energy Research Scientific Computing Center analysis suggests quantum systems could address Department of Energy scientific workloads—including materials science, quantum chemistry, and high-energy physics—within five to ten years [19]. This timeline aligns with industry projections that by the 2040s, quantum computers could model systems containing up to 10⁵ atoms in less than a month, assuming continued algorithmic progress [16].

The grand challenge of moving from theoretical speedup to practical application in quantum computational chemistry is being addressed through rigorous validation frameworks and hybrid approaches that leverage the complementary strengths of quantum and classical systems. While classical methods remain dominant for large-scale molecular calculations and will continue to do so for the foreseeable future, quantum approaches are demonstrating tangible advantages in specific, targeted applications, particularly for highly accurate simulations of smaller molecular systems. For research scientists and drug development professionals, the emerging validation protocols and comparative frameworks presented in this guide provide the essential tools for critically evaluating claims of quantum advantage and strategically integrating these evolving technologies into their research workflows. The continued co-development of quantum hardware, error correction techniques, and hybrid quantum-classical algorithms suggests that the transition from laboratory demonstration to practical chemical discovery tool is now underway, with the most significant impacts expected to emerge over the coming decade.

The field of computational chemistry is defined by the clear dominance of mature, high-performance classical methods and the emergence of pioneering, niche applications on quantum hardware. Classical machine learning and established computational algorithms deliver practical, industrial-scale solutions today. In parallel, quantum computing is demonstrating its first verifiable advantages in targeted, proof-of-principle experiments. This guide provides an objective comparison of their performance, supported by experimental data, to help researchers navigate this evolving landscape.

Performance Benchmarking: Classical vs. Quantum Methods

The following tables summarize quantitative performance data and projections for classical and quantum computational methods in chemistry.

Table 1: Projected Timeline for Quantum Advantage in Ground-State Energy Estimation [1]

| Computational Method | Classical Time Complexity | Projected Year Quantum (QPE) Becomes Faster |

|---|---|---|

| Density Functional Theory (DFT) | O(N³) | >2050 |

| Hartree Fock (HF) | O(N⁴) | >2050 |

| Møller-Plesset Second Order (MP2) | O(N⁵) | 2038 |

| Coupled Cluster Singles & Doubles (CCSD) | O(N⁶) | 2036 |

| Coupled Cluster with Perturbative Triples (CCSD(T)) | O(N⁷) | 2034 |

| Full Configuration Interaction (FCI) | O*(4^N) | 2031 |

Note: Analysis assumes significant classical parallelism (e.g., thousands of GPUs) and treats quantum algorithms as mostly serial. N represents the number of relevant basis functions; accuracy target ε=10⁻³.
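To make the scaling column concrete, the short sketch below computes how much the classical cost of each method grows when the number of basis functions N doubles. It uses only the exponents listed in Table 1. The function names are illustrative, and prefactors and parallelism (which dominate real timings) are deliberately ignored.

```python
# Polynomial scaling exponents taken from Table 1 (FCI is exponential and handled separately).
SCALING_EXPONENTS = {
    "DFT": 3,
    "HF": 4,
    "MP2": 5,
    "CCSD": 6,
    "CCSD(T)": 7,
}

def cost_growth(exponent: int, size_ratio: float = 2.0) -> float:
    """Factor by which an O(N^exponent) cost grows when N is multiplied by size_ratio."""
    return size_ratio ** exponent

def fci_cost_growth(n_old: int, n_new: int) -> float:
    """Growth factor of the O*(4^N) FCI cost, ignoring polynomial factors."""
    return 4.0 ** (n_new - n_old)

growth = {method: cost_growth(k) for method, k in SCALING_EXPONENTS.items()}
for method, factor in growth.items():
    print(f"{method:8s} doubling N multiplies cost by {factor:.0f}x")
print(f"FCI      going from N=10 to N=20 multiplies cost by {fci_cost_growth(10, 20):.3g}x")
```

The steep growth of the higher-order methods is consistent with the table's ordering, in which the most expensive classical methods are projected to be overtaken first.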

Table 2: Market Context & Hardware Performance (2024-2025) [21] [18] [22]

| Metric | Classical / Market Context | Quantum Hardware Performance |

|---|---|---|

| Overall Market | QT market could reach $97B by 2035; quantum computing to be largest segment [21]. | |

| Hardware Scale | Classical HPC (e.g., supercomputers with thousands of GPUs) used for benchmarking [1]. | IBM's 127-qubit Eagle processors demonstrated exponential speedup [22]. IBM's 120-qubit Nighthawk chip enables 30% more complex circuits [18]. |

| Key Benchmark Result | | Unconditional exponential speedup demonstrated for Simon's problem (13,000x faster than classical) [22]. Google's Willow chip ran OTOC algorithm 13,000x faster than supercomputer [23]. |

| Error Rates | | IBM Heron r3 chip achieved a new record: <1 error per 1,000 operations on 57 of 176 couplings [18]. |

Experimental Protocols and Workflows

To contextualize the performance data, below are the detailed methodologies for key experiments cited, which highlight the distinct approaches of classical and quantum paradigms.

Protocol: Classical Machine Learning for Molecular Properties

This protocol underpins the current dominance of classical methods, achieving quantum mechanical accuracy at a fraction of the time and cost [24].

- 1. Data Set Curation: A large dataset of molecular structures and their corresponding properties (e.g., energy, dipole moment) is generated using high-accuracy quantum chemistry methods like CCSD(T) or DFT for small to medium-sized molecules.

- 2. Feature Engineering: Molecular structures are converted into a machine-readable format. Graph neural networks (GNNs) are often used, where atoms are represented as nodes and bonds as edges, capturing the inherent topology of the molecule.

- 3. Model Training: A classical machine learning model (e.g., a neural network or a kernel-based method) is trained on the curated dataset. The model learns to map the molecular features to the target property.

- 4. Validation and Prediction: The trained model is validated on a held-out test set of molecules to confirm that it generalizes. Once validated, it can predict properties for new, larger systems (e.g., up to millions of atoms) at speeds far exceeding traditional quantum chemistry calculations.
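The train-then-validate pattern in steps 3 and 4 can be sketched with a small kernel ridge regression in NumPy. Everything in this example is a stand-in: the three-component feature vectors, the synthetic target function, and the kernel settings are assumptions chosen for illustration, not the descriptors or models used in [24].

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy stand-in for a curated dataset: 200 "molecules", each described by a
# 3-component feature vector; the target mimics a reference property.
X = rng.uniform(-1.0, 1.0, size=(200, 3))
y = np.sin(X[:, 0]) + X[:, 1] ** 2 - 0.5 * X[:, 2]

# Hold out 25% of the data for validation (step 4).
X_train, X_test = X[:150], X[150:]
y_train, y_test = y[:150], y[150:]

def gaussian_kernel(A, B, length_scale=1.0):
    """Gaussian (RBF) kernel matrix between rows of A and rows of B."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dist / (2.0 * length_scale ** 2))

# Kernel ridge regression (step 3): solve (K + ridge * I) alpha = y.
ridge = 1e-6
K = gaussian_kernel(X_train, X_train)
alpha = np.linalg.solve(K + ridge * np.eye(len(X_train)), y_train)

# Predict on held-out data and compare to a trivial mean-value baseline.
y_pred = gaussian_kernel(X_test, X_train) @ alpha
mae_model = np.mean(np.abs(y_pred - y_test))
mae_baseline = np.mean(np.abs(y_train.mean() - y_test))
print(f"held-out MAE: model={mae_model:.4f}, mean baseline={mae_baseline:.4f}")
```

Reporting the held-out error next to a trivial baseline is a simple guard against mistaking memorization for generalization.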

Protocol: Hybrid Quantum-Classical Algorithm (VQE/pUCCD-DNN)

This protocol represents a leading hybrid approach, designed to work with current noisy quantum hardware while leveraging classical AI for improved performance [15].

- 1. Ansatz Selection: A parameterized quantum circuit (ansatz), such as the paired Unitary Coupled-Cluster with Double Excitations (pUCCD), is chosen to prepare the trial quantum state of the molecule on the quantum processor.

- 2. Quantum Execution: The parameterized circuit is executed on a quantum computer. The output is a measurement of the system's energy expectation value.

- 3. Classical Optimization (AI-Enhanced): A classical deep neural network (DNN) optimizer—not a "memoryless" traditional optimizer—is used. The DNN trains on system data from the current wavefunction and global parameters, and it can learn from past optimizations of other molecules.

- 4. Iterative Convergence: The measured energy is fed to the DNN, which calculates a new set of improved parameters for the quantum circuit. Steps 2 and 3 repeat iteratively until the energy of the system converges to a minimum, representing the best approximation of the ground-state energy.
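The iterative loop in steps 2 to 4 can be illustrated with a deliberately small simulation. This is a sketch under several assumptions: a one-qubit toy Hamiltonian replaces the molecular Hamiltonian, a single Ry rotation replaces the pUCCD ansatz, a state-vector calculation replaces hardware execution, and plain gradient descent with the parameter-shift rule replaces the DNN optimizer of [15].

```python
import numpy as np

# Toy one-qubit Hamiltonian H = a*Z + b*X (coefficients are arbitrary).
a, b = 0.7, -0.4
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
X = np.array([[0.0, 1.0], [1.0, 0.0]])
H = a * Z + b * X

def ansatz_state(theta):
    """State prepared by the ansatz Ry(theta)|0>."""
    return np.array([np.cos(theta / 2.0), np.sin(theta / 2.0)])

def energy(theta):
    """Energy expectation value <psi(theta)|H|psi(theta)> (the 'quantum' step)."""
    psi = ansatz_state(theta)
    return float(psi @ H @ psi)

def parameter_shift_gradient(theta):
    """Exact gradient for a single Pauli-rotation parameter."""
    return 0.5 * (energy(theta + np.pi / 2.0) - energy(theta - np.pi / 2.0))

# Classical optimization loop: measure, update parameters, repeat.
theta = 0.1
learning_rate = 0.4
for step in range(200):
    theta -= learning_rate * parameter_shift_gradient(theta)

e_vqe = energy(theta)
e_exact = float(np.linalg.eigvalsh(H)[0])  # classical reference by diagonalization
print(f"VQE energy: {e_vqe:.6f}, exact: {e_exact:.6f}")
```

Comparing the converged energy with exact diagonalization is the same validation step used for real molecules, where FCI or CASCI plays the role of the reference.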

Protocol: Verification of Quantum Advantage (Quantum Echoes)

This protocol details the methodology behind a recent demonstration of verifiable quantum advantage with a potential chemical application [23].

- 1. System Initialization: The quantum processor (e.g., Google's Willow chip) is initialized with a carefully crafted signal, representing the system to be studied.

- 2. Qubit Perturbation: A specific qubit within the system is deliberately perturbed.

- 3. Forward Evolution: The entire quantum system is allowed to evolve for a set period.

- 4. Time Reversal: The evolution of the system is precisely reversed using quantum gates.

- 5. Echo Measurement: The "quantum echo" is measured. In a perfect, noiseless system, the reversal would perfectly reconstruct the initial state. In practice, the echo provides a highly sensitive measure of the system's dynamics and interactions.

- 6. Verification: The measurement can be repeated on the same quantum computer, or on another of similar caliber, to confirm the outcome, making it "quantum verifiable." This technique was used as a "molecular ruler" to study 15- and 28-atom molecules, matching results from traditional Nuclear Magnetic Resonance (NMR).
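The echo idea can be sketched on a three-qubit state-vector simulation. Note the assumptions: the Hamiltonian is a random Hermitian matrix, and the perturbation is applied between the forward and the reversed evolution, which is the usual textbook construction of an echo rather than a description of the cited experiment [23].

```python
import numpy as np

rng = np.random.default_rng(1)
n_qubits = 3
dim = 2 ** n_qubits

# Random Hermitian matrix standing in for the system Hamiltonian (an assumption).
A = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
H = (A + A.conj().T) / 2.0

# Forward evolution U = exp(-i H t), built from the eigendecomposition of H.
evals, evecs = np.linalg.eigh(H)
t = 1.0
U = evecs @ np.diag(np.exp(-1j * evals * t)) @ evecs.conj().T

# Perturbation: a Pauli-X flip on the first qubit only.
X = np.array([[0.0, 1.0], [1.0, 0.0]])
perturb = np.kron(X, np.eye(dim // 2))

def echo(initial_state, perturbation):
    """Return |<psi0| U^dagger P U |psi0>|^2: evolve, perturb, reverse, compare."""
    evolved = U @ initial_state
    kicked = perturbation @ evolved
    reversed_state = U.conj().T @ kicked
    return float(np.abs(np.vdot(initial_state, reversed_state)) ** 2)

psi0 = np.zeros(dim, dtype=complex)
psi0[0] = 1.0  # all qubits in |0>

echo_unperturbed = echo(psi0, np.eye(dim))
echo_perturbed = echo(psi0, perturb)
print(f"echo without perturbation: {echo_unperturbed:.6f}")
print(f"echo with perturbation:    {echo_perturbed:.6f}")
```

Without a perturbation the reversal restores the initial state exactly, so any drop in the echo reflects how the single-qubit disturbance spread through the system.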

The following workflow diagram illustrates the key steps and logical relationship of the hybrid quantum-classical method:

Diagram 1: Hybrid Quantum-Classical Workflow. This illustrates the iterative loop where a quantum computer prepares and measures a state, and a classical AI optimizer refines the parameters.

The Scientist's Toolkit: Research Reagent Solutions

This table details essential computational "reagents" — the core algorithms, software, and hardware — that researchers are using in this field.

Table 3: Key Research Tools and Platforms [18] [15] [24]

| Category | Item | Function |

|---|---|---|

| Classical Software | Density Functional Theory (DFT) | Workhorse for electronic structure calculations; balances accuracy and cost for many industrial applications [1] [24]. |

| Classical Software | Graph Neural Networks (GNNs) | Classical ML models that achieve quantum-mechanical accuracy for large systems (e.g., millions of atoms) at high speed [24]. |

| Quantum Software & SDKs | Qiskit SDK | Open-source software development kit for leveraging quantum processors; enables circuit construction, optimization, and execution [18]. |

| Quantum Software & SDKs | Variational Quantum Eigensolver (VQE) | A leading hybrid algorithm designed for NISQ-era hardware to find molecular ground-state energies [15]. |

| Quantum Hardware Platforms | IBM Quantum Heron & Nighthawk | High-performance quantum processors with high fidelity and low error rates, accessible via the cloud [18]. |

| Quantum Hardware Platforms | Google Quantum AI Willow Chip | A 105-qubit processor that demonstrated verifiable quantum advantage and enables advanced algorithms like Quantum Echoes [23]. |

| Specialized Algorithms | pUCCD-DNN | A hybrid algorithm that combines a quantum ansatz (pUCCD) with a deep neural network optimizer to improve efficiency and noise resistance [15]. |

| Specialized Algorithms | Quantum Echoes (OTOC) | A quantum algorithm that acts as a "molecular ruler," providing verifiable advantage for probing system structures and dynamics [23]. |

The relationship between these tools and the broader research landscape, from hardware to application, can be visualized as follows:

Diagram 2: Toolchain for Quantum Chemistry Research. This shows the stack from quantum hardware to chemical application, and the critical benchmarking role of classical methods.

Critical Analysis of Comparative Performance

Synthesizing the experimental data reveals a clear, nuanced picture:

Classical Dominance is Rooted in Practicality: Classical machine learning models, particularly graph neural networks and machine learning force fields, now routinely deliver quantum mechanical accuracy at speeds that scale to millions of atoms, directly impacting drug discovery and materials design [24]. For the vast majority of industrial applications, especially those without strong electron correlation, these classically-accelerated methods are the most efficient and practical choice [1].

The "Quantum Advantage" is Emerging in Rigorously Defined Niches: The first unconditional exponential quantum speedups have been demonstrated, but on abstract, "toy" problems like Simon's problem [22]. The most convincing steps toward chemical utility are verifiable advantages in algorithms like Quantum Echoes, which can probe molecular structures and outperform classical supercomputers, albeit not yet on a universal chemistry problem [23].

The Path to Broad Quantum Utility is a Decade Away: Projections indicate that quantum phase estimation will likely surpass highly accurate classical methods like Full Configuration Interaction (FCI) within approximately a decade, initially for small to medium-sized molecules [1]. The resource estimates for impactful industrial problems (e.g., modeling the FeMoco cofactor for nitrogen fixation) remain daunting, requiring millions of physical qubits or advanced architectures to achieve ~100,000 logical qubits [25] [1]. For the foreseeable future, hybrid quantum-classical algorithms, enhanced by classical AI, represent the most promising path for extracting value from noisy quantum hardware [15].

Benchmarking Methods and Hybrid Algorithms in Practice

In the pursuit of accurately modeling molecular systems, quantum algorithms represent a paradigm shift for computational chemistry. Algorithms such as the Variational Quantum Eigensolver (VQE), Quantum Approximate Optimization Algorithm (QAOA), and Quantum Phase Estimation (QPE) offer promising pathways to simulate quantum mechanical phenomena with potentially greater efficiency than classical computational methods. As research moves from idealized gas-phase simulations toward biologically relevant conditions, validating these algorithms against established classical benchmarks becomes paramount. This guide provides an objective comparison of these three key algorithms, focusing on their performance, experimental protocols, and current utility in advancing chemical research, particularly in pharmaceutical and materials science applications where accurate molecular modeling is critical.

Core Principles and Applications

Variational Quantum Eigensolver (VQE): VQE is a hybrid quantum-classical algorithm designed to find the ground state energy of a quantum system, a central task in quantum chemistry. It leverages a parameterized quantum circuit (ansatz) to prepare trial wave functions, whose energy expectation value is measured on the quantum processor. A classical optimizer then varies these parameters to minimize the energy, approximating the ground state [26] [27]. Its resilience to noise makes it particularly suited for current Noisy Intermediate-Scale Quantum (NISQ) devices.

Quantum Approximate Optimization Algorithm (QAOA): QAOA is a hybrid algorithm tailored for combinatorial optimization problems. It operates by applying a sequence of parameterized unitaries—a cost Hamiltonian (encoding the problem) and a mixer Hamiltonian (exploring the solution space)—to an initial state. A classical optimizer adjusts the parameters to minimize the expected cost [26] [27]. While its applications extend to finance and logistics, it is also used for quantum chemistry problems framed as optimization tasks.

Quantum Phase Estimation (QPE): QPE is a fundamental quantum subroutine that estimates the phase, and hence the eigenvalue, associated with an eigenvector of a unitary operator. It is a critical component of many quantum algorithms, including Shor's algorithm. In quantum chemistry, QPE is used to obtain precise energy eigenvalues of molecular Hamiltonians, enabling highly accurate ground and excited state energy calculations [27].
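As a small illustration of what QPE returns, the sketch below computes the ideal measurement distribution of the textbook QPE circuit for an eigenvalue exp(2πiφ). It works directly with the ancilla register amplitudes and a dense inverse Fourier transform matrix, so it is a noiseless classical emulation under the assumption that the input is an exact eigenvector. The function name `qpe_distribution` is illustrative.

```python
import numpy as np

def qpe_distribution(phase, n_ancilla):
    """Outcome probabilities of ideal QPE for a unitary eigenvalue exp(2*pi*i*phase)."""
    size = 2 ** n_ancilla
    j = np.arange(size)
    # Ancilla register after the controlled-U^(2^k) gates.
    register = np.exp(2j * np.pi * phase * j) / np.sqrt(size)
    # Inverse quantum Fourier transform, written as a dense matrix.
    inverse_qft = np.exp(-2j * np.pi * np.outer(j, j) / size) / np.sqrt(size)
    amplitudes = inverse_qft @ register
    return np.abs(amplitudes) ** 2

n_ancilla = 4
true_phase = 5 / 16  # exactly representable with 4 bits
probs = qpe_distribution(true_phase, n_ancilla)
estimate = int(np.argmax(probs)) / 2 ** n_ancilla
print(f"estimated phase: {estimate}, probability: {probs.max():.3f}")
```

When the phase is not exactly representable with the available ancilla bits, the distribution spreads over neighboring outcomes, which is why more ancilla qubits (and deeper circuits) are needed for higher precision.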

The following table summarizes the key characteristics and typical performance metrics of VQE, QAOA, and QPE based on current research and experimental implementations.

Table 1: Comparative Overview of VQE, QAOA, and QPE

| Feature | VQE | QAOA | QPE |

|---|---|---|---|

| Primary Use Case | Ground state energy calculation [26] [27] | Combinatorial optimization [26] [27] | Eigenvalue estimation for unitary operators [27] |

| Algorithm Type | Hybrid (Quantum-Classical) [26] | Hybrid (Quantum-Classical) [26] | Purely Quantum |

| Resource Requirements (Qubits/Circuit Depth) | Moderate (NISQ-suitable) [26] | Moderate (NISQ-suitable) [26] | High (requires fault tolerance) |

| Classical Optimizer Dependency | High (core component) [26] | High (core component) [26] | None |

| Theoretical Precision | Limited by ansatz and optimizer | Approximate solution | Chemically accurate (in theory) |

| Noise Resilience | High (by design) [27] | Moderate [27] | Low |

| Reported Accuracy (vs. Classical) | Chemical accuracy (for small molecules) [28] | Varies with problem and parameters | Target for fault-tolerant era |

| Key Advantage | Practical for today's hardware | Good for NISQ-era optimization [27] | Proven speedups and high precision |

Experimental Protocols and Performance Validation

Key Experimental Methodologies

The validation of quantum algorithms relies on well-defined experimental protocols that are consistent across different software and hardware platforms. The methodologies below are commonly employed in recent research to ensure reproducible and comparable results.

Table 2: Summary of Key Experimental Protocols

| Protocol Component | VQE-Specific Approach | QAOA-Specific Approach | Common/Cross-Algorithm Practices |

|---|---|---|---|

| Problem Definition | Molecular Hamiltonian (e.g., H₂) via Jordan-Wigner transformation [26] | Cost Hamiltonian for problems like MaxCut or TSP [26] | Use of parser tools for consistent problem definition across simulators [26] |

| Ansatz/State Preparation | UCCSD ansatz applied to Hartree-Fock reference state [26] | Alternating application of cost and mixer unitaries [26] | Parameterized Quantum Circuits (PQCs) |

| Classical Optimization | BFGS, COBYLA, SPSA | BFGS, gradient descent | Benchmarking on HPC systems; use of job arrays for parallelization [26] |

| Hardware Execution | IBM quantum devices (e.g., 27-52 qubit systems) [28] | Quantinuum H-Series devices [29] | Noise mitigation techniques (e.g., readout error correction) |

| Validation & Benchmarking | Comparison to Full Configuration Interaction (FCI) or CASCI [28] | Comparison to classical optimizers (e.g., Simulated Annealing) | Use of chemical accuracy threshold (1 kcal/mol); verification against classical benchmarks [28] |
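The chemical accuracy criterion in the table above reduces to a unit conversion and a threshold test. The helper below is a minimal sketch. The function name and the two example energies are hypothetical, while the hartree to kcal/mol factor is the standard conversion.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509  # standard conversion factor
CHEMICAL_ACCURACY_KCAL_PER_MOL = 1.0

def within_chemical_accuracy(e_quantum_hartree, e_reference_hartree):
    """Return (error in kcal/mol, True if the error is within 1 kcal/mol)."""
    error = abs(e_quantum_hartree - e_reference_hartree) * HARTREE_TO_KCAL_PER_MOL
    return error, error <= CHEMICAL_ACCURACY_KCAL_PER_MOL

# Hypothetical energies in hartree, for illustration only.
error, ok = within_chemical_accuracy(-1.1365, -1.1373)
print(f"error = {error:.3f} kcal/mol, chemically accurate: {ok}")
```

In practice the reference value would come from FCI or CASCI in the same basis set, so that the comparison isolates the error of the quantum algorithm rather than basis-set effects.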

Protocol for VQE in Solvated Molecular Systems

A significant advance in VQE protocols is the move beyond gas-phase simulations. A recent study integrated the Integral Equation Formalism Polarizable Continuum Model (IEF-PCM) into the Sample-based Quantum Diagonalization (SQD) method to account for solvent effects [28]. The workflow is as follows:

- Hamiltonian Preparation: The molecular Hamiltonian is generated, and the solvent effect is incorporated as a perturbation using IEF-PCM.

- Quantum Sampling: Electronic configurations are sampled from the molecule's wavefunction using quantum hardware.

- Noise Correction: The sampled configurations, affected by hardware noise, are corrected via a self-consistent process (S-CORE) to restore physical properties like electron number and spin.

- Classical Diagonalization: The corrected samples are used to construct a smaller, manageable subspace of the full molecular problem, which is then diagonalized on a classical computer.

- Iteration: The process is iterated until the molecular wavefunction and the solvent model achieve self-consistency. This protocol has been tested on IBM quantum hardware for molecules like water and methanol, achieving solvation free energies within 0.2 kcal/mol of classical benchmarks, meeting the threshold for chemical accuracy [28].
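The classical diagonalization step can be sketched in isolation. In the example below a random symmetric matrix stands in for the molecular Hamiltonian, and configuration indices are drawn uniformly at random instead of being sampled from a wavefunction on hardware. The noise-correction (S-CORE) and solvent (IEF-PCM) steps are not modeled.

```python
import numpy as np

rng = np.random.default_rng(2)
dim = 16  # full configuration space of a toy 4-qubit problem

# Random real symmetric matrix standing in for the molecular Hamiltonian.
A = rng.normal(size=(dim, dim))
H = (A + A.T) / 2.0

# Pretend these configuration indices came from sampling on hardware.
sampled = rng.choice(dim, size=10, replace=True)
subspace = np.unique(sampled)  # keep each configuration once

# Project the Hamiltonian onto the sampled configurations and diagonalize.
H_sub = H[np.ix_(subspace, subspace)]
e_subspace = float(np.linalg.eigvalsh(H_sub)[0])
e_exact = float(np.linalg.eigvalsh(H)[0])

print(f"subspace size: {len(subspace)} of {dim}")
print(f"subspace energy: {e_subspace:.4f}, exact energy: {e_exact:.4f}")
```

Because the subspace energy is variational, it can never fall below the exact ground-state energy, so the quality of the result depends on how well the sampled configurations cover the true wavefunction.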

Protocol for QAOA in Combinatorial Optimization

For QAOA, performance is often benchmarked on combinatorial problems like the Sherrington-Kirkpatrick (SK) model. A protocol developed by Quantinuum researchers uses a parameterized Instantaneous Quantum Polynomial (IQP) circuit, warm-started from 1-layer QAOA [29]:

- Circuit Design: A fully connected parameterized IQP circuit of the same depth as 1-layer QAOA is implemented, incorporating corrections that would otherwise require multiple layers.

- Efficient Training: The parameters in the IQP circuit are trained efficiently on a classical computer.

- Execution: The circuit is executed on a trapped-ion quantum computer (e.g., Quantinuum H2), leveraging features like all-to-all connectivity and parameterized two-qubit gates.

- Solution Sampling: Multiple shots are performed to sample solutions. Performance is measured by the probability of finding the optimal solution. For a 30-qubit instance, the optimal solution was found on the H2 device within 776 shots, a significant result given the search space of over 1 billion candidates [29]. Numerical simulations indicated an average probability of sampling the optimal solution of (2^{-0.31n}), representing a speedup over 1-layer QAOA [29].
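The scaling figures can be turned into expected shot counts with a few lines of arithmetic. The sketch assumes that the expected number of shots to observe the optimum once is the inverse of its sampling probability, and it uses the 2^{-0.5n} scaling reported for 1-layer QAOA in [29] as the baseline. The function name is illustrative.

```python
def expected_shots(n_qubits, exponent):
    """Expected shots to see the optimum once if its probability is 2**(-exponent * n_qubits)."""
    probability = 2.0 ** (-exponent * n_qubits)
    return 1.0 / probability

n = 30
shots_iqp = expected_shots(n, 0.31)
shots_qaoa1 = expected_shots(n, 0.5)  # 1-layer QAOA scaling used as the baseline
search_space = 2 ** n

print(f"IQP-style circuit: ~{shots_iqp:.0f} shots")
print(f"1-layer QAOA:      ~{shots_qaoa1:.0f} shots")
print(f"search space:      {search_space} candidates")
```

For n = 30 the IQP-style estimate comes out at roughly 630 shots, the same order of magnitude as the 776 shots reported on hardware, while the 1-layer QAOA scaling would imply tens of thousands of shots.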

Performance Data and Validation Against Classical Methods

Quantitative performance data is essential for objectively comparing quantum and classical approaches. The table below consolidates key metrics from recent experimental studies.

Table 3: Experimental Performance Data from Recent Studies

| Algorithm & Experiment | System / Problem | Key Performance Metric | Reported Result | Classical Benchmark |

|---|---|---|---|---|

| VQE (SQD-IEF-PCM) [28] | Methanol in water (Solvation Energy) | Accuracy (Deviation from benchmark) | < 0.2 kcal/mol | CASCI-IEF-PCM / MNSol Database |

| QAOA (IQP-style) [29] | Sherrington-Kirkpatrick (32 qubits) | Probability of optimal solution | (2^{-0.31n}) (average) | 1-layer QAOA ((2^{-0.5n})) |

| QAOA (IQP-style) [29] | 30-qubit instance on H2 hardware | Success in finding optimal solution | Optimal solution found in 776 shots | Search space: (2^{30} > 10^9) |

| Quantum Echoes (OTOC) [23] | Molecular structure (15 & 28 atoms) | Computational Speed | 13,000x faster than supercomputer | Classical supercomputer simulation |

| General VQE Simulation [26] | H₂ molecule (4 qubits) | Result Agreement | Physically consistent results | Classical eigensolver |

Successfully executing quantum algorithm experiments requires a suite of software, hardware, and methodological "reagents." The following table details key components cited in contemporary research.

Table 4: Essential Research Reagents and Resources

| Tool Category | Specific Examples | Function & Relevance |

|---|---|---|

| Quantum Software Simulators | PennyLane, Qiskit, CUDA-Q [30] [29] | Provide environments for algorithm design, simulation, and hybrid quantum-classical workflow management. |

| Classical Optimizers | BFGS, SPSA, COBYLA [26] | Classical subroutines that adjust parameters of the quantum circuit to minimize the cost function (critical for VQE/QAOA). |

| Ansatz Architectures | UCCSD [26], Hardware Efficient, IQP [29] | Parameterized quantum circuits that define the search space for the algorithm's solution. |

| High-Performance Computing (HPC) | Job arrays, Containerization (Docker/Singularity) [26] | Manage the computational burden of classical optimization and enable scalable, reproducible simulations. |

| Chemical Modeling Tools | IEF-PCM [28], STO-3G Basis Set [26] | Incorporate realistic chemical conditions (e.g., solvation) into the quantum simulation, enhancing practical relevance. |

| Quantum Hardware Platforms | IBM's superconducting processors [28], Quantinuum's trapped-ion H-Series [29] | Physical devices for running quantum circuits; their fidelity and connectivity are critical for algorithm performance. |

Workflow and Algorithm Diagrams

VQE for Solvated Molecules Workflow

The following diagram illustrates the iterative hybrid workflow for simulating solvated molecules using VQE, as demonstrated with the SQD-IEF-PCM method [28].

Diagram Title: VQE Workflow with Implicit Solvent

QAOA/IQP Comparative Structure

This diagram contrasts the standard QAOA structure with the enhanced IQP-style approach, highlighting the source of performance improvements [29].

Diagram Title: QAOA vs. Enhanced IQP Structure

The comparative analysis of VQE, QAOA, and QPE reveals a nuanced landscape for quantum algorithm application in computational chemistry. VQE has demonstrated immediate utility, achieving chemical accuracy for small molecules and now incorporating realistic solvent effects, making it a practical tool for near-term research [28]. QAOA, while primarily an optimization algorithm, shows remarkable efficiency in solving combinatorial problems with minimal quantum resources, a promising sign for its application in specific chemistry domains [29]. In contrast, QPE remains the gold standard for precision but awaits the advent of fully fault-tolerant quantum hardware. The emergence of novel, verifiable algorithms like Quantum Echoes, which has demonstrated a 13,000-fold speedup over classical supercomputers for a specific task, signals a pivotal shift toward tangible quantum utility in molecular structure problems [23]. The ongoing validation of these algorithms against robust classical methods remains the critical step in bridging the gap between theoretical promise and practical application in drug discovery and materials science.

This guide provides an objective comparison of Coupled Cluster with Single, Double, and Perturbative Triple Excitations (CCSD(T)), Density Functional Theory (DFT), and emerging machine learning (ML) methods for quantum chemistry simulations. It is structured for researchers and professionals who need to select appropriate computational methods for validating quantum chemistry results in fields like drug development and materials science.

Quantum chemistry simulations provide essential insights into molecular structure, reactivity, and properties. For decades, the field has been dominated by a trade-off between the high accuracy of CCSD(T) and the computational efficiency of DFT. CCSD(T) is widely considered the "gold standard" for its high reliability, often matching experimental results [31]. However, its steep computational cost, which scales poorly with system size, has traditionally restricted its application to small molecules [31]. Conversely, DFT offers a more practical balance of cost and accuracy for larger systems but can suffer from inaccuracies due to its dependence on the chosen approximate functional [32].

Recent advances in machine learning are bridging this gap. New ML architectures are now being trained on CCSD(T) data to achieve gold-standard accuracy at a fraction of the computational cost, while other approaches are refining lower-level quantum methods to enhance their precision and scope [33] [31] [34]. This guide compares these methods through quantitative benchmarks and detailed experimental protocols.

The table below summarizes the key characteristics, strengths, and limitations of CCSD(T), DFT, and leading machine-learning approaches.

| Method | Computational Cost (Scaling) | Typical System Size | Key Strengths | Primary Limitations |

|---|---|---|---|---|

| CCSD(T) | Very High (O(N⁷)) | ~10s of atoms [31] | "Gold standard" accuracy; high reliability against experiment [31] [32] | Prohibitively expensive for large systems; poor scaling [31] |

| DFT | Moderate (O(N³) to O(N⁴)) | ~100s to 1000s of atoms | Good balance of speed and accuracy; widely used for condensed phases [33] [31] | Functional-dependent accuracy; can be unreliable for specific interactions (e.g., dispersion) [32] |

| Δ-Machine Learning | Low (after training) | ~1000s of atoms [33] | Corrects low-level methods (e.g., DFT) to CCSD(T) accuracy [33] [35] | Requires high-quality training data; risk of poor out-of-distribution generalization |

| MEHnet (MIT) | Low (after training) | ~1000s of atoms [31] | Multi-task property prediction from one model; CCSD(T)-level accuracy [31] | Model development and training complexity |

| NN-xTB | Very Low | ~1000s of atoms [34] | Near-DFT accuracy at semi-empirical cost; strong generalization [34] | Accuracy ceiling below CCSD(T) |

Quantitative Benchmarking and Accuracy Assessment

Benchmarking Against Experimental Data

CCSD(T) demonstrates exceptional agreement with experimental measurements. For example, in calculating the enthalpy of formation for Si–O–C–H molecules, CCSD(T) results typically deviate from experimental data by only about 1–2 kJ/mol [32]. This high fidelity makes it the preferred benchmark for assessing other theoretical methods.

DFT Functional Performance on Specific Systems

The performance of DFT is highly functional-dependent. A systematic study on Si–O–C–H molecules benchmarked against CCSD(T) revealed significant variations in accuracy across different properties [32]. The table below summarizes the best-performing functionals for this system.

| Property Evaluated | Best Performing Functional(s) | Mean Absolute Error (MAE) vs. CCSD(T) |

|---|---|---|

| Enthalpy of Formation | M06-2X [32] | Lowest MAE |

| Vibrational Frequencies & Zero-Point Energies | SCAN [32] | Lowest MAE |

| Reaction Energies & Relative Stability | B2GP-PLYP [32] | Smallest Errors |

| Consistent Overall Performance | PW6B95 [32] | Consistently Low Errors |

For ion-solvent binding energies, the ωB97M-V and ωB97X-V functionals have been identified as cost-effective, with mean errors well below the threshold of chemical accuracy (1 kcal/mol, ∼4.2 kJ mol⁻¹) relative to DLPNO-CCSD(T) benchmarks [36].

Machine Learning Model Benchmarks

Machine learning models show dramatic improvements in accuracy and efficiency:

- MEHnet: When tested on hydrocarbon molecules, this model outperformed DFT counterparts and closely matched experimental results from published literature [31].

- NN-xTB: This method reduced the MAE for vibrational frequencies from 200.6 cm⁻¹ (with the underlying GFN2-xTB method) to 12.7 cm⁻¹, a reduction of over 90%. It also achieves DFT-like accuracy on the GMTKN55 benchmark (WTMAD-2 of 5.6 kcal/mol) at a near-semiempirical computational cost [34].

- Δ-ML for Potential Energy Surfaces (PES): A Δ-ML PES for acetylacetone reproduced the hydrogen-transfer barrier with excellent agreement, showing a barrier of 3.15 kcal/mol versus the direct FNO-CCSD(T) value of 3.11 kcal/mol [35].

Key Experimental and Workflow Protocols

The Δ-Machine Learning (Δ-ML) Workflow

The Δ-ML approach involves learning the difference (Δ) between a high-accuracy, expensive method and a lower-accuracy, fast method [33] [35]. A typical workflow for creating a CCSD(T)-accurate ML potential is as follows:

- Dataset Generation:

  - Generate diverse molecular configurations for the system of interest.

  - Use the Frozen Natural Orbital (FNO) approximation to accelerate CCSD(T) calculations, achieving speedups by a factor of 30-40 while maintaining fidelity with conventional CCSD(T) results [35].

  - Compute reference energies and forces at the FNO-CCSD(T) level for these configurations.

- Model Training:

  - Train the ML model on the difference (Δ) between the FNO-CCSD(T) reference values and the corresponding values from the lower-accuracy, fast method [33] [35].

- Validation:

  - Validate the final Δ-ML potential on unseen configurations, assessing its performance on energies, forces, structural properties, and vibrational frequencies [35].
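The workflow above can be illustrated with a toy one-dimensional example. This is only a sketch under strong assumptions: `e_low` and `e_high` are hypothetical analytic stand-ins for the cheap and expensive methods, and a polynomial fit replaces the neural-network or kernel models used in practice. Because the toy Δ is itself a quartic polynomial, the fit is essentially exact here; real corrections are not that simple.

```python
import numpy as np


def e_low(x):
    """Cheap stand-in method: harmonic approximation around x = 1."""
    return 0.5 * (x - 1.0) ** 2


def e_high(x):
    """Expensive stand-in reference: harmonic plus anharmonic terms."""
    d = x - 1.0
    return 0.5 * d ** 2 - 0.15 * d ** 3 + 0.02 * d ** 4


# 1. Dataset generation: sample configurations and compute the difference.
x_train = np.linspace(0.5, 1.8, 12)
delta_train = e_high(x_train) - e_low(x_train)

# 2. Model training: fit the correction, not the total energy.
coeffs = np.polyfit(x_train, delta_train, deg=4)


def e_delta_ml(x):
    """Low-level energy plus the learned correction."""
    return e_low(x) + np.polyval(coeffs, x)


# 3. Validation on configurations that were not in the training set.
x_test = np.linspace(0.55, 1.75, 7)
mae_low = float(np.mean(np.abs(e_low(x_test) - e_high(x_test))))
mae_dml = float(np.mean(np.abs(e_delta_ml(x_test) - e_high(x_test))))
print(f"MAE low-level: {mae_low:.4f}, MAE delta-ML: {mae_dml:.2e}")
```

The design point is that the correction is usually smoother and smaller than the total energy, so it can be learned from fewer expensive reference calculations.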

The WASP for Multireference Systems

Simulating transition metal catalysts requires accurately capturing multireference character, which is poorly described by standard DFT. The Weighted Active Space Protocol (WASP) addresses this [37]:

- Wavefunction Sampling: For a set of molecular geometries along a reaction path, compute multiconfigurational wavefunctions using a method like MC-PDFT.

- Consistent Labeling: For a new geometry, generate a unique and consistent wavefunction as a weighted combination of wavefunctions from the k-nearest known structures. Weights are based on the similarity (e.g., geometric root-mean-square deviation) to the new geometry.

- ML Potential Training: Use these consistently labeled energies and forces to train a machine-learned interatomic potential. This enables multireference molecular dynamics simulations at speeds millions of times faster than the original quantum chemistry calculations [37].
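The similarity-weighting idea in the second step can be sketched as follows. This is not the published WASP implementation: the inverse-RMSD weighting, the function names, and the two-configuration coefficient vectors are all assumptions made for illustration, and the structures are assumed to be pre-aligned.

```python
import numpy as np


def rmsd(a, b):
    """RMSD between two (n_atoms, 3) coordinate arrays (assumed aligned)."""
    return float(np.sqrt(np.mean(np.sum((a - b) ** 2, axis=1))))


def knn_weights(new_geom, known_geoms, k=2, eps=1e-12):
    """Indices of the k nearest known geometries and normalized weights.

    Weights are inverse-RMSD (an assumed choice); eps avoids division by zero.
    """
    dists = np.array([rmsd(new_geom, g) for g in known_geoms])
    nearest = np.argsort(dists)[:k]
    inv = 1.0 / (dists[nearest] + eps)
    return nearest, inv / inv.sum()


def blend_wavefunction(new_geom, known_geoms, known_coeffs, k=2):
    """Weighted combination of known coefficient vectors, renormalized."""
    idx, w = knn_weights(new_geom, known_geoms, k)
    blended = sum(wi * known_coeffs[i] for wi, i in zip(w, idx))
    return blended / np.linalg.norm(blended)


def diatomic(r):
    """Hypothetical diatomic geometry with bond length r along x."""
    return np.array([[0.0, 0.0, 0.0], [r, 0.0, 0.0]])


# Known structures along a bond stretch, with illustrative CI-like coefficients.
known_geoms = [diatomic(1.0), diatomic(1.5), diatomic(2.0)]
known_coeffs = [np.array([1.0, 0.0]), np.array([0.8, 0.6]), np.array([0.6, 0.8])]

new_geom = diatomic(1.25)
blended = blend_wavefunction(new_geom, known_geoms, known_coeffs, k=2)
print(blended)
```

A real protocol also has to keep orbital ordering and phases consistent between structures before any blending is meaningful; the toy vectors here sidestep that issue.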

The advancement of machine learning in quantum chemistry relies on key software methods, datasets, and computational tools.

| Resource Name | Type | Primary Function | Relevance to Validation |

|---|---|---|---|

| MEHnet [31] | Neural Network Architecture | Multi-task prediction of electronic properties at CCSD(T) level accuracy. | Provides a single, unified model for predicting multiple molecular properties with high fidelity. |

| WASP [37] | Computational Algorithm | Ensures consistent wavefunction labeling for training ML potentials on multireference data. | Enables accurate and efficient simulation of complex systems like transition metal catalysts. |

| NN-xTB [34] | ML-Augmented Model | Adds neural-network corrections to a semi-empirical quantum method (xTB). | Offers a fast pathway for dynamics and screening with accuracy approaching that of DFT. |

| OMol25 Dataset [38] | Training Dataset | Large-scale DFT dataset with 100M+ calculations across 83 elements, includes solvation. | Provides broad chemical diversity for training and benchmarking generalizable ML models. |

| QM9 Dataset [39] | Benchmark Dataset | DFT-calculated properties for ~134k small organic molecules. | Serves as a foundational benchmark for developing and comparing new machine learning models. |

The computational chemistry landscape is undergoing a rapid transformation driven by machine learning. While CCSD(T) remains the unchallenged benchmark for accuracy and DFT continues to be a versatile workhorse, new hybrid methods are successfully bridging the gap between these classical approaches. Techniques like Δ-ML, MEHnet, and WASP are making it increasingly feasible to perform routine simulations of condensed phases, complex catalytic cycles, and large biomolecules with near-CCSD(T) accuracy but at drastically reduced computational cost [33] [31] [37].

Future progress hinges on the development of more comprehensive and chemically diverse benchmark datasets, such as OMol25 [38], and continued innovation in neural network architectures that incorporate physical constraints. The ultimate goal, actively pursued by several groups, is to create models that deliver CCSD(T)-level accuracy across the entire periodic table at a computational cost lower than that of DFT [31]. Models of this kind would substantially accelerate the discovery of new materials, catalysts, and pharmaceuticals.

The pursuit of quantum advantage in computational chemistry hinges on developing algorithms that can accurately simulate molecular systems beyond the reach of classical methods. Hybrid quantum-classical approaches represent a promising pathway toward this goal, leveraging the complementary strengths of both computational paradigms. Among these emerging methods, the ADAPT-Generator Coordinate Inspired Method (ADAPT-GCIM) framework has shown particular promise for addressing strongly correlated quantum chemical systems that challenge conventional computational approaches.

This guide provides a comprehensive comparison of the ADAPT-GCIM framework against alternative quantum-classical methods, examining their theoretical foundations, experimental performance, and practical implementation. The analysis is situated within the broader research context of validating quantum chemistry results against well-established classical computational methods, offering researchers in chemistry and drug development an objective assessment of current capabilities and limitations in the quantum computing landscape.

Theoretical Foundation: From Constrained Optimization to Generalized Eigenvalue Problems

The Limitations of Conventional Hybrid Approaches

Hybrid quantum-classical computational strategies, particularly the Variational Quantum Eigensolver (VQE), have emerged as leading candidates for leveraging near-term quantum devices. Conventional VQE approaches formulate quantum chemistry problems as constrained optimization challenges, where parameterized quantum circuits are optimized to minimize the energy expectation value of a molecular Hamiltonian [40]. Mathematically, this is expressed as:

\[ E_{g} = \min_{\vec{\theta}} \langle \psi_{\mathrm{VQE}}(\vec{\theta}) | H | \psi_{\mathrm{VQE}}(\vec{\theta}) \rangle \]
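A minimal classical emulation of this variational loop is sketched below. The single-qubit Hamiltonian, the one-parameter rotation ansatz, the starting angle, and the step size are all hypothetical choices for illustration; on real hardware the energy would be estimated from repeated measurements rather than computed from a state vector.

```python
import numpy as np

# Pauli matrices and a hypothetical toy Hamiltonian H = Z + 0.5 X.
X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
H = Z + 0.5 * X


def ansatz(theta):
    """State prepared by a Y-rotation of |0>: [cos(theta/2), sin(theta/2)]."""
    return np.array([np.cos(theta / 2.0), np.sin(theta / 2.0)])


def energy(theta):
    """Energy expectation value <psi(theta)| H |psi(theta)>."""
    psi = ansatz(theta)
    return float(psi @ H @ psi)


def parameter_shift_gradient(theta):
    """Exact gradient for this rotation ansatz via the parameter-shift rule."""
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))


# Plain gradient descent on the single variational parameter.
theta = 0.1
for _ in range(200):
    theta -= 0.4 * parameter_shift_gradient(theta)

e_vqe = energy(theta)
e_exact = float(np.linalg.eigvalsh(H)[0])
print(f"VQE energy: {e_vqe:.8f}, exact ground state: {e_exact:.8f}")
```

With one parameter the landscape is a single sinusoid, so the optimizer cannot get trapped; the difficulties listed next arise as the number of parameters and qubits grows.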

However, these approaches face several fundamental limitations:

- Barren plateaus: Gradients vanish exponentially with system size, hindering convergence [41] [40]

- Ansatz dependence: Performance heavily relies on the choice of parameterized circuit structure [40]

- Local minima: Optimization landscapes contain numerous local minima that trap conventional minimizers [40]

- Circuit depth: High-depth circuits required for complex systems exceed current hardware capabilities [42] [43]

The GCIM Foundation: Bridging Nuclear Physics and Quantum Chemistry

The Generator Coordinate Method (GCM), originally developed in nuclear physics to model collective phenomena like nuclear deformation, provides an alternative theoretical foundation [41] [40]. Rather than solving constrained optimization problems, GCM constructs wavefunctions as superpositions of non-orthogonal many-body basis states and projects the system Hamiltonian into an effective Hamiltonian through a generalized eigenvalue problem.

This approach circumvents the highly nonlinear parametrization challenges of VQE and provides a more efficient framework for extending the probed subspaces on which target functions are built [41]. The GCIM (Generator Coordinate Inspired Method) adapts this nuclear physics framework for quantum chemical applications, using Unitary Coupled Cluster (UCC) excitation generators to construct non-orthogonal, overcomplete many-body bases [40].

The ADAPT-GCIM Framework: Methodology and Workflow

The ADAPT-GCIM framework enhances the base GCIM approach with an adaptive scheme that automatically constructs optimal many-body basis sets from a pool of UCC excitation generators [40]. This creates a hierarchical quantum-classical strategy that balances subspace expansion and ansatz optimization.

Core Computational Workflow

The following diagram illustrates the adaptive workflow of the ADAPT-GCIM framework:

Mathematical Foundation

ADAPT-GCIM employs UCC excitation generators to construct generating functions, creating a subspace consisting of multiple non-orthogonal superpositioned states [40]. For a molecular system with N electrons in M spin orbitals, the approach uses a sequence of K Givens rotations (equivalent to UCC single excitations) applied to a reference state |φ₀⟩:

\[ |\psi(\vec{\theta})\rangle = \prod_{i=1}^{K} G_{p_{i},q_{i}}(\theta_{i}) \, |\phi_{0}\rangle \]

Each rotation generates a superposition of at most two states, so the total number of configurations \(n_c\) in the superposed ansatz does not exceed \(2^K\) [40]. The method then projects the system Hamiltonian into this subspace and solves the resulting generalized eigenvalue problem:

\[ \mathbf{H}\vec{c} = E\,\mathbf{S}\vec{c} \]

where \(\mathbf{H}\) is the projected Hamiltonian matrix, \(\mathbf{S}\) is the overlap matrix, and \(\vec{c}\) contains the expansion coefficients.
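The projection and generalized eigenvalue step can be sketched classically as follows. This is a toy illustration, not the ADAPT-GCIM implementation: the 4×4 Hamiltonian is hypothetical, the rotations act directly on a small configuration space rather than on spin orbitals, the angles are fixed at an arbitrary value, and the matrix elements are computed exactly instead of being measured on a quantum device.

```python
import numpy as np


def givens(dim, p, q, theta):
    """Rotation by theta in the (p, q) plane of a dim-dimensional space."""
    g = np.eye(dim)
    c, s = np.cos(theta), np.sin(theta)
    g[p, p], g[q, q] = c, c
    g[p, q], g[q, p] = -s, s
    return g


def solve_generalized(h_sub, s_sub, tol=1e-10):
    """Eigenvalues of H c = E S c via canonical orthogonalization.

    Near-null directions of S are dropped, which matters when the basis
    is overcomplete or nearly linearly dependent.
    """
    s_vals, s_vecs = np.linalg.eigh(s_sub)
    keep = s_vals > tol
    x = s_vecs[:, keep] / np.sqrt(s_vals[keep])
    return np.linalg.eigvalsh(x.T @ h_sub @ x)


# Hypothetical symmetric Hamiltonian in a 4-configuration space.
H = np.array([
    [-1.0, 0.2, 0.1, 0.0],
    [0.2, -0.5, 0.0, 0.1],
    [0.1, 0.0, 0.3, 0.2],
    [0.0, 0.1, 0.2, 0.8],
])
phi0 = np.array([1.0, 0.0, 0.0, 0.0])  # reference state


def gcim_energy(generators, theta=0.3):
    """Lowest energy in the span of phi0 and the rotated states G(theta) phi0."""
    basis = [phi0] + [givens(4, p, q, theta) @ phi0 for p, q in generators]
    b = np.column_stack(basis)   # non-orthogonal basis vectors as columns
    h_sub = b.T @ H @ b          # projected Hamiltonian
    s_sub = b.T @ b              # overlap matrix
    return float(solve_generalized(h_sub, s_sub)[0])


e_exact = float(np.linalg.eigvalsh(H)[0])
e_small = gcim_energy([(0, 1)])
e_full = gcim_energy([(0, 1), (0, 2), (0, 3)])
print(f"exact: {e_exact:.6f}, 2-state: {e_small:.6f}, 4-state: {e_full:.6f}")
```

No angle is optimized in this sketch: the energy improves only because the subspace grows, and it matches the exact value once the basis spans the full space. That is the sense in which the result is exact within the chosen subspace.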

Performance Comparison: ADAPT-GCIM vs. Alternative Methods

Benchmarking Within the QRDR Framework

Recent research has evaluated ADAPT-GCIM within the Quantum Infrastructure for Reduced-Dimensionality Representations (QRDR) pipeline, which integrates coupled cluster downfolding techniques with quantum solvers [42] [43]. This framework allows comprehensive comparison against other prominent quantum-classical algorithms:

Table 1: Quantum Solver Comparison in QRDR Pipeline

| Method | Theoretical Foundation | Key Strength | Key Limitation | Correlation Handling |

|---|---|---|---|---|

| ADAPT-GCIM | Generalized eigenvalue problem in dynamic subspace | Avoids barren plateaus; balanced accuracy/efficiency [41] [40] | Requires more measurements than a single VQE iteration [40] | Strongly correlated systems [40] |

| ADAPT-VQE | Iterative ansatz construction & optimization | Systematically grows ansatz [40] | Optimization challenges; barren plateaus [40] | Static correlation dominant [42] |

| Qubit-ADAPT-VQE | Qubit-based operators rather than fermionic | Reduced circuit depth [42] | May sacrifice chemical accuracy [42] | Moderate correlation [42] |

| UCCGSD | Unitary Coupled Cluster with Generalized Single & Double excitations | Size-extensive; preserves physical symmetries [42] [43] | High circuit depth; challenging optimization [42] | Dynamical correlation [42] |

Accuracy and Efficiency Metrics

The performance of these methods has been assessed across several molecular systems with varying correlation characteristics:

Table 2: Performance Comparison Across Molecular Systems

| Molecular System | Method | Ground-State Energy Accuracy (qualitative) | Circuit Depth | Measurement Requirements | Classical Resources |

|---|---|---|---|---|---|

| N₂ (equilibrium) | ADAPT-GCIM | High (exact within subspace) [40] | Low to moderate [40] | High [40] | Moderate (eigenvalue solver) |

| N₂ (equilibrium) | ADAPT-VQE | Moderate to high [42] | Moderate to high [42] | Low to moderate [42] | High (optimizer) |

| N₂ (equilibrium) | UCCGSD | Moderate [42] | High [42] | Low [42] | High (optimizer) |

| N₂ (stretched) | ADAPT-GCIM | High (exact within subspace) [40] | Low to moderate [40] | High [40] | Moderate (eigenvalue solver) |

| N₂ (stretched) | ADAPT-VQE | Moderate (optimization challenges) [42] | Moderate to high [42] | Low to moderate [42] | High (optimizer) |

| N₂ (stretched) | UCCGSD | Low to moderate (static correlation) [42] | High [42] | Low [42] | High (optimizer) |

| Benzene | ADAPT-GCIM | High [42] [43] | Low to moderate [40] | High [40] | Moderate (eigenvalue solver) |

| Benzene | ADAPT-VQE | Moderate [42] [43] | Moderate to high [42] | Low to moderate [42] | High (optimizer) |

| Free-base porphyrin | ADAPT-GCIM | High [42] [43] | Low to moderate [40] | High [40] | Moderate (eigenvalue solver) |

| Free-base porphyrin | ADAPT-VQE | Moderate [42] [43] | High [42] | Low to moderate [42] | High (optimizer) |

Method Selection Guidelines

The relationship between system characteristics and suitable quantum-classical methods can be visualized as follows:

Experimental Protocols and Implementation

The Scientist's Toolkit: Essential Research Reagents

Implementing hybrid quantum-classical methods requires both theoretical components and computational tools:

Table 3: Essential Research Components for Hybrid Quantum-Classical Chemistry

| Component | Function | Implementation in ADAPT-GCIM |

|---|---|---|

| UCC Excitation Generator Pool | Provides elementary operations for constructing many-body basis states [40] | Pool of operators (e.g., singles, doubles) selected based on molecular system |

| Reference State | Initial approximation of target wavefunction | Hartree-Fock state; improved initial states (e.g., DMRG) recommended [44] |

| Classical Eigenvalue Solver | Solves generalized eigenvalue problem in constructed subspace | Standard numerical linear algebra libraries (e.g., LAPACK) |

| Quantum Simulator/Hardware | Executes quantum circuits to measure matrix elements | State-vector simulators (e.g., SV-Sim) or actual quantum hardware [42] [43] |

| Measurement Protocol | Determines Hamiltonian and overlap matrix elements | Quantum expectation value measurements with error mitigation [40] |

| Downfolding Framework | Reduces problem dimensionality to active space | Coupled cluster downfolding for incorporating dynamical correlation [42] [43] |

QRDR Computational Pipeline

The Quantum Infrastructure for Reduced-Dimensionality Representations (QRDR) provides a comprehensive experimental framework for comparing quantum-classical methods [42] [43]:

- Hamiltonian Downfolding: Classical computation of effective Hamiltonians using coupled cluster theory, reducing hundreds of orbitals to tractable active spaces

- Quantum Solver Execution: Implementation of quantum algorithms (ADAPT-GCIM, ADAPT-VQE, UCCGSD) on selected backends

- Energy Extraction: Calculation of ground-state energies from the quantum computations

- Accuracy Assessment: Comparison with full configuration interaction (FCI) or exact diagonalization where feasible