Validating Quantum Chemical Methods with Spectroscopic Data: A Modern Guide for Computational Chemists and Drug Developers


Abstract

This article provides a comprehensive framework for validating quantum chemical methods against experimental spectroscopic data, a critical step for ensuring reliability in computational chemistry and drug discovery. It covers foundational principles, explores advanced methodologies integrating machine learning and AI, addresses common troubleshooting and optimization challenges, and establishes rigorous validation and comparative analysis protocols. Tailored for researchers, scientists, and drug development professionals, the content synthesizes the latest advancements—including Large Wavefunction Models (LWMs), AI-powered autonomous labs, and massive datasets like OMol25—to offer practical strategies for enhancing the predictive accuracy and trustworthiness of computational models in biomedical research.

The Quantum-Spectroscopy Interface: Core Principles and the Critical Need for Validation

The validation of quantum chemical methods using spectroscopic data is a critical process in computational chemistry and drug development. It ensures that theoretical predictions accurately reflect experimental reality, enabling researchers to trust computational models for elucidating molecular structures, reaction mechanisms, and electronic properties. This guide provides a comprehensive comparison of various quantum chemical methods, assessing their performance against experimental spectroscopic data and high-level theoretical benchmarks.

As computational power has increased and algorithms have matured, the integration of machine learning (ML) has begun to revolutionize the field. ML approaches can achieve accuracy comparable to standard quantum chemical methods while reducing computational time by several orders of magnitude, offering promising avenues for accelerating research [1] [2]. Furthermore, the development of extensive, gold-standard benchmark databases provides the essential foundation for both validating existing methods and training new ML models [3].

Comparative Analysis of Quantum Chemical Methods

Quantum chemical methods form a hierarchy of approximations for solving the electronic Schrödinger equation, each with a different trade-off between computational cost and accuracy. Ab initio methods, such as Hartree-Fock (HF) and post-Hartree-Fock approaches, solve the electronic structure problem from first principles without empirical parameters. Density Functional Theory (DFT) methods treat exchange and correlation through an approximate exchange-correlation functional, typically offering a good balance of cost and accuracy. Semi-empirical methods introduce parameterized approximations that speed up calculations considerably at the cost of some transferability. More recently, machine learning (ML) potentials have emerged as tools that learn relationships from quantum chemical data, enabling highly efficient predictions of molecular properties and spectra [2].

Performance Benchmarking Data

The following tables summarize the performance of various quantum chemical and machine learning methods against high-level benchmarks and experimental data.

Table 1: Performance of Methods for Proton Transfer Reaction Energies (Mean Unsigned Error, kJ/mol) [4]

| Method | Category | -NH3 | COOH | +CNH2 | NH | PhOH | Q | -SH | H2O | Average |
|---|---|---|---|---|---|---|---|---|---|---|
| PM7 | Semi-Empirical | 13.0 | 10.3 | 14.1 | 7.03 | 10.2 | 14.1 | 27.6 | 15.7 | 13.4 |
| GFN2-xTB | Semi-Empirical/TB-DFT | 22.2 | 10.0 | 13.0 | 11.7 | 9.70 | 20.1 | 5.60 | 12.2 | 13.5 |
| DFTB3 | Tight-Binding DFT | 14.4 | 5.74 | 23.1 | 30.1 | 20.8 | 20.7 | 4.65 | 5.70 | 15.2 |
| PM6-ML | ML-Corrected Semi-Empirical | 7.26 | 15.1 | 9.38 | 10.3 | 5.92 | 14.7 | 14.8 | 8.13 | 10.8 |
| B3LYP | DFT (Hybrid Functional) | 7.29 | 5.41 | 4.73 | 9.54 | 7.15 | 11.4 | 4.07 | 8.94 | 7.44 |
| M06L | DFT (Meta-GGA Functional) | 6.99 | 3.94 | 3.82 | 10.1 | 9.19 | 15.7 | 3.33 | 8.06 | 8.35 |
| CCSD(T)/CBS | Gold-Standard Ab Initio | - | - | - | - | - | - | - | - | Reference |

Table 2: Comparative Performance in Reaction Mechanism Studies and Spectral Predictions

| Method / Study | System | Key Performance Metric | Comparison to Standard Method |
|---|---|---|---|
| AI-Powered (MLAtom) [1] | Silanediamine Cyclization | ~800x speedup in geometry optimization; ~2000x speedup in frequency calculations. | "Shows same accuracy as standard quantum chemical approach." |
| SNS-MP2 [3] | Dimer Interaction Energies (DES5M database) | Accuracy comparable to CCSD(T)/CBS. | Provides gold-standard interaction energies at a greatly reduced computational cost. |
| DFT/B3LYP/6-311+G(d,p) [5] | Phenylephrine Molecule (IR, Raman, UV-Vis) | Accurately predicted optimized geometry, vibrational frequencies, and UV-Vis spectrum. | Validated against experimental spectroscopic data with good agreement. |
| Machine Learning Spectroscopy [2] | Various Molecules (UV, IR, X-ray, NMR) | Rapid prediction of spectra from molecular structure. | Complements traditional computational spectroscopy; enables high-throughput screening. |

Key Findings and Trends

The benchmark data reveal several trends. For proton transfer reactions, the DFT functionals B3LYP and M06L give the lowest average errors in Table 1 (7.44 and 8.35 kJ/mol), though their performance varies considerably across chemical groups; M06L, for example, ranges from 3.33 kJ/mol for -SH to 15.7 kJ/mol for Q [4]. Among the faster approximate methods, PM7 and GFN2-xTB show similar average errors of about 13.5 kJ/mol, which may be acceptable for rapid screening or for very large systems. Applying a machine learning correction (PM6-ML) lowers the average error to 10.8 kJ/mol, narrowing the gap to DFT and illustrating a useful strategy for improving fast methods [4].

Studies on organosilicon reactions and molecular spectroscopy further suggest that ML-powered approaches can match the accuracy of standard quantum chemistry while achieving speedups of several hundred to a few thousand times (about 800x for geometry optimization and 2000x for frequency calculations in the MLAtom study), greatly expanding the range of systems that can be studied computationally [1] [2] [3].

Experimental Protocols for Method Validation

Protocol 1: Validating with Gold-Standard Interaction Energies

This protocol outlines using the DES370K and DES5M benchmark databases to validate the accuracy of a new quantum method for predicting noncovalent interactions, which are crucial in drug binding and materials science [3].

Workflow: Method Validation via Benchmark Databases

Detailed Steps:

Database Selection and Access:

- Obtain the DES370K (CCSD(T)/CBS reference energies) or DES5M (SNS-MP2, machine-learning-based energies of near gold-standard quality) databases, which contain Cartesian coordinates and interaction energies for roughly 370,000 and 5 million dimer geometries, respectively [3].

- The DES15K subset can be used for initial, less computationally demanding validation.

Geometry and Data Processing:

- Extract the Cartesian coordinates for the dimer geometries from the database.

- Extract the corresponding reference interaction energies, noting the energy unit used by the database (kJ/mol or kcal/mol) so that all comparisons use a consistent unit.

Quantum Chemical Calculation:

- Using the method under validation (e.g., a new DFT functional, semi-empirical method, or force field), calculate the single-point energy for each dimer geometry and its constituent monomers.

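- Compute the interaction energy as \( \Delta E = E_{\text{dimer}} - (E_{\text{monomer A}} + E_{\text{monomer B}}) \). Evaluate each monomer at the geometry it adopts within the dimer (no relaxation); with finite Gaussian basis sets, a counterpoise correction for basis-set superposition error is also commonly applied.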
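The interaction-energy step can be sketched in a few lines of Python. This is a minimal illustration, assuming the code under validation returns total energies in hartree; the function name and the example energies are hypothetical, and the conversion factors are the standard CODATA-derived values.

```python
# Minimal sketch of the supermolecular interaction energy used in Protocol 1.
# Assumption: total energies are in hartree, and monomer energies were computed
# at the (unrelaxed) geometries the monomers adopt inside the dimer.

HARTREE_TO_KCAL_MOL = 627.509474   # 1 Eh in kcal/mol
HARTREE_TO_KJ_MOL = 2625.499639    # 1 Eh in kJ/mol

_FACTORS = {"kcal/mol": HARTREE_TO_KCAL_MOL, "kJ/mol": HARTREE_TO_KJ_MOL}


def interaction_energy(e_dimer: float, e_mono_a: float, e_mono_b: float,
                       unit: str = "kcal/mol") -> float:
    """Return Delta E = E_dimer - (E_A + E_B), converted from hartree to `unit`."""
    if unit not in _FACTORS:
        raise ValueError(f"unsupported unit: {unit!r}")
    delta_hartree = e_dimer - (e_mono_a + e_mono_b)
    return delta_hartree * _FACTORS[unit]


if __name__ == "__main__":
    # Hypothetical total energies (hartree) for a weakly bound dimer.
    de = interaction_energy(-152.0, -76.0, -75.99)
    print(f"Delta E = {de:.3f} kcal/mol")  # negative means the dimer is bound
```

Converting once, at the point where the interaction energy is formed, keeps every later comparison in the same unit as the reference data.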

Error Calculation and Statistical Analysis:

- For each dimer, calculate the error \( \text{Error} = \Delta E_{\text{method}} - \Delta E_{\text{reference}} \).

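- Perform statistical analysis across the dataset:

  - Mean Unsigned Error (MUE), also called Mean Absolute Error (MAE): the average of the absolute errors.

  - Root-Mean-Square Error (RMSE): weights large errors more heavily than the MUE, so an RMSE much larger than the MUE signals outliers.

  - Coefficient of Determination (R²): the fraction of the variance in the reference energies reproduced by the method; a high R² alone does not rule out a systematic offset.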
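The statistics listed above can be computed with standard-library Python. This sketch is illustrative: the function name is hypothetical, it additionally reports the mean signed error (MSE), an extra metric not listed above that exposes systematic bias, and it computes R² as 1 − SS_res/SS_tot rather than as a squared correlation coefficient.

```python
import math
from typing import Dict, Sequence


def error_statistics(predicted: Sequence[float],
                     reference: Sequence[float]) -> Dict[str, float]:
    """Summary error metrics for predicted vs. reference values (same units)."""
    if len(predicted) != len(reference) or len(predicted) == 0:
        raise ValueError("need two non-empty sequences of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    n = len(errors)
    mean_ref = sum(reference) / n
    ss_res = sum(e * e for e in errors)                   # residual sum of squares
    ss_tot = sum((r - mean_ref) ** 2 for r in reference)  # total sum of squares
    return {
        "MSE": sum(errors) / n,                   # mean *signed* error (bias)
        "MUE": sum(abs(e) for e in errors) / n,   # identical to MAE
        "RMSE": math.sqrt(ss_res / n),
        "R2": 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan"),
    }


if __name__ == "__main__":
    # Hypothetical interaction energies (kcal/mol): method vs. reference.
    print(error_statistics([-5.1, -3.0, -7.4], [-5.0, -3.2, -7.0]))
```

Note that in quantum chemistry "MSE" usually means mean signed error, not mean squared error; labeling it explicitly avoids confusion when results are shared.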

Reporting:

- Report the statistical metrics for the entire dataset and for specific chemical subgroups (e.g., hydrogen-bonded dimers, dispersion-dominated complexes) to identify method strengths and weaknesses.
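A per-subgroup breakdown for the reporting step might look like the following sketch. The group labels are hypothetical annotations the user attaches to each dimer; the sketch does not assume the databases supply such labels in this form.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def mue_by_group(records: Iterable[Tuple[str, float, float]]) -> Dict[str, Tuple[float, int]]:
    """Return {group: (MUE, n)} from (group, predicted, reference) records."""
    abs_sum: Dict[str, float] = defaultdict(float)
    count: Dict[str, int] = defaultdict(int)
    for group, predicted, reference in records:
        abs_sum[group] += abs(predicted - reference)
        count[group] += 1
    return {g: (abs_sum[g] / count[g], count[g]) for g in abs_sum}


if __name__ == "__main__":
    data = [  # hypothetical interaction energies in kcal/mol
        ("hydrogen-bonded", -5.0, -4.0),
        ("hydrogen-bonded", -6.0, -6.5),
        ("dispersion-dominated", -2.0, -1.0),
    ]
    for group, (mue, n) in sorted(mue_by_group(data).items()):
        print(f"{group:22s} MUE = {mue:.2f} kcal/mol (n = {n})")
```

Reporting the count alongside each MUE matters because averages over small subgroups are noisy.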

Protocol 2: Validating with Experimental Spectroscopic Data

This protocol describes the process of validating a quantum chemical method by comparing its predictions directly with experimental spectroscopic data, using the phenylephrine molecule as a case study [5].

Workflow: Spectroscopic Validation

Detailed Steps:

Geometry Optimization:

- Begin with an initial molecular structure.

- Perform a full geometry optimization using the quantum method being validated (e.g., B3LYP/6-311+G(d,p)) to find the minimum energy structure [5]. Confirm the structure is a true minimum by verifying the absence of imaginary frequencies in the vibrational analysis.
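The minimum check above, together with the uniform frequency scaling applied in the next step, can be wrapped in one small helper. This sketch assumes that, as in Gaussian output, imaginary modes are reported as negative wavenumbers; the function name is hypothetical, and the default factor of 0.966 is only the example value quoted for this protocol, since appropriate factors depend on the functional and basis set.

```python
from typing import List, Sequence


def scale_frequencies(freqs_cm1: Sequence[float], factor: float = 0.966) -> List[float]:
    """Verify a true minimum, then apply a uniform harmonic scaling factor.

    Assumes imaginary modes appear as negative numbers in `freqs_cm1`.
    """
    imaginary = [f for f in freqs_cm1 if f < 0.0]
    if imaginary:
        raise ValueError(
            f"{len(imaginary)} imaginary mode(s) found (e.g. {imaginary[0]} cm^-1); "
            "the structure is not a true minimum"
        )
    return [factor * f for f in freqs_cm1]


if __name__ == "__main__":
    print(scale_frequencies([512.3, 1650.8, 3085.2]))  # hypothetical harmonic values
```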

Spectroscopic Property Calculation:

- Vibrational Spectra (IR/Raman): Calculate the harmonic vibrational frequencies and their intensities (IR) or activities (Raman) on the optimized geometry. Apply a scaling factor (e.g., 0.966) to account for anharmonicity and basis set limitations [5].

- UV-Vis Spectrum: Use Time-Dependent DFT (TD-DFT) to calculate electronic excitation energies and oscillator strengths. Convolve the results with line-shape functions (e.g., Gaussian) to generate a simulated spectrum [5].

- Other Spectroscopies: For NMR, calculate chemical shielding tensors; for XPS, calculate core-electron binding energies.
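Gaussian broadening of a TD-DFT stick spectrum can be sketched as follows. Assumptions: excitation energies are in eV, the line width (here a 0.3 eV full width at half maximum) is a user choice rather than a value from [5], broadening is applied on the energy axis, and intensities are in arbitrary units proportional to oscillator strength rather than molar absorptivity. Function names are hypothetical.

```python
import math
from typing import List, Optional, Sequence, Tuple

HC_EV_NM = 1239.84198  # h*c in eV*nm, so lambda(nm) = HC_EV_NM / E(eV)


def broadened_spectrum(energies_ev: Sequence[float],
                       osc_strengths: Sequence[float],
                       fwhm_ev: float = 0.3,
                       grid_nm: Optional[Sequence[float]] = None) -> List[Tuple[float, float]]:
    """Sum of Gaussians (in energy) weighted by oscillator strength, on a wavelength grid."""
    sigma = fwhm_ev / (2.0 * math.sqrt(2.0 * math.log(2.0)))  # FWHM -> standard deviation
    if grid_nm is None:
        grid_nm = [200.0 + 0.5 * i for i in range(601)]      # 200-500 nm in 0.5 nm steps
    spectrum = []
    for lam in grid_nm:
        e = HC_EV_NM / lam
        intensity = sum(f * math.exp(-((e - e0) ** 2) / (2.0 * sigma ** 2))
                        for e0, f in zip(energies_ev, osc_strengths))
        spectrum.append((lam, intensity))
    return spectrum


def lambda_max(spectrum: Sequence[Tuple[float, float]]) -> float:
    """Wavelength (nm) of the most intense point on the simulated spectrum."""
    return max(spectrum, key=lambda point: point[1])[0]


if __name__ == "__main__":
    # Two hypothetical excitations: 4.5 eV (f = 0.25) and 5.6 eV (f = 0.10).
    spec = broadened_spectrum([4.5, 5.6], [0.25, 0.10])
    print(f"simulated lambda_max = {lambda_max(spec):.1f} nm")
```

Broadening on the energy axis keeps the band shape physically uniform; the resulting λ_max can then be compared directly with the experimental value.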
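For NMR, computed isotropic shieldings must be converted to chemical shifts before comparison with experiment. A minimal sketch of two common conversions: referencing to a standard (such as TMS) computed at the same level of theory, \( \delta_i = \sigma_{\text{ref}} - \sigma_i \), and an empirical linear regression of computed shieldings against experimental shifts. Function names and example values are hypothetical.

```python
from typing import List, Sequence, Tuple


def shifts_from_reference(shieldings: Sequence[float], sigma_ref: float) -> List[float]:
    """delta_i = sigma_ref - sigma_i (ppm), with sigma_ref from the same method/basis."""
    return [sigma_ref - s for s in shieldings]


def fit_linear_scaling(exp_shifts: Sequence[float],
                       shieldings: Sequence[float]) -> Tuple[float, float]:
    """Least-squares fit sigma = slope * delta_exp + intercept on a training set."""
    n = len(exp_shifts)
    if n < 2 or n != len(shieldings):
        raise ValueError("need at least two paired values")
    mx = sum(exp_shifts) / n
    my = sum(shieldings) / n
    sxx = sum((x - mx) ** 2 for x in exp_shifts)
    if sxx == 0.0:
        raise ValueError("experimental shifts must not all be identical")
    sxy = sum((x - mx) * (y - my) for x, y in zip(exp_shifts, shieldings))
    slope = sxy / sxx
    return slope, my - slope * mx


def shifts_from_scaling(shieldings: Sequence[float], slope: float,
                        intercept: float) -> List[float]:
    """Invert the fitted line: delta = (sigma - intercept) / slope."""
    return [(s - intercept) / slope for s in shieldings]
```

The regression approach absorbs systematic errors of the method, but its slope and intercept are only meaningful for the method, basis set, and solvent treatment used to fit them.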

Experimental Data Acquisition:

- Obtain high-quality experimental spectra from literature or direct measurement. For the phenylephrine case study, this would involve FT-IR, Raman, and UV-Vis spectra [5].

Spectral Comparison and Analysis:

- Overlay the calculated and experimental spectra.

- For IR/Raman: Compare the positions (frequencies in cm⁻¹) and relative intensities of key vibrational bands. Calculate the mean absolute deviation of scaled frequencies.

- For UV-Vis: Compare the position and shape of absorption peaks (λ_max). Analyze which molecular orbitals are involved in the transitions to confirm assignments.

- Assign the experimental spectral features based on the computational predictions.
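A first-pass comparison of scaled frequencies with experimental bands might look like this. It is a simplified sketch: pairing each experimental band with the nearest calculated frequency inside a hypothetical 50 cm⁻¹ window is only a starting point, since reliable assignments should rest on the character of each mode (for example, from visualized displacements), and here one calculated mode may be matched to more than one band.

```python
from typing import List, Sequence, Tuple


def match_and_mad(calc_cm1: Sequence[float], exp_cm1: Sequence[float],
                  window: float = 50.0) -> Tuple[float, List[Tuple[float, float]], List[float]]:
    """Nearest-neighbour pairing of experimental and scaled calculated bands.

    Returns (mean absolute deviation over matched pairs, matched pairs
    as (experimental, calculated), unmatched experimental bands).
    """
    if not calc_cm1:
        raise ValueError("no calculated frequencies supplied")
    matched: List[Tuple[float, float]] = []
    unmatched: List[float] = []
    for nu_exp in exp_cm1:
        nearest = min(calc_cm1, key=lambda nu: abs(nu - nu_exp))
        if abs(nearest - nu_exp) <= window:
            matched.append((nu_exp, nearest))
        else:
            unmatched.append(nu_exp)  # left for manual assignment
    mad = (sum(abs(c - e) for e, c in matched) / len(matched)
           if matched else float("nan"))
    return mad, matched, unmatched
```

Listing the unmatched bands separately keeps them visible for manual assignment instead of silently inflating or hiding the deviation.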

Reporting:

- Report quantitative measures of agreement (e.g., mean absolute error for vibrational frequencies, error in λ_max).

- Discuss the method's success in reproducing experimental trends and its utility for interpreting spectroscopic data.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Computational Tools and Resources for Quantum Chemical Validation

| Tool / Resource Name | Category | Function in Validation | Example Use Case |
|---|---|---|---|
| DES370K / DES5M Databases [3] | Benchmark Data | Provides gold-standard dimer interaction energies for validating method accuracy on noncovalent interactions. | Testing a new density functional's ability to model van der Waals forces in drug-like molecules. |
| Gaussian 09W [5] | Quantum Chemistry Software | Performs a wide range of QM calculations (geometry optimization, frequency, TD-DFT) for spectroscopic validation. | Optimizing the structure of phenylephrine and calculating its IR, Raman, and UV-Vis spectra. |
| MLAtom [1] | Machine Learning Software | Accelerates quantum chemical computations like geometry optimizations and frequency calculations. | Rapidly scanning the reaction pathway of silanediamine cyclization with quantum-level accuracy. |
| GaussView [5] | Visualization Software | A graphical interface used for setting up calculations, visualizing molecular structures, orbitals, and vibrational modes. | Building an initial molecular model and visually analyzing the HOMO-LUMO orbitals of a target molecule. |
| Multiwfn [5] | Wavefunction Analysis | A wavefunction analysis program for post-processing computational results, including plotting spectra, calculating descriptors, and topological analysis. | Plotting the Density of States (DOS) or simulated spectra for a molecule. |
| B3LYP Functional [5] [4] | Quantum Chemical Method | A widely used hybrid DFT functional known for its good general-purpose performance for organic molecules. | Predicting molecular geometries and ground-state energies for a series of drug candidates. |
| 6-311+G(d,p) Basis Set [5] | Quantum Chemical Method | A triple-zeta basis set with polarization and diffuse functions, suitable for accurate calculations of anions and spectroscopy. | Calculating accurate vibrational frequencies and electronic excitation energies. |
| Polarizable Continuum Model (PCM) [1] | Solvation Model | Simulates the effect of a solvent on molecular properties and reaction energies within QM calculations. | Modeling the solvation energy of a molecule in water to predict its behavior in a physiological environment. |

Density Functional Theory (DFT) stands as the workhorse of modern computational chemistry, materials science, and drug discovery due to its favorable balance between computational cost and accuracy. However, its widespread application has revealed systematic limitations that create a significant data quality bottleneck for research and development. This bottleneck manifests particularly in pharmaceutical and materials science contexts where predictive accuracy is paramount. While DFT has revolutionized our ability to model complex molecular systems, the inherent approximations in its exchange-correlation functionals introduce errors that can compromise predictive reliability in critical applications. The fundamental challenge lies in the method's variable performance across different chemical systems and properties—what works sufficiently well for one system may fail dramatically for another.

The quest for higher accuracy has driven development along multiple frontiers: refinement of traditional DFT functionals, creation of multi-level composite methods, integration of quantum computing, and incorporation of artificial intelligence. Each approach seeks to address specific limitations while maintaining computational feasibility. This comparison guide examines the performance gaps of traditional DFT and evaluates emerging solutions that promise to overcome these limitations, providing researchers with a clear framework for method selection based on empirical evidence and theoretical advances. Understanding these limitations and alternatives is particularly crucial for drug development professionals who rely on computational predictions to guide experimental efforts and reduce costly trial-and-error approaches.

Quantifying the Limitations of Traditional DFT

Performance Inconsistencies Across Chemical Systems

Traditional DFT approximations demonstrate significant performance variations when applied to different chemical systems, with particularly problematic behavior for transition metal complexes and non-covalent interactions. A comprehensive benchmark study analyzing 250 electronic structure theory methods for describing spin states and binding properties of iron, manganese, and cobalt porphyrins revealed that current approximations fail to achieve the "chemical accuracy" target of 1.0 kcal/mol by a substantial margin [6]. The best-performing methods achieved a mean unsigned error (MUE) of <15.0 kcal/mol, but errors for most methods were at least twice as large [6]. This accuracy gap presents a substantial reliability concern for drug discovery applications involving metalloenzymes or catalytic systems.

The study further identified that approximations with high percentages of exact exchange (including range-separated and double-hybrid functionals) can lead to catastrophic failures for certain systems, while semilocal functionals and global hybrid functionals with a low percentage of exact exchange proved least problematic for spin states and binding energies [6]. This inconsistency necessitates careful functional selection based on the specific chemical system under investigation, creating challenges for high-throughput screening applications where multiple chemical environments may be encountered.

Table 1: Performance Grades of DFT Functional Types for Metalloporphyrin Chemistry

| Functional Type | Representative Functionals | Overall Grade | Mean Unsigned Error (kcal/mol) | Recommended Context |
|---|---|---|---|---|
| Local GGAs/meta-GGAs | GAM, r2SCAN, revM06-L | A | <15.0 | Transition metal systems, spin state energies |
| Global Hybrids (low exact exchange) | r2SCANh, B98, APF(D) | A-B | 15.0-20.0 | Balanced approach for diverse systems |
| Global Hybrids (high exact exchange) | M06-2X, HFLYP | F | >30.0 | Not recommended for transition metals |
| Double Hybrids | B2PLYP, B2PLYP-D3 | F | >30.0 | Catastrophic failures observed |

System-Specific Failures and Error Propagation

The limitations of traditional DFT become particularly pronounced in specific chemical contexts that strain the approximations inherent in standard functionals. For transition metal systems, which are ubiquitous in pharmaceutical catalysts and biological enzymes, DFT faces challenges in accurately describing the nearly degenerate electronic states that characterize these systems [6] [7]. The presence of multiple low-lying, nearly degenerate spin states in metalloporphyrins makes them particularly challenging for single-reference DFT methods [6]. This limitation extends to bond dissociation processes, excited states, and strongly correlated systems—precisely the scenarios often encountered in photochemical drug interactions and catalytic processes.

At high pressures, relevant for materials science and pharmaceutical polymorph screening, the performance of DFT reveals additional limitations. A systematic investigation found that lessons learned at ambient conditions do not always translate to high-pressure regimes, with different exchange-correlation functionals exhibiting varying degrees of accuracy for equations of state and pressure-induced phase transformations [8]. Interestingly, the local density approximation (LDA), while generally outperformed by other functionals at ambient conditions, demonstrated remarkable performance at high pressures [8]. This context-dependent performance complicates method selection and requires researchers to possess specialized knowledge about functional behavior under specific conditions.

Next-Generation Quantum Chemical Methods

Multiconfiguration Pair-Density Functional Theory (MC-PDFT)

For systems with significant static correlation, where traditional DFT often fails, Multiconfiguration Pair-Density Functional Theory (MC-PDFT) represents a promising advancement. Developed by Gagliardi and Truhlar, MC-PDFT can reach accuracy comparable to, or better than, advanced wave function methods at a much lower computational cost, making it feasible to study larger systems that are prohibitively expensive for traditional wave-function methods [9]. It addresses one of the most significant limitations of Kohn-Sham DFT: its inability to properly describe systems whose electron correlation cannot be captured by a single-determinant wave function [9].

The recently introduced MC23 functional incorporates kinetic energy density to enable a more accurate description of electron correlation [9]. By fine-tuning functional parameters using an extensive set of training systems ranging from simple molecules to highly complex ones, researchers created a tool that works well across the spectrum of chemical complexity [9]. MC23 improves performance for spin splitting, bond energies, and multiconfigurational systems compared to previous MC-PDFT and KS-DFT functionals, making it particularly valuable for transition metal complexes and bond-breaking processes common in catalytic cycles and reactive intermediate characterization [9].

Artificial Intelligence-Enhanced Quantum Chemistry

The integration of artificial intelligence with quantum mechanical methods has produced breakthrough approaches that maintain high accuracy while dramatically reducing computational cost. The general-purpose artificial intelligence–quantum mechanical method 1 (AIQM1) approaches the accuracy of the gold-standard coupled cluster QM method with the computational speed of approximate low-level semiempirical QM methods for neutral, closed-shell species in the ground state [10]. This method demonstrates remarkable transferability, providing accurate ground-state energies for diverse organic compounds as well as geometries for challenging systems such as large conjugated compounds (including fullerene C60) close to experiment [10].

The AIQM1 method combines three components: a semiempirical QM Hamiltonian (ODM2), neural network corrections trained on high-level reference data, and modern dispersion corrections [10]. This hybrid architecture allows it to overcome limitations of purely local neural network potentials while maintaining computational efficiency. The method's ability to accurately determine geometries of polyyne molecules—a task difficult for both experiment and theory—demonstrates its potential for pharmaceutical research where molecular conformation critically determines biological activity [10].
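The three-part architecture described above can be summarized as an additive energy expression. This is a schematic sketch: the symbols are labels chosen here, and the exact form of each term (for example, which modifications to ODM2 and which dispersion model are used) should be taken from [10].

```latex
E_{\text{AIQM1}}(\mathbf{R}) = E_{\text{SQM}}(\mathbf{R}) + E_{\text{NN}}(\mathbf{R}) + E_{\text{disp}}(\mathbf{R})
```

Here \( \mathbf{R} \) denotes the nuclear coordinates, \( E_{\text{SQM}} \) the ODM2-based semiempirical energy, \( E_{\text{NN}} \) the neural-network correction learned from high-level reference data, and \( E_{\text{disp}} \) the dispersion correction. Because the network only has to learn a correction on top of a physically motivated baseline, rather than the full energy, it can remain accurate with comparatively modest training data.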

Table 2: Comparison of Traditional and Next-Generation Quantum Chemical Methods

| Method | Theoretical Foundation | Computational Cost | Key Strengths | Key Limitations |
|---|---|---|---|---|
| Traditional DFT (GGA/Meta-GGA) | Kohn-Sham equations with approximate XC functionals | Low to Moderate | Broad applicability, reasonable accuracy for many systems | Systematic errors for transition metals, dispersion, band gaps |
| Traditional DFT (Hybrid) | Kohn-Sham equations with hybrid XC functionals | Moderate | Improved accuracy for main-group thermochemistry | Higher cost than GGAs due to exact exchange; still fails for multi-reference systems |
| MC-PDFT | Multiconfigurational wavefunction + density functional | Moderate to High | Accurate for multi-reference systems, transition metals | Higher cost than single-reference DFT, requires active space selection |
| AIQM1 | Semiempirical QM + Neural Networks + Dispersion | Very Low to Low | Near-CCSD(T) accuracy for organic molecules, extremely fast | Limited elements (H, C, N, O), primarily neutral closed-shell species |
| Composite Methods (G4, ccCA) | Multi-level wavefunction theory | High to Very High | High accuracy across diverse chemistry | Very high computational cost, limited to small molecules |

Composite Methods: Strategies for High-Accuracy Prediction

Gaussian-n Theories

Quantum chemistry composite methods, also known as thermochemical recipes, aim for high accuracy by combining the results of several calculations. These approaches combine methods with a high level of theory and a small basis set with methods that employ lower levels of theory with larger basis sets [11]. The Gaussian-n theories, including G2, G3, and G4, represent systematic model chemistries designed for broad applicability, with the specific goal of achieving chemical accuracy (within 1 kcal/mol of experimental values) for thermodynamic properties [11].
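The core additivity idea can be written as a single correction: the effect of enlarging the basis set is estimated at a cheap level of theory and added to an expensive calculation in a small basis. The sketch below is a generic two-level illustration with hypothetical method labels and energies, not the actual G4 recipe, which chains several such corrections and adds an empirical higher-level correction.

```python
def additive_composite(e_high_small: float, e_low_large: float, e_low_small: float) -> float:
    """Estimate E(high level, large basis) by basis-set additivity.

    E(high/large) ~= E(high/small) + [E(low/large) - E(low/small)]
    e.g. high = CCSD(T), low = MP2 (illustrative choice only).
    """
    basis_correction = e_low_large - e_low_small  # basis-set effect at the cheap level
    return e_high_small + basis_correction


if __name__ == "__main__":
    # Hypothetical total energies in hartree.
    print(additive_composite(e_high_small=-76.30, e_low_large=-76.35, e_low_small=-76.28))
```

The approximation works when the basis-set effect is similar at both levels of theory, which is why the low level is usually a correlated method such as MP2 rather than Hartree-Fock.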

The G4 method incorporates several improvements over its predecessors, including an extrapolation scheme for obtaining basis set limit Hartree-Fock energies, use of geometries and thermochemical corrections calculated at B3LYP/6-31G(2df,p) level, a highest-level single point calculation at CCSD(T) instead of QCISD(T) level, and addition of extra polarization functions in the largest-basis set MP2 calculations [11]. These developments enable G4 theory to achieve significant improvement over G3 theory, particularly for main group elements. For drug development applications where accurate thermochemical predictions are essential for understanding reaction pathways and binding affinities, these methods provide valuable benchmark data, though their computational cost limits application to smaller model systems.

Feller-Peterson-Dixon (FPD) Approach

The Feller-Peterson-Dixon (FPD) approach employs a flexible sequence of up to 13 components that vary with the nature of the chemical system under study and the desired accuracy [11]. Unlike fixed-recipe methods, the FPD approach typically relies on coupled cluster theory, such as CCSD(T), combined with large Gaussian basis sets (up through aug-cc-pV8Z) and extrapolation to the complete basis set limit [11]. Additive corrections for core/valence, scalar relativistic, and higher-order correlation effects are systematically included, with attention paid to the uncertainties associated with each component [11].
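Extrapolation to the complete basis set limit is often done with a two-point formula. The sketch below uses the widely used inverse-cubic form for correlation energies from basis sets with cardinal numbers X and Y (for example, aug-cc-pVTZ and aug-cc-pVQZ, X = 3 and Y = 4), which follows from assuming \( E_X = E_{\text{CBS}} + A X^{-3} \). It is one common choice, not necessarily the scheme used in any particular FPD study; Hartree-Fock energies are usually extrapolated separately.

```python
def cbs_two_point(e_corr_x: float, x: int, e_corr_y: float, y: int) -> float:
    """Two-point CBS estimate of the correlation energy.

    Assumes E_X = E_CBS + A * X**-3, which gives
    E_CBS = (X^3 E_X - Y^3 E_Y) / (X^3 - Y^3).
    """
    if x == y:
        raise ValueError("cardinal numbers must differ")
    return (x ** 3 * e_corr_x - y ** 3 * e_corr_y) / (x ** 3 - y ** 3)


if __name__ == "__main__":
    # Hypothetical correlation energies (hartree) from triple- and quadruple-zeta bases.
    print(cbs_two_point(-0.2815, 3, -0.2922, 4))
```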

When applied at the highest possible level, the FPD approach yields a root-mean-square deviation of 0.30 kcal/mol across 311 comparisons covering atomization energies, ionization potentials, electron affinities, and proton affinities [11]. For equilibrium structures, it achieves remarkable accuracy with RMS deviations of 0.0020 Å for heavy-atom distances and 0.0034 Å for hydrogen-containing bonds [11]. This exceptional precision makes the FPD approach invaluable for benchmarking more approximate methods and for studying small molecular systems where experimental data is scarce or difficult to obtain.

Experimental Protocols for Method Validation

Benchmarking Against High-Accuracy Reference Data

Robust validation of quantum chemical methods requires carefully designed benchmarking protocols against reliable reference data. The assessment of DFT methods for metalloporphyrins employed the Por21 database of high-level computational data (CASPT2 reference energies taken from the literature) to evaluate 250 electronic structure theory methods [6]. This systematic approach enabled direct comparison of functional performance across a chemically relevant test set, revealing the dramatic variations in accuracy noted previously.

For molecular systems where experimental data is available, the FPD approach has been heavily benchmarked against experiment, providing validated protocols for assessing method accuracy [11]. Similarly, the development of AIQM1 involved training and validation against the ANI-1x and ANI-1ccx datasets, which contain small, neutral, closed-shell molecules in their ground states with up to 8 non-hydrogen atoms [10]. These datasets cover not only equilibrium geometries but also conformational space through various sampling techniques, ensuring broad transferability of the resulting methods [10].

Numerical Quality Control in DFT Databases

As computational materials databases grow in size and importance, ensuring the quality and consistency of DFT data becomes increasingly critical. A recent study investigating numerical errors in DFT-based materials databases revealed that errors arising from different methodologies and numerical settings can significantly impact the comparability of results [12]. The research examined errors in total and relative energies as a function of computational parameters, comparing results for 71 elemental and 63 binary solids obtained by three electronic-structure codes employing fundamentally different strategies [12].

Based on the observed trends, the study proposed a simple, analytical model for estimating errors associated with basis-set incompleteness [12]. This approach enables comparison of heterogeneous data present in computational materials databases and provides researchers with tools to assess the reliability of database entries. For pharmaceutical researchers leveraging high-throughput screening of materials databases, understanding these numerical uncertainties is essential for proper interpretation of computational predictions.
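A generic way to control basis-set incompleteness in one's own calculations is a convergence scan over the relevant numerical parameter, such as a plane-wave cutoff. The sketch below is not the analytical error model proposed in [12]; it simply returns the smallest setting whose energy, and that of every larger setting, lies within a tolerance of the most complete calculation. The function name, tolerance, and example numbers are hypothetical.

```python
from typing import Optional, Sequence, Tuple


def smallest_converged_setting(results: Sequence[Tuple[float, float]],
                               tol: float) -> Optional[float]:
    """results: (setting, energy) pairs, e.g. (cutoff in eV, energy per atom in eV)."""
    if not results:
        return None
    ordered = sorted(results)              # increasing setting
    reference = ordered[-1][1]             # most complete calculation as reference
    converged = None
    for setting, energy in reversed(ordered):
        if abs(energy - reference) > tol:
            break                          # stop: smaller settings are not uniformly converged
        converged = setting
    return converged


if __name__ == "__main__":
    scan = [(300, -10.000), (400, -10.080), (500, -10.099), (600, -10.100)]
    print(smallest_converged_setting(scan, tol=0.005))
```

Because errors partly cancel between related structures, it can be worth repeating the scan for the relative energies of interest, which may converge differently from total energies.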

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Quantum Chemical Validation

| Tool/Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| Por21 Database | Benchmark Database | Provides reference data for metalloporphyrin systems | Validation of methods for transition metal chemistry |

| ANI-1ccx Data Set | AI Training Data | Contains CCSD(T)*/CBS energies for diverse molecules | Training and validation of AI-enhanced methods |

| Gaussian-n Theories | Composite Method | High-accuracy thermochemical predictions | Benchmark studies, small molecule accuracy |

| MC-PDFT | Theoretical Method | Handles multi-reference character | Transition metal complexes, bond dissociation |

| AIQM1 | Hybrid AI/QM Method | Near-CCSD(T) accuracy with SQM speed | Large organic molecule screening |

| DFT Database Error Models | Quality Control | Estimates numerical errors in DFT results | Assessment of data reliability in materials databases |

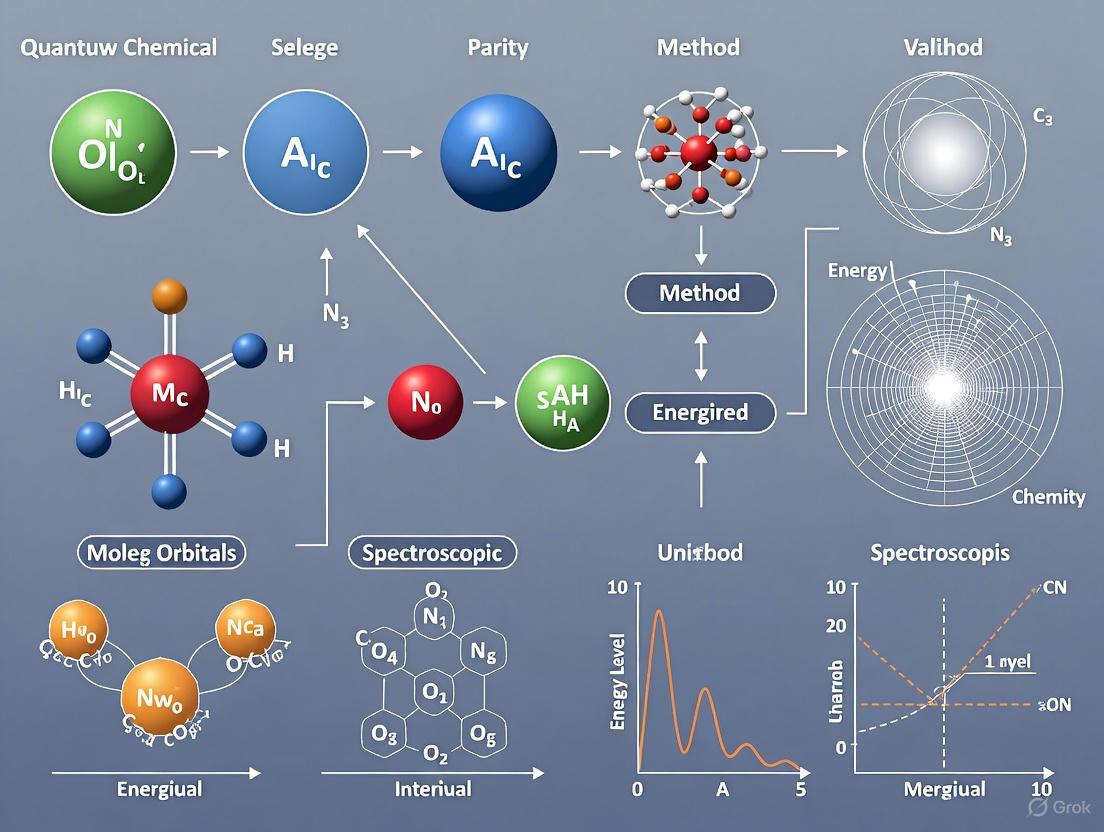

Visualization of Quantum Chemical Validation Workflow

Validation Workflow for Quantum Chemical Methods

The limitations of traditional DFT create genuine bottlenecks for research applications requiring high predictive accuracy, particularly in pharmaceutical development and materials design. However, the evolving landscape of quantum chemical methods offers multiple pathways toward overcoming these limitations. From multiconfiguration approaches that address fundamental theoretical gaps to AI-enhanced methods that leverage machine learning for accuracy and efficiency, researchers now have an expanding toolkit for tackling increasingly complex chemical problems.

The choice between methods involves balancing computational cost against accuracy requirements, with different strategies appropriate for different research contexts. Composite methods provide the highest accuracy for small systems but become prohibitively expensive for larger molecules. MC-PDFT addresses critical failures for multi-reference systems while maintaining reasonable computational cost. AI-enhanced methods such as AIQM1 approach coupled cluster accuracy at semiempirical cost for organic molecules, but currently cover only a few elements and mainly neutral, closed-shell species. By understanding these trade-offs and employing robust validation protocols, researchers can select the most appropriate methods for their specific applications, easing the data quality bottleneck and enabling more reliable predictions and more efficient discovery pipelines.

In the demanding fields of drug development and materials science, the accuracy of quantum chemical calculations is not merely an academic concern—it is a critical factor that can determine the success or failure of a multi-year research project. Computational methods are now foundational for tasks ranging from molecular property prediction to the design of novel therapeutics. However, these methods are approximations of reality, and their reliability must be rigorously established through validation against trusted reference data, known as "gold standards." For molecular systems, this gold standard has historically been set by high-level wavefunction methods, particularly Coupled Cluster theory with single, double, and perturbative triple excitations (CCSD(T)), which is often considered the most accurate scalable method for single-reference systems [13]. Despite its accuracy, the crippling computational cost of CCSD(T) restricts its application to relatively small molecules, creating a persistent scalability gap [14].

This guide provides a comparative analysis of the established gold standard, CCSD(T), and an emerging disruptive technology: Large Wavefunction Models (LWMs). LWMs are foundation neural-network wavefunctions optimized by Variational Monte Carlo (VMC) that directly approximate the many-electron wavefunction, offering a potential path to gold-standard accuracy at a fraction of the computational cost [14]. We will objectively examine their performance, supported by experimental data, to inform researchers and scientists in their selection of computational protocols for high-stakes discovery.

Understanding the Benchmarking Landscape

The Gold-Standard: Coupled Cluster Theory

Coupled Cluster (CC) theory is a wavefunction-based post-Hartree-Fock method designed to systematically recover the electron correlation energy missing from a mean-field calculation [15]. Its principal strength lies in its size-extensivity, meaning the energy calculated grows linearly with the number of electrons, a crucial property for obtaining accurate thermochemical data [15]. The CCSD(T) variant, which includes a perturbative treatment of triple excitations, has become the de facto reference method for benchmarking other quantum chemical approaches, including Density Functional Theory (DFT), on datasets of small- to medium-sized molecules [13].

However, CC theory is not without its limitations. It is a non-variational method, meaning its calculated energy is not guaranteed to be an upper bound to the exact energy [15]. Furthermore, its computational cost scales steeply with system size, as high as $O(N^7)$ for CCSD(T), where $N$ is related to the basis set size [14]. This makes it prohibitively expensive for large systems like peptides or drug-sized molecules. Diagnostics like the T1 diagnostic and the emerging density matrix non-Hermiticity indicator have been developed to warn users when CC theory might be yielding unreliable results, often due to significant multi-reference character in the wavefunction [16].
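To make the T1 diagnostic and the $O(N^7)$ scaling concrete, here is a minimal Python sketch. It assumes the CCSD singles amplitudes have already been extracted from some quantum chemistry package as a flat list; the function names are hypothetical, not any package's API. The 0.02 threshold is the commonly quoted Lee-Taylor rule of thumb for closed-shell, main-group molecules, not a universal cutoff, and larger values are often tolerated for transition-metal systems.

```python
import math

def t1_diagnostic(t1_amplitudes, n_correlated_electrons):
    """Lee-Taylor T1 diagnostic: norm of the CCSD singles amplitudes
    divided by the square root of the number of correlated electrons."""
    if n_correlated_electrons <= 0:
        raise ValueError("n_correlated_electrons must be positive")
    norm_sq = sum(t * t for t in t1_amplitudes)
    return math.sqrt(norm_sq / n_correlated_electrons)

def looks_multireference(t1_value, threshold=0.02):
    """True if T1 exceeds the threshold (assumed closed-shell rule of thumb)."""
    return t1_value > threshold

def relative_cost(size_ratio, exponent=7):
    """Cost multiplier when N grows by size_ratio under O(N^exponent) scaling."""
    return size_ratio ** exponent

if __name__ == "__main__":
    # Hypothetical flattened t1 amplitudes, not from any real calculation
    amplitudes = [0.012, -0.008, 0.030, 0.004]
    t1 = t1_diagnostic(amplitudes, n_correlated_electrons=8)
    print(f"T1 = {t1:.4f}, multireference warning: {looks_multireference(t1)}")
    print(f"Doubling N under O(N^7): {relative_cost(2)}x more compute")
```

Because cost grows as $N^7$, doubling $N$ multiplies the CCSD(T) cost by $2^7 = 128$, which is the arithmetic behind its restriction to small molecules.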

The Emerging Paradigm: Large Wavefunction Models

Large Wavefunction Models represent a paradigm shift, leveraging modern machine learning to create foundation models for quantum chemistry. Unlike CC theory, which solves for the wavefunction of a single molecule at a time, LWMs are pre-trained on a curriculum of molecules and can be fine-tuned for specific tasks [14]. They are trained using Variational Monte Carlo (VMC) by minimizing the variational energy, providing an upper bound to the exact energy [14].
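The variational principle that LWMs rely on can be illustrated with the simplest possible VMC calculation. The sketch below is not an LWM: instead of a neural network it uses a one-parameter trial wavefunction $\psi = e^{-\alpha r}$ for the hydrogen atom (atomic units), samples $|\psi|^2$ with a Metropolis random walk, and averages the local energy $E_L = -\alpha^2/2 + (\alpha - 1)/r$. The step size, walk length, and seed are arbitrary illustrative choices.

```python
import math
import random

def local_energy(r, alpha):
    """E_L = (H psi)/psi for psi = exp(-alpha r); hydrogen atom, atomic units."""
    return -0.5 * alpha * alpha + (alpha - 1.0) / r

def vmc_energy(alpha, n_steps=100_000, n_burn=5_000, step=1.0, seed=0):
    """Metropolis sampling of |psi|^2 followed by averaging of the local energy."""
    rng = random.Random(seed)
    pos = [1.0, 0.0, 0.0]
    r = 1.0
    total = 0.0
    for i in range(n_burn + n_steps):
        trial = [c + rng.uniform(-step, step) for c in pos]
        r_trial = math.sqrt(sum(c * c for c in trial))
        # Acceptance ratio |psi(trial)|^2 / |psi(current)|^2
        if rng.random() < math.exp(-2.0 * alpha * (r_trial - r)):
            pos, r = trial, r_trial
        if i >= n_burn:
            total += local_energy(r, alpha)
    return total / n_steps

if __name__ == "__main__":
    for alpha in (0.8, 1.0, 1.2):
        analytic = 0.5 * alpha * alpha - alpha  # exact <H> for this trial function
        print(f"alpha={alpha:.1f}  VMC={vmc_energy(alpha):.4f}  analytic={analytic:.4f}")
```

For this trial function the analytic expectation is $\alpha^2/2 - \alpha$, so every estimate lies at or above the exact $-0.5$ Hartree (up to sampling noise), and at $\alpha = 1$ the local energy is constant, giving the exact answer with zero variance. Minimizing this energy with respect to the wavefunction parameters is, in miniature, what VMC training does with the parameters of a neural network.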

A key differentiator is their scaling cost. While the initial training is computationally intensive, the inference and fine-tuning for specific molecules can be highly efficient. Recent advances in sampling algorithms, such as the proprietary Replica Exchange with Langevin Adaptive eXploration (RELAX), are reported to drastically reduce computational costs. Benchmarking studies indicate that simulacra AI's LWM pipeline can reduce data generation costs by 15-50x compared to a state-of-the-art Microsoft pipeline and by 2-3x compared to traditional CCSD methods for systems on the scale of amino acids [14]. This positions LWMs to potentially fill the scalability gap left by CCSD(T).

Comparative Performance Analysis

The table below summarizes the core characteristics of CCSD(T) and LWMs, highlighting their respective strengths and limitations for practical application in a research and development environment.

Table 1: Fundamental Comparison of CCSD(T) and Large Wavefunction Models

| Feature | Coupled Cluster (CCSD(T)) | Large Wavefunction Models (LWMs) |
|---|---|---|
| Theoretical Basis | Wavefunction theory; exponential ansatz [15] | Neural-network wavefunction; variational Monte Carlo [14] |
| Size-Extensivity | Yes [15] | Yes [14] |
| Variational | No [15] | Yes [14] |
| Computational Scaling | $O(N^7)$ [14] | High pre-training cost, but lower cost for fine-tuning/inference [14] |
| Multi-reference Systems | Struggles; requires diagnostics [16] | Capable of handling static & dynamic correlation [14] |
| Primary Use Case | Gold-standard benchmarking for small molecules [13] | Generating gold-standard data for large systems (e.g., drug candidates) [14] |
| Key Limitation | Prohibitive cost for large molecules [14] | Reliance on quality of pre-training data and sampling efficiency [14] |

Performance in Practical Benchmarking

The quality of any benchmark is dictated by the quality of its reference database. The Gold-Standard Chemical Database 138 (GSCDB138) is a recently curated benchmark library comprising 138 datasets and 8,383 individual data points [13]. It covers a diverse set of chemical properties, including reaction energies, barrier heights, non-covalent interactions, and molecular properties like dipole moments and vibrational frequencies [13]. This database is used to validate and train the next generation of density functionals and, by extension, is a stringent test for any quantum chemical method.

When DFT functionals are benchmarked against CCSD(T)-level references in GSCDB138, the expected hierarchy of performance is observed, with generally higher accuracy for more sophisticated functionals. For example, the double-hybrid functionals lower mean errors by about 25% compared to the best hybrid functionals [13]. However, even the best DFT functionals can struggle with regimes central to drug discovery, such as long-range charge transfer, delicate non-covalent interactions, and open-shell transition-metal complexes [14]. This systematic underperformance in key areas underscores the irreplaceable role of high-level wavefunction methods like CCSD(T) and LWMs for generating reliable reference data.

Table 2: Empirical Performance Data from Benchmarking Studies

| Benchmark Context | Coupled Cluster (CCSD(T)) | Large Wavefunction Models (LWMs) |
|---|---|---|
| Data Generation Cost | Reference point: High cost (e.g., millions of dollars for $10^5$ conformations of 32-atom molecules) [14] | 15-50x cost reduction vs. a state-of-the-art Microsoft pipeline; 2-3x cost reduction vs. CCSD on amino-acid scale [14] |
| Accuracy in GSCDB138 | Serves as the reference "gold standard" for updating databases [13] | Aims to provide CCSD(T)-level accuracy for larger systems where CCSD(T) is inapplicable [14] |
| Handling of Challenging Systems | Can fail for systems with strong multi-reference character; requires diagnostics [16] | Designed to capture static & dynamic correlation without hand-crafted functionals, showing promise for complex systems [14] |

Experimental Protocols for Method Validation

Protocol 1: Validating with the GSCDB138 Database

For researchers aiming to validate a new computational method (e.g., a new DFT functional or an LWM), the GSCDB138 protocol provides a comprehensive framework [13].

- System Selection: The database includes 138 curated subsets. Select subsets relevant to your chemical domain (e.g., BH76 for barrier heights, NC558 for non-covalent interactions).

- Reference Calculation: The reference values in GSCDB138 are derived from high-level CC calculations, often at the complete basis set (CBS) limit or using explicit correlation (F12) methods [13]. These are considered the ground truth.

- Target Method Calculation: Perform single-point energy calculations for all molecular structures in the selected subset using your target method.

- Error Analysis: Calculate the statistical errors (e.g., Mean Absolute Error, Root-Mean-Square Error) between the target method's results and the reference values. This quantitatively benchmarks performance against the gold standard.
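The error-analysis step can be sketched in a few lines of Python. The function names and the toy numbers are illustrative assumptions; the mean signed deviation (MSD) is reported alongside MAE and RMSE because its sign exposes systematic over- or underestimation. Weighted aggregates used by some benchmark suites, such as GMTKN55's WTMAD-2, are not reproduced here.

```python
import math

def error_stats(predicted, reference):
    """MAE, RMSE and mean signed deviation (MSD) of predicted vs reference values."""
    if len(predicted) != len(reference) or not predicted:
        raise ValueError("need two non-empty sequences of equal length")
    errors = [p - r for p, r in zip(predicted, reference)]
    n = len(errors)
    return {
        "MAE": sum(abs(e) for e in errors) / n,
        "RMSE": math.sqrt(sum(e * e for e in errors) / n),
        "MSD": sum(errors) / n,  # sign reveals systematic bias
    }

def summarize(subsets):
    """subsets maps a subset name to a (predicted, reference) pair of value lists."""
    return {name: error_stats(p, r) for name, (p, r) in subsets.items()}

if __name__ == "__main__":
    # Made-up numbers (kcal/mol), purely to show the call pattern
    toy = {"toy_barriers": ([10.2, 5.1, 20.4], [9.8, 5.6, 19.9])}
    for name, stats in summarize(toy).items():
        print(name, {k: round(v, 3) for k, v in stats.items()})
```

Reporting per-subset statistics rather than a single pooled number makes it easier to spot a method that does well on average but fails on one chemical regime.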

The following workflow diagram illustrates this validation process.

Protocol 2: Wavefunction-Based Analysis for Complex Systems

For systems where single-reference CCSD(T) is suspected to be inadequate (e.g., open-shell transition-metal complexes, bond-breaking, solid-state color centers), a multi-configurational wavefunction protocol is necessary. The protocol used for the NV⁻ center in diamond is an excellent example [17].

- Cluster Model Construction: Embed the defect or complex of interest in a finite cluster model of the host material, passivating dangling bonds with hydrogen atoms.

- Active Space Selection: Use a Complete Active Space Self-Consistent Field (CASSCF) calculation. The active space is chosen to include the key defect electrons and orbitals (e.g., CASSCF(6e,4o) for NV⁻) [17].

- Dynamic Correlation: The CASSCF energy, which captures static correlation, is improved by adding dynamic correlation via second-order N-Electron Valence State Perturbation Theory (NEVPT2) [17].

- Property Calculation: The optimized wavefunction is used to compute energies, geometries, and spectroscopic properties.
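A practical constraint behind the active-space selection step is that the CASSCF configuration space grows combinatorially. As a rough sketch, the number of Slater determinants for $n_\alpha$ and $n_\beta$ active electrons in $m$ spatial orbitals is $\binom{m}{n_\alpha}\binom{m}{n_\beta}$; the helpers below (hypothetical names, not part of any CASSCF code) count them for a given $2M_S = n_\alpha - n_\beta$.

```python
from math import comb

def cas_determinants(n_orbitals, n_alpha, n_beta):
    """Slater determinants for n_alpha and n_beta electrons in n_orbitals active orbitals."""
    if not (0 <= n_alpha <= n_orbitals and 0 <= n_beta <= n_orbitals):
        raise ValueError("electron counts must fit in the active orbitals")
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)

def cas_size(n_electrons, n_orbitals, two_ms=0):
    """Determinant count for CAS(n_electrons e, n_orbitals o) at 2*M_S = n_alpha - n_beta."""
    if (n_electrons + two_ms) % 2:
        raise ValueError("n_electrons and two_ms must have the same parity")
    n_alpha = (n_electrons + two_ms) // 2
    return cas_determinants(n_orbitals, n_alpha, n_electrons - n_alpha)

if __name__ == "__main__":
    print("CAS(6e,4o), M_S = 1:", cas_size(6, 4, two_ms=2))
    print("CAS(6e,4o), M_S = 0:", cas_size(6, 4))
    print("CAS(16e,16o), M_S = 0:", cas_size(16, 16))
```

For CAS(6e,4o) this gives 6 determinants for the $M_S = 1$ component of a triplet and 16 for $M_S = 0$, whereas CAS(16e,16o) at $M_S = 0$ already needs 165,636,900, which is why active spaces such as the one used for NV⁻ are kept as small as the chemistry allows.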

This protocol is visualized in the workflow below.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Resources for Gold-Standard Benchmarking

| Tool / Resource | Function | Example/Note |
|---|---|---|
| Gold-Standard Database (GSCDB138) | Provides trusted reference data for method validation. | Curated from GMTKN55 & MGCDB84; updated with best CCSD(T) references [13]. |
| Coupled Cluster Software | Performs high-accuracy CCSD(T) calculations. | Packages like ORCA [14] and Q-Chem [14]. |
| Large Wavefunction Model (LWM) | Generates gold-standard data for large systems. | Utilizes VMC and advanced sampling (e.g., RELAX) [14]. |
| Multi-Reference Wavefunction Code | Handles systems with strong static correlation. | Used for CASSCF/NEVPT2 protocols (e.g., for color centers) [17]. |
| Diagnostic Tools | Assesses reliability of single-reference methods. | T1 diagnostic [16] and non-Hermiticity indicator [16] for CC theory. |

The rigorous benchmarking of quantum chemical methods against gold standards is not an academic exercise but a fundamental pillar of reliable computational research. Coupled Cluster theory, particularly CCSD(T), remains the cornerstone for this validation for small molecules. However, its severe scalability limitations have created a bottleneck for innovation in drug discovery and materials science.

Large Wavefunction Models emerge as a compelling alternative, promising to extend the reach of gold-standard accuracy to previously inaccessible molecular scales. While CCSD(T) will continue to be vital for benchmarking and small-system studies, LWMs offer a path to generate trustworthy, physically grounded data for the complex molecules that define the frontiers of modern science. The integration of these powerful validation tools empowers scientists to make more confident predictions, ultimately de-risking the journey from computational design to real-world discovery.

The development of accurate machine learning (ML) models for molecular property prediction and materials design requires extensive high-quality training data. For years, the quantum chemistry community has relied on foundational datasets like QM9, which contains properties for 133,885 small organic molecules with up to nine heavy atoms. While instrumental for early ML research, such datasets capture only a fraction of the chemical space relevant for modern applications like drug discovery and catalyst development [18]. The recent release of Open Molecules 2025 (OMol25) by Meta's Fundamental AI Research (FAIR) team marks a paradigm shift—offering over 100 million density functional theory (DFT) calculations at the ωB97M-V/def2-TZVPD level of theory, representing billions of CPU core-hours of compute [19]. This article provides a comparative analysis of OMol25 against other emerging and established quantum chemical resources, focusing on their composition, scope, and validation within spectroscopic and drug discovery contexts.

Table 1: Key Specifications of Quantum Chemical Datasets

| Dataset | # Calculations / Molecules | Heavy Atoms (max) | Level of Theory | Key Features |
|---|---|---|---|---|
| OMol25 [20] [19] | ~100 million calculations | 350 | ωB97M-V/def2-TZVPD | Unprecedented elemental/chemical diversity; includes biomolecules, metal complexes, electrolytes |
| QCML [21] | 33.5 million (DFT) / 14.7 billion (semi-empirical) | 8 | Mixed (Systematically sampled) | Focus on small molecules; includes equilibrium and off-equilibrium structures |
| QM40 [18] | 162,954 molecules | 40 | B3LYP/6-31G(2df,p) | Represents 88% of FDA-approved drug space; includes local vibrational mode force constants |
| QM9 [22] | 133,885 molecules | 9 | B3LYP/6-31G(2df,p) | Benchmark for small organic molecules; limited chemical diversity |
| QMugs [21] | 665,911 molecules | 100 | GFN2-xTB (Semi-empirical) | Drug-like molecules; lower-cost calculations but potentially less accurate |

Dataset Comparison: Scope, Diversity, and Applications

OMol25: A Universe of Chemical Diversity

The OMol25 dataset distinguishes itself through its massive scale and comprehensive coverage of chemical space. It encompasses 83 elements from the periodic table, a wide range of intra- and intermolecular interactions, explicit solvation, variable charge and spin states, conformers, and reactive structures [19]. The dataset uniquely blends several domains of chemistry:

- Biomolecules: Structures from RCSB PDB and BioLiP2 datasets, including random docked poses and various protonation states [20].

- Metal Complexes: Combinatorially generated using different metals, ligands, and spin states via the Architector package [20].

- Electrolytes: Aqueous solutions, ionic liquids, and molten salts, including clusters relevant for battery chemistry [20].

This diversity makes OMol25 particularly valuable for developing universal ML models that can perform reliably across different chemical domains, from drug design to energy materials.

While OMol25 provides unparalleled breadth, other datasets offer specialized value:

- QM40 specifically targets drug discovery applications, containing molecules with 10-40 heavy atoms that represent 88% of the chemical space of FDA-approved drugs [18]. A key differentiator is its inclusion of local vibrational mode force constants, which serve as quantitative measures of bond strength and are directly relevant for spectroscopic analysis [18].

- QCML takes a systematic approach to covering chemical space with small molecules of up to 8 heavy atoms, generating both equilibrium and off-equilibrium 3D structures through conformer search and normal mode sampling [21]. Its hierarchical organization facilitates training ML force fields for molecular dynamics simulations.

- QM9 remains a valuable benchmark for small organic molecules, though its limitation to 9 heavy atoms drawn from only four heavy elements (C, N, O, F, in addition to H) restricts its utility for modeling pharmaceutical compounds or inorganic complexes [22] [18].
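A quick way to check whether a target molecule even falls inside a dataset's size regime is to count its heavy atoms. The sketch below encodes only the heavy-atom limits stated in the dataset table and the text (including QM40's 10-atom floor); it ignores element coverage, charge, and level of theory, and its simple formula parser is an illustrative assumption that does not handle parentheses or charge notation.

```python
import re

# (min, max) heavy-atom counts per dataset as stated in this section;
# a minimum of 1 means the text gives no lower bound.
HEAVY_ATOM_RANGE = {
    "QCML": (1, 8),
    "QM9": (1, 9),
    "QM40": (10, 40),
    "QMugs": (1, 100),
    "OMol25": (1, 350),
}

def heavy_atom_count(formula):
    """Count non-hydrogen atoms in a flat formula such as 'C8H10N4O2'."""
    count = 0
    for element, number in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        if element != "H":
            count += int(number) if number else 1
    return count

def datasets_in_size_range(formula):
    """Names of datasets whose stated heavy-atom range includes the molecule."""
    n = heavy_atom_count(formula)
    return sorted(name for name, (lo, hi) in HEAVY_ATOM_RANGE.items() if lo <= n <= hi)

if __name__ == "__main__":
    caffeine = "C8H10N4O2"
    print(heavy_atom_count(caffeine), datasets_in_size_range(caffeine))
```

For caffeine (C8H10N4O2, 14 heavy atoms) this returns OMol25, QM40 and QMugs, while QM9 and QCML fall outside their size limits.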

Experimental Validation and Benchmarking

Performance on Charge-Related Properties

A critical test for any quantum chemical method or ML model trained on these datasets is its ability to accurately predict electronic properties relevant to spectroscopy and reactivity. Recent research has benchmarked Neural Network Potentials (NNPs) trained on OMol25 against experimental reduction potential and electron affinity data [23].

Table 2: Performance on Experimental Reduction Potentials (Mean Absolute Error in V)

| Method | Main-Group Species (OROP) | Organometallic Species (OMROP) |
|---|---|---|
| B97-3c (DFT) | 0.260 | 0.414 |
| GFN2-xTB (SQM) | 0.303 | 0.733 |
| UMA-S (OMol25) | 0.261 | 0.262 |
| UMA-M (OMol25) | 0.407 | 0.365 |
| eSEN-S (OMol25) | 0.505 | 0.312 |

The benchmarking revealed that OMol25-trained models, particularly UMA-S, can achieve accuracy comparable to or better than traditional DFT and semi-empirical quantum mechanical (SQM) methods for predicting charge-related properties [23]. Surprisingly, despite not explicitly modeling Coulombic physics, these models showed particular strength for organometallic species, contrary to trends observed with DFT and SQM methods [23].

Methodological Framework for Validation

The validation of ML models against experimental data follows rigorous protocols:

Experimental Validation Workflow for Quantum Chemical Methods

For reduction potential prediction, the workflow involves:

- Structure Preparation: Initial geometries of oxidized and reduced states are obtained from experimental datasets (e.g., Neugebauer et al.), often pre-optimized with GFN2-xTB [23].

- Geometry Optimization: Structures are re-optimized using the NNP with the geomeTRIC optimization package [23].

- Solvent Correction: Solvent effects are incorporated using implicit solvation models like the Extended Conductor-like Polarizable Continuum Model (CPCM-X) to obtain solvent-corrected electronic energies [23].

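- Property Calculation: The reduction potential is calculated from the difference in solvent-corrected electronic energy between the reduced and oxidized structures; expressed in electron-volts, this difference corresponds numerically to the potential in volts for a one-electron process [23].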

- Benchmarking: Predicted values are compared against experimental data using statistical metrics like Mean Absolute Error (MAE), Root Mean Squared Error (RMSE), and coefficient of determination (R²) [23].

For gas-phase properties like electron affinity, the solvent correction step is omitted, and the property is directly calculated from the energy difference between neutral and anionic species [23].
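The property-calculation step reduces to simple arithmetic on the energies from the workflow above. This sketch assumes one-electron processes and energies in Hartree; it follows the text in using (solvent-corrected) electronic energies without thermal corrections, and leaves the reference-electrode offset as a user-supplied assumption (default 0, i.e., the absolute scale). R² is computed as $1 - SS_\text{res}/SS_\text{tot}$, one of several conventions in use.

```python
HARTREE_TO_EV = 27.211386245988  # CODATA 2018 Hartree energy in eV

def reduction_potential(e_ox_hartree, e_red_hartree, reference_offset_v=0.0):
    """One-electron reduction Ox + e- -> Red from electronic energies.

    -(E_red - E_ox) in eV equals the absolute potential in V for n = 1;
    reference_offset_v (chosen by the user) shifts it to a reference-electrode
    scale. Thermal and entropic corrections are ignored.
    """
    delta_ev = (e_red_hartree - e_ox_hartree) * HARTREE_TO_EV
    return -delta_ev - reference_offset_v

def electron_affinity(e_neutral_hartree, e_anion_hartree):
    """Gas-phase electron affinity in eV; positive when the anion lies lower."""
    return (e_neutral_hartree - e_anion_hartree) * HARTREE_TO_EV

def r_squared(predicted, observed):
    """Coefficient of determination 1 - SS_res / SS_tot against experiment."""
    mean_obs = sum(observed) / len(observed)
    ss_res = sum((o - p) ** 2 for p, o in zip(predicted, observed))
    ss_tot = sum((o - mean_obs) ** 2 for o in observed)
    return 1.0 - ss_res / ss_tot

if __name__ == "__main__":
    # Hypothetical energies (Hartree), not real NNP outputs
    print(f"E_red (absolute scale): {reduction_potential(-500.20, -500.31):.3f} V")
    print(f"EA: {electron_affinity(-230.10, -230.14):.3f} eV")
```

Keeping the reference offset explicit makes it obvious that predicted and experimental potentials must be on the same electrode scale before MAE or RMSE is computed.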

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools for Quantum Chemical Validation

| Tool / Resource | Function | Relevance to Research |
|---|---|---|
| OMol25 NNPs (eSEN, UMA) [20] | Pre-trained neural network potentials | Provide quantum chemical accuracy at dramatically reduced computational cost for large systems |
| geomeTRIC [23] | Geometry optimization package | Enables efficient structure optimization using NNPs or traditional quantum methods |
| CPCM-X [23] | Implicit solvation model | Accounts for solvent effects in property predictions like reduction potentials |
| Psi4 [23] | Quantum chemistry software package | Performs traditional DFT calculations for benchmarking and validation |
| LModeA [18] | Local vibrational mode analysis | Calculates bond strength metrics from frequency calculations for spectroscopic insights |
| OMol25 Leaderboard [24] | Community benchmarking platform | Tracks model performance across various chemical tasks to guide method selection |

Implications for Spectroscopy and Drug Discovery

The emergence of these large-scale datasets, particularly OMol25, has profound implications for spectroscopic validation and pharmaceutical development. In spectroscopy, accurate prediction of electronic properties is crucial for interpreting experimental results. The benchmarking studies show that OMol25-trained models can reliably predict electron affinities and reduction potentials—properties directly related to redox processes and electronic transitions observed in spectroscopic techniques [23].

In pharmaceutical research, the integration of AI and quantum chemical calculations is transforming early-stage drug discovery. AI-powered approaches now routinely inform target prediction, compound prioritization, and pharmacokinetic property estimation [25]. The chemical space covered by OMol25, particularly its biomolecular and drug-like structures, provides the training foundation for these in silico screening platforms that have become frontline tools for triaging large compound libraries [25] [20]. Furthermore, the inclusion of local vibrational mode data in specialized datasets like QM40 offers direct insights into bond strengths, enabling more accurate predictions of metabolic stability and reactivity in drug candidates [18].

The landscape of quantum chemical data has evolved dramatically from the era of QM9 to the current paradigm represented by OMol25. This transformation enables the development of more robust, chemically diverse ML models that approach quantum chemical accuracy at a fraction of the computational cost. For researchers in spectroscopy and drug discovery, these resources provide unprecedented opportunities to connect computational predictions with experimental observables. The ongoing community efforts, exemplified by the OMol25 leaderboard, will continue to drive improvements in model reliability and applicability across the chemical sciences [24]. As these datasets grow and integrate more experimental benchmarks, they will increasingly serve as the foundation for predictive computational workflows that accelerate the discovery of new molecules and materials.

From Theory to Practice: AI-Enhanced Methodologies and Real-World Applications

The integration of artificial intelligence (AI) with robotics is catalyzing a fundamental transformation in scientific research, moving from human-directed experimentation to fully autonomous discovery systems. This paradigm shift addresses a critical bottleneck in high-throughput laboratories: while automated systems can execute thousands of reactions, the rapid, accurate analysis required for real-time decision-making has remained elusive. The IR-Bot platform emerges as a seminal case study in overcoming this limitation, representing a convergence of infrared spectroscopy, machine learning, and quantum chemistry that enables closed-loop experimentation without human intervention [26]. This system exemplifies the broader thesis that robust quantum chemical method validation is paramount for generating the reliable spectroscopic data that fuels trustworthy AI-driven analysis. By providing real-time, interpretable feedback on chemical reactions, IR-Bot demonstrates how validated computational methods can transition autonomous laboratories from concept to practical reality, thereby accelerating the pace of discovery in fields ranging from materials science to pharmaceutical development [26].

At its core, IR-Bot is an autonomous robotic platform designed for the real-time analysis of chemical mixtures. Its architecture seamlessly coordinates hardware and software components to close the loop between data acquisition and experimental decision-making. The physical system consists of a rail-mounted robot, two mobile units, and automated liquid handling components that prepare samples and transfer them to a Thermo Fisher Scientific Nicolet iS50 FT-IR spectrometer for analysis [26].

The analytical power is governed by a large-language-model-based "IR Agent" that orchestrates quantum chemical simulations, experimental data collection, and machine-learning-driven spectral interpretation [26]. This agent operates on a sophisticated two-step analytical framework: first, experimental spectra are aligned with simulated reference spectra to correct for experimental artifacts like noise and baseline drift; then, a pre-trained machine learning model predicts mixture composition from the aligned data [26]. This workflow ensures that the system can handle the complexities of real experimental data while leveraging the predictive power of models trained on accurate theoretical simulations.
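The two-step idea (correct artifacts, then predict composition) can be illustrated with a deliberately simpler stand-in. IR-Bot uses a pre-trained machine learning model for the second step; the sketch below instead uses classical least squares (CLS) unmixing against reference spectra, with a constant and a linear term in the basis to absorb baseline offset and drift. Everything here (band positions, widths, the 30/70 mixture) is synthetic, and real alignment would also need to handle noise and frequency shifts between simulated and measured spectra.

```python
import math

def gaussian_band(x, center, width, height=1.0):
    return height * math.exp(-((x - center) / width) ** 2)

def solve_linear(a, b):
    """Solve a small dense system a @ x = b by Gaussian elimination with partial pivoting."""
    n = len(a)
    m = [list(row) + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (m[r][n] - sum(m[r][c] * x[c] for c in range(r + 1, n))) / m[r][r]
    return x

def unmix(mixture, references, axis):
    """Classical least squares: mixture ~ sum_k c_k * ref_k + b0 + b1 * x_scaled.

    The two extra basis vectors absorb a constant offset and a linear baseline
    drift; the returned component fractions are normalized to sum to 1.
    """
    lo, hi = min(axis), max(axis)
    x_scaled = [(2.0 * v - lo - hi) / (hi - lo) for v in axis]  # map axis to [-1, 1]
    basis = [list(ref) for ref in references] + [[1.0] * len(axis), x_scaled]
    normal = [[sum(u * v for u, v in zip(bi, bj)) for bj in basis] for bi in basis]
    rhs = [sum(u * y for u, y in zip(bi, mixture)) for bi in basis]
    coeffs = solve_linear(normal, rhs)
    amounts = coeffs[: len(references)]
    total = sum(amounts)
    return [c / total for c in amounts]

if __name__ == "__main__":
    axis = [1000.0 + 5.0 * i for i in range(201)]  # synthetic wavenumber grid, cm^-1
    ref_a = [gaussian_band(x, 1700, 15) + 0.5 * gaussian_band(x, 1250, 20) for x in axis]
    ref_b = [gaussian_band(x, 1600, 12) + 0.8 * gaussian_band(x, 1350, 25) for x in axis]
    # Synthetic "measured" mixture: 30% A, 70% B, plus baseline offset and drift
    mixture = [0.3 * a + 0.7 * b + 0.05 + 1e-4 * (x - 1000.0)
               for a, b, x in zip(ref_a, ref_b, axis)]
    print(unmix(mixture, [ref_a, ref_b], axis))
```

On this noiseless synthetic mixture, `unmix` recovers fractions of approximately 0.3 and 0.7 despite the added baseline. With real data the fit quality depends on how well the simulated reference spectra match experiment, which is why the quantum chemical references need validation.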

Table: Core Components of the IR-Bot System

| Component Type | Specific Implementation | Function |
|---|---|---|
| Robotics Platform | Rail-mounted robot with mobile units | Sample preparation and transfer |
| Spectrometer | Nicolet iS50 FT-IR (Thermo Fisher Scientific) | Infrared spectral acquisition |
| Computational Engine | Large Language Model (LLM) "IR Agent" | Coordinates quantum simulations and ML analysis |
| Analytical Framework | Two-step alignment-prediction model | Corrects spectral artifacts and predicts composition |
| Quantum Chemical Foundation | DFT calculations for reference spectra | Provides validated theoretical spectra for machine learning |

Experimental Protocol & Methodologies

IR-Bot Experimental Protocol

The validation of IR-Bot's capabilities followed a rigorous experimental protocol centered on a Suzuki coupling reaction between benzoyl chloride and 4-cyanophenylboronic acid pinacol ester [26]. To systematically evaluate performance, researchers employed a reductionist approach: rather than analyzing the complete multi-component reaction mixture initially, they studied simplified binary and ternary systems containing only product and by-product components. This controlled strategy enabled precise validation of the system's predictive performance while minimizing spectral complexity.

The automated workflow began with robotic sample preparation and transfer to the FT-IR spectrometer. Upon spectral acquisition, the raw data underwent preprocessing to address instrumental variations before alignment with quantum chemically derived reference spectra. The machine learning model—pre-trained on these theoretical spectra—then predicted mixture compositions, with the IR Agent providing explainable insights by identifying influential vibrational features such as carbon-boron and carbonyl stretching modes that drove the predictions [26]. This emphasis on interpretability builds crucial user confidence in the automated analysis, addressing the "black box" concern common to many AI systems.

Quantum Chemical Validation Methodology

The accuracy of IR-Bot's predictions fundamentally depends on the quality of its reference data, which originates from rigorously validated quantum chemical calculations. These methodologies employ composite post-Hartree-Fock schemes and hybrid coupled-cluster/density functional theory (DFT) approaches to predict structural and ro-vibrational spectroscopic properties [27]. For flexible molecular systems, where spectroscopic signatures arise from complex conformational equilibria, specialized treatments are essential. Researchers employ the second-order vibrational perturbation theory (VPT2) framework alongside anharmonic approaches based on the discrete variable representation (DVR) to manage large-amplitude motions related to internal rotations [27].
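To show what second-order vibrational perturbation theory adds on top of a harmonic frequency calculation, the sketch below turns harmonic wavenumbers $\omega_i$ and anharmonic constants $x_{ij}$ into fundamentals using the standard second-order energy expression $E(\mathbf{v}) = \sum_i \omega_i (v_i + \tfrac12) + \sum_{i \le j} x_{ij} (v_i + \tfrac12)(v_j + \tfrac12)$. Computing the $x_{ij}$ themselves requires cubic and quartic force constants from an electronic-structure code, so here they are hypothetical inputs; resonance handling (e.g., Fermi resonances) and the DVR treatment of large-amplitude torsions are outside this sketch.

```python
def vpt2_fundamentals(omega, x):
    """Fundamental wavenumbers nu_i = omega_i + 2 x_ii + (1/2) sum_{j != i} x_ij.

    omega: harmonic wavenumbers (cm^-1); x: symmetric anharmonicity matrix (cm^-1)
    storing x_ij for i < j in both x[i][j] and x[j][i].
    """
    n = len(omega)
    if len(x) != n or any(len(row) != n for row in x):
        raise ValueError("x must be an n-by-n matrix matching omega")
    fundamentals = []
    for i in range(n):
        coupling = sum(x[i][j] for j in range(n) if j != i)
        fundamentals.append(omega[i] + 2.0 * x[i][i] + 0.5 * coupling)
    return fundamentals

if __name__ == "__main__":
    # Illustrative constants (cm^-1), not taken from any real molecule
    print(vpt2_fundamentals([3000.0], [[-60.0]]))  # prints [2880.0]
    print(vpt2_fundamentals([3000.0, 1500.0], [[-60.0, -10.0], [-10.0, -5.0]]))
```

For a single mode with $x_{11} = -\omega_e x_e$, the formula reduces to the familiar diatomic result $\nu = \omega_e - 2\omega_e x_e$; with the illustrative values above, the harmonic estimate of 3000 cm⁻¹ overshoots the anharmonic fundamental by 120 cm⁻¹.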

Validation of these quantum chemical methods typically involves comparing computed spectroscopic data with high-resolution experimental measurements for benchmark systems. For instance, studies on glycolic acid demonstrate how computed infrared spectroscopic data complement experimental investigations, enhancing the possibility of detecting molecules in complex mixtures [27]. Similarly, DFT calculations using functionals like B3LYP with the 6-311+G(d,p) basis set have proven effective for reproducing molecular structures and predicting vibrational frequencies, as evidenced by studies on compounds like phenylephrine [5]. This rigorous validation ensures that the theoretical spectra serving as IR-Bot's training data accurately represent molecular vibrational signatures.

Performance Comparison: IR-Bot vs. Traditional Analytical Methods

A critical evaluation of IR-Bot necessitates comparison against established analytical techniques. While traditional methods like nuclear magnetic resonance (NMR), mass spectrometry (MS), and high-performance liquid chromatography (HPLC) remain gold standards for definitive structural elucidation, they present significant limitations for real-time feedback in autonomous workflows [26]. These techniques often require extensive sample preparation, are relatively slow, and demand substantial human intervention—creating a bottleneck for closed-loop experimentation.

Table: Performance Comparison of Analytical Methods for Autonomous Experimentation

| Method | Throughput | Automation Compatibility | Quantitative Accuracy | Best Use Case |
|---|---|---|---|---|
| IR-Bot | High (Real-time) | Excellent (Fully autonomous) | High for key components | Real-time reaction monitoring & optimization |
| NMR Spectroscopy | Low (Minutes to hours) | Poor (Significant human intervention) | Excellent | Definitive structural elucidation |
| Mass Spectrometry | Medium (Minutes) | Moderate (Limited automation) | High with standards | Compound identification & quantification |
| HPLC | Medium (Minutes per sample) | Moderate (Automated injection possible) | Excellent with calibration | Separation and quantification of complex mixtures |

IR-Bot's distinctive advantage lies in its combination of speed, automation compatibility, and minimal sample preparation requirements. In the demonstrated Suzuki coupling application, the system successfully provided accurate quantification of mixture compositions rapidly enough to inform experimental decisions—a capability traditional methods cannot deliver in comparable timeframes [26]. However, the researchers emphasize that IR-Bot complements rather than replaces high-resolution tools; its role is to provide rapid, actionable data for autonomous decision-making, while traditional methods remain essential for definitive characterization.

The Scientist's Toolkit: Essential Research Reagents & Solutions

The implementation of AI-powered autonomous experimentation systems like IR-Bot requires both physical and computational resources. The table below details key components essential for establishing similar autonomous experimentation platforms.

Table: Essential Research Reagents and Computational Tools for AI-Powered Autonomous Experimentation

| Item | Function/Role | Example from IR-Bot Study |
|---|---|---|
| FT-IR Spectrometer | Provides vibrational spectral data for real-time analysis | Nicolet iS50 FT-IR (Thermo Fisher Scientific) [26] |
| Quantum Chemistry Software | Generates theoretical reference spectra for machine learning training | DFT calculations (e.g., B3LYP/6-311+G(d,p)) [5] |
| Robotic Liquid Handling System | Automates sample preparation and transfer | Custom rail-mounted robot with mobile units [26] |
| Machine Learning Framework | Enables spectral interpretation and prediction | Two-step alignment-prediction model with explainable AI [26] |
| Reference Chemical Compounds | Validation and calibration of analytical methods | Suzuki reaction components: benzoyl chloride, 4-cyanophenylboronic acid pinacol ester [26] |

Integration with Broader Research: Data Fusion & FAIR Principles

The development of systems like IR-Bot occurs within a broader scientific context emphasizing data integration and reusability. Recent advances in chemometrics demonstrate how data fusion techniques can significantly enhance spectroscopic analysis. The Complex-level Ensemble Fusion (CLF) approach, for instance, is a two-layer chemometric algorithm that jointly selects variables from concatenated mid-infrared (MIR) and Raman spectra with a genetic algorithm, projects them with partial least squares, and stacks the latent variables into an XGBoost regressor [28]. This method has demonstrated superior predictive accuracy compared to single-source models and classical fusion schemes, highlighting the potential of combining multiple spectroscopic techniques—a logical future direction for platforms like IR-Bot.
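Only the first ingredient of such a fusion scheme, placing the MIR and Raman blocks on a common footing before concatenation, is sketched below. Block scaling (autoscaling each block, then weighting it by $1/\sqrt{p}$ for $p$ variables so that each block carries equal total variance) is a common low-level fusion convention and is an assumption here, not a documented detail of CLF; the genetic-algorithm selection, PLS projection, and XGBoost stacking layers are not shown.

```python
import math

def autoscale(block):
    """Mean-center each column and divide by its sample standard deviation."""
    n_rows = len(block)
    if n_rows < 2:
        raise ValueError("need at least two samples to autoscale")
    n_cols = len(block[0])
    out = [[0.0] * n_cols for _ in range(n_rows)]
    for j in range(n_cols):
        col = [row[j] for row in block]
        mean = sum(col) / n_rows
        # Constant columns get sd = 1 so they stay at zero after centering
        sd = math.sqrt(sum((v - mean) ** 2 for v in col) / (n_rows - 1)) or 1.0
        for i in range(n_rows):
            out[i][j] = (col[i] - mean) / sd
    return out

def fuse_blocks(*blocks):
    """Block-scale each spectral block and concatenate the blocks sample-wise.

    Each autoscaled block is weighted by 1/sqrt(number of variables), so every
    block contributes the same total variance regardless of its width.
    """
    n_samples = len(blocks[0])
    if any(len(b) != n_samples for b in blocks):
        raise ValueError("all blocks must contain the same samples")
    fused = [[] for _ in range(n_samples)]
    for block in blocks:
        weight = 1.0 / math.sqrt(len(block[0]))
        for row_out, row in zip(fused, autoscale(block)):
            row_out.extend(v * weight for v in row)
    return fused

if __name__ == "__main__":
    # Tiny made-up blocks: 3 samples x 4 MIR variables, 3 samples x 2 Raman variables
    mir = [[1.0, 2.0, 0.5, 3.0], [2.0, 1.0, 0.7, 2.0], [4.0, 3.0, 0.2, 1.0]]
    raman = [[10.0, 0.1], [12.0, 0.4], [9.0, 0.2]]
    for row in fuse_blocks(mir, raman):
        print([round(v, 3) for v in row])
```

Without this weighting, a spectrometer that records many more wavenumbers would dominate the concatenated matrix simply because it contributes more columns.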

Furthermore, the emerging FAIR (Findable, Accessible, Interoperable, and Reusable) data principles are becoming increasingly crucial for spectroscopic data collections [29]. Maintaining data in a form that allows critical metadata extraction increases the probability that data will be findable and reusable both during research and after publication. For AI-powered systems, following FAIRSpec-ready guidelines ensures instrument datasets are unambiguously associated with chemical structure, facilitating the creation of larger, more reliable training datasets that improve model performance across autonomous platforms.

The IR-Bot system represents a significant milestone in autonomous experimentation, successfully demonstrating how the integration of robotics, infrared spectroscopy, and machine learning—grounded in rigorously validated quantum chemical data—can overcome the critical bottleneck of real-time analysis in automated laboratories. While traditional analytical methods retain their importance for definitive characterization, IR-Bot's capacity for providing rapid, actionable feedback enables truly closed-loop experimentation where robots not only perform experiments but also understand and optimize them in real time.

The future trajectory of such systems points toward expanded applicability across diverse reaction types, increased incorporation of multi-technique data fusion, and greater adherence to FAIR data principles that enhance reusability and collaborative development. As quantum chemical methods continue to advance and machine learning models become increasingly sophisticated, the validation of spectroscopic data will remain the foundational element ensuring the reliability and adoption of autonomous platforms across chemical and pharmaceutical research.

The identification of unknown chemical threats, including novel psychoactive substances and toxic agents, represents a significant challenge in forensic science and public safety. Mass spectrometry (MS) is a powerful analytical technique that provides precise molecular identification, with the global mass spectrometry market poised to grow from US$ 6.69 billion in 2025 to US$ 13.33 billion by 2035 [30]. However, confident annotation of mass spectra relies on reference spectra from analytical standards, which are often unavailable for newly emerging threat compounds [31].

Quantum Chemical Mass Spectrometry (QCxMS) has emerged as a powerful computational approach that bridges this identification gap by predicting mass spectra directly from molecular structures without relying on experimental reference data or pre-existing databases [31] [32]. This first-principles method enables researchers to simulate and analyze substances for which chemical standards are inaccessible, making it particularly valuable for threat identification scenarios. This article provides a comprehensive comparison of QCxMS methodologies, their performance relative to alternative approaches, and detailed experimental protocols for implementation in research settings focused on threat detection and characterization.

QCxMS Methodology and Workflow

Theoretical Foundations

QCxMS employs quantum chemical calculations to simulate electron ionization (EI) mass spectra through Born-Oppenheimer molecular dynamics (MD) simulations combined with fragmentation pathways [33]. The method operates on the principle that molecular fragmentation patterns following electron ionization can be predicted through computational modeling of molecular dynamics, without requiring experimental reference data [32]. This first-principles approach contrasts with data-driven statistical methods that depend on extensive databases of known spectra.

The recently introduced QCxMS2 program represents a significant methodological evolution: instead of the extensive molecular dynamics sampling employed by the original QCxMS, it uses automated reaction network discovery, transition state theory, and Monte-Carlo simulations [34]. Because it works with stationary points on the potential energy surface rather than long trajectories, QCxMS2 is efficient enough to use more accurate quantum chemical methods, which improves spectral accuracy and robustness [34].
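To illustrate how rate theory and Monte-Carlo sampling can turn barrier heights into fragment abundances, the sketch below uses canonical Eyring transition state theory (transmission coefficient 1, barriers treated as free energies) for two hypothetical competing fragmentation channels and samples which channel fires in proportion to its rate. This is a deliberately simplified stand-in, not the QCxMS2 implementation, which works on a full reaction network and handles the internal energy of the ion in its own way [34]. The channel names, barriers, and temperature are illustrative assumptions.

```python
import math
import random

K_B = 1.380649e-23    # Boltzmann constant, J/K
H = 6.62607015e-34    # Planck constant, J s
EV = 1.602176634e-19  # joules per electronvolt


def eyring_rate(barrier_ev: float, temperature_k: float) -> float:
    """Canonical Eyring TST rate constant in s^-1 (transmission coefficient = 1)."""
    return K_B * temperature_k / H * math.exp(-barrier_ev * EV / (K_B * temperature_k))


def sample_channels(barriers_ev: dict[str, float], temperature_k: float,
                    n_events: int = 20000, seed: int = 1) -> dict[str, float]:
    """Monte Carlo: each event picks one competing channel with probability k_i / sum(k)."""
    names = list(barriers_ev)
    rates = [eyring_rate(barriers_ev[n], temperature_k) for n in names]
    rng = random.Random(seed)  # seeded for reproducibility
    picks = rng.choices(names, weights=rates, k=n_events)
    return {n: picks.count(n) / n_events for n in names}
```

For example, `sample_channels({"loss_A": 1.0, "loss_B": 1.2}, 2000.0)` assigns roughly three quarters of the events to the lower-barrier channel, since the rate ratio is exp(-0.2 eV / k_B T).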

Standard Workflow Implementation

The QCxMS computational workflow typically involves multiple sequential steps that transform a molecular structure into a predicted mass spectrum. Two primary workflows exist: the command-line implementation for HPC environments and the Galaxy platform implementation designed for non-expert users.

Diagram 1: The complete QCxMS workflow for mass spectrum prediction, beginning with molecular structure input and proceeding through format conversion, geometry optimization, and quantum chemical calculations to generate the final predicted spectrum.

Key Computational Components

The QCxMS workflow relies on several specialized computational components that work in concert to predict mass spectra:

xTB Molecular Optimization: Optimizes molecular structures using extended tight-binding semi-empirical quantum mechanical methods, primarily GFN2-xTB or GFN1-xTB, which offer a good balance between accuracy and computational efficiency [31]. The optimization process adjusts atomic coordinates to minimize the energy of the molecular structure, producing an optimized XYZ file for subsequent calculations [31].

QCxMS Neutral Run: Initiates quantum chemistry simulations using either GFN2-xTB or GFN1-xTB semi-empirical methods, processing the molecule structure to generate trajectories for production runs [31]. This step creates collections of .in, .start, and .xyz files containing information about individual trajectories for the production run [31].

QCxMS Production Run: Processes trajectories generated by the neutral run and performs detailed quantum chemistry calculations to simulate mass spectra, initiating one job per trajectory [31]. This computationally intensive step recreates the directory structure and performs the core calculations that simulate the fragmentation processes [31].

QCxMS Get Results: Aggregates multiple .res files from the production run to produce a simulated mass spectrum in MSP format using the PlotMS tool [31]. This final processing step generates the predicted high-resolution mass spectra for all molecules contained in the starting SDF file [31].
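In practice PlotMS performs this aggregation. Since the layout of the .res files is not described here, the sketch below starts from a hypothetical in-memory representation (one dictionary of m/z to intensity per trajectory), sums the contributions, and writes a single record using the common NIST-style MSP fields `Name:` and `Num Peaks:`. The base-peak scaling to 100 and the function names are assumptions for illustration.

```python
from collections import Counter


def aggregate_fragments(trajectory_fragments: list[dict[float, float]]) -> dict[float, float]:
    """Sum fragment intensities (m/z -> weight) over all trajectories."""
    total: Counter = Counter()
    for fragments in trajectory_fragments:
        total.update(fragments)  # Counter.update adds values key by key
    return dict(total)


def to_msp(name: str, spectrum: dict[float, float], base_peak: float = 100.0) -> str:
    """Render one spectrum as an MSP record, scaled so the largest peak equals base_peak."""
    top = max(spectrum.values())
    lines = [f"Name: {name}", f"Num Peaks: {len(spectrum)}"]
    for mz in sorted(spectrum):
        lines.append(f"{mz:.4f} {base_peak * spectrum[mz] / top:.2f}")
    return "\n".join(lines) + "\n"
```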

Performance Comparison and Experimental Data

Methodological Comparison: QCxMS vs. QCxMS2

Recent advancements in computational mass spectrometry have introduced QCxMS2 as a successor to the original QCxMS methodology. The table below compares their key characteristics and performance metrics based on experimental validation studies.

Table 1: Performance comparison between QCxMS and QCxMS2

| Parameter | QCxMS | QCxMS2 | Performance Implication |
|---|---|---|---|
| Computational Approach | Born-Oppenheimer molecular dynamics (MD) simulations [33] | Automated reaction network discovery with transition state theory and Monte-Carlo simulations [34] | QCxMS2 uses stationary points on PES enabling higher-level theory |
| Default QM Method | GFN2-xTB (Semi-empirical) [31] | GFN2-xTB + ωB97X-3c (Composite approach) [34] | QCxMS2 achieves better accuracy with similar efficiency |
| Average Spectral Match | 0.622 [34] | 0.700 (composite), 0.730 (full ωB97X-3c) [34] | 12.5-17.4% improvement in prediction accuracy |
| Minimal Match Score | 0.100 [34] | 0.498-0.527 [34] | Significantly improved robustness and reliability |
| Test Set Size | 16 diverse organic and inorganic molecules [34] | Same 16-molecule test set [34] | Directly comparable performance metrics |
| Charge State Support | Singly charged ions (EI, CID) [32] | Extended to negative and multiple charges [32] | Broader applicability to different ionization modes |
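The scoring function behind the match values in Table 1 is defined in [34] and is not reproduced here. As a hedged illustration of how a simulated and a reference spectrum can be compared, the sketch below bins both spectra to nominal (integer) m/z and computes a cosine (dot-product) similarity, a common measure in EI-MS library matching; it will not reproduce the Table 1 numbers. It also checks the relative improvements quoted in the table: (0.700 − 0.622)/0.622 and (0.730 − 0.622)/0.622.

```python
import math
from collections import defaultdict


def bin_unit_mass(peaks: list[tuple[float, float]]) -> dict[int, float]:
    """Sum intensities into nominal (integer) m/z bins."""
    binned: dict[int, float] = defaultdict(float)
    for mz, intensity in peaks:
        binned[round(mz)] += intensity
    return dict(binned)


def cosine_similarity(spec_a: list[tuple[float, float]], spec_b: list[tuple[float, float]]) -> float:
    """Cosine similarity of two spectra after unit-mass binning (0 = disjoint, 1 = identical shape)."""
    a, b = bin_unit_mass(spec_a), bin_unit_mass(spec_b)
    dot = sum(a[m] * b.get(m, 0.0) for m in a)
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    if norm_a == 0.0 or norm_b == 0.0:
        return 0.0
    return dot / (norm_a * norm_b)


def relative_improvement(new_score: float, baseline_score: float) -> float:
    """Fractional gain of new_score over baseline_score."""
    return (new_score - baseline_score) / baseline_score


print(f"{relative_improvement(0.700, 0.622):.1%}")  # composite QCxMS2 vs QCxMS
print(f"{relative_improvement(0.730, 0.622):.1%}")  # full ωB97X-3c vs QCxMS
```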

Computational Resource Requirements

The computational demands of QCxMS simulations vary significantly with molecular size and chemical composition. The following table summarizes resource requirements for different molecular types, illustrating how cost grows with these factors.

Table 2: Computational resource requirements for QCxMS calculations [31]

| Molecule | Number of Atoms | Chemical Composition | CPU Cores | Job Runtime (hours) | Memory (TB) |
|---|---|---|---|---|---|
| Ethylene | 6 | C, H | 155 | 9.62 | 0.58 |
| Benzophenone | 24 | C, H, O | 605 | 188.62 | 2.25 |
| Enilconazole | 33 | C, H, N, O, Cl | 830 | 477.84 | 3.08 |
| Mirex | 22 | C, Cl | 555 | 575.26 | 2.06 |

The presence of specific elements, particularly chlorine in mirex and enilconazole, contributes significantly to computational complexity and therefore to resource consumption [31]. For instance, predicting the spectrum of mirex (22 atoms, including chlorine) took approximately three times longer than predicting that of the comparably sized benzophenone (24 atoms) [31]. At the other extreme, ethylene, with just 6 atoms, required roughly one-fifth of the CPU cores and memory, a job runtime about 50 times shorter, and about one-sixth of the CPU usage of the 33-atom enilconazole [31].
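The ratios quoted above can be checked directly against Table 2. The sketch below copies the table values into a dictionary and computes them; the helper name `ratio` is illustrative. As an observation from the numbers alone (not a statement made in [31]), every core count equals 25 × the atom count plus 5, which would be consistent with one job per trajectory at the default ntraj of 25 × the number of atoms.

```python
# Values copied from Table 2 [31]
RESOURCES = {
    "ethylene":     {"atoms": 6,  "cores": 155, "runtime_h": 9.62,   "memory_tb": 0.58},
    "benzophenone": {"atoms": 24, "cores": 605, "runtime_h": 188.62, "memory_tb": 2.25},
    "enilconazole": {"atoms": 33, "cores": 830, "runtime_h": 477.84, "memory_tb": 3.08},
    "mirex":        {"atoms": 22, "cores": 555, "runtime_h": 575.26, "memory_tb": 2.06},
}


def ratio(numerator: str, denominator: str, key: str) -> float:
    """Ratio of one resource metric between two molecules."""
    return RESOURCES[numerator][key] / RESOURCES[denominator][key]


print(f"mirex / benzophenone runtime: {ratio('mirex', 'benzophenone', 'runtime_h'):.2f}")
print(f"enilconazole / ethylene runtime: {ratio('enilconazole', 'ethylene', 'runtime_h'):.1f}")
print(f"enilconazole / ethylene cores: {ratio('enilconazole', 'ethylene', 'cores'):.2f}")
print(f"enilconazole / ethylene memory: {ratio('enilconazole', 'ethylene', 'memory_tb'):.2f}")
```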

Comparison with Alternative Approaches

QCxMS occupies a unique position in the landscape of mass spectral prediction methods, which can be broadly classified into two categories: first-principles physical-based simulation and data-driven statistical methods [33].

Table 3: Comparison of mass spectral prediction methodologies

| Methodology | Representative Tools | Theoretical Basis | Data Requirements | Advantages | Limitations |
|---|---|---|---|---|---|
| First-Principles Physical Simulation | QCxMS, QCxMS2, GFNn-xTB | Born-Oppenheimer MD with fragmentation pathways [33] | No experimental spectra needed | Works for novel compounds without reference data [32] | Computationally intensive [31] |
| Data-Driven Statistical Methods | CFM-ID, Deep Neural Networks | Rule-based fragmentation, machine learning [33] | Large databases of known spectra | Faster prediction for known compound classes | Limited to chemical space in training data |
| Quantum Theory Based | QET, RRKM theories | Quasi-equilibrium theory, Rice–Ramsperger–Kassel–Marcus theories [33] | Physical parameters | Strong theoretical foundation | Limited applicability to complex systems |

Experimental Protocols

Standard QCxMS Implementation Protocol

For researchers implementing QCxMS in command-line environments, the following protocol outlines the essential steps:

Input Preparation: Prepare a file with the equilibrium structure of your target molecule. For CID mode, the molecule must be protonated, which can be accomplished with the protonation tool of CREST [35]. Structure files can utilize formats supported by the MCTC library, including coord and xyz file formats [35].

Input File Configuration: Prepare an input file called qcxms.in. If no file is prepared, the default options run GFN2-xTB with ntraj = 25 × the number of atoms in the molecule [35]. Key parameters include:

<method>: Mass spectrometry method (ei, cid, dea)

<program>: Quantum chemistry program (xtb, tmol, orca, mndo, dftb)

charge <integer>: Charge of M+ (1 for EI and CID)

ntraj <integer>: Number of trajectories (default: 25 × number of atoms)

Ground State Trajectory Generation: Execute qcxms for the first time to generate the ground state (GS) trajectory from which information is taken for the production trajectories. After equilibration steps, the files trjM and qcxms.gs are generated [35]. For correct sampling of the GS trajectory, it is recommended to conduct this initial run with a low-cost method such as GFN2-xTB or GFN1-xTB [35].

Production Run Preparation: Execute qcxms a second time after the GS run has finished. If qcxms.gs exists, this creates a TMPQCXMS folder and prepares the specifications for the parallel production runs [35].

Production Run Execution: On computer clusters with a queuing system, use the q-batch script to run the parallel computations. For local execution, use the pqcxms script, where -j sets the number of parallel jobs and -t the number of OpenMP threads: pqcxms -j <integer> -t <integer> & [35].

Result Analysis: Monitor the QCxMS run status by changing to the working directory and typing getres, which provides the tmpqcxms.res file that can be plotted with PlotMS [35]. For detailed analysis of individual runs, examine the TMPQCXMS/TMP.X folders [35].

Galaxy Platform Implementation

For researchers without extensive computational expertise, the Galaxy platform provides a user-friendly web interface to QCxMS tools:

Data Import and Pre-processing: Begin by importing molecular structures in SMILES format. Convert SMILES to SDF format using Galaxy's Compound Conversion tool (MDL MOL format) [33].

3D Conformer Generation: Use the Generate Conformers tool to create three-dimensional molecular conformers. The number of conformers can be specified as an input parameter, with a default value of 1 [33]. This step turns the 2D connectivity into explicit 3D geometries, typically with an empirical force field rather than quantum mechanics.

Format Conversion: Convert generated conformers from SDF format to Cartesian coordinate (XYZ) format using Compound Conversion tool. The XYZ format lists atoms in a molecule and their respective 3D coordinates, which is required for subsequent computational steps [33].

Molecular Optimization: Execute the xTB molecular optimization tool to optimize the molecular structures. The accuracy level of the geometry optimization can be adjusted to user needs, and the output is an optimized XYZ file containing the coordinates of the molecules after optimization [31].

QCxMS Execution: Run the three sequential QCxMS tools (neutral run, production run, get results) through the Galaxy interface. The platform automatically manages data transfer between steps and handles collection of output files [31].

Result Retrieval: Access the final predicted mass spectrum in MSP format, which can be directly used by annotation software or exported for further analysis [31].
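For readers who want to reproduce the conversion and optimization steps outside Galaxy, the sketch below converts a single V2000 MOL block (one SDF record) to XYZ text using the fixed-width atom columns of the V2000 format, and builds an xtb optimization command using its `--opt` level and `--gfn` options. V3000 files are not handled, and the function names are hypothetical; the Galaxy tools themselves may use different libraries and settings.

```python
XTB_OPT_LEVELS = ("crude", "sloppy", "loose", "lax", "normal", "tight", "vtight", "extreme")


def molblock_to_xyz(molblock: str, comment: str = "") -> str:
    """Convert one V2000 MOL block to XYZ text (atom count, comment, symbol x y z)."""
    lines = molblock.splitlines()
    n_atoms = int(lines[3][0:3])  # counts line: columns 1-3 hold the atom count
    xyz = [str(n_atoms), comment]
    for line in lines[4:4 + n_atoms]:
        # V2000 atom line: x, y, z in 10-character fields, then a space, then the symbol
        x, y, z = float(line[0:10]), float(line[10:20]), float(line[20:30])
        symbol = line[31:34].strip()
        xyz.append(f"{symbol:<2} {x:12.6f} {y:12.6f} {z:12.6f}")
    return "\n".join(xyz) + "\n"


def xtb_opt_command(xyz_path: str, level: str = "normal", gfn: int = 2) -> list[str]:
    """Command line for an xtb geometry optimization at the chosen accuracy level."""
    if level not in XTB_OPT_LEVELS:
        raise ValueError(f"unknown optimization level: {level}")
    if gfn not in (1, 2):
        raise ValueError("gfn must be 1 or 2")
    return ["xtb", xyz_path, "--opt", level, "--gfn", str(gfn)]
```

The returned command can be passed to `subprocess.run(cmd, check=True)`; xtb then writes the optimized geometry to its own output file in the working directory.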

Key Parameter Optimization

For optimal performance in threat identification scenarios, certain QCxMS parameters may require adjustment:

Trajectory Count: The default number of trajectories (25 × number of atoms) provides a balance between computational cost and statistical reliability. For more complex molecules or higher accuracy requirements, increasing this value may be necessary [35].

Impact Excess Energy: For larger threat compounds with more degrees of freedom, the default impact excess energy per atom (ieeatm = 0.6 eV/atom) may be too low, potentially requiring adjustment to ensure adequate fragmentation [35].

Temperature Settings: Initial temperature (tinit) defaults to 500 K, while electronic temperature (etemp) defaults to 5000 K. These parameters influence the dynamics of fragmentation and may require compound-specific optimization [35].
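To see why a fixed ieeatm can leave larger molecules under-fragmented, consider a crude equipartition picture. This is an assumption for illustration, not how QCxMS actually samples energies: the total impact excess energy is taken as ieeatm × N and spread evenly over the 3N − 6 vibrational modes. At the default 0.6 eV/atom, ethylene (N = 6) then receives 3.6 eV over 12 modes, or 0.30 eV per mode, while enilconazole (N = 33) receives 19.8 eV over 93 modes, or about 0.21 eV per mode.

```python
def mean_iee_ev(n_atoms: int, ieeatm: float = 0.6) -> float:
    """Assumed total impact excess energy: ieeatm (eV/atom) x number of atoms."""
    return ieeatm * n_atoms


def iee_per_mode_ev(n_atoms: int, ieeatm: float = 0.6, linear: bool = False) -> float:
    """Crude equipartition: spread the assumed total IEE evenly over the vibrational modes."""
    modes = 3 * n_atoms - (5 if linear else 6)
    if modes < 1:
        raise ValueError("molecule has no vibrational modes")
    return mean_iee_ev(n_atoms, ieeatm) / modes


for name, n in [("ethylene", 6), ("benzophenone", 24), ("enilconazole", 33)]:
    print(f"{name:>12}: {iee_per_mode_ev(n):.3f} eV per mode")
```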

Table 4: Essential research reagents and computational resources for QCxMS implementation

| Resource Category | Specific Tools/Solutions | Function/Purpose | Availability |
|---|---|---|---|
| Quantum Chemistry Packages | QCxMS (v5.2.1), xTB | Core quantum chemical calculations and molecular optimization [31] | GitHub repositories |
| Computational Platforms | Galaxy Platform | User-friendly web interface for HPC resources [31] | usegalaxy.eu |
| Visualization Tools | PlotMS (v6.2.0) | Generation of mass spectral data and visualization [31] | Included in QCxMS |
| Format Conversion Tools | Open Babel, Compound Conversion | Interconversion between molecular structure formats [31] [33] | Galaxy tools, standalone |
| Containerization | Docker | Encapsulation of software stack for enhanced reproducibility [31] | Docker Hub |
| Spectral Databases | Wiley Mass Spectra of Designer Drugs | Reference spectra for emerging threat compounds [30] | Commercial |