The N-Representability Problem in Reduced Density Matrices: Foundations, Methods, and Applications in Drug Discovery


Abstract

This article provides a comprehensive overview of the N-representability problem, a central challenge in quantum chemistry and electronic structure theory that ensures reduced density matrices (RDMs) derive from valid physical N-electron wave functions. We explore the foundational concepts, including the critical role of spin symmetry and ensemble mixedness, and detail cutting-edge methodological advances from analytical reconstructions to hybrid quantum-stochastic algorithms. The discussion extends to practical troubleshooting of common issues like the BBGKY hierarchy truncation and shot noise, alongside rigorous validation techniques. Finally, we examine the profound implications of solving the N-representability problem for enhancing the accuracy and efficiency of quantum simulations in drug development and biomedical research.

Understanding the N-Representability Problem: From Pauli's Principle to Spin Symmetries

The Core Concept: What is the N-Representability Problem?

The N-representability problem is a fundamental challenge in quantum mechanics, particularly in electronic structure theory. In simple terms, it asks: Given a p-body reduced density matrix (p-RDM), can we be certain it originated from a physically valid, N-particle quantum system? [1] [2]

When you calculate the energy of a system with pairwise interactions (like electrons in a molecule), you only need the 2-body reduced density matrix (2-RDM), not the vastly more complicated full N-body wavefunction [2]. The N-representability problem is the task of finding the necessary and sufficient constraints that a 2-RDM must satisfy to ensure it could have come from a physically allowed N-body state [2] [3]. Without these constraints, variational calculations can collapse, yielding energies that are lower than the true ground state energy—a physically nonsensical result [2].
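To make the claim about pairwise interactions concrete, the energy can be written as a linear functional of the RDMs. The block below is a sketch in one common convention (spin-orbital indices, physicist-notation two-electron integrals); the symbols \( h_{pq} \), \( \langle pq|rs \rangle \), \( {}^1D \), and \( {}^2D \) and the chosen normalization are assumptions introduced here, and other references use different normalizations.

```latex
E = \sum_{pq} h_{pq}\, {}^{1}D_{pq}
  + \frac{1}{2} \sum_{pqrs} \langle pq | rs \rangle\, {}^{2}D_{pq,rs},
\qquad
{}^{1}D_{pq} = \langle \Psi | a_p^\dagger a_q | \Psi \rangle,
\quad
{}^{2}D_{pq,rs} = \langle \Psi | a_p^\dagger a_q^\dagger a_s a_r | \Psi \rangle,
\\[6pt]
{}^{1}D_{pr} = \frac{1}{N-1} \sum_{q} {}^{2}D_{pq,rq}
```

In this convention the 1-RDM follows from the 2-RDM by contraction, so the 2-RDM alone fixes the energy. The variational difficulty is that minimizing \( E \) over matrices \( {}^2D \) is only meaningful if the search is restricted to N-representable ones.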

N-Representability Problem: FAQs & Troubleshooting

FAQ 1: What happens if I use a non-N-representable matrix in my calculations?

The Problem: Your calculation may converge to an energy that is below the true ground state energy. This is a violation of the variational principle and renders the result invalid. This collapse happens because the search for the lowest energy is not constrained to physically possible states [2].

Troubleshooting Guide:

- Symptom: The computed energy is unphysically low.

- Diagnosis: The suspected 2-RDM in your calculation is likely not N-representable.

- Solution: Apply an algorithm designed to purify or correct the 2-RDM. Hybrid quantum-stochastic algorithms have been developed for this exact purpose, which evolve an initial state to produce a corrected, N-representable RDM [1] [2].

FAQ 2: Is the N-representability problem solved? The answer depends on whether you are working with a 1-body or 2-body RDM.

- For 1-RDMs: The problem for fermions is solved, with conditions known as the generalized Pauli constraints. For bosons, the corresponding one-particle problem is likewise solvable [3].

- For 2-RDMs: The problem is not solved in a practical, general form for either fermions or bosons. The number of necessary constraints grows exponentially with system size, making a complete solution intractable for all but the smallest systems [2] [3]. The problem of deciding N-representability for a general 2-RDM is classified as QMA-complete, a quantum generalization of NP-complete problems that emphasizes its extreme computational difficulty [3].
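As an illustration of why the 1-RDM case is tractable, the sketch below checks the well-known ensemble conditions for a fermionic 1-RDM in a spin-orbital basis: Hermiticity, occupation numbers between 0 and 1, and trace equal to N. The function name and tolerance are hypothetical choices made for this example. The check covers ensemble N-representability only; the pure-state generalized Pauli constraints mentioned above are stricter and are not implemented here.

```python
import numpy as np


def is_ensemble_n_representable_1rdm(gamma, n_particles, tol=1e-8):
    """Check ensemble N-representability of a fermionic 1-RDM (spin-orbital basis).

    Conditions tested: Hermitian, eigenvalues in [0, 1], trace equal to N.
    """
    gamma = np.asarray(gamma, dtype=complex)
    if not np.allclose(gamma, gamma.conj().T, atol=tol):
        return False
    occ = np.linalg.eigvalsh(gamma)  # natural occupation numbers
    in_range = occ.min() >= -tol and occ.max() <= 1 + tol
    right_trace = abs(occ.sum() - n_particles) <= tol
    return bool(in_range and right_trace)


# A Hartree-Fock-like 1-RDM for 2 electrons in 4 spin orbitals passes.
print(is_ensemble_n_representable_1rdm(np.diag([1.0, 1.0, 0.0, 0.0]), 2))
# An occupation above 1 violates the Pauli principle and fails.
print(is_ensemble_n_representable_1rdm(np.diag([1.2, 0.8, 0.0, 0.0]), 2))
```

No comparably compact test exists for the 2-RDM, which is the point of the complexity statements in this section.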

FAQ 3: Why is this problem so difficult? The complexity arises for two main reasons:

- Exponential Growth: The number of conditions needed to fully characterize a valid 2-RDM grows exponentially with the number of particles and orbitals in the system [1] [2].

- Computational Complexity: The problem is QMA-complete. Problems in this class are believed to be intractable even for quantum computers, which underscores its difficulty [3].

Featured Experimental Protocol: The Hybrid ADAPT-VQA Method

This protocol is based on the method described by Massaccesi et al. to determine and correct the N-representability of a p-RDM using a hybrid quantum-classical algorithm [1] [2].

Objective: To decide if a given target p-body matrix (e.g., a 2-RDM) is N-representable, and to find the closest N-representable p-RDM if it is not.

Principle: The algorithm starts with an initial N-body quantum state and applies a sequence of unitary operators to evolve it. The goal is to minimize the Hilbert-Schmidt distance between the p-RDM of the evolved state and the target p-body matrix. If the distance can be reduced to zero, the target is N-representable [2].

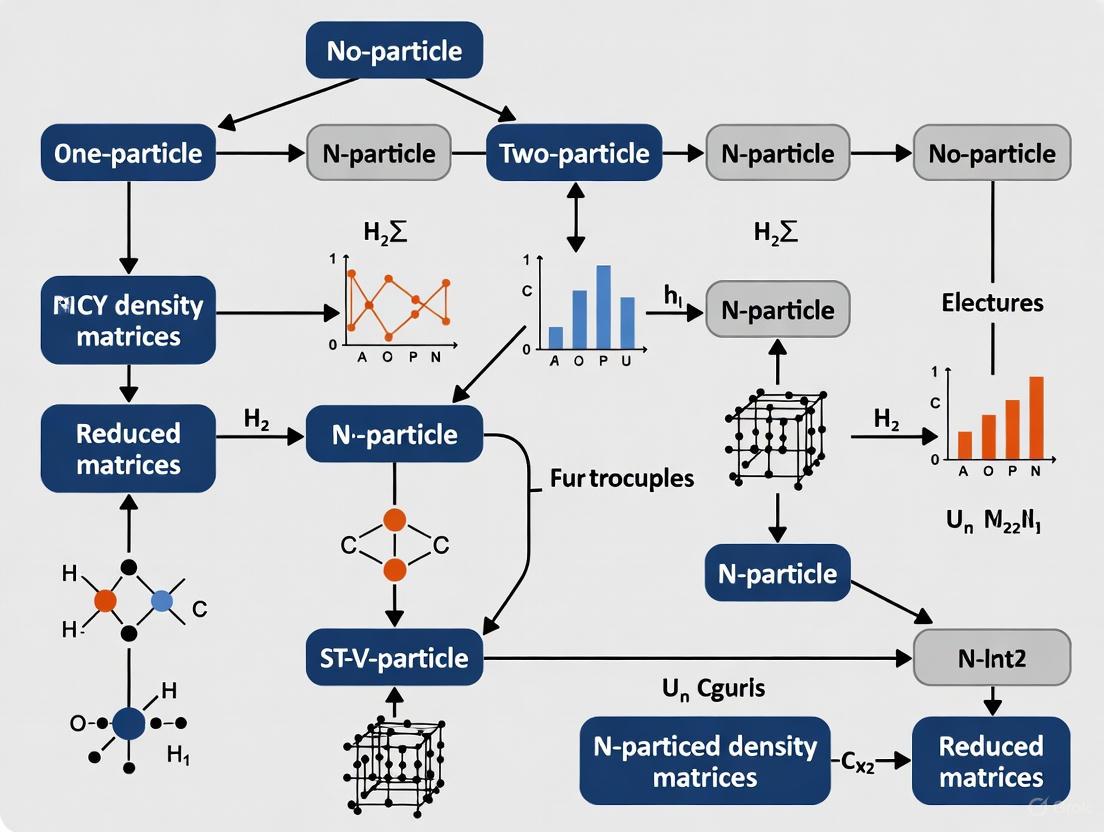

Workflow Diagram:

Step-by-Step Methodology:

- Initialization: Prepare an initial N-body quantum state, \( \rho_0 \) (e.g., an independent-particle-model state like a Hartree-Fock state) on the quantum computer [2].

- Ansatz Construction: Build a unitary evolution operator, \( U_n(\vec{\theta}_n) = e^{A_n(\vec{\theta}_n)} \), where \( A_n \) is an anti-Hermitian operator selected from a predefined pool of operators (typically single and double excitation operators for fermionic systems). The parameters \( \vec{\theta}_n \) are initially chosen at random [2].

- State Evolution: Apply the unitary to create a new trial state: \( \rho_n = U_n \rho_{n-1} U_n^\dagger \) [2].

- Distance Calculation: On the quantum computer, contract the evolved N-body state \( \rho_n \) to its p-body reduced density matrix \( {}^p\rho_n \). Compute the cost function, the Hilbert-Schmidt distance \( D_n = D({}^p\rho_n, {}^p\rho_t) \), to the target matrix [2].

- Classical Optimization: A classical stochastic optimizer (e.g., Simulated Annealing) uses the measured distance \( D_n \) to propose a new set of parameters \( \vec{\theta}_{n+1} \) and a new operator \( A_{n+1} \) to improve the ansatz [2].

- Convergence Check: Steps 2-5 are repeated. If the change in distance \( D_n - D_{n-1} \) is less than a predefined threshold \( \epsilon \) for a number of consecutive steps, the algorithm terminates. The final \( {}^p\rho_n \) is the best approximation of the closest N-representable matrix to the target [2].
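The loop above can be mimicked classically on a toy problem. The sketch below is not the published ADAPT-VQA implementation. It is a two-qubit analogue (qubits rather than fermions, p = 1) that evolves a pure state with unitaries drawn from a small, hypothetical operator pool and uses a simple simulated-annealing acceptance rule. It takes the Hilbert-Schmidt distance to be the Frobenius norm of the difference. The pool, step size, and cooling schedule are all assumptions chosen for illustration.

```python
import numpy as np

rng = np.random.default_rng(0)

I2 = np.eye(2, dtype=complex)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)

# Hypothetical pool of Hermitian generators H_k; A_k = i * H_k is anti-Hermitian.
POOL = [np.kron(X, Y), np.kron(Y, X), np.kron(Y, I2), np.kron(I2, Y), np.kron(X, I2)]


def unitary(h, theta):
    """Return exp(i * theta * h) for a Hermitian generator h."""
    w, v = np.linalg.eigh(h)
    return (v * np.exp(1j * theta * w)) @ v.conj().T


def reduce_to_a(rho):
    """Trace out the second qubit of a two-qubit density matrix."""
    return np.einsum("ibjb->ij", rho.reshape(2, 2, 2, 2))


def hs_distance(a, b):
    """Hilbert-Schmidt (Frobenius) distance between two matrices."""
    return float(np.linalg.norm(a - b))


def anneal(target, steps=3000, t0=0.1):
    """Return the smallest distance found and the corresponding reduced state."""
    rho = np.zeros((4, 4), dtype=complex)
    rho[0, 0] = 1.0  # initial state |00><00|
    d = hs_distance(reduce_to_a(rho), target)
    best_d, best_rho = d, rho
    for n in range(steps):
        temp = t0 * (1 - n / steps) + 1e-9  # linear cooling schedule
        h = POOL[rng.integers(len(POOL))]
        u = unitary(h, rng.normal(scale=0.3))
        trial = u @ rho @ u.conj().T
        d_trial = hs_distance(reduce_to_a(trial), target)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if d_trial < d or rng.random() < np.exp(-(d_trial - d) / temp):
            rho, d = trial, d_trial
            if d < best_d:
                best_d, best_rho = d, rho
    return best_d, reduce_to_a(best_rho)


valid_target = np.diag([0.7, 0.3]).astype(complex)     # a legitimate one-qubit state
invalid_target = np.diag([1.2, -0.2]).astype(complex)  # unit trace, negative eigenvalue
print("valid target:  ", anneal(valid_target)[0])
print("invalid target:", anneal(invalid_target)[0])
```

For the invalid target the distance cannot drop below about 0.283, the distance to the nearest physical one-qubit state, whatever the optimizer does. That floor is the toy counterpart of a target that fails the representability test, and the returned reduced state plays the role of the corrected matrix.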

The Scientist's Toolkit: Key Research Reagents & Materials

The table below lists essential computational "reagents" for implementing the featured ADAPT-VQA protocol or working on the N-representability problem in general.

| Item Name | Function / Definition | Example / Role in Research |
|---|---|---|
| p-body Reduced Density Matrix (p-RDM) | A matrix describing the p-particle statistics of an N-particle system, obtained by "tracing out" (N-p) particles from the full density matrix. | The 2-RDM is the central object of study, as it is sufficient to compute the energy of systems with pairwise interactions [2]. |
| N-body Wavefunction | The full, many-body quantum state of a system of N particles. | The object from which a physically valid (N-representable) p-RDM must be derived. The ADAPT protocol starts with an initial guess for this state [2]. |
| Operator Pool | A predefined set of anti-Hermitian operators used to build the unitary ansatz in the ADAPT algorithm. | For quantum chemistry, the pool typically includes spin-adapted generalized single and double excitation operators to efficiently explore the space of possible states [2]. |
| Hilbert-Schmidt Distance | A measure of the distance between two matrices. Serves as the cost function in the ADAPT-VQA protocol. | Used to quantify how close the evolved p-RDM is to the target p-body matrix. A distance of zero confirms the target is N-representable [2]. |
| Simulated Annealing Optimizer | A classical global optimization algorithm that mimics the annealing process in metallurgy. | Used as the classical stochastic optimizer in the hybrid ADAPT-VQA to reduce the risk of stalling in local minima or on barren plateaus during the parameter search [2]. |

The Pauli Exclusion Principle and its Modern Reformulation as a Kinematic Constraint

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental connection between the Pauli Exclusion Principle (PEP) and the N-representability problem in our reduced density matrix (RDM) research?

The Pauli Exclusion Principle is not merely a rule about quantum numbers; it is a profound kinematic constraint on the permissible wave functions for identical fermions. It asserts that a multi-fermion wave function must be antisymmetric under particle exchange, belonging to a one-dimensional representation of the permutation group [4]. The N-representability problem is the task of determining whether a given p-body reduced density matrix (p-RDM) could have originated from a physically valid, antisymmetric N-fermion wave function [1]. Therefore, the PEP is the fundamental physical principle that directly dictates the essential, non-trivial constraints that must be solved for in the N-representability problem. Without the PEP, the set of valid N-body density matrices would be vastly larger.

FAQ 2: In quantum chemistry simulations for drug discovery, how does the PEP manifest computationally, and what are the consequences of an N-representability violation?

Computationally, the PEP ensures that the 1- and 2-body RDMs used in methods like Variational Quantum Eigensolver (VQE) simulations correspond to a physical N-electron system [1] [5]. A violation of N-representability means your computed p-RDM is non-physical. The consequences are severe:

- Inaccurate Energy Predictions: The ground state energy calculated from a non-N-representable RDM can fall below the true physical ground state energy, leading to unreliable results.

- Faulty Molecular Properties: Subsequent calculations of properties, such as dipole moments or reaction barrier heights (critical in drug design), will be erroneous [5].

- Simulation Failure: In the context of covalent drug binding simulations, this could lead to a fundamental misunderstanding of the drug-target interaction energetics [5].

FAQ 3: Our hybrid quantum-classical pipeline for calculating Gibbs free energy profiles is producing anomalously low energy barriers. Could this be linked to an N-representability issue in the quantum subroutine?

Yes, this is a distinct possibility. The variational freedom in algorithms like VQE can sometimes lead to convergence on a state that yields a lower energy by violating physical constraints, including those imposed by the PEP on the 2-RDM [1] [5]. You should:

- Implement an N-representability Check: Use a hybrid quantum-stochastic algorithm, as proposed in recent research, to verify if the 2-RDM produced by your quantum circuit is N-representable [1].

- Analyze the Active Space: Ensure your active space approximation in the quantum subroutine is well-chosen and that the ansatz can adequately represent the strong electron correlations without sacrificing antisymmetry [5].

FAQ 4: Are there experimental limits on possible violations of the Pauli Exclusion Principle, and what do they imply for computational models?

Yes, extremely stringent experimental limits exist. The VIP and VIP2 experiments, which search for PEP-violating X-ray transitions in copper, have steadily tightened the bound [6]. VIP set an upper limit on the violation probability of β²/2 < 4.7 × 10⁻²⁹, and VIP2 targets a limit on the order of 10⁻³¹ (see Table 1) [6]. This empirical confirmation means that any computational model that inherently or accidentally permits even small violations of the PEP is modeling a non-physical system. It reinforces that the strict enforcement of antisymmetry and the spin-statistics connection in our RDM-based computational frameworks is not just a mathematical convenience but a reflection of a fundamental law of nature.

Troubleshooting Guides

Issue 1: Non-Physical Energies in VQE Simulations

Problem: Your VQE simulation for a molecule converges to an energy significantly below the known ground state, or the energy fails to converge to a stable value.

Diagnosis: This is a classic symptom of the N-representability problem. The quantum circuit may be producing a 2-RDM that does not correspond to any physical N-electron wave function, violating the constraints imposed by the PEP [1].

Resolution:

- Step 1: Extract the 2-RDM from your optimized VQE quantum circuit.

- Step 2: Apply a hybrid quantum-stochastic algorithm (e.g., based on adaptive derivative-assembled pseudo-Trotter methods and simulated annealing) to test the N-representability of your 2-RDM [1].

- Step 3: If the 2-RDM is flagged as non-representable, use the same algorithm to iteratively correct it by applying a sequence of unitary evolution operators to steer it towards a physical state [1].

- Step 4: Re-run the VQE optimization with a modified or constrained ansatz to better respect the physical symmetries.

Issue 2: Inaccurate Covalent Bond Energy Profiles

Problem: Calculations of Gibbs free energy profiles for processes involving covalent bond cleavage or formation (e.g., in prodrug activation or inhibitor binding) are inconsistent with experimental data [5].

Diagnosis: The inaccuracy may stem from an inadequate treatment of electron correlation within the active space of your quantum computation, potentially compounded by approximations that poorly handle the antisymmetry of the wave function.

Resolution:

- Step 1: Re-assess your active space selection. For a covalent bond process, ensure the bonding and antibonding orbitals, along with relevant correlated orbitals, are included.

- Step 2: Compare your quantum results against a classically computed Complete Active Space Configuration Interaction (CASCI) energy, which is the exact solution under the active space approximation and serves as a benchmark for the quantum computer's output [5].

- Step 3: Confirm that your solvation model (e.g., ddCOSMO for water) and thermal Gibbs corrections are correctly applied after the quantum energy calculation, as these are critical for realistic drug-design simulations [5].

Experimental Protocols & Data

Protocol: Testing the Pauli Exclusion Principle with Atomic Transitions

This protocol is based on the methodology of the VIP/VIP2 experiments [6].

1. Objective: To search for X-ray emissions that would only occur if an electron could transition into an atomic orbital that is already fully occupied by two electrons of opposite spin, thereby violating the PEP.

2. Principle: Introduce "new" electrons into a metal target (e.g., copper) via a large electric current. If a PEP violation exists with a small probability, these incoming electrons could be radiatively captured into the inner-shell 1s orbital already occupied by two electrons. This anomalous transition produces an X-ray with a slightly shifted energy compared to the characteristic X-rays of the element.

3. Experimental Setup:

- Target: A high-purity copper cylinder.

- Current Source: Capable of injecting high current (e.g., 40-100 A) through the copper target.

- Detection: An array of high-resolution X-ray detectors, such as Silicon Drift Detectors (SDDs), positioned around the target to capture emitted X-rays. SDDs offer high efficiency, good energy resolution, and timing capabilities.

- Shielding: The entire apparatus is housed in an underground laboratory (e.g., LNGS at Gran Sasso) with massive lead and active plastic scintillator shielding to suppress cosmic and environmental background radiation.

4. Procedure:

- Conduct alternating measurement runs with and without the electric current.

- Accumulate X-ray spectra for both conditions over long periods (months to years).

- Use the "no-current" data to characterize the natural X-ray background and the characteristic X-ray lines of copper.

- In the "with-current" data, meticulously search for any excess of events at the energy signature predicted for the violation transition.

5. Data Analysis:

- The absence of a statistically significant peak at the violation energy allows for setting an upper limit on the violation parameter.

- The probability of violation is quantified as β²/2, and the experiment sets an upper bound on this value [6].

Quantitative Data from PEP Tests

Table 1: Historical Upper Limits on Pauli Exclusion Principle Violation Probability for Electrons

| Experiment | Upper Limit (β²/2) | Year | Method |
|---|---|---|---|
| Ramberg & Snow | < 1.7 × 10⁻²⁶ | 1990 | X-ray transition in Cu |
| VIP | < 4.7 × 10⁻²⁹ | ~2014 | X-ray transition in Cu (underground) |
| VIP2 (Projected) | < ~10⁻³¹ | ~2018 onwards | X-ray transition in Cu (upgraded detectors) |

Computational Parameters for Drug Discovery Simulations

Table 2: Key Parameters for Quantum Computing of Molecular Properties in Drug Design [5]

| Parameter | Typical Setting | Purpose & Rationale |
|---|---|---|
| Active Space | 2 electrons / 2 orbitals | A minimal model for covalent bond cleavage; balances physical accuracy with near-term quantum device limitations. |
| Basis Set | 6-311G(d,p) | Provides a balance between computational accuracy and cost for atoms involved in organic molecules and drug compounds. |
| Solvation Model | ddCOSMO (PCM) | Models the solvation effect in the human body, which is critical for realistic pharmacological activity. |
| Quantum Method | VQE with hardware-efficient ansatz | A near-term hybrid algorithm for finding molecular ground states on noisy quantum devices. |
| Classical Benchmark | CASCI / HF | Provides the "exact" solution within the active space and a baseline mean-field solution for comparison. |

Visualization of Concepts and Workflows

Diagram: Workflow for N-representability Check and Correction

Diagram: Theoretical Framework of PEP and N-representability

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential "Reagents" for Computational Research in PEP-Constrained Systems

| Item / Concept | Function / Description | Application in Research |
|---|---|---|
| Antisymmetric Wave Function | The mathematical object describing a system of identical fermions; changes sign upon exchange of any two particles. | The foundational constraint. All valid fermionic RDMs must be derivable from such a wave function. |
| p-body Reduced Density Matrix (p-RDM) | A matrix containing the information about the p-particle correlation functions of an N-body system. | The central object of study in the N-representability problem. The 2-RDM is often the focus as it suffices for computing the energy. |
| Variational Quantum Eigensolver (VQE) | A hybrid quantum-classical algorithm used to find the ground state energy of a molecular system. | The primary tool for quantum computational chemistry on near-term devices, where N-representability issues can arise. |
| Active Space Approximation | A method that reduces the computational complexity of a quantum system by restricting the calculation to a subset of important orbitals and electrons. | Enables the application of quantum computers to molecular problems by focusing on the chemically relevant electrons, as used in prodrug activation studies [5]. |
| Hybrid Quantum-Stochastic Algorithm | An algorithm combining unitary quantum evolution with classical stochastic processes (e.g., simulated annealing). | Used to test and enforce the N-representability of a given p-RDM, independent of the underlying Hamiltonian [1]. |
| Silicon Drift Detector (SDD) | A high-resolution X-ray detector with large area, high efficiency, and timing capabilities. | The key detection technology in modern PEP violation experiments (e.g., VIP2) for capturing anomalous X-rays [6]. |

Frequently Asked Questions

Q1: Why do my calculated natural orbital occupation numbers sometimes fall outside the expected range for a system with a well-defined total spin?

Your calculations might be violating the generalized Pauli exclusion principle adapted for spin symmetry. For a system with a definite total spin S and a degree of mixedness 𝒘, the admissible natural orbital occupation numbers are confined to a specific convex polytope, Σ_{N,S}(𝒘), within the Pauli hypercube [0,2]^d. If your results fall outside this polytope, it indicates that the reduced density matrix is not N-representable for that specific spin sector. You should verify that your computational method explicitly enforces the linear constraints related to the quantum numbers (N, S, M) [7].

Q2: How can I enforce spin symmetry constraints in my variational 2-RDM calculations?

Spin constraints can be enforced by incorporating N-representability conditions derived for the specific spin symmetry into your optimization procedure. A practical method is to use a semidefinite program (SDP), such as in the variational 2-RDM (v2RDM) method, where these conditions are cast as constraints [8]. Furthermore, ensure that the random unitary ensembles used in classical shadow tomography are restricted to those that preserve particle number and spin, which is crucial for efficiently estimating physically relevant observables in molecular systems [8].

Q3: What is the practical impact of ignoring the mixedness of a quantum state in reduced density matrix functional theory?

Ignoring the mixedness (𝒘) of a quantum state can lead to an overestimation of the polytope of admissible natural orbital occupation numbers. The correct, more restrictive polytope Σ_{N,S}(𝒘) is a subset of the one you would calculate for a pure state. Using an incorrect polytope can result in an incomplete characterization of universal interaction functionals in ensemble reduced density matrix functional theory (𝒘-RDMFT) and ensemble density functional theory (EDFT), potentially leading to unphysical results [7].

Q4: My algorithm struggles with the computational complexity of full N-representability conditions. Are there alternatives?

Yes, consider hybrid quantum-stochastic algorithms. One approach uses the ADAPT (adaptive derivative-assembled pseudo-Trotter) method combined with a stochastic simulated annealing process. This method evolves an initial N-body density matrix via unitary operators to make its reduced state approach a target p-body matrix. It effectively replaces the explicit, exponentially complex N-representability conditions and can be used to determine the quality of an alleged RDM and correct it [1] [9].

Troubleshooting Guides

Issue: Suspected Violation of Spin Symmetry Constraints

Symptoms:

- Calculated 1-RDM or 2-RDM yields energies below the true ground state (variational collapse).

- Natural orbital occupation numbers do not satisfy the spectral constraints for the given spin quantum numbers.

- Inconsistencies in expectation values of spin operators (e.g., 𝑺^2, S_z).

Resolution Steps:

- Diagnose: Use a hybrid algorithm (e.g., the ADAPT-based variational quantum algorithm) to check if your alleged p-RDM is N-representable. The algorithm minimizes the Hilbert-Schmidt distance between your RDM and a physically valid, N-representable RDM [9].

- Correct: If the RDM is not representable, employ a variational procedure with enforced constraints.

  - For v2RDM methods: Reformulate your SDP to include the specific linear constraints defining the polytope Σ_{N,S}(𝒘) for your system's N, S, and 𝒘 [7].

  - For shadow tomography: Use an improved estimator within the classical shadow protocol that incorporates N-representability conditions in its optimization constraints, which can enhance performance under a limited shot budget [8].

- Verify: After correction, confirm that the corrected RDM:

  - Yields an energy that does not fall below a trusted reference for the ground state energy (no variational collapse).

  - Respects all relevant symmetries (spin, particle number).

  - Produces natural orbital occupation numbers within the theoretical polytope Σ_{N,S}(𝒘).

Issue: High Sample Variance in RDM Estimation from Quantum Measurements

Symptoms:

- Large statistical errors in estimated RDMs and derived properties (like energy).

- The estimated 2-RDM is not N-representable due to shot noise.

Resolution Steps:

- Constraint Optimization: Use the classical shadow protocol but replace the standard estimator with one that is variationally optimized under N-representability constraints. This projects the noisy estimate onto the set of physically valid RDMs [8].

- Protocol Configuration: Ensure your shadow tomography uses an ensemble of random unitaries that respect the symmetries of your system. For molecular systems, this typically means employing the ensemble of single-particle basis rotations (orbital rotations) that preserve particle number and spin [8].

- Evaluate Savings: In numerical studies, this constrained approach has been shown to reduce the required shot budget by a factor of up to 15 compared to the unoptimized estimator to achieve the same accuracy [8].
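The idea of projecting a noisy estimate onto the physically valid set can be illustrated on the simpler 1-RDM case. The sketch below is a stand-in, not the constrained shadow estimator of [8]. It symmetrizes a noisy fermionic 1-RDM (spin-orbital basis) and replaces its eigenvalues by their Euclidean projection onto the set with occupations in [0, 1] summing to N, found by bisection on a uniform shift. Function names and the noise model are hypothetical.

```python
import numpy as np


def project_occupations(lam, n_particles, iters=100):
    """Project lam onto {0 <= x <= 1, sum(x) = N} by bisection on a uniform shift."""
    lo, hi = lam.min() - 1.0, lam.max()
    for _ in range(iters):
        mu = 0.5 * (lo + hi)
        if np.clip(lam - mu, 0.0, 1.0).sum() > n_particles:
            lo = mu  # too many particles: shift occupations down further
        else:
            hi = mu
    return np.clip(lam - 0.5 * (lo + hi), 0.0, 1.0)


def project_1rdm(noisy, n_particles):
    """Return the nearest (Frobenius norm) Hermitian matrix with valid occupations."""
    herm = 0.5 * (noisy + noisy.conj().T)  # remove the anti-Hermitian noise part
    lam, vec = np.linalg.eigh(herm)
    lam_proj = project_occupations(lam, n_particles)
    return (vec * lam_proj) @ vec.conj().T


# Simulated shot noise on an exact 2-electron, 4-spin-orbital 1-RDM.
rng = np.random.default_rng(1)
exact = np.diag([1.0, 1.0, 0.0, 0.0])
noisy = exact + rng.normal(scale=0.05, size=(4, 4))
fixed = project_1rdm(noisy, 2)
print(np.round(np.linalg.eigvalsh(fixed), 4), round(float(np.trace(fixed).real), 6))
```

For the 2-RDM the valid set has no simple spectral description, which is why the approach in [8] relies on constrained optimization rather than an eigenvalue clip.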

Experimental Protocols & Data

Protocol 1: Solving the One-Body Ensemble N-Representability Problem with Spin

This methodology provides a foundational cornerstone for ensemble reduced density matrix functional theory [7].

- Objective: To derive a comprehensive solution for the one-body ensemble N-representability problem that incorporates spin symmetries (S, M) and a potential degree of mixedness (𝒘) of the N-electron state.

- Key Mathematical Tools: Representation theory, convex analysis, and discrete geometry.

- Procedure:

  - Define the N-fermion Hilbert space with its Peter-Weyl decomposition into symmetry sectors ℋ_N^{(S,M)}.

  - Formally define the symmetry-adapted orbital one-body 𝒘-ensemble N-representability problem.

  - Employ the mathematical tools to demonstrate that the set of admissible 1-RDMs forms a convex polytope, Σ_{N,S}(𝒘), within the Pauli hypercube [0,2]^d.

  - Derive the explicit linear constraints on the natural orbital occupation numbers that define this polytope. These constraints depend linearly on N and S but are independent of the magnetization M and the number of orbitals d.

- Output: A complete characterization of the polytope Σ_{N,S}(𝒘) for arbitrary system sizes and spin quantum numbers.

Protocol 2: Hybrid ADAPT Algorithm for N-Representability Testing and Correction

This protocol offers a Hamiltonian-agnostic method to test and correct alleged RDMs [1] [9].

- Objective: To determine if a given p-body matrix is N-representable and to find a physically valid corrected RDM if it is not.

- Key Components: A parameterized quantum circuit (ansatz), a classical stochastic optimizer (simulated annealing), and the ADAPT method for unitary evolution.

- Procedure:

  - Initialization: Prepare an initial N-body density matrix ρ({θ→}) on a quantum computer or simulator.

  - Cost Function Definition: Define the cost function as the Hilbert-Schmidt distance D between the reduced p-body state ⁽ᵖ⁾ρ({θ→}) and the target p-body matrix ⁽ᵖ⁾ρ_t.

  - Stochastic Optimization: Use a simulated annealing process to adjust the parameters {θ→}.

  - State Evolution: At each optimization step, apply a sequence of unitary evolution operators (constructed using the ADAPT method) to ρ({θ→}) to steer its reduced state ⁽ᵖ⁾ρ({θ→}) towards the target ⁽ᵖ⁾ρ_t.

  - Termination: The algorithm terminates when the distance D is minimized. A small final distance suggests the target is N-representable; a large distance indicates it is not, and the final ⁽ᵖ⁾ρ({θ→}) serves as a corrected, physically valid RDM.

- Output: A qualified decision on the N-representability of the target matrix and a corrected p-RDM.

The following workflow diagram illustrates the hybrid ADAPT algorithm process for testing and correcting a reduced density matrix.

The Scientist's Toolkit: Key Research Reagents & Materials

The table below lists essential conceptual and computational "reagents" for working with spin symmetries in the N-representability problem.

| Research Reagent | Function & Explanation |
|---|---|
| Convex Polytope Σ_{N,S}(𝒘) | The foundational geometric object [7]. Defines all possible, physically admissible 1-RDMs for a system with given particle number N, total spin S, and mixedness 𝒘. |
| SU(2) Casimir Operator 𝑺^2 | The mathematical operator [7] used to define and fix the total spin quantum number S of the quantum state, ensuring spin symmetry in the N-body wave function. |
| Classical Shadow Tomography | A measurement protocol [8]. Enables efficient learning of quantum state properties (like RDMs) from a limited number of measurements, which can be post-processed with constraints. |
| Semidefinite Programming (SDP) | An optimization algorithm [8]. The computational engine for the v2RDM method, used to minimize energy subject to N-representability constraints (like those from Σ_{N,S}(𝒘)). |
| Hybrid ADAPT-VQA | A hybrid quantum-classical algorithm [1] [9]. Used to test and enforce N-representability without requiring a full set of explicit conditions, bypassing computational complexity. |

The Role of Ensemble Mixedness in Realistic Quantum States

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between a pure state and a mixed state in quantum mechanics? A pure state represents a quantum system that can be described by a single state vector \( |\psi\rangle \), meaning we have maximum knowledge about the system. In contrast, a mixed state describes a statistical ensemble of pure states, meaning we have incomplete knowledge about the system. Pure states can be represented by state vectors, while mixed states require density matrices for their mathematical description [10]. The key operational difference is that for a pure state, \( \text{Tr}(\rho^2) = 1 \), while for a mixed state, \( \text{Tr}(\rho^2) < 1 \) [11].

2. How does ensemble mixedness relate to the N-representability problem? The N-representability problem involves determining whether a given p-body reduced density matrix (p-RDM) can be obtained by contracting an N-body density matrix [2]. Ensemble mixedness is central to this problem because a p-RDM must correspond to a physically realizable ensemble of quantum states. If the p-RDM violates N-representability conditions, it may lead to unphysical results such as energies below the true ground state [2]. The hybrid ADAPT algorithm helps address this by evolving an initial N-body density matrix so that its p-RDM approaches a target p-body matrix while remaining physically valid [2].

3. What practical issues occur when working with non-N-representable density matrices? Using non-N-representable density matrices can cause variational approaches to collapse, potentially yielding energies below the true ground state [2]. This manifests in simulations as unphysical results, convergence failures, or incorrect prediction of molecular properties. For researchers in drug development, this could lead to inaccurate molecular interaction predictions or faulty drug candidate assessments.

4. How can I verify if my reduced density matrix is N-representable? Start with the basic necessary checks: the matrix should be Hermitian, positive semidefinite, and correctly normalized. The purity \( \text{Tr}(\rho^2) \), which equals 1 for pure states and is less than 1 for mixed states, tells you whether a density matrix is pure or mixed, but it does not by itself establish N-representability [11]. For a more robust approach, the hybrid ADAPT quantum-stochastic algorithm can determine whether a given p-body matrix is N-representable by evolving an initial N-body density matrix toward the target p-body matrix using unitary evolution operators and stochastic sampling [2]. The Hilbert-Schmidt distance between the reduced state of the evolved N-body state and the target matrix serves as a measure of N-representability quality [2].

5. What is the significance of reduced density matrices in quantum simulations for drug development? Reduced density matrices (RDMs) are crucial in quantum simulations for drug development because they allow researchers to focus on specific subsystems (such as active sites in enzyme-drug interactions) while ignoring irrelevant parts of the system [2] [11]. This makes complex molecular simulations computationally tractable. The 2-RDM is particularly important since it contains all necessary information to calculate the energy of pairwise interacting systems like molecular electrons [2].
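The purity criterion and the basic validity checks mentioned in the FAQs above are easy to evaluate numerically. The following is a minimal sketch for a density matrix normalized to unit trace. The function name, the returned dictionary keys, and the tolerance are hypothetical choices for illustration. Passing these checks is necessary for a physical state but is not a test of N-representability.

```python
import numpy as np


def density_matrix_report(rho, tol=1e-10):
    """Return basic validity flags and the purity Tr(rho^2) of a density matrix."""
    rho = np.asarray(rho, dtype=complex)
    eig = np.linalg.eigvalsh(0.5 * (rho + rho.conj().T))
    return {
        "hermitian": bool(np.allclose(rho, rho.conj().T, atol=tol)),
        "unit_trace": bool(abs(np.trace(rho) - 1) < tol),
        "positive": bool(eig.min() > -tol),
        "purity": float(np.real(np.trace(rho @ rho))),
    }


pure = np.array([[1, 0], [0, 0]], dtype=complex)  # |0><0|
mixed = 0.5 * np.eye(2, dtype=complex)            # maximally mixed qubit
print(density_matrix_report(pure))
print(density_matrix_report(mixed))
```

For a single qubit the purity ranges from 0.5 (maximally mixed) to 1 (pure); for a d-dimensional system the lower bound is 1/d.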

Troubleshooting Guide

Issue 1: Unphysical Simulation Results

Problem: Your quantum simulation returns energies below the true ground state or other unphysical results.

Diagnosis: This often indicates N-representability violations in your reduced density matrix [2].

Solution:

- Implement the ADAPT-VQA algorithm to correct the alleged p-RDM [2]:

- Initialize with an independent-particle-model state (\rho_0)

- Generate trial states by applying unitary transformations: (\rho_n(\vec{\theta}_n) = U_n(\vec{\theta}_n)\rho_0 U_n^\dagger(\vec{\theta}_n))

- Use stochastic optimization to minimize the Hilbert-Schmidt distance (D(^p\rho({\vec{\theta}}), ^p\rho_t)) between your physical reduced state and target matrix

- Progressively decrease the acceptance probability using simulated annealing to avoid barren plateaus

- Validate your corrected RDM by checking that (\text{Tr}(\rho^2) \leq 1) and that all eigenvalues are non-negative [11].

Issue 2: Difficulty Visualizing Mixed States

Problem: Understanding and visualizing the structure of mixed quantum states.

Diagnosis: Unlike pure states, which lie on the surface of the Bloch sphere, mixed states correspond to points in its interior [12].

Solution:

- Use density matrix representations rather than wavefunction approaches

- For single qubits, use the Bloch sphere visualization where:

- Pure states lie on the surface

- Mixed states lie in the interior

- Maximally mixed states reside at the center

- Analyze your state using multiple bases (z-basis, x-basis, y-basis) to fully characterize its properties [12]
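The surface/interior picture above can be made concrete by computing the Bloch vector of a single-qubit density matrix. The following is a minimal sketch using NumPy; the helper name `bloch_vector` and the example states are illustrative assumptions, not part of any cited library.

```python
import numpy as np

# Pauli matrices: measuring in the x-, y- and z-bases gives the three components.
X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def bloch_vector(rho):
    """Return (r_x, r_y, r_z) with r_k = Tr(rho sigma_k) for a 2x2 density matrix."""
    return np.array([np.real(np.trace(rho @ P)) for P in (X, Y, Z)])

# Illustrative states (assumptions for this sketch).
plus = np.array([1, 1], dtype=complex) / np.sqrt(2)
pure = np.outer(plus, plus.conj())            # |+><+|, on the surface
mixed = 0.5 * pure + 0.5 * np.eye(2) / 2      # partially mixed, in the interior
center = np.eye(2, dtype=complex) / 2         # maximally mixed, at the center

r_pure = np.linalg.norm(bloch_vector(pure))
r_mixed = np.linalg.norm(bloch_vector(mixed))
r_center = np.linalg.norm(bloch_vector(center))
```

For a single qubit the purity follows from the Bloch vector length as Tr(ρ²) = (1 + |r|²)/2, so |r| = 1 corresponds to a pure state and |r| < 1 to a mixed one.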

Issue 3: Reduced Density Matrix Calculation Errors

Problem: Incorrect computation of reduced density matrices from full quantum states.

Diagnosis: The reduced density matrix is obtained through partial trace, which requires careful implementation [11].

Solution:

- For a bipartite system with density matrix (\rho_{AB}), the reduced density matrix for subsystem A is: (\rho_A = \text{Tr}_B[\rho_{AB}] = \sum_n (\langle n|_B \otimes I_A) \rho_{AB} (|n\rangle_B \otimes I_A)) where (\{|n\rangle_B\}) forms an orthonormal basis for subsystem B [11].

- Verify your calculation for Bell states, which should yield: (\tilde{\rho} = \frac{1}{2}(|\downarrow\rangle\langle\downarrow| + |\uparrow\rangle\langle\uparrow|)) with (\text{Tr}(\tilde{\rho}^2) = \frac{1}{2} < 1) [11]

Issue 4: Quantum Simulator Selection for Mixed States

Problem: Choosing the appropriate quantum simulator for mixed state evolution.

Diagnosis: Pure state simulators cannot properly handle truly mixed states that don't preserve purity [13].

Solution:

- Use appropriate simulators:

- For pure state evolution: cirq.Simulator

- For mixed state evolution: cirq.DensityMatrixSimulator [13]

- For noisy evolution that doesn't preserve purity, ensure you're using a mixed state simulator rather than a pure state simulator [13].
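To see why noisy evolution needs a density-matrix description, it can help to apply a noise channel by hand and watch the purity drop. The sketch below uses plain NumPy rather than any simulator API; the function names and the error probability `p = 0.3` are assumptions chosen for illustration.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)

def depolarize(rho, p):
    """Single-qubit depolarizing channel:
    rho -> (1 - p) rho + (p / 3) (X rho X + Y rho Y + Z rho Z)."""
    return (1 - p) * rho + (p / 3) * sum(P @ rho @ P.conj().T for P in (X, Y, Z))

def purity(rho):
    """Tr(rho^2): 1 for pure states, < 1 for mixed states."""
    return float(np.real(np.trace(rho @ rho)))

rho_in = np.array([[1, 0], [0, 0]], dtype=complex)   # pure state |0><0|
rho_out = depolarize(rho_in, 0.3)                    # illustrative noise strength
```

Here the input has purity 1 while the output is diag(0.8, 0.2) with purity 0.68, so no single state vector can represent it. This is the situation in which a state-vector simulator is no longer adequate.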

Experimental Protocols

Protocol 1: Implementing the Hybrid ADAPT Algorithm for N-representability

Purpose: To determine the N-representability of a given p-body reduced density matrix and correct it if necessary [2].

Methodology:

Step-by-Step Procedure:

- Initialize with a physically valid initial N-body density matrix (\rho_0), typically an independent-particle-model state [2].

- Construct the unitary ansatz using the prescription: [U_n(\vec{\theta}_n) = A_n(\vec{\theta}_n) U_{n-1}(\vec{\theta}_{n-1})] where (A_n(\vec{\theta}_n) = \exp\left(\vec{P} \cdot \vec{\theta}_n\right)) with (\vec{P}) being a vector of anti-Hermitian operators from a predefined pool [2].

- Evaluate the Hilbert-Schmidt distance on a quantum computer: [D_n = \text{Tr}\left[\left(^p\rho_n(\vec{\theta}_n) - ^p\rho_t\right)^2\right]] [2]

- Apply stochastic optimization using simulated annealing with gradually decreasing temperature to minimize (D_n) [2].

- Terminate the algorithm when (D_n - D_{n-1} \leq \epsilon) for a predetermined number of consecutive steps [2].

Expected Outcomes: The algorithm produces a sequence of p-body reduced states that progressively approach the target p-body matrix, with the final distance (D_L) providing a quantitative measure of the N-representability quality of the original matrix [2].
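The loop described in this protocol can be imitated classically on a toy problem. The sketch below is not the algorithm of [2]: it assumes a two-qubit system instead of a fermionic one, a fixed two-operator pool instead of adaptive operator selection, and a hypothetical target reduced state diag(0.7, 0.3). It only illustrates how simulated annealing over unitary parameters can drive the Hilbert-Schmidt distance toward zero.

```python
import numpy as np

X = np.array([[0, 1], [1, 0]], dtype=complex)
Y = np.array([[0, -1j], [1j, 0]], dtype=complex)

def exp_pauli(theta, G):
    """exp(-i theta G) for a Hermitian G with G @ G = I (true for Pauli strings)."""
    return np.cos(theta) * np.eye(G.shape[0]) - 1j * np.sin(theta) * G

def reduced_first_qubit(rho):
    """Partial trace over the second qubit of a 4x4 density matrix."""
    return np.einsum('ajbj->ab', rho.reshape(2, 2, 2, 2))

def hs_distance(a, b):
    """Hilbert-Schmidt distance Tr[(a - b)^2] for Hermitian a, b."""
    d = a - b
    return float(np.real(np.trace(d @ d)))

def cost(thetas, pool, rho0, target):
    U = np.eye(4, dtype=complex)
    for theta, G in zip(thetas, pool):
        U = exp_pauli(theta, G) @ U          # U_n = A_n U_{n-1}
    rho = U @ rho0 @ U.conj().T
    return hs_distance(reduced_first_qubit(rho), target)

def anneal(pool, rho0, target, steps=3000, t0=0.1, cooling=0.998, seed=0):
    """Metropolis-style simulated annealing with a geometric cooling schedule."""
    rng = np.random.default_rng(seed)
    thetas = np.zeros(len(pool))
    current = cost(thetas, pool, rho0, target)
    best_thetas, best = thetas.copy(), current
    temp = t0
    for _ in range(steps):
        trial = thetas + rng.normal(scale=0.1, size=thetas.shape)
        d = cost(trial, pool, rho0, target)
        # Always accept improvements; accept worse moves with Boltzmann probability.
        if d < current or rng.random() < np.exp(-(d - current) / temp):
            thetas, current = trial, d
            if current < best:
                best_thetas, best = thetas.copy(), current
        temp *= cooling
    return best_thetas, best

# Toy setup (all values are assumptions for illustration).
pool = [np.kron(Y, X), np.kron(X, Y)]                # Hermitian generators
rho0 = np.zeros((4, 4), dtype=complex)
rho0[0, 0] = 1.0                                     # product state |00><00|
target = np.diag([0.7, 0.3]).astype(complex)         # hypothetical target 1-qubit state
thetas, best = anneal(pool, rho0, target)
```

In this toy setting the target is reachable, so the best distance found should be close to zero. For a target that no physical state reduces to, the final distance would stay bounded away from zero, which is the sense in which the final distance measures representability.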

Protocol 2: Calculating and Verifying Reduced Density Matrices

Purpose: To correctly compute reduced density matrices and verify their physical validity.

Methodology:

Step-by-Step Procedure:

- Start with the full quantum state (|\psi\rangle) for pure states or density matrix (\rho) for mixed states.

- Construct the full density matrix: (\rho = |\psi\rangle\langle\psi|) for pure states.

- Perform partial trace over the degrees of freedom you want to eliminate: [\rho_A = \text{Tr}_B(\rho) = \sum_{\alpha} (\langle\alpha|_B \otimes I_A) \rho (|\alpha\rangle_B \otimes I_A)] where (\{|\alpha\rangle_B\}) forms a complete orthonormal basis for subsystem B [11].

- Verify the physicality of the resulting reduced density matrix:

- Check that (\text{Tr}(\rho_A) = 1)

- Confirm (\text{Tr}(\rho_A^2) \leq 1)

- Ensure all eigenvalues are non-negative [11]

Validation Example: For the Bell state (|\psi\rangle = \frac{1}{\sqrt{2}}(|00\rangle + |11\rangle)), the reduced density matrix for either qubit should be: [\tilde{\rho} = \frac{1}{2}(|0\rangle\langle 0| + |1\rangle\langle 1|) = \frac{1}{2}\begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix}] with (\text{Tr}(\tilde{\rho}) = 1) and (\text{Tr}(\tilde{\rho}^2) = \frac{1}{2}) [11].
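The procedure and the Bell-state check above can be reproduced numerically. This is a minimal NumPy sketch; the function names `partial_trace_b` and `is_physical` are illustrative, and subsystem A is assumed to be the first tensor factor.

```python
import numpy as np

def partial_trace_b(rho, dim_a, dim_b):
    """Trace out subsystem B from a density matrix on A (x) B (A is the first factor)."""
    return np.einsum('ajbj->ab', rho.reshape(dim_a, dim_b, dim_a, dim_b))

def is_physical(rho, tol=1e-10):
    """Check Hermiticity, unit trace, non-negative eigenvalues and Tr(rho^2) <= 1."""
    hermitian = np.allclose(rho, rho.conj().T, atol=tol)
    unit_trace = abs(np.real(np.trace(rho)) - 1.0) < tol
    evals = np.linalg.eigvalsh((rho + rho.conj().T) / 2)
    purity_ok = np.real(np.trace(rho @ rho)) <= 1.0 + tol
    return bool(hermitian and unit_trace and evals.min() > -tol and purity_ok)

# Bell state (|00> + |11>) / sqrt(2)
psi = np.zeros(4, dtype=complex)
psi[0] = psi[3] = 1 / np.sqrt(2)
rho_full = np.outer(psi, psi.conj())

rho_a = partial_trace_b(rho_full, 2, 2)
purity_a = float(np.real(np.trace(rho_a @ rho_a)))
```

The reduced state comes out as half the identity with purity 1/2, matching the validation example, while the full two-qubit state remains pure.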

Quantitative Data Tables

Table 1: N-representability Conditions for Reduced Density Matrices

| Condition Type | Mathematical Expression | Physical Interpretation | Validation Method |

|---|---|---|---|

| Trace Condition | (\text{Tr}(^p\rho) = 1) | Conservation of probability | Direct calculation |

| Positivity | (^p\rho \succeq 0) (all eigenvalues ≥ 0) | Physical probabilities | Eigenvalue decomposition |

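| Pure State N-representability | (^p\rho) is the contraction of an N-body state (\rho) with (\text{Tr}(\rho^2) = 1) | Parent N-body state is pure | Hilbert-Schmidt distance minimization [2] |

| Ensemble N-representability | (^p\rho) is the contraction of an N-body state (\rho) with (\text{Tr}(\rho^2) \leq 1) | Parent N-body state may be a statistical mixture | Hybrid ADAPT algorithm [2] |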

| Contraction Consistency | (^p\rho) derivable from (^q\rho) (q>p) by partial trace | Hierarchical consistency | Iterative contraction check |

Table 2: Comparison of Quantum Simulators for Mixed State Research

| Simulator Type | Suitable for Mixed States | Key Features | Limitations | Example Tools |

|---|---|---|---|---|

| Pure State Simulator | No (only purity-preserving evolution) | Tracks complete state vector | Cannot handle true mixed states | cirq.Simulator [13] |

| Density Matrix Simulator | Yes | Directly simulates density matrix evolution | Higher computational cost | cirq.DensityMatrixSimulator [13] |

| State Vector Simulator | No | High precision for small systems | Exponential resource scaling | IBM Qiskit Statevector [14] |

| Tensor Network Simulator | Yes | Efficient for larger systems with limited entanglement | Accuracy depends on bond dimension | Various research codes |

| Noise Simulator | Yes | Models realistic noisy environments | Requires accurate noise models | IBM Qiskit Noise [14] |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Quantum Simulations | Application in N-representability |

|---|---|---|

| ADAPT-VQA Algorithm | Hybrid quantum-stochastic algorithm for evolving density matrices | Corrects non-N-representable matrices [2] |

| Fermionic Operator Pool | Set of antihermitian operators for constructing unitary ansatz | Ensures proper symmetry in electronic structure problems [2] |

| Simulated Annealing Optimizer | Classical stochastic global search algorithm | Avoids barren plateaus in parameter optimization [2] |

| Density Matrix Simulator | Quantum simulator that handles mixed state evolution | Properly models statistical mixtures [13] |

| Hilbert-Schmidt Distance Metric | Measures distance between quantum states | Quantifies N-representability quality [2] |

| Partial Trace Operation | Mathematical tool for obtaining reduced density matrices | Calculates p-RDMs from N-body states [11] |

| OpenFermion/PySCF | Software libraries for quantum chemistry integrals | Provides molecular Hamiltonians for testing [2] |

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental N-representability problem for orbital occupancies? The N-representability problem involves determining whether a given one-body reduced density matrix (1RDM) describes a physically valid system of N electrons. A 1RDM contains the expected occupation numbers, ( n_i ), of a set of orbitals ( \varphi_i ). The foundational Pauli exclusion principle dictates that each orbital occupancy must lie between 0 and 2, forming a "Pauli hypercube" of possible values, ( [0,2]^d ), for a d-orbital system [7]. However, this is only a necessary condition; a 1RDM must also originate from an N-electron quantum state, making it "N-representable" [2].

FAQ 2: How does spin symmetry refine the admissible set of orbital occupancies? When the N-electron quantum state possesses definite total spin ( S ) and magnetization ( M ) quantum numbers, the set of admissible orbital occupation vectors becomes more restricted. The occupancies are no longer confined merely to the Pauli hypercube but to a specific convex polytope, denoted ( \Sigma_{N,S}(\boldsymbol{w}) ), within that hypercube. This polytope is defined by a set of linear constraints on the natural orbital occupation numbers. Notably, these constraints are independent of the magnetization ( M ) and the number of orbitals ( d ), depending linearly only on the number of electrons ( N ) and the total spin ( S ) [7] [15].

FAQ 3: What role does the concept of a convex polytope play in this context? A convex polytope provides the precise geometric structure for the set of all admissible orbital occupation vectors. The solution to the one-body ensemble N-representability problem, which accounts for spin symmetry and a potential degree of mixedness ( \boldsymbol{w} ) in the quantum state, is exactly this convex polytope, ( \Sigma_{N,S}(\boldsymbol{w}) \subset [0,2]^d ) [7]. The "vertices" of this polytope correspond to the most extreme allowable combinations of orbital occupations, and all physically valid occupation vectors lie within this shape.

FAQ 4: Why is solving this refined N-representability problem important for computational methods? A comprehensive solution to the spin-symmetry-adapted N-representability problem provides the rigorous mathematical domain for universal functionals in ensemble density functional theory (EDFT) and ensemble one-particle reduced density matrix functional theory (ensemble RDMFT) [7]. Knowing the precise boundaries of the convex polytope prevents variational minimization procedures from searching for solutions in unphysical regions of the parameter space, which could lead to collapsed energies below the true ground state [2]. This is a crucial cornerstone for developing accurate methods to study excited states and strongly correlated quantum systems [7] [2].
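As a concrete illustration of the Pauli hypercube from FAQ 1, the sketch below extracts natural occupation numbers from a 1RDM and tests them against ( [0,2]^d ) and the electron count. It assumes a spin-summed 1RDM expressed in an orthonormal orbital basis and normalized to N; the example matrices are invented for illustration. It does not test the spin-adapted polytope constraints of [7], which are stricter.

```python
import numpy as np

def natural_occupations(gamma):
    """Eigenvalues of the (symmetrized) 1RDM, sorted in descending order."""
    occ = np.linalg.eigvalsh((gamma + gamma.conj().T) / 2)
    return occ[::-1]

def satisfies_pauli(gamma, n_electrons, tol=1e-8):
    """Necessary conditions only: 0 <= n_i <= 2 and sum_i n_i = N."""
    occ = natural_occupations(gamma)
    in_hypercube = occ.min() >= -tol and occ.max() <= 2 + tol
    right_count = abs(occ.sum() - n_electrons) < tol
    return bool(in_hypercube and right_count)

# Hypothetical 4-orbital, 4-electron examples.
gamma_ok = np.diag([1.9, 1.6, 0.4, 0.1])
gamma_bad = np.diag([2.3, 1.5, 0.2, 0.0])   # one occupancy exceeds 2

ok = satisfies_pauli(gamma_ok, 4)
bad = satisfies_pauli(gamma_bad, 4)
```

A matrix that fails this test is certainly not N-representable. One that passes may still violate the spin-adapted constraints discussed in FAQ 2.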

Troubleshooting Guides

Problem 1: Suspected N-representability violation in a computed 1RDM.

- Symptoms:

- The variational energy minimization collapses to an unphysically low energy.

- The calculated natural orbital occupation numbers fall outside the expected convex polytope ( \Sigma_{N,S}(\boldsymbol{w}) ) for the given ( N ) and ( S ).

- Solutions:

- Polytope Constraint Check: Calculate the linear constraints defining the polytope ( \Sigma_{N,S}(\boldsymbol{w}) ) for your specific ( N ) and ( S ) values. Systematically verify that your computed occupation number vector satisfies all these constraints [7].

- Hybrid Algorithm Correction: Employ a hybrid quantum-stochastic algorithm, such as the ADAPT-VQA (Adaptive Derivative-assembled Pseudo-Trotter Variational Quantum Algorithm). This method can evolve an initial, physical N-body density matrix so that its reduced state (1RDM) approaches your target 1RDM, effectively correcting it to the closest N-representable matrix [2] [1].

Problem 2: Difficulty in visualizing or generating the constraint polytope for a given (N, S).

- Symptoms:

- Inability to determine the specific linear inequalities that define ( \Sigma_{N,S}(\boldsymbol{w}) ).

- Confusion about the polytope's structure and its vertices.

- Solutions:

- Leverage Generalized Solution: Recent work provides a general method to calculate these linear constraints for arbitrary system sizes ( N ) and spin quantum numbers ( S ) [7]. The dependence is linear, making the calculation tractable.

- Refer to Explicit Examples: Consult published works that include explicit computations and examples of these constraints for specific ( (N, S) ) combinations to build intuition [7].

Problem 3: Handling systems without definite spin or with mixed states.

- Symptoms:

- The quantum state of interest is an ensemble (mixed state) characterized by a statistical vector ( \boldsymbol{w} ), not a pure state with definite ( S ).

- The state does not have a well-defined total spin ( S ).

- Solutions:

- Incorporate Mixedness: The polytope definition ( \Sigma_{N,S}(\boldsymbol{w}) ) explicitly accounts for the degree of mixedness ( \boldsymbol{w} ) of the N-electron state. Ensure you are using the correct polytope for your ensemble state [7].

- Pure State Embedding: For transition density matrices, one practical approach is to embed a p-body transition RDM of an N-particle system into a (p+1)-body RDM of an (N+1)-particle system. This allows the application of pure-state N-representability techniques and algorithms [16].

Experimental Protocols & Workflows

Protocol 1: Validating 1RDM N-representability via the ADAPT-VQA

Purpose: To determine if a given one-body reduced density matrix (1RDM) is N-representable and to correct it if it is not.

Principle: This hybrid quantum-stochastic algorithm minimizes the Hilbert-Schmidt distance between a target 1RDM (the alleged RDM) and the reduced state of a parametrized N-body density matrix. If the distance can be driven to zero, the target is N-representable [2] [1].

Workflow:

- Initialization: Prepare an initial N-body density matrix, ( \rho_0 ), typically an independent-particle-model state (e.g., a Slater determinant) [2].

- Iterative Ansatz Construction: For each iteration step ( n ):

- Unitary Expansion: Apply a parametrized unitary transformation to the current state: ( \rho_n(\vec{\theta}_n) = A_n(\vec{\theta}_n) \rho_{n-1} A_n^\dagger(\vec{\theta}_n) ). The operator ( A_n(\vec{\theta}_n) = \exp(P_n \cdot \vec{\theta}_n) ) is built from a pool of anti-Hermitian operators (e.g., fermionic excitation operators) [2].

- Quantum Calculation: On a quantum computer, compute the 1RDM, ( ^1\rho_n ), from ( \rho_n ) and evaluate the cost function, the Hilbert-Schmidt distance ( D_n = \text{Tr}[(^1\rho_n - ^1\rho_t)^2] ), where ( ^1\rho_t ) is the target 1RDM [2].

- Classical Stochastic Optimization: A classical stochastic optimizer (e.g., simulated annealing) adjusts the parameters ( \vec{\theta}_n ) to minimize ( D_n ). The new ansatz is accepted with a probability based on a decreasing temperature schedule [2].

- Convergence Check: The algorithm terminates when the change in distance ( D_n - D_{n-1} ) is less than a predefined precision ( \epsilon ) for a number of consecutive steps. The final distance ( D_L ) indicates the quality of the correction [2].

Diagram 1: ADAPT-VQA workflow for 1RDM correction.

Protocol 2: Determining the Convex Polytope Σ_N,S(w) for a System

Purpose: To derive the linear constraints that define the set of all admissible natural orbital occupation numbers for an N-electron system with total spin S.

Principle: Using tools from representation theory, convex analysis, and discrete geometry, the problem can be solved generally. The constraints are linear in the occupation numbers and independent of the number of orbitals d and magnetization M [7].

Workflow:

- System Characterization: Identify the fundamental parameters of the system: the number of electrons ( N ), the total spin quantum number ( S ), and the ensemble mixedness vector ( \boldsymbol{w} ).

- Mathematical Construction: Apply the general solution framework:

- Representation Theory: Decompose the N-fermion Hilbert space into spin sectors ( \mathcal{H}_N^{(S,M)} ) [7].

- Convex & Discrete Analysis: Analyze the resulting set of one-body reduced density matrices to find its extreme points. The convex hull of these points defines the polytope, and its boundaries are described by linear inequalities [7].

- Constraint Extraction: Extract the explicit system of linear inequalities of the form ( \sum_i c_i n_i \leq b ) that define the polytope ( \Sigma_{N,S}(\boldsymbol{w}) ). These are the necessary and sufficient conditions for N-representability in this setting [7].
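Once the inequalities are available in the form ( \sum_i c_i n_i \leq b ), checking an occupation vector reduces to a matrix-vector comparison. The sketch below assumes the constraints are stored as rows of a matrix `A` with right-hand sides `b`. Only the Pauli hypercube rows are filled in here, since the explicit spin-adapted rows from [7] are not reproduced in this article; they would simply be appended as further rows.

```python
import numpy as np

def hypercube_constraints(d):
    """Rows encoding n_i <= 2 and -n_i <= 0 for d orbitals (Pauli hypercube only)."""
    A = np.vstack([np.eye(d), -np.eye(d)])
    b = np.concatenate([2 * np.ones(d), np.zeros(d)])
    return A, b

def violated_rows(A, b, n, tol=1e-10):
    """Indices of constraints A[i] @ n <= b[i] that the occupation vector n violates."""
    slack = b - A @ np.asarray(n, dtype=float)
    return [int(i) for i in np.where(slack < -tol)[0]]

A, b = hypercube_constraints(3)
inside = violated_rows(A, b, [1.5, 0.5, 0.0])
outside = violated_rows(A, b, [2.1, -0.1, 0.0])
```

Returning the indices of violated rows, rather than a single yes/no answer, makes it easier to see which facet of the polytope a computed occupation vector has crossed.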

The Scientist's Toolkit: Research Reagent Solutions

Table 1: Essential Computational Tools for N-representability and Polytope Research.

| Tool / Resource | Type | Function / Application | Relevant Context |

|---|---|---|---|

| Spin-Adapted Constraints | Mathematical Framework | Provides the linear inequalities defining the convex polytope ( \Sigma_{N,S}(\boldsymbol{w}) ) of valid orbital occupancies. | Core theoretical solution for the symmetry-adapted one-body N-representability problem [7]. |

| ADAPT-VQA | Hybrid Quantum Algorithm | Corrects non-N-representable matrices by evolving an initial state to minimize distance to a target RDM. | Practical tool for purifying and validating alleged 1RDMs and 2RDMs without a specific Hamiltonian [2] [1]. |

| Fermionic Operator Pool | Algorithmic Component | A predefined set of anti-Hermitian operators (e.g., singles/doubles) used to build unitary ansätze in ADAPT-VQA. | Enables efficient and physically meaningful exploration of the N-body state space during variational evolution [2]. |

| Simulated Annealing | Classical Optimizer | A stochastic global search algorithm used to adjust variational parameters and avoid local minima. | The classical core of the hybrid ADAPT algorithm, responsible for parameter optimization [2]. |

| Peter-Weyl Decomposition | Mathematical Tool | Decomposes the N-fermion Hilbert space into direct sums of spin symmetry sectors ( \mathcal{H}_N^{(S,M)} ). | Foundational for incorporating spin symmetry into the N-representability problem [7]. |

Advanced Methods for Solving and Applying N-Representable Reduced Density Matrices

Analytical Reconstruction of 1-RDMs from Electron Densities in Finite Basis Sets

Core Theoretical Concepts

What is the fundamental relationship between the electron density and the 1-RDM?

The one-electron reduced density matrix (1-RDM), denoted as γ(r, r'), provides a more complete description of a quantum system than the electron density, ρ(r), as it contains both position and momentum space information. The key relationship is that the electron density is the diagonal element of the 1-RDM: ρ(r) = γ(r, r) [17] [18]. Within a finite basis set {f~i~} of K functions, the 1-RDM can be expanded as γ(r, r') = Σ~i,j~ Γ~i,j~ f~i~(r)f~j~(r'), and the corresponding density becomes ρ(r) = Σ~i≤j~ c~ij~ f~i~(r)f~j~(r), where c~ij~ = (2 - δ~ij~)Γ~i,j~ for a symmetric Γ [19].

What does "N-representability" mean in the context of 1-RDM reconstruction?

An N-representable 1-RDM is one that corresponds to a physically meaningful N-electron wavefunction [17]. For a reconstructed 1-RDM to be physically meaningful, it must satisfy specific mathematical constraints. For a closed-shell system, the population matrix P (in an orthogonal basis) must be Hermitian, positive semidefinite (P ≽ 0), and its eigenvalues must be between 0 and 2 [17] [18]. Ensuring these N-representability conditions is crucial for obtaining physically valid results from the reconstruction process.

Practical Implementation & Methodologies

How do I analytically reconstruct a 1-RDM from a density within a LIP basis set?

A basis set where the products of basis functions f~i~f~j~ are linearly independent (LIP) significantly simplifies reconstruction [19].

Workflow: 1-RDM Reconstruction in LIP Basis Sets

- Procedure:

- Obtain the expansion coefficients c~ij~ of your electron density in the LIP basis set: ρ(r) = Σ~i≤j~ c~ij~ f~i~(r)f~j~(r), where the c~ij~ are known [19].

- Apply the analytical reconstruction formula directly to obtain the 1-RDM coefficients: Γ~i,j~ = c~ij~ / (2 - δ~ij~) [19].

- Construct the full 1-RDM using these coefficients: γ(r, r') = Σ~i,j~ Γ~i,j~ f~i~(r)f~j~(r').

- Key Advantage: This method is exact and avoids numerically solving the often ill-conditioned system of equations ( W b = c ) associated with Harriman's traditional method [19].
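The reconstruction formula Γ~i,j~ = c~ij~ / (2 - δ~ij~) is simple enough to sketch directly. The example below assumes real coefficients stored in the upper triangle (i ≤ j) of a K×K array and a symmetric Γ; the numerical values and the function name are illustrative only.

```python
import numpy as np

def gamma_from_density_coeffs(c_upper):
    """Build the symmetric 1-RDM coefficient matrix from density coefficients c_ij (i <= j)."""
    c = np.triu(np.asarray(c_upper, dtype=float))   # keep only i <= j
    k = c.shape[0]
    gamma = c / (2.0 - np.eye(k))                   # divide diagonal by 1, off-diagonal by 2
    return gamma + np.triu(gamma, k=1).T            # mirror the strict upper triangle

# Hypothetical two-function example.
c = np.array([[2.0, 0.4],
              [0.0, 1.0]])
gamma = gamma_from_density_coeffs(c)

# Consistency check at one point: basis-function values f_i(r) (arbitrary numbers).
f = np.array([0.3, -1.2])
rho_from_c = sum(c[i, j] * f[i] * f[j] for i in range(2) for j in range(i, 2))
rho_from_gamma = float(f @ gamma @ f)
```

Both expressions for the density agree at the sample point, which is the statement ρ(r) = γ(r, r) in this basis.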

What is the procedure for 1-RDM reconstruction in a non-LIP basis set?

In non-LIP basis sets, products f~i~f~j~ are linearly dependent, leading to infinitely many 1-RDMs that yield the same density [19]. The solution is to construct the family of all compatible 1-RDMs.

Workflow: Handling Non-LIP Basis Sets

- Procedure:

- Identify the L exact linear dependencies among the basis function products: Σ~i,j~ a~kij~ f~i~f~j~ = 0 for k=1,...,L [19].

- The general form of a 1-RDM that collapses to the target density ρ(r) is: γ(r, r') = γ~0~(r, r') + Σ~k=1~^L^ λ~k~ A~k~(r, r'). Here, γ~0~ is a particular solution, A~k~ are symmetric matrices derived from the null space vectors a~kij~, and λ~k~ are arbitrary real coefficients [19].

- To isolate physically meaningful solutions, impose N-representability constraints (e.g., P ≽ 0 and 2I - P ≽ 0 for a spin-summed closed-shell population matrix, so that its eigenvalues lie between 0 and 2) on the population matrix during a constrained search [19] [17].
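For a single linear dependency (L = 1), the constrained search over the family γ~0~ + λA can be sketched as a one-dimensional scan. The example below works with population matrices in an orthonormal basis and assumes spin-summed occupation bounds of 0 and 2. The matrices `P0` and `A` are hypothetical stand-ins for a particular solution and a null-space direction, not quantities derived from a real basis set.

```python
import numpy as np

def feasible_lambdas(P0, A, lambdas, occ_max=2.0, tol=1e-10):
    """Return the scanned lambda values for which P0 + lambda * A has
    all eigenvalues within [0, occ_max]."""
    feasible = []
    for lam in lambdas:
        evals = np.linalg.eigvalsh(P0 + lam * A)
        if evals.min() >= -tol and evals.max() <= occ_max + tol:
            feasible.append(float(lam))
    return feasible

# Hypothetical particular solution and null-space direction.
P0 = np.diag([1.5, 0.5])
A = np.array([[0.0, 1.0],
              [1.0, 0.0]])

grid = np.linspace(-1.0, 1.0, 21)        # step of 0.1
allowed = feasible_lambdas(P0, A, grid)
```

In this toy case the eigenvalues are 1 ± sqrt(0.25 + λ²), so the feasible window is |λ| ≤ sqrt(0.75) ≈ 0.866 and the scan keeps the grid points from -0.8 to 0.8. With several dependencies the same feasibility test defines a convex region in the λ~k~, which is where a semidefinite solver becomes the more practical tool.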

How can I reconstruct a 1-RDM from experimental data?

Reconstructing a 1-RDM from experimental data requires a joint refinement using both position-space and momentum-space data, as the 1-RDM contains information for both [17] [18].

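- Required Data: X-ray structure factors (SF) for position-space information and directional Compton profiles (DCP) for momentum-space information [17] [18].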

- Methodology:

- Express the 1-RDM in a finite basis set (e.g., atomic orbitals).

- Formulate a least-squares minimization problem that fits the population matrix P to both the SF and DCP data simultaneously.

- Impose N-representability conditions, symmetry constraints, and optionally freeze core-electron contributions as convex constraints during the optimization, typically solved via Semidefinite Programming (SDP) [17] [18].

Troubleshooting Common Issues

The reconstruction process fails or yields non-physical results. What should I check?

- Verify N-Representability Constraints: Ensure your procedure enforces the necessary conditions (positive semidefiniteness, eigenvalue bounds) on the 1-RDM [17] [18]. Without these, the result may be mathematically possible but physically meaningless.

- Check Basis Set Linear Dependencies: For LIP basis sets, ensure the products f~i~f~j~ are truly linearly independent. Near-linear dependencies can make the reconstruction numerically unstable, even if analytical formulas exist [19]. For non-LIP sets, ensure you have correctly identified the null space.

- Inspect Data Adequacy: When working with experimental data, a single type of measurement (e.g., only X-ray diffraction) may be insufficient for a robust reconstruction. Joint refinement using both SF and DCP is recommended [17].

The reconstructed 1-RDM is not sufficiently accurate for calculating molecular properties.

- Consider 1-RDM Optimization: In variational calculations (e.g., Variational Quantum Eigensolvers), directly optimizing the 1-RDM along with the energy, rather than relying on energy minimization alone, can significantly improve the accuracy of derived properties like dipole moments and atomic charges [20].

- Review Active Space Selection: For complex systems, consider reconstruction strategies that freeze core electrons and focus on optimizing the valence space, which reduces the number of parameters and improves stability [17] [18].

- Validate with Known Properties: Check your reconstructed 1-RDM by computing properties like the virial ratio or approximate energy, which can serve as indicators of the reconstruction's quality [18].

Essential Research Reagent Solutions

Table 1: Key Computational Tools and Mathematical Objects for 1-RDM Reconstruction

| Item Name | Function in Reconstruction | Technical Specification / Note |

|---|---|---|

| LIP Basis Set | Ensures unique analytical reconstruction of γ(r, r') from ρ(r). | Rare for general-purpose use; often requires specialized construction [19]. |

| Non-LIP Basis Set | Standard in quantum chemistry; requires more complex reconstruction protocols. | Infinitely many 1-RDMs correspond to a single density; null space identification is crucial [19]. |

| N-Representability Conditions | Constraints ensuring the 1-RDM corresponds to a physical N-electron wavefunction. | For closed-shell: P ≽ 0 and 2I - P ≽ 0, i.e., eigenvalues between 0 and 2 (where P is the spin-summed population matrix in an orthogonal basis) [17] [18]. |

| Semidefinite Programming (SDP) | Numerical optimization method for reconstructing 1-RDMs under constraints. | Used in joint refinements (e.g., X-ray + Compton data) to enforce N-representability [17]. |

| X-ray Structure Factors (SF) | Experimental input providing electron density information in position space. | Relates to the diagonal of the 1-RDM: ρ(r) = γ(r, r) [17]. |

| Directional Compton Profiles (DCP) | Experimental input providing electron density information in momentum space. | Essential for constraining the off-diagonal elements of the 1-RDM via Fourier transform [17] [18]. |

Hybrid Quantum-Stochastic Algorithms for N-Representability (ADAPT-VQA)

The N-representability problem is a fundamental challenge in quantum chemistry and condensed matter physics. It asks whether a given p-body reduced density matrix (p-RDM) could have originated from a physically valid, larger N-body quantum system [1]. Accurately solving this problem is crucial because it allows for the determination of a quantum system's exact ground state energy through the constrained minimization of a many-body Hamiltonian's expectation value [1]. However, the complete set of N-representability conditions is exponentially large, making direct computation intractable for all but the smallest systems [1] [21].

The ADAPT-VQA (Adaptive Derivative-Assembled Pseudo-Trotter Variational Quantum Algorithm) is a hybrid quantum-stochastic algorithm designed to circumvent the direct application of these complex conditions [1]. It functions by iteratively evolving an initial N-body density matrix towards a target p-RDM using a sequence of unitary operators, with a stochastic component to guide the search. This method provides a practical pathway to verify the N-representability of a given matrix and correct it if necessary, without relying on the explicit, exponential number of constraints [1].

Frequently Asked Questions (FAQs)

Q1: What is the core innovation of the ADAPT-VQA compared to previous approaches to N-representability? The core innovation lies in its hybrid quantum-stochastic nature. Instead of directly enforcing the exponentially large set of N-representability constraints, the algorithm uses a quantum computer to perform unitary evolution guided by the ADAPT method, while a classical computer runs a simulated annealing process to stochastically guide the evolution towards the target reduced density matrix. This bypasses the need to know all constraints explicitly [1].

Q2: On what types of quantum systems or models has ADAPT-VQA been successfully tested? Research has demonstrated the application of ADAPT-VQA on alleged reduced density matrices from a variety of systems, proving its model-independent nature. Successful benchmarks include [1]:

- Quantum chemistry electronic Hamiltonians.

- The reduced BCS model with constant pairing.

- The Heisenberg XXZ spin model.

Q3: How does this algorithm relate to real-world applications like drug discovery? Accurate molecular simulation is a cornerstone of modern drug discovery, as it allows researchers to predict how potential drug molecules (ligands) interact with target proteins [22]. The ADAPT-VQA tackles a key bottleneck in these simulations—ensuring the quantum-mechanical consistency (N-representability) of the electronic structure descriptions. By providing a more efficient path to valid simulations, it can potentially accelerate the identification and optimization of new drug candidates [1] [22].

Q4: What are the main sources of error when running ADAPT-VQA on current quantum hardware? While the algorithm itself is designed to be error-aware, performance on current noisy intermediate-scale quantum (NISQ) devices is influenced by [1]:

- Gate Errors: Imperfections in the quantum gates used to construct the unitary evolution sequences.

- Decoherence: The loss of quantum information over time.

- Sampling Noise: Errors arising from the stochastic sampling component of the algorithm.

Q5: What is the role of the classical computer in this hybrid algorithm? The classical computer has several critical functions [1]:

- It runs the simulated annealing process, a stochastic optimization technique that guides the overall search direction.

- It calculates the gradient information required by the ADAPT method to construct the next unitary operator in the sequence.

- It handles the classical optimization loop, updating parameters for the next iteration based on results from the quantum processor.

Troubleshooting Guides

Algorithm Fails to Converge Towards Target p-RDM

| Possible Cause | Diagnostic Steps | Resolution Steps |

|---|---|---|

| Insufficient Ansatz Expressivity | Check if the pool of operators in the ADAPT protocol is sufficient to represent the system's physics. | Expand the operator pool to include more complex or system-specific generators. |

| Poorly Tuned Stochastic Sampling | Monitor the acceptance rate in the simulated annealing process; an extremely low or high rate indicates poor tuning. | Adjust the annealing schedule (e.g., initial temperature, cooling rate) to balance exploration and exploitation [1]. |

| Hardware Noise Dominating Signal | Compare results from noisy simulators with ideal statevector simulator outputs. | Increase the number of measurement shots to mitigate sampling noise and employ error mitigation techniques [1]. |

Excessive Resource Requirements (Qubits/Circuit Depth)

| Possible Cause | Diagnostic Steps | Resolution Steps |

|---|---|---|

| Large System Size (N) | Profile the algorithm to identify the most resource-intensive subroutines. | Explore system-specific symmetries to reduce the effective problem size and number of required qubits [1]. |

| Deep Circuit from ADAPT Sequence | Track the number of unitary layers added throughout the algorithm's run. | Implement circuit optimization and compilation techniques to simplify and shorten the quantum circuit. |

| Inefficient Contraction | Analyze the cost of the classical contraction step from the N-body to the p-body state. | Investigate tensor network methods or other efficient classical algorithms for the contraction step. |

Inconsistent Results Between Algorithm Runs

| Possible Cause | Diagnostic Steps | Resolution Steps |

|---|---|---|

| Stochastic Sampling Variability | Run the algorithm multiple times with different random seeds and observe the variance in the final result. | Increase the number of iterations in the simulated annealing process or adjust the cooling schedule for more consistent convergence [1]. |

| Quantum Measurement Noise | Examine the statistical uncertainty from a finite number of measurement shots on the quantum processor. | Increase the number of shots for the expectation value measurements to reduce statistical error. |

| Barren Plateaus in Optimization | Monitor the magnitude of the gradients used in the ADAPT protocol; exponentially small gradients indicate a barren plateau. | Utilize techniques like layer-by-layer training or problem-informed operator pools to avoid barren plateaus [21]. |
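The effect of the shot count on measurement noise can be estimated before running on hardware. The sketch below simulates repeated measurement of a single-qubit Pauli-Z expectation value with NumPy. The probability `p_zero = 0.6` and the shot counts are arbitrary illustrative choices, and hardware noise beyond sampling statistics is ignored.

```python
import numpy as np

def estimate_z(p_zero, shots, rng):
    """Estimate <Z> from `shots` projective measurements with P(outcome 0) = p_zero."""
    zeros = rng.binomial(shots, p_zero)
    return (2 * zeros - shots) / shots

def standard_error(expval, shots):
    """Standard error of the <Z> estimate: sqrt((1 - <Z>^2) / shots)."""
    return np.sqrt((1 - expval**2) / shots)

rng = np.random.default_rng(1)
p_zero = 0.6
exact = 2 * p_zero - 1                    # exact <Z> = 0.2

estimate = estimate_z(p_zero, 10_000, rng)
se_100 = standard_error(exact, 100)
se_400 = standard_error(exact, 400)
```

Because the standard error scales as one over the square root of the shot count, quadrupling the shots only halves the statistical error. This is worth keeping in mind when the Hilbert-Schmidt distance has to be resolved below a small threshold.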

Experimental Protocols & Workflows

Core Protocol: Verifying N-Representability with ADAPT-VQA

This protocol outlines the steps to determine if a given p-body matrix is N-representable.

1. Input Preparation:

- Target p-RDM: Prepare the p-body reduced density matrix whose N-representability you wish to verify.

- Initial N-Body State: Initialize a starting N-body density matrix, often a simple reference state like a Hartree-Fock Slater determinant.

2. Hybrid Iteration Loop:

- Step A: Quantum Evolution. Construct a unitary operator ( U(\theta) ) using the ADAPT method, where the generators are chosen based on gradient information. Apply this to the current N-body state on the quantum processor: ( \rho_{new} = U(\theta) \rho_{old} U^\dagger(\theta) ).

- Step B: Contraction. On the classical computer, contract the evolved N-body state ( \rho_{new} ) to obtain a new candidate p-RDM.

- Step C: Stochastic Evaluation. Use a simulated annealing process to evaluate the "distance" (e.g., matrix norm) between the candidate p-RDM and the target p-RDM. Based on this and an annealing temperature, decide whether to accept the new state.

- Step D: Parameter Update. Update the parameters ( \theta ) for the next unitary operator based on the ADAPT protocol and the stochastic guide.

3. Output & Analysis:

- The algorithm terminates after a set number of iterations or when the distance to the target p-RDM falls below a predefined threshold.

- A sufficiently small final distance indicates that the target p-RDM is likely N-representable. The final N-body state provides a witness to this representability.
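
The accept/reject decision in Step C can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not the protocol's actual implementation: the Metropolis acceptance rule and the geometric cooling schedule are common simulated-annealing choices that the text does not specify, and `toy_candidate` is a hypothetical classical stand-in for the quantum evolution (Step A) and contraction (Step B).

```python
import math
import random

def frobenius_distance(a, b):
    """Frobenius norm of the difference of two equally shaped nested-list matrices."""
    return math.sqrt(sum(abs(x - y) ** 2
                         for row_a, row_b in zip(a, b)
                         for x, y in zip(row_a, row_b)))

def metropolis_accept(d_old, d_new, temperature, rng):
    """Accept improvements always; accept worse candidates with Boltzmann probability."""
    if d_new <= d_old:
        return True
    return rng.random() < math.exp(-(d_new - d_old) / temperature)

def toy_candidate(theta):
    """Hypothetical stand-in for Steps A and B: a one-parameter diagonal 2x2 1-RDM."""
    c = math.cos(theta) ** 2
    return [[c, 0.0], [0.0, 1.0 - c]]

def anneal(target, theta=0.0, temperature=0.5, cooling=0.95, steps=400, seed=0):
    """Return the final parameter and its distance to the target matrix."""
    rng = random.Random(seed)
    d_old = frobenius_distance(toy_candidate(theta), target)
    for _ in range(steps):
        proposal = theta + rng.gauss(0.0, 0.2)  # random trial move
        d_new = frobenius_distance(toy_candidate(proposal), target)
        if metropolis_accept(d_old, d_new, temperature, rng):
            theta, d_old = proposal, d_new
        temperature *= cooling  # geometric cooling schedule (an assumption)
    return theta, d_old

theta, distance = anneal([[0.7, 0.0], [0.0, 0.3]])
print(f"final distance to target: {distance:.4f}")
```

Early in the run the high temperature lets the search accept worse candidates and escape poor regions; as the temperature falls, the loop behaves more and more like a greedy descent on the distance.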

The following workflow diagram illustrates this iterative protocol:

Performance Validation Protocol

To benchmark the algorithm's performance, use the following validation steps with known systems:

1. System Selection: Choose a benchmark system with a known, exact solution, such as a small molecular Hamiltonian (e.g., H₂ or LiH) or an integrable model like the reduced BCS Hamiltonian [1].

2. Generate Ground Truth: Calculate the exact 2-RDM (or 1-RDM) of the benchmark system's ground state using a high-precision classical method (e.g., Full Configuration Interaction).

3. Run ADAPT-VQA: Use the exact RDM as the "target" and run the ADAPT-VQA protocol from a different initial state.

4. Quantitative Comparison: Track the convergence of the energy calculated from the ADAPT-VQA RDM towards the exact ground state energy. The key quantitative metrics to record are shown in the table below.

Table: Key Quantitative Metrics for ADAPT-VQA Validation on Benchmark Systems

| Metric | Description | Target Value for Success |

|---|---|---|

| Final Energy Error | Absolute difference between the computed and exact ground state energy. | Below chemical accuracy (~1.6 mHa) |

| RDM Distance | Matrix norm (e.g., Frobenius) between final ADAPT-VQA p-RDM and exact p-RDM. | Approaches zero |

| Convergence Iterations | Number of algorithm iterations required to meet convergence criteria. | As low as possible; system-dependent |

| Stochastic Acceptance Rate | The percentage of proposed steps accepted by the simulated annealing process. | Stable (e.g., 20-50%) throughout run [1] |
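
A small helper can turn the raw outputs of a run into two of the metrics in the table above. The Python sketch below is illustrative only: the function name, the dictionary keys, and the example numbers are hypothetical and are not results from any benchmark.

```python
CHEMICAL_ACCURACY_HARTREE = 1.6e-3  # threshold quoted in the table (~1.6 mHa)

def summarize_run(computed_energy, exact_energy, accepted_flags):
    """Collect the energy error and the annealing acceptance rate for one run."""
    error = abs(computed_energy - exact_energy)
    # Fraction of proposed annealing steps that were accepted (0.0 if none recorded)
    rate = sum(accepted_flags) / len(accepted_flags) if accepted_flags else 0.0
    return {
        "energy_error_hartree": error,
        "within_chemical_accuracy": error < CHEMICAL_ACCURACY_HARTREE,
        "acceptance_rate": rate,
    }

# Made-up numbers purely to show the call signature.
report = summarize_run(-1.1370, -1.1373, [True, False, False, True])
print(report)
```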

The Scientist's Toolkit: Research Reagent Solutions

This section details the essential computational "reagents" required to implement the ADAPT-VQA for N-representability research.

Table: Essential Components for ADAPT-VQA Experiments

| Item / Solution | Function / Purpose | Implementation Notes |

|---|---|---|

| ADAPT Operator Pool | A set of operators (e.g., fermionic excitations, Pauli strings) used to build the adaptive unitary evolution operators [1]. | Choice of pool (e.g., "Qubit-Excitation" based) critically affects performance and convergence [21]. |

| Simulated Annealing Scheduler | The classical stochastic process that guides the global search and helps avoid local minima [1]. | Requires careful tuning of the initial temperature and cooling schedule for the specific problem. |

| Metric for "Distance" | A function to quantify the difference between the candidate and target p-RDMs (e.g., Frobenius norm, trace distance). | The choice of metric can influence the optimization landscape. |

| Contraction Algorithm | The classical subroutine that computes the p-RDM from the N-body quantum state evolved on the quantum processor [1]. | For large systems, this can be a computational bottleneck. |

| Error Mitigation Suite | A collection of techniques (e.g., zero-noise extrapolation, readout error mitigation) to counteract hardware noise [1]. | Essential for obtaining meaningful results from current NISQ-era quantum devices. |

Exploiting Classical Shadows and Semidefinite Programming (v2RDM)

Frequently Asked Questions (FAQs)

Core Concepts

Q1: What is the fundamental problem that combining Classical Shadows and v2RDM solves? A1: This combination addresses the critical challenge of efficiently obtaining physically meaningful 2-RDMs from quantum computations. Classical Shadows allow you to efficiently estimate the 2-RDM from a limited number of quantum measurements [8]. However, due to shot noise and errors, this estimated 2-RDM may violate the N-representability conditions—the mathematical rules that ensure a 2-RDM could have originated from a valid physical quantum state [2]. The v2RDM method uses semidefinite programming (SDP) to project this noisy, non-N-representable estimate onto the closest valid 2-RDM [8] [2]. This process significantly enhances the quality of quantum data, leading to more accurate computation of properties like molecular energies and forces.
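
The idea of projecting a noisy estimate onto the closest valid matrix can be illustrated with a deliberately simplified Python sketch. It is not the v2RDM semidefinite program: it enforces only positive semidefiniteness of the 2-RDM (the P condition) and a fixed trace, a case where the Frobenius-nearest matrix has a closed form through an eigenvalue projection. Adding the Q and G conditions generally requires an SDP solver. The function name, the noise model, and the 6×6 example matrix are assumptions made for illustration.

```python
import numpy as np

def project_psd_fixed_trace(matrix, trace):
    """Frobenius-nearest Hermitian positive semidefinite matrix with the given trace."""
    herm = 0.5 * (matrix + matrix.conj().T)
    evals, evecs = np.linalg.eigh(herm)
    # Project the eigenvalues onto {w >= 0, sum(w) = trace} by sorted thresholding.
    sorted_desc = np.sort(evals)[::-1]
    cumulative = np.cumsum(sorted_desc)
    counts = np.arange(1, len(evals) + 1)
    active = sorted_desc - (cumulative - trace) / counts > 0
    k = counts[active][-1]
    shift = (cumulative[k - 1] - trace) / k
    projected = np.maximum(evals - shift, 0.0)
    # Rebuild the matrix from the original eigenvectors and projected eigenvalues.
    return (evecs * projected) @ evecs.conj().T

# Toy "exact" matrix with trace 1, plus symmetric Gaussian noise as a stand-in
# for shot noise in a shadow estimate.
rng = np.random.default_rng(7)
exact = np.diag([0.5, 0.5, 0.0, 0.0, 0.0, 0.0])
noise = rng.normal(scale=0.05, size=exact.shape)
noisy = exact + 0.5 * (noise + noise.T)
refined = project_psd_fixed_trace(noisy, trace=1.0)

print("min eigenvalue before:", np.linalg.eigvalsh(noisy).min())
print("min eigenvalue after: ", np.linalg.eigvalsh(refined).min())
print("error before:", np.linalg.norm(noisy - exact))
print("error after: ", np.linalg.norm(refined - exact))
```

Because the constraint set is convex and contains the exact matrix, the projection cannot move the estimate further from the exact matrix in Frobenius norm. This is the basic reason constraint enforcement can improve noisy data.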

Q2: In what scenarios should a researcher consider this hybrid approach? A2: You should prioritize this method in the following scenarios:

- Limited Quantum Resources: When your measurement budget (shot count) on a quantum device is severely constrained, this technique can achieve a target accuracy with far fewer samples [8].

- Noisy Hardware: When working on current noisy quantum processors, where errors can lead to unphysical results.

- Extracting Complex Observables: When you need to compute chemically relevant properties beyond just the ground state energy, such as molecular forces for geometry optimization or dynamics simulations [8].

Reported results indicate that this approach can reduce the required shot budget by a factor of up to 15 compared with a pure, non-optimized classical shadow estimate [8].

Implementation & Troubleshooting

Q3: My SDP solver fails to converge or returns an infeasible solution. What are the potential causes? A3: This common issue can stem from several sources in the classical shadow pre-processing stage:

- Excessively Noisy Shadow Estimate: If the initial 2-RDM from classical shadows is too far from the space of physical states, the SDP may struggle to find a feasible point.

- Solution: Increase the number of shots used in the classical shadow protocol to improve the initial estimate's quality.

- Incorrect SDP Formulation: The N-representability constraints may be defined incorrectly.

- Solution: Double-check that your SDP includes, at a minimum, the P, Q, and G conditions, which require the two-particle, two-hole, and particle-hole matrices, respectively, to be positive semidefinite [2]. The constraints should also enforce that the partial traces of the 2-RDM yield valid 1-RDMs.

- Numerical Precision Issues: The SDP solver's internal tolerances might be too tight for the noise level in your data.

- Solution: Relax the convergence tolerances of your SDP solver slightly and ensure your 2-RDM matrix is properly normalized.

Q4: The energy calculated from my refined 2-RDM is still below the exact ground state energy. What does this indicate? A4: This is a classic signature of a 2-RDM that is not fully N-representable. When the 2-RDM violates N-representability conditions, the variational minimization of the energy can collapse to an unphysically low value [2]. First confirm that your SDP actually enforces the P, Q, and G conditions. Keep in mind that these conditions are necessary but not sufficient, so a 2-RDM that satisfies all three can still give an energy somewhat below the exact value; a small residual undershoot does not by itself indicate a bug. If the undershoot is large or persists after the constraints are verified, the initial data from the quantum device is likely too corrupted for the SDP to correct fully.

Troubleshooting Guides

Unphysical Energy Estimates

Problem: After processing your classical shadow data with v2RDM, the computed molecular energy is significantly lower than the known ground state energy (violating the variational principle).

Diagnosis: This is a clear indicator that the final 2-RDM is not fully N-representable.

Resolution Steps:

- Validate Constraint Implementation:

- Check your code to ensure the SDP correctly implements the P, Q, and G N-representability constraints.

- A valid 2-RDM must be positive semidefinite, and its contraction must yield a 1-RDM that is also positive semidefinite and normalized [2].

- Increase Measurement Budget:

- The raw classical shadow estimate may be too noisy. Increase the number of shots per measurement basis to improve the initial data quality before SDP optimization. Studies show that with sufficient shots, the v2RDM method can reliably correct the estimate [8].

- Check SDP Solution Status:

- Before using the result, confirm that the SDP solver exited with a `solved_and_feasible` status. Do not use results from an infeasible or non-converged solution [23].

Poor Convergence of SDP

Problem: The semidefinite program fails to converge within a reasonable number of iterations or time.

Diagnosis: The problem may be poorly scaled, ill-conditioned, or overly constrained given the input data.

Resolution Steps:

- Re-scale the Problem:

- Scale your Hamiltonian and 2-RDM matrix elements to have magnitudes closer to 1. This improves the numerical stability for the solver.

- Adjust Solver Parameters:

- Increase the maximum number of iterations.

- Slightly relax the optimality and feasibility tolerances. For example, instead of `1e-8`, try `1e-6`.

- Verify the Initial Guess:

- Provide the SDP solver with a good initial point (X₀). A common and valid initial guess is to set the diagonal elements of the 2-RDM matrix to 1.0 and the off-diagonals to 0, which satisfies the diagonal constraint [23].

set_start_value(X[i, i], 1.0)

Experimental Protocols & Data

Workflow: From Quantum State to Refined 2-RDM

The following diagram illustrates the complete experimental and computational pipeline for obtaining an N-representable 2-RDM.

Key N-representability Conditions for the SDP

The core of the v2RDM method is constraining the SDP to enforce physicality. The following conditions must be implemented as constraints in your SDP formulation [2].

| Condition Matrix | Mathematical Constraint | Physical Meaning |

|---|---|---|

| 2-RDM (D), the P condition | ( D \succeq 0 ) | The 2-body (two-particle) density matrix itself must be positive semidefinite. |

| Q Matrix | ( Q \succeq 0 ) | Ensures the positivity of the two-hole reduced density matrix. |

| G Matrix | ( G \succeq 0 ) | Ensures the positivity of the particle-hole reduced density matrix. |

| 1-RDM | ( \text{Tr}(D) = \binom{N}{2} ) and ( ^1D \succeq 0 ) | The 2-RDM must contract to a valid, normalized 1-RDM. |
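
To see why these positivity conditions hold for any physical state, it can help to build the three matrices directly from a small wavefunction. The Python sketch below does this with explicit Jordan-Wigner matrices for a handful of spin orbitals; each matrix is a Gram matrix of vectors obtained by acting on the state, so it is positive semidefinite by construction. The function names are hypothetical. The index conventions (pairs i < j for D and Q, all ordered pairs for G) are one common choice, picked so that Tr(D) matches the normalization in the table. The approach scales exponentially with the number of orbitals, so it is a check for tiny systems only.

```python
import functools
import itertools
import numpy as np

def annihilation_operators(r):
    """Jordan-Wigner matrices a_0 ... a_{r-1} acting on r spin orbitals."""
    identity = np.eye(2)
    parity = np.diag([1.0, -1.0])
    lower = np.array([[0.0, 1.0], [0.0, 0.0]])  # maps |1> (occupied) to |0> (empty)
    ops = []
    for p in range(r):
        factors = [parity] * p + [lower] + [identity] * (r - p - 1)
        ops.append(functools.reduce(np.kron, factors))
    return ops

def gram(vectors):
    """Gram matrix M[a, b] = <v_a | v_b>, positive semidefinite by construction."""
    v = np.array(vectors)
    return v.conj() @ v.T

def pqg_matrices(psi, r):
    """Return (D, Q, G) for the state vector psi on r spin orbitals."""
    a = annihilation_operators(r)
    ad = [op.conj().T for op in a]
    pairs = list(itertools.combinations(range(r), 2))      # i < j
    all_pairs = list(itertools.product(range(r), repeat=2))
    D = gram([a[j] @ a[i] @ psi for i, j in pairs])        # two-particle matrix
    Q = gram([ad[j] @ ad[i] @ psi for i, j in pairs])      # two-hole matrix
    G = gram([ad[j] @ a[i] @ psi for i, j in all_pairs])   # particle-hole matrix
    return D, Q, G

def random_state(r, n_electrons, rng):
    """Random normalized real state with exactly n_electrons occupied orbitals."""
    psi = np.zeros(2 ** r)
    for index in range(2 ** r):
        if bin(index).count("1") == n_electrons:
            psi[index] = rng.normal()
    return psi / np.linalg.norm(psi)

psi = random_state(4, 2, np.random.default_rng(0))
D, Q, G = pqg_matrices(psi, 4)
for name, m in (("D", D), ("Q", Q), ("G", G)):
    print(name, m.shape, "min eigenvalue:", np.linalg.eigvalsh(m).min())
print("Tr(D):", np.trace(D))
```

A 2-RDM estimated from noisy measurements has no such Gram structure, which is why its D, Q, or G matrix can acquire negative eigenvalues that the SDP must then remove.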

Performance Comparison: Shadow Estimators

The choice of estimator within the classical shadow protocol can significantly impact performance. The table below summarizes key findings from recent research [8].

| Estimator Type | Key Characteristic | Performance under Shot Noise | Recommended Use Case |

|---|---|---|---|

| Unbiased (Stand-alone) | Standard classical shadow estimator. | Can produce non-N-representable 2-RDMs. | Baseline comparisons; systems with very high shot counts. |

| v2RDM-Optimized (Improved) | Uses SDP to enforce N-representability on the shadow. | More robust, can lead to shot savings up to a factor of 15. | Recommended. Production runs with limited quantum resources. |

The Scientist's Toolkit

Research Reagent Solutions

This table lists the essential computational "reagents" and tools required for experiments in this field.

| Item | Function / Description | Example / Note |

|---|---|---|

| Classical Shadows Engine | A software library to perform the classical shadow protocol: generate random basis rotations, measure quantum states, and reconstruct observable estimates. | Must support the ensemble of single-particle basis rotations (matchgates) for fermionic systems to preserve particle number and spin [8]. |

| SDP Solver | A numerical optimization library capable of solving large-scale semidefinite programs. | Examples: Clarabel.jl, SDPA [23]. The solver must be efficient for matrices of dimension ( \binom{r}{2} \times \binom{r}{2} ), where ( r ) is the number of spin orbitals (the G matrix is larger, ( r^2 \times r^2 )). |

| N-Rep Constraints | The set of necessary conditions (P, Q, G) that define the feasible set for the SDP, ensuring the output 2-RDM is physical [2]. | These are the core "reagents" that confer physical meaning to the result. |
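
As a rough sizing aid when choosing a solver, the dimensions of the constrained matrices can be estimated from the number of spin orbitals r. The Python sketch below assumes the antisymmetric pair basis (i < j) for D and Q and all ordered pairs for G, without any spin or symmetry blocking, which in practice can reduce these sizes considerably. The function name and the memory estimate (one dense float64 copy of each matrix) are illustrative assumptions.

```python
import math

def constraint_dimensions(r):
    """Side lengths of the D, Q, and G matrices for r spin orbitals (no symmetry blocking)."""
    return {"D": math.comb(r, 2), "Q": math.comb(r, 2), "G": r * r}

def dense_megabytes(side):
    """Memory for one dense float64 matrix of the given side length, in megabytes."""
    return side * side * 8 / 1e6

for r in (4, 20, 40):
    dims = constraint_dimensions(r)
    sizes = ", ".join(f"{name}: {n} ({dense_megabytes(n):.2f} MB)" for name, n in dims.items())
    print(f"r = {r}: {sizes}")
```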