Static vs Dynamic Models in Drug Development: A Comprehensive Guide to Correlation Methods and Applications

This article provides a comprehensive analysis of static and dynamic correlation methods essential for modern drug development.

Static vs Dynamic Models in Drug Development: A Comprehensive Guide to Correlation Methods and Applications

Abstract

This article provides a comprehensive analysis of static and dynamic correlation methods essential for modern drug development. Tailored for researchers and pharmaceutical professionals, it explores the foundational principles of these modeling approaches, detailing their specific applications from early discovery to clinical risk assessment. The content delves into methodological execution, common challenges with optimization strategies, and rigorous validation frameworks. By synthesizing current research and comparative analyses, this guide serves as a critical resource for selecting the appropriate model to improve predictive accuracy, streamline development timelines, and enhance patient safety across therapeutic areas.

Core Principles: Understanding Static and Dynamic Modeling Fundamentals

Frequently Asked Questions (FAQs)

Q1: What is a mechanistic static model (MSM) in drug-drug interaction (DDI) prediction?

A mechanistic static model (MSM) is a mathematical tool used in early drug development to predict the risk of metabolic drug-drug interactions. It employs a set of equations to estimate the change in exposure (Area Under the Curve, or AUC) of a "victim" drug when co-administered with a "perpetrator" drug, based primarily on in vitro data. Unlike dynamic models, it uses fixed, or "static," surrogate driver concentrations for the perpetrator drug to represent its concentration at the enzyme interaction site (e.g., in the liver or gut) [1] [2]. Its primary purpose is for initial screening to flag potential interactions, ensuring patient safety by minimizing false-negative predictions [1].

Q2: When should I use a static model versus a dynamic PBPK model?

The choice between a static and a dynamic model depends on your development stage and the complexity of the question you need to answer. The following table outlines the primary applications and limitations of each approach.

| Model Type | Primary Applications | Key Limitations |

|---|---|---|

| Mechanistic Static Model (MSM) | - Early-stage DDI risk screening [1]- Flagging even minor AUC deviations for safety [1]- Supporting regulatory filing for study waivers and label recommendations in some cases [2] | - Uses fixed driver concentrations, not time-variable levels [1]- Cannot evaluate complex scenarios (e.g., active metabolites, dose staggering, multiple perpetrators) [1]- Limited ability to assess inter-individual variability and vulnerable patient populations [1] |

| Dynamic PBPK Model | - Quantitative DDI predictions for regulatory submissions [1] [2]- Assessing DDI risk in specific populations (e.g., organ impairment, genetic polymorphisms) [1]- Complex scenario testing (dose staggering, enzyme-transporter interplay, time-dependence) [1] [2] | - Resource-intensive development and validation [2]- Requires considerable expertise and high-quality input data [2] |

Q3: What are the key assumptions and limitations of static models?

Static models operate on several critical assumptions, which are also their primary limitations:

- Assumption of Fixed Driver Concentration: The model assumes that a single, static concentration (like

[I]representing the maximum (Cmax) or average steady-state (Cavg,ss) concentration) can adequately represent the perpetrator's inhibitory effect over the entire dosing interval. This ignores the dynamic, time-varying nature of real drug concentrations [1]. - Linearity and Time-Invariance: The models typically assume that pharmacokinetic processes are linear and time-invariant, which may not hold for all drugs [1].

- Limited Scope: They are generally unsuitable for predicting interactions involving mechanisms like time-dependent inhibition, enzyme induction, or transporter-mediated interactions without significant modifications [1] [2].

- Vulnerable Patient Risk: Static models may underpredict the DDI risk in vulnerable patient populations, as they do not routinely incorporate physiological variability. One study found a high rate of discrepancy (

IMDR >1.25) in 37.8% of simulations for a 'vulnerable patient' representative [1].

Q4: What is the most common cause of inaccurate predictions from static models?

The choice of driver concentration ([I]) for the perpetrator drug is a major source of variability and potential inaccuracy [1]. Regulatory guidelines often recommend using the maximum unbound hepatic inlet concentration to minimize false negatives [1]. However, some studies suggest that using the unbound average steady-state concentration (Cavg,ss) can sometimes lead to predictions more comparable to dynamic models [2]. The discrepancy between model predictions often stems from this fundamental choice, as the dynamic model uses time-variable concentrations that more accurately reflect the in vivo situation [1].

Troubleshooting Guides

Problem 1: My static model prediction shows a significant DDI risk, but a clinical study is not feasible. What are my options?

Solution: Consider developing a dynamic Physiologically Based Pharmacokinetic (PBPK) model to refine the risk assessment.

- Action 1: Verify Input Parameters. Double-check all input parameters for the static model, especially the fraction of victim drug metabolized by the affected enzyme (

fm) and the inhibition constant (Ki) of the perpetrator. Ensure they are derived from robust and relevant in vitro studies. - Action 2: Develop a PBPK Model. If resources allow, develop and qualify a PBPK model for both the victim and perpetrator drugs. This allows for a more quantitative prediction and can investigate "what-if" scenarios that static models cannot address [2].

- Action 3: Use a "Vulnerable Patient" Simulation. If a full PBPK model is not an option, use the static model with different driver concentrations (e.g.,

CmaxandCavg,ss) to understand the range of possible outcomes. Be conservative in your interpretation, acknowledging that the risk for some patients may be higher than predicted [1].

Problem 2: I am getting different DDI predictions when using Cmax versus Cavg,ss as the driver concentration. Which one should I use?

Solution: The choice depends on the context and regulatory guidance.

- Step 1: Define the Objective. For initial screening and to avoid false negatives (i.e., missing a true DDI risk), the use of

[I] = Cmaxor the unbound maximum hepatic inlet concentration is recommended by regulatory guidelines [1]. - Step 2: Assess Consistency with Dynamic Models. Some research indicates that using

[I] = Cavg,sscan yield predictions closer to those from dynamic PBPK models for certain applications [2]. If your goal is to compare directly with a PBPK simulation or for specific regulatory submissions where this approach has been accepted,Cavg,ssmight be more appropriate. - Step 3: Report the Range. A prudent practice is to calculate and report the DDI risk using both

CmaxandCavg,ssas a sensitivity analysis. This provides a range of possible outcomes and demonstrates a thorough understanding of the model's limitations.

Problem 3: How can I validate the predictions from my static model?

Solution: While static models are often used prospectively before clinical data is available, their predictions should be compared against observed data whenever possible.

- Methodology:

- Conduct a Clinical DDI Study: This is the gold standard for validation. Compare the predicted AUC ratio (

AUCr) from the static model with the observedAUCrfrom the clinical study. - Perform a Correlation Analysis: Plot the observed

AUCrvalues against the predicted ones. Calculate the correlation coefficient and, more importantly, use statistical methods like Bland-Altman's limits of agreement to assess agreement, as the correlation coefficient alone can be misleading [3]. - Evaluate Predictability: A common validation criterion is to check if the prediction error for the

AUCris within a pre-defined range (e.g., ±15-20%). If the static model consistently over- or under-predicts, it may indicate a systematic issue with the chosen driver concentration or other input parameters.

- Conduct a Clinical DDI Study: This is the gold standard for validation. Compare the predicted AUC ratio (

Experimental Protocols & Data

Core Equations for Competitive Inhibition MSM

The fundamental equation for predicting the AUC ratio (AUCr) for a victim drug in the presence of a competitive inhibitor is [1]:

AUCr = 1 / [ (Fg * Fh) ]

Where:

Fg = 1 / [fg + (1 - fg) * (1 / (1 + ([I]_{gut} / K_i)) ) ](Fraction escaping gut metabolism)Fh = 1 / [fh + (1 - fh) * (1 / (1 + ([I]_{liver} / K_i)) ) ](Fraction escaping hepatic metabolism)[I]_{gut}and[I]_{liver}are the driver concentrations of the inhibitor at the gut and liver sites, respectively.K_iis the inhibition constant.fgis the fraction of the victim drug metabolized in the gut.fhis the fraction of the victim drug metabolized in the liver.

Quantitative Comparison of Static vs. Dynamic Models

A large-scale simulation study (2024) involving 30,000 simulated DDIs highlighted the discrepancies between static and dynamic models. The Inter-Model Discrepancy Ratio (IMDR) was defined as AUCr_dynamic / AUCr_static [1]. The table below summarizes the key findings on model discrepancy.

| Simulation Scenario | Driver Concentration | Incidence of IMDR < 0.8 (Sponsor Risk) | Incidence of IMDR > 1.25 (Patient Risk) |

|---|---|---|---|

| 'Population' Representative | Cavg,ss |

85.9% | 3.1% |

| 'Vulnerable Patient' Representative | Not Specified | Not Specified | 37.8% |

Data adapted from [1]. IMDR outside 0.8-1.25 indicates a discrepancy.

Key Research Reagent Solutions

The following table lists essential "reagents" or tools required for building and applying mechanistic static models.

| Item | Function in Experiment |

|---|---|

| In Vitro System (e.g., human liver microsomes, recombinant CYP enzymes) | To determine enzyme kinetic parameters for the victim drug (fm, Km, Vmax) and the inhibition constant (Ki) for the perpetrator drug [1]. |

| Perpetrator Drug Pharmacokinetic Data | To calculate the static driver concentrations ([I]), such as unbound Cmax or unbound Cavg,ss [1] [2]. |

| Victim Drug Pharmacokinetic Data | To understand the clearance mechanisms and the fraction of drug absorbed, which informs the Fg and Fh calculations [1]. |

| Mechanistic Static Model Equations | The mathematical framework (see Core Equations above) that integrates in vitro and PK data to compute the predicted DDI magnitude (AUCr) [1] [2]. |

| PBPK Software (e.g., Simcyp) | Used as a dynamic model comparator to evaluate the performance and potential bias of the static model predictions [1]. |

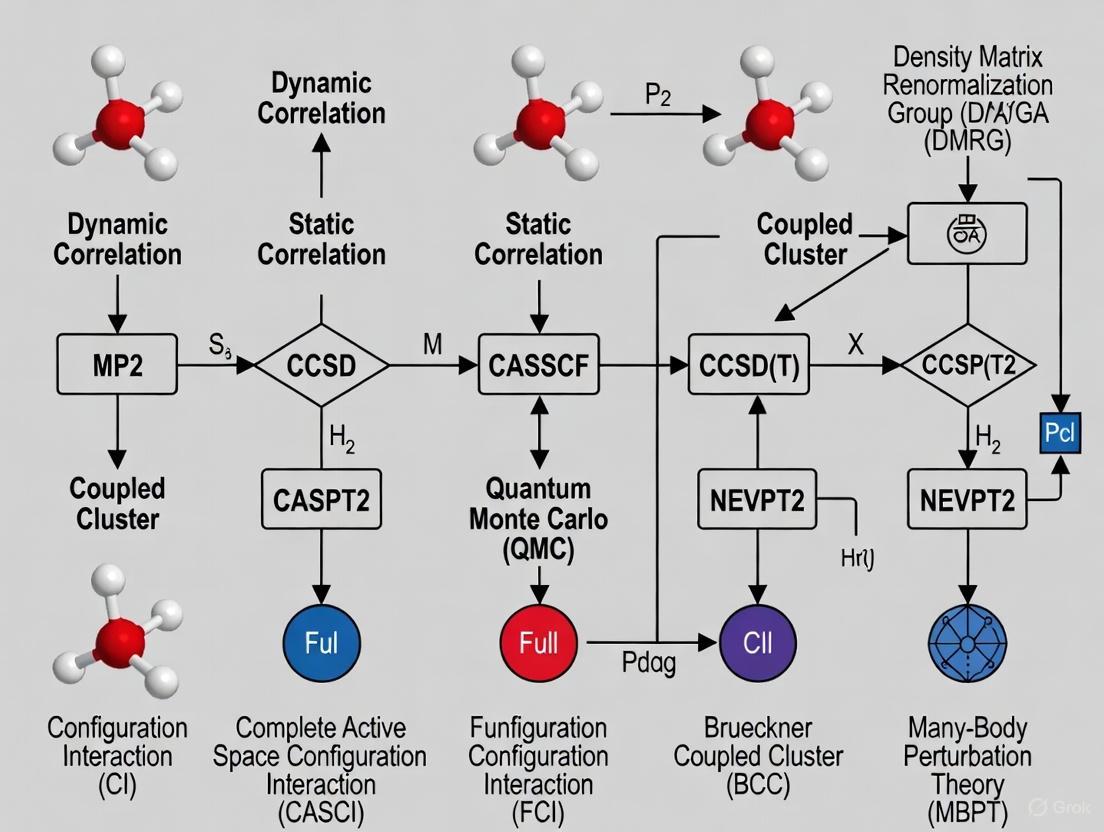

Workflow and Relationship Diagrams

Diagram 1: Static vs Dynamic Model Decision Workflow

Diagram 2: Key Components of a Static Model

Scientific FAQ: Core Principles and Applications

Q1: What fundamentally distinguishes a dynamic PBPK model from a simple static model?

A dynamic PBPK model is a time-dependent, mechanistic system that uses differential equations to simulate the concentration of a compound in various organs and tissues over time. It is structured based on real human physiology, incorporating anatomical (e.g., organ volumes) and physiological (e.g., blood flow rates) parameters. These models are multi-compartmental, with compartments representing specific organs like the liver or kidney, interconnected by the circulating blood or lymph system [4] [5] [6]. This allows for the prediction of full concentration-time profiles at the site of action, which may be difficult to measure experimentally [6] [7].

In contrast, a static model relies on steady-state assumptions and uses algebraic equations. While useful for predicting overall drug exposure or the magnitude of interactions like drug-drug interactions (DDIs), static models cannot predict the shape of a plasma concentration-time curve, time-varying changes, or distribution kinetics [8]. The key differentiator is that PBPK models offer a dynamic, physiological, and mechanistic framework for prediction and extrapolation, whereas static models provide a simpler, non-mechanistic snapshot [5] [8].

Q2: What are the primary assumptions when defining the structure of a PBPK model?

Two primary assumptions govern how drugs are distributed from blood into tissues [5] [7]:

- Perfusion-Rate Limited (Flow-Limited) Model: This assumes that tissue membranes present no barrier to diffusion. The rate-limiting step for a drug's distribution into a tissue is the rate of blood delivery to that tissue. This is typically true for small, lipophilic drugs. The model assumes that at steady state, the free (unbound) drug concentrations in the tissue and blood are equal [5].

- Permeability-Rate Limited (Membrane-Limited) Model: This applies when the permeability across the cell membrane is the rate-limiting step, often for larger or polar molecules. The tissue is conceptually divided into sub-compartments, such as intracellular and extracellular space, separated by a membrane that acts as a diffusional barrier [5].

Q3: In what key areas are dynamic PBPK models most critically applied in drug development?

PBPK modeling has become integral to regulatory submissions and drug development [6] [8] [7]. A systematic review of published models identified the most common applications as follows [8]:

Table 1: Primary Applications of PBPK Models in Drug Development

| Application Area | Prevalence in Publications | Primary Utility |

|---|---|---|

| Drug-Drug Interaction (DDI) Predictions | 28% | Predicting metabolic and transporter-mediated interactions to support dose adjustments and clinical trial design [8] [7]. |

| Interindividual Variability & General PK Predictions | 23% | Simulating population variability to understand exposure and response differences [8]. |

| Formulation & Absorption Modeling | 12% | Simulating the impact of drug properties and formulation on absorption kinetics, including food effects [8]. |

| Predicting Age-Related PK Changes | 10% | Extrapolating adult data to pediatric and geriatric populations via virtual simulations [6] [8]. |

| Extrapolation to Diseased Populations | Not specified | Predicting pharmacokinetics in patients with hepatic or renal impairment by incorporating population-specific physiological changes [6]. |

Troubleshooting Guide: Computational Performance

Q1: Our PBPK model simulations are running slowly, especially for large-scale Monte Carlo analyses. What factors can we adjust to improve computational time?

Computational time is a critical consideration for analyses requiring hundreds of thousands of simulations [9]. Recent research has identified key factors that impact simulation speed.

Table 2: Factors Influencing PBPK Model Computational Time

| Factor | Impact on Computational Time | Recommended Action |

|---|---|---|

| Model Compartment "Lumping" | High | Combine tissues with similar perfusion and lipid content (e.g., grouping slow-perfused tissues like muscle and skin) to reduce the number of state variables and differential equations. A 36% decrease in state variables led to a 20-35% reduction in computational time [9]. |

| Treatment of Physiological Parameters | High | Treat body weight and dependent quantities (e.g., organ volumes, blood flows) as fixed constants rather than time-varying parameters. This can result in a ~30% time savings [9]. |

| Implementation Platform | Medium | Using a compiled language (C, Fortran) is faster than interpreted languages (R, Python). A hybrid approach (e.g., using R with MCSim) balances ease-of-use and speed [9]. |

| Number of Output Variables | Low | Decreasing the number of calculated output variables that are saved from the simulation has a minimal impact on core computational time [9]. |

Q2: We are using a flexible PBPK model template. Why might it be slower than a stand-alone implementation, and is this acceptable?

Yes, this is an expected trade-off. A general-purpose PBPK model template includes more compartments and options than are typically needed for any single chemical-specific model. During simulation, expressions for many unused quantities are still evaluated, which increases computational time compared to a lean, stand-alone model built for a single purpose [9]. The reduced human time required for model preparation and quality assurance review of a template-based implementation often justifies the increase in computational time [9].

Experimental Protocol: A Timing Experiment for PBPK Model Implementation

Objective: To quantitatively evaluate the impact of different model implementation decisions on the computational time required for PBPK model simulations.

Background: As PBPK models are used for more complex analyses (e.g., Monte Carlo simulations), understanding the drivers of computational speed is essential for efficient workflow [9]. This protocol outlines a method to systematically test these factors.

Materials and Reagents:

Table 3: Research Reagent Solutions for PBPK Timing Experiments

| Item Name | Function/Description | Example Sources |

|---|---|---|

| PBPK Model Template | A pre-defined model "superstructure" with equations and logic for many PBPK features. Provides flexibility for testing different structures. | Bernstein et al. 2021/2023 [9] |

| Stand-Alone PBPK Model | A chemical-specific model implementation with a fixed, minimal structure. Serves as a performance benchmark. | U.S. EPA (2011) DCM model [9] |

| Simulation Software Platform | Software to execute the PBPK model and record simulation time. | R with MCSim, Simcyp, GastroPlus, PK-Sim [9] [7] |

| Chemical-Specific Parameters | Validated parameter sets for test compounds. Ensures comparisons are scientifically valid. | Dichloromethane (DCM) and Chloroform (CF) models [9] |

| Exposure Scenarios | Pre-defined exposure protocols to run consistent simulations. | Constant continuous oral, periodic inhalation, etc. [9] |

Methodology:

- Model Configuration: Implement the chosen PBPK models (e.g., for DCM and CF) in both a template structure and a stand-alone structure [9].

- Factor Selection: Define the independent variables to test:

- Compartment Number: Implement the model with and without "lumped" tissue compartments [9].

- Parameter Type: Run simulations with body weight and dependent parameters set as both fixed constants and as time-varying quantities [9].

- Output Detail: Configure the model to calculate a minimal vs. an extensive set of output variables [9].

- Simulation Execution: For each model configuration, run a set number of simulations (e.g., 1,000) for each of the four exposure scenarios. Ensure all simulations are performed on identical hardware.

- Data Collection: Precisely measure and record the computational time required for each set of simulations, excluding model loading and data-saving overhead.

- Data Analysis: Compare the average computational times across different configurations to quantify the impact of each factor.

This experimental approach directly enabled researchers to identify that fixing body weight parameters and reducing state variables significantly improves computational speed [9].

Conceptual Visualization: From Static to Dynamic Correlation in PK Modeling

The evolution from static to dynamic (PBPK) modeling represents a shift from empirical correlation to mechanistic, physiology-based simulation. The following diagram illustrates this conceptual and structural difference.

Frequently Asked Questions (FAQs)

1. What is electronic correlation, and why is it important in computational drug design? Electronic correlation is the interaction between electrons in the electronic structure of a quantum system. It is crucial because the Hartree-Fock (HF) method, a common starting point in computational chemistry, does not account for the instantaneous Coulomb repulsion between electrons, instead having each electron interact with the average field of all others. This missing interaction energy—the correlation energy—is vital for accurately predicting molecular properties, reaction pathways, and binding affinities, which are essential for rational drug design [10] [11] [12].

2. What is the fundamental difference between dynamic and static correlation? The fundamental difference lies in their physical origin and how they are addressed:

- Dynamic Correlation: Arises from the local, short-range repulsion between electrons that prevents them from coming too close to each other. It is related to the instantaneous dynamics of electron motion and can be recovered by adding a large number of electronic configurations (Slater determinants), each with a small weight, to the wavefunction [10] [11].

- Static (or Non-dynamic) Correlation: Occurs when a system's ground state cannot be qualitatively described by a single Slater determinant, often in cases with (near-)degeneracies, such as in bond-breaking or diradicals. It requires a linear combination of a few determinants with weights comparable to the HF determinant [10] [11] [12].

3. My calculations on a transition metal complex are qualitatively wrong. Could this be a static correlation issue? Yes, this is a classic symptom of significant static correlation. Transition metal complexes often have closely spaced electronic states (near-degeneracy). A single-determinant method like HF cannot properly describe this, leading to incorrect predictions. You should employ a multi-configurational method like MCSCF (Multi-Configurational Self-Consistent Field) to first capture the static correlation before applying dynamic correlation corrections [10] [12].

4. For a typical organic drug molecule, which type of correlation is more important? For most closed-shell, organic drug molecules near their equilibrium geometry, dynamic correlation is typically the dominant concern. The Hartree-Fock solution is often qualitatively correct, and the missing correlation energy can be recovered using methods like MP2 or CCSD(T) to achieve quantitative accuracy for properties like interaction energies and conformational barriers [10].

5. Can a method capture both dynamic and static correlation simultaneously? While some methods specialize in one type, it is nearly impossible to completely separate the two effects as they stem from the same physical interaction [10] [11]. High-level methods aim to capture both:

- Multi-Reference Methods: e.g., CASPT2 or MRCI, use an MCSCF reference (for static correlation) and then add perturbative or configurational corrections for dynamic correlation [12].

- Advanced Single-Reference Methods: At high orders, methods like CCSD(T) can incorporate some static effects, but may fail in cases of strong static correlation [10].

Troubleshooting Guides

Problem 1: Poor Description of Bond Dissociation

Symptoms: When calculating a potential energy surface, the energy becomes increasingly unrealistic as a bond is stretched. The dissociation products are incorrectly predicted.

| Suspect Issue | Diagnostic Check | Recommended Solution |

|---|---|---|

| Strong Static Correlation | Perform a stability analysis on the HF wavefunction. Check for (near-)degeneracy of molecular orbitals involved in the bond. | Switch to a multi-configurational method (e.g., MCSCF/CASSCF). Select an active space that includes the bonding/antibonding orbital pair and relevant electrons. |

Experimental Protocol: Diagnosing Static Correlation with CASSCF

- Geometry: Generate molecular structures along the reaction coordinate (e.g., varying bond length).

- Initial Calculation: Run a preliminary HF calculation to obtain molecular orbitals.

- Active Space Selection: This is critical. For a single bond dissociation, a minimal active space of 2 electrons in 2 orbitals (2e,2o) is a starting point.

- CASSCF Calculation: Perform a CASSCF calculation for each geometry, ensuring state-averaging if needed to describe degenerate states.

- Analysis: Plot the CASSCF potential energy curve. It should qualitatively correct the dissociative behavior. For quantitative results, dynamically correlate this wavefunction (e.g., with CASPT2).

Problem 2: Inaccurate Interaction Energies (e.g., Drug-Target Binding)

Symptoms: Binding or interaction energies are significantly over- or under-estimated, even after correcting for basis set superposition error (BSSE).

| Suspect Issue | Diagnostic Check | Recommended Solution |

|---|---|---|

| Insufficient Dynamic Correlation | Compare the HF interaction energy with a higher-level method (e.g., MP2 or CCSD(T)) in a moderate basis set. A large discrepancy indicates strong dynamic correlation effects. | Use a method that accounts for dynamic correlation: MP2 (good for dispersion), CCSD(T) ("gold standard"), or DFT with empirical dispersion for larger systems. Ensure you use a sufficiently large basis set. |

Experimental Protocol: Accurate Binding Energy Calculation using CCSD(T)

- System Preparation: Generate optimized geometries for the drug, target, and the drug-target complex.

- Basis Set Selection: Choose a correlation-consistent basis set (e.g., cc-pVDZ, aug-cc-pVDZ for weak interactions).

- Single-Point Energy Calculations:

- Perform a HF calculation as a baseline.

- Perform a CCSD(T) calculation at the same geometry and basis set.

- BSSE Correction: Perform a counterpoise correction to account for basis set superposition error.

- Energy Calculation: Binding Energy = E(complex) - E(drug) - E(target), using CCSD(T) energies and BSSE correction.

Problem 3: Unphysical Charge or Spin Densities in Diradicals/Metal Complexes

Symptoms: Computed spin densities are delocalized incorrectly, or charge distributions do not match experimental evidence.

| Suspect Issue | Diagnostic Check | Recommended Solution |

|---|---|---|

| Static Correlation & Symmetry Breaking | Check for spatial or spin symmetry breaking in the HF solution (e.g., an unrestricted HF solution lower in energy than restricted). Examine the natural orbital occupation numbers from a correlated calculation; values significantly different from 2 or 0 indicate static correlation. | Use a multi-reference method (MCSCF) that can correctly describe the multi-configurational nature of the wavefunction. Ensure the active space is large enough to capture all essential correlation effects. |

Key Data Tables

Table 1: Comparison of Dynamic vs. Static Correlation

| Feature | Dynamic Correlation | Static Correlation |

|---|---|---|

| Physical Origin | Instantaneous Coulomb repulsion between electrons [10] [11] | Inability of a single determinant to describe (near-)degenerate states [10] [11] |

| Dominant in... | Closed-shell molecules near equilibrium geometry [10] | Bond dissociation, diradicals, transition metal complexes [10] [12] |

| Typical Wavefunction | Many determinants, each with small weight (e.g., CISD, CCSD) [10] | Few determinants, each with large weight (e.g., MCSCF) [10] |

| Primary Methods | MP2, CCSD(T), DFT [10] [11] | MCSCF, CASSCF [10] [12] |

| Impact on Energy | Quantitative correction [10] | Qualitative and quantitative correction [10] |

Table 2: Method Selection Guide for Correlation Treatment

| Method Category | Examples | Best for... | Key Limitations |

|---|---|---|---|

| Static (Non-dynamic) | MCSCF, CASSCF | Bond breaking, diradicals, multi-configurational states [12] | Choice of active space is critical and non-trivial; misses dynamic correlation [12] |

| Dynamic | MP2, CCSD(T), DFT | Closed-shell systems, dispersion interactions, quantitative energetics [10] | CCSD(T) is computationally expensive; MP2 can be poor for some systems; DFT's accuracy depends on functional [10] |

| Combined | CASPT2, MRCI | Systems requiring both static and dynamic correlation (e.g., spectroscopy) [12] | Computationally very demanding; complexity in setup [12] |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Electronic Structure Studies |

|---|---|

| Basis Sets | Sets of mathematical functions (e.g., Gaussian-type orbitals) used to construct molecular orbitals. The size and quality (e.g., cc-pVDZ, aug-cc-pVQZ) critically determine the accuracy of the calculation [12]. |

| Pseudopotentials | Effective potentials used to replace the core electrons of atoms, significantly reducing computational cost for heavier elements while maintaining accuracy for valence electron properties. |

| Active Space (in MCSCF) | The selection of which electrons and orbitals to include in the multi-configurational treatment. This is the central "reagent" for tackling static correlation and requires careful chemical insight [12]. |

| Quantum Chemistry Software | Platforms (e.g., Gaussian, GAMESS, ORCA, Molpro) that implement the algorithms for solving the electronic Schrödinger equation using various methods and basis sets. |

Frequently Asked Questions (FAQs)

1. Why is IVIVE important in modern drug development? IVIVE is crucial because it uses in vitro data to predict in vivo outcomes, which helps streamline drug discovery, reduce development timelines by 30-50%, and lower preclinical testing costs. It supports the 3Rs principle (Replacement, Reduction, and Refinement) in toxicology by minimizing reliance on animal studies and enhances risk assessment for clinical progression [13] [14].

2. What are the main challenges associated with IVIVE predictions? A primary challenge is the systematic underestimation of in vivo clearance, often by a 3- to 10-fold factor. Furthermore, translating subtle, toxicologically relevant signals from in vitro systems and accurately predicting outcomes for diverse drug parameter spaces remain significant hurdles [14] [13] [1].

3. When should I use static versus dynamic IVIVE models? The choice depends on the context and required precision. Static models are simpler and use fixed input parameters (e.g., maximum inhibitor concentration), making them suitable for initial screening and rank-ordering compounds. However, they are not equivalent to dynamic models for quantitative predictions. Dynamic models (Physiologically Based Pharmacokinetic or PBPK) use time-variable concentrations and are essential for capturing inter-individual variability, investigating complex scenarios like multiple perpetrators, and providing accurate predictions for vulnerable patient populations or regulatory submissions [1].

4. Which types of compounds are most suitable for IVIVE studies? Compounds are most suitable when the liver is the primary clearance pathway, and their metabolism is minimally affected by transporter proteins. Ideal compounds have straightforward metabolic profiles, well-documented human pharmacokinetic (PK) data for validation, and demonstrate good stability and solubility for reliable testing [14].

Troubleshooting Guides

Problem 1: Systematic Underprediction of Hepatic Clearance

Issue: IVIVE predictions consistently and significantly underestimate the actual in vivo hepatic clearance value [14] [15].

Solution: Optimize the in vitro experimental system and refinement of calculation methods.

- Action 1: Refine the Metabolic Environment: Modify the in vitro system to better mimic the in vivo cytosol. Using a HEPES-KOH buffer system has been shown to improve performance [15].

- Action 2: Correct the Calculation Method: Incorporate the apparent volume of distribution (Vd) to refine the estimation of intrinsic hepatic clearance (CLint) derived from the Michaelis-Menten equation. This addresses a previously overlooked source of error [15].

- Action 3: Apply a Correction Factor: Develop laboratory-specific linear regression correction equations based on a set of commercial compounds with established human PK data [14].

Problem 2: Failure to Capture Critical In Vivo Toxicity Pathways

Issue: The in vitro to in vivo translation misses subtle but toxicologically critical signals, such as the expression of Cytochrome P450 enzymes [13].

Solution: Integrate advanced AI frameworks to enhance the biological relevance of predictions.

- Action 1: Employ a Generative AI Framework: Implement a tool like AIVIVE, which uses a GAN (Generative Adversarial Network) as a base translator. This generator is trained on paired in vitro and in vivo transcriptomic data (e.g., from Open TG-GATEs) to create synthetic in vivo profiles [13].

- Action 2: Apply Local Optimization: Use local optimizers (AI models) to post-process the GAN output, specifically refining the predictions for low-signal, biologically relevant gene modules that are often missed. This enhances the accuracy of key pathways like bile secretion and steroid hormone biosynthesis [13].

Problem 3: Discrepancies Between Static and Dynamic Model Predictions

Issue: Static and dynamic model predictions show significant discrepancies, leading to potential patient or sponsor risk in evaluating drug-drug interactions (DDIs) [1].

Solution: Understand the limitations of static models and use dynamic models for quantitative predictions.

- Action 1: Identify High-Risk Parameter Spaces: Be cautious when the drug parameter spaces (for both victim and perpetrator) are at the edges of existing drug parameter space. Static models are most likely to fail here [1].

- Action 2: Use Dynamic Models for Vulnerable Populations: For compounds intended for use in populations with known variability (e.g., polymorphisms, organ dysfunction), rely on dynamic PBPK models. Static models show the highest rate of discrepancy (>1.25-fold) when simulating "vulnerable patient" representatives [1].

- Action 3: Validate Driver Concentrations: If using a static model, understand that using the average steady-state concentration (Cavg,ss) as the inhibitor driver can lead to a high rate of discrepancy (over 85% in some cases) compared to dynamic models. The maximum concentration (Cmax) is a more conservative choice [1].

Table 1: Comparison of Static vs. Dynamic IVIVE Models for DDI Prediction

| Feature | Static Model | Dynamic (PBPK) Model |

|---|---|---|

| Model Complexity | Simple equations | Complex, physiologically realistic |

| Input Concentration | Fixed (e.g., Cmax or Cavg,ss) | Time-variable |

| Inter-individual Variability | Not incorporated | Incorporated via virtual populations |

| Quantitative Prediction | Not equivalent to dynamic models; high discrepancy rates [1] | High-fidelity; regulatory standard for quantitative predictions [1] |

| Best Use Case | Initial screening, rank-ordering, flagging potential risks [1] [14] | Final quantitative risk assessment, special populations, complex DDI scenarios [1] |

| Reported Discrepancy (IMDR*) | Up to 85.9% for 'population' and 37.8% for 'vulnerable patient' using Cavg,ss [1] | Used as the reference for calculating discrepancy [1] |

IMDR (Inter-Model Discrepancy Ratio) = AUCrdynamic / AUCrstatic

Table 2: Performance of Optimized IVIVE Methods

| Method | Reported Underprediction Factor | Key Improvement |

|---|---|---|

| Standard IVIVE | 3- to 10-fold [14] | Baseline |

| Well-Stirred Model (Optimized) | 1.25-fold (hepatocyte assay) [14] | Advanced assay standardization and data analysis |

| Refined Hepatic Clearance Model | Reduced from 28.1 to ~70 mL/min/kg (vs. in vivo 73.9 mL/min/kg) [15] | Incorporation of Vd and a more cytosolic-like in vitro environment |

Experimental Protocols

Protocol 1: AIVIVE Framework for Toxicogenomics Translation

This protocol uses AI to translate in vitro transcriptomic data to in vivo-like profiles [13].

1. Data Sourcing and Preprocessing:

- Source: Obtain rat liver in vitro and in vivo (single-dose) transcriptomic profiles from the Open TG-GATEs database.

- Normalization: Normalize the transcriptomic files using the robust multi-array average (RMA) method.

- Filtering: Annotate probes and filter for a toxicologically relevant gene set, such as the rat S1500+.

- Pairing: For each compound, create pairwise samples by matching in vitro and in vivo profiles based on compound, dose, and time. Split the data by compound into training (80%) and test (20%) sets.

2. AIVIVE Model Training:

- Architecture: Implement a GAN-based translator. The generator (a fully connected neural network with multiple hidden layers and LeakyReLU activation) takes the in vitro profile, experimental condition labels, and noise as input to generate a synthetic in vivo profile.

- Training: Train the generator-discriminator pair iteratively. Use a cycle-consistency loss to ensure the generated profiles retain biological relevance.

3. Local Optimization:

- Refinement: Apply multiple local optimizers (AI models) to the GAN's output. These optimizers specifically refine the values of low-signal, biologically relevant gene modules that the GAN might have missed.

4. Model Evaluation:

- Metrics: Evaluate the synthetic in vivo profiles using cosine similarity, root mean squared error (RMSE), and mean absolute percentage error (MAPE).

- Biological Validation: Compare differentially expressed genes (DEGs), enriched pathways (e.g., bile secretion, chemical carcinogenesis), and performance in downstream tasks like necrosis classification.

AIVIVE Workflow Diagram

Protocol 2: Optimizing Hepatic Clearance Prediction

This protocol details a method to reduce the systematic underprediction of hepatic clearance [15].

1. Experimental Setup:

- Target Drug: Select a model compound like Metoprolol.

- In Vitro Assay: Perform a microsomal stability assay. To better simulate in vivo conditions, provide a more cytosolic-like environment (e.g., using a HEPES-KOH buffer system).

- Ex Situ Model: Conduct experiments using an Isolated Perfused Rat Liver (IPRL) system.

- In Vivo Reference: Perform a pharmacokinetic study in rats to establish the true in vivo hepatic clearance value.

2. Data Integration and Model Refinement:

- Calculate Intrinsic Clearance: Determine the in vitro intrinsic clearance (CLint, in vitro) from the microsomal assay using the Michaelis-Menten equation.

- Refine with Volume of Distribution: Incorporate the apparent volume of distribution (Vd) to correct the CLint, in vitro calculation, addressing a key source of error.

- Select Hepatic Clearance Model: Use the Well-Stirred Model (WSM) and integrate findings from the IPRL and PK studies to identify the optimal liver drug metabolism-driving concentrations for the extrapolation.

3. IVIVE Calculation:

- Apply the refined CLint and optimized model to predict the in vivo hepatic clearance. Compare the predicted value to the measured in vivo result to validate the improvement.

Hepatic Clearance Optimization

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Materials for IVIVE Experiments

| Reagent / Material | Function in IVIVE Studies |

|---|---|

| Primary Hepatocytes (Human/Rat) | Gold-standard in vitro system for metabolism studies; used to measure intrinsic clearance and generate transcriptomic data [13] [14]. |

| Liver Microsomes | Subcellular fraction containing CYP450 enzymes; used for high-throughput metabolic stability assays [14] [15]. |

| HEPES-KOH Buffer | Buffer system used to create a more physiologically relevant, cytosolic-like environment in microsomal assays, improving prediction accuracy [15]. |

| Open TG-GATEs Database | A comprehensive toxicogenomics database providing paired in vitro and in vivo transcriptomic and pathological data for model training and validation [13]. |

| S1500+ Gene Set | A curated set of genes relevant to toxicity pathways; used to filter transcriptomic data, reducing noise and focusing analysis [13]. |

| Well-Stirred Model | The simplest and most widely used mathematical model for predicting hepatic clearance from in vitro data [14] [15]. |

This technical support center provides troubleshooting guides and FAQs to help researchers, scientists, and drug development professionals navigate key FDA and ICH guidelines. The content is framed within the context of research on dynamic versus static correlation differentiation methods, which are crucial for establishing robust and predictive models in pharmaceutical development.

The International Council for Harmonisation (ICH) brings together regulators and the pharmaceutical industry to harmonize global drug development through consensus-based guidelines [16]. These guidelines provide a critical framework for the application of various scientific models—from nonclinical safety prediction to clinical trial design—ensuring that methodologies are sound, results are reliable, and patient safety is protected.

For research focusing on dynamic versus static correlation differentiation methods, understanding this regulatory landscape is paramount. Your models, which may differentiate between time-dependent (dynamic) and point-in-time (static) relationships in data, must be developed and validated within this structured yet flexible framework to gain regulatory acceptance.

The table below summarizes the core ICH guidelines relevant to the development and application of predictive models and correlation methods in drug development.

| ICH Guideline | Focus Area | Key Principles & Relevance to Model Applications | Status & Date |

|---|---|---|---|

| E6(R3) - Good Clinical Practice [16] | Clinical Trial Design & Conduct | Promotes Quality by Design (QbD), Risk-Based Quality Management, and flexibility for innovative designs and technologies. Directly supports the use of novel endpoints derived from correlation models. | Final (September 2025) |

| M3(R2) - Nonclinical Safety Studies [17] [18] | Nonclinical to Clinical Transition | Defines nonclinical safety study requirements to support human clinical trials. Models that correlate nonclinical data with potential human outcomes must adhere to these standards. | Final (January 2010); Q&A (March 2013) [19] |

| M7(R2) - Assessment of DNA Reactive Impurities [20] | Impurity Risk Assessment | Provides a framework for (Q)SAR models and other methods to assess and control mutagenic impurities. Critical for applying predictive computational models in safety qualification. | Final (July 2023) |

| E20 - Adaptive Designs for Clinical Trials [21] | Adaptive Clinical Trial Designs | Outlines principles for trials that modify design based on interim data. Relies heavily on statistical models and pre-specified rules for dynamic adjustments, directly involving correlation methodologies. | Draft (September 2025) |

Frequently Asked Questions (FAQs)

How does ICH E6(R3) support the use of novel correlation methods in clinical trials?

ICH E6(R3) modernizes the clinical trial framework to be more flexible and proportionate, which is ideal for integrating novel correlation methods [16] [22].

- Principle of Flexibility and Innovation: The guideline "incorporates flexible, risk-based approaches and embraces innovations in trial design, conduct, and technology" [16]. This means that if your research on dynamic correlation methods leads to a novel biomarker or a new digital endpoint, the regulatory pathway for its use in a trial is more clearly defined.

- Data Integrity and Governance: E6(R3) emphasizes stronger expectations for data governance, including "audit trails, metadata, traceability, and secure system validation" [22]. When generating and processing data for your models, you must ensure that the computerised systems used are validated and that the data lifecycle is fully traceable to withstand regulatory scrutiny.

- Risk-Based Approach: The guideline requires that oversight be focused on factors "Critical to Quality" (CtQ) [22]. When proposing a new model, you should perform a risk assessment that identifies how the model and its output impact participant safety and trial result reliability. Your monitoring plan should then be tailored to these risks.

What are the key considerations for validating predictive models under ICH M7(R2)?

ICH M7(R2) focuses on using models, primarily (Q)SAR systems, to predict the mutagenic potential of impurities without needing extensive laboratory testing for every compound [20].

- Model Validation and Applicability: The guideline aims to "harmonize the considerations for assessment and control of DNA reactive (mutagenic) impurities" [20]. A key troubleshooting point is ensuring the (Q)SAR model you are using is scientifically valid and applicable to the chemical space of your pharmaceutical compound. Using two complementary methodologies (one statistical and one expert rule-based) is a common industry standard to increase predictivity.

- Documentation and Justification: You must thoroughly document the models used, all input parameters, and the rationale for accepting or overriding any predictions. This creates a transparent and defensible scientific record for regulatory review.

Our research involves dynamic PK/PD modeling to transition from nonclinical to clinical studies. How does ICH M3(R2) guide this?

ICH M3(R2) provides the framework for determining the scope and duration of nonclinical safety studies needed to support human clinical trials [17] [18]. PK/PD models are central to this transition.

- Dose Selection Justification: The guideline emphasizes the importance of adequate nonclinical data to support the safe starting dose and dose escalation in humans [18]. Your dynamic PK/PD models, which correlate exposure and response over time across species, must be built upon robust nonclinical data from pharmacology, pharmacokinetic, and toxicology studies conducted according to M3(R2) standards.

- Troubleshooting Study Design: A common challenge is designing nonclinical studies that generate data suitable for building predictive models. The M3(R2) Q&A document is a valuable resource for clarifying complex issues in implementation [19]. Ensure your toxicology studies include sufficient time points and dose levels to capture the dynamic relationships essential for your models.

We are designing an adaptive trial using a biomarker model. What principles from ICH E20 must we follow?

ICH E20 provides principles for the use of adaptive designs in confirmatory clinical trials [21]. These designs often rely on models that correlate biomarker data with clinical outcomes.

- Pre-specification and Control of Bias: The draft guidance emphasizes that "principles that are critical for ensuring clinical trials produce reliable and interpretable results" [21]. A major troubleshooting area is the pre-specification of the adaptive plan. The model used to inform adaptations (e.g., a correlation model between biomarker response and patient outcome) and all decision rules must be documented in the trial protocol and statistical analysis plan before data are unblinded for the interim analysis. This is vital to protect trial integrity and minimize operational bias.

- Statistical Rigor: The models and algorithms driving the adaptation must be statistically sound. The type I error rate (false positive) must be controlled, and the analysis plan must account for the adaptive nature of the design.

Experimental Protocol: A Risk-Based Approach to Model Application

This protocol outlines a general methodology for developing and applying a predictive model within a clinical trial, aligning with ICH E6(R3) and E20 principles.

Objective: To develop and implement a model correlating a dynamic biomarker (e.g., daily digital sensor output) with a static clinical endpoint (e.g., 6-month survival) to guide patient enrichment in an adaptive trial.

Step 1: Model Building & Pre-specification (Pre-Trial)

- Gather historical data from previous studies (clinical and nonclinical).

- Develop the initial correlation model, clearly defining its mathematical form and inputs.

- Pre-specify the model's intended use in the trial protocol. Define the performance thresholds the model must meet (e.g., a specific correlation coefficient or predictive accuracy in a validation set) to be triggered for the adaptation.

Step 2: System & Process Validation

- Computer System Validation: If the model is implemented in software, follow FDA expectations for system validation, including audit trails and data security, as underscored in E6(R3) [22].

- Procedure Validation: Develop and train all research staff on the Standard Operating Procedure (SOP) for using the model, including data input formats and interpretation of outputs.

Step 3: In-Trial Execution & Monitoring

- Data Collection: Collect the dynamic biomarker data according to the pre-defined methods.

- Interim Analysis: At the pre-specified interim analysis point, an independent statistical center executes the pre-specified model on the unblinded data.

- Adaptation Decision: The model's output is used by the pre-chartered data monitoring committee to make a recommendation (e.g., to enrich the trial population based on biomarker status).

Step 4: Documentation & Reporting

- Maintain meticulous documentation of all steps, including raw data, model code/software, interim analysis results, and the rationale for all decisions. This creates a defensible audit trail for regulatory inspection.

The Scientist's Toolkit: Research Reagent Solutions

The table below lists key materials and tools essential for working within the regulatory framework for model applications.

| Tool / Reagent | Function in Regulatory Science & Model Application |

|---|---|

| Validated (Q)SAR Software | Computational tool to predict the mutagenic potential of impurities as per ICH M7(R2); requires validation to ensure predictions are reliable [20]. |

| PK/PD Modeling Software | Platform for building quantitative models that correlate pharmacokinetic (exposure) data with pharmacodynamic (response) data across time, critical for M3(R2) transitions and E20 adaptations. |

| Clinical Trial Management System (CTMS) | Centralized system for managing trial operations; must be validated to ensure data integrity as required by ICH E6(R3) for audit trails and data security [22]. |

| Electronic Data Capture (EDC) System | System for collecting clinical trial data; requires validation to ensure the accuracy and reliability of data used in dynamic models and endpoint assessments [22]. |

| Standardized Data Formats (e.g., CDISC) | Provides a common language for data submission; using standardized formats is a regulatory expectation and is critical for building models that integrate data from multiple sources. |

Workflow Visualization: Risk-Based Model Application Pathway

The diagram below visualizes the logical workflow for applying a predictive model within a clinical trial, incorporating key risk-based and quality-focused principles from ICH E6(R3).

Logical Pathway for Nonclinical to Clinical Model Translation

This diagram outlines the key stages and decision points in applying nonclinical models to inform clinical trial design, as guided by ICH M3(R2) and related guidelines.

From Theory to Practice: Implementation in Drug Discovery and Development

Troubleshooting Guides

Guide 1: Addressing Poor Model Performance in Static vs. Dynamic Correlation Scenarios

Problem: Your DDI prediction model shows high accuracy for competitive inhibition but fails to generalize for mechanism-based inhibition scenarios.

Explanation: This often stems from treating all enzyme interactions with static correlation models, ignoring dynamic correlation patterns where relationships between variables change based on unobserved physiological states [23]. Competitive inhibition relies on concentration-dependent binding affinity, while mechanism-based inhibition involves irreversible enzyme complex formation [24] [25].

Solution: Implement a dynamic correlation analysis (DCA) to identify latent factors governing correlation changes between drug pairs.

- Step 1: Calculate Liquid Association Coefficient (LAC) scores for all drug pairs to identify those with dynamic correlation patterns [23].

- Step 2: Apply the DCA method to extract Dynamic Components (DCs) that represent underlying physiological states affecting correlation [23].

- Step 3: Integrate DCs as latent variables into your ensemble machine learning model, such as those used in DDI-CYP frameworks [26] [27].

Verification: Retrain your model with these dynamic components. Performance on mechanism-based inhibition test sets should improve by >15% accuracy [23] [28].

Guide 2: Handling Time-Discrepant Results in Induction vs. Inhibition Studies

Problem: Experimental results show delayed onset of enzyme induction effects compared to rapid inhibition, causing mismatches with model predictions.

Explanation: This discrepancy arises from fundamental mechanistic differences. Competitive inhibition occurs rapidly (hours) as it depends on perpetrator drug concentration, while induction requires new enzyme synthesis, causing a delayed effect (days to weeks) [29] [30]. Static models often fail to capture this temporal dimension.

Solution: Incorporate temporal parameters into your DDI prediction framework.

- For Induction Modeling:

- Factor in the time required for gene transcription and translation (typically 24-72 hours).

- Use time-dependent parameters that reflect enzyme synthesis rates (t½ ≈ 36 hours for CYP450) [25].

- For Inhibition Modeling:

Verification: Plot observed vs. predicted concentration-time profiles for known inducers (e.g., rifampin) and inhibitors (e.g., ketoconazole). The mean absolute error should decrease by >20% across the time series.

Frequently Asked Questions (FAQs)

FAQ 1: Why does our ensemble model perform well on CYP3A4 substrates but poorly on CYP2C9, even though both use similar machine learning architectures?

This indicates a limitation in your model's applicability domain and feature representation. CYP isoforms have distinct active site geometries and chemical preferences [24] [29]. The model may be overfitting to features predominant in CYP3A4 substrates. Retrain with isoform-specific features and validate the model's applicability domain by ensuring inference sets are structurally similar to training data [26] [27].

FAQ 2: How can we differentiate between competitive and mechanism-based inhibition using in silico methods?

The key distinction lies in reversibility and time dependency. Use these computational indicators:

- Competitive Inhibition: High predicted binding affinity to the enzyme's active site, reversible kinetics in molecular dynamics simulations, and concentration-dependent effects [24] [30].

- Mechanism-Based Inhibition: Prediction of metabolite formation that can covalently modify the enzyme, time-dependent inhibition (TDI) patterns in simulated kinetics, and irreversible binding confirmed by docking studies [24] [25].

FAQ 3: What are the critical differences between static and dynamic correlation methods in DDI prediction?

Static correlation assumes consistent relationships between molecular descriptors and DDI outcomes, while dynamic correlation accounts for how these relationships change with latent biological variables [23] [31].

Table: Static vs. Dynamic Correlation Comparison

| Feature | Static Correlation Methods | Dynamic Correlation Methods |

|---|---|---|

| Underlying Assumption | Fixed relationships between variables | Relationships change with latent states (e.g., Z) [23] |

| Computational Load | Lower | Higher (requires scanning for latent factors) |

| Interpretability | More straightforward | More complex but biologically richer [23] |

| Best Suited For | Competitive inhibition, simple kinetics | Mechanism-based inhibition, complex temporal patterns |

FAQ 4: Our deep learning model (DDINet) achieves high accuracy but lacks explainability for clinical translation. How can we improve interpretability?

Integrate an Adverse Outcome Pathway (AOP) framework alongside your deep learning model. This provides mechanistic explainability by visualizing each predicted P450 interaction, from molecular initiating event through to the clinical outcome [27]. Additionally, use attention heatmaps to identify which chemical features the model prioritizes, as demonstrated in DDINet implementations [28].

Table: Key Pharmacokinetic Parameters for DDI Prediction

| Parameter | Competitive Inhibition | Mechanism-Based Inhibition | Enzyme Induction |

|---|---|---|---|

| Onset Time | Hours (follows perpetrator drug t½) [25] | May be delayed if metabolite-mediated [25] | Days to weeks (requires new enzyme synthesis) [30] |

| Offset Time | 2-4 days (dependent on drug t½) [25] | 3-5 days (dependent on enzyme regeneration t½) [25] | Weeks (dependent on enzyme degradation t½) |

| Effect on Km | Increase (decreased affinity) [24] | Irreversible reduction in active enzyme | Increased enzyme pool (effectively decreases [S]/Km) |

| Effect on CL |

Decrease (CL | Significant decrease | Increase |

| Typical AUC Change | 2-5 fold increase [29] | 5-20+ fold increase | Can decrease AUC by >50% |

Table: Performance Metrics of Advanced DDI Prediction Models

| Model Name/Type | Reported Accuracy | Strengths | Limitations |

|---|---|---|---|

| DDI-CYP (Ensemble) | 85% [26] [27] | Incorporates P450 interaction predictions; improved explainability with AOP | Performance degrades with novel structures outside applicability domain |

| DDINet | 95.42% [28] | High accuracy; mechanism-wise prediction (absorption, metabolism, etc.) | Complex architecture; requires significant computational resources |

| Liquid Association (LA) | N/A (screening method) | Detects dynamic correlations governed by latent factors [23] | Computationally intensive; interpretation can be challenging |

| R-xDH7-SCC15 | WTMAD: 2.05 kcal/mol [31] | Excellent for electronic structure properties related to metabolism | Specialized for static/dynamic electronic correlation, not clinical DDI directly |

Experimental Protocols

Protocol 1: Differentiating Competitive vs. Mechanism-Based Inhibition

Objective: Determine the inhibition mechanism of a new chemical entity (NCE) against CYP3A4.

Principle: Competitive inhibition is reversible and immediate, while mechanism-based inhibition is time-dependent and irreversible [24] [25].

Materials:

- Human liver microsomes (HLM) or recombinant CYP3A4

- Test compound (NCE)

- Specific CYP3A4 substrate (e.g., midazolam)

- NADPH regenerating system

- LC-MS/MS for metabolite quantification

Procedure:

- Pre-incubation Variation: Set up two sets of incubations.

- Set A: Pre-incubate HLM + NCE + NADPH for 30 minutes.

- Set B: No pre-incubation (HLM + NCE mixed immediately with substrate and NADPH).

- Reaction: After pre-incubation (Set A) or immediately (Set B), add substrate (midazolam) to initiate the reaction for a fixed time (e.g., 10 minutes).

- Termination & Analysis: Stop the reaction and quantify the metabolite (1'-hydroxymidazolam) using LC-MS/MS.

- Data Interpretation: A significantly greater reduction in metabolite formation in Set A (with pre-incubation) compared to Set B indicates time-dependent, mechanism-based inhibition. Similar inhibition in both sets suggests reversible (competitive) inhibition [25].

Protocol 2: Implementing a Dynamic Correlation Analysis (DCA) for DDI Prediction

Objective: Identify latent dynamic correlation signals in transcriptomic data that affect drug-metabolizing enzyme interactions.

Principle: The DCA method identifies Liquid Association Coefficients (LAC) to find gene pairs whose correlations are governed by unobserved variables (Z) [23].

Materials:

- Gene expression matrix (e.g., from a panel of human liver samples)

- DCA computational algorithm [23]

Procedure:

- Data Preprocessing: Standardize the gene expression matrix so all variables have a mean of zero and a standard deviation of one [23].

- LAC Calculation: For all pairs of genes (X, Y) in your matrix, compute the LAC score. A high LAC suggests their correlation is dynamically regulated by a hidden factor.

- Seed Selection: Rank gene pairs by LAC scores and select the top pairs most likely to be dynamically correlated.

- Extract Dynamic Components (DCs): Apply the DCA algorithm to the selected seed pairs to extract the latent Z variables (DCs) that govern the dynamic correlations.

- Integration with DDI Model: Use the extracted DCs as additional input features in your machine learning model (e.g., the DDI-CYP ensemble) to account for dynamic biological states [23] [27].

Signaling Pathways & Workflows

DDI Mechanism Workflow

DDI Prediction with Dynamic Correlation

Research Reagent Solutions

Table: Essential Computational Tools for Metabolic DDI Prediction

| Tool/Reagent | Function/Description | Application in DDI Research |

|---|---|---|

| DDI-CYP Framework | An ensemble machine learning model that uses P450 interaction predictions and molecular structures [26] [27]. | Predicts DDIs with ~85% accuracy; provides explainable predictions via Adverse Outcome Pathways. |

| DDINet Architecture | A deep sequential learning model (LSTM, GRU, Attention) for mechanism-wise DDI prediction [28]. | Achieves high accuracy (95.42%); classifies DDIs by mechanisms like metabolism and excretion. |

| Liquid Association Coefficient (LAC) | A metric to identify pairs of variables whose correlation is dynamically regulated [23]. | Screens gene/drug pairs to find those most likely influenced by hidden biological states. |

| Dynamic Correlation Analysis (DCA) | A method to extract latent signals (Dynamic Components) that govern dynamic correlations [23]. | Uncovers unobserved physiological variables (Z) that affect drug interaction outcomes. |

| Adverse Outcome Pathway (AOP) | A framework for visualizing sequential events from molecular initiation to adverse outcome [27]. | Increases model explainability by mapping predicted P450 interactions to clinical effects. |

| Molecular Fingerprints (FCFP6, ECFP6) | Numerical representations of molecular structure and properties [27]. | Used as input features for machine learning models to represent drug molecules. |

Frequently Asked Questions (FAQs)

Fundamental Concepts

Q1: What is the primary goal of ADMET prediction in lead optimization? The primary goal is to turn a biologically active but flawed "hit" compound into a viable drug candidate by systematically improving its properties. This involves enhancing potency and selectivity while fixing pharmacokinetic or safety problems [32]. The process aims to balance multiple parameters—such as solubility, metabolic stability, and reduced toxicity—simultaneously, as improving one property can often negatively impact another [32].

Q2: How do 'dynamic' and 'static' modeling approaches differ in ADMET prediction? This distinction generally applies to the methods used for analysis and correlation of data. In a broader computational context, static methods are insensitive to the temporal order of data points (e.g., classical QSAR, random forest models). In contrast, dynamic methods are sensitive to temporal sequence and can model causal relationships or time-dependent phenomena [33] [34]. For ADMET, this translates to using dynamic methods like physiologically based pharmacokinetic (PBPK) models that simulate drug disposition over time, versus static models that might predict a single, fixed outcome like a binary classification of solubility [35] [36].

Q3: What are the most common reasons for late-stage failure that ADMET prediction can mitigate? Late-stage attrition is often attributed to suboptimal pharmacokinetics (PK) and unforeseen toxicity [37]. Poor oral bioavailability (influenced by absorption and metabolism) and off-target effects (e.g., interaction with the hERG channel, which can affect heart function) are major contributors [32] [38]. Machine learning (ML)-driven ADMET prediction helps de-risk projects by identifying these issues early, before significant investment is made [37] [39].

Technical and Methodological Questions

Q4: What types of machine learning models are most effective for ADMET prediction? No single algorithm is universally best, but state-of-the-art methodologies include [37]:

- Graph Neural Networks (GNNs): Directly model molecular structure as graphs, effectively capturing structure-property relationships.

- Ensemble Learning: Combines multiple models to improve robustness and predictive accuracy.

- Multitask Models: Trained to predict several ADMET endpoints simultaneously, which can enhance generalizability by learning shared representations. While deep learning architectures are powerful, simpler methods like decision tree ensembles (e.g., Random Forest) can also perform very well, with data quality and molecular representation often mattering more than the algorithm itself [37] [38].

Q5: How can I define the applicability domain of my predictive model? A model's applicability domain defines the chemical space where its predictions are reliable. It can be assessed by comparing the similarity between the training data and the new compounds being predicted [38]. Methods to define this domain often use molecular descriptors or fingerprints. The OpenADMET initiative is generating high-quality, consistent datasets to help the community systematically develop and test such methods [38].

Q6: When should I use a global model versus a local (series-specific) model? The choice depends on data availability and project stage [38]:

- Global Models: Trained on diverse chemical structures. They are best for early-stage screening of large virtual libraries or when working on a new chemical series with limited internal data.

- Local Models: Trained on analogs from a specific chemical series. They can provide more accurate predictions for lead optimization within that series but require sufficient synthesized compounds for training. Systematic comparisons using high-quality data are ongoing to better guide this choice [38].

Troubleshooting Guides

Poor Model Performance and Generalization

Problem: My ADMET prediction model performs well on training data but poorly on new compound series.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Data Quality Issues | Audit data sources for consistency. Check for high experimental variability between batches or sources. | Prioritize internal, high-quality data. Use datasets from initiatives like OpenADMET, which are generated consistently [38]. |

| Incorrect Applicability Domain | Analyze the chemical similarity between your training set and the new compounds. | Retrain the model with more relevant data, or use a local model specific to your chemical series [38]. |

| Overfitting | Check for a large performance gap between training and test set accuracy. | Simplify the model architecture, apply stronger regularization, or use ensemble methods to improve generalizability [37]. |

Inconsistent or Uninterpretable Results from Complex Models

Problem: My complex ML model (e.g., deep neural network) provides accurate predictions but is a "black box," making it hard to gain scientific insight or gain regulatory acceptance.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inherent Model Complexity | The model lacks transparency (e.g., difficulty understanding which structural features drove a prediction). | Implement Explainable AI (XAI) techniques to interpret predictions [37]. Alternatively, use hybrid approaches that combine established mechanistic models (e.g., PBPK) with interpretable ML components, making results more scientifically plausible [39]. |

Integrating Multimodal Data

Problem: I have various data types (e.g., in vitro assay results, structural biology data, omics data) but struggle to integrate them effectively into my predictive models.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Data Silos and Formatting | Data exists in disparate, non-standardized formats. | Develop a unified data pipeline. Adopt multimodal data integration strategies that leverage modern ML frameworks to merge different data types, enhancing model robustness and clinical relevance [37]. Initiatives like OpenADMET combine high-throughput experimentation, structural biology, and ML, providing a blueprint for integration [38]. |

Quantitative Data for Method Comparison

Table 1: Key ADMET Parameters and Optimization Targets

Table summarizing critical properties to predict and optimize during lead optimization.

| Property Category | Specific Parameters | Optimal Ranges / Targets | Common Prediction Methods |

|---|---|---|---|

| Absorption | Permeability (Caco-2, P-gp substrate), Solubility | High permeability, low efflux by P-gp, good solubility [37] | ML classifiers & regressors, PBPK [37] [39] |

| Distribution | Volume of Distribution (Vd), Plasma Protein Binding | Suitable Vd for target tissue, moderate to high PPB for long half-life [37] | QSAR, In vitro-in vivo extrapolation (IVIVE) [37] |

| Metabolism | Metabolic Stability (e.g., Clint), CYP Inhibition/Induction | Low clearance, minimal CYP inhibition to avoid drug-drug interactions [32] [37] | CYP activity assays, ML on structural alerts, QSAR |

| Excretion | Renal/Biliary Clearance | Balanced clearance pathways [37] | Physiologically-based models |

| Toxicity | hERG inhibition, Genotoxicity, Organ-specific toxicity | No activity against hERG; minimal off-target toxicity [32] [38] | In vitro assays (e.g., hERG), ML models, structural alerts |

Table 2: Comparison of Machine Learning Algorithms for ADMET Prediction

Table outlining the performance characteristics of different ML approaches.

| Algorithm Type | Typical Use Case in ADMET | Relative Interpretability | Data Efficiency | Key Advantages |

|---|---|---|---|---|

| Decision Tree Ensembles (RF, XGBoost) | Classification & regression for various endpoints (e.g., solubility, CYP inhibition) | Medium | High | Robust, handles diverse descriptors, good on smaller datasets [38] |

| Graph Neural Networks (GNNs) | Predicting activity directly from molecular structure | Low | Low to Medium | Learns features automatically; no need for manual descriptor calculation [37] |

| Support Vector Machines (SVM) | Classification tasks (e.g., toxic vs. non-toxic) | Low | Medium | Effective in high-dimensional spaces [40] |

| Multitask Learning Networks | Simultaneous prediction of multiple ADMET properties | Low | Medium | Improved data utilization; can enhance accuracy via shared learning [37] |

Experimental Protocols

Protocol: Developing a Machine Learning Model for hERG Inhibition Prediction

Objective: To build a binary classification model that predicts the likelihood of a compound inhibiting the hERG channel.

Workflow Overview:

Materials:

- Dataset: Publicly available hERG inhibition data (e.g., ChEMBL) or internal high-throughput screening data.

- Software: Python/R with cheminformatics libraries (RDKit, OpenBabel).

- ML Libraries: Scikit-learn, XGBoost, Deep Graph Library (for GNNs).

Procedure:

- Data Collection: Curate a dataset of compounds with reliable hERG inhibition labels (e.g., IC50 < 10 µM = active). Note: Be aware of inter-assay variability; data from a single, consistent source is preferable [38].

- Data Curation: Standardize chemical structures (remove salts, neutralize charges), and handle duplicates and inaccurate entries.

- Molecular Featurization: Convert molecules into numerical representations.

- Options: Extended-connectivity fingerprints (ECFPs), molecular descriptors (e.g., molecular weight, logP), or graph representations for GNNs.

- Model Training & Validation:

- Split data into training (~70%), validation (~15%), and hold-out test sets (~15%).

- Train multiple algorithms (e.g., Random Forest, XGBoost, Neural Networks).

- Optimize hyperparameters using the validation set.

- Evaluate final model performance on the hold-out test set using metrics like AUC-ROC, precision, and recall.

- Prospective Testing: Synthesize and test a set of novel compounds not used in training to validate the model's real-world predictive power [38].

Protocol: Implementing a PBPK Model for Human PK Prediction

Objective: To use a physiologically based pharmacokinetic (PBPK) model to simulate the absorption, distribution, and clearance of a lead compound in humans.

Workflow Overview:

Materials:

- Software: Commercial PBPK platform (e.g., GastroPlus, Simcyp) or open-source tool.

- Input Data: In vitro assay results (e.g., solubility, permeability, metabolic stability in human liver microsomes), physicochemical properties (logP, pKa), and protein binding data.

Procedure:

- Gather Input Parameters: Compile all necessary compound-specific physicochemical and in vitro data.

- Build and Verify Model: Populate the PBPK software with the compound parameters and select the appropriate human population model. Verify the model's plausibility.

- Simulate and Analyze: Run simulations to predict human PK profiles, including plasma concentration-time curves, C~max~, AUC, and half-life.

- Refine with Data: As clinical data becomes available (e.g., from Phase I trials), refine the model by adjusting parameters within physiological bounds to improve its predictive accuracy for subsequent simulations [35] [36].

The Scientist's Toolkit: Research Reagent Solutions

| Tool / Resource | Type | Primary Function | Example Use Case |

|---|---|---|---|

| RDKit | Software Library | Cheminformatics and ML | Generating molecular fingerprints and descriptors for QSAR models [40]. |

| SwissADME | Web Tool | ADME Prediction | Rapid, free prediction of key properties like logP, solubility, and CYP inhibition [32]. |

| PBPK Platforms (e.g., Simcyp, GastroPlus) | Software | Mechanistic PK Modeling | Predicting human pharmacokinetics and drug-drug interactions from in vitro data [39] [35]. |

| OpenADMET Data | Data Resource | High-quality experimental data | Training and validating ML models on consistent, reliable datasets [38]. |

| CACO-2 Assay Kit | In Vitro Assay | Measuring Intestinal Permeability | Experimental determination of a compound's absorption potential [37]. |

| hERG Inhibition Assay | In Vitro Assay | Cardiac Safety Screening | Experimentally testing a compound's potential for hERG channel blockade [38]. |

Dose Regimen Selection and First-in-Human (FIH) Dose Prediction

Frequently Asked Questions (FAQs)

FAQ 1: Why is the traditional Maximum Tolerated Dose (MTD) approach no longer sufficient for modern oncology drugs?

The traditional MTD approach, often determined via a '3+3' trial design, focuses primarily on short-term safety and dose-limiting toxicities [41] [42]. While this was suitable for cytotoxic chemotherapies, it is less ideal for targeted therapies and immunotherapies. Studies show that nearly 50% of patients on targeted therapies in late-stage trials require dose reductions, and the FDA has required post-approval dosing re-evaluation for over 50% of recently approved cancer drugs [42]. This is because the MTD approach often selects unnecessarily high doses that increase toxicity without providing additional efficacy, a key issue given that modern drugs often have a flatter exposure-response relationship [41] [43].

FAQ 2: What are the key differences between static and dynamic correlation methods in dose-response analysis?

Static correlation methods, like Pearson's correlation, are insensitive to the temporal order of data points and provide a single, averaged measure of association. In contrast, dynamic correlation methods, such as lagged-cross-correlation (LCC) or autoregressive models, are sensitive to temporal precedence and can model how relationships evolve over time [34] [33]. In drug development, this translates to using dynamic models to understand how drug exposure over time (pharmacokinetics) dynamically influences efficacy and safety outcomes (pharmacodynamics), which is crucial for identifying the optimal biological dose rather than just the maximum tolerated one [41] [34].

FAQ 3: What model-informed approaches are recommended for FIH dose selection?

Model-informed drug development (MIDD) approaches are critical for FIH dose prediction. Key methods include:

- Quantitative Systems Pharmacology (QSP) Models: These mechanistic models incorporate biological mechanisms and drug properties to simulate human pharmacology and predict dose-response, helping to de-risk FIH decisions [41] [44].

- Population PK/PD Modeling: This correlates or links changes in drug exposure to changes in clinical endpoints (safety or efficacy) and can account for confounding factors like concomitant therapies [41].

- Exposure-Response Modeling: This uses nonclinical and early clinical data to predict the probability of efficacy and adverse reactions as a function of drug exposure, helping to simulate the benefit-risk profile of different dosing regimens [41].

FAQ 4: How can I select doses for further exploration after the FIH trial?

Selecting doses for proof-of-concept trials requires a fit-for-purpose approach that leverages all available data [42]. Strategies include: