Quantum Information Theory in Chemistry: Unveiling Electron Correlation and Enabling Computational Breakthroughs


Abstract

This article explores the rapidly evolving synergy between quantum information theory (QIT) and quantum chemistry, a frontier promising to redefine our understanding and computation of molecular systems. We dissect how foundational QIT concepts like entanglement and correlation are being translated to analyze electron interactions, providing an intrinsic measure of complexity in chemical systems. The review covers emerging methodologies that leverage these insights to develop more efficient classical and quantum algorithms for tackling strong correlation problems. We further examine the practical challenges in simulating quantum chemical systems and the optimization strategies being pioneered through international research collaborations and hybrid computing architectures. Finally, the discussion assesses the validation of quantum advantage and benchmarks the performance of QIT-inspired approaches against established computational chemistry methods. This synthesis is tailored for researchers, scientists, and drug development professionals seeking to understand how quantum information science is poised to overcome long-standing barriers in predicting chemical properties and reactions.

Bridging the Realms: Foundational Concepts of Quantum Information in Chemical Systems

The fields of quantum information theory (QIT) and quantum chemistry are experiencing a transformative convergence, creating powerful synergies that address fundamental challenges in both domains. Quantum chemistry, with its remarkable ability to predict molecular and material properties, has become indispensable across modern quantum sciences [1]. Simultaneously, QIT has developed mathematically rigorous frameworks for quantifying quantum correlations and entanglement—resources that are operationally meaningful for distinct information-processing tasks [1] [2]. This fusion of expertise is particularly valuable for tackling the long-standing challenge of strongly correlated electrons in quantum chemistry, where traditional computational methods often struggle [1] [2]. As we progress through the second quantum revolution, this cross-disciplinary dialogue is opening new pathways for developing more efficient approaches to the electron correlation problem while also advancing the development of novel quantum registers based on molecular systems [1].

The interplay between these fields creates a complementary relationship: QIT offers precise characterization of electron correlation that can simplify descriptions of correlated many-electron wave functions, while quantum chemistry provides essential expertise for physically realizing qubits in atomic and molecular systems [1] [2]. This primer explores the key QIT concepts that are revolutionizing quantum chemical research, with particular emphasis on their applications in drug discovery and materials science—domains where accurate molecular simulations can dramatically accelerate innovation timelines [3] [4].

Theoretical Foundations: QIT Concepts for Quantum Chemistry

Orbital versus Particle Correlation

A fundamental contribution of QIT to quantum chemistry is the establishment of two conceptually distinct perspectives on electron correlation, leading to the notions of orbital correlation and particle correlation [1] [2]. Orbital correlation quantifies the complexity of many-electron wave functions relative to a specific orbital basis, representing an extrinsic measure of correlation that depends on the chosen reference frame. In contrast, particle correlation represents the minimal, intrinsic complexity of the wave function, obtained by minimizing total orbital correlation across all possible orbital bases [1] [2].

This distinction is mathematically expressed through the relationship where particle correlation equals the total orbital correlation minimized over all orbital bases [1]. This framework provides theoretical justification for the long-favored use of natural orbitals in simplifying electronic structures, as these orbitals effectively minimize the correlation complexity that must be captured in calculations [1] [2]. The conceptual separation of intrinsic and extrinsic correlation complexity offers researchers powerful tools for analyzing and compressing electronic structure problems.
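The relationship described in words above can be written compactly. The symbols are notation assumed here for illustration and are not taken from the cited works: \( C_{\mathrm{orb}}(\Psi; B) \) denotes the total orbital correlation of the wave function \( \Psi \) in orbital basis \( B \), and \( C_{\mathrm{part}}(\Psi) \) denotes its particle correlation.

```latex
\[
  C_{\mathrm{part}}(\Psi) \;=\; \min_{B}\; C_{\mathrm{orb}}(\Psi; B)
\]
```

Read this way, the remark about natural orbitals amounts to saying that they sit at, or close to, the minimizing basis \( B \), so that \( C_{\mathrm{orb}} \) in that basis approaches the intrinsic value \( C_{\mathrm{part}} \).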

Entanglement and Quantum Information Measures

In quantum information theory, entanglement serves as a quantitatively rigorous measure of quantum correlations between distinguishable subsystems [1]. When adapted to quantum chemical systems, these concepts require careful consideration of fermionic antisymmetry and superselection rules, but they provide operationally meaningful quantification of electron correlation [1] [2].

The geometric picture of quantum states in QIT enables elegant unification of different correlation types under a single theoretical framework [1]. For quantum chemistry, this means electron correlation can be analyzed using well-established information-theoretic measures such as von Neumann entropy and quantum mutual information [5]. These tools facilitate a more nuanced understanding of static and dynamic correlation effects in molecular systems, moving beyond heuristic descriptions to mathematically precise characterizations [1] [5].

Table: Key Quantum Information Theory Concepts and Their Chemical Interpretations

| QIT Concept | Mathematical Definition | Quantum Chemical Interpretation | Application Domain |
|---|---|---|---|
| Orbital Correlation | Correlation relative to specific basis | Extrinsic complexity of wave function | Basis set selection, Computational efficiency |
| Particle Correlation | Minimal correlation across all bases | Intrinsic complexity of wave function | Strong correlation problem, Wave function analysis |
| Von Neumann Entropy | S(ρ) = -Tr(ρ ln ρ) | Entanglement between subsystems | Bond breaking, Multi-reference character |
| Quantum Mutual Information | I(A;B) = S(ρ_A) + S(ρ_B) - S(ρ_AB) | Total correlation between subsystems | Analysis of electron correlation patterns |
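The two entropic measures in the table can be evaluated numerically for small systems. The following is a minimal sketch, assuming plain two-qubit density matrices stored as NumPy arrays; the function names are illustrative and not taken from any cited package. It compares an entangled pure state with a classically correlated mixture.

```python
import numpy as np

def von_neumann_entropy(rho):
    """S(rho) = -Tr(rho ln rho), evaluated from the eigenvalues of rho."""
    evals = np.linalg.eigvalsh(rho)
    evals = evals[evals > 1e-12]  # drop zeros: 0 * ln 0 is taken as 0
    return float(-np.sum(evals * np.log(evals)))

def reduced_states(rho_ab):
    """Return (rho_A, rho_B) for a two-qubit density matrix."""
    r = rho_ab.reshape(2, 2, 2, 2)       # indices: a, b, a', b'
    rho_a = np.einsum("abcb->ac", r)     # trace over subsystem B
    rho_b = np.einsum("abad->bd", r)     # trace over subsystem A
    return rho_a, rho_b

def mutual_information(rho_ab):
    """I(A;B) = S(rho_A) + S(rho_B) - S(rho_AB)."""
    rho_a, rho_b = reduced_states(rho_ab)
    return (von_neumann_entropy(rho_a)
            + von_neumann_entropy(rho_b)
            - von_neumann_entropy(rho_ab))

# Entangled pure state (|00> + |11>) / sqrt(2)
bell = np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2)
rho_bell = np.outer(bell, bell)

# Classically correlated mixture: 1/2 |00><00| + 1/2 |11><11|
rho_classical = np.diag([0.5, 0.0, 0.0, 0.5])

mi_bell = mutual_information(rho_bell)
mi_classical = mutual_information(rho_classical)
print(mi_bell, mi_classical)
```

The entangled state gives I = 2 ln 2 and the classical mixture gives I = ln 2, which illustrates why mutual information is read as total correlation rather than as entanglement alone.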

Current Landscape: Quantitative Benchmarks and Hardware Progress

The quantum computing industry has reached an inflection point in 2025, transitioning from theoretical promise to tangible commercial reality [3]. This transformation is supported by unprecedented investment growth: estimates of the global quantum computing market in 2025 range from $1.8 billion to $3.5 billion, with projections indicating growth to $5.3 billion by 2029 at a compound annual growth rate (CAGR) of 32.7 percent [3]. More aggressive forecasts suggest the market could reach $20.2 billion by 2030, representing a 41.8 percent CAGR, positioning quantum computing as one of the fastest-growing technology sectors [3].

Hardware advancements have been particularly dramatic in 2025, with error correction representing the most significant breakthrough area [3]. Google's Willow quantum chip, featuring 105 superconducting qubits, achieved a critical milestone by demonstrating exponential error reduction as qubit counts increased—a phenomenon known as going "below threshold" [3]. The Willow chip completed a benchmark calculation in approximately five minutes that would require a classical supercomputer 10^25 years to perform, providing strong evidence that large, error-corrected quantum computers can be constructed [3]. Recent breakthroughs have pushed error rates to record lows of 0.000015% per operation, while researchers at QuEra published algorithmic fault tolerance techniques that reduce quantum error correction overhead by up to 100 times [3].

Table: Quantum Hardware Performance Benchmarks (2025)

| Hardware Platform | Qubit Count | Qubit Type | Key Error Rate | Notable Achievements |
|---|---|---|---|---|
| Google Willow | 105 physical qubits | Superconducting | Exponential reduction with scale | "Below threshold" operation, Quantum Echoes algorithm |
| IBM Quantum Starling | 200 logical (planned) | Superconducting | ~90% overhead reduction | Fault-tolerant roadmap, Quantum-centric supercomputers |
| Microsoft Majorana 1 | N/A | Topological | 1,000-fold error reduction | Novel superconducting materials, Geometric codes |
| Atom Computing | 112 atoms | Neutral atom | Significant error suppression | 28 logical qubits demonstrated, Utility-scale operations |

For quantum chemistry applications, early fault-tolerant quantum computers with approximately 25-100 logical qubits are expected to enable qualitatively distinct strategies that remain challenging for classical solvers [6]. These include polynomial-scaling phase estimation, direct simulation of quantum dynamics, and active-space embedding—particularly valuable for multireference charge-transfer and conical-intersection states central to photochemistry and materials design [6].

Experimental Protocols: Implementing QIT in Chemical Research

The Quantum Echoes Algorithm for Molecular Structure

Google's Quantum Echoes algorithm represents a breakthrough in verifiable quantum advantage for chemical applications, demonstrating a 13,000-fold speedup over classical supercomputers for specific calculations [7]. This protocol enables precise determination of molecular structure through an advanced echo measurement technique.

Experimental Workflow:

System Initialization: Prepare the 105-qubit Willow processor in a known ground state [7]. For molecular simulations, this involves mapping molecular orbitals to qubit states using appropriate encoding schemes (Jordan-Wigner or Bravyi-Kitaev transformations).

Forward Evolution: Apply a carefully crafted sequence of quantum gates (U) to the qubit array, evolving the system forward in time to simulate molecular dynamics [7].

Qubit Perturbation: Introduce a controlled perturbation (P) to a specific qubit, analogous to disturbing a particular atomic site in the target molecule [7].

Reverse Evolution: Apply the inverse quantum operation (U†) to reverse the system's temporal evolution [7].

Echo Measurement: Measure the resulting quantum state, particularly focusing on the "echo" signal amplified by constructive interference effects [7].

This methodology functions as a "molecular ruler," capable of measuring longer distances than traditional methods by leveraging nuclear spin echo principles [7]. The technique has been successfully validated on molecules containing 15 and 28 atoms, matching results from traditional nuclear magnetic resonance (NMR) while revealing additional information not typically accessible through conventional NMR [7].
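The forward, perturb, reverse, measure sequence in the workflow above can be illustrated with a small state-vector simulation. This is a toy sketch under stated assumptions: a 3-qubit register, a random unitary standing in for the gate sequence U, a Pauli-X as the perturbation P, and the overlap with the initial state as the echo signal. It is not the Willow implementation and does not model any molecule; all names are illustrative.

```python
import numpy as np

def random_unitary(dim, rng):
    """Random unitary from the QR decomposition of a complex Gaussian matrix."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, r = np.linalg.qr(z)
    d = np.diag(r)
    return q * (d / np.abs(d))  # rescale column phases; q stays unitary

def single_qubit_op(op, target, n_qubits):
    """Embed a 2x2 operator on qubit `target` of an n-qubit register."""
    full = np.eye(1)
    for k in range(n_qubits):
        full = np.kron(full, op if k == target else np.eye(2))
    return full

def echo_signal(U, P, psi0):
    """|<psi0| U^dagger P U |psi0>|^2 for the forward/perturb/reverse sequence."""
    psi = U @ psi0            # forward evolution
    psi = P @ psi             # perturbation on one qubit
    psi = U.conj().T @ psi    # reverse evolution
    return float(abs(np.vdot(psi0, psi)) ** 2)

n_qubits = 3
rng = np.random.default_rng(0)
U = random_unitary(2 ** n_qubits, rng)

psi0 = np.zeros(2 ** n_qubits, dtype=complex)
psi0[0] = 1.0  # |000>

pauli_x = np.array([[0, 1], [1, 0]], dtype=complex)
P = single_qubit_op(pauli_x, target=0, n_qubits=n_qubits)

echo_unperturbed = echo_signal(U, np.eye(2 ** n_qubits), psi0)
echo_perturbed = echo_signal(U, P, psi0)
print(echo_unperturbed, echo_perturbed)
```

Without a perturbation the reverse evolution undoes the forward one exactly and the signal is 1; with the perturbation the signal drops, and how far it drops reflects how strongly the perturbed qubit has become correlated with the rest of the register under U.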

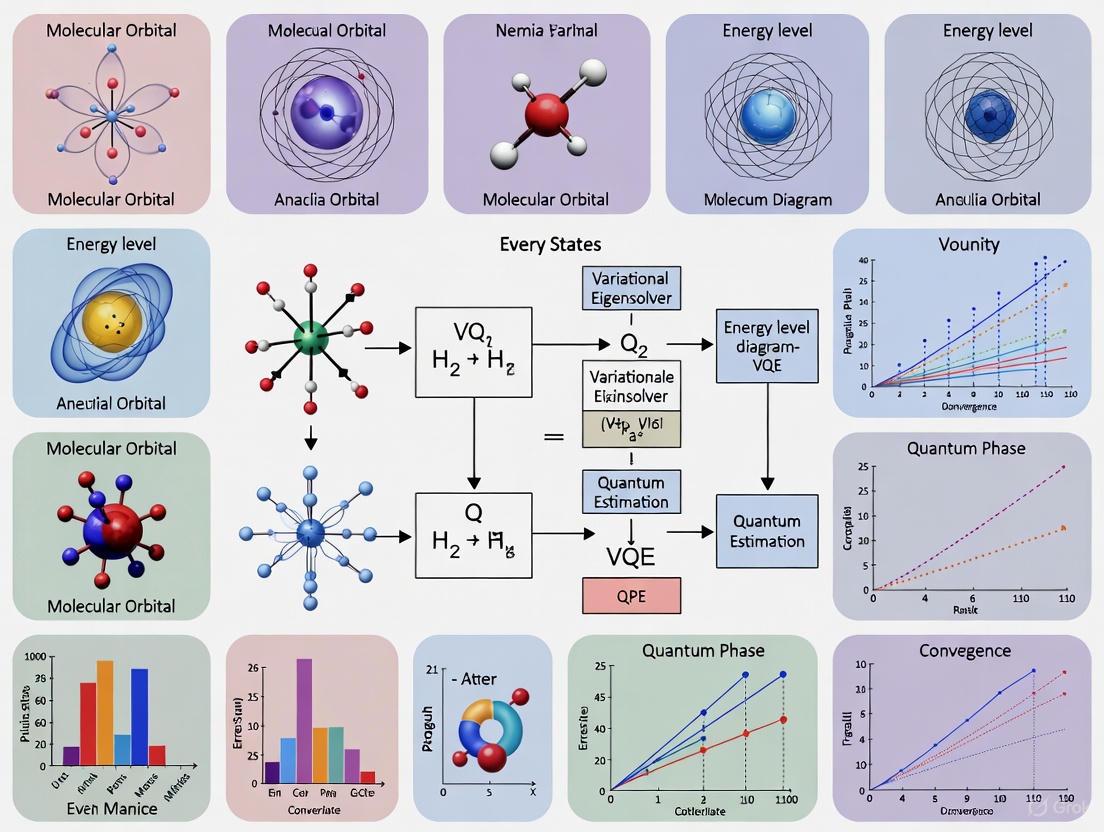

Variational Quantum Eigensolver (VQE) for Molecular Energy Calculations

The Variational Quantum Eigensolver (VQE) has emerged as a leading hybrid quantum-classical algorithm for determining molecular ground-state energies, particularly valuable for near-term quantum devices without full error correction [8].

Protocol Implementation:

Qubit Mapping: Represent the molecular Hamiltonian in qubit space using fermion-to-qubit transformation techniques. For a hydrogen molecule, this typically requires 2-4 qubits depending on the mapping approach.

Ansatz Preparation: Initialize a parameterized quantum circuit (ansatz) that can represent the target molecular wave function. Common approaches include unitary coupled cluster (UCC) ansatzes or hardware-efficient designs.

Quantum Execution: Run the parameterized circuit on quantum hardware (or simulator) to prepare the trial wavefunction |ψ(θ)⟩.

Measurement: Determine the expectation value ⟨ψ(θ)|H|ψ(θ)⟩ through repeated measurements in different bases to reconstruct the Hamiltonian components.

Classical Optimization: Use classical optimizers (e.g., gradient descent, SPSA) to adjust parameters θ to minimize the energy expectation value.

This hybrid approach has been successfully demonstrated for small molecules including helium hydride ion, hydrogen molecule, lithium hydride, and beryllium hydride [8]. More advanced implementations have tackled larger systems such as iron-sulfur clusters, demonstrating potential for scaling to industrially relevant molecular systems [8].
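The loop described in the protocol steps (ansatz, expectation value, classical update) can be sketched on a single qubit. The Hamiltonian below is a hypothetical toy operator chosen for illustration, not a molecular Hamiltonian, and the energy is computed exactly from the state vector rather than estimated from repeated measurements as it would be on hardware.

```python
import numpy as np

X = np.array([[0.0, 1.0], [1.0, 0.0]])
Z = np.array([[1.0, 0.0], [0.0, -1.0]])
H = Z + 0.5 * X  # hypothetical toy Hamiltonian (assumption for illustration)

def ansatz(theta):
    """Trial state Ry(theta)|0> = cos(theta/2)|0> + sin(theta/2)|1>."""
    return np.array([np.cos(theta / 2), np.sin(theta / 2)])

def energy(theta):
    """Expectation value <psi(theta)|H|psi(theta)>."""
    psi = ansatz(theta)
    return float(psi @ H @ psi)

def parameter_shift_gradient(theta):
    """Gradient from two shifted energy evaluations (exact for this ansatz)."""
    return 0.5 * (energy(theta + np.pi / 2) - energy(theta - np.pi / 2))

# Classical optimization: plain gradient descent on theta
theta = 0.1
learning_rate = 0.4
for _ in range(200):
    theta -= learning_rate * parameter_shift_gradient(theta)

vqe_energy = energy(theta)
exact_energy = float(np.linalg.eigvalsh(H)[0])
print(vqe_energy, exact_energy)
```

For this one-parameter ansatz the variational minimum coincides with the exact ground-state energy; for molecular problems the ansatz generally cannot reach every state, so the gap between the two numbers becomes a measure of ansatz quality.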

Table: Essential Research Reagents and Computational Resources for Quantum Chemistry Applications

| Resource Category | Specific Solutions | Function/Purpose | Representative Providers |
|---|---|---|---|
| Quantum Hardware Platforms | Superconducting qubits, Trapped ions, Neutral atoms | Physical implementation of quantum processing units | Google Willow, IBM, IonQ, Atom Computing |
| Quantum Software Ecosystems | Qiskit, Cirq, Pennylane | Quantum algorithm development and simulation | IBM, Google, Xanadu |
| Error Mitigation Tools | Zero-noise extrapolation, Probabilistic error cancellation | Improving results from noisy quantum devices | Q-CTRL, Riverlane, Zurich Instruments |
| Chemical Datasets | AQCat25, PubChemQC | Training and validation data for quantum chemistry | SandboxAQ, National Institutes of Health |
| Hybrid HPC-QC Systems | Quantum-classical integration platforms | Combining quantum and classical computational resources | IBM, Fujitsu, Nvidia DGX Cloud |

The AQCat25 dataset exemplifies specialized resources emerging for quantum chemistry applications, containing 11 million high-fidelity quantum chemistry calculations on 40,000 intermediate-catalyst systems [4]. This dataset, developed using Nvidia DGX Cloud with over 400,000 GPU-hours of computation, enables machine learning models to deliver predictions up to 20,000 times faster than traditional physics-based methods [4]. Such resources are particularly valuable for sustainable chemistry applications including aviation fuel production, green hydrogen creation, and industrial waste conversion [4].

Signaling Pathways and Quantum Information Flow

Understanding information flow in quantum algorithms is crucial for optimizing their application to chemical problems. The following diagram illustrates the conceptual pathway for applying quantum information theory to solve quantum chemical problems, highlighting the synergistic relationship between these domains.

The pathway begins with fundamental concepts from quantum information theory, particularly entanglement measures and correlation quantification [1] [2]. These concepts are adapted to fermionic systems through appropriate mapping procedures that account for antisymmetry and superselection rules [1]. The translated concepts then target specific chemical problems, particularly those involving strong electron correlation that challenge conventional computational methods [1] [8]. This approach ultimately generates solutions including compressed wavefunction representations, more efficient computational schemes, and novel approaches to the electron correlation problem [1] [2].

The integration of quantum information theory with quantum chemistry represents a paradigm shift in computational molecular sciences, offering mathematically rigorous frameworks for addressing the persistent challenge of strong electron correlation. As quantum hardware continues to advance—with error-corrected processors moving from theoretical concepts to operational reality—the applications for drug discovery, materials design, and catalyst development are expected to expand dramatically [3] [9].

The emerging toolkit of quantum algorithms, including the Quantum Echoes protocol and variational methods, provides researchers with practical pathways for leveraging quantum advantage in chemical problems [8] [7]. These developments are particularly timely given the growing investment in quantum technologies, which surpassed $2 billion in venture funding alone during 2024 [3] [9]. With over 250,000 new quantum professionals needed globally by 2030, the intersection of QIT and quantum chemistry represents not only a scientific frontier but a significant workforce development opportunity [3].

As the United Nations designation of 2025 as the International Year of Quantum Science and Technology suggests, we are at the beginning of a transformative period where quantum concepts will increasingly impact chemical research and development [3] [9]. The synergy between quantum information theory and quantum chemistry promises to accelerate discoveries across pharmaceuticals, energy storage, materials science, and fundamental chemistry, ultimately enabling the design of molecular systems with precision that was previously impossible.

The application of quantum information theory to chemical systems is revolutionizing our understanding of molecular structure and bonding. Orbital entanglement quantifies the quantum correlations between molecular orbitals, moving beyond classical correlation concepts to reveal the genuinely quantum nature of chemical bonds and reactions. [5] This framework provides powerful tools for analyzing strongly correlated systems where traditional electronic structure methods often struggle, such as in transition states, bond dissociation processes, and systems with near-degenerate orbitals. [10] [11]

The von Neumann entropy serves as a fundamental quantity in this analysis, measuring the entanglement between subsystems and offering insights into electron correlation patterns that are difficult to capture through conventional quantum chemical approaches. [10] [11] [5] When applied to molecular orbitals, these information-theoretic measures can elucidate reaction mechanisms, identify key correlated orbitals in active spaces, and potentially guide the development of more efficient computational methods for quantum chemistry. [5]

Theoretical Framework: From Classical Correlation to Quantum Entanglement

Fundamental Information-Theoretic Measures

Table: Key Information-Theoretic Measures in Quantum Chemistry

| Measure | Mathematical Expression | Chemical Interpretation |
|---|---|---|
| Shannon Entropy | \( H(X) = -\sum_{x \in \mathcal{X}} p(x)\log p(x) \) | Uncertainty in electron distribution [5] |
| Von Neumann Entropy | \( S(\rho) = -\text{Tr}(\rho \log \rho) \) | Quantum entanglement of orbitals [10] [11] |
| Orbital Mutual Information | \( I(A;B) = S(\rho_A) + S(\rho_B) - S(\rho_{AB}) \) | Total correlation between orbital pairs [5] |
| Kullback-Leibler Divergence | \( D_{KL}(P \parallel Q) = \sum_x p(x)\log\frac{p(x)}{q(x)} \) | Distinguishability of electron distributions [5] |
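The two classical measures in the table act on ordinary probability distributions. A minimal sketch follows, assuming discrete distributions given as lists of probabilities; the example distributions are made up for illustration and do not come from any molecular calculation.

```python
import numpy as np

def shannon_entropy(p):
    """H(X) = -sum_x p(x) log p(x), natural logarithm, with 0 log 0 = 0."""
    p = np.asarray(p, dtype=float)
    nonzero = p > 0
    return float(-np.sum(p[nonzero] * np.log(p[nonzero])))

def kl_divergence(p, q):
    """D_KL(P || Q) = sum_x p(x) log(p(x) / q(x)).

    Assumes q(x) > 0 wherever p(x) > 0; otherwise the divergence is infinite.
    """
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    nonzero = p > 0
    return float(np.sum(p[nonzero] * np.log(p[nonzero] / q[nonzero])))

# Hypothetical three-outcome distributions (illustrative values only)
p = [0.5, 0.25, 0.25]
uniform = [1 / 3, 1 / 3, 1 / 3]

h_p = shannon_entropy(p)
kl_p_uniform = kl_divergence(p, uniform)
print(h_p, kl_p_uniform)
```

Against a uniform reference over n outcomes the divergence reduces to log n minus the entropy of P, which gives a quick consistency check on both functions.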

The Critical Role of Superselection Rules

A crucial consideration in quantifying orbital entanglement involves fermionic superselection rules (SSRs), which arise from fundamental symmetries of electronic systems. [10] [11] These rules restrict physically allowable operations and states, preventing the overestimation of entanglement that can occur when ignoring these fundamental constraints. Recent experimental implementations have demonstrated that incorporating SSRs not only provides a more physically meaningful quantification of orbital entanglement but also significantly reduces the measurement overhead required for constructing orbital reduced density matrices (ORDMs) on quantum hardware. [10] [11] This reduction occurs because SSRs limit the number of measurable Pauli operators that need to be considered when evaluating ORDM elements.

Experimental Protocols: Measuring Orbital Entanglement on Quantum Hardware

Protocol 1: Molecular System Preparation and Active Space Selection

Objective: Prepare the molecular system and identify strongly correlated orbitals for entanglement analysis.

Reaction Path Optimization:

Active Space Selection via AVAS:

- Apply Atomic Valence Active Space (AVAS) projection to target specific atomic orbitals (e.g., oxygen p orbitals in O₂ reactions). [10] [11]

- Project canonical molecular orbitals onto chosen atomic orbitals to generate an intrinsically localized orbital basis.

- Select a subset of energetically relevant molecular orbitals for subsequent calculations (e.g., 4 orbitals with 6 electrons).

Wavefunction Optimization:

Protocol 2: Quantum State Preparation and Measurement

Objective: Prepare chemical wavefunctions on quantum hardware and measure orbital reduced density matrices.

Qubit Encoding and State Preparation:

Orbital Reduced Density Matrix Construction:

- Partition measurable Pauli operators into commuting sets, leveraging superselection rules to minimize measurement requirements. [10] [11]

- Execute measurement circuits on trapped-ion quantum computer (e.g., Quantinuum H1-1).

- Reconstruct orbital reduced density matrices (1-ORDM and 2-ORDM) from measurement outcomes.
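The partitioning step in the list above can be sketched with a simple greedy grouping. The source does not say which grouping criterion was used, so this sketch swaps in qubit-wise commutativity, a common and conservative choice, and it does not apply the superselection-rule filtering that would first discard unneeded operators. The Pauli strings are a hypothetical example set.

```python
def qubitwise_commute(a, b):
    """True if two Pauli strings agree or contain an identity on every qubit."""
    return all(x == y or x == "I" or y == "I" for x, y in zip(a, b))

def group_paulis(paulis):
    """Greedily place each Pauli string into the first compatible group."""
    groups = []
    for pauli in paulis:
        for group in groups:
            if all(qubitwise_commute(pauli, member) for member in group):
                group.append(pauli)
                break
        else:
            groups.append([pauli])  # no compatible group found: start a new one
    return groups

# Hypothetical 4-qubit operator set (illustrative only)
paulis = ["ZZII", "ZIZI", "IZZI", "XXII", "YYII", "IIXX", "IIYY", "XXYY"]
groups = group_paulis(paulis)

for index, group in enumerate(groups):
    print(index, group)
```

Each group can be measured with a single circuit setting, so here eight operators need four settings instead of eight. Greedy grouping is order-dependent and not guaranteed to be minimal.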

Noise Mitigation:

Protocol 3: Entanglement Quantification and Analysis

Objective: Calculate orbital entropies and mutual information to quantify correlation and entanglement.

Orbital Entropy Calculation:

Mutual Information Calculation:

- Construct 2-orbital reduced density matrices (2-ORDM) for orbital pairs.

- Calculate orbital mutual information: \( I(i;j) = S(\rho_i) + S(\rho_j) - S(\rho_{ij}) \). [5]

Interpretation and Validation:

- Analyze entropy profiles along reaction coordinates to identify strongly correlated regions.

- Compare quantum hardware results with noiseless classical benchmarks.

- Interpret vanishing one-orbital entanglement in closed-shell configurations through the lens of superselection rules.
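A two-orbital model makes the entropy and mutual information steps concrete. The sketch below assumes a minimal-basis, H2-like singlet wavefunction built from two closed-shell configurations, c1 |bonding doubly occupied> + c2 |antibonding doubly occupied>, with real coefficients. The entropies are the plain von Neumann values, computed without any superselection-rule restriction, and the function names are illustrative.

```python
import numpy as np

def entropy_from_spectrum(weights):
    """Von Neumann entropy from the eigenvalues of a reduced density matrix."""
    w = np.asarray(weights, dtype=float)
    w = w[w > 1e-12]
    return float(-np.sum(w * np.log(w)))

def two_configuration_measures(c_bonding, c_antibonding):
    """One-orbital entropies and mutual information for the two-orbital model."""
    norm = np.hypot(c_bonding, c_antibonding)
    p_bonding = (c_bonding / norm) ** 2          # weight of bonding^2 configuration
    p_antibonding = (c_antibonding / norm) ** 2  # weight of antibonding^2 configuration

    # 1-ORDM spectra in the local basis {empty, up, down, doubly occupied}
    spectrum_bonding = [p_antibonding, 0.0, 0.0, p_bonding]
    spectrum_antibonding = [p_bonding, 0.0, 0.0, p_antibonding]

    s_bonding = entropy_from_spectrum(spectrum_bonding)
    s_antibonding = entropy_from_spectrum(spectrum_antibonding)
    s_pair = 0.0  # the two orbitals together carry the full pure state
    mutual_info = s_bonding + s_antibonding - s_pair
    return s_bonding, s_antibonding, mutual_info

# Near equilibrium: dominated by one configuration (illustrative coefficients)
print(two_configuration_measures(0.99, -0.14))

# Dissociation limit: equal weights
s1, s2, mi = two_configuration_measures(1.0, -1.0)
print(s1, s2, mi)
```

At equal weights each orbital entropy reaches ln 2 and the mutual information reaches 2 ln 2, while a single configuration gives zero for both. Tracking these numbers along a reaction coordinate is the kind of entropy profile the interpretation step refers to.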

Case Study: Orbital Entanglement in Lithium-Ion Battery Chemistry

Application to Vinylene Carbonate + Singlet Oxygen Reaction

Table: Orbital Entropy Analysis of VC + ¹O₂ → Dioxetane Reaction

| Reaction Stage | Orbital Entropy Profile | Key Entanglement Findings |
|---|---|---|
| Reactants (Image 1) | Moderate entropy values | Initial correlation in O₂ π and π* orbitals [10] [11] |
| Transition State (Images 7-10) | High entropy peaks | Strong orbital entanglement during bond stretching and realignment [10] [11] |
| Intermediate (Image 12) | Local entropy minimum | Partial correlation relaxation at local energy minimum [10] [11] |
| Product (Image 16) | Low entropy values | Weak orbital entanglement in closed-shell dioxetane product [10] [11] |

The reaction between vinylene carbonate (VC) and singlet oxygen to form dioxetane represents an exemplary system for studying orbital entanglement in strongly correlated chemical processes. This transformation is particularly relevant to degradation processes in lithium-ion batteries, where singlet oxygen attacks carbonate solvent molecules. [10] [11] The application of orbital entanglement measures along this reaction path has revealed characteristic signatures of the transition state, manifested as peaks in orbital von Neumann entropies that correspond to regions of maximum electronic correlation as oxygen bonds stretch and align with the C-C bond of the carbonate. [10] [11]

Key Theoretical Insight: One-Orbital Entanglement and Spin Configurations

A fundamental theoretical result emerging from these studies demonstrates that one-orbital entanglement vanishes unless opposite-spin open shell configurations are present in the wavefunction when superselection rules are properly accounted for. [10] [11] This finding highlights the critical importance of considering fundamental fermionic symmetries when quantifying entanglement in chemical systems and provides a rigorous foundation for interpreting orbital correlation patterns in molecular processes.

Table: Essential Resources for Orbital Entanglement Studies

| Resource Category | Specific Tools/Methods | Function/Purpose |
|---|---|---|
| Quantum Hardware | Quantinuum H1-1 trapped-ion quantum computer | Execution of quantum circuits for ORDM measurement [10] [11] |
| Classical Computational Chemistry | PySCF, ASH package | NEB calculations, AVAS, CASSCF wavefunction optimization [10] [11] |
| Entanglement Quantification | Von Neumann entropy, Orbital mutual information | Quantifying orbital correlation and entanglement [10] [5] |
| Noise Mitigation | Thresholding methods, Maximum likelihood estimation | Post-measurement noise reduction in ORDMs [10] [11] |
| Symmetry Handling | Fermionic superselection rules (SSR) | Physically correct entanglement quantification and measurement reduction [10] [11] |

Future Directions and Research Opportunities

The integration of quantum information concepts with quantum chemistry represents a rapidly evolving frontier with several promising research directions. The NSF-UKRI/EPSRC Lead Agency Opportunity on "Understanding and Exploiting Quantum Information in Chemical Systems" specifically encourages collaborative U.S.-U.K. research proposals that advance fundamental understanding of QIS concepts in chemical systems or leverage QIS concepts to advance chemistry research. [12] Priority research areas include developing new ways of creating, observing, and quantifying QIS phenomena in molecular systems, studying the role of quantum correlations in chemical reactions, developing quantum sensors for monitoring chemical systems, and exploiting quantum phenomena to visualize chemical systems at very short length and time scales. [12]

The successful demonstration of orbital entanglement quantification on quantum hardware, combined with ongoing theoretical developments in information-theoretic approaches to electronic structure, suggests that the "new language of electron interactions" based on orbital entanglement and correlation measures will continue to provide valuable insights into chemical phenomena and potentially guide the design of novel materials and chemical processes with tailored quantum properties.

In the realms of quantum information theory and quantum chemistry, the reduced density matrix (RDM) serves as a foundational tool for analyzing subsystems of larger, entangled quantum systems. It provides the necessary formalism to describe the state of a subsystem when the complete state of the total system is known, enabling practical computation and theoretical understanding where the full quantum description is intractable. Within quantum chemistry, RDMs are central to the development of efficient computational methods, particularly on emerging quantum hardware, where managing computational load is critical for tackling problems like predicting molecular properties and simulating material behavior [13] [14].

Mathematical and Theoretical Foundation

Definition and Formalism

In quantum mechanics, the density matrix, or density operator, offers a unified description of quantum states, capable of representing both pure states and mixed statistical ensembles. For a pure state \( |\psi\rangle \), the density matrix is defined as \( \rho = |\psi\rangle\langle\psi| \) [15].

The reduced density matrix is obtained by performing a partial trace over the degrees of freedom of the subsystems one is not interested in. For a composite system \( A \otimes B \) with a total density matrix \( \rho_{AB} \), the reduced density matrix for subsystem \( A \) is defined as: \[ \rho_A = \operatorname{Tr}_B [\rho_{AB}] \] where \( \operatorname{Tr}_B \) denotes the partial trace over subsystem \( B \). In finite-dimensional Hilbert spaces, this is accomplished by summing over an orthonormal basis \( \{|n_B\rangle\} \) of \( B \): \[ \langle a | \rho_A | b \rangle = \sum_n \langle a | \langle n_B | \rho_{AB} | n_B \rangle | b \rangle \] for all vectors \( |a\rangle, |b\rangle \) in the Hilbert space of \( A \) [16].

Pure States vs. Mixed States

A pure state satisfies \( \operatorname{Tr}(\rho^2) = 1 \) and represents maximal knowledge of the quantum system. A mixed state, described by a density matrix \( \rho = \sum_j p_j |\psi_j\rangle\langle\psi_j| \) with \( 0 < p_j < 1 \) and \( \sum_j p_j = 1 \), satisfies \( \operatorname{Tr}(\rho^2) < 1 \) and represents a statistical ensemble of pure states [15].

The RDM of a pure, entangled state is often a mixed state. For example, the Bell state \( |\Psi\rangle = \frac{1}{\sqrt{2}}(|00\rangle + |11\rangle) \) has a pure state density matrix \( \rho = |\Psi\rangle\langle\Psi| \). The reduced density matrix for either single qubit is \( \rho_{\text{reduced}} = \frac{1}{2}(|0\rangle\langle 0| + |1\rangle\langle 1|) = \frac{\mathrm{Id}}{2} \), which is maximally mixed [16].
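The partial trace formula and the Bell-state example can be checked numerically. This is a minimal sketch that implements the sum over an orthonormal basis of B literally, assuming the tensor-product ordering A ⊗ B used by `np.kron`; the helper names are illustrative.

```python
import numpy as np

def partial_trace_B(rho_ab, dim_a, dim_b):
    """rho_A[a, b] = sum_n <a, n| rho_AB |b, n> for a state on A (x) B."""
    rho_a = np.zeros((dim_a, dim_a), dtype=complex)
    for n in range(dim_b):
        # Rows/columns of rho_AB whose B index equals n (index = a * dim_b + n)
        idx = [a * dim_b + n for a in range(dim_a)]
        rho_a += rho_ab[np.ix_(idx, idx)]
    return rho_a

def purity(rho):
    """Tr(rho^2): equals 1 for pure states and is below 1 for mixed states."""
    return float(np.real(np.trace(rho @ rho)))

def two_qubit_state(alpha):
    """Density matrix of cos(alpha)|00> + sin(alpha)|11>."""
    psi = np.array([np.cos(alpha), 0.0, 0.0, np.sin(alpha)], dtype=complex)
    return np.outer(psi, psi.conj())

rho_bell = two_qubit_state(np.pi / 4)       # Bell state
rho_product = two_qubit_state(0.0)          # product state |00>

rho_a_bell = partial_trace_B(rho_bell, 2, 2)
rho_a_product = partial_trace_B(rho_product, 2, 2)

print(np.real(rho_a_bell))
print(purity(rho_bell), purity(rho_a_bell), purity(rho_a_product))
```

The Bell state itself has purity 1 while its single-qubit reduced state is Id/2 with purity 1/2; the product state keeps purity 1 after tracing out B, so the drop in purity is a direct signature of entanglement in a pure global state.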

Table 1: Key Properties of Density Matrices

| Property | Pure State | Mixed State | Reduced Density Matrix (from entangled pure state) |
|---|---|---|---|
| Definition | \(\rho = \ket{\psi}\bra{\psi}\) | \(\rho = \sum_j p_j \ket{\psi_j}\bra{\psi_j}\) | \(\rho_A = \operatorname{Tr}_B(\rho_{AB})\) |
| Trace Condition | \(\operatorname{Tr}(\rho) = 1\) | \(\operatorname{Tr}(\rho) = 1\) | \(\operatorname{Tr}(\rho_A) = 1\) |
| Purity | \(\operatorname{Tr}(\rho^2) = 1\) | \(\operatorname{Tr}(\rho^2) < 1\) | \(\operatorname{Tr}(\rho_A^2) < 1\) (for entangled \(\rho_{AB}\)) |
| Knowledge | Maximal | Incomplete | Incomplete (describes a subsystem) |

Applications in Quantum Chemistry

The Active Space Approximation

A pivotal application of RDMs in quantum chemistry is the active space approximation, used in methods like the Complete Active Space Self-Consistent Field (CASSCF). This approach partitions the molecular orbital space into three distinct regions (inactive, active, and virtual), allowing for a computationally feasible focus on the most chemically relevant electrons and orbitals [13].
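The three-way partition can be sketched as simple index bookkeeping. The sketch assumes energy-ordered spatial orbitals, doubly occupied inactive orbitals, and one qubit per active spin orbital (as in a Jordan-Wigner encoding). The molecule size in the example is hypothetical, while the active space of 6 electrons in 4 orbitals mirrors the example quoted earlier in this article.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class OrbitalPartition:
    inactive: List[int]   # doubly occupied, frozen
    active: List[int]     # treated with the correlated method
    virtual: List[int]    # empty
    n_qubits: int         # one qubit per active spin orbital

def partition_orbitals(n_electrons, n_orbitals, n_active_electrons, n_active_orbitals):
    """Split spatial orbital indices into inactive, active, and virtual sets."""
    core_electrons = n_electrons - n_active_electrons
    if core_electrons < 0 or core_electrons % 2 != 0:
        raise ValueError("inactive electrons must be a non-negative even number")
    if n_active_electrons > 2 * n_active_orbitals:
        raise ValueError("too many electrons for the chosen active orbitals")
    n_inactive = core_electrons // 2
    first_virtual = n_inactive + n_active_orbitals
    if first_virtual > n_orbitals:
        raise ValueError("active space exceeds the available orbitals")
    return OrbitalPartition(
        inactive=list(range(n_inactive)),
        active=list(range(n_inactive, first_virtual)),
        virtual=list(range(first_virtual, n_orbitals)),
        n_qubits=2 * n_active_orbitals,
    )

# Hypothetical system: 10 electrons in 13 spatial orbitals, active space (6, 4)
partition = partition_orbitals(10, 13, 6, 4)
print(partition)
```

Only the active block sets the qubit count, which is why the choice of active space directly controls the size of the problem sent to quantum hardware.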

Quantum Linear Response (qLR) and Molecular Properties

The quantum Linear Response (qLR) framework leverages RDMs to compute crucial molecular response properties, such as excitation energies and oscillator strengths, which are vital for interpreting spectroscopic data [13]. The expectation value of an operator \( \hat{O} \) within the active space approximation is expressed as: \[ \braket{0(\bm{\theta})|\hat{O}|0(\bm{\theta})} = \braket{I|\hat{O}_I|I}\,\braket{A(\bm{\theta})|\hat{O}_A|A(\bm{\theta})}\,\braket{V|\hat{O}_V|V} \] where \( |I\rangle \), \( |A(\bm{\theta})\rangle \), and \( |V\rangle \) represent the inactive, active, and virtual parts of the wave function, respectively [13]. The active space component \( \braket{A(\bm{\theta})|\hat{O}_A|A(\bm{\theta})} \), which is evaluated using RDMs on a quantum computer, is often the most computationally demanding part.

Table 2: Quantum Linear Response (qLR) Applications and Challenges

| Application Area | Target Molecular Properties | Key Challenge | RDM-Related Approximation |
|---|---|---|---|
| Photochemistry | Excitation Energies, Oscillator Strengths | High computational cost of 4-body RDMs | Reduced Density Cumulant (RDC) approximations [13] |
| Spectroscopy | Rotational Strengths | Noise and decoherence on NISQ devices | Direct RDM approximation [13] |
| Material Design | Electronic Properties of Heavy Elements | Balancing computational load and accuracy [14] | Active space selection \((N_e, N_A)\) [13] |

Experimental and Computational Protocols

Protocol: Calculating the Reduced Density Matrix on a Quantum Computer

This protocol details the steps for computing a reduced density matrix for an active space on a quantum computer, a routine procedure in variational quantum algorithms like VQE.

1. System Preparation and Active Space Selection

- Input: Molecular geometry, basis set.

- Procedure: Perform a classical electronic structure calculation (e.g., Hartree-Fock). Select an active space denoted by ((Ne, NA)), where (Ne) is the number of active electrons and (NA) is the number of active spatial orbitals [13].

- Output: Molecular Hamiltonian transformed into the active space, ( \hat{H}_A ).

2. Wavefunction Preparation

- Input: Hamiltonian ( \hat{H}_A ).

- Procedure: Prepare the ground state ( |\psi(\bm{\theta}^*)\rangle ) using a parameterized quantum circuit, such as the Unitary Coupled Cluster (UCC) ansatz. Parameters ( \bm{\theta} ) are optimized, typically via the Variational Quantum Eigensolver (VQE), to minimize the energy ( \langle \psi(\bm{\theta}) | \hat{H}_A | \psi(\bm{\theta}) \rangle ) [13].

- Output: Prepared quantum state ( |\psi(\bm{\theta}^*)\rangle ) representing the active space wavefunction.

3. Quantum Measurement and RDM Reconstruction

- Input: Prepared state ( |\psi(\bm{\theta}^*)\rangle ).

- Procedure: To construct the k-body RDM, measure the expectation values of all corresponding k-body operators. For example, the 2-RDM element ( \Gamma_{pqrs} = \langle \psi | \hat{a}_p^\dagger \hat{a}_q^\dagger \hat{a}_s \hat{a}_r | \psi \rangle ) requires measuring a Hermitian operator ( \hat{a}_p^\dagger \hat{a}_q^\dagger \hat{a}_s \hat{a}_r + \text{h.c.} ) on the quantum computer. This involves translating these fermionic operators into a sum of Pauli strings via a Jordan-Wigner or Bravyi-Kitaev transformation [13].

- Output: Experimentally estimated expectation values for all required matrix elements.

4. Post-Processing and Analysis

- Input: Raw RDM element estimates.

- Procedure: The collected measurements are used to build the RDMs. These matrices can then be analyzed to compute molecular properties. For strongly correlated systems, the accuracy of the result can be probed by examining the purity of the RDMs or by using the RDMs to compute energy contributions from the inactive and virtual spaces [13].

- Output: Verified k-body RDMs for the active space.
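Steps 3 and 4 can be illustrated with a small classical emulation. The sketch below is not the implementation of [13]: it assumes a toy four-spin-orbital system, builds Jordan-Wigner matrices with NumPy, and evaluates RDM elements exactly from a state vector instead of sampling Pauli strings on hardware. The state `psi`, the coefficients `c1` and `c2`, and all function names are hypothetical choices made for illustration.

```python
from functools import reduce
import numpy as np

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])  # maps occupied (0,1) to empty (1,0)

def annihilation(p, n_modes):
    """Jordan-Wigner matrix for a_p: Z on modes before p, lowering operator on p."""
    factors = [Z] * p + [LOWER] + [I2] * (n_modes - p - 1)
    return reduce(np.kron, factors)

def dagger(m):
    return m.conj().T

def one_rdm(psi, ops):
    """gamma_pq = <psi| a_p^dagger a_q |psi> for all spin-orbital pairs."""
    n = len(ops)
    gamma = np.zeros((n, n), dtype=complex)
    for p in range(n):
        for q in range(n):
            gamma[p, q] = np.vdot(psi, dagger(ops[p]) @ ops[q] @ psi)
    return gamma

def two_rdm_element(psi, ops, p, q, r, s):
    """Gamma_pqrs = <psi| a_p^dagger a_q^dagger a_s a_r |psi>."""
    op = dagger(ops[p]) @ dagger(ops[q]) @ ops[s] @ ops[r]
    return np.vdot(psi, op @ psi)

# Toy state (assumption): c1 |1100> + c2 |0011> with two electrons in four modes.
n_modes = 4
c1, c2 = np.cos(0.3), -np.sin(0.3)
psi = np.zeros(2 ** n_modes)
psi[0b1100] = c1
psi[0b0011] = c2

ops = [annihilation(p, n_modes) for p in range(n_modes)]
gamma1 = one_rdm(psi, ops)
gamma_0101 = two_rdm_element(psi, ops, 0, 1, 0, 1)
gamma_0123 = two_rdm_element(psi, ops, 0, 1, 2, 3)
```

The trace of `gamma1` equals the electron number, which is a convenient sanity check before any property is computed from the RDMs. On a real device each operator would instead be expanded into Pauli strings and estimated from repeated measurements.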

The Scientist's Toolkit: Essential Reagents and Computational Materials

Table 3: Key Computational Tools and Methods

| Item / Reagent | Function / Role | Specifications / Notes |

|---|---|---|

| Active Space ((N_e, N_A)) | Reduces computational cost by focusing on correlated electrons/orbitals. | Critical choice; directly controls problem size on quantum hardware [13]. |

| Unitary Coupled Cluster (UCC) Ansatz | Parameterized quantum circuit to prepare correlated wavefunctions. | Often truncated to singles and doubles (UCCSD) for feasibility [13]. |

| Jordan-Wigner / Bravyi-Kitaev Transform | Maps fermionic operators to qubit (Pauli) operators. | Enables measurement on quantum processor [13]. |

| Reduced Density Cumulant (RDC) | Functional used to approximate high-rank RDMs from lower-rank ones. | Aims to reduce quantum measurement load, but fails under strong correlation [13]. |

| Orbital-Optimized oo-UCC | Variant of UCC that optimizes orbital coefficients alongside cluster amplitudes. | Improves description with a more compact circuit [13]. |

Data Presentation and Analysis

The computational burden of quantum chemistry methods based on RDMs scales severely with the size of the active space. This scaling is a primary motivation for exploring approximations like cumulant expansions.

Table 4: Scaling of Reduced Density Matrix (RDM) Computation

| RDM Type | Scaling with Active Space Size (N_A) | Key Applications | Approximation Viability |

|---|---|---|---|

| 1-RDM | (N_A^4) | Population analysis, one-electron properties | High fidelity |

| 2-RDM | (N_A^6) | Total energy evaluation, two-electron properties | Standard for many methods |

| 3-RDM | (N_A^8) | Higher-order properties | Approximations severely affect results [13] |

| 4-RDM | (N_A^{10}) | Quantum Linear Response (qLR-SD) | Approximations can work for equilibrium geometries [13] |

Distinguishing Intrinsic vs. Extrinsic Correlation Complexity in Molecular Wavefunctions

The accurate description of electron correlation presents a central challenge in quantum chemistry, critically impacting the prediction of chemical properties and reaction dynamics. Traditional approaches often conflate the inherent complexity of many-electron wavefunctions with the complexity arising from the choice of mathematical representation. Recent advances, powered by quantum information theory (QIT), provide a rigorous framework to disentangle these effects, formally distinguishing between intrinsic correlation (an inherent property of the system) and extrinsic correlation (a basis-dependent quantity) [17] [2]. This distinction is not merely philosophical; it offers a principled pathway to simplify electronic structure problems and develop more efficient computational strategies, both for classical simulation and emerging quantum algorithms [10].

This Application Note delineates the theoretical foundations of intrinsic and extrinsic correlation complexity and provides detailed protocols for their quantification in molecular systems. By framing electron correlation through the lens of quantum information theory, we equip researchers with methodologies to precisely diagnose the correlation structure of molecular wavefunctions, thereby informing the selection of active spaces, guiding the development of compact wavefunction ansatzes, and potentially illuminating quantum advantage in chemical simulations.

Theoretical Foundations

The Orbital and Particle Pictures of Correlation

Quantum information theory introduces two complementary perspectives for analyzing correlation in fermionic systems:

The Orbital Picture (Extrinsic Correlation): This view treats individual spin-orbitals as subsystems. The total orbital correlation quantifies the complexity of the many-body wavefunction relative to a specific orbital basis, such as the canonical molecular orbitals [17] [1] [2]. This value is extrinsic; it depends on the chosen basis and can be altered by a unitary transformation of the orbitals.

The Particle Picture (Intrinsic Correlation): This view considers the fundamental, indistinguishable electrons as the subsystems. The resulting particle correlation is an intrinsic property of the physical system, independent of any specific orbital representation [17] [2].

The crucial link between these two perspectives is established by a fundamental result: the particle correlation is equal to the total orbital correlation minimized over all possible orbital bases [17] [2]: [ I_{\text{total}}(\text{Particle}) = \min_{\text{Orbital Bases}} I_{\text{total}}(\text{Orbital}) ] The orbital basis that achieves this minimum is the set of Natural Orbitals. Consequently, the intrinsic correlation represents the minimal, and thus unavoidable, complexity of the wavefunction, while the extrinsic component represents additional complexity introduced by a suboptimal orbital choice [17].

The Role of Quantum Entanglement and Superselection Rules

In the orbital picture, quantum entanglement serves as a key quantifier of correlation. The von Neumann entropy of a reduced density matrix of one or more orbitals is a direct measure of their quantum correlation with the rest of the system [10] [18]. It is critical, however, to account for fundamental fermionic symmetries, known as superselection rules (SSRs). These rules forbid coherent superpositions of states with different fermion parity, and ignoring them can lead to a significant overestimation of the useful quantum entanglement in the system [10] [18]. Adherence to SSRs is therefore essential for a physically meaningful quantification of orbital entanglement.

Table 1: Core Concepts in Intrinsic vs. Extrinsic Correlation

| Concept | Definition | Theoretical Significance | Practical Implication |

|---|---|---|---|

| Intrinsic Correlation | Minimal correlation complexity over all orbital bases; inherent to the electron system [17] [2]. | Represents the fundamental, irreducible complexity of the many-body wavefunction. | Determines the ultimate compactness of a wavefunction representation and the resource requirements for quantum simulation. |

| Extrinsic Correlation | Correlation complexity relative to a specific orbital basis [17] [2]. | Quantifies the additional complexity imposed by the researcher's choice of representation. | Can be minimized by optimizing the orbital basis (e.g., using Natural Orbitals), simplifying classical and quantum computations. |

| Natural Orbitals | The orbital basis that diagonalizes the one-electron reduced density matrix and minimizes total orbital correlation [17]. | Provides the most efficient single-particle basis for expanding the wavefunction. | Using Natural Orbitals leads to the most compact representation and fastest convergence in configuration interaction calculations. |

| Superselection Rules (SSRs) | Fundamental symmetries restricting physically allowed superpositions in fermionic systems [10] [18]. | Prevents overestimation of physically accessible quantum resources like entanglement. | Reduces the number of measurements required on quantum hardware to reconstruct orbital correlation [10]. |

Protocols for Quantifying Correlation Complexity

Protocol 1: Calculating Orbital Entropies and Mutual Information

This protocol details the procedure for quantifying extrinsic correlation in a chosen molecular orbital basis using a quantum computer, as demonstrated for a vinylene carbonate + O₂ reaction system [10] [18].

Principle: Orbital-wise von Neumann entropies (S(i)) are computed from the eigenvalues of the one-orbital reduced density matrix (1-ORDM). The quantum mutual information (I(i,j)) between orbitals (i) and (j) is then derived from these entropies and the two-orbital entropy (S(i,j)), providing a measure of their total correlation [10].

Diagram 1: Orbital Correlation Measurement Workflow

Materials & Reagents:

- Classical Computational Chemistry Suite (e.g., PySCF): For initial geometry optimization and electronic structure calculation to generate reference data.

- Quantum Computing Framework: Includes fermion-to-qubit mapping (e.g., Jordan-Wigner transformation), ansatz circuit design, and measurement routines.

- Trapped-Ion Quantum Computer (e.g., Quantinuum H1-1) or equivalent: For state preparation and measurement.

- Active Space Molecular Orbitals: Typically generated via methods like CASSCF or AVAS to define the relevant orbital subspace [10].

Step-by-Step Procedure:

System Preparation & Active Space Selection:

- Use classical methods (e.g., Nudged Elastic Band) to determine molecular geometries of interest along a reaction path [10].

- Perform an AVAS projection or CASSCF calculation to obtain a chemically relevant, localized set of active molecular orbitals. This mitigates overestimation of correlation from disperse orbitals [10].

State Preparation on Quantum Hardware:

Measurement & Reconstruction of ORDMs:

- For each set of orbitals, determine the expectation values of Pauli operators required to construct the 1- and 2-ORDMs.

- Crucially, respect fermionic SSRs. This not only provides a physically correct result but also significantly reduces the number of unique measurements by allowing Pauli operators to be grouped into commuting sets [10] [18].

Noise Mitigation:

- Apply a post-measurement noise reduction scheme to the raw ORDMs. This involves:

- A thresholding technique to filter out small, unphysical singular values.

- A maximum likelihood estimation (MLE) to reconstruct a physical density matrix [10].
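As a rough illustration of what such a clean-up step does, the sketch below symmetrizes a noisy matrix, discards eigenvalues below a threshold, and renormalizes the trace. It is a simplified stand-in and not the thresholding-plus-MLE scheme of [10]; the function name, the threshold value, and the synthetic noise model are all assumptions.

```python
import numpy as np

def nearest_physical_density_matrix(rho_noisy, threshold=0.01):
    """Hermitize, zero eigenvalues at or below `threshold`, renormalize to unit trace."""
    herm = 0.5 * (rho_noisy + rho_noisy.conj().T)
    vals, vecs = np.linalg.eigh(herm)
    vals = np.where(vals > threshold, vals, 0.0)
    if vals.sum() <= 0.0:
        raise ValueError("no eigenvalue survived the threshold")
    vals = vals / vals.sum()
    # Rebuild the matrix from the filtered spectrum.
    return (vecs * vals) @ vecs.conj().T

# Synthetic example (assumption): a diagonal ORDM perturbed by small random noise.
rng = np.random.default_rng(1)
rho_true = np.diag([0.8, 0.2, 0.0, 0.0])
rho_noisy = rho_true + rng.normal(scale=0.02, size=(4, 4))
rho_fixed = nearest_physical_density_matrix(rho_noisy)
```

The result is Hermitian, positive semidefinite, and has unit trace by construction, so entropies computed from it are well defined. A genuine maximum likelihood reconstruction would additionally weight the fit by the measurement statistics.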

Data Analysis:

- Diagonalize the noise-mitigated 1-ORDM for orbital (i) to obtain its eigenvalues ({\lambda_k^{(i)}}).

- Calculate the orbital entropy: (S(i) = -\sum_k \lambda_k^{(i)} \log \lambda_k^{(i)}).

- Similarly, obtain the two-orbital entropy (S(i,j)) from the 2-ORDM.

- Calculate the mutual information: (I(i,j) = \frac{1}{2} [S(i) + S(j) - S(i,j)] (1-\delta_{ij})) [10].
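The data-analysis step reduces to a few lines of linear algebra. The following is a minimal sketch that assumes the ORDMs are already available as NumPy arrays. The two-orbital state used as input is a hypothetical example written in the local occupation basis (empty, spin-up, spin-down, doubly occupied); it was chosen so that the reduced matrices are diagonal and fermionic sign conventions play no role.

```python
import numpy as np

def von_neumann_entropy(rho, cutoff=1e-12):
    """S = -sum_k lambda_k log(lambda_k), skipping numerically zero eigenvalues."""
    vals = np.linalg.eigvalsh(rho)
    vals = vals[vals > cutoff]
    return float(-np.sum(vals * np.log(vals)))

def mutual_information(s_i, s_j, s_ij):
    """I(i,j) = 1/2 [S(i) + S(j) - S(i,j)] for i != j, using the 1/2 prefactor of the text."""
    return 0.5 * (s_i + s_j - s_ij)

# Hypothetical state: (|double, empty> + |empty, double>) / sqrt(2).
# Local basis index: 0 = empty, 1 = up, 2 = down, 3 = doubly occupied.
amp = np.zeros((4, 4))
amp[3, 0] = amp[0, 3] = 1.0 / np.sqrt(2.0)

rho_i = amp @ amp.conj().T                            # trace out orbital j
rho_j = amp.T @ amp.conj()                            # trace out orbital i
rho_ij = np.outer(amp.ravel(), amp.ravel().conj())    # two-orbital RDM (pure here)

s_i = von_neumann_entropy(rho_i)
s_j = von_neumann_entropy(rho_j)
s_ij = von_neumann_entropy(rho_ij)
i_ij = mutual_information(s_i, s_j, s_ij)
```

For this state each orbital is maximally mixed between two occupation patterns, so both one-orbital entropies equal log 2 and the pair entropy vanishes.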

Protocol 2: Determining Intrinsic Correlation via Natural Orbitals

This protocol describes a classically oriented method to approximate the intrinsic correlation of a system by identifying the orbital basis that minimizes the total orbital correlation—the Natural Orbitals.

Principle: The intrinsic correlation is defined by the particle picture, which is equivalent to the minimum total orbital correlation achievable by any orbital basis. This minimum is attained by the Natural Orbitals [17] [2].

Materials & Reagents:

- High-Performance Computing Cluster: For executing quantum chemistry calculations.

- Quantum Chemistry Software: Capable of multi-reference methods like CASSCF and density matrix analysis.

- Wavefunction Analysis Tool: To compute orbital entropies and mutual information from a given wavefunction.

Step-by-Step Procedure:

Initial Wavefunction Calculation:

- Perform a high-level wavefunction calculation for the molecular system of interest, such as a CASSCF or Full Configuration Interaction (FCI) calculation within a chosen active space.

Compute the One-Electron Reduced Density Matrix (1-RDM):

- From the converged wavefunction, extract the 1-RDM.

Diagonalize the 1-RDM:

- The eigenvectors of the 1-RDM are the Natural Orbitals. The eigenvalues are the corresponding natural occupancies.

Transform the Hamiltonian and Wavefunction:

- Rotate the active space orbitals from the initial basis (e.g., canonical) into the basis of Natural Orbitals.

Quantify Correlation in the Natural Orbital Basis:

- Recompute the total orbital correlation (e.g., the sum of all single-orbital entropies, (\sum_i S(i))) in this new basis.

- This minimized value is the closest numerical approximation to the system's intrinsic correlation for the given active space.
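The effect of the basis rotation can be checked numerically. The sketch below assumes a pure state with fixed particle number, so that each one-spin-orbital RDM is diagonal with entries (1 - n_i, n_i) and the total orbital correlation is the sum of binary entropies of the diagonal 1-RDM occupations. The occupation numbers and the random starting basis are hypothetical.

```python
import numpy as np

def binary_entropy(n, eps=1e-12):
    """Entropy of a spin-orbital with occupation n (natural log)."""
    n = np.clip(n, eps, 1.0 - eps)
    return -(n * np.log(n) + (1.0 - n) * np.log(1.0 - n))

def total_orbital_correlation(gamma):
    """Sum of single-spin-orbital entropies from the diagonal of the 1-RDM."""
    return float(np.sum(binary_entropy(np.real(np.diag(gamma)))))

rng = np.random.default_rng(0)
occ = np.array([0.97, 0.97, 0.03, 0.03])        # assumed natural occupations
q, _ = np.linalg.qr(rng.normal(size=(4, 4)))    # random orthogonal starting basis
gamma = q @ np.diag(occ) @ q.T                  # 1-RDM in a non-natural basis

# Diagonalize the 1-RDM and rotate into the natural orbital basis.
occupations, natural_orbitals = np.linalg.eigh(gamma)
gamma_no = natural_orbitals.T @ gamma @ natural_orbitals

i_initial = total_orbital_correlation(gamma)
i_natural = total_orbital_correlation(gamma_no)
```

Because the diagonal of a Hermitian matrix is majorized by its eigenvalues and the binary entropy is concave, `i_natural` can never exceed `i_initial`, whatever starting basis is drawn.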

Table 2: Comparison of Correlation Quantification Protocols

| Aspect | Protocol 1: Orbital Correlation (Quantum Computer) | Protocol 2: Intrinsic Correlation (Classical) |

|---|---|---|

| Primary Objective | Measure basis-dependent (extrinsic) correlation and entanglement between specific molecular orbitals [10]. | Find the minimal, intrinsic correlation by identifying the optimal orbital basis (Natural Orbitals) [17] [2]. |

| Key Measurement | Orbital von Neumann entropy and mutual information from ORDMs. | Natural orbital occupancies from the 1-RDM; total correlation in the Natural Orbital basis. |

| Critical Consideration | Account for superselection rules to avoid overestimating entanglement and to reduce measurement overhead [10] [18]. | Requires an initial, accurate multi-reference wavefunction as a starting point. |

| Main Application | Probing quantum effects in specific reactions (e.g., transition states) and benchmarking quantum hardware [10]. | Providing theoretical justification for orbital choices and guiding the development of efficient computational methods [17]. |

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions

| Item Name | Function/Definition | Relevance to Correlation Analysis |

|---|---|---|

| Natural Orbitals | The single-particle basis that diagonalizes the one-electron reduced density matrix (1-RDM). | The fundamental "reagent" for isolating intrinsic correlation, as it provides the most compact representation of the wavefunction [17]. |

| Active Space | A selected set of molecular orbitals and electrons treated with high-level quantum chemistry methods. | Defines the subsystem for correlation analysis; its selection (e.g., via AVAS) is critical for focusing on chemically relevant correlations [10]. |

| Orbital Entropy ((S(i))) | Von Neumann entropy of the reduced density matrix of orbital (i). | A direct quantifier of an orbital's total correlation with the rest of the system in a given basis [10] [18]. |

| Quantum Mutual Information ((I(i,j))) | A measure of the total correlation (classical and quantum) between a pair of orbitals (i) and (j). | Identifies strongly correlated orbital pairs that are critical for accurate active space selection and understanding bonding [10]. |

| Superselection Rules (SSRs) | Physical constraints forbidding quantum superpositions of states with different global particle number parity. | A necessary "filter" for obtaining physically meaningful entanglement values and reducing quantum measurement costs [10] [18]. |

Discussion

The formal separation of intrinsic and extrinsic correlation complexity has profound implications for quantum chemistry research and development. For researchers investigating complex reaction mechanisms, such as the vinylene carbonate + O₂ reaction, applying Protocol 1 allows for the precise identification of which molecular orbitals become highly correlated at transition states, providing a quantifiable picture of the quantum mechanical interactions driving the chemistry [10].

For scientists developing new computational methodologies, the intrinsic correlation concept (Protocol 2) provides a rigorous metric and a clear target: any efficient model for strong correlation should aim to capture the intrinsic correlation while avoiding the overhead of extrinsic complexity. This principle justifies the decades-long use of Natural Orbitals and opens pathways for designing new, more efficient orbital optimization protocols and wavefunction ansatzes [17] [2].

Finally, for the field of quantum computing for chemistry, these protocols establish a precise framework for assessing the performance of quantum algorithms. The correlation measures obtained from a quantum computer (Protocol 1) can be directly compared to classical benchmarks to verify accuracy. Furthermore, the distinction between intrinsic and extrinsic complexity helps delineate which aspects of a chemical problem are fundamentally challenging and which can be mitigated through algorithmic choices, thereby clarifying the potential path toward a practical quantum advantage [10].

The Theoretical Basis for Natural Orbitals in Simplifying Electronic Structures

Natural Orbitals (NOs) represent a fundamental concept in quantum chemistry, providing the optimal one-electron basis for describing many-electron wavefunctions. They are defined as the unique set of orbitals that diagonalize the one-particle reduced density matrix (1-RDM) of an N-electron system [19]. Mathematically, this is expressed as:

ΓΘ_k = p_kΘ_k (k = 1, 2, ...)

where Γ represents the first-order reduced density operator, Θ_k are the natural orbitals, and p_k represents the population (occupancy) of each eigenfunction Θ_k [19]. This definition reveals that NOs are intrinsic to the wavefunction itself rather than dependent on any particular basis set choice, making them a "natural" representation of electron density [19].

Within the framework of quantum information theory, recent research has revealed a remarkable property of natural orbitals: they effectively transform the nature of electron correlation from quantum to predominantly classical. Studies utilizing Shannon and von Neumann entropies have demonstrated that the difference between classical and quantum mutual information in molecular systems decreases by approximately 100-fold when using natural orbitals compared to canonical Hartree-Fock orbitals [20]. This profound insight suggests that computational tasks in quantum chemistry could be significantly simplified by employing natural orbitals, as they provide a representation where wavefunction correlations become essentially classical [20].

Theoretical Foundation and Orbital Hierarchies

The Natural Orbital Concept

Natural orbitals achieve their optimal description through a variational principle: they are the set of orthonormal orbitals that maximize electron occupancy [19]. For any normalized trial orbital φ, the occupancy p_φ can be evaluated as the expectation value of the density operator:

p_φ = ⟨φ|Γ|φ⟩

The variational maximization of p_φ for successive orthonormal trial orbitals leads to optimal populations p_k and orbitals Θ_k that satisfy the original eigenvalue equation [19]. The Pauli exclusion principle ensures these occupancies satisfy 0 ≤ p_k ≤ 2, with bonding natural bond orbitals (NBOs) typically exhibiting occupancies close to 2.000, and antibonding NBOs having occupancies near 0 [21].
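The maximization can be made explicit with a short worked step. Assuming the natural orbitals form a complete orthonormal set, any normalized trial orbital can be expanded in them; the expansion coefficients c_k are notation introduced here for illustration only.

```latex
\begin{align}
\varphi &= \sum_k c_k \, \Theta_k, \qquad \sum_k |c_k|^2 = 1, \\
p_\varphi &= \langle \varphi | \Gamma | \varphi \rangle
           = \sum_k |c_k|^2 \, p_k \;\le\; \max_k p_k .
\end{align}
```

The bound is reached when the trial orbital coincides with the natural orbital of highest occupancy. Repeating the argument in the orthogonal complement of the orbitals already found yields the remaining natural orbitals in order of decreasing occupancy.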

Hierarchies of Localized Orbitals

The natural orbital approach generates a sequence of natural localized orbital sets that form a bridge between atomic orbitals and molecular orbitals [21]:

Atomic orbital → Natural Atomic Orbital (NAO) → Natural Hybrid Orbital (NHO) → Natural Bond Orbital (NBO) → Natural Localized Molecular Orbital (NLMO) → Molecular orbital

Each level in this hierarchy provides increasingly sophisticated descriptions of molecular electronic structure while maintaining chemical interpretability. Natural Atomic Orbitals incorporate two important physical effects that distinguish them from isolated-atom natural orbitals: (1) spatial diffuseness optimized for effective atomic charge in the molecular environment, and (2) nodal features due to steric confinement in the molecular environment [19].

Table 1: Hierarchy of Natural Localized Orbitals and Their Characteristics

| Orbital Type | Description | Key Features | Primary Applications |

|---|---|---|---|

| Natural Atomic Orbitals (NAOs) | Localized 1-center orbitals representing effective "natural orbitals of atom A" in molecules | Incorporate breathing responses to charge shifts; maintain strict orthogonality | Natural population analysis; basis for constructing higher-level orbitals |

| Natural Hybrid Orbitals (NHOs) | Directed hybrids formed from NAOs on individual atoms | Optimized for directional bonding; maximum electron density along bonding directions | Describing atomic hybridization in molecular environments |

| Natural Bond Orbitals (NBOs) | Localized 1-center or 2-center orbitals with maximum electron density | Occupancies ideally close to 2.000; provide most accurate "natural Lewis structure" | Chemical bonding analysis; resonance structure evaluation |

| Natural Localized Molecular Orbitals (NLMOs) | Semi-localized orbitals intermediate between NBOs and canonical MOs | Retain chemical interpretability while incorporating delocalization effects | Analysis of delocalization corrections to Lewis structure |

Computational Advantages and Applications

Accelerating Configuration Interaction Calculations

Natural orbitals significantly enhance the efficiency of correlated electronic structure calculations. Recent advances in neural-network-assisted configuration interaction (NNCI) methods demonstrate that using approximate natural orbitals—eigenfunctions of the one-particle density matrix computed from intermediate many-body eigenstates—consistently reduces the number of determinants required to achieve a given accuracy level [22].

Across benchmarks for H₂O, NH₃, CO, and C₃H₈, natural orbitals provide a more compact representation of electron correlation compared to canonical Hartree-Fock orbitals [22]. This efficiency gain stems from the fact that natural orbitals concentrate electron occupation into fewer orbitals, with only core and valence-shell NAOs having significant occupancies compared to extra-valence Rydberg-type NAOs [19]. This condensation of occupancy effectively reduces the dimensionality of the problem to a "natural minimal basis" (NMB), spanning core and valence-shell NAOs only, while the residual "natural Rydberg basis" (NRB) can be largely ignored [19].

Active Space Selection for Multiconfigurational Problems

For systems with strong static correlation—such as larger conjugated systems, transition states involving bond breaking/formation, and transition metal complexes—natural orbitals provide a robust approach for active space selection in multiconfigurational calculations [23]. The Unrestricted Natural Orbital (UNO) criterion uses the fractionally occupied UHF natural orbitals to define the active space, with fractional occupancy generally meaning electron population between 0.02-1.98 or 0.01-1.99 [23].

This approach yields the same active space as much more expensive approximate full CI methods for typical strongly correlated systems including polyenes, polyacenes, and transition metal complexes such as Hieber's anion [(CO)₃FeNO]⁻ and ferrocene [23]. The UHF natural orbitals approximate the optimized CAS-SCF orbitals exceptionally well, with energy errors typically below 1 mEh/active orbital [23].

Table 2: Performance Comparison of Orbital Bases in Electronic Structure Calculations

| Method | Orbital Basis | Determinants Required | Correlation Energy Recovery | Computational Scaling |

|---|---|---|---|---|

| Neural Network CI | Canonical HF orbitals | Baseline | Baseline | O(N⁴) to O(N⁷), depending on method |

| Neural Network CI | Natural orbitals | Reduced by ~30-50% [22] | Equivalent accuracy with fewer determinants [22] | Same scaling but with smaller prefactor |

| CASSCF | Canonical orbitals | Large number for convergence | Often overestimates static correlation [23] | Factorial with active space size |

| CASSCF | UNOs | Minimal active space [23] | Balanced static/dynamic correlation [23] | Factorial but with smaller active space |

| DLPNO-CCSD | Pair Natural Orbitals | Highly compressed [24] | ~99.9% of canonical CCSD energy [24] | Near-linear with system size |

Specialized Natural Orbital Types and Methodologies

Pair Natural Orbitals for Local Correlation

Pair Natural Orbitals (PNOs) represent a specialized category designed specifically for efficient treatment of electron correlation in large systems. PNOs are defined as eigenvectors of the "pair density matrix" for each pair of localized occupied orbitals [24]. Unlike standard localization schemes (Ruedenberg, Pipek-Mezey) that only localize occupied orbitals, PNOs provide a compact virtual orbital basis that dramatically reduces the number of cluster amplitudes in coupled-cluster theory [24].

The compression is achieved by diagonalizing the pair density matrix for each occupied orbital pair (i,j), then discarding PNOs with occupation numbers below a threshold (TcutPNO) [24]. This approach enables coupled-cluster calculations on systems with thousands of atoms by achieving near-linear scaling of both memory and computational costs with system size [24].

Natural Bond Orbital Analysis

Natural Bond Orbital (NBO) analysis represents one of the most widely applied implementations of natural orbital theory, providing a mathematical foundation for qualitative Lewis structure concepts [21]. In NBO theory, each bonding NBO σ_AB can be expressed in terms of two directed valence hybrids (NHOs) h_A, h_B on atoms A and B:

σ_AB = c_A h_A + c_B h_B

The polarization coefficients c_A, c_B determine the bond character, varying smoothly from covalent (c_A = c_B) to ionic (c_A >> c_B) limits [21]. The optimal Lewis structure in NBO analysis is defined as that with the maximum amount of electronic charge in Lewis orbitals (Lewis charge), with minor contributions from non-Lewis orbitals signaling delocalization effects [21].

Experimental Protocols and Implementation

Protocol 1: Natural Orbital Implementation in Neural Network CI

Purpose: To enhance the efficiency of neural-network-assisted configuration interaction calculations by implementing natural orbitals as the single-particle basis.

Workflow:

- Initial Calculation: Perform Hartree-Fock calculation in an appropriate basis set (plane-wave with 1000 eV cutoff or numerical atomic orbitals) [22].

- Integral Transformation: Compute single- and two-particle integrals for the many-body Hamiltonian using canonical Hartree-Fock orbitals [22].

- Initial NNCI Iteration: Perform preliminary NNCI calculation to obtain an intermediate many-body wavefunction [22].

- Density Matrix Construction: Compute the one-particle reduced density matrix (1-RDM) from the intermediate NNCI solution [22].

- Natural Orbital Generation: Diagonalize the 1-RDM to obtain approximate natural orbitals [22].

- Final NNCI Iteration: Continue the neural-network-assisted selection in the rotated natural orbital basis [22].

- Validation: Compare the convergence rate and number of determinants required versus canonical orbital basis [22].

Key Parameters:

- Plane-wave cutoff: 1000 eV [22]

- Convergence threshold: squared residual < 10⁻¹¹ eV² per valence electron [22]

- Active space size: Typically 100-500 orbitals depending on system [22]

Protocol 2: UNO-CASSCF for Strongly Correlated Systems

Purpose: To determine the optimal active space for multiconfigurational wavefunctions using the Unrestricted Natural Orbital criterion for systems with strong static correlation.

Workflow:

- UHF Calculation: Perform unrestricted Hartree-Fock calculation using an analytical method accurate to fourth order in orbital rotation angles to ensure proper convergence [23].

- Natural Orbital Analysis: Compute natural orbitals from the UHF density matrix and identify fractionally occupied orbitals (occupancies between 0.02-1.98) [23].

- Active Space Definition: Define the active space as comprising all fractionally occupied natural orbitals [23].

- CASSCF Calculation: Perform complete active space self-consistent field calculation using the UNO-defined active space [23].

- Dynamical Correlation: Add dynamical correlation using perturbation theory or coupled-cluster methods [23].

- Validation: Compare with approximate full CI methods to verify active space completeness [23].
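The orbital-selection steps of this workflow can be sketched in a few lines. The example assumes the UHF alpha and beta density matrices are expressed in an orthonormal basis (in a non-orthogonal atomic orbital basis the overlap matrix would have to be taken into account), and it uses a hypothetical three-orbital model in which the alpha and beta electrons of one pair occupy slightly different orbitals. The function name and the model are illustrative only.

```python
import numpy as np

def uno_active_space(p_alpha, p_beta, lower=0.02, upper=1.98):
    """Return natural occupations, orbitals, and indices of fractionally occupied UNOs."""
    total = p_alpha + p_beta                      # spin-summed UHF density
    occ, orbs = np.linalg.eigh(total)
    order = np.argsort(occ)[::-1]                 # sort by decreasing occupation
    occ, orbs = occ[order], orbs[:, order]
    active = np.where((occ > lower) & (occ < upper))[0]
    return occ, orbs, active

# Hypothetical model: one doubly occupied core orbital plus a symmetry-broken pair.
theta = 0.3
core = np.array([1.0, 0.0, 0.0])
a = np.array([0.0, np.cos(theta), np.sin(theta)])     # alpha-spin orbital
b = np.array([0.0, np.cos(theta), -np.sin(theta)])    # beta-spin orbital

p_alpha = np.outer(core, core) + np.outer(a, a)
p_beta = np.outer(core, core) + np.outer(b, b)

occ, orbs, active = uno_active_space(p_alpha, p_beta)
n_active_electrons = int(round(occ[active].sum()))
```

In this model the core orbital keeps an occupation of 2 and is excluded, while the two fractionally occupied orbitals define a two-electron, two-orbital active space for the subsequent CASSCF step.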

Applications: Polyenes, polyacenes, Bergman cyclization reaction pathway, transition metal complexes [23].

Troubleshooting:

- For systems with multiple strongly correlated partners, use multiple independent UHF solutions and average their natural orbitals [23]

- For excited states, employ state-averaged natural orbitals from multiple UHF solutions [23]

Protocol 3: DLPNO-CCSD with Pair Natural Orbitals

Purpose: To perform accurate coupled-cluster calculations on large molecular systems using Domain-based Local Pair Natural Orbitals to reduce computational cost.

Workflow:

- Hartree-Fock Calculation: Obtain converged HF molecular orbitals [24].

- Occupied Orbital Localization: Localize occupied orbitals using Pipek-Mezey or Foster-Boys scheme [24].

- MP2 Initial Guess: Obtain MP2 guess for cluster amplitudes using localized occupied orbitals and canonical virtual orbitals [24].

- Pair Density Construction: Construct pair density matrix for each pair of localized occupied orbitals (i,j) in the virtual space [24].

- PNO Generation: Diagonalize each pair density matrix to obtain pair-natural orbitals and their occupation numbers [24].

- PNO Truncation: Discard PNOs with occupation numbers below threshold (TcutPNO, typically 10⁻⁷-10⁻⁵) [24].

- DLPNO-CCSD: Perform coupled-cluster calculation defining cluster amplitudes only for significant PNOs for each pair [24].

Key Parameters:

- TcutPNO: 10⁻⁷ (tight) to 10⁻⁵ (normal) [24]

- TCutPairs: Threshold for neglecting distant orbital pairs [24]

- TCutMKN: Threshold that controls the size of the local fitting domain assigned to each electron pair [24]
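The pair density construction and truncation steps can be sketched for a single orbital pair as follows. This is a toy illustration, not the production algorithm of [24]: the amplitude matrix is synthetic, and the normalization of the pair density is one common closed-shell convention that should be checked against the original reference before use. All names are hypothetical.

```python
import numpy as np

def pair_natural_orbitals(t_ij, diagonal_pair=False, t_cut_pno=1e-7):
    """Diagonalize a pair density built from amplitudes t_ij (virtual x virtual)."""
    scale = 2.0 if diagonal_pair else 1.0          # 1 + delta_ij
    t_tilde = (4.0 * t_ij - 2.0 * t_ij.T) / scale  # assumed closed-shell convention
    d_ij = t_tilde.T @ t_ij + t_tilde @ t_ij.T     # pair density in the virtual space
    occ, pnos = np.linalg.eigh(0.5 * (d_ij + d_ij.T))
    order = np.argsort(occ)[::-1]
    occ, pnos = occ[order], pnos[:, order]
    keep = occ >= t_cut_pno                        # discard weakly occupied PNOs
    return occ[keep], pnos[:, keep], occ

# Synthetic amplitudes (assumption): rapidly decaying spectrum in a random basis.
rng = np.random.default_rng(2)
n_virt = 6
q, _ = np.linalg.qr(rng.normal(size=(n_virt, n_virt)))
t_ij = q @ np.diag(10.0 ** -np.arange(1, n_virt + 1)) @ q.T

kept_occ, kept_pnos, all_occ = pair_natural_orbitals(t_ij, t_cut_pno=1e-7)
n_kept = kept_pnos.shape[1]
```

With these amplitudes only three of the six virtual directions survive the cutoff, which illustrates how a rapidly decaying occupation spectrum translates into a much smaller set of cluster amplitudes per pair.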

The Scientist's Toolkit: Essential Research Reagents

Table 3: Essential Computational Tools for Natural Orbital Calculations

| Tool/Software | Function | Application Context | Key Features |

|---|---|---|---|

| NBO Program | Natural Bond Orbital analysis | Chemical bonding analysis in molecules | NAO, NHO, NBO generation; Lewis structure analysis [19] [21] |

| MOLPRO/MOLCAS | Multireference quantum chemistry | CASSCF with UNO active spaces | Advanced CI methods; UNO-CASSCF implementation [23] |

| GPAW | DFT/HF calculator with PAW | Orbital basis generation for NNCI | Plane-wave basis; projector-augmented wave method [22] |

| DLPNO-CCSD Codes | Local coupled-cluster methods | Large-system correlation calculations | Linear scaling; PNO generation and truncation [24] |

| JANPA Package | Open-source NPA implementation | Natural population analysis | Free alternative for NAO transformation [21] |

Quantum Information Perspective and Future Directions

The intersection of quantum information theory and natural orbital analysis reveals profound insights about the nature of electron correlation. The dramatic reduction in quantum mutual information when using natural orbitals suggests that the computational complexity of the quantum many-body problem may be less severe than previously assumed [20]. This has significant implications for the development of quantum computing algorithms in quantum chemistry, as it indicates that classical preprocessing with natural orbitals could substantially reduce the quantum resources required for accurate electronic structure calculations.

Future research directions include developing improved methods for generating accurate natural orbitals with low computational cost, extending natural orbital approaches to time-dependent and excited-state problems, and further exploring the connections between quantum information measures and chemical bonding patterns. The integration of machine learning methods with natural orbital analysis, as demonstrated in neural-network CI approaches, represents a particularly promising avenue for enabling accurate calculations on increasingly complex molecular systems [22].

From Theory to Practice: QIT-Driven Methods for Chemical Discovery

The accurate quantification of electron correlation represents one of the most fundamental challenges in quantum chemistry, directly impacting the predictive capability of electronic structure methods across chemical, materials, and pharmaceutical research. Traditional quantum chemistry approaches have quantified correlation energy operationally as the difference between the exact non-relativistic energy and the Hartree-Fock energy [25]. While this definition has served the field for decades, it provides limited insight into the intrinsic complexity of many-electron wave functions or pathways toward more efficient computational strategies.

Quantum Information Theory (QIT) offers a transformative perspective by reframing electron correlation through rigorously defined concepts of entanglement and information [1] [2]. This framework enables the decomposition of correlation into mathematically rigorous, operationally meaningful components that can guide the development of both classical and quantum computational approaches. For drug development professionals, these advanced correlation measures provide deeper insight into electronic structure phenomena that underlie molecular reactivity, binding interactions, and spectroscopic properties—ultimately enhancing predictive modeling in complex biomolecular systems.

The synergy between QIT and quantum chemistry creates a powerful paradigm: QIT offers precise characterization of various aspects of electron correlation, potentially simplifying descriptions of correlated many-electron wave functions and inspiring new approaches to the electron correlation problem, while quantum chemistry provides essential expertise for developing novel quantum registers based on molecular systems [1]. This application note establishes the operational protocols for implementing these advanced correlation measures in practical research settings.

Theoretical Foundation: Orbital Versus Particle Correlation

Distinct Perspectives on Electron Correlation

QIT introduces two conceptually distinct perspectives on electron correlation that provide complementary insights into electronic structure:

Orbital Correlation: Quantifies wave function complexity relative to a specific orbital basis. This basis-dependent measure reflects the extrinsic complexity of the electronic system and varies with different orbital transformations [1] [2] [25].

Particle Correlation: Represents the minimal, intrinsic complexity of many-electron wave functions, independent of orbital choice. Mathematically, particle correlation equals the total orbital correlation minimized over all possible orbital bases [1] [2].

The theoretical relationship between these perspectives provides rigorous justification for the long-favored use of natural orbitals in quantum chemistry, as these orbitals specifically minimize the orbital correlation to reveal the intrinsic particle correlation [1] [2]. This distinction is particularly valuable for diagnosing strong correlation in transition metal complexes, open-shell systems, and bond-breaking processes—all computationally challenging scenarios relevant to pharmaceutical research.
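The basis dependence of orbital correlation can be illustrated with a toy model. The sketch below is not taken from the cited work; it assumes a two-electron singlet in two spatial orbitals, written in its natural-orbital basis as c1|1↑1↓> + c2|2↑2↓>, and rotates the orbitals by an angle theta. Because the state has fixed particle number and S_z, each one-orbital reduced density matrix is diagonal in the occupations {empty, ↑, ↓, doubly occupied}, so the orbital entropies follow directly from the occupation probabilities. The function names and the coefficient value 0.95 are illustrative assumptions.

```python
import math

def shannon(probs):
    """Return -sum p ln p, skipping (numerically) zero entries."""
    return -sum(p * math.log(p) for p in probs if p > 1e-14)

def total_orbital_entropy(c1, c2, theta):
    """S_1 + S_2 for the singlet c1|1up 1dn> + c2|2up 2dn> after rotating
    the two spatial orbitals by theta (theta = 0 is the natural-orbital basis)."""
    c, s = math.cos(theta), math.sin(theta)
    # Coefficient matrix in the rotated basis: C' = R^T diag(c1, c2) R
    c11 = c1 * c * c + c2 * s * s   # both electrons in rotated orbital 1
    c22 = c1 * s * s + c2 * c * c   # both electrons in rotated orbital 2
    c12 = (c2 - c1) * s * c         # one electron in each orbital
    # Occupation probabilities of orbital 1: double, empty, up-only, down-only.
    # Orbital 2 has the same set with "double" and "empty" swapped.
    probs = [c11**2, c22**2, c12**2, c12**2]
    return 2.0 * shannon(probs)

c1 = 0.95                          # illustrative natural-orbital amplitude
c2 = -math.sqrt(1.0 - c1**2)
thetas = [k * math.pi / 180.0 for k in range(0, 91)]   # 0 to 90 degrees
values = [total_orbital_entropy(c1, c2, t) for t in thetas]

print(f"natural orbitals (theta = 0): S_total = {values[0]:.4f}")
print(f"largest value on the scan:    S_total = {max(values):.4f}")
```

In this model the total orbital entropy is smallest at theta = 0 (and at theta = 90 degrees, which only relabels the two orbitals), consistent with the statement that natural orbitals minimize the orbital correlation.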

Mathematical Framework and Quantification

Within the QIT framework, correlation and entanglement are quantified through the geometry of quantum states and their subsystems [1] [2]. For a bipartite quantum system, the total correlation between subsystems A and B can be quantified through the quantum mutual information:

[I(A:B) = S(\rho_A) + S(\rho_B) - S(\rho_{AB})]

where (S(\rho) = -\text{Tr}(\rho \ln \rho)) is the von Neumann entropy, and (\rho_A), (\rho_B) are reduced density matrices obtained by partial tracing [10]. For molecular systems, these subsystems may represent individual orbitals, spatial regions, or specific particles, with appropriate modifications to account for fermionic antisymmetry and superselection rules [10].
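As a minimal numerical illustration of the mutual information formula, the sketch below evaluates I(A:B) for two distinguishable qubits using NumPy. It deliberately ignores fermionic antisymmetry and superselection rules, so it shows only the bare definition; the helper names are illustrative choices.

```python
import numpy as np

def von_neumann_entropy(rho):
    """S(rho) = -Tr(rho ln rho), evaluated from the eigenvalues of rho."""
    evals = np.linalg.eigvalsh(rho)
    evals = evals[evals > 1e-12]          # convention: 0 ln 0 = 0
    return float(-np.sum(evals * np.log(evals)))

def partial_trace(rho_ab, dim_a, dim_b, keep):
    """Reduce a bipartite density matrix to subsystem 'A' or 'B'."""
    rho = rho_ab.reshape(dim_a, dim_b, dim_a, dim_b)
    if keep == "A":
        return np.einsum("ijkj->ik", rho)  # trace over B
    return np.einsum("ijik->jk", rho)      # trace over A

def mutual_information(rho_ab, dim_a, dim_b):
    """I(A:B) = S(rho_A) + S(rho_B) - S(rho_AB)."""
    rho_a = partial_trace(rho_ab, dim_a, dim_b, "A")
    rho_b = partial_trace(rho_ab, dim_a, dim_b, "B")
    return (von_neumann_entropy(rho_a) + von_neumann_entropy(rho_b)
            - von_neumann_entropy(rho_ab))

# Maximally entangled Bell state (|00> + |11>)/sqrt(2)
psi = np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2.0)
rho_bell = np.outer(psi, psi.conj())

# Uncorrelated product state |00>
rho_product = np.diag([1.0, 0.0, 0.0, 0.0])

mi_bell = mutual_information(rho_bell, 2, 2)
mi_product = mutual_information(rho_product, 2, 2)
print(mi_bell, mi_product)
```

For the Bell state each reduced state is maximally mixed while the joint state is pure, so I(A:B) = 2 ln 2; for the product state all three entropies vanish and I(A:B) = 0.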

Table 1: Key Quantum Information Theoretic Measures of Electron Correlation

| Measure | Mathematical Definition | Physical Interpretation | Application Context |

|---|---|---|---|

| Orbital Entropy | (S_i = -\sum_{\alpha} \lambda_{\alpha} \ln \lambda_{\alpha}) [10] | Complexity of orbital i when rest of system is traced out | Basis-dependent correlation assessment |

| Mutual Information | (I_{ij} = S_i + S_j - S_{ij}) [10] | Total correlation between orbitals i and j | Identifying strongly correlated orbital pairs |

| Particle Correlation | (\min_{U} \sum_i S_i(U\rho U^\dagger)) [1] | Intrinsic correlation independent of orbital basis | Fundamental correlation complexity |

| Cumulant Order Parameter | (\lambda_2 = \gamma_2 - \gamma_1 \wedge \gamma_1 + \text{exchange}) [25] | Non-factorizable (connected) part of the 2-RDM | Separating Fermi from Coulomb correlation |

Experimental and Computational Protocols

Research Reagent Solutions for Correlation Analysis

Table 2: Essential Computational Tools for Electron Correlation Quantification

| Research Reagent | Function | Example Implementation |

|---|---|---|

| Orbital Optimization Algorithms | Generate natural orbitals that minimize orbital correlation | CASSCF orbital optimization [10] |

| Active Space Selection Methods | Identify strongly correlated orbital subspaces | AVAS projection onto atomic orbitals [10] |

| Quantum Circuit Compilation | Prepare molecular states on quantum hardware | Jordan-Wigner transformation, VQE ansätze [10] |

| Reduced Density Matrix Solvers | Compute orbital entropies and mutual information | Classical diagonalization or quantum measurement [10] |

| Information-Theoretic Descriptors | Predict correlation energies from electron density | Shannon entropy, Fisher information [26] |

Protocol 1: Orbital Correlation Measurement via Classical Computation

Purpose: To quantify orbital-wise correlation and entanglement in molecular systems using classical computational resources.

Step-by-Step Workflow:

System Preparation

- Select molecular geometry and atomic basis set (e.g., def2-SVP [10])

- Perform Hartree-Fock calculation to obtain mean-field reference

Active Space Selection

- Identify chemically relevant orbitals using automated methods (e.g., AVAS [10])

- Project canonical orbitals onto targeted atomic orbitals (e.g., oxygen p orbitals for transition metal complexes)

- Determine active space size (e.g., 6 electrons in 9 orbitals)

Wavefunction Optimization

- Perform CASSCF calculation to optimize both CI coefficients and orbital shapes [10]

- Apply symmetry constraints (e.g., (\langle S^2 \rangle = 0) for singlet states)

- Converge wavefunction to stable solution

Orbital Reduced Density Matrix (ORDM) Construction

- Compute 1- and 2-orbital reduced density matrices from full wavefunction

- Apply fermionic superselection rules to ensure physically meaningful results [10]

Entropy and Correlation Calculation

- Diagonalize each ORDM to obtain eigenvalues (\lambda_\alpha)

- Compute orbital entropies: (S_i = -\sum_{\alpha} \lambda_{\alpha} \ln \lambda_{\alpha}) [10]

- Compute orbital-orbital mutual information: (I_{ij} = S_i + S_j - S_{ij})
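The entropy step can be sketched for the one-orbital case without building any matrices. The code below is a simplified illustration, not the cited implementation: it assumes the wavefunction is given as a determinant expansion with fixed particle number and S_z, in which case each one-orbital reduced density matrix is diagonal in the local occupations {empty, ↑, ↓, doubly occupied} and its eigenvalues are just the summed determinant weights. The input format (a dictionary keyed by alpha and beta occupation tuples) and the example coefficients are hypothetical.

```python
import math
from collections import defaultdict

def one_orbital_entropies(ci, n_orb):
    """One-orbital entropies S_i from a determinant expansion.

    ci maps (alpha_occupations, beta_occupations) -> CI coefficient, where each
    occupation entry is a tuple of 0/1 of length n_orb. Assumes fixed particle
    number and S_z, so each one-orbital RDM is diagonal in the occupation basis.
    """
    norm = sum(abs(c) ** 2 for c in ci.values())
    entropies = []
    for i in range(n_orb):
        weights = defaultdict(float)
        for (alpha, beta), c in ci.items():
            # Local state of orbital i in this determinant: (n_up, n_down)
            weights[(alpha[i], beta[i])] += abs(c) ** 2 / norm
        entropies.append(-sum(w * math.log(w) for w in weights.values() if w > 1e-14))
    return entropies

# Hypothetical closed-shell expansion: two electrons in three orbitals,
# dominated by double occupation of orbital 0 (coefficients are illustrative).
ci = {
    ((1, 0, 0), (1, 0, 0)): 0.9,
    ((0, 1, 0), (0, 1, 0)): -0.4,
    ((0, 0, 1), (0, 0, 1)): -0.1,
}
entropies = one_orbital_entropies(ci, 3)
for i, s in enumerate(entropies):
    print(f"S_{i} = {s:.4f}")
```

A single determinant gives S_i = 0 for every orbital. The mutual information additionally needs the two-orbital entropies S_{ij}, whose reduced density matrices are only block diagonal, so they require an explicit diagonalization that this sketch does not cover.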

Data Analysis

- Identify strongly correlated orbital pairs (high (I_{ij}))

- Map correlation networks to chemical intuition

- Compare different orbital bases (canonical vs. localized)

Figure 1: Classical protocol for orbital correlation measurement
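The data-analysis step of Protocol 1 amounts to ranking orbital pairs by mutual information. The helper below is a small sketch of that ranking; the threshold value and the example matrix are placeholder numbers chosen for illustration, not computed results.

```python
def strongly_correlated_pairs(mutual_info, threshold):
    """Return (i, j, I_ij) for i < j with I_ij > threshold, strongest first.

    mutual_info is a symmetric square matrix given as a list of lists.
    """
    n = len(mutual_info)
    pairs = [
        (i, j, mutual_info[i][j])
        for i in range(n)
        for j in range(i + 1, n)
        if mutual_info[i][j] > threshold
    ]
    return sorted(pairs, key=lambda item: item[2], reverse=True)

# Placeholder mutual-information matrix for four orbitals (illustrative only)
example_mi = [
    [0.00, 0.62, 0.03, 0.01],
    [0.62, 0.00, 0.02, 0.04],
    [0.03, 0.02, 0.00, 0.35],
    [0.01, 0.04, 0.35, 0.00],
]
pairs = strongly_correlated_pairs(example_mi, threshold=0.1)
for i, j, value in pairs:
    print(f"orbitals {i} and {j}: I = {value:.2f}")
```

With these placeholder values the orbitals split into two strongly coupled pairs, (0, 1) and (2, 3), which is the kind of pattern one would then compare against chemical intuition about bonding and antibonding partners.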

Protocol 2: Quantum Computation of Orbital Entropies

Purpose: To measure orbital correlation and entanglement directly on quantum hardware, bypassing classical wavefunction storage limitations.

Step-by-Step Workflow:

State Preparation

- Encode fermionic problem into qubits using Jordan-Wigner transformation [10]

- Prepare molecular ground state using variational quantum eigensolver (VQE) with optimized ansatz
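To make the Jordan-Wigner encoding concrete, the sketch below builds the qubit matrices of the fermionic annihilation operators with NumPy and checks the canonical anticommutation relations. It assumes the common convention that qubit state |1> means "occupied" and that the Z string acts on modes with lower index; other conventions differ only by relabeling.

```python
import numpy as np
from functools import reduce

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])  # |0><1|: empties an occupied mode

def jw_annihilation(j, n_modes):
    """Matrix of a_j: Z on modes 0..j-1, |0><1| on mode j, identity afterwards."""
    factors = [Z] * j + [LOWER] + [I2] * (n_modes - j - 1)
    return reduce(np.kron, factors)

def anticommutator(a, b):
    return a @ b + b @ a

n_modes = 3
ops = [jw_annihilation(j, n_modes) for j in range(n_modes)]
identity = np.eye(2 ** n_modes)

# Largest violation of {a_i, a_j^dagger} = delta_ij and {a_i, a_j} = 0
max_violation = 0.0
for i in range(n_modes):
    for j in range(n_modes):
        expected = identity if i == j else np.zeros_like(identity)
        max_violation = max(
            max_violation,
            np.abs(anticommutator(ops[i], ops[j].conj().T) - expected).max(),
            np.abs(anticommutator(ops[i], ops[j])).max(),
        )
print("largest violation of the anticommutation relations:", max_violation)
```

The Z strings are what restore fermionic sign structure on qubits, and they are also why operators on non-adjacent orbitals become multi-qubit Pauli strings in the measurement step.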

Measurement Strategy Optimization

- Account for fermionic superselection rules to reduce measurement overhead [10]

- Group Pauli operators into commuting sets to minimize quantum circuit executions

- Construct measurement circuits for ORDM elements
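One simple way to group Pauli operators is greedy grouping by qubit-wise commutativity, sketched below. This is an assumption for illustration: the source does not specify the grouping scheme, and qubit-wise commutativity is a stricter condition than general commutativity, so it may produce more groups than an optimal scheme. The example Pauli strings are arbitrary.

```python
def qubitwise_commute(p, q):
    """True if, on every qubit, the two Paulis are equal or one is the identity."""
    return all(a == b or a == "I" or b == "I" for a, b in zip(p, q))

def group_paulis(paulis):
    """Greedily place each Pauli string in the first group it is compatible with."""
    groups = []
    for p in paulis:
        for group in groups:
            if all(qubitwise_commute(p, q) for q in group):
                group.append(p)
                break
        else:
            groups.append([p])  # no compatible group found: start a new one
    return groups

paulis = ["ZZII", "ZIZI", "IZIZ", "XXII", "YYII", "IIXX", "XXXX"]
groups = group_paulis(paulis)
for k, group in enumerate(groups):
    print(f"measurement setting {k}: {group}")
```

Each group can be measured with a single circuit, since all of its members are diagonalized by the same single-qubit basis rotations; here seven Pauli strings reduce to three measurement settings.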

Quantum Execution

- Execute measurement circuits on quantum hardware (e.g., trapped-ion quantum computer [10])

- Collect statistical samples for ORDM matrix elements

Noise Mitigation

- Apply post-measurement noise reduction schemes

- Use thresholding method to filter small singular values from noisy ORDMs [10]

- Apply maximum likelihood estimation to reconstruct physical ORDMs
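The idea behind the noise-mitigation step can be sketched with a simple eigenvalue filter. The code below is a simplified stand-in, not the thresholding or maximum likelihood procedure of the cited work: it symmetrizes a noisy matrix, discards eigenvalues below a threshold, and renormalizes the trace, which is enough to return a valid density matrix. The threshold, the noise level, and the example matrix are illustrative assumptions.

```python
import numpy as np

def project_to_physical(noisy_rdm, threshold=1e-2):
    """Symmetrize, zero out eigenvalues below threshold, renormalize trace to 1."""
    herm = 0.5 * (noisy_rdm + noisy_rdm.conj().T)
    evals, evecs = np.linalg.eigh(herm)
    evals = np.where(evals < threshold, 0.0, evals)   # also removes negative values
    if evals.sum() <= 0.0:
        raise ValueError("all eigenvalues fell below the threshold")
    evals = evals / evals.sum()
    # Rebuild rho = sum_k lambda_k |v_k><v_k|
    return (evecs * evals) @ evecs.conj().T

# Illustrative one-orbital RDM with two populated occupations, plus synthetic noise
rng = np.random.default_rng(0)
ideal = np.diag([0.9, 0.1, 0.0, 0.0])
noisy = ideal + 0.005 * rng.normal(size=(4, 4))

cleaned = project_to_physical(noisy)
cleaned_evals = np.linalg.eigvalsh(cleaned)
print("trace:", np.trace(cleaned).real)
print("eigenvalues:", np.round(cleaned_evals, 4))
```

Filtering matters for entropies in particular, because small spurious eigenvalues contribute disproportionately to -λ ln λ and would otherwise inflate the apparent correlation.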

Entropy Computation

- Diagonalize noise-corrected ORDMs to obtain eigenvalues

- Compute von Neumann entropies for individual orbitals and orbital pairs

- Validate results against noiseless benchmarks where available

Technical Notes: For the Quantinuum H1-1 trapped-ion quantum computer, superselection rules significantly reduce the number of circuits required for ORDM construction [10]. When superselection rules are properly accounted for, one-orbital entanglement vanishes unless opposite-spin open-shell configurations are present in the wavefunction [10].

Figure 2: Quantum computing protocol for orbital entropy measurement

Applications and Case Studies

Correlation Analysis in Chemical Reactions

The vinylene carbonate (VC) + O(_2) → dioxetane reaction, relevant to lithium-ion battery degradation, provides an illustrative case study for applying orbital correlation measures [10]. This reaction involves a strongly correlated transition state with stretched oxygen bonds aligning to the C-C bond of VC.

Protocol Application:

- System: 4 energetically shallowest molecular orbitals from AVAS projection (6 electrons in 4 orbitals) [10]

- Method: CASSCF with spin constraints followed by orbital entropy calculation

- Key Finding: 2p oxygen orbitals show intensified correlation at the transition state (images 7-10), settling to weaker correlation in the product state [10]

- Chemical Insight: Orbital correlation measures directly identify the electronic origins of reaction barriers in this chemically relevant system

Predicting Correlation Energies via Information-Theoretic Approach

Beyond wavefunction-based measures, density-based information-theoretic quantities provide an alternative pathway for correlation energy prediction [26]. This approach establishes linear relationships between ITA descriptors computed at the Hartree-Fock level and post-Hartree-Fock correlation energies.

Table 3: Performance of ITA Quantities for Correlation Energy Prediction

| System Class | Best-Performing ITA Descriptors | Prediction RMSD | Linear Correlation (R²) |

|---|---|---|---|

| Octane Isomers | Fisher Information ((I_F)) [26] | <2.0 mH | ~0.99 |

| Polymeric Chains | Shannon Entropy ((S_S)), Fisher Information ((I_F)) [26] | 1.5-4.0 mH | ~1.000 |

| Molecular Clusters | Multiple descriptors [26] | 17-42 mH | >0.990 |

| Protonated Water Clusters | Onicescu Energy ((E_2), (E_3)) [26] | 2.1 mH | 1.000 |

Protocol for LR(ITA) Correlation Energy Prediction:

- Compute electron density (\rho(\mathbf{r})) at Hartree-Fock level

- Calculate ITA quantities:

- Shannon entropy: (S_S = -\int \rho(\mathbf{r}) \ln \rho(\mathbf{r}) d\mathbf{r})

- Fisher information: (I_F = \int \frac{|\nabla \rho(\mathbf{r})|^2}{\rho(\mathbf{r})} d\mathbf{r})

- Onicescu information energy: (E_2 = \int \rho^2(\mathbf{r}) d\mathbf{r})

- Establish linear regression between ITA quantities and reference correlation energies (MP2, CCSD, or CCSD(T))

- Predict correlation energies for similar systems using regression model
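The descriptor step of this protocol can be checked on a density with known answers. The sketch below is a sanity check, not a Hartree-Fock calculation: it assumes a spherically symmetric Gaussian model density normalized to N electrons, integrates the three ITA quantities on a radial grid, and compares them with their closed-form values. The exponent, electron count, and grid are illustrative choices; a real application would take the density from a Hartree-Fock calculation and then fit a linear regression to reference correlation energies.

```python
import numpy as np

def radial_integral(f, r):
    """Integrate f(r) * 4 pi r^2 dr with the trapezoidal rule."""
    g = 4.0 * np.pi * r**2 * f
    return float(np.sum(0.5 * (g[1:] + g[:-1]) * np.diff(r)))

def ita_descriptors(rho, r):
    """Shannon entropy, Fisher information and Onicescu energy E_2 of a
    spherically symmetric density rho(r) sampled on the radial grid r."""
    grad = np.gradient(rho, r)                 # d rho / dr = |grad rho| here
    safe = np.where(rho > 1e-300, rho, 1e-300)  # avoid log(0) and 0/0
    shannon = radial_integral(-rho * np.log(safe), r)
    fisher = radial_integral(grad**2 / safe, r)
    onicescu = radial_integral(rho**2, r)
    return shannon, fisher, onicescu

# Model density: rho(r) = N (alpha/pi)^(3/2) exp(-alpha r^2)
n_elec, alpha = 2.0, 1.0
r = np.linspace(0.0, 10.0, 20001)
prefactor = n_elec * (alpha / np.pi) ** 1.5
rho = prefactor * np.exp(-alpha * r**2)

shannon, fisher, onicescu = ita_descriptors(rho, r)

# Closed-form values for the Gaussian model density
shannon_exact = n_elec * (1.5 - np.log(prefactor))
fisher_exact = 6.0 * alpha * n_elec
onicescu_exact = n_elec**2 * (alpha / (2.0 * np.pi)) ** 1.5

print(f"Shannon  S_S: {shannon:.6f} (exact {shannon_exact:.6f})")
print(f"Fisher   I_F: {fisher:.6f} (exact {fisher_exact:.6f})")
print(f"Onicescu E_2: {onicescu:.6f} (exact {onicescu_exact:.6f})")
```

Note that the density here integrates to the number of electrons rather than to one, matching the formulas in the protocol, so the Shannon entropy is not bounded below by zero and scales with system size.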

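This protocol approaches chemical accuracy (about 1 kcal/mol, or roughly 1.6 mH) for several of the system classes in Table 3 while avoiding expensive post-Hartree-Fock computations [26], although the errors for molecular clusters (17-42 mH) remain well above that threshold. For the system classes where it performs well, it is particularly valuable for high-throughput screening in drug discovery applications.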

The operational measures of electron correlation provided by quantum information theory represent a significant advancement beyond traditional correlation energy definitions. The distinction between orbital and particle correlation offers profound theoretical insights, while practical protocols for measuring orbital entropies—whether through classical computation or quantum hardware—provide researchers with actionable tools for analyzing complex electronic structures.

For pharmaceutical researchers, these approaches enable deeper understanding of electronic phenomena in drug-target interactions, metalloenzyme activity, and excited state processes. The ability to identify intrinsically correlated orbital networks within complex molecular systems guides the development of more efficient computational strategies and active space selections for accurate property prediction.