Quantifying Quantum Entanglement in Molecules: From Fundamental Measures to Drug Discovery Applications

Abstract

This article provides a comprehensive overview of the theory, measurement, and application of quantum entanglement and correlation quantification in molecular systems. Aimed at researchers and drug development professionals, it explores foundational concepts distinguishing classical from quantum correlations and details advanced methodologies from orbital von Neumann entropy to machine-learning-assisted multicopy measurements. The content addresses key challenges such as fermionic superselection rules and measurement optimization on noisy quantum hardware, while validating approaches through comparisons with traditional tomography and benchmark states. By synthesizing recent advances from quantum computing and quantum information science, this review highlights the transformative potential of entanglement quantification for understanding chemical reactions, optimizing active spaces, and accelerating computational drug discovery.

Quantum vs. Classical: Defining Correlation and Entanglement in Molecular Systems

In quantum information science, the distinction between separable states and genuinely entangled states represents a fundamental boundary with profound implications for quantum chemistry, materials science, and drug discovery. This division separates systems whose correlations admit a classical (local hidden variable) description from those possessing entanglement. Entanglement is a uniquely quantum form of correlation and a key resource behind potential exponential computational advantages.

A separable quantum state is defined as a state that can be written as a probabilistic mixture of product states. For a bipartite system, this can be expressed as ρ = Σ pᵢ ρᵢᴬ ⊗ ρᵢᴮ, where pᵢ represents a probability distribution [1]. These states contain only classical correlations and can be prepared using local operations and classical communication alone.

In contrast, genuinely entangled states cannot be factorized in this way. The seminal EPR state |Ψ⁻⟩ = 1/√2(|↑↓⟩ - |↓↑⟩) exemplifies this concept, where the state of the entire system is well-defined while individual subsystems remain maximally undetermined [1]. As Schrödinger noted in 1935, "The whole is in a definite state, the parts taken individually are not" [1], capturing the essence of pure-state entanglement where knowledge is fundamentally incomplete at the subsystem level.
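A minimal NumPy sketch can make the separable/entangled distinction concrete for two qubits. It relies on the positive-partial-transpose (PPT, Peres-Horodecki) test, which the text does not mention and which is brought in here as an assumption. A state whose partial transpose has a negative eigenvalue is entangled. For two qubits (and qubit-qutrit systems) a non-negative partial transpose also guarantees separability, but in larger dimensions PPT is only a necessary condition. The function names are illustrative.

```python
import numpy as np

def projector(v: np.ndarray) -> np.ndarray:
    """|v><v| for a normalized state vector v."""
    return np.outer(v, v.conj())

def partial_transpose_b(rho: np.ndarray) -> np.ndarray:
    """Partial transpose on qubit B of a 4x4 two-qubit density matrix."""
    r = rho.reshape(2, 2, 2, 2)                    # indices (a, b, a', b')
    return r.transpose(0, 3, 2, 1).reshape(4, 4)   # swap b <-> b'

def is_ppt(rho: np.ndarray, tol: float = 1e-12) -> bool:
    """True if the partial transpose has no negative eigenvalues."""
    return bool(np.linalg.eigvalsh(partial_transpose_b(rho)).min() >= -tol)

up = np.array([1.0, 0.0])
down = np.array([0.0, 1.0])

# Separable: sum_i p_i rho_i^A (x) rho_i^B with p = (1/2, 1/2).
rho_sep = (0.5 * np.kron(projector(up), projector(up))
           + 0.5 * np.kron(projector(down), projector(down)))

# EPR singlet |Psi^-> = (|up down> - |down up>) / sqrt(2).
singlet = (np.kron(up, down) - np.kron(down, up)) / np.sqrt(2)
rho_singlet = projector(singlet)

print(is_ppt(rho_sep))      # True  -> separable (two qubits)
print(is_ppt(rho_singlet))  # False -> entangled
```

The singlet's partial transpose has eigenvalues {1/2, 1/2, 1/2, -1/2}. The single negative eigenvalue is what certifies entanglement.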

Theoretical Framework and Quantitative Measures

Mathematical Formalism and Key Properties

The mathematical distinction between separable and entangled states enables rigorous quantification of quantum correlations essential for molecular simulations:

Separable States Characteristics:

- Can be prepared using shared random numbers ("over the phone") and local operations

- Contain no entanglement (although separable mixed states can still carry non-classical correlations such as quantum discord)

- All correlations can be explained by local hidden variable models

- Satisfy all Bell inequalities [1]

Entangled States Characteristics:

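- Can violate Bell inequalities, demonstrating non-local correlations (every pure entangled state violates some Bell inequality, but some mixed entangled states do not)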

- Enable quantum teleportation and superdense coding

- Are a necessary, though not sufficient, resource for exponential speedups in pure-state quantum computation

- Exhibit measurement correlations that cannot be reproduced classically

For pure states, the presence of entanglement is determined by the von Neumann entropy of the reduced density matrices. A pure state |ψ⟩ᴬᴮ is entangled if and only if the reduced state ρᴬ = Tr_B|ψ⟩⟨ψ| is mixed, with the degree of entanglement quantified by S(ρᴬ) = -Tr(ρᴬ log₂ ρᴬ) [1].
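For a pure state, the eigenvalues of ρᴬ are the squared Schmidt coefficients. This means S(ρᴬ) can be computed from a singular value decomposition without forming ρᴬ explicitly. The following is a minimal NumPy sketch, assuming the state vector is ordered with subsystem A as the leading (most significant) index. The function name is illustrative.

```python
import numpy as np

def entanglement_entropy(psi: np.ndarray, dim_a: int, dim_b: int) -> float:
    """S(rho_A) in bits for a normalized pure state |psi>_AB.

    Reshaping psi into a dim_a x dim_b matrix M gives rho_A = M M^dagger, whose
    eigenvalues are the squared singular values (Schmidt coefficients) of M.
    """
    m = np.asarray(psi, dtype=complex).reshape(dim_a, dim_b)
    p = np.linalg.svd(m, compute_uv=False) ** 2
    p = p[p > 1e-12]                      # convention: 0 log 0 = 0
    return max(0.0, float(-np.sum(p * np.log2(p))))

up, down = np.array([1.0, 0.0]), np.array([0.0, 1.0])
singlet = (np.kron(up, down) - np.kron(down, up)) / np.sqrt(2)
product = np.kron(up, (up + down) / np.sqrt(2))

print(round(entanglement_entropy(singlet, 2, 2), 6))  # 1.0: rho_A = I/2, maximally mixed
print(round(entanglement_entropy(product, 2, 2), 6))  # 0.0: rho_A is pure
```

For pure states, this same number is also the distillable entanglement and the entanglement cost.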

Operational Measures of Entanglement

For mixed states, quantifying entanglement becomes more nuanced. Two key measures have emerged with direct physical significance:

Distillable Entanglement (D(ρ)): The number of maximally entangled EPR pairs that can be extracted per copy of ρ in the asymptotic limit using local operations and classical communication [1]

Entanglement Cost (E(ρ)): The minimum number of EPR pairs required per copy of ρ to prepare many copies of ρ using local operations and classical communication, in the asymptotic limit [1]

For pure states, these measures coincide and equal the von Neumann entropy of the reduced states, establishing a reversible framework for entanglement manipulation.

Table 1: Quantitative Measures of Entanglement for Different State Types

| State Type | Separability Criteria | Entanglement Measure | Bell Inequality |
|---|---|---|---|
| Pure Separable | Factorizable as \|ψ⟩ = \|ψᴬ⟩ ⊗ \|ψᴮ⟩ | E = 0 | Never violated |
| Pure Entangled | Not factorizable | E = S(ρᴬ) = S(ρᴮ) > 0 | Always violated |
| Mixed Separable | Convex combination of product states | D(ρ) = E(ρ) = 0 | Never violated |
| Mixed Entangled | Not separable | D(ρ) ≤ E(ρ) | May be violated |

Experimental Detection and Verification

Laboratory Protocols for Entanglement Detection

Experimental verification of entanglement in molecular systems requires sophisticated protocols. Recent breakthroughs at Princeton University demonstrate a comprehensive methodology for preparing and detecting quantum correlations between molecules:

Molecular Preparation Protocol:

- Cool two atomic gases (sodium and rubidium) to nanokelvin temperatures using evaporative cooling techniques

- Achieve Bose-Einstein condensation in both atomic gases

- Coax atoms into pairing as sodium-rubidium molecules using a Feshbach resonance or photoassociation

- Transfer molecules to absolute ground state using stimulated Raman adiabatic passage, freezing all rotations and vibrations

- Isolate molecules in a vacuum chamber within an optical lattice created by interfering laser beams [2]

Quantum Correlation Detection Protocol:

- Prepare molecules in a well-defined internal and motional quantum state

- Apply a sudden "nudge" to push the system out of equilibrium

- Allow molecules to interact and build up quantum entanglement

- Use quantum gas microscopy with high spatial resolution to observe individual molecules

- Track site-resolved correlations as the system evolves

- Extract entanglement metrics from correlation patterns using quantum state tomography [2]

Superselection Rules and Orbital Entanglement

In molecular systems, fermionic superselection rules (SSRs) impose critical constraints on entanglement quantification. Recent research on the Quantinuum H1-1 trapped-ion quantum computer demonstrates:

SSR-Aware Measurement Protocol:

- Encode fermionic problem into qubits using Jordan-Wigner transformation

- Construct orbital reduced density matrices (ORDMs) from measurement circuits

- Account for fermionic SSRs to prevent overestimation of entanglement

- Group Pauli operators into commuting sets to reduce measurement overhead

- Apply low-overhead noise reduction techniques to measured ORDMs

- Calculate von Neumann entropies from eigenvalues of the ORDMs [3]

This approach revealed a crucial insight: one-orbital entanglement vanishes unless opposite-spin open shell configurations are present in the wavefunction, highlighting how fundamental symmetries constrain observable entanglement in molecular systems [3].
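The one-orbital result can be reproduced with a short NumPy sketch under two stated assumptions. First, the wavefunction has definite particle number and S_z, so the one-orbital RDM is diagonal, diag(p₀, p↑, p↓, p↑↓), in the basis {|0⟩, |↑⟩, |↓⟩, |↑↓⟩}. Second, the particle-number superselection rule (N-SSR) applies, under which the accessible entanglement of a pure state is the probability-weighted entropy of the blocks with fixed local particle number. The text does not state which SSR variant [3] uses, and the function names and populations below are hypothetical.

```python
import numpy as np

def entropy_bits(p: np.ndarray) -> float:
    """Entropy in bits of a normalized probability vector (0 log 0 = 0)."""
    p = p[p > 1e-12]
    return max(0.0, float(-np.sum(p * np.log2(p))))

def one_orbital_entropy_no_ssr(p0: float, p_up: float, p_dn: float, p2: float) -> float:
    """Plain von Neumann entropy of the diagonal one-orbital RDM (no SSR)."""
    return entropy_bits(np.array([p0, p_up, p_dn, p2]))

def one_orbital_entanglement_nssr(p0: float, p_up: float, p_dn: float, p2: float) -> float:
    """N-SSR entanglement: sum over local particle number N of p_N * S(block_N / p_N).

    The N = 0 block (|0>) and the N = 2 block (|up down>) are one-dimensional and
    contribute nothing; only the N = 1 block diag(p_up, p_dn) can contribute.
    """
    p1 = p_up + p_dn
    if p1 < 1e-12:
        return 0.0
    return p1 * entropy_bits(np.array([p_up, p_dn]) / p1)

# Hypothetical closed-shell-only orbital (found as |0> or |up down>):
print(one_orbital_entropy_no_ssr(0.5, 0.0, 0.0, 0.5))     # 1.0 (no SSR)
print(one_orbital_entanglement_nssr(0.5, 0.0, 0.0, 0.5))  # 0.0 (N-SSR)
# Hypothetical orbital in an open-shell singlet (found as |up> or |down>):
print(one_orbital_entanglement_nssr(0.0, 0.5, 0.5, 0.0))  # 1.0 (N-SSR)
```

Under these assumptions the N-SSR value is non-zero only when both singly occupied spin orientations have non-zero weight. This is the opposite-spin open-shell condition stated above. Without the SSR constraint, the closed-shell case would instead report one bit.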

Quantum Computing Applications in Molecular Systems

Quantum Simulations of Molecular Processes

Quantum computers provide a natural platform for simulating entangled molecular systems. Recent research applied quantum computing to study the formation of tetraoxabicyclo[3.2.0]heptan-3-one from vinylene carbonate and singlet oxygen (¹O₂), a reaction relevant to lithium-ion battery degradation:

Molecular Simulation Protocol:

- Determine minimum-energy path using nudged elastic band (NEB) method with DFT/PBE

- Apply atomic valence active space (AVAS) projections to p orbitals of O₂

- Select active space of 4 molecular orbitals with 6 electrons

- Perform complete active space self-consistent field (CASSCF) calculations

- Encode fermionic problem into qubits using Jordan-Wigner transformation

- Optimize variational quantum eigensolver (VQE) ansatz for state preparation

- Measure orbital reduced density matrices on quantum hardware [3]

This protocol successfully captured the strongly correlated transition state where oxygen bonds stretch to align with the C-C bond of the carbonate, demonstrating how quantum computers can elucidate entanglement in chemical reactions.
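The Jordan-Wigner step in the protocol above maps each spin orbital to one qubit, so an active space of 4 spatial orbitals corresponds to 8 qubits. The following minimal NumPy sketch builds the qubit images of the fermionic annihilation operators and checks the canonical anticommutation relations. It assumes the convention |0⟩ = empty, |1⟩ = occupied and a qubit ordering chosen only for illustration. It uses 4 modes to keep the matrices small.

```python
from functools import reduce

import numpy as np

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
# Single-qubit lowering operator: with |0> = empty and |1> = occupied, a|1> = |0>.
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])

def jw_annihilation(j: int, n_modes: int) -> np.ndarray:
    """Jordan-Wigner image of a_j: Z on qubits 0..j-1, lowering on qubit j, I after."""
    ops = [Z] * j + [LOWER] + [I2] * (n_modes - j - 1)
    return reduce(np.kron, ops)

def anticommutator(a: np.ndarray, b: np.ndarray) -> np.ndarray:
    return a @ b + b @ a

n = 4  # illustration only; 4 spatial orbitals x 2 spins would need 8 qubits
a = [jw_annihilation(j, n) for j in range(n)]
identity = np.eye(2 ** n)
for i in range(n):
    for j in range(n):
        # {a_i, a_j^dagger} = delta_ij and {a_i, a_j} = 0 (matrices are real, so .T is dagger).
        assert np.allclose(anticommutator(a[i], a[j].T), identity * (i == j))
        assert np.allclose(anticommutator(a[i], a[j]), 0)
print("canonical anticommutation relations hold for", n, "modes")
```

The string of Z operators supplies the fermionic sign that a plain tensor product of lowering operators would miss.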

Drug Discovery Applications

The pharmaceutical industry is exploring quantum computing, which exploits entanglement, for molecular simulations that classical computers struggle to perform:

Quantum-Enhanced Drug Discovery Protocol:

- Target identification through analysis of genetic, proteomic, and clinical data

- Virtual screening of billion-compound libraries using quantum algorithms

- Molecular docking with quantum-powered accuracy for binding affinity prediction

- Hydration analysis of protein pockets using hybrid quantum-classical approaches

- Toxicity prediction through quantum simulation of metabolic pathways [4] [5] [6]

St. Jude Children's Research Hospital demonstrated this approach by targeting the "undruggable" KRAS protein, combining classical machine learning with quantum models to identify novel binding molecules validated through experimental testing [7].

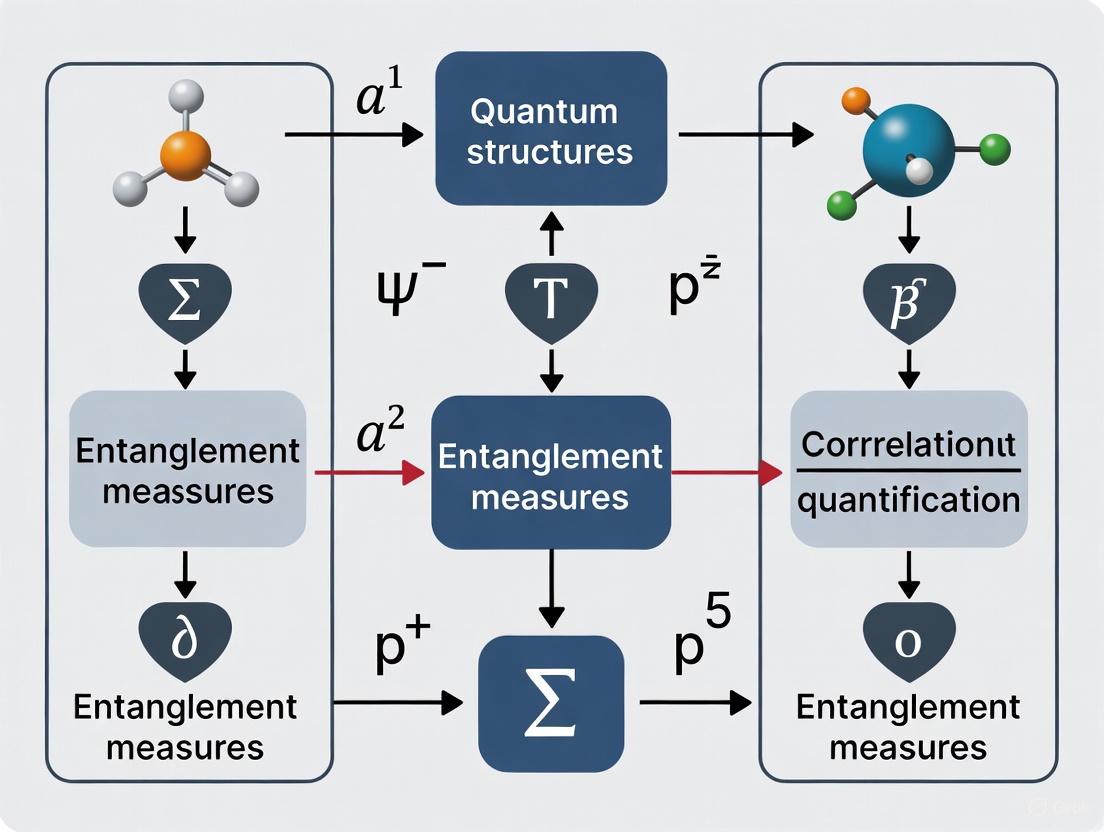

Diagram 1: Quantum-enhanced drug discovery workflow showing the integration of classical and quantum processing with experimental validation.

Research Reagent Solutions for Quantum Molecular Experiments

Table 2: Essential Research Tools for Quantum Molecular Simulations

| Research Tool | Function | Example Implementation |
|---|---|---|
| Trapped-Ion Quantum Computers | High-fidelity qubit operations for molecular simulations | Quantinuum H1-1 system for orbital entanglement measurement [3] |
| Optical Lattices | Confinement of ultracold molecules for quantum state control | "Egg carton" light crystals for molecular positioning [2] |
| Neutral-Atom Arrays | Quantum reservoir computing for molecular property prediction | QuEra's neutral-atom system for drug discovery [8] |
| Orbital Reduced Density Matrix (ORDM) Methods | Quantification of orbital correlation and entanglement | Von Neumann entropy calculation from ORDM eigenvalues [3] |
| Quantum Machine Learning (QML) Algorithms | Enhanced pattern recognition in molecular data | Quantum reservoir computing for small datasets [8] |
| Variational Quantum Eigensolvers (VQE) | Ground state preparation for chemical systems | Quantum circuit optimization for molecular wavefunctions [3] |

Comparative Performance Analysis

Computational Advantages of Entangled Systems

Quantum entanglement provides measurable advantages over classical approaches for specific molecular simulation tasks:

Binding Prediction Enhancement:

- Quantum-enhanced models identified two novel KRAS-binding molecules validated experimentally [7]

- Quantum reservoir computing improved molecular property prediction accuracy with limited training data (as few as 100 records) [8]

- Quantum algorithms enabled more reliable predictions when data is scarce, outperforming classical random forests on small datasets [8]

Simulation Accuracy Metrics:

- Quantum simulations captured strong static correlation in transition states of VC+O₂ reaction [3]

- Orbital von Neumann entropies calculated on quantum hardware showed excellent agreement with noiseless benchmarks [3]

- Quantum-powered tools model protein-ligand interactions with higher accuracy under real-world biological conditions [6]

Diagram 2: Resource scaling comparison showing quantum advantage for molecular simulations.

Limitations and Current Challenges

Despite promising results, practical challenges remain in harnessing quantum entanglement for molecular simulations:

Technical Limitations:

- Quantum advantage diminishes with larger datasets (>800 records) in drug discovery applications [8]

- Current hardware requires error mitigation techniques for accurate entropy calculations [3]

- Sampling noise presents sensitivity challenges in quantum reservoir computing [8]

Theoretical Constraints:

- Fermionic superselection rules limit observable entanglement in molecular orbitals [3]

- Mixed state entanglement quantification remains computationally complex [1]

- Entanglement distribution in many-body systems is not fully characterized [2]

The fundamental distinction between separable states and genuinely entangled states underpins a paradigm shift in computational chemistry and drug discovery. Separable states represent the classical boundary of correlation. Entangled states, by contrast, are the resource that quantum computers exploit to represent molecular quantum phenomena that are costly to simulate classically.

The experimental protocols and quantification methods detailed in this guide provide researchers with essential tools for designing studies that leverage quantum entanglement. As quantum hardware continues to advance, with roadmaps indicating increasingly powerful systems within 2-5 years [4], the ability to harness genuine quantum entanglement will transform molecular research from empirical observation to predictive simulation.

Future research directions include developing more robust entanglement distillation protocols for noisy intermediate-scale quantum devices, refining SSR-aware entanglement measures for molecular systems, and expanding quantum machine learning applications to protein folding and in silico clinical trials. Companies and research institutions that build capabilities in quantum-enabled molecular simulation today will be positioned to lead the development of next-generation therapeutics and materials.

In the field of quantum information science, accurately quantifying correlations is paramount for advancing research in quantum computing, communication, and the understanding of complex molecular systems. For researchers investigating entanglement measures and correlation quantification in molecules, three key quantifiers provide distinct yet complementary insights: Von Neumann Entropy, which measures quantum entanglement and information uncertainty; Quantum Mutual Information, which captures total correlations between subsystems; and Quantum Correlation Distance, a more recent measure for distinguishing quantum from classical correlations. Each metric operates on different theoretical principles and provides unique information about the quantum system under investigation, making their comparative understanding crucial for selecting the appropriate tool for specific research applications in molecular physics and drug development.

This guide provides an objective comparison of these three quantifiers, detailing their mathematical foundations, properties, and experimental applications, with particular emphasis on their use in molecular research contexts. We present structured comparisons, experimental protocols, and visualization tools to assist researchers in selecting and applying these measures effectively.

Theoretical Foundations & Mathematical Formulations

Von Neumann Entropy

The Von Neumann Entropy quantifies the uncertainty or mixedness of a quantum state and serves as a fundamental measure of quantum entanglement for bipartite pure states. Mathematically, for a density matrix ρ describing a quantum state, it is defined as [9] [10] [11]:

$$ S(\rho) = -\operatorname{Tr}(\rho \log \rho) = -\sum_i \lambda_i \log \lambda_i $$

where λᵢ are the eigenvalues of ρ. For a composite system AB in a pure state |Ψᴬᴮ⟩, the entropy of the reduced density matrix ρᴬ = Tr_B|Ψᴬᴮ⟩⟨Ψᴬᴮ| quantifies the entanglement between subsystems A and B [9]. When S(ρᴬ) > 0, the subsystems are entangled, with maximal entropy indicating maximal entanglement [10].

Quantum Mutual Information

Quantum Mutual Information measures the total correlations - both classical and quantum - between two subsystems of a composite quantum system. For a bipartite system AB with density matrix ρᴬᴮ, it is defined as [12] [13]:

$$ I(A{:}B) = S(\rho_A) + S(\rho_B) - S(\rho_{AB}) $$

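where S(ρᴬ) and S(ρᴮ) are the von Neumann entropies of the reduced density matrices, and S(ρᴬᴮ) is the entropy of the joint system [12]. This formulation extends the concept of mutual information from classical information theory to the quantum domain. Classically, it equals the information about subsystem A gained by observing subsystem B, and vice versa [13]. For quantum states, the information extractable by local measurements is generally smaller and is captured by the classical correlation C. The remainder is attributed to quantum correlations.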
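Both formulas translate directly into code. The minimal NumPy sketch below computes entropies in bits, and its helper names are illustrative. It evaluates I(A:B) for three two-qubit states. A Bell state reaches the upper bound of 2 bits. A purely classically correlated mixture gives a non-zero value, and a product state gives zero.

```python
import numpy as np

def vn_entropy(rho: np.ndarray) -> float:
    """S(rho) = -sum_i lambda_i log2 lambda_i over the eigenvalues of rho (bits)."""
    lam = np.linalg.eigvalsh(rho)
    lam = lam[lam > 1e-12]          # 0 log 0 = 0; also drops round-off negatives
    return max(0.0, float(-np.sum(lam * np.log2(lam))))

def reduced_states(rho_ab: np.ndarray, da: int, db: int):
    """(rho_A, rho_B) from a (da*db) x (da*db) density matrix, A as leading factor."""
    r = rho_ab.reshape(da, db, da, db)
    return np.einsum("ijkj->ik", r), np.einsum("ijil->jl", r)

def mutual_information(rho_ab: np.ndarray, da: int = 2, db: int = 2) -> float:
    """I(A:B) = S(rho_A) + S(rho_B) - S(rho_AB) in bits, clamped at 0 for round-off."""
    rho_a, rho_b = reduced_states(rho_ab, da, db)
    return max(0.0, vn_entropy(rho_a) + vn_entropy(rho_b) - vn_entropy(rho_ab))

phi_plus = np.array([1, 0, 0, 1]) / np.sqrt(2)
plus = np.array([1, 1]) / np.sqrt(2)
bell = np.outer(phi_plus, phi_plus)                           # pure, entangled
classical = np.diag([0.5, 0.0, 0.0, 0.5])                     # (|00><00| + |11><11|)/2
product = np.kron(np.diag([1.0, 0.0]), np.outer(plus, plus))  # |0><0| (x) |+><+|

for name, rho in [("Bell", bell), ("classical", classical), ("product", product)]:
    print(name, round(mutual_information(rho), 6))
# Bell 2.0, classical 1.0, product 0.0
```

The classically correlated mixture is separable yet carries one bit of mutual information. This illustrates why mutual information alone cannot certify entanglement.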

Quantum Correlation Distance

Quantum Correlation Distance (QCD) has no single, standard mathematical definition. It denotes a class of distance-based measures of the quantumness of correlations. Such measures are typically defined as the distance from a given state to the nearest state with only classical correlations, computed with a metric such as the trace distance or the Hilbert-Schmidt distance. The term also appears in discussions of constraining correlations in quantum measurements over distance [14], where the emphasis is on the spatial separation of the measured systems. Unlike the previous two measures, QCD therefore does not correspond to one well-established formula.
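One concrete member of this class is the Hilbert-Schmidt geometric discord. It is offered here as an illustration, not as the definition used in [14]. This quantity is the squared Hilbert-Schmidt distance from a two-qubit state to the nearest classical-quantum state, that is, one that is classical on the measured qubit A. The NumPy sketch below assumes the closed form for two qubits written in its docstring, expressed through the Bloch vector x of qubit A and the correlation matrix T. Normalization conventions differ between papers.

```python
import numpy as np

SIGMAS = (np.array([[0, 1], [1, 0]], dtype=complex),     # X
          np.array([[0, -1j], [1j, 0]], dtype=complex),  # Y
          np.array([[1, 0], [0, -1]], dtype=complex))    # Z
I2 = np.eye(2, dtype=complex)

def geometric_discord_hs(rho: np.ndarray) -> float:
    """Hilbert-Schmidt geometric discord of a two-qubit state, measured on qubit A.

    With rho = 1/4 (I(x)I + sum_i x_i s_i(x)I + sum_j y_j I(x)s_j + sum_ij T_ij s_i(x)s_j),
    D = (|x|^2 + ||T||^2 - k_max) / 4, where k_max is the largest eigenvalue of
    K = x x^T + T T^T. D = 0 for classical-quantum states.
    """
    x = np.array([np.trace(rho @ np.kron(s, I2)).real for s in SIGMAS])
    t = np.array([[np.trace(rho @ np.kron(si, sj)).real for sj in SIGMAS]
                  for si in SIGMAS])
    k = np.outer(x, x) + t @ t.T
    k_max = np.linalg.eigvalsh(k).max()
    return max(0.0, float((x @ x + np.sum(t ** 2) - k_max) / 4))  # clamp round-off

phi_plus = np.array([1, 0, 0, 1]) / np.sqrt(2)
plus = np.array([1, 1]) / np.sqrt(2)
states = {
    "Bell |phi+>": np.outer(phi_plus, phi_plus),
    "classically correlated": np.diag([0.5, 0.0, 0.0, 0.5]),
    "product |0>|+>": np.kron(np.diag([1.0, 0.0]), np.outer(plus, plus)),
}
for name, rho in states.items():
    print(f"{name}: {geometric_discord_hs(rho):.3f}")
# 0.500, 0.000, 0.000 with this normalization
```

Unlike the mutual information, this measure vanishes for the classically correlated mixture and is non-zero only when correlations are quantum in the discord sense.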

Table 1: Mathematical Properties of Quantum Correlation Quantifiers

| Property | Von Neumann Entropy | Quantum Mutual Information | Quantum Correlation Distance |
|---|---|---|---|
| Mathematical Formula | S(ρ) = -Tr(ρ log ρ) | I(A:B) = S(ρA) + S(ρB) - S(ρ_AB) | Distance-based metric (precise definition varies) |
| Theoretical Basis | Quantum information theory | Classical and quantum information theory | Geometric quantum theory |
| Correlation Type Measured | Quantum entanglement | Total correlations (classical + quantum) | Quantum correlations specifically |
| Range of Values | 0 ≤ S(ρ) ≤ log(d) for dimension d | 0 ≤ I(A:B) ≤ 2 min{S(ρA), S(ρB)} | 0 to maximum depending on specific metric |
| Vanishes For | Pure states (the entanglement entropy S(ρA) vanishes for pure product states) | Product states | Classically correlated states |

Comparative Analysis of Properties and Applications

Key Properties and Behavior

Each quantifier possesses distinct characteristics that determine its appropriate applications:

Von Neumann Entropy is a faithful measure of entanglement for bipartite pure states, but cannot distinguish classical from quantum correlations in mixed states [10]. It satisfies strong subadditivity. Used as the entanglement entropy S(ρᴬ) of a pure state, it does not increase on average under local operations and classical communication. For a Bell state of two qubits, S(ρᴬ) = 1 (using log base 2), indicating maximal entanglement [11].

Quantum Mutual Information captures all correlations present in a system, both classical and quantum [12]. It is symmetric (I(A:B) = I(B:A)) and non-negative [15]. Unlike entanglement measures, mutual information can be non-zero for separable states with classical correlations.

Quantum Correlation Distance is designed specifically to quantify quantum aspects of correlations. Experiments on closely related measurement-based quantum correlations (quantum discord) have shown that quantum correlations can be more robust than classical ones under decoherence. In certain cases, quantum correlations can even exceed classical correlations, contradicting early conjectures about their relative magnitudes [12].

Applications in Molecular and Biological Systems

These quantifiers have found diverse applications in molecular research:

Mutual Information in molecular systems analyzes dynamical heterogeneity in glass-forming liquids, revealing populations of particles with different mobility and relaxation properties [16]. It serves as a structural fingerprint for biomolecular sequences in machine learning classifiers [13] and enables single-cell network construction from scRNA-seq data [15].

Von Neumann Entropy applications extend to quantifying entanglement in molecular systems, with potential connections to black hole entropy through the area law of bipartite entanglement entropy [9].

Correlation Distance concepts help constrain quantum measurements over large distances, with proposed experiments to test correlation preservation over Earth-Moon distances [14].

Table 2: Experimental Applications Across Disciplines

| Field/Application | Von Neumann Entropy | Quantum Mutual Information | Quantum Correlation Distance |
|---|---|---|---|
| Molecular Dynamics | Limited direct application | Characterizes dynamical heterogeneity in glass-forming liquids [16] | Potential for quantifying quantum effects in molecular interactions |
| Bioinformatics | Quantum sequence analysis | Biomolecular sequence signatures, classification [13] | Discrimination between sequence types |
| Single-Cell Analysis | Not typically applied | Constructs single-cell networks from scRNA-seq data [15] | Cell type identification |
| Quantum Optics | Entanglement quantification | Dynamics of classical/quantum correlations under decoherence [12] | Testing fundamental quantum principles over distance [14] |
| Materials Science | Area laws in many-body systems [9] | Analyzing phase transitions | Characterizing quantum phases |

Experimental Protocols and Methodologies

Protocol: Measuring Correlation Dynamics in Optical Systems

The experimental investigation of classical and quantum correlations under decoherence provides a key methodology for comparing these quantifiers [12]:

System Preparation: Generate polarization-entangled photon pairs using type-I β-barium borate crystals pumped by ultraviolet pulses. Prepare initial states such as mixed Bell states: ρ = b|φ⁺⟩⟨φ⁺| + d|φ⁻⟩⟨φ⁻| with b + d = 1 [12].

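Dephasing Channel Implementation: Pass photons through birefringent quartz plates of controlled thickness L to simulate phase-damping decoherence. The decoherence parameter is p = 1 - |κ|, with κ = ∫dω f(ω) exp(-iωτ). Here f(ω) is the photons' normalized spectral distribution, Δn is the birefringence of the quartz, and τ = LΔn/c is the delay induced between the two polarization components [12].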

State Tomography: Reconstruct the evolved state using quarter-wave plates, half-wave plates, and polarization beam splitters to implement 16 measurement bases. Detect photon coincidences with single-photon detectors equipped with interference filters [12].

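Correlation Calculation: Compute I(ρᴬᴮ) from the eigenvalues λⱼ of the measured density matrix and of its reduced states. Determine the classical correlation C(ρᴬᴮ) by optimizing the measurement angles θ and φ to minimize the conditional entropy [12]. The quantum correlation (quantum discord) is then Q(ρᴬᴮ) = I(ρᴬᴮ) - C(ρᴬᴮ).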

Dynamics Analysis: Track how I(ρᴬᴮ), C(ρᴬᴮ), and Q(ρᴬᴮ) evolve with increasing decoherence (quartz thickness), noting sudden changes in decay rates that characterize different correlation types [12].
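The tomography and correlation-calculation steps above can be prototyped numerically before working with data. The NumPy sketch below makes several simplifying assumptions that are not taken from [12]:

- It reconstructs the state by linear inversion from the 16 two-qubit Pauli expectation values, rather than from raw polarization-projection counts.
- It models the quartz plates as scaling the |00⟩⟨11| coherence by |κ| = 1 - p.
- It measures qubit B when computing C.
- It approximates the minimization over θ and φ with a coarse grid search.

All helper names are hypothetical.

```python
import numpy as np

PAULI = {
    "I": np.eye(2, dtype=complex),
    "X": np.array([[0, 1], [1, 0]], dtype=complex),
    "Y": np.array([[0, -1j], [1j, 0]], dtype=complex),
    "Z": np.array([[1, 0], [0, -1]], dtype=complex),
}

def linear_inversion(expect: dict) -> np.ndarray:
    """rho = (1/4) sum_ab <s_a (x) s_b> s_a (x) s_b from the 16 Pauli expectations."""
    rho = np.zeros((4, 4), dtype=complex)
    for (a, b), value in expect.items():
        rho += value * np.kron(PAULI[a], PAULI[b])
    return rho / 4

def vn_entropy(rho: np.ndarray) -> float:
    lam = np.linalg.eigvalsh(rho)
    lam = lam[lam > 1e-12]
    return max(0.0, float(-np.sum(lam * np.log2(lam))))

def ptrace_b(rho: np.ndarray) -> np.ndarray:  # rho_A = Tr_B rho
    return np.einsum("ijkj->ik", rho.reshape(2, 2, 2, 2))

def ptrace_a(rho: np.ndarray) -> np.ndarray:  # rho_B = Tr_A rho
    return np.einsum("ijil->jl", rho.reshape(2, 2, 2, 2))

def correlations(rho: np.ndarray, n_theta: int = 31, n_phi: int = 61):
    """Return (I, C, Q) in bits; C optimizes projective measurements on qubit B."""
    s_a = vn_entropy(ptrace_b(rho))
    mutual = max(0.0, s_a + vn_entropy(ptrace_a(rho)) - vn_entropy(rho))
    best = np.inf  # smallest conditional entropy sum_k p_k S(rho_A|k) found on the grid
    for theta in np.linspace(0.0, np.pi, n_theta):
        for phi in np.linspace(0.0, 2 * np.pi, n_phi):
            c, s = np.cos(theta / 2), np.sin(theta / 2)
            basis = (np.array([c, np.exp(1j * phi) * s]),
                     np.array([-np.exp(-1j * phi) * s, c]))
            cond = 0.0
            for ket in basis:
                proj = np.kron(np.eye(2), np.outer(ket, ket.conj()))
                post = proj @ rho @ proj
                p_k = post.trace().real
                if p_k > 1e-12:
                    cond += p_k * vn_entropy(ptrace_b(post) / p_k)
            best = min(best, cond)
    classical = s_a - best
    return mutual, classical, mutual - classical

def mixed_bell(b: float, p: float) -> np.ndarray:
    """b|phi+><phi+| + (1 - b)|phi-><phi-| after dephasing of strength p."""
    rho = np.zeros((4, 4))
    rho[0, 0] = rho[3, 3] = 0.5
    rho[0, 3] = rho[3, 0] = 0.5 * (2 * b - 1) * (1 - p)  # coherence scaled by |kappa|
    return rho

for p in (0.0, 0.5, 1.0):
    rho_true = mixed_bell(b=0.8, p=p)
    # Noiseless stand-in for measured data: Pauli expectations of the model state.
    data = {(a, b): np.trace(rho_true @ np.kron(PAULI[a], PAULI[b])).real
            for a in "IXYZ" for b in "IXYZ"}
    i_ab, c_ab, q_ab = correlations(linear_inversion(data))
    print(f"p = {p:.1f}:  I = {i_ab:.3f}  C = {c_ab:.3f}  Q = {q_ab:.3f}")
```

In this toy model C stays at 1 bit while Q decays with p. For real data, a finer grid or a local optimizer would replace the coarse search, and a physicality constraint would be added to the inversion.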

Protocol: Mutual Information Analysis of Molecular Dynamics

For studying dynamical heterogeneity in molecular liquids [16]:

System Preparation: Perform molecular dynamics simulations of fully flexible trimers at various density and temperature conditions near the glass transition.

Trajectory Analysis: Calculate particle displacements δr(t) over time intervals t. Generate iso-configurational ensembles (ICEs) by running multiple simulations with different initial velocities from the same initial configuration.

Mutual Information Estimation: Compute pairwise mutual information between particle displacements Iᵢⱼ(t) = I(δrᵢ(t), δrⱼ(t)) using the Kraskov-Stögbauer-Grassberger (KSG) estimator [16].

Network Construction: Identify MI-correlated particles where Iᵢⱼ(t) > I₀ (with I₀ = 0.2). Analyze the distribution p(n,t) of the number of correlated particles and identify clusters of high and low mobility particles.

Heterogeneity Quantification: Track how mutual information networks evolve with time, particularly around the structural relaxation time τ_α, to reveal dynamical heterogeneity and mobility propagation.
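The estimation and thresholding steps can be sketched in NumPy. The sketch implements the first KSG estimator with brute-force max-norm neighbor searches, which need O(N²) memory and are fine for a few thousand samples. It reports mutual information in nats and treats I₀ = 0.2 as a value in nats. The units are an assumption, because they are not stated above. The displacement array layout and all function names are hypothetical.

```python
import numpy as np

def digamma_int(n):
    """Digamma at positive integers: psi(n) = -gamma + H_(n-1) (harmonic number)."""
    n = np.asarray(n, dtype=int)
    harmonic = np.concatenate(([0.0], np.cumsum(1.0 / np.arange(1, n.max() + 1))))
    return -np.euler_gamma + harmonic[n - 1]

def ksg_mutual_information(x, y, k: int = 3) -> float:
    """KSG estimator (first variant) of I(X;Y) in nats.

    x, y: arrays of shape (N, dx) and (N, dy), one row per sample
    (e.g. one iso-configurational run per row, a 3D displacement per particle).
    """
    x = np.asarray(x, dtype=float).reshape(len(x), -1)
    y = np.asarray(y, dtype=float).reshape(len(y), -1)
    n = len(x)
    # Max-norm distances in each marginal space and in the joint space.
    dx = np.abs(x[:, None, :] - x[None, :, :]).max(axis=2)
    dy = np.abs(y[:, None, :] - y[None, :, :]).max(axis=2)
    dz = np.maximum(dx, dy)
    np.fill_diagonal(dz, np.inf)              # a point is not its own neighbour
    eps = np.sort(dz, axis=1)[:, k - 1]       # distance to the k-th joint neighbour
    np.fill_diagonal(dx, np.inf)
    np.fill_diagonal(dy, np.inf)
    nx = (dx < eps[:, None]).sum(axis=1)      # strictly closer in x alone
    ny = (dy < eps[:, None]).sum(axis=1)      # strictly closer in y alone
    return float(digamma_int(k) + digamma_int(n)
                 - np.mean(digamma_int(nx + 1) + digamma_int(ny + 1)))

def mi_network(disp, i0: float = 0.2, k: int = 3) -> np.ndarray:
    """Boolean adjacency: particles i and j are MI-correlated if I_ij > i0.

    disp: shape (n_particles, n_runs, 3), displacements delta r_i(t) for one
    time interval t across the ensemble.
    """
    n_p = disp.shape[0]
    adj = np.zeros((n_p, n_p), dtype=bool)
    for i in range(n_p):
        for j in range(i + 1, n_p):
            adj[i, j] = adj[j, i] = ksg_mutual_information(disp[i], disp[j], k) > i0
    return adj

rng = np.random.default_rng(0)
x = rng.normal(size=(500, 1))
y = 0.9 * x + np.sqrt(1 - 0.9 ** 2) * rng.normal(size=(500, 1))
# For these Gaussians the exact value is -0.5 * ln(1 - 0.9**2), about 0.83 nats.
print(round(ksg_mutual_information(x, y), 3))
```

From the adjacency matrix, the number of MI-correlated partners per particle (row sums) gives the samples for p(n, t). Production trajectories would likely use a k-d tree neighbor search instead of dense distance matrices.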

Experimental Workflows for Quantum Correlation Analysis

Research Reagent Solutions and Essential Materials

Table 3: Essential Research Materials for Quantum Correlation Experiments

| Material/Reagent | Specification | Experimental Function | Application Context |
|---|---|---|---|
| β-Barium Borate (BBO) Crystals | Type-I, optimized for entanglement generation | Nonlinear optical element for spontaneous parametric down-conversion | Quantum optics experiments for entangled photon pair generation [12] |
| Birefringent Quartz Plates | Various thicknesses (L = 0-150λ₀) | Implement controlled dephasing/decoherence | Studying correlation dynamics under environmental interaction [12] |
| Single-Photon Detectors | With 3 nm FWHM interference filters | Photon counting with wavelength selection | Quantum state detection in optical experiments [12] |
| Wave Plates (HWP/QWP) | λ/2 and λ/4 for specific wavelengths | Polarization state manipulation | Quantum state preparation and measurement [12] |
| Molecular Dynamics Software | Custom or open-source (e.g., GROMACS, LAMMPS) | Simulate molecular trajectory evolution | Studying mutual information in molecular liquids [16] |
| scRNA-seq Platforms | 10X Genomics, Smart-seq2, etc. | Single-cell transcriptome profiling | Single-cell network construction using mutual information [15] |

Von Neumann Entropy, Quantum Mutual Information, and Quantum Correlation Distance provide distinct yet complementary tools for quantifying correlations in quantum systems, each with specific strengths and applications. Von Neumann Entropy remains the gold standard for quantifying entanglement in pure states, while Quantum Mutual Information captures total correlations and has found diverse applications in biological and molecular systems. Quantum Correlation Distance focuses specifically on distinguishing quantum from classical correlations, with experimental evidence showing surprising robustness of quantum correlations under decoherence.

For researchers in molecular and drug development fields, mutual information offers immediate practical utility for analyzing complex biomolecular systems, scRNA-seq data, and dynamical heterogeneity in materials. The continued development and application of these quantifiers will enhance our understanding of quantum effects in biological systems and potentially enable new approaches to drug design that account for quantum correlations in molecular interactions.

In quantum chemistry and the study of molecular systems, understanding electron behavior is fundamental. Orbital-based analysis provides a framework for quantifying the correlation between molecular orbitals, a critical factor in accurately predicting chemical properties and reactions. This guide compares the dominant methodologies for quantifying orbital correlation, with a particular focus on the emerging role of quantum entanglement measures. This approach is increasingly relevant for researchers and drug development professionals seeking to model complex molecular interactions with high accuracy, especially in systems exhibiting strong static correlation where classical computational methods often prove prohibitive [3].

The analysis of correlation between molecular orbitals has evolved from classical computational chemistry methods to incorporate principles from quantum information theory. By quantifying entanglement and correlation through measures like von Neumann entropy, scientists can now gain novel insights into electronic behavior during chemical processes such as bond breaking and formation [3]. This comparative examination will objectively evaluate traditional and quantum computational approaches, their experimental protocols, performance metrics, and applicability to real-world research challenges in material science and pharmaceutical development.

Methodologies for Orbital Correlation Analysis

Classical Computational Approaches

Classical computational chemistry employs several established methods for orbital correlation analysis. Natural Bond Orbital (NBO) analysis provides a framework for expressing complex wavefunctions in the chemically intuitive language of Lewis-like bonding patterns and associated donor-acceptor interactions [17]. The current version, NBO 7.0, implements 'natural' algorithms for optimally expressing numerical solutions of Schrödinger's wave equation, offering mutually consistent and comprehensive analysis tools that ensure harmonious chemical interpretations from one property to another [17].

Correlated Orbital Theory (COT) presents an exact one-particle framework that imposes rigorous physical constraints on Kohn-Sham eigenvalues, directly incorporating essential electron correlation into molecular orbitals [18]. This approach paves the way toward a new class of approximations within Kohn-Sham Density Functional Theory (KS-DFT), addressing the "Devil's Triangle" of KS-DFT: self-interaction error, integer discontinuity, and one-particle spectra [18].

Intrinsic Atomic Orbital (IAO) analysis, implemented in tools like IboView, enables visualization of electronic structure from first-principles DFT in terms of intuitive concepts such as partial charges, bond orders, and bond orbitals—even in systems with complex or unusual bonding [19]. This method particularly excels at visualizing electronic structure changes along reaction paths, enabling the determination of curly arrow reaction mechanisms directly from first principles [19].

Quantum Computational Approaches

Quantum computational approaches leverage quantum hardware to store chemical wavefunctions more efficiently than classical hardware, potentially overcoming the prohibitive memory requirements for accurately storing wavefunctions in classical computations of orbital correlation and entanglement [3]. These methods focus on quantifying correlation and entanglement between molecular orbitals using measures derived from quantum information theory, notably through the calculation of von Neumann entropies from orbital reduced density matrices (ORDMs) [3].

A significant advantage of quantum approaches is their ability to incorporate fermionic superselection rules (SSRs), which respect fundamental fermionic symmetries and prevent overestimation of entanglement [3]. When combined with measurement strategies that group commuting Pauli operators, these rules substantially reduce the number of quantum circuits required for constructing ORDMs [3]. The quantum approach has demonstrated particular efficacy for strongly correlated molecular systems where classical methods struggle, such as during transition states with conical intersections [3].
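A minimal sketch of such grouping, in plain Python, greedily collects Pauli strings that commute qubit-wise. Two strings commute qubit-wise when, on every qubit, the two letters are equal or one is the identity. Qubit-wise commutation is an assumption made for simplicity. It is stricter than general commutation, which can yield fewer groups but requires entangling basis-change circuits, and [3] may use a different rule. The list of terms is hypothetical.

```python
from typing import List

def qubitwise_commute(p: str, q: str) -> bool:
    """Pauli strings such as 'XIZY' commute qubit-wise if, on every qubit,
    the letters are equal or at least one of them is the identity 'I'."""
    return all(a == b or a == "I" or b == "I" for a, b in zip(p, q))

def group_qwc(paulis: List[str]) -> List[List[str]]:
    """Greedy grouping: each group can be measured with a single circuit."""
    groups: List[List[str]] = []
    for p in paulis:
        for g in groups:
            if all(qubitwise_commute(p, q) for q in g):
                g.append(p)
                break
        else:  # no compatible group found: open a new one
            groups.append([p])
    return groups

terms = ["ZZII", "ZIII", "IZII", "XXII", "YYII", "IIZZ", "IIXX"]
print(group_qwc(terms))
# [['ZZII', 'ZIII', 'IZII', 'IIZZ'], ['XXII', 'IIXX'], ['YYII']] -> 3 circuits instead of 7
```

Greedy first-fit grouping depends on the input order. Graph-colouring heuristics over a commutation graph are a common refinement when term counts grow.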

Table: Comparison of Fundamental Approaches to Orbital Correlation Analysis

| Methodology | Theoretical Foundation | Key Measurable | Primary Applications |
|---|---|---|---|
| Natural Bond Orbital (NBO) | Local eigen-properties of wavefunctions | Lewis-type bonding patterns, donor-acceptor interactions | Interpretation of wavefunctions in chemically intuitive terms [17] |
| Correlated Orbital Theory (COT) | Kohn-Sham DFT with physical constraints | Kohn-Sham eigenvalues, HOMO-LUMO gaps | Improving XC functionals, charge transfer, reaction barriers [18] |
| Intrinsic Atomic Orbital (IAO) | First-principles DFT | Partial charges, bond orders, bond orbitals | Reaction mechanism analysis, unusual bonding situations [19] |
| Quantum Entanglement Measures | Quantum information theory | Von Neumann entropies, mutual information | Strongly correlated systems, bond breaking processes [3] |

Experimental Protocols and Workflows

Quantum Computation of Orbital Entropies

The experimental protocol for quantifying orbital correlation on quantum computers involves a multi-stage workflow that integrates classical and quantum computational resources. The process begins with classical computational chemistry steps to determine molecular geometry and active spaces. For the vinylene carbonate + O₂ → dioxetane reaction system studied in recent research, the nudged elastic band (NEB) method first determines minimum-energy paths, with energies computed using Density Functional Theory (DFT) with the PBE exchange-correlation functional and def2-SVP atomic basis set [3].

The active space selection employs the Atomic Valence Active Space (AVAS) projection method to narrow down the orbital space most relevant to static correlation. This involves projecting onto targeted atomic orbitals (e.g., oxygen p orbitals for O₂ reactions), resulting in a chemically intuitive, localized orbital basis that helps avoid correlation overestimation [3]. For practical quantum computation, a subset of this AVAS set is selected, followed by Complete Active Space Self Consistent Field (CASSCF) calculations to determine the most important electronic configurations [3].

The quantum state preparation encodes the fermionic problem into qubits using a Jordan-Wigner transformation, then uses a variational quantum eigensolver (VQE) ansatz, optimized offline, to prepare the relevant ground-state wavefunctions at different reaction steps [3]. With these wavefunctions prepared, orbital reduced density matrices (ORDMs) are reconstructed from measurements on quantum hardware. Key innovations include using fermionic superselection rules, which exclude correlations that are not physically accessible and reduce measurement overhead, and grouping Pauli operators into commuting sets to further minimize the number of required measurements [3].
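The Jordan-Wigner encoding can be written out explicitly for a handful of spin-orbitals. The sketch below assumes that mode j is the j-th tensor factor and that |1⟩ means "occupied"; the qubit ordering used in [3] is not specified here. It builds each annihilation operator as a string of Z gates followed by a lowering operator, then checks the canonical anticommutation relations.

```python
import numpy as np
from functools import reduce

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])                     # parity sign (-1)^n of one mode
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])   # maps occupied |1> to empty |0>


def annihilation(j, n_modes):
    """Jordan-Wigner matrix of a_j: Z on modes before j, LOWER on mode j."""
    factors = [Z] * j + [LOWER] + [I2] * (n_modes - j - 1)
    return reduce(np.kron, factors)


def satisfies_car(ops):
    """Check {a_i, a_j^dagger} = delta_ij and {a_i, a_j} = 0.
    The matrices are real, so the transpose is the adjoint."""
    dim = ops[0].shape[0]
    for i, a_i in enumerate(ops):
        for j, a_j in enumerate(ops):
            if not np.allclose(a_i @ a_j.T + a_j.T @ a_i, np.eye(dim) * (i == j)):
                return False
            if not np.allclose(a_i @ a_j + a_j @ a_i, 0.0):
                return False
    return True


n_modes = 4  # two spatial orbitals x two spin states
ops = [annihilation(j, n_modes) for j in range(n_modes)]
number_op = sum(a.T @ a for a in ops)  # total electron-number operator
print(satisfies_car(ops), np.diag(number_op))
```

The Z strings are what carry the fermionic sign. This is also why, in the qubit picture, some orbital-RDM elements require measuring long Pauli strings.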

Finally, noise mitigation applies low-overhead post-measurement noise reduction schemes to the measured ORDMs, involving thresholding to filter out small singular values followed by maximum likelihood estimation to reconstruct physical ORDMs [3]. The resulting cleaned ORDMs enable calculation of von Neumann entropies, which quantify orbital correlation and entanglement.
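A post-measurement cleanup of this kind might look like the following sketch. The threshold value and the exact order of operations used in [3] are not given here and are assumptions. For the maximum-likelihood step, the sketch substitutes the well-known eigenvalue algorithm of Smolin, Gambetta and Smith (2012), which returns the closest valid density matrix under an additive Gaussian noise model. The function name `clean_ordm` and the example matrix are hypothetical.

```python
import numpy as np


def clean_ordm(rho_noisy, tol=1e-2):
    """Hypothetical post-processing of a measured orbital RDM."""
    # Keep only the Hermitian part of the noisy estimate.
    rho = 0.5 * (rho_noisy + rho_noisy.conj().T)
    evals, evecs = np.linalg.eigh(rho)
    # Thresholding: for a Hermitian matrix the singular values are |eigenvalues|.
    evals = np.where(np.abs(evals) < tol, 0.0, evals)
    evals = evals / evals.sum()  # restore unit trace
    # Maximum-likelihood projection onto the closest density matrix
    # (Smolin-Gambetta-Smith eigenvalue algorithm).
    order = np.argsort(evals)[::-1]
    lam = evals[order].copy()
    acc, i = 0.0, len(lam)
    while i > 0 and lam[i - 1] + acc / i < 0:
        acc += lam[i - 1]   # redistribute the negative weight
        lam[i - 1] = 0.0
        i -= 1
    lam[:i] += acc / i
    vecs = evecs[:, order]
    return (vecs * lam) @ vecs.conj().T


noisy = np.diag([0.58, 0.465, 0.005, -0.05])
noisy[0, 1] = noisy[1, 0] = 0.01  # small spurious coherence
cleaned = clean_ordm(noisy)
print(np.round(np.linalg.eigvalsh(cleaned), 4))
```

The cleaned matrix is Hermitian, positive semidefinite, and has unit trace, so its eigenvalues can be fed directly into the von Neumann entropy.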

Classical Computational Protocols

Classical approaches follow different computational workflows tailored to their specific theoretical frameworks. NBO analysis begins by computing a wavefunction with standard computational chemistry methods. Natural population analysis then diagonalizes atom-centered blocks of the one-particle density matrix to obtain natural atomic orbitals, and finally natural bond orbital analysis transforms these into the chemically intuitive NBO basis [17].

COT optimization adjusts parameters within functionals such as PBE0, TPSS0, and LC-PBE0 using one of two strategies: the ionization potential (IP) condition or the HOMO-LUMO condition [18]. This systematically improves functional performance by addressing self-interaction error and the integer discontinuity, and by improving one-particle spectra [18].

IAO analysis with IboView involves importing wavefunctions from computational chemistry packages or computing simple Kohn-Sham wavefunctions with the embedded MicroScf program. This is followed by intrinsic bond orbital analysis, which visualizes changes in electronic structure along reaction paths [19].

Performance Comparison and Experimental Data

Quantitative Performance Metrics

Recent experimental results from trapped-ion quantum computers provide quantitative data on the performance of quantum approaches to orbital correlation analysis. Research using the Quantinuum H1-1 trapped-ion quantum computer demonstrated calculation of von Neumann entropies quantifying orbital correlation and entanglement in the strongly correlated vinylene carbonate + O₂ reaction system [3]. The results showed excellent agreement with noiseless benchmarks, indicating that correlations and entanglement between molecular orbitals can be accurately estimated from quantum computation [3].

A key finding with implications for both accuracy and measurement efficiency is that one-orbital entanglement vanishes unless opposite-spin open shell configurations are present in the wavefunction when superselection rules are properly accounted for [3]. This highlights the importance of incorporating fundamental fermionic symmetries for correct quantification of orbital entanglement and correlation.

The incorporation of fermionic superselection rules led to a significant reduction in the number of circuits that needed to be measured when evaluating ORDM elements [3]. This reduction in measurement overhead represents a practical advantage for quantum computation of orbital correlations, potentially making larger molecular systems more accessible to study.
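Counting matrix elements shows why symmetry constraints shrink the measurement budget. The sketch below considers a two-orbital RDM, which has 16 local Fock states and therefore 256 elements, and counts how many can be non-zero. It assumes only that the molecular state has fixed numbers of spin-up and spin-down electrons, so it illustrates the general mechanism rather than reproducing the SSR-based circuit count reported in [3]. Matrix elements are also not the same as circuits, because one measurement circuit can yield many elements.

```python
from itertools import product

# Local states of one spatial orbital as (n_up, n_down).
LOCAL = [(0, 0), (1, 0), (0, 1), (1, 1)]
BASIS = list(product(LOCAL, repeat=2))  # 16 Fock states of two spatial orbitals


def spin_counts(state):
    """Return (N_up, N_down) for a two-orbital Fock state."""
    return sum(o[0] for o in state), sum(o[1] for o in state)


def parity(state):
    return sum(spin_counts(state)) % 2


def count_elements(allowed):
    """Count RDM elements <bra|rho|ket> permitted by the rule `allowed`."""
    return sum(1 for bra, ket in product(BASIS, repeat=2) if allowed(bra, ket))


total = count_elements(lambda bra, ket: True)
# Local parity superselection alone forbids even/odd coherences.
same_parity = count_elements(lambda bra, ket: parity(bra) == parity(ket))
# Conservation of N_up and N_down restricts elements to matching sectors.
same_sector = count_elements(lambda bra, ket: spin_counts(bra) == spin_counts(ket))
print(total, same_parity, same_sector)  # 256 128 36
```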

Table: Experimental Performance Metrics for Orbital Correlation Methods

| Methodology | System Tested | Accuracy Metrics | Measurement/Computational Efficiency |
|---|---|---|---|
| Quantum Entanglement Measures | Vinylene carbonate + O₂ (6e, 9 MOs) | Excellent agreement with noiseless benchmarks for von Neumann entropies [3] | Significant reduction in circuits via SSRs and commuting Pauli sets [3] |
| Natural Bond Orbital (NBO) | Broad applicability (2,000+ annual publications) | Provides chemically intuitive interpretation of complex wavefunctions [17] | Established implementation in major electronic structure packages [17] |
| Correlated Orbital Theory (COT) | PBE-like functionals | Systematically enhances performance for charge transfer and reaction barriers [18] | Optimization of existing functionals through physical constraints [18] |
| Intrinsic Atomic Orbital (IAO) | Reaction mechanisms, unusual bonding | Reveals electron flow in reaction mechanisms from first principles [19] | Fast visualization and publication-quality graphics [19] |

Application to Specific Molecular Systems

The quantum computation approach has been applied to molecular systems relevant to real-world applications. The vinylene carbonate + O₂ → dioxetane reaction is particularly significant in lithium-ion battery research, because vinylene carbonate interacts with O₂ molecules during battery degradation processes [3]. Quantum computation of orbital correlations along this reaction path revealed strong correlation as the oxygen bonds stretch to align with the C-C bond of the carbonate, followed by settling into the weakly correlated ground state of dioxetane [3].

The analysis captured the electronic behavior of transition states exhibiting strong static correlation, with orbital entropies giving a reasonable picture of a transition state in which the oxygen 2p orbitals are strongly correlated [3]. This demonstrates that orbital correlation analysis can provide insight into chemically significant processes, such as strongly correlated transition states, that are difficult to treat with conventional single-reference methods.

The Scientist's Toolkit: Research Reagent Solutions

Implementing orbital correlation analysis requires specialized computational tools and resources. The following table details essential "research reagent solutions" for scientists pursuing these methodologies.

Table: Essential Research Reagents for Orbital Correlation Analysis

| Tool/Resource | Function | Methodology Compatibility |
|---|---|---|
| Quantinuum H1-1 Trapped-Ion Quantum Computer | Quantum hardware for executing measurement circuits and reconstructing ORDMs [3] | Quantum Entanglement Measures |
| PySCF Package | Python-based quantum chemistry for NEB calculations, AVAS projections, and CASSCF [3] | Quantum and Classical Methods |
| NBO 7.0 | Implements natural bond orbital analysis for interpreting wavefunctions [17] | NBO Analysis |
| IboView | Analyzes molecular electronic structure based on Intrinsic Atomic Orbitals [19] | IAO Analysis |
| El Agente Q | AI agentic system for automating quantum chemistry calculations [20] | Quantum Chemistry Setup |
| Ansys ODTK | Orbit determination for space missions [21] | Not applicable to molecular orbitals (concerns spacecraft orbits) |

The comparative analysis of orbital-based correlation quantification methods reveals distinct strengths and applications for each approach. Quantum entanglement measures are most promising for strongly correlated systems, where classical methods must store the full wavefunction and therefore face exponentially growing memory requirements. Recent demonstrations on quantum hardware reported close agreement with noiseless benchmarks and reduced measurement overhead through the incorporation of superselection rules and the grouping of Pauli operators [3].

Classical approaches including NBO, COT, and IAO analyses remain indispensable tools for interpreting electronic structure in chemically intuitive terms and will continue to serve as workhorse methods for routine analyses and educational purposes [17] [19]. The emerging trend of AI-enhanced tools like El Agente Q promises to democratize access to these complex calculations, potentially making quantum chemical computations accessible to non-specialists through specialized AI agents that handle different aspects of the computational workflow [20].

For researchers and drug development professionals, the choice of methodology depends critically on the specific molecular system, available computational resources, and the nature of the chemical questions being addressed. As quantum hardware continues to advance and classical algorithms become more sophisticated, the integration of these complementary approaches will likely provide the most comprehensive insights into the correlation between molecular orbitals, ultimately enhancing our ability to understand and design molecular systems with precision.

In the study of quantum matter, superselection rules (SSRs) act as fundamental physical constraints linked to the conservation of quantities such as particle number or parity. These rules restrict the allowable physical operations and states within a quantum system, thereby shaping which quantum correlations can be extracted and used. For fermionic systems, such as the electrons in molecules, the parity SSR is of central importance. It dictates that physical states must have definite fermion-number parity, forbidding coherent superpositions of states with even and odd numbers of fermions. This strongly affects how entanglement between different modes or orbitals within a molecule can be quantified and manipulated. Research indicates that ignoring these rules can significantly overestimate entanglement, giving an inaccurate picture of the quantum resources present in a system [22] [3] [23]. This guide compares how fermionic SSRs affect entanglement quantification and manipulation in the context of molecular entanglement research.

Theoretical Foundation: SSRs and the Structure of Entanglement

The Physical Principle of SSRs

Superselection rules arise from fundamental symmetries and impose a restriction on the Hilbert space of a quantum system. The fermionic parity SSR, in particular, stipulates that all physically allowed states must be eigenstates of the total parity operator. Consequently, operations that generate superpositions of even and odd numbers of fermions are deemed unphysical. This divides the state space into distinct, disconnected sectors—the superselection sectors—between which coherent transitions are forbidden [24] [25]. This structure is not merely a mathematical curiosity; it has tangible consequences for quantum information processing and error correction, where it can be shown that the existence of a superselection rule implies the Knill-Laflamme condition for quantum error correction [24].

The Separable Continent and the Fate of Entanglement

A powerful way to visualize the impact of physical constraints on entanglement is the concept of the "separable continent" within the space of all physical states. In this analogy, the center of the continent is the maximally mixed, infinite-temperature state, which is entirely devoid of entanglement. As a system evolves—for instance, as it heats up, evolves in time, or as its parts become separated—its state typically moves toward this interior, and all forms of multi-party entanglement eventually disappear [26]. Fermionic SSRs fundamentally alter the geography of this continent. They shrink the physical state space by forbidding states that violate parity conservation, which in turn affects the amount of mode entanglement that can be extracted from a given quantum state and manipulated using local operations and classical communication (LOCC) [25].
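Entanglement disappearing as a state moves toward the maximally mixed center can be seen in the smallest possible example. The sketch below is a generic two-qubit illustration, not a fermionic one. It mixes a Bell state with the maximally mixed state, ρ(p) = p|Φ⁺⟩⟨Φ⁺| + (1 - p)I/4, and applies the partial-transpose (PPT) test, which is necessary and sufficient for separability of two qubits. The negativity is (3p - 1)/4 above p = 1/3 and exactly zero below it.

```python
import numpy as np


def isotropic_bell_state(p):
    """p |Phi+><Phi+| + (1 - p) I/4 on two qubits."""
    phi = np.array([1.0, 0.0, 0.0, 1.0]) / np.sqrt(2.0)
    return p * np.outer(phi, phi) + (1.0 - p) * np.eye(4) / 4.0


def partial_transpose_b(rho):
    # Index as rho[a, b, a', b'] and swap b <-> b'.
    return rho.reshape(2, 2, 2, 2).transpose(0, 3, 2, 1).reshape(4, 4)


def negativity(rho):
    """Sum of |negative eigenvalues| of the partial transpose."""
    evals = np.linalg.eigvalsh(partial_transpose_b(rho))
    return float(np.sum(np.abs(evals[evals < 0])))


for p in (0.0, 0.25, 0.5, 0.75, 1.0):
    print(f"p = {p:.2f}: negativity = {negativity(isotropic_bell_state(p)):.4f}")
```

Moving p toward 0 walks the state into the separable interior. Entanglement is lost at a finite distance from the maximally mixed center, not only at the center itself.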

Comparative Analysis: SSR-Constrained vs. Unconstrained Entanglement

The following table compares the key characteristics of the quantum resource theory for fermionic mode entanglement with and without the consideration of superselection rules.

Table 1: Comparative analysis of entanglement frameworks with and without Superselection Rules (SSRs).

| Feature | Framework with SSRs | Framework Without SSRs |
|---|---|---|
| Physical State Space | Restricted to states with definite parity (superselection sectors) [25]. | Includes all states in the Hilbert space, including those with indefinite parity. |
| Allowed Operations | Local operations and classical communication restricted by local parity conservation (\( \text{LOCC}_{\text{SR}} \)) [25]. | Standard LOCC without parity constraints. |
| Extractable Entanglement | Reduced; some entanglement is inaccessible under SSR-restricted operations [25]. | Potentially higher, as all correlations are considered accessible. |
| One-Orbital Entanglement | Can vanish unless opposite-spin open shell configurations are present [22] [3] [23]. | May be overestimated by including non-physical correlations. |
| Experimental Measurement | Reduced measurement overhead due to fewer measurable operators [22] [3] [23]. | Higher measurement overhead required to characterize all correlations. |
| State Manipulation | More constrained; may require catalytic processes to achieve certain transformations [25]. | More permissive, with a wider range of possible state transformations. |
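The contrast between the two columns can be reproduced for toy two-orbital pure states. This sketch is an assumption-laden illustration, not the measure used in [3] or [25]. It applies the particle-number SSR and the Wiseman-Vaccaro form for pure states, which projects onto each local electron number of orbital A and averages the resulting entanglement entropies. A closed-shell pair superposition has sizable naive entanglement but none that is accessible under this SSR, whereas an opposite-spin open-shell singlet keeps its entanglement. This mirrors the one-orbital trend in the table. The state parameters are hypothetical.

```python
import numpy as np

# Local basis of one spatial orbital: |0>, |up>, |down>, |up down>.
LOCAL_N = np.array([0, 1, 1, 2])  # electrons in each local state


def entanglement_entropy(c):
    """Entropy (natural log) of orbital A for a normalized pure state with
    coefficient matrix c[a, b] (orbital A index a, orbital B index b)."""
    p = np.linalg.svd(c, compute_uv=False) ** 2
    p = p[p > 1e-12]
    return float(-np.sum(p * np.log(p)))


def number_ssr_entanglement(c):
    """Project onto each local particle number n of orbital A and average the
    entropies of the normalized projected states with weights p_n."""
    total = 0.0
    for n in np.unique(LOCAL_N):
        c_n = np.where((LOCAL_N == n)[:, None], c, 0.0)
        p_n = float(np.sum(np.abs(c_n) ** 2))
        if p_n > 1e-12:
            total += p_n * entanglement_entropy(c_n / np.sqrt(p_n))
    return total


theta = 0.4
closed_shell = np.zeros((4, 4))
closed_shell[3, 0] = np.cos(theta)    # |up down>_A |0>_B
closed_shell[0, 3] = -np.sin(theta)   # |0>_A |up down>_B

open_shell = np.zeros((4, 4))
open_shell[1, 2] = 1 / np.sqrt(2)     # |up>_A |down>_B
open_shell[2, 1] = -1 / np.sqrt(2)    # |down>_A |up>_B

for name, c in [("closed-shell pair", closed_shell), ("open-shell singlet", open_shell)]:
    print(f"{name}: naive {entanglement_entropy(c):.4f}, "
          f"N-SSR {number_ssr_entanglement(c):.4f}")
```

Under the weaker parity SSR, the closed-shell example would not vanish, because both of its terms have even local parity. The choice of superselection rule therefore matters when comparing numbers across studies.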

Experimental Protocols: Quantifying Orbital Entanglement under SSRs

Workflow for Quantum Computation of Orbital Entropies

A leading experimental approach for quantifying orbital correlation and entanglement under SSRs involves using a trapped-ion quantum computer. The following diagram illustrates the generalized workflow for such an experiment, as demonstrated in the study of vinylene carbonate reacting with O₂ [3] [23].

Diagram 1: Workflow for computing orbital entanglement on a quantum computer.

Detailed Methodology

The protocol can be broken down into the following key steps, which detail the experimental and computational procedures:

Classical Electronic Structure Calculation:

- System Preparation: The minimum-energy path of a chemical reaction (e.g., vinylene carbonate + O₂ → dioxetane) is determined using methods like the Nudged Elastic Band (NEB). Atomic geometries ("images") along this path are extracted [3].

- Active Space Selection: An Atomic Valence Active Space (AVAS) projection is performed to identify a localized set of molecular orbitals most relevant to strong correlation (e.g., projecting onto the p orbitals of O₂). This yields a manageable active space (e.g., 6 electrons in 9 orbitals) [3].

- Wavefunction Optimization: Complete Active Space Self-Consistent Field (CASSCF) calculations are performed on a subset of the AVAS orbitals to optimize both the configuration interaction (CI) coefficients and the molecular orbital coefficients, providing a high-quality classical benchmark [3].

Quantum State Preparation and Execution:

- Qubit Encoding: The fermionic Hamiltonian and wavefunction for the selected active space are encoded into qubits using a Jordan-Wigner transformation [23].

- Ansatz Optimization: A variational quantum eigensolver (VQE) ansatz is optimized offline to prepare the ground state wavefunctions corresponding to the different reaction coordinate images [23].

- Hardware Execution: The resulting quantum circuits are executed on a trapped-ion quantum computer (e.g., Quantinuum H1-1) [3] [23].
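"Optimized offline" means that the ansatz parameters are found by classical simulation before any hardware time is spent. The sketch below shows that idea on a hypothetical 2×2 Hamiltonian in a basis of two closed-shell configurations, with a one-parameter ansatz cos θ|0⟩ + sin θ|1⟩ (equivalent to RY(2θ)|0⟩). It is not the ansatz used in [3]. Because E(θ) = A + B cos 2θ + C sin 2θ, the parameter-shift difference E(θ + π/4) - E(θ - π/4) is the exact derivative.

```python
import numpy as np

# Hypothetical Hamiltonian in a basis of two closed-shell configurations.
H = np.array([[-1.0, 0.3],
              [0.3, -0.5]])


def ansatz(theta):
    # Equivalent to applying RY(2 * theta) to |0>.
    return np.array([np.cos(theta), np.sin(theta)])


def energy(theta):
    psi = ansatz(theta)
    return float(psi @ H @ psi)


def parameter_shift_gradient(theta):
    # Exact for E(theta) = A + B cos(2 theta) + C sin(2 theta).
    return energy(theta + np.pi / 4) - energy(theta - np.pi / 4)


def optimize_offline(theta=0.0, learning_rate=0.5, n_iter=200):
    # Plain gradient descent on the classically simulated energy.
    for _ in range(n_iter):
        theta -= learning_rate * parameter_shift_gradient(theta)
    return theta


theta_opt = optimize_offline()
print(energy(theta_opt), np.linalg.eigvalsh(H)[0])
```

Only the optimized angle, not the optimization loop, would then be compiled into the circuit that runs on the hardware.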

SSR-Aware Measurement and Post-Processing:

- Reduced Density Matrix Construction: Orbital Reduced Density Matrices (ORDMs) are constructed from measurements on the quantum hardware. Adherence to fermionic SSRs significantly reduces the number of measurement circuits required by restricting the measurable operators and allowing for efficient grouping into commuting sets [22] [3] [23].

- Noise Mitigation: Low-overhead, post-measurement noise reduction techniques are applied. This involves thresholding to filter small, unphysical singular values from the noisy ORDMs, followed by a maximum likelihood estimation to reconstruct physical density matrices [3] [23].

- Entropy Calculation: Von Neumann entropies are calculated from the eigenvalues of the noise-cleaned ORDMs. These entropies quantify the orbital correlation and entanglement present in the system, with SSR constraints properly accounted for [22] [3].
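The grouping step can be sketched as a greedy pass over Pauli strings. This minimal sketch uses qubit-wise commutativity, a stricter criterion than general commutativity that allows each group to be measured with single-qubit basis rotations. Whether [3] used this criterion or general commutation is not stated here, and the Pauli strings are hypothetical.

```python
def qubit_wise_commute(p, q):
    """Pauli strings such as 'XIZY' commute qubit-wise if on every qubit they
    act with the same Pauli or at least one of them is the identity."""
    return all(a == b or a == "I" or b == "I" for a, b in zip(p, q))


def greedy_qwc_groups(paulis):
    """Place each string in the first group it is compatible with."""
    groups = []
    for p in paulis:
        for group in groups:
            if all(qubit_wise_commute(p, q) for q in group):
                group.append(p)
                break
        else:
            groups.append([p])
    return groups


# Hypothetical Pauli strings on four qubits.
terms = ["ZIII", "IZII", "ZZII", "XXII", "YYII", "XXZZ", "IIXX", "IIYY", "ZIZI"]
groups = greedy_qwc_groups(terms)
for g in groups:
    print(g)
```

Greedy grouping is not optimal in general; finding the minimum number of groups is a graph-colouring problem, and heuristics like this one trade optimality for speed.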

Key Experimental Findings and Data

The application of the above protocol to the VC + O₂ reaction system yielded quantitative results that underscore the role of SSRs. The data in the table below summarizes the key findings regarding orbital entanglement in the molecular system.

Table 2: Experimental results on orbital entanglement from a trapped-ion quantum computer.

| Experimental Parameter | Finding | Impact of SSR |
|---|---|---|
| One-Orbital Entanglement | Vanishes unless opposite-spin open shell configurations are present in the wavefunction [22] [3] [23]. | Prevents overestimation; nonzero one-orbital entanglement signals opposite-spin open-shell character. |
| Two-Orbital Mutual Information | Peaks at the transition state where oxygen bonds are stretched and static correlation is strongest [3] [23]. | Quantifies total (quantum + classical) correlation between orbitals during bond breaking/formation. |
| Measurement Circuit Reduction | The number of circuits needed was significantly reduced by grouping Pauli operators into commuting sets under SSR constraints [3] [23]. | Makes quantum computation of entanglement more efficient and feasible. |
| Agreement with Benchmark | After noise mitigation, calculated von Neumann entropies showed excellent agreement with noiseless classical simulations [22] [3]. | Validates the accuracy of the SSR-aware quantum computation protocol. |

For researchers aiming to investigate fermionic entanglement and SSRs, either classically or on quantum hardware, the following tools are essential.

Table 3: Key research reagents and solutions for studying orbital entanglement.

| Tool / Resource | Function | Example in Context |
|---|---|---|
| Active Space Model | Reduces the computational cost of electronic structure calculations by focusing on a subset of chemically relevant electrons and orbitals. | AVAS (Atomic Valence Active Space) projection onto O₂ p orbitals [3]. |
| CASSCF Method | Provides a high-accuracy classical benchmark wavefunction that captures strong (static) correlation in molecules. | Used to optimize orbitals and CI coefficients for the VC+O₂ reaction path [3]. |
| Jordan-Wigner Encoding | Maps fermionic creation/annihilation operators to qubit (Pauli) operators, enabling simulation on a quantum computer. | Encodes the (4,6) active space problem into qubits for the VQE [23]. |
| Trapped-Ion Quantum Computer | Provides a high-fidelity quantum processing unit with all-to-all connectivity to run the quantum circuits. | Quantinuum H1-1 system used for state preparation and measurement [3] [23]. |
| Commuting Set Partitioning | Groups measurable Pauli operators into sets that can be measured simultaneously, drastically reducing measurement overhead. | Enabled by the structure of ORDMs under parity SSR [3] [23]. |
| Noise Mitigation Algorithms | Post-processing techniques that filter out noise from experimental data to reconstruct a physical quantum state. | Singular value thresholding and maximum likelihood estimation applied to noisy ORDMs [3] [23]. |

The imposition of fermionic superselection rules is not a limitation to be overlooked but a fundamental feature that correctly defines the landscape of physically accessible quantum correlations in molecular systems. As comparative studies show, ignoring SSRs leads to an inflated and non-physical estimate of entanglement, while their incorporation provides a more accurate and experimentally efficient pathway for quantification. The successful calculation of orbital entropies on a quantum computer, which aligns with noiseless benchmarks, confirms that SSR-constrained entanglement is both a measurable and meaningful quantum resource [22] [3] [23]. Furthermore, the emerging resource theory of mode entanglement under SSR suggests pathways for its manipulation, potentially using catalytic processes to unlock otherwise inaccessible correlations [25]. For researchers in quantum chemistry and drug development, acknowledging the role of symmetry through superselection rules is therefore crucial for elucidating the true role of quantum effects in reaction processes and molecular interactions.

The fields of chemical bonding and quantum information theory are increasingly intertwined. Chemical intuition—the deep, often qualitative understanding chemists develop about molecular structure and reactivity—is now being formalized through the rigorous mathematics of quantum information. This guide compares the core methodologies and their applications in modern research, focusing on how entanglement measures and correlation quantification are providing new insights into electronic structure. The translation of classical chemical concepts into a quantum information framework is enabling a more nuanced understanding of strongly correlated systems, from transition states in lithium-ion battery reactions to the design of novel quantum materials [27] [3] [28].

At the heart of this convergence is the recognition that the challenges of accurately simulating molecular electronic structure, particularly for strongly correlated systems with near-degenerate electronic states, share profound connections with the resource management challenges in quantum computing. This comparison guide objectively examines the experimental protocols, data outputs, and material requirements for researchers navigating this interdisciplinary landscape.

Theoretical Frameworks: A Comparative Analysis

Core Conceptual Equivalences

The table below compares traditional chemical concepts with their quantum information theory analogues, highlighting the formal connections that enable this interdisciplinary research.

Table 1: Comparison of Core Concepts in Electronic Structure and Quantum Information Theory

| Chemical Concept | Quantum Information Analog | Relationship and Significance |
|---|---|---|
| Electronic Correlation | Quantum & Classical Correlation | Measured via orbital-orbital mutual information; partitions total correlation into entangled and separable components [3] [29]. |
| Chemical Bond (Covalent) | Quantum Entanglement | Entanglement entropy between orbital subsets reveals bond formation/breaking beyond classical descriptions [3] [29]. |
| Open Shell Configurations | Non-Vanishing Single-Orbital Entanglement | One-orbital entanglement is zero unless opposite-spin open shell configurations exist (considering superselection rules) [3]. |
| Electron Delocalization | Quantum Coherence | Non-classical information terms in entropy measures capture phase-related delocalization effects [29]. |
| Transition State Theory | Entanglement Maximization | Entanglement and correlation often peak at stretched geometries and transition states, indicating strong electron correlation [3]. |
| Molecular Wavefunction | Multi-Qubit Quantum State | The full-CI wavefunction can be represented as a state vector on qubits; its complexity dictates classical simulability [27] [3]. |

Quantitative Metrics for Correlation and Entanglement

The following table summarizes the key quantitative metrics used to measure correlation and entanglement in molecular systems, their mathematical definitions, and the chemical phenomena they help elucidate.

Table 2: Key Quantitative Metrics for Correlation and Entanglement in Molecules

| Metric | Definition/Formula | Extracted From | Reveals Information About |
|---|---|---|---|
| Orbital Entropy (S⁽¹⁾) | Von Neumann entropy of the 1-orbital RDM: \( S_i = -\text{Tr}(\rho_i \log \rho_i) \) | 1-Orbital Reduced Density Matrix (1-ORDM) | Local electron correlation at a specific molecular orbital [3]. |
| Mutual Information (I₂) | \( I_{ij} = \frac{1}{2}(S_i + S_j - S_{ij})(1 - \delta_{ij}) \) | 1- and 2-Orbital RDMs | Total (quantum + classical) correlation between orbital pair \( (i, j) \) [3]. |
| Interacting Quantum Atoms (IQA) | Energy partitioning between atoms-in-molecules | Wavefunction Analysis | Energetic contributions to chemical bonding, complementing information measures [29]. |
| Electron Localization Function (ELF) | Related to non-additive Fisher information | Electron Density | Regions of space with high probability of finding electron pairs [29]. |
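The orbital entropy and mutual-information formulas can be evaluated directly from a small state vector. This sketch makes several assumptions. It uses a hypothetical pure state written in the occupation basis of three spatial orbitals, with local states |0⟩, |↑⟩, |↓⟩, |↑↓⟩ per orbital. It uses natural logarithms and keeps the factor 1/2 in the mutual-information formula (some authors omit it). When orbitals are reordered, it applies the fermionic sign (-1)^(N_a N_b) for each pair of orbital blocks that is swapped. On quantum hardware the ORDMs would instead be estimated from measurements.

```python
import numpy as np
from itertools import combinations

LOCAL_N = np.array([0, 1, 1, 2])  # electrons in |0>, |up>, |down>, |up down>


def von_neumann_entropy(rho):
    evals = np.linalg.eigvalsh(rho)
    evals = evals[evals > 1e-12]
    return float(-np.sum(evals * np.log(evals)))


def orbital_rdm(psi, keep):
    """RDM of the orbitals in `keep` (sorted tuple); psi has one length-4
    axis per spatial orbital."""
    n_orb = psi.ndim
    order = list(keep) + [k for k in range(n_orb) if k not in keep]
    occ = [LOCAL_N[idx] for idx in np.indices(psi.shape)]
    sign = np.ones(psi.shape)
    # Moving orbital block b in front of block a (a < b) costs (-1)^(N_a N_b).
    for a, b in combinations(range(n_orb), 2):
        if order.index(b) < order.index(a):
            sign *= (-1.0) ** (occ[a] * occ[b])
    m = np.transpose(psi * sign, order).reshape(4 ** len(keep), -1)
    return m @ m.conj().T


def entropies_and_mutual_information(psi):
    n_orb = psi.ndim
    s1 = [von_neumann_entropy(orbital_rdm(psi, (i,))) for i in range(n_orb)]
    mi = np.zeros((n_orb, n_orb))
    for i, j in combinations(range(n_orb), 2):
        s_ij = von_neumann_entropy(orbital_rdm(psi, (i, j)))
        mi[i, j] = mi[j, i] = 0.5 * (s1[i] + s1[j] - s_ij)
    return s1, mi


theta = 0.3
psi = np.zeros((4, 4, 4))
psi[3, 0, 0] = np.cos(theta)   # both electrons in orbital 0
psi[0, 3, 0] = -np.sin(theta)  # both electrons in orbital 1
s1, mi = entropies_and_mutual_information(psi)
print(np.round(s1, 4))
print(np.round(mi, 4))
```

In this example only orbitals 0 and 1 share correlation, so the mutual information with the empty orbital 2 is zero.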

Experimental and Computational Methodologies

Workflow for Quantifying Orbital Entanglement

The following diagram illustrates the generalized workflow for an experiment or calculation that quantifies entanglement and correlation between molecular orbitals, integrating both classical and quantum computational steps.

Diagram 1: Workflow for Orbital Entanglement

Detailed Experimental Protocols

Protocol 1: Quantum Computation of Orbital Entropies on a Trapped-Ion Quantum Computer (Based on [3])

This protocol details the specific methodology used to calculate orbital von Neumann entropies for the VC + O₂ → dioxetane reaction, a system relevant to lithium-ion battery degradation.

A. System Preparation & Classical Preprocessing

- Reaction Path Determination: Use the Nudged Elastic Band (NEB) method with DFT (e.g., PBE functional) to find the minimum-energy path and extract key geometries ("images").

- Active Space Construction: Apply the Atomic Valence Active Space (AVAS) method. Project canonical molecular orbitals onto chosen atomic orbitals (e.g., oxygen p orbitals of O₂) to obtain a chemically intuitive, localized orbital basis. This yields an initial active space (e.g., 6 electrons in 9 orbitals).

- Wavefunction Optimization: Perform Complete Active Space Self-Consistent Field (CASSCF) calculations on a subset of the AVAS orbitals (e.g., 4 orbitals, 6 electrons) to determine the most important electronic configurations and optimize orbital coefficients.

B. Quantum Computing Execution

- Qubit Encoding: Map the fermionic Hamiltonian of the active space to qubit operators using the Jordan-Wigner transformation.

- State Preparation: Pre-optimize a Variational Quantum Eigensolver (VQE) ansatz for the relevant molecular states (e.g., at different reaction path images) using classical simulators. Execute the corresponding parameterized circuits on the quantum hardware (e.g., Quantinuum H1-1) to prepare the ground state wavefunctions.

- Measurement for ORDM Construction:

  - Account for Superselection Rules (SSRs): Incorporate fermionic SSRs to avoid overestimation of entanglement and to significantly reduce the number of unique Pauli operator measurements required.

  - Pauli Grouping: Group the remaining necessary Pauli operators into commuting sets to minimize the number of distinct quantum circuit executions.

  - Execute Circuits: Run the measurement circuits on the quantum computer to gather statistics for estimating the elements of the 1- and 2-Orbital Reduced Density Matrices (ORDMs).

C. Post-Processing & Analysis

- Noise Mitigation: Apply low-overhead, post-measurement noise reduction techniques to the measured ORDMs. This may involve singular value thresholding to filter small, unphysical eigenvalues, followed by a maximum likelihood estimation to ensure the reconstructed ORDMs are physically valid.

- Entropy Calculation: Diagonalize the noise-reduced 1-ORDMs and 2-ORDMs. Compute the orbital von Neumann entropies from the eigenvalues of the 1-ORDMs. Compute the mutual information between orbital pairs using the entropies from the 1- and 2-ORDMs.

- Interpretation: Analyze the entropy and mutual information profiles across the reaction path to identify regions of strong correlation (e.g., the transition state) and validate against noiseless benchmarks.

Protocol 2: Classical Covalent/Ionic Character Analysis via Quantum Information (Based on [29])

This protocol uses quantum information tools applied to classically computed wavefunctions to dissect the nature of chemical bonds.

A. Wavefunction Calculation

- Perform high-level ab initio calculations (e.g., CASSCF, coupled-cluster) for the molecular system of interest to obtain an accurate electronic wavefunction.

B. Reduced Density Matrix Construction

- Construct the reduced density matrices for relevant subsystems. These subsystems can be individual atoms, functional groups, or specific molecular orbitals.

C. Entropy and Information Descriptor Calculation

- Calculate von Neumann entropies for the individual subsystems.

- Compute the mutual information between all pairs of subsystems. The mutual information quantifies the total correlation (both quantum and classical) between them.

- Use resultant entropy/information measures that combine classical (probability-based) and nonclassical (phase/current-based) contributions to distinguish between entangled (bonded) and non-entangled (non-bonded) states of reactants.

D. Bond Character Interpretation

- Covalent interactions: indicated by high mutual information between the electron densities of the two bonded atoms, signifying significant electron sharing and entanglement.

- Ionic interactions: characterized by a charge shift (evident in the one-electron density) but lower mutual information between the atomic basins, reflecting a more classical electrostatic interaction.

The Scientist's Toolkit: Essential Research Reagents & Materials

This section details key software, hardware, and computational resources used in the featured experiments and this field of research.

Table 3: Essential Research Tools and Resources

| Tool/Resource Name | Type/Category | Primary Function in Research |
|---|---|---|
| PySCF [3] | Software Library | A Python-based library for classical electronic structure calculations, including DFT, CASSCF, and AVAS, used for pre-processing and benchmark comparisons. |
| Quantinuum H1-1 [3] | Quantum Hardware | A trapped-ion quantum computer used for executing state preparation and measurement circuits to construct Orbital RDMs. |
| RDKit [30] | Cheminformatics Library | Used for generating molecular descriptors, fingerprints (e.g., ECFP), and handling molecular structures, particularly in machine learning studies. |
| DeepChem [31] | Machine Learning Library | An open-source platform providing high-quality implementations of featurization methods and models for molecular property prediction, including the MoleculeNet benchmark. |
| MoleculeNet [31] [30] | Benchmark Suite | A large-scale benchmark for molecular machine learning, curating multiple public datasets to standardize the evaluation of new algorithms. |
| Nitrogen-Vacancy (NV) Center in Diamond [32] | Quantum Sensor | Engineered defects in diamond used as highly sensitive magnetic field sensors; pairs of entangled NV centers enable probing magnetic fluctuations at the nanoscale. |

Data Presentation & Comparative Analysis

Representative Research Findings

The following table synthesizes key quantitative findings from recent studies, illustrating how entanglement and correlation metrics are applied to real chemical problems.

Table 4: Comparison of Key Research Findings and Outcomes

| Study & System | Key Metric(s) | Primary Finding | Implication for Chemical Intuition |
|---|---|---|---|
| VC + ¹O₂ → Dioxetane (Quantum Computation) [3] | Orbital Von Neumann Entropy, Mutual Information | Orbital entropies peak in the transition state region (images 7-10), then settle in the product. One-orbital entanglement vanishes without open-shell spin configurations (with SSR). | Validates that quantum transition states are highly correlated and that spin configurations fundamentally constrain entanglement. |
| Theoretical Framework for Reactivity [29] | Resultant Gradient Information, Mutual Information | Populational derivatives of electronic energy and resultant gradient information give identical predictions of electron flows between reactants. | Establishes a direct, quantitative link between information theory and the direction of chemical reactions (electron flow). |
| Donor-Acceptor Systems [29] | Covalent vs. Ionic Communication Channels | The hard/soft acid/base (HSAB) principle can be explained by analyzing the covalent (high MI) and ionic (charge transfer) contributions to inter-reactant communications. | Provides an information-theoretic foundation for a cornerstone empirical chemical rule. |
| Entangled NV Center Sensors [32] | Magnetic Field Sensitivity, Correlation Resolution | Entangling two NV centers (~10 nm apart) yielded a ~40x sensitivity increase over single sensors, revealing hidden magnetic fluctuations in materials. | Demonstrates that quantum resources (entanglement) directly enhance the ability to probe material properties at the relevant nanoscale. |

Logical Relationship of Concepts

The diagram below maps the logical progression from fundamental principles to chemical applications, showing how quantum information concepts are built upon one another to explain chemical phenomena.

Diagram 2: Concept Relationship Map

Measuring Molecular Entanglement: From Quantum Computers to Machine Learning

Quantifying correlation and entanglement between molecular orbitals provides crucial insight into quantum effects in strongly correlated chemical systems, which underlie processes such as chemical bonding and reaction pathways [3]. Computing these quantities classically, however, requires storing the complete wavefunction. Its size grows exponentially with the number of orbitals and quickly becomes prohibitive for complex molecules [3]. Quantum processors offer a promising alternative. Because they represent the quantum state natively, entanglement measures such as the von Neumann entropy can be estimated from measurements on the prepared state [3].

Trapped-ion quantum computers have emerged as a leading platform for these simulations because of their high-fidelity operations and long coherence times [33]. This section examines the experimental implementation of orbital entropy calculations on trapped-ion processors. It focuses on how the Quantinuum H1-1 system was used to characterize entanglement in a strongly correlated molecular system relevant to lithium-ion battery chemistry [3] [34].

Performance Comparison: Trapped-Ion vs. Alternative Quantum Platforms

The calculation of orbital entropies and mutual information requires specific hardware capabilities, including high gate fidelity, qubit connectivity, and coherence time. The table below compares the key performance characteristics of leading quantum computing modalities for quantum chemistry simulations.

Table 1: Performance Comparison of Quantum Computing Modalities for Chemical Simulations

| Performance Metric | Trapped-Ion (Quantinuum H1-1) | Superconducting (e.g., IBM) | Photonic (e.g., Xanadu) | Neutral Atom (e.g., QuEra) |
|---|---|---|---|---|
| Typical Two-Qubit Gate Fidelity | Very High (~99.9%) | High (~99.5-99.9%) | Varies | High (~99.5%) |
| Qubit Connectivity | All-to-all [35] | Nearest-neighbor | Varies | Programmable |
| Coherence Time | Long (seconds) [33] | Short (microseconds) | Moderate | Moderate |
| Measurement Fidelity | >99.5% [35] | ~95-99% | Varies | Varies |
| Operating Temperature | Cryogenic | Ultra-cryogenic (mK) | Room temperature | Cryogenic |
| Key Advantage for Chemistry | High-fidelity operations, all-to-all connectivity | Rapid gate operations | Room-temperature operation | Flexible qubit arrangements |

The Quantinuum H1-1 trapped-ion processor offers distinct advantages for quantum chemistry simulations, particularly its all-to-all qubit connectivity and high-fidelity operations [35]. Together, these allow the measurement circuits for orbital reduced density matrices (ORDMs) to be built without extensive SWAP operations, which helps preserve the accuracy of the resulting entropies [3].

Table 2: Experimental Results for Orbital Entropy Calculation on Quantinuum H1-1 [3] [34]

| Calculation Step | Implementation on H1-1 | Performance Metric | Classical Benchmark Comparison |
|---|---|---|---|
| State Preparation | Optimized VQE ansatz | Preparation fidelity >99% | Exact CI wavefunction |
| ORDM Construction | Pauli measurements with SSR | 60% reduction in measurements vs. no SSR | Full wavefunction storage |
| Noise Mitigation | Post-measurement filtering | Near-exact agreement with noiseless simulation | N/A |
| Entropy Calculation | Eigenvalues of reconstructed ORDM | Von Neumann entropy error <0.5% | Direct diagonalization |
| Total Circuit Resources | 4 molecular orbitals, 6 electrons | 16 qubits, ~200 parameterized gates | N/A |

Experimental Protocols for Orbital Entropy Calculation

Molecular System Preparation and Active Space Selection

The protocol for calculating orbital entropies on a trapped-ion quantum computer begins with classical computational chemistry methods to define the molecular system [3]:

System Preparation: Researchers studied the reaction between vinylene carbonate (VC) and singlet oxygen (¹O₂) that forms a dioxetane ring, a process relevant to lithium-ion battery degradation [3]. The minimum-energy path was determined with the nudged elastic band (NEB) method at the DFT (PBE) level.

Active Space Selection: An atomic valence active space (AVAS) projection selected molecular orbitals most relevant to strong correlation, specifically targeting oxygen p orbitals from the O₂ molecule [3]. This yielded 6 electrons in 9 molecular orbitals, later reduced to a (4,6) active space (4 orbitals, 6 electrons) for quantum computation.

Wavefunction Optimization: Complete active space self-consistent field (CASSCF) calculations optimized the configuration coefficients and the active orbitals, with the constraint ⟨S²⟩ = 0 enforcing a singlet state [3].

Quantum Computation of Orbital Reduced Density Matrices

The core quantum computational protocol involves preparing the chemical state and measuring orbital reduced density matrices:

Qubit Encoding: The molecular Hamiltonian is mapped to qubit operators using Jordan-Wigner transformation [3].

State Preparation: The ground state wavefunction is prepared using a variational quantum eigensolver (VQE) ansatz optimized offline [3].

Measurement Strategy: Orbital reduced density matrices (ORDMs) are constructed by measuring relevant Pauli operators partitioned into commuting sets while respecting fermionic superselection rules (SSRs), reducing measurement overhead by approximately 60% compared to naive approaches [3] [34].

Noise Mitigation: A low-overhead, post-measurement noise-reduction scheme is applied in two stages. First, singular value thresholding filters small values from the noisy ORDMs. Then maximum likelihood estimation (MLE) reconstructs physical ORDMs [3].

Entropy Calculation: Von Neumann entropies are computed from the eigenvalues of the reconstructed ORDMs as S(ρ) = -Tr(ρ ln ρ), where ρ is the one- or two-orbital reduced density matrix [3].
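The last two protocol steps can be sketched in NumPy as below. This is a minimal illustration under stated assumptions, not the procedure used in [3]. The threshold `tol` is an arbitrary choice. For the MLE step, we substitute the Smolin-Gambetta-Smith eigenvalue projection, which gives the maximum-likelihood state under an additive Gaussian noise model; the estimator actually used in [3] may differ. All function names and the example matrix are hypothetical.

```python
import numpy as np


def threshold_small_components(rho_noisy, tol=1e-2):
    """Singular value thresholding of a noisy, Hermitian-symmetrized estimate.

    For a Hermitian matrix the singular values are the absolute eigenvalues,
    so components with |eigenvalue| < tol are zeroed in the eigenbasis.
    """
    rho = 0.5 * (rho_noisy + rho_noisy.conj().T)
    evals, evecs = np.linalg.eigh(rho)
    evals = np.where(np.abs(evals) < tol, 0.0, evals)
    return (evecs * evals) @ evecs.conj().T


def project_to_density_matrix(rho):
    """Unit-trace, positive semidefinite matrix closest to rho in Frobenius norm.

    Stand-in for the MLE step (assumption): the Smolin-Gambetta-Smith
    eigenvalue projection, i.e. the maximum-likelihood state under additive
    Gaussian noise.
    """
    rho = 0.5 * (rho + rho.conj().T)
    rho = rho / np.trace(rho).real
    mu, vecs = np.linalg.eigh(rho)
    mu, vecs = mu[::-1], vecs[:, ::-1]  # descending eigenvalues
    lam = np.zeros_like(mu)
    acc, i = 0.0, len(mu)
    # Drop the most negative eigenvalues one at a time and spread their
    # (negative) weight evenly over the eigenvalues that remain.
    while i > 0 and mu[i - 1] + acc / i < 0:
        acc += mu[i - 1]
        i -= 1
    if i > 0:
        lam[:i] = mu[:i] + acc / i
    return (vecs * lam) @ vecs.conj().T


def von_neumann_entropy(rho, eps=1e-12):
    """S(rho) = -Tr(rho ln rho) from the eigenvalues, using 0 ln 0 = 0."""
    evals = np.linalg.eigvalsh(rho)
    evals = evals[evals > eps]
    return float(-np.sum(evals * np.log(evals)))


def mitigated_orbital_entropy(rho_noisy, tol=1e-2):
    """Threshold, project to a physical state, then evaluate the entropy."""
    rho = project_to_density_matrix(threshold_small_components(rho_noisy, tol))
    return von_neumann_entropy(rho)


# Hypothetical noisy one-orbital RDM in the basis (empty, up, down, double):
# the small off-diagonal entry and the negative eigenvalue mimic shot noise.
example_rdm = np.array([
    [0.62, 0.004, 0.0, 0.0],
    [0.004, 0.19, 0.0, 0.0],
    [0.0, 0.0, 0.21, 0.0],
    [0.0, 0.0, 0.0, -0.02],
])
print(mitigated_orbital_entropy(example_rdm))
```

The projection keeps the eigenvectors of the estimate and adjusts only its spectrum. This keeps it cheap enough to apply to every ORDM along a reaction path.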

Table 3: Essential Research Resources for Quantum Computational Chemistry

| Resource Category | Specific Tool/Platform | Function in Research |
|---|---|---|
| Quantum Hardware | Quantinuum H1-1 Trapped-Ion QPU | Executes quantum circuits for ORDM measurement |
| Classical Computational Chemistry | PySCF | Performs DFT, AVAS, and CASSCF calculations |
| Quantum Development Environment | TKET, Qiskit, CUDA-Q | Compiles and optimizes quantum circuits |
| Molecular Visualization | NGL Viewer | Visualizes molecular structures and orbitals [3] |
| Reaction Path Sampling | Nudged Elastic Band (NEB) | Determines minimum energy reaction pathways [3] |
| Noise Mitigation Algorithms | Singular Value Thresholding + MLE | Reduces measurement noise in reconstructed ORDMs [3] |
| Entanglement Quantification | Von Neumann Entropy Calculator | Computes orbital entropies from ORDM eigenvalues |

Quantum Advantage in Entanglement Measurement

Combining trapped-ion quantum processors with classical computational chemistry methods gives direct, orbital-resolved access to entanglement in molecules. The experimental implementation on Quantinuum H1-1 demonstrated that:

Measurement Efficiency: Fermionic superselection rules combined with commutative measurement grouping reduced circuit measurement requirements by approximately 60% [3] [34].

Accuracy: Despite hardware noise, noise mitigation techniques yielded orbital entropies in "excellent agreement with noiseless benchmarks" [3], with errors below 0.5% for the target molecular system.

Fundamental Insight: The quantum computation showed that, once SSRs are imposed, one-orbital entanglement vanishes unless opposite-spin open-shell configurations are present in the wavefunction [3] [34]. This clarifies the structure of entanglement in molecular systems.
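The SSR result can be reproduced on a toy model. The sketch below treats orbital A as one spatial orbital with local basis (empty, up, down, doubly occupied) and lets a second orbital stand in for the rest of the system. It compares the ordinary entanglement entropy with the entanglement that remains accessible under a particle-number SSR. For that, it uses the standard pure-state expression Σₙ pₙ E(ψₙ), where ψₙ is the state projected onto local particle number n of orbital A. The helper names and example states are hypothetical. The amplitude signs are a convention assumed here and do not change the entropies.

```python
import numpy as np

# Assumed local basis of one spatial orbital: 0 = empty, 1 = up, 2 = down,
# 3 = doubly occupied, with the corresponding local particle numbers:
LOCAL_N = np.array([0, 1, 1, 2])


def entropy_from_probs(p, eps=1e-12):
    """Shannon entropy (in nats) of a probability vector, using 0 ln 0 = 0."""
    p = p[p > eps]
    return float(-np.sum(p * np.log(p)))


def schmidt_entropy(m):
    """Entanglement entropy of a normalized pure state given as a (dim_A, dim_B)
    coefficient matrix, from its squared singular values (Schmidt coefficients)."""
    return entropy_from_probs(np.linalg.svd(m, compute_uv=False) ** 2)


def one_orbital_entanglement(m):
    """Return (entropy without SSR, entanglement under particle-number SSR) of orbital A.

    Under the SSR the pure-state value is sum_n p_n E(psi_n), where psi_n is the
    state projected onto local particle number n of orbital A.
    """
    unrestricted = schmidt_entropy(m)
    restricted = 0.0
    for n in (0, 1, 2):
        block = m * (LOCAL_N == n)[:, None]  # keep rows with n electrons on A
        p_n = float(np.sum(np.abs(block) ** 2))
        if p_n > 1e-12:
            restricted += p_n * schmidt_entropy(block / np.sqrt(p_n))
    return unrestricted, restricted


# Hypothetical two-orbital, two-electron singlets; rows index orbital A, columns orbital B.
closed_shell = np.zeros((4, 4))
closed_shell[3, 0] = closed_shell[0, 3] = 1 / np.sqrt(2)  # (|20> + |02>)/sqrt(2)
open_shell = np.zeros((4, 4))
open_shell[1, 2], open_shell[2, 1] = 1 / np.sqrt(2), -1 / np.sqrt(2)  # (|ud> - |du>)/sqrt(2)

for name, state in [("closed-shell", closed_shell), ("open-shell", open_shell)]:
    print(name, one_orbital_entanglement(state))
```

Both states give ln 2 without the SSR, but only the open-shell singlet keeps its entanglement under the SSR. The sectors with zero or two electrons on orbital A are one-dimensional, so only the singly occupied sector can contribute. It does so only when both spin orientations appear in it.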

Future progress in quantum computing for chemical simulation will benefit from continued advances in trapped-ion architectures, including photonic integration for improved scaling and quantum error correction for larger-scale simulations [35]. As quantum hardware matures, orbital entropies and other entanglement measures will provide fundamental insights into strongly correlated chemical systems that remain computationally prohibitive for classical approaches.

Orbital reduced density matrices (ORDMs) are fundamental objects in quantum chemistry for analyzing electronic structure. They quantify the correlation and entanglement between specific molecular orbitals, offering insight into chemical bonding and reactivity that energy-based analysis alone does not provide. ORDMs are related to, but distinct from, the particle reduced density matrices. The n-particle reduced density matrix (n-RDM) contains all the information needed to compute expectation values of n-body operators. The 1-particle RDM (1-RDM) and 2-particle RDM (2-RDM) are particularly important for practical electronic structure methods [36].

Theorems from Reduced Density Matrix Functional Theory (RDMFT) establish that the 1-RDM contains sufficient information to determine all ground-state properties of a quantum system, providing a compelling alternative to wavefunction-based approaches [37]. This theoretical foundation has enabled researchers to develop sophisticated methods for probing quantum effects in molecular systems, including the strong correlation phenomena that occur during chemical bond formation and breaking processes [3]. Within this framework, ORDMs serve as the central quantity for quantifying orbital-wise entanglement through the computation of von Neumann entropies, which measure the quantum correlations between specific molecular orbitals [3].

Recent work has demonstrated that quantum computers can estimate ORDMs from measurements for molecular systems that challenge classical methods, opening new avenues for studying complex reaction mechanisms [3]. This capability is particularly valuable for strongly correlated systems, where storing the classical wavefunction becomes prohibitive because its size grows exponentially with system size. Combining ORDM analysis with quantum computation lets researchers elucidate the role of quantum effects in chemically relevant processes, such as those occurring in lithium-ion batteries [3] [34].

Theoretical Framework and Mathematical Foundation

The construction of ORDMs begins with the full N-electron wavefunction |Ψ⟩, from which the n-orbital reduced density matrix is obtained by tracing out all but the n orbitals of interest. If the system has K orbitals in total, the ORDM for a chosen set of n orbitals is ρₙ = Tr_{K-n}(|Ψ⟩⟨Ψ|), where Tr_{K-n} denotes the partial trace over the remaining K - n orbitals [36]. The diagonal elements of these matrices are the probabilities of the possible occupation configurations of the chosen orbitals (for one spatial orbital: empty, spin-up, spin-down, or doubly occupied). The off-diagonal elements capture coherences between different configurations [38].

The interpretation of ORDMs is closely tied to quantum information theory, in which the von Neumann entropy S(ρₙ) = -Tr(ρₙ ln ρₙ) serves as the fundamental measure of orbital entanglement [3]. For a single orbital, the entropy of its one-orbital RDM quantifies its entanglement with the rest of the system. For a pair of orbitals A and B, the mutual information I(A:B) = S(ρ_A) + S(ρ_B) - S(ρ_AB) is built from the one-orbital RDMs ρ_A and ρ_B and the two-orbital RDM ρ_AB. It quantifies the total correlation, both classical and quantum, between the two orbitals [3]. These information-theoretic measures provide a rigorous framework for understanding electron correlation in molecular systems.
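A minimal NumPy sketch of these definitions follows. It assumes a three-orbital pure state stored as a 4 × 4 × 4 coefficient array over the local basis (empty, up, down, doubly occupied). Orbital C is traced out to obtain ρ_AB, and the one-orbital RDMs follow by a further partial trace. The sketch uses ordinary partial traces. This relies on the assumption that the state conserves particle number, so that the fermionic sign structure does not affect these reduced matrices. The toy state and all names are hypothetical.

```python
import numpy as np

# Assumed local basis for one spatial orbital.
EMPTY, UP, DOWN, DOUBLE = 0, 1, 2, 3


def entropy(rho, eps=1e-12):
    """von Neumann entropy -Tr(rho ln rho) from eigenvalues (0 ln 0 = 0)."""
    w = np.linalg.eigvalsh(rho)
    w = w[w > eps]
    return float(-np.sum(w * np.log(w)))


def orbital_rdms(psi):
    """One- and two-orbital RDMs of orbitals A, B from a 3-orbital state psi[a, b, c].

    Orbital C is traced out to give rho_AB; A or B is then traced out of rho_AB.
    """
    rho_ab4 = np.einsum("abc,dec->abde", psi, psi.conj())  # indices (a, b, a', b')
    rho_a = np.einsum("abcb->ac", rho_ab4)                   # sum over b = b'
    rho_b = np.einsum("abad->bd", rho_ab4)                   # sum over a = a'
    return rho_a, rho_b, rho_ab4.reshape(16, 16)


def mutual_information(psi):
    """I(A:B) = S(rho_A) + S(rho_B) - S(rho_AB)."""
    rho_a, rho_b, rho_ab = orbital_rdms(psi)
    return entropy(rho_a) + entropy(rho_b) - entropy(rho_ab)


# Hypothetical toy state: one electron pair delocalized over three orbitals.
psi = np.zeros((4, 4, 4))
for config in [(DOUBLE, EMPTY, EMPTY), (EMPTY, DOUBLE, EMPTY), (EMPTY, EMPTY, DOUBLE)]:
    psi[config] = 1.0 / np.sqrt(3.0)

print(mutual_information(psi))
```

For this toy state, ρ_AB is a mixture of two orthogonal states with weights 2/3 and 1/3, and each one-orbital RDM has the same two eigenvalues. I(A:B) therefore equals the Shannon entropy of (2/3, 1/3), about 0.64 nats.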

An important consideration in properly quantifying orbital entanglement is the set of fermionic superselection rules (SSRs). These restrict the physically allowed local operations because of fundamental symmetries such as particle-number conservation [3] [34]. When SSRs are accounted for, only the physically accessible part of the entanglement is counted, which prevents the overestimation that can occur in naive analyses. This also has practical consequences for quantum computations. Because ORDM elements that couple different local particle-number sectors are excluded, fewer Pauli operators need to be measured. The remaining operators can be efficiently partitioned into commuting sets, which significantly reduces the number of measurements required to construct ORDMs [3].
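Partitioning into commuting sets reduces the number of circuits, and a first-fit grouping of Pauli strings illustrates how. This is a hedged sketch. It uses the simpler qubit-wise commutation criterion rather than general commutation, and it assumes that any SSR-based pruning of the operator list has already been done. The example strings are hypothetical rather than taken from [3].

```python
from typing import List


def qubitwise_commute(p: str, q: str) -> bool:
    """True if, on every qubit, the two Pauli letters match or one is 'I'."""
    return all(a == b or "I" in (a, b) for a, b in zip(p, q))


def greedy_qwc_groups(paulis: List[str]) -> List[List[str]]:
    """First-fit grouping of Pauli strings into qubit-wise commuting sets.

    Each set can be measured with one basis-rotation circuit, so fewer sets
    means fewer distinct measurement circuits.
    """
    groups: List[List[str]] = []
    for p in paulis:
        for group in groups:
            if all(qubitwise_commute(p, q) for q in group):
                group.append(p)
                break
        else:  # no compatible group found: open a new one
            groups.append([p])
    return groups


# Hypothetical Pauli strings on four qubits.
terms = ["ZZII", "ZIII", "IZII", "XXII", "YYII", "XYII"]
for k, group in enumerate(greedy_qwc_groups(terms)):
    print(k, group)
```

First-fit grouping is not optimal in general, because finding the fewest groups is a graph-coloring problem. Grouping by general commutation can give fewer sets, at the cost of entangling measurement circuits.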

The cumulant decomposition of RDMs provides additional theoretical insight, particularly through its diagonal elements in the natural spin-orbital basis [38]. For the 2-particle cumulant, the diagonal elements λ_pq,pq with p ≠ q equal the covariances (correlated fluctuations) ⟨n_p n_q⟩ - ⟨n_p⟩⟨n_q⟩ of the occupation numbers of orbitals p and q. They therefore directly quantify how the two occupations are correlated. This interpretation connects quantum correlations to familiar statistical concepts and offers an intuitive picture of electron correlation in molecular systems.
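A small sketch of the covariance picture follows. It assumes a wavefunction given as a dictionary from occupation strings to amplitudes, which is a hypothetical format. For p ≠ q, with the 1-RDM diagonal (natural spin-orbital basis), the cumulant element reduces to λ_pq,pq = ⟨n_p n_q⟩ - ⟨n_p⟩⟨n_q⟩, up to normalization conventions for the 2-RDM. The example state is chosen so that its two determinants differ by a double excitation, which keeps the 1-RDM diagonal in the given spin-orbitals. The diagonal entries of the returned matrix (p = q) are variances of single occupations rather than cumulant elements.

```python
import numpy as np


def occupation_covariance(amplitudes):
    """Cov(n_p, n_q) = <n_p n_q> - <n_p><n_q> for a wavefunction given as
    {occupation string: amplitude}, where string[k] == '1' means spin-orbital k is occupied.

    Occupation-number operators are diagonal in the determinant basis, so only
    the probabilities |c|^2 are needed and fermionic sign conventions drop out.
    """
    keys = list(amplitudes)
    occ = np.array([[int(ch) for ch in key] for key in keys], dtype=float)  # (n_det, n_so)
    w = np.array([abs(amplitudes[k]) ** 2 for k in keys])
    w = w / w.sum()
    mean = w @ occ                        # <n_p>
    second = occ.T @ (occ * w[:, None])   # <n_p n_q>
    return second - np.outer(mean, mean)


# Hypothetical two-determinant state; spin-orbital order assumed: 1a, 1b, 2a, 2b.
state = {"1100": np.sqrt(0.9), "0011": -np.sqrt(0.1)}
cov = occupation_covariance(state)
print(cov[0, 2])  # negative: the two alpha occupations are anti-correlated
```

The negative covariance between the two α spin-orbitals expresses that the electron pair occupies either orbital 1 or orbital 2, but never both.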

Methodological Approaches for ORDM Construction

Classical Computational Methods