Orbital and Particle Correlation in Drug Discovery: A Comparative Analysis of Methods and Applications

Abstract

This article provides a comprehensive analysis of orbital and particle correlation, essential quantum phenomena in computational drug discovery. It explores foundational theories, compares advanced methodologies like Density Functional Theory (DFT) and the Fragment Molecular Orbital (FMO) method, and addresses key challenges such as computational cost and electron correlation handling. Through practical applications in targeting protein-protein interactions and prion diseases, it validates these approaches and benchmarks their performance. Aimed at researchers and drug development professionals, this review serves as a guide for leveraging quantum mechanical calculations to overcome challenges in targeting undruggable proteins and designing precision therapeutics.

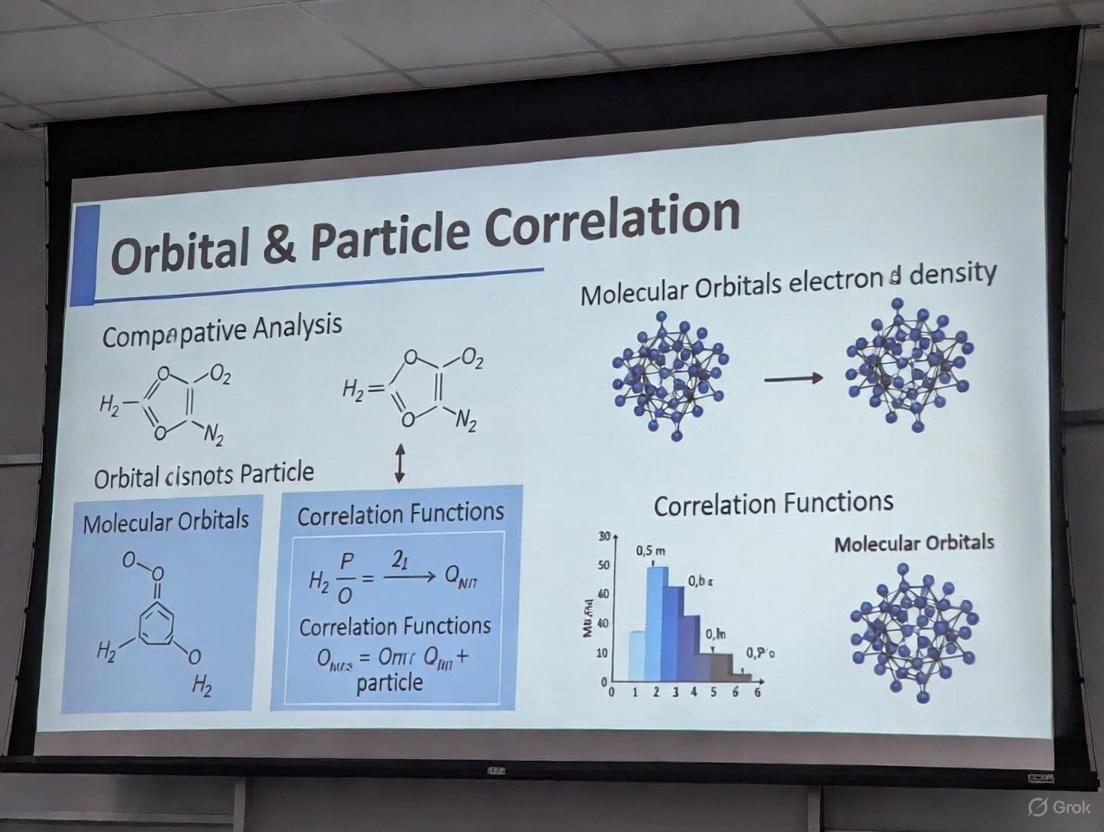

Unraveling Quantum Foundations: The Principles of Orbital and Particle Correlation

Defining Orbital Correlation, Particle Correlation, and Electron Entanglement in Molecular Systems

In quantum chemistry, electron correlation represents a central challenge for accurate computational methods, as the mean-field approximation fails to capture the complex, correlated motion of electrons. This guide provides a comparative analysis of three fundamental concepts—orbital correlation, particle correlation, and electron entanglement—that are essential for understanding and modeling electronic structure in molecular systems. These concepts differ in their theoretical foundations, quantitative measures, and implications for predicting chemical phenomena.

Orbital correlation describes the dependency between electrons occupying specific molecular orbitals, particularly crucial in strongly correlated systems like transition metal complexes and during bond-breaking processes. Particle correlation quantifies the deviation from the Hartree-Fock approximation due to correlated electron motion, directly impacting excitation energies and reaction barriers. Electron entanglement captures the non-classical correlations between electronic degrees of freedom that cannot be described by local hidden variable theories. In a comparative analysis of orbital and particle correlation, understanding these distinctions enables researchers to select appropriate computational methods for studying molecular systems ranging from drug candidates to quantum materials.

Theoretical Definitions and Comparative Framework

Conceptual Foundations

Orbital Correlation refers to the statistical dependence between electronic occupations of different molecular orbitals. These correlations arise from electron-electron interactions and are quantified through reduced density matrices of orbital subspaces. In practical terms, orbital correlation helps explain phenomena such as static correlation in transition metal complexes and bond dissociation processes where multiple electronic configurations become nearly degenerate. The strength of orbital correlation is system-dependent and is significantly influenced by the choice of orbital basis, with localized bases often providing more chemically intuitive pictures [1] [2].

Particle Correlation encompasses the deviation from the mean-field approximation where electrons move independently in an average potential. This includes both dynamical correlation (short-range electron-electron repulsion) and static correlation (near-degeneracy effects). Particle correlation is directly responsible for the accuracy of methods like coupled cluster theory and configuration interaction in predicting molecular properties, reaction energies, and spectroscopic parameters. Unlike orbital correlation, particle correlation is a global property of the electronic wavefunction rather than a measure between specific subsystems.

Electron Entanglement represents the quantum mechanical phenomenon where the quantum states of electrons cannot be described independently, even when separated by large distances. This non-classical correlation violates local realism and is quantified through quantum information measures such as von Neumann entropy and mutual information. For electrons, entanglement must respect the fermionic superselection rules which prohibit superpositions of different particle numbers, significantly affecting the quantification of orbital entanglement [1] [2].

Table 1: Fundamental Characteristics of Correlation Types in Molecular Systems

| Feature | Orbital Correlation | Particle Correlation | Electron Entanglement |
|---|---|---|---|
| Theoretical Origin | Electron interactions in orbital subspaces | Deviation from mean-field independent electron motion | Quantum non-separability violating classical probability |
| Primary Quantifiers | Orbital mutual information, reduced density matrices | Correlation energy, density cumulants | Von Neumann entropy, entanglement entropy, concurrence |
| Dependence on Basis | Strong (localized vs. canonical orbitals) | Weak (the exact correlation energy is invariant under orbital rotations) | Moderate (affected by orbital choice but basis-independent in principle) |
| Role of Superselection Rules | Reduces measured correlations | Not applicable | Essential for physical meaning; removes unphysical entanglement |
| Chemical Manifestations | Multi-configurational states, bond-breaking | Dispersion forces, dynamic polarizability | Quantum coherence in molecular qubits, radical pairs |

Quantitative Measures and Relationships

The quantitative description of these correlation types employs distinct mathematical frameworks. Orbital correlation is commonly measured through the quantum mutual information between orbital pairs, derived from the von Neumann entropy of one- and two-orbital reduced density matrices (RDMs). For orbitals A and B, the mutual information is given by $I(A{:}B) = S(\rho_A) + S(\rho_B) - S(\rho_{AB})$, where $S(\rho) = -\mathrm{Tr}(\rho \ln \rho)$ is the von Neumann entropy [1] [2].
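The mutual information formula can be evaluated directly once the eigenvalues of the one- and two-orbital RDMs are known. The following is a minimal sketch under that assumption; the function names and the example spectra are illustrative and do not come from the cited work.

```python
import math

def von_neumann_entropy(eigenvalues, tol=1e-12):
    """Return S = -sum(p ln p) over the eigenvalues p of a density matrix."""
    # Eigenvalues at or below tol contribute nothing (p ln p -> 0 as p -> 0).
    return -sum(p * math.log(p) for p in eigenvalues if p > tol)

def mutual_information(eigs_a, eigs_b, eigs_ab):
    """I(A:B) = S(rho_A) + S(rho_B) - S(rho_AB), from RDM eigenvalues."""
    return (von_neumann_entropy(eigs_a)
            + von_neumann_entropy(eigs_b)
            - von_neumann_entropy(eigs_ab))

# Hypothetical spectra: each one-orbital RDM (4 local states) is evenly mixed
# over two states, while the two-orbital RDM (16 states) is pure.
eigs_a = [0.5, 0.5, 0.0, 0.0]
eigs_b = [0.5, 0.5, 0.0, 0.0]
eigs_ab = [1.0] + [0.0] * 15

i_ab = mutual_information(eigs_a, eigs_b, eigs_ab)
print(f"I(A:B) = {i_ab:.4f} nats")  # prints "I(A:B) = 1.3863 nats", i.e. 2 ln 2
```

For an uncorrelated pair, the two-orbital spectrum is the product of the one-orbital spectra and the same function returns zero.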

Particle correlation is typically quantified by the correlation energy, defined as $E_{corr} = E_{exact} - E_{HF}$, where $E_{HF}$ is the Hartree-Fock energy and $E_{exact}$ is the true ground state energy. Because the Hartree-Fock energy is a variational upper bound, $E_{corr}$ is negative under this convention. More sophisticated measures include density cumulants and the connected components of reduced density matrices.
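As a small worked example, the correlation energy of the helium atom can be computed from two reference numbers. The sketch below takes the correlation energy as the exact energy minus the Hartree-Fock energy (the common convention, under which the value is negative). The helium energies are commonly quoted literature values rounded to five decimals and are not taken from this article's references.

```python
HARTREE_TO_KCAL_PER_MOL = 627.509

def correlation_energy(e_exact, e_hf):
    """Correlation energy as exact energy minus Hartree-Fock energy (hartree)."""
    return e_exact - e_hf

# Helium atom, nonrelativistic, complete-basis-set limit (rounded literature values).
e_hf_he = -2.86168      # Hartree-Fock limit, hartree
e_exact_he = -2.90372   # exact ground state energy, hartree

e_corr = correlation_energy(e_exact_he, e_hf_he)
print(f"E_corr = {e_corr:.5f} Eh = {e_corr * HARTREE_TO_KCAL_PER_MOL:.1f} kcal/mol")
```

Even for this two-electron atom the correlation energy is tens of kcal/mol, far above the roughly 1 kcal/mol accuracy usually targeted in thermochemistry.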

Electron entanglement is quantified through various entanglement measures adapted from quantum information theory. The von Neumann entropy of orbital subsystems serves as a primary measure, while the entanglement of formation and concurrence provide alternative quantifications. Recent research has demonstrated that when proper superselection rules are accounted for, the total correlation between orbitals is predominantly classical, with quantum entanglement playing a surprisingly minor role in many chemical bonds [2].

Experimental and Computational Methodologies

Protocols for Quantum Computation of Orbital Entanglement

Quantum computers offer a promising approach for quantifying orbital correlations and entanglement that would be prohibitively expensive for classical computation. The following protocol has been demonstrated for calculating von Neumann entropies and orbital entanglement on trapped-ion quantum processors [1]:

System Preparation: Select a strongly correlated molecular system. For the vinylene carbonate + O₂ reaction system, apply the AVAS (atomic valence active space) method to project onto relevant atomic orbitals (oxygen p-orbitals), yielding an active space of 6 electrons in 9 molecular orbitals.

Wavefunction Preparation: Encode the fermionic problem into qubits using Jordan-Wigner transformation. Prepare the ground state wavefunction at different reaction coordinates using an optimized variational quantum eigensolver (VQE) ansatz.

Orbital Reduced Density Matrix (ORDM) Measurement:

- Construct measurement circuits for one- and two-orbital reduced density matrices.

- Apply fermionic superselection rules to reduce measurement overhead by restricting to physically accessible sectors.

- Group Pauli operators into commuting sets to further minimize measurement requirements.

- Execute measurement circuits on quantum hardware (e.g., Quantinuum H1-1 trapped-ion processor).

Noise Mitigation: Apply post-measurement noise reduction techniques:

- Use thresholding to filter small singular values from noisy ORDMs.

- Apply maximum likelihood estimation to reconstruct physical ORDMs.

Entropy Calculation: Compute von Neumann entropies from the eigenvalues of the noise-reduced ORDMs to obtain orbital correlations and entanglement.
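The last two steps can be sketched on the eigenvalue spectrum of a noisy ORDM. The code below is a deliberately simplified stand-in for the thresholding and maximum likelihood reconstruction described above, not the procedure of [1]: it zeroes eigenvalues below a cutoff, renormalizes to unit trace, and then computes the entropy. The cutoff value and the noisy spectrum are hypothetical.

```python
import math

def clean_spectrum(noisy_eigs, threshold=0.01):
    """Zero out small or negative eigenvalues, then renormalize to unit trace."""
    kept = [p if p > threshold else 0.0 for p in noisy_eigs]
    total = sum(kept)
    if total <= 0.0:
        raise ValueError("all eigenvalues fell below the threshold")
    return [p / total for p in kept]

def von_neumann_entropy(eigs):
    """S = -sum(p ln p), skipping zero eigenvalues."""
    return -sum(p * math.log(p) for p in eigs if p > 0.0)

# Hypothetical noisy one-orbital RDM spectrum: shot noise has produced one
# slightly negative eigenvalue and a trace that is not exactly 1.
noisy = [0.52, 0.49, 0.004, -0.014]

cleaned = clean_spectrum(noisy)
entropy = von_neumann_entropy(cleaned)
print("cleaned spectrum:", [round(p, 4) for p in cleaned])
print(f"orbital entropy: {entropy:.4f} nats")
```

A full maximum likelihood reconstruction would instead search for the physical density matrix closest to the measured one; the clip-and-renormalize step here only guarantees a non-negative spectrum with unit trace.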

[Figure: Quantum Computation of Orbital Entanglement]

Classical Computational Approaches

Traditional computational chemistry offers well-established protocols for quantifying particle and orbital correlations:

Complete Active Space Self-Consistent Field (CASSCF) for Orbital Correlation:

Geometry Optimization: Use nudged elastic band (NEB) method with DFT (PBE/def2-SVP) to determine minimum energy paths for reactions [1].

Active Space Selection: Apply AVAS projection to identify strongly correlated orbitals. For the VC+O₂ system, project onto O₂ p-orbitals to obtain 6 electrons in 9 orbitals, then select a (4,6) active space subset [1].

Wavefunction Optimization: Perform CASSCF calculations with spin constraints (〈S²〉=0 for singlets) to optimize both CI coefficients and molecular orbitals [1].

Orbital Entropy Calculation: Construct one- and two-orbital reduced density matrices from CASSCF wavefunction and compute orbital entropies and mutual information.
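To see why active spaces such as the (4,6) and (6,9) spaces above are tractable while larger ones quickly are not, it helps to count the Slater determinants involved. The sketch below counts determinants with a fixed number of alpha and beta electrons and ignores any reduction from spatial or spin symmetry; the function name is illustrative.

```python
from math import comb

def cas_determinants(n_electrons, n_orbitals, spin_2s=0):
    """Number of Slater determinants in CAS(n_electrons, n_orbitals) with M_S = S."""
    if (n_electrons + spin_2s) % 2 != 0:
        raise ValueError("n_electrons and 2S must have the same parity")
    n_alpha = (n_electrons + spin_2s) // 2
    n_beta = n_electrons - n_alpha
    # Alpha and beta strings are chosen independently among the active orbitals.
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)

for n_elec, n_orb in [(4, 6), (6, 9), (16, 16)]:
    print(f"CAS({n_elec},{n_orb}): {cas_determinants(n_elec, n_orb):,} determinants")
# CAS(4,6): 225 determinants
# CAS(6,9): 7,056 determinants
# CAS(16,16): 165,636,900 determinants
```

The jump from thousands to hundreds of millions of determinants illustrates the combinatorial growth that limits conventional CASSCF to modest active spaces.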

High-Level Electron Correlation Methods for Particle Correlation:

Reference Calculation: Perform Hartree-Fock calculation to establish baseline energy.

Dynamic Correlation Treatment: Apply perturbation theory (e.g., MP2) or coupled cluster methods (e.g., CCSD(T)) to capture dynamic correlation.

Benchmarking: Compare with experimental results or higher-level theories to validate correlation energy recovery.

Table 2: Comparison of Correlation Quantification Methods

| Method | Target Correlation | System Size Limit | Key Metrics | Experimental Validation |
|---|---|---|---|---|
| Quantum Hardware (e.g., H1-1) | Orbital entanglement | Small active spaces (4-12 qubits) | Von Neumann entropy, mutual information | Excellent agreement with noiseless simulation [1] |
| CASSCF | Orbital correlation, static correlation | ~16 electrons in 16 orbitals | Orbital entropies, configuration weights | Spectroscopy, bond dissociation energies |
| CCSD(T) | Particle correlation (dynamic) | ~50 atoms with triple-zeta basis | Correlation energy, reaction barriers | Thermochemistry (kcal/mol accuracy) |
| DMRG | Strong electron correlation | Large active spaces (100+ orbitals) | Block entropy, entanglement spectrum | Material properties, multireference systems |

Research Applications and Case Studies

Strongly Correlated Molecular Systems

The vinylene carbonate + O₂ → dioxetane reaction provides an excellent case study for orbital correlation analysis. This reaction is relevant to lithium-ion battery degradation where singlet oxygen attacks carbonate solvents. Quantum computations revealed distinctive orbital correlation patterns across different reaction stages [1]:

- Reactants: Moderate orbital correlations in O₂ π and π* orbitals.

- Transition State: Strongly enhanced correlations as oxygen bonds stretch and align with the C-C bond of carbonate, characteristic of static correlation in bond-breaking.

- Product (Dioxetane): Reduced correlations in the closed-shell singlet product.

The quantum computation successfully captured these correlation dynamics, with von Neumann entropies showing excellent agreement with noiseless benchmarks. This demonstrates the capability of quantum processors to track correlation-driven chemical transformations [1].

Correlation-Driven Materials Design

In quantum materials, orbital correlations drive emergent phenomena in correlated electron molecular orbital (CEMO) materials. The Nb₃X₈ (X = Cl, Br, I) series exemplifies symmetry and correlation-driven trimer formation in Kagome lattices [3]:

- Electronic Requirements: Triangular trimer stability requires 6-8 electrons occupying molecular orbitals derived from transition metal d-states.

- Correlation Strength: Intermediate correlation strength is essential—strong enough for local molecular orbital formation but weak enough to prevent charge ordering.

- Orbital Symmetry: Breathing distortions optimize bonding/antibonding occupation, enhancing stability by ~181 meV/atom.

This framework of design principles enables rational design of quantum materials with tailored magnetic and electronic properties through correlation engineering [3].

Table 3: Key Computational Tools and Resources for Correlation Analysis

| Tool/Resource | Primary Function | Application Context | Key Features |
|---|---|---|---|
| PySCF | Electronic structure package | CASSCF, orbital entanglement | Open-source, Python-based, AVAS implementation [1] |
| Quantinuum H1-1 | Trapped-ion quantum computer | Orbital RDM measurement | High-fidelity operations, mid-circuit measurement [1] |
| DFT+U | Density functional theory with Hubbard correction | Strongly correlated materials | Parameterized electron localization [3] |
| Multi-Dimensionally Constrained CDFT | Nuclear structure calculations | Octupole correlations in nuclei | Shape deformation analysis [4] |
| NCI Orbital Decomposition | Non-covalent interaction analysis | Intermolecular forces | Orbital-pair interaction energy decomposition [5] |

Orbital correlation, particle correlation, and electron entanglement represent distinct yet interconnected frameworks for understanding electron interactions in molecular systems. While particle correlation has traditionally dominated quantum chemistry methods for recovering correlation energies, orbital correlation provides a chemically intuitive picture of electron interactions in specific orbital subspaces. Electron entanglement offers the most fundamental quantum information perspective but appears less dominant in chemical bonding when proper physical constraints are applied.

The emerging capability to measure these correlations on quantum processors represents a significant advancement, particularly for strongly correlated systems intractable to classical computation. As quantum hardware advances, the integrated understanding of these correlation phenomena will enable more accurate predictions of molecular behavior across drug discovery, materials design, and quantum technology development. Future research directions include developing more efficient measurement strategies for orbital RDMs, extending correlation analysis to larger molecular systems, and establishing clearer connections between orbital entanglement measures and chemical reactivity predictions.

The Critical Role of Correlation in Modeling Bond Breaking, Transition States, and Strongly Correlated Electrons

In computational chemistry and materials science, accurately describing electron correlation is paramount for modeling complex quantum phenomena. This is particularly true for processes involving bond breaking, transition states, and strongly correlated materials, where conventional single-reference quantum chemical methods often fail. Electron correlation encompasses both dynamic correlation (arising from the instantaneous Coulomb repulsion between electrons) and static correlation (resulting from near-degeneracies of electronic configurations) [6]. The significance of static correlation is profound—it has substantial nonlocal contributions to potential energy surfaces and can qualitatively alter their shape, making its accurate treatment essential for studying chemical reactions and correlated materials [6].

This guide provides a comparative analysis of computational methods designed to handle strong electron correlation. We objectively evaluate their performance, supported by experimental and benchmark data, and detail the protocols essential for their application. Framed within a broader thesis on orbital and particle correlation analysis, this resource is tailored for researchers, scientists, and drug development professionals who require robust computational tools to model challenging electronic structures.

Theoretical Foundations: From Bond Breaking to Correlated Materials

The Nature of Transition States and Bond Breaking

A transition state is a transient, high-energy configuration occurring during a chemical reaction where bonds are partially broken and partially formed. It corresponds to an energy maximum along the reaction coordinate (a first-order saddle point on the potential energy surface), characterized by partial bonds and an extremely short lifetime on the order of femtoseconds (10⁻¹⁵ seconds), which makes it impossible to isolate experimentally [7]. In the SN2 reaction between NaOH and CH₃Br, for instance, the transition state features a trigonal bipyramidal geometry where the nucleophile and leaving group share partial bonds with the central carbon atom, departing from the tetrahedral geometry of the reactant [7].

Bond dissociation represents a classic case of strong static correlation. As a bond stretches, electronic configurations that were negligible at equilibrium geometry become near-degenerate with the ground state. Conventional density functional theory (DFT) with standard functionals often fails qualitatively here, while single-reference wavefunction methods like coupled-cluster theory face immense challenges [6].
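The onset of static correlation can be illustrated with a two-configuration toy model. The sketch below diagonalizes the 2x2 Hamiltonian [[0, K], [K, gap]] analytically and reports how much weight the second configuration carries in the ground state as the energy gap closes. The gap and coupling values are hypothetical and are not fitted to any molecule.

```python
import math

def second_config_weight(gap, coupling):
    """Weight of the higher configuration in the ground state of [[0, K], [K, gap]]."""
    if coupling == 0.0:
        raise ValueError("coupling must be nonzero for the configurations to mix")
    # Lowest eigenvalue of the symmetric 2x2 matrix.
    e0 = 0.5 * (gap - math.sqrt(gap ** 2 + 4.0 * coupling ** 2))
    # The first row of (H - e0) c = 0 gives c2 / c1 = e0 / K.
    ratio = e0 / coupling
    return ratio ** 2 / (1.0 + ratio ** 2)

coupling = 0.1  # hypothetical coupling between the two configurations (hartree)
for gap in [1.0, 0.3, 0.1, 0.0]:
    weight = second_config_weight(gap, coupling)
    print(f"gap = {gap:4.1f} Eh -> second configuration weight = {weight:.3f}")
```

With a large gap the ground state is dominated by one configuration, which is the regime where single-reference methods work well. As the gap approaches zero, the weight approaches 0.5 and no single configuration is an adequate reference.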

Strongly Correlated Electron Systems

In materials science, strongly correlated materials are those where electron-electron interactions dominate physical properties, rendering conventional one-electron models like standard DFT inadequate [8]. The competition between kinetic energy and electron-electron repulsion in these systems gives rise to a rich tapestry of quantum phases, including:

- High-temperature superconductivity

- Magnetism

- Mott metal-insulator transitions [9]

These phenomena are ubiquitous in materials with partially filled d or f electron shells, such as transition metal oxides (e.g., vanadium and nickel oxides) and rare-earth metals [10]. In these systems, the motion of one electron is highly dependent on the positions and states of others, leading to remarkable emergent behaviors.

Comparative Analysis of Computational Methods

The following table summarizes the key features, strengths, and limitations of different computational approaches for handling strong correlation.

Table 1: Comparison of Computational Methods for Strongly Correlated Systems

| Method | Theoretical Basis | Key Features | Strengths | Limitations |
|---|---|---|---|---|
| CASSCF [6] | Multiconfigurational wavefunction | Full CI within active orbital space; orbital optimization | Gold standard for static correlation; symmetry-adapted | Exponential scaling with active space size; active space selection non-trivial |
| DFT+U [8] | Density Functional Theory | Adds Hubbard U to treat on-site Coulomb interaction | Corrects self-interaction error in DFT; computationally efficient | Static treatment of correlation; U parameter choice is empirical |
| Dynamical Mean Field Theory (DMFT) [10] [8] | Many-body Green's function theory | Maps lattice problem to impurity model; handles dynamic correlations | Non-perturbative; captures Kondo physics & Mott transitions | Computationally demanding; impurity solver required |
| Unrestricted Natural Orbital (UNO) [6] | UHF natural orbitals | Uses fractional occupancy (0.02-1.98) to define active space | Inexpensive; excellent approximation to CASSCF orbitals | Can be discontinuous if symmetry breaks; historically required UHF convergence |
| Atomic Valence Active Space (AVAS) [6] | Projection to atomic orbitals | Projects molecular orbitals to user-defined atomic orbitals | Automates active space selection; chemically intuitive | Requires initial orbital choice; can yield larger spaces |
| Quantum Computing (VQE) [1] | Variational quantum algorithms | Quantum hardware stores wavefunction; measures orbital entropy | Bypasses exponential classical cost; direct entanglement measurement | Current hardware limitations (noise, qubit count) |

Performance Benchmarks and Application Data

Table 2: Performance Comparison for Different Chemical Systems

| Chemical System | Strong Correlation Origin | Recommended Method(s) | Key Performance Metrics |
|---|---|---|---|
| Diatomic Molecule Bond Breaking (e.g., F₂, N₂) [6] | Stretched bonds; near-degeneracy | CASSCF, UNO-CAS | UNO error typically <1 mEh/active orbital vs. CASSCF [6] |
| Transition Metal Complexes (e.g., Hieber's anion, ferrocene) [6] | Partially filled d-orbitals | CASSCF, DMFT, UNO-CAS | UNO provides identical active space to expensive approximate full CI [6] |
| Organic Reactions (e.g., Bergman cyclization) [6] | Transition state bond rearrangements | CASSCF, UNO-CAS | Correctly describes biradical character at transition state |
| Conjugated Polymers (e.g., polyacenes) [6] | Small HOMO-LUMO gap | CASSCF, UNO-CAS | Handles growing static correlation with system size |
| Li-ion Battery Materials (e.g., Li-doped V₂O₅) [8] | Polarons; electron localization | DFT+DMFT | Captures dual nature (free/bound) of polarons; explains conduction mechanism [8] |
| Mott Insulators (e.g., transition metal oxides) [9] | Strong on-site Coulomb repulsion | DMFT, DFT+DMFT | Correctly predicts insulating behavior where DFT fails |
| VC + O₂ Reaction [1] | Transition state static correlation | VQE on quantum processor | Calculated orbital entropies agree with noiseless benchmarks [1] |

Experimental and Computational Protocols

Protocol 1: Transition State Analysis via Kinetic Isotope Effects (KIEs)

This protocol determines enzymatic transition state structures using experimental kinetic isotope effects combined with computational chemistry [11].

Detailed Methodology:

- KIE Measurement: Compare reaction rates of isotope-labeled and natural abundance reactants. Competitive reactions yield KIEs on $k_{cat}/K_m$, encompassing all steps from free reactants to the first irreversible step [11].

- Intrinsic KIE Determination: Correct measured KIEs for rate-limiting steps not involving the chemical step (e.g., product release) to obtain intrinsic KIEs, which report solely on the bonding environment at the transition state [11].

- Computational Matching: Use quantum chemistry software (e.g., Gaussian) to locate reactants, transition state, and products. Computational methods like B3LYP/6-31G* are common. The transition state is identified by a single imaginary frequency [11].

- KIE Fitting: Fix bonds along the reaction coordinate and relax other geometric parameters. Compute KIEs for each trial structure using specialized software (e.g., QUIVER or ISOEFF98). Iterate until computed KIEs match experimental intrinsic KIEs [11].

- Electrostatic Potential Mapping: Use programs like CUBE in Gaussian to generate molecular electrostatic potential surfaces of the transition state for analog design [11].
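The KIE fitting step amounts to searching over trial structures for the one whose computed isotope effects best reproduce the intrinsic experimental values. The sketch below shows that selection with a least-squares misfit. Every number, isotope label, and structure name is hypothetical, and in practice the computed KIEs would come from a program such as QUIVER or ISOEFF98 rather than being typed in by hand.

```python
def kie_misfit(computed, experimental):
    """Sum of squared differences between computed and experimental KIEs."""
    return sum((computed[label] - experimental[label]) ** 2 for label in experimental)

def best_trial_structure(trials, experimental):
    """Return the name of the trial structure with the smallest KIE misfit."""
    return min(trials, key=lambda name: kie_misfit(trials[name], experimental))

# Hypothetical intrinsic KIEs for three isotopic labels.
experimental = {"primary_14C": 1.020, "alpha_3H": 1.150, "leaving_15N": 1.025}

# Hypothetical computed KIEs for trial transition states that differ in the
# fixed bond length (in angstrom) along the reaction coordinate.
trials = {
    "r=1.6": {"primary_14C": 1.005, "alpha_3H": 1.060, "leaving_15N": 1.008},
    "r=2.0": {"primary_14C": 1.018, "alpha_3H": 1.140, "leaving_15N": 1.022},
    "r=2.4": {"primary_14C": 1.030, "alpha_3H": 1.260, "leaving_15N": 1.035},
}

best = best_trial_structure(trials, experimental)
print("best-matching trial structure:", best)  # prints "r=2.0"
```

A real workflow would refine the constrained geometry iteratively rather than pick from a fixed grid, and might weight each KIE by its experimental uncertainty.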

Protocol 2: Active Space Selection for Multiconfigurational Calculations

This protocol outlines the UNO method for selecting active orbitals for CASSCF calculations [6].

Detailed Methodology:

- UHF Calculation: Perform an Unrestricted Hartree-Fock calculation, seeking broken-symmetry solutions. Modern analytical methods accurate to fourth order in orbital rotation angles have largely solved historical convergence problems [6].

- Natural Orbital Transformation: Diagonalize the UHF charge density matrix to obtain Unrestricted Natural Orbitals (UNOs) [6].

- Active Orbital Selection: Identify fractionally occupied orbitals, typically those with occupancies between 0.02 and 1.98 (or 0.01 and 1.99). These orbitals span the active space [6].

- CASSCF Calculation: Use the selected active space to perform a CASSCF calculation. The UNO orbitals typically provide an excellent starting point, with energies often within 1 mEh of the fully optimized CASSCF energy [6].
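The active orbital selection step reduces to a simple filter on natural orbital occupation numbers. The following sketch applies the 0.02 to 1.98 window quoted above to a list of occupations; the occupation numbers are hypothetical and the function name is illustrative.

```python
def select_active_orbitals(occupations, lower=0.02, upper=1.98):
    """Return indices of fractionally occupied orbitals and the active electron count."""
    active = [i for i, n in enumerate(occupations) if lower < n < upper]
    # UNO occupations come in pairs (n, 2 - n), so the sum is close to an integer.
    n_active_electrons = round(sum(occupations[i] for i in active))
    return active, n_active_electrons

# Hypothetical UNO occupation numbers, ordered from most to least occupied.
occupations = [2.000, 1.999, 1.93, 1.62, 0.38, 0.07, 0.001, 0.000]

active, n_elec = select_active_orbitals(occupations)
print(f"active orbitals: {active}")                       # [2, 3, 4, 5]
print(f"suggested active space: CAS({n_elec},{len(active)})")  # CAS(4,4)
```

Tightening the window to 0.01 and 1.99 only changes the `lower` and `upper` arguments and may admit additional weakly correlated orbitals.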

Protocol 3: Orbital Entanglement Measurement on a Quantum Computer

This protocol measures orbital correlation and entanglement using a quantum computer, as demonstrated for the VC + O₂ reaction [1].

Detailed Methodology:

- Classical Pre-processing:

  - Use DFT with the PBE functional and a def2-SVP basis set to optimize reaction pathway geometries (e.g., via Nudged Elastic Band method) [1].

  - Perform AVAS projection onto relevant atomic orbitals (e.g., O₂ p orbitals) to define a chemically relevant active space (e.g., 6 electrons in 9 orbitals) [1].

  - Run CASSCF calculations to obtain reference wavefunctions and configuration interaction coefficients [1].

- Quantum State Preparation:

  - Encode the fermionic problem into qubits using the Jordan-Wigner transformation and prepare the ground state wavefunction at each reaction coordinate using an optimized variational quantum eigensolver (VQE) ansatz [1].

- Quantum Measurement and Post-processing:

  - Execute measurement circuits on the quantum computer (e.g., Quantinuum H1-1 trapped-ion processor) to reconstruct Orbital Reduced Density Matrices (ORDMs). Group Pauli operators into commuting sets, considering fermionic superselection rules to reduce measurement counts [1].

  - Apply noise reduction techniques: use thresholding to filter small singular values from noisy ORDMs, followed by a maximum likelihood estimate to reconstruct physical ORDMs [1].

  - Calculate von Neumann entropies from the eigenvalues of the cleaned ORDMs to quantify orbital correlation and entanglement [1].
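The Jordan-Wigner encoding that underlies this protocol maps each fermionic operator to a sum of Pauli strings, which is what makes ORDM elements measurable as Pauli expectation values. The sketch below writes out that mapping for a creation operator and a number operator. It assumes the convention that qubit 0 is the leftmost character and that the qubit state |1> means "occupied"; the function names are illustrative and not taken from any quantum software package.

```python
def jw_creation(j, n_qubits):
    """Pauli expansion of a_j^dagger: Z on qubits 0..j-1, then (X - iY)/2 on qubit j."""
    if not 0 <= j < n_qubits:
        raise ValueError("mode index out of range")
    prefix = "Z" * j                    # parity string enforcing anticommutation
    suffix = "I" * (n_qubits - j - 1)
    return {prefix + "X" + suffix: 0.5, prefix + "Y" + suffix: -0.5j}

def jw_number(j, n_qubits):
    """Pauli expansion of the number operator n_j = (I - Z_j) / 2."""
    if not 0 <= j < n_qubits:
        raise ValueError("mode index out of range")
    identity = "I" * n_qubits
    z_j = "I" * j + "Z" + "I" * (n_qubits - j - 1)
    return {identity: 0.5, z_j: -0.5}

print(jw_creation(2, 4))  # {'ZZXI': 0.5, 'ZZYI': (-0-0.5j)}
print(jw_number(0, 2))    # {'II': 0.5, 'ZI': -0.5}
```

The number operator involves only I and Z on a single qubit, so orbital occupations can be read out from computational-basis measurements. Off-diagonal RDM elements involve the longer X and Y strings, which is why grouping commuting Pauli operators reduces the measurement count.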

The diagram below illustrates the core workflow for analyzing strongly correlated systems, integrating both classical and quantum computational approaches.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Computational and Experimental Reagents for Correlation Studies

| Item / Resource | Type | Primary Function | Example Applications |
|---|---|---|---|
| Gaussian Suite [11] | Software | Quantum chemical package for electronic structure | Transition state optimization; KIE matching; electrostatic potential mapping [11] |
| PySCF [1] | Software | Python-based quantum chemistry framework | CASSCF calculations; AVAS active space selection [1] |
| Quantinuum H1-1 [1] | Hardware | Trapped-ion quantum computer | Measuring orbital entanglement; preparing correlated wavefunctions [1] |
| ISOEFF98 / QUIVER [11] | Software | Calculates isotope effects from molecular structures | Matching computed KIEs to experimental values for transition state analysis [11] |
| Nudged Elastic Band (NEB) [1] | Algorithm | Locates minimum energy paths and transition states | Mapping reaction coordinates for VC + O₂ → dioxetane [1] |
| Jordan-Wigner Transform [1] | Algorithm | Encodes fermionic operators into qubit operators | Preparing molecular Hamiltonians for quantum computation [1] |
| Variational Quantum Eigensolver (VQE) [1] | Algorithm | Hybrid quantum-classical ground state energy calculation | Preparing ground state wavefunctions on quantum hardware [1] |
| Dynamical Mean-Field Theory (DMFT) [10] [8] | Theoretical Framework | Non-perturbative treatment of correlated electrons | Studying Mott transitions in transition metal oxides [10] |

The accurate modeling of bond breaking, transition states, and strongly correlated electrons demands a methodical approach that respects the profound role of electron correlation. No single method universally outperforms all others; rather, the choice depends on the specific system, the property of interest, and available computational resources. CASSCF with carefully selected active spaces remains the gold standard for molecular quantum chemistry, while DMFT excels for extended solid-state systems. Promisingly, emerging quantum computing approaches now enable direct measurement of orbital entanglement, offering a new paradigm for quantifying correlation. As computational power increases and algorithms refine, the integration of these methods will continue to push the boundaries of our understanding and control of complex chemical and materials systems.

In computational drug discovery, the labels "static" and "dynamic" distinguish two approaches to predicting molecular behavior and interactions. Static models are simplified, time-averaged descriptions that use fixed parameters for rapid screening, while dynamic models are more complex, time-evolving descriptions that account for physiological variability and changing conditions. The same vocabulary appears in orbital correlation analysis with a different, quantum mechanical meaning, and there the accurate representation of electron behavior in molecular systems directly impacts the predictive accuracy of drug-target interactions, binding affinities, and metabolic pathways. The pharmaceutical industry increasingly relies on these computational approaches to prioritize compounds for expensive synthetic and experimental testing, making the choice between static and dynamic methods a critical determinant of research efficiency and success rates.

Orbital and particle correlation analysis provides essential context for understanding these methodologies. At the quantum level, electron correlation (the interaction between electrons in a molecular system) manifests differently in static and dynamic contexts. Static correlation arises when a single electronic configuration inadequately describes a system, requiring multiple configurations for accuracy, particularly in bond-breaking or transition states. Dynamic correlation, in contrast, accounts for the instantaneous correlations between electrons as they move and interact. This distinction at the quantum level loosely parallels the methodological differences in drug discovery applications, where each approach offers distinct advantages and limitations for specific research scenarios.

Theoretical Foundations: Orbital Correlation Principles

Quantum Chemical Basis of Electron Correlation

The accurate description of electron behavior in molecular orbitals represents a cornerstone of predictive computational chemistry. Static correlation dominates in systems with near-degeneracy, where multiple electron configurations contribute significantly to the ground state wavefunction. This is particularly evident in bond dissociation processes, transition metal complexes, and biradical systems where a single-configuration description fails dramatically. In contrast, dynamic correlation accounts for the instantaneous repulsion between electrons that is inadequately described by mean-field approaches. Most molecular systems require both types of correlation for quantitatively accurate predictions, though practical computational constraints often force researchers to prioritize one approach based on the specific application.

Quantum information theory has provided powerful tools for quantifying orbital correlation and entanglement in molecular systems. Recent research has demonstrated procedures for obtaining orbital von Neumann entropies from orbital reduced density matrices (ORDMs), enabling direct measurement of correlation strength between molecular orbitals [1]. These measures have revealed fascinating insights—for instance, one-orbital entanglement vanishes unless opposite-spin open shell configurations are present in the wavefunction when superselection rules are properly accounted for [1]. This fundamental understanding directly informs the selection of appropriate computational methods for drug discovery applications where accurate prediction of molecular reactivity and binding is paramount.

From Quantum Principles to Practical Prediction Methods

The theoretical framework of electron correlation translates directly to practical computational methods used in pharmaceutical research. Wavefunction-based methods like complete active space self-consistent field (CASSCF) explicitly handle static correlation by considering multiple configurations, while perturbation theory (e.g., MP2), coupled cluster methods (e.g., CCSD(T)), and density functional methods primarily address dynamic correlation. The balance between these approaches in practical drug discovery reflects fundamental trade-offs between computational cost and predictive accuracy, with different methodologies appropriate for different stages of the drug development pipeline.

The implications for drug discovery are profound, as the choice of correlation treatment affects predictions of protein-ligand binding, reaction barriers in metabolic pathways, and electronic properties relevant to photopharmacology. For instance, studies on strongly correlated systems like uranium monoxide have revealed orbital-selective electronic transitions driven by relativistic spin-orbit coupling, leading to metallic 5f₅/₂ states and insulating 5f₇/₂ states [12]. Such nuanced electronic behavior would be completely missed by inadequate correlation treatments, potentially leading to faulty predictions of reactivity or binding in drug candidates targeting metalloenzymes.

Methodological Comparison: Static vs. Dynamic Modeling Approaches

Static Models in Drug Discovery Applications

Static models employ simplified, time-averaged parameters to predict drug behavior and interactions. In the context of drug-drug interaction (DDI) prediction, mechanistic static models use fixed inhibitor concentrations such as unbound average steady-state systemic concentration (Isys) or maximum steady-state systemic concentration (Imax) to estimate interaction magnitude [13]. These approaches provide rapid screening capabilities that are particularly valuable in early drug discovery when elimination routes of the victim compound and the role of gut extraction are not well defined [13].

The computational efficiency of static models enables broad screening of chemical space but comes with significant limitations. Static models systematically overlook temporal dynamics of drug concentration, inter-individual physiological variability, and complex interactions occurring at specific timepoints during drug administration. For DDI prediction, static equations using Isys have demonstrated reasonable accuracy (84% of interactions predicted within 2-fold in one analysis) [13], but this performance comes with important caveats regarding their application scope.
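To make the idea of a fixed driver concentration concrete, the sketch below evaluates a commonly used form of the mechanistic static equation for reversible inhibition of a single enzyme. The source does not state the equation used in [13], so the functional form, the function name `static_auc_ratio`, and all numerical values are assumptions for illustration; gut extraction, time-dependent inhibition, and induction are deliberately left out.

```python
def static_auc_ratio(inhibitor_conc, ki, fm):
    """Predicted AUC ratio of a victim drug under reversible inhibition.

    inhibitor_conc: fixed unbound inhibitor concentration (e.g. Isys or Imax)
    ki:             unbound inhibition constant, same units as inhibitor_conc
    fm:             fraction of victim clearance via the inhibited enzyme (0..1)
    """
    if ki <= 0:
        raise ValueError("ki must be positive")
    if not 0.0 <= fm <= 1.0:
        raise ValueError("fm must lie between 0 and 1")
    # Clearance through the inhibited pathway shrinks by 1 / (1 + [I]/Ki);
    # the remaining pathways (1 - fm) are assumed to be unaffected.
    remaining_clearance = fm / (1.0 + inhibitor_conc / ki) + (1.0 - fm)
    return 1.0 / remaining_clearance


# Hypothetical inputs: the same inhibitor evaluated at two driver concentrations.
ratio_isys = static_auc_ratio(inhibitor_conc=0.5, ki=0.25, fm=0.9)
ratio_imax = static_auc_ratio(inhibitor_conc=2.0, ki=0.25, fm=0.9)
print(f"AUC ratio with Isys: {ratio_isys:.2f}")
print(f"AUC ratio with Imax: {ratio_imax:.2f}")
```

With these illustrative numbers, the choice of driver concentration alone changes the predicted interaction magnitude, which is why the choice between Isys and Imax matters when comparing static predictions with observed data.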

Dynamic Models in Drug Discovery Applications

Dynamic models incorporate time-dependent parameters and population variability to create more physiologically realistic simulations. Physiologically based pharmacokinetic (PBPK) modeling platforms like Simcyp represent the state-of-the-art in dynamic modeling, using time-variable concentrations of perpetrator and victim drugs in various organs and the systemic circulation as driver concentrations [14]. This approach enables incorporation of inter-individual variability through covariates such as CYP enzyme polymorphisms, age, eliminating organ function, and pathologies affecting gut wall abundance of enzymes and transporters [14].

The key advantage of dynamic models lies in their ability to identify vulnerable patient populations who may experience extreme DDIs—individuals unlikely to be adequately represented in typical clinical trials. One comprehensive simulation study demonstrated that using a 'vulnerable patient' representative showed discrepancy rates of up to 37.8% between static and dynamic predictions [14]. This capacity to model special populations makes dynamic approaches particularly valuable for informing regulatory decisions and prescribing information when clinical data in these populations are lacking.

Table 1: Fundamental Characteristics of Static and Dynamic Modeling Approaches

| Characteristic | Static Models | Dynamic Models |
|---|---|---|
| Time Handling | Time-averaged parameters | Time-varying parameters |
| Concentration Representation | Fixed values (e.g., Isys, Imax) | Continuously changing concentrations |
| Population Variability | Limited or no variability | Incorporates physiological variability |
| Computational Demand | Low | High |
| Typical Application Stage | Early discovery | Late discovery/development |
| Regulatory Acceptance | Screening purposes | Label recommendations |

Quantitative Performance Comparison in Key Applications

Drug-Drug Interaction Prediction Accuracy

Direct comparisons between static and dynamic models reveal significant differences in prediction accuracy across different scenarios. A retrospective analysis of 19 clinical interactions from 11 proprietary compounds found that static equations using unbound average steady-state systemic inhibitor concentration (Isys) performed better than Simcyp V11 (84% versus 58% of interactions predicted within 2-fold) [13]. However, this advantage must be interpreted in context—the superior performance came with specific implementation choices, including using a fixed fraction of gut extraction and neglecting gut extraction in the case of induction interactions.

In contrast, a large-scale simulation study examining 30,000 DDIs between hypothetical substrates and inhibitors of CYP3A4 found that static and dynamic models are not equivalent for predicting metabolic DDIs arising from competitive CYP inhibition [14]. In the 'population' representative, the highest discrepancy rate was 85.9% (inter-model discrepancy, IMDR < 0.8), obtained when Cavg,ss was used as the inhibitor driver concentration. In the 'vulnerable patient' representative, discrepancies with IMDR > 1.25 occurred in up to 37.8% of cases [14]. These results highlight the context-dependent nature of model performance and the critical importance of the specific patient population being modeled.

Table 2: Performance Comparison for DDI Prediction

| Performance Metric | Static Models | Dynamic Models | Study Context |
|---|---|---|---|
| Predictions within 2-fold | 84% | 58% | 19 clinical interactions from 11 compounds [13] |
| Inter-model discrepancy (IMDR <0.8) | - | - | Up to 85.9% in population representative [14] |
| Inter-model discrepancy (IMDR >1.25) | - | - | Up to 37.8% in vulnerable patient representative [14] |
| Recommended Variability | Fixed variability of 40% of predicted mean AUC ratio [13] | Incorporates physiological variability sources | Population-based simulation [13] |

Protein-Ligand Interaction and Binding Prediction

The static-dynamic distinction extends beyond DDI prediction to fundamental aspects of drug-target interactions. Traditional structure-based drug discovery often relies on static protein structures, which provide limited information about the conformational flexibility essential for protein function. The emerging paradigm recognizes that "protein function is not solely determined by static three-dimensional structures but is fundamentally governed by dynamic transitions between multiple conformational states" [15].

Innovative approaches that integrate both static structural information and dynamic correlations from molecular dynamics trajectories demonstrate significant improvements over structure-based approaches across multiple tasks, including atomic adaptability prediction, binding site detection, and binding affinity prediction [16]. This fusion of static and dynamic information provides complementary signals for understanding protein-ligand interactions, offering new possibilities for drug design [16]. The performance advantage demonstrates that dynamic information captures essential aspects of molecular behavior that static snapshots cannot represent.

Experimental Protocols and Methodologies

Protocol for Comparative DDI Prediction Studies

Well-designed benchmarking studies follow specific methodologies to ensure fair comparison between static and dynamic approaches. For DDI prediction, a typical protocol involves several key steps. First, a diverse set of victim and perpetrator drugs is selected, encompassing reversible or time-dependent inhibition or induction of relevant enzymes like CYP3A4 or CYP2D6 [13]. Clinical interaction studies that involve inhibition of drug transporters may be excluded to focus specifically on metabolic interactions.

All input data except for gut interaction parameters are kept identical for both static and dynamic models to ensure fair comparison [13]. For static models, equations typically use unbound average steady-state systemic inhibitor concentration (Isys) with a fixed fraction of gut extraction. For dynamic models, platforms like Simcyp implement population-based simulations incorporating demographic and genetic variability sources. Performance is evaluated by comparing predicted area under the concentration-time curve ratios (AUCr) to clinically observed values, with predictions within 2-fold of observed values generally considered acceptable [13].
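The 2-fold acceptance criterion described above can be sketched in a few lines. This is a minimal illustration, not the evaluation code of [13]; the function name `fraction_within_fold` and the AUC ratios in the example are hypothetical.

```python
def fraction_within_fold(predicted, observed, fold=2.0):
    """Fraction of predictions whose predicted/observed ratio lies in [1/fold, fold]."""
    if len(predicted) != len(observed) or not predicted:
        raise ValueError("predicted and observed must be non-empty and equal in length")
    hits = 0
    for pred, obs in zip(predicted, observed):
        ratio = pred / obs
        if 1.0 / fold <= ratio <= fold:
            hits += 1
    return hits / len(predicted)


# Hypothetical predicted and clinically observed AUC ratios for five interactions.
predicted_aucr = [1.0, 2.0, 4.1, 0.5, 3.0]
observed_aucr = [1.0, 1.0, 2.0, 1.0, 1.0]
score = fraction_within_fold(predicted_aucr, observed_aucr)
print(f"{score:.0%} of predictions fall within 2-fold of the observed value")
```

Because the criterion is symmetric on the ratio scale, a 2-fold over-prediction and a 2-fold under-prediction are treated alike, so the metric says nothing about systematic bias in either direction.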

Protocol for Protein-Ligand Interaction Studies

The integration of static and dynamic information for protein-ligand interaction prediction follows a distinct methodological framework. Researchers first gather static structural information from experimental sources like protein data bank (PDB) or computational predictions from tools like AlphaFold [16] [15]. Dynamic information is then obtained from molecular dynamics (MD) simulations, which provide trajectories of protein motion over time [16].

These static and dynamic components are integrated into a heterogeneous graph representation, which is processed using relational graph neural networks (RGNNs) to generate predictions [16]. Performance is evaluated across specific tasks like atomic adaptability prediction, binding site detection, and binding affinity prediction, with the combined static-dynamic approach compared against methods using only static structural information [16]. This protocol demonstrates how hybrid approaches can leverage the complementary strengths of both methodologies.

Diagram 1: Experimental workflow for comparing static and dynamic models in drug discovery applications. The protocol emphasizes identical input parameters and context-specific interpretation of results.

Table 3: Key Research Reagent Solutions for Correlation Analysis

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| Simcyp Simulator | Software Platform | Population-based PBPK modeling | Dynamic DDI prediction, special populations [13] [14] |
| ATLAS Database | Data Resource | Protein molecular dynamics trajectories | Protein dynamic conformation analysis [15] |
| GPCRmd Database | Specialized Database | GPCR molecular dynamics data | Membrane protein dynamics, drug targeting [15] |
| ChEMBL Database | Compound Activity Data | Bioactivity data from literature | Benchmarking compound activity prediction [17] |
| CARA Benchmark | Evaluation Framework | Compound activity assessment | Evaluating prediction models in real-world contexts [17] |
| Quantum Computers | Hardware Platform | Wavefunction storage and computation | Orbital correlation and entanglement calculation [1] |
| AlphaFold | AI Tool | Protein structure prediction | Static structure generation as basis for dynamics [15] |

Implications for Drug Discovery Accuracy and Decision-Making

Impact on Predictive Accuracy Across Development Stages

The choice between static and dynamic modeling approaches has stage-specific implications for predictive accuracy throughout the drug development pipeline. In early discovery, when metabolic routes and gut extraction roles are poorly defined, static models provide valuable screening utility with minimal computational investment [13]. The AURA framework exemplifies how standardized statistical methodologies can enhance early-stage drug optimization by integrating diverse data types and offering flexible visualizations for cross-functional teams [18].

As compounds advance toward clinical development, the limitations of static approaches become more consequential. The failure to identify vulnerable populations at high DDI risk represents a significant accuracy limitation with potential clinical consequences [14]. Dynamic models excel in these later stages by incorporating physiological variability and identifying worst-case scenarios, though they require more extensive compound characterization and computational resources.

Strategic Implementation in Pharmaceutical R&D

The most effective implementation of these modeling methodologies applies each approach according to its complementary strengths. Static models serve as efficient filters for prioritizing compounds in data-poor environments, while dynamic models provide rigorous assessment of clinical risk in data-rich environments. This strategic approach acknowledges that "static models are not equivalent to dynamic models for predicting metabolic DDIs via competitive CYP inhibition across diverse drug parameter spaces, particularly for vulnerable patients" [14].

Emerging methodologies that fuse static and dynamic information offer promising avenues for enhanced accuracy. For protein modeling, frameworks that integrate static structural information with dynamic correlations from molecular dynamics trajectories demonstrate "significant improvements over structure-based approaches across three distinct tasks: atomic adaptability prediction, binding site detection, and binding affinity prediction" [16]. This hybrid approach exemplifies the future direction of computational drug discovery—leveraging the respective strengths of both methodologies while mitigating their individual limitations.

The distinction between static and dynamic modeling methodologies is more than a technical computational choice; it reflects fundamental decisions about how molecular complexity is represented in pharmaceutical research. Static approaches offer efficiency and simplicity valuable in early discovery, while dynamic approaches provide physiological realism essential for clinical risk assessment. Rather than an either-or proposition, the most effective drug discovery pipelines strategically integrate both approaches according to specific research questions and development stages.

The evolving paradigm recognizes that proteins and drug molecules are inherently dynamic entities, and their interactions cannot be fully captured by static representations alone. As the field progresses, hybrid approaches that fuse static structural information with dynamic behavioral data offer promising avenues for enhanced predictive accuracy. By understanding the distinct strengths, limitations, and appropriate applications of each methodology, drug discovery researchers can make informed decisions that optimize predictive accuracy while efficiently allocating computational resources across the development pipeline.

Theoretical Foundations and Key Concepts

Quantum information theory provides powerful tools for analyzing complex electronic structures in molecular systems by quantifying correlation and entanglement between molecular orbitals. These tools are increasingly vital for studying strongly correlated systems that challenge traditional computational methods, with significant implications for drug discovery and materials science [19] [1] [20].

The von Neumann entropy serves as the foundational concept for quantifying orbital entanglement. For a quantum system described by density matrix ρ, it is defined as S(ρ) = -Tr(ρ log ρ) = -Σₚ wₚ log wₚ, where wₚ are the eigenvalues of the density matrix [19]. When applied to molecular orbitals, we consider individual orbitals as subsystems and compute their orbital entropy by tracing the full wavefunction over the remaining "environment" orbitals [19]. For a single orbital i, the reduced density matrix is obtained through ρᵢ = Tr_{ℰᵢ} |Ψ⟩⟨Ψ|, where ℰᵢ denotes the environment of orbital i, that is, all orbitals except i [19].
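As a worked illustration of the definition, the sketch below computes a one-orbital entropy from occupation expectation values. It assumes a wavefunction with fixed particle number and spin projection, for which the one-orbital reduced density matrix is diagonal in the basis {empty, spin-up, spin-down, doubly occupied}; the natural logarithm is used. The function names and the example occupations are hypothetical and are not taken from [19].

```python
import math


def von_neumann_entropy(eigenvalues, tol=1e-12):
    """S = -sum_p w_p ln w_p over the eigenvalues of a density matrix."""
    if any(w < -tol for w in eigenvalues):
        raise ValueError("density-matrix eigenvalues must be non-negative")
    if abs(sum(eigenvalues) - 1.0) > 1e-8:
        raise ValueError("density-matrix eigenvalues must sum to 1")
    # Terms with w -> 0 contribute nothing, since w ln w -> 0.
    return -sum(w * math.log(w) for w in eigenvalues if w > tol)


def one_orbital_spectrum(n_up, n_down, n_double):
    """Eigenvalues of the one-orbital RDM from <n_up>, <n_down>, <n_up n_down>."""
    return [
        1.0 - n_up - n_down + n_double,  # orbital empty
        n_up - n_double,                 # singly occupied, spin up
        n_down - n_double,               # singly occupied, spin down
        n_double,                        # doubly occupied
    ]


# Hypothetical orbital that is empty or doubly occupied with equal weight,
# as for the bonding orbital of a fully stretched two-electron bond.
spectrum = one_orbital_spectrum(n_up=0.5, n_down=0.5, n_double=0.5)
print(f"one-orbital entropy: {von_neumann_entropy(spectrum):.4f}")
```

For this spectrum the entropy equals ln 2; the largest value a single spatial orbital can reach is ln 4, when all four occupation states are equally likely.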

Mutual information extends this concept to measure the correlation between specific orbital pairs. For two orbitals i and j, the mutual information Iᵢⱼ quantifies their total correlation and is defined as Iᵢⱼ = (sᵢ⁽¹⁾ + sⱼ⁽¹⁾ - sᵢⱼ⁽²⁾)(1 - δᵢⱼ), where sᵢ⁽¹⁾ and sⱼ⁽¹⁾ are single-orbital entropies, sᵢⱼ⁽²⁾ is the two-orbital entropy, and δᵢⱼ is the Kronecker delta [21]. This formulation captures both classical correlations and quantum entanglement between orbitals, providing crucial insights into bonding patterns and correlation structures within molecules [1] [21].
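The mutual information formula above can be evaluated directly once the one- and two-orbital spectra are available. The sketch below follows the expression as written in this section (some references include an additional factor of 1/2, which is not applied here). The helper names and the two-orbital example are hypothetical.

```python
import math


def entropy(spectrum, tol=1e-12):
    """Von Neumann entropy (natural log) from density-matrix eigenvalues."""
    return -sum(w * math.log(w) for w in spectrum if w > tol)


def mutual_information(s_i, s_j, s_ij, i, j):
    """I_ij = (s_i + s_j - s_ij) * (1 - delta_ij)."""
    if i == j:
        return 0.0  # the Kronecker delta removes the diagonal
    return s_i + s_j - s_ij


# Hypothetical two-electron, two-orbital state (|20> + |02>) / sqrt(2):
# each orbital is empty or doubly occupied with probability 1/2.
spectrum_orbital_0 = [0.5, 0.0, 0.0, 0.5]
spectrum_orbital_1 = [0.5, 0.0, 0.0, 0.5]
# The pair of orbitals is the whole system, which is in a pure state,
# so the two-orbital RDM has a single eigenvalue equal to 1.
spectrum_pair = [1.0]

i_01 = mutual_information(
    entropy(spectrum_orbital_0),
    entropy(spectrum_orbital_1),
    entropy(spectrum_pair),
    i=0,
    j=1,
)
print(f"I_01 = {i_01:.4f}")
```

In this limiting case all of the single-orbital entropy is shared between the two orbitals, so the mutual information equals the sum of the two one-orbital entropies.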

Recent theoretical advances include the development of spin-free orbital entropy and mutual information, which simplify entanglement analysis and are invariant with respect to the Mₛ component of spin multiplet states [19]. This approach helps distinguish static correlation due to spin couplings from genuine strong correlation arising from multiconfigurational character in wavefunctions [19].

Comparative Analysis of Measurement Methodologies

Classical Computational Approaches

Traditional quantum chemistry methods employ density matrix renormalization group (DMRG) calculations to compute orbital entropies and mutual information, particularly for strongly correlated systems [21]. This approach effectively handles multireference systems where multiple Slater determinants contribute significantly to the wavefunction [19] [21]. The DMRG method achieves near-full configuration interaction accuracy within active spaces, making it suitable for systems with quasidegenerate orbitals such as transition metal complexes and molecules undergoing bond breaking [19] [21].

Machine learning approaches have recently emerged as efficient alternatives to direct DMRG calculations. These models predict mutual information patterns for strongly correlated systems at significantly reduced computational cost, enabling rapid determination of correlation structures and optimal orbital ordering for subsequent high-accuracy calculations [21]. For aromatic molecules like p-xylene and p-quinine, ML models successfully reproduce the expected electron distribution patterns, though they may partially underestimate values for some orbital pairs [21].

Table 1: Comparison of Classical Computational Methods for Orbital Entropy and Mutual Information

| Method | Theoretical Basis | Key Applications | Measurement Requirements | Limitations |
|---|---|---|---|---|
| DMRG | Matrix product states | Strongly correlated systems, transition metal complexes [19] [21] | Wavefunction optimization with bond dimension ~2000 [21] | Computationally expensive for large active spaces [21] |
| Post-DMRG | DMRG-adiabatic connection, downfolding CC [21] | Systems requiring dynamic correlation | Additional correlation calculations beyond active space [21] | Increased complexity and computational cost [21] |
| Machine Learning | Trained on DMRG data [21] | Correlation pattern prediction, orbital ordering [21] | Pre-trained model inference | Potential underestimation for specific orbital pairs [21] |
| CASSCF/AVAS | Active space methods with orbital optimization [1] | Reaction pathways, transition states | Orbital localization and active space selection [1] | Dependency on active space selection [1] |

Quantum Computing Approaches

Quantum computers offer a fundamentally different approach to measuring orbital correlations by directly preparing molecular wavefunctions and measuring reduced density matrices. Recent implementations on trapped-ion quantum computers, such as Quantinuum's H1-1 system, have successfully calculated von Neumann entropies for molecular orbitals in strongly correlated systems like the vinylene carbonate + O₂ reaction relevant to lithium-ion batteries [1].

A critical consideration in quantum computation of entanglement measures is the implementation of fermionic superselection rules (SSRs), which account for fundamental fermionic symmetries [1]. These rules significantly reduce the number of quantum circuits required for measurements and prevent overestimation of entanglement [1]. When SSRs are properly incorporated, they lead to an important physical result: one-orbital entanglement vanishes unless opposite-spin open shell configurations are present in the wavefunction [1].

Table 2: Quantum Computing Protocols for Orbital Correlation Measurements

| Component | Implementation | Key Innovation | Impact |
|---|---|---|---|
| State Preparation | VQE with JW transformation [1] | Fermionic encoding into qubits | Accurate ground state wavefunctions |
| Measurement | Pauli operator commuting sets [1] | Accounting for superselection rules | Reduced circuit counts, physical results |
| Noise Mitigation | Singular value thresholding + maximum likelihood [1] | Post-measurement noise reduction | Experimental accuracy matching noiseless benchmarks |
| System | Quantinuum H1-1 trapped-ion quantum computer [1] | High-fidelity quantum operations | Reliable entropy calculation for moderate systems |

Experimental Protocols and Workflows

Classical DMRG Workflow

The standard protocol for computing orbital entropies and mutual information via DMRG begins with molecular geometry optimization using methods like Nudged Elastic Band for reaction pathways [1]. Next, researchers perform active space selection typically using atomic valence active space projection to identify orbitals most relevant to strong correlation, often targeting specific atomic orbitals like oxygen p orbitals in O₂ reactions [1].

The core computational phase involves DMRG wavefunction optimization with sufficient bond dimension (typically 2000 for small systems) to achieve convergence [21]. Finally, entropy calculations proceed by constructing one- and two-orbital reduced density matrices from the optimized wavefunction and computing their eigenvalues to obtain orbital entropies and mutual information [19] [21].

Classical DMRG Workflow for Orbital Entropy Calculation

Quantum Computing Measurement Protocol

Quantum approaches employ a different workflow beginning with Hamiltonian encoding using Jordan-Wigner or similar transformations to map fermionic operators to qubit operators [1]. Next, ansatz optimization uses variational quantum eigensolver to prepare ground states with offline optimization of circuit parameters [1].

The critical measurement phase involves executing quantum circuits grouped by commuting Pauli operators while respecting superselection rules to efficiently reconstruct orbital reduced density matrices [1]. Finally, error mitigation applies post-measurement noise reduction through singular value thresholding and maximum likelihood estimation to obtain physical density matrices [1].
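The grouping step in the measurement phase can be illustrated with a small sketch. It uses greedy grouping by qubit-wise commutation, a simple and common choice that is assumed here for illustration; it is not necessarily the grouping scheme used in [1], and the filtering of terms by superselection rules is not shown. The Pauli strings are hypothetical.

```python
def qubitwise_commute(pauli_a, pauli_b):
    """True if, on every qubit, the two letters are equal or one is the identity."""
    return all(a == b or a == "I" or b == "I" for a, b in zip(pauli_a, pauli_b))


def group_paulis(paulis):
    """Greedily place each Pauli string in the first group it is compatible with."""
    groups = []
    for pauli in paulis:
        for group in groups:
            if all(qubitwise_commute(pauli, member) for member in group):
                group.append(pauli)
                break
        else:
            # No existing group is compatible: open a new measurement setting.
            groups.append([pauli])
    return groups


# Hypothetical two-qubit operators needed to reconstruct a reduced density matrix.
operators = ["ZI", "IZ", "ZZ", "XX", "XI", "IX"]
for index, group in enumerate(group_paulis(operators)):
    print(f"measurement setting {index}: {group}")
```

All operators in one group can be estimated from the same measured circuit, so six expectation values here need only two measurement settings. Greedy grouping depends on input order and does not guarantee the minimum number of groups.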

Applications in Pharmaceutical Research

Quantum information measures of orbital correlation support pharmaceutical research by enabling more accurate simulation of the complex molecular interactions central to drug discovery. Iron-sulfur complexes exemplify systems where orbital entropy analysis provides crucial insights: these biologically essential complexes facilitate electron transfer in processes like nitrogen fixation and photosynthesis, but they challenge computational methods because of their partially occupied 3d shells and many closely lying states of different spin multiplicity [19]. For such transition metal compounds, orbital entanglement patterns help distinguish between high-spin states dominated by single configurations and low-spin states with more complicated multiconfigurational character [19].

In drug-protein binding studies, quantum-powered tools model interaction dynamics with unprecedented accuracy by accounting for electron correlation effects at the orbital level [22]. This approach is particularly valuable for studying water molecules that mediate protein-ligand binding processes, as quantum algorithms can efficiently evaluate numerous configurations of water placement within protein pockets, even in challenging buried regions [22]. Companies like Qubit Pharmaceuticals and Pasqal have demonstrated successful implementation of hybrid quantum-classical approaches for analyzing protein hydration, marking significant advances in computational drug discovery [22].

The Alzheimer's disease research community has embraced these techniques through initiatives like the Quanta-Bind platform, where quantum methods study protein-metal interactions tied to neurodegeneration [23]. By applying quantum information analysis to these systems, researchers can identify correlation patterns that illuminate the electronic structure factors contributing to pathological processes, potentially accelerating therapeutic development [23].

Table 3: Pharmaceutical Applications of Orbital Correlation Analysis

| Application Area | System Studied | Quantum Information Tool | Impact |
|---|---|---|---|
| Enzyme Simulation | Cytochrome P450 [24] | Quantum entanglement measures | Improved drug metabolism prediction |
| Neurodegenerative Disease | Protein-metal interactions [23] | Orbital correlation analysis | Insights into Alzheimer's mechanisms |
| Battery Material Degradation | Vinylene carbonate + O₂ [1] | Orbital entropy along reaction path | Understanding of battery degradation |
| Protein-Ligand Binding | Hydration site prediction [22] | Quantum-assisted molecular docking | More accurate binding affinity prediction |

Essential Research Tools and Reagents

Computational Software and Platforms

The PySCF package provides essential infrastructure for performing active space calculations and orbital localization through methods like Foster-Boys or Pipek-Mezey, which help avoid overestimation of correlation from delocalized orbital bases [1] [21]. DMRG implementations such as those in CheMPS2 or Block2 enable high-accuracy wavefunction optimization for strongly correlated systems, with capabilities for calculating one- and two-orbital reduced density matrices [21]. Quantum computing frameworks including Qiskit, Cirq, and PennyLane offer tools for mapping chemical problems to quantum circuits and executing them on hardware like Quantinuum's H1-1 trapped-ion systems [1].

Quantum Hardware Systems

Trapped-ion quantum computers like the Quantinuum H1-1 provide high-fidelity gates essential for accurate measurement of orbital reduced density matrices, with current implementations successfully calculating von Neumann entropies for moderate system sizes [1]. Superconducting quantum processors including Google's Willow chip and IBM's Quantum systems demonstrate rapid progress in qubit count and error correction, with algorithmic advances reducing quantum error correction overhead by up to 100 times [24]. Neutral-atom platforms from companies like Atom Computing and Pasqal offer alternative approaches with recent demonstrations of utility-scale quantum operations relevant to molecular simulation [22] [24].

Research Tools for Orbital Entropy Studies

Orbital entropy and mutual information represent powerful concepts from quantum information theory that are transforming how researchers analyze electronic structure in complex molecular systems. As both classical algorithms and quantum computing hardware continue to advance, these measures provide increasingly detailed insights into correlation patterns essential for understanding drug-receptor interactions, catalytic mechanisms, and materials properties. The integration of machine learning with quantum information analysis offers particularly promising avenues for accelerating drug discovery pipelines and developing more targeted therapeutics for complex diseases.

In computational drug discovery, strong electron correlation presents a significant challenge for accurately modeling complex biological systems. This is particularly true for two important classes of drug targets: metalloproteins containing transition metals and large protein-protein interfaces. Strong correlation effects arise in systems with nearly degenerate electronic states, making them difficult to treat with conventional computational methods. Transition metals exhibit complex electronic structures due to their partially filled d-orbitals, while extensive π-stacking and charge transfer at protein-protein interfaces also introduce significant correlation effects.

Understanding these challenging targets requires advanced computational approaches that can properly describe their electronic structures. This guide provides a comparative analysis of methodologies for studying these systems, summarizes experimental validation protocols, and presents the essential toolkit for researchers working at the intersection of quantum chemistry and drug discovery.

Computational Methodologies for Strong Correlation

Quantum Mechanical Approaches

Table 1: Comparison of Computational Methods for Strongly-Correlated Systems

| Method | Theoretical Basis | Strengths | Limitations | Applicable Targets |
|---|---|---|---|---|
| Fragment Molecular Orbital (FMO) | Divides system into fragments; calculates inter-fragment interactions [25] | Scalable to large systems; provides energy decomposition (electrostatics, charge transfer, dispersion) [26] | Accuracy depends on fragmentation scheme; parameterization required | GPCR-ligand complexes, Prion protein binders [26] |
| Density Functional Theory (DFT) | Electron density functional theory | Favorable cost/accuracy balance; widely available | Standard functionals fail for strong correlation; requires advanced functionals | Metalloprotein active sites, catalytic centers |
| Molecular Dynamics (MD) | Newtonian mechanics with classical force fields | Microsecond timescales; conformational sampling | Limited by force field accuracy; missing quantum effects | PPIs, allosteric mechanisms, protein folding |
| Hybrid ML/Quantum (PinMyMetal) | Ensemble machine learning with geometric constraints [27] | High accuracy for metal positioning (0.19-0.56 Å deviation); certainty scoring [27] | Training data dependent; limited to characterized geometries | Transition metal binding sites in proteins [27] |

The FMO method has proven particularly valuable for protein-ligand systems, as it enables quantum mechanical calculations on large biological complexes by dividing the system into smaller fragments [25]. The method provides pair interaction energy decomposition analysis (PIEDA), which breaks down interactions into electrostatic, exchange-repulsion, charge transfer, and dispersion components [25] [26]. This decomposition is crucial for understanding the nature of binding in strongly correlated systems.
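The fragment-based bookkeeping behind this description can be sketched as follows. The code assembles a total energy from fragment (monomer) and fragment-pair (dimer) energies using the standard two-body expansion, and sums the four PIEDA components named above into a pair interaction energy. It is a simplified sketch: the electrostatic embedding used in real FMO calculations is omitted, the function names are hypothetical, and every energy value is invented for illustration.

```python
def fmo2_total_energy(monomer_energies, dimer_energies):
    """Two-body expansion: E = sum_I E_I + sum_{I<J} (E_IJ - E_I - E_J)."""
    total = sum(monomer_energies.values())
    for (frag_i, frag_j), e_pair in dimer_energies.items():
        # Each dimer contributes only its correction beyond the two monomers.
        total += e_pair - monomer_energies[frag_i] - monomer_energies[frag_j]
    return total


def pair_interaction_energy(components):
    """Sum the PIEDA terms for one fragment pair."""
    terms = ("electrostatic", "exchange_repulsion", "charge_transfer", "dispersion")
    return sum(components[name] for name in terms)


# Hypothetical fragment energies (arbitrary units) for a three-fragment system.
monomers = {"ligand": -10.0, "res_A": -20.0, "res_B": -5.0}
dimers = {
    ("ligand", "res_A"): -30.5,
    ("ligand", "res_B"): -15.25,
    ("res_A", "res_B"): -25.0,
}
print(f"FMO2 total energy: {fmo2_total_energy(monomers, dimers):.2f}")

# Hypothetical decomposition of the ligand / res_A interaction.
pieda = {
    "electrostatic": -12.0,
    "exchange_repulsion": 5.5,
    "charge_transfer": -2.25,
    "dispersion": -3.0,
}
print(f"pair interaction energy: {pair_interaction_energy(pieda):.2f}")
```

Keeping the components separate until the final sum is the point of the decomposition: two residues with similar total interaction energies can bind through very different balances of electrostatics and dispersion.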

For transition metal centers, hybrid machine learning approaches like PinMyMetal have demonstrated remarkable accuracy in predicting metal ion positions with median deviations of 0.19 Å for structural sites and 0.33 Å for catalytic sites [27]. These methods combine geometric constraints with ensemble learning to address the challenges of metal coordination complexity.

Specialized Methods for Transition Metals

Transition metals in biological systems present unique challenges due to their variable coordination geometries, oxidation states, and spin states. The coordination environment significantly influences metal function in proteins:

- Tetrahedral sites: Often involve Cysteine (C) and Histidine (H) residues, common for structural zinc ions [27]

- Octahedral sites: Typically involve Glutamate (E), Aspartate (D), and Histidine (H) residues, frequent in catalytic centers [27]

- Low-coordination sites: Regulatory metal binding sites often feature reduced coordination numbers (2-3 ligands) [27]

Different computational strategies are required for these coordination geometries. CH-focused approaches work well for tetrahedral coordination, while EDH-based methods are more appropriate for octahedral sites [27].

Diagram 1: Computational workflow for predicting transition metal binding sites in proteins, integrating geometric constraints with machine learning scoring.

Protein-Protein Interactions as Drug Targets

Classification and Detection Methods

Protein-protein interactions represent another class of challenging targets where correlation effects play an important role in binding. PPIs can be classified based on experimental detection methods:

- Binary methods: Detect direct physical interactions between specific protein pairs (e.g., yeast two-hybrid) [28]

- Indirect methods: Identify interactions within protein complexes without distinguishing direct partners (e.g., co-immunoprecipitation) [28]

Table 2: Experimentally Verified PPI Database Coverage Comparison

| Database | Experimentally Verified PPIs | Coverage of Curated Interactions | Special Features |
|---|---|---|---|
| STRING | High | ~70% | Integrates experimental and predicted interactions [29] |
| UniHI | High | N/A | Human interactome focus [29] |
| APID | ~21% of all reported PPIs [28] | ~70% [29] | Unified interactomes; binary interaction emphasis [28] |
| HIPPIE | Medium | ~70% | Context-specific interaction data [29] |
| hPRINT | Lower for experimental | High for total PPIs | Comprehensive predicted interactions [29] |

Database integration is crucial for PPI research. Combined use of STRING and UniHI retrieves approximately 84% of experimentally verified PPIs, while adding hPRINT and IID captures about 94% of total available PPIs [29].
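The coverage figures quoted here are, in essence, set-union calculations against a reference set of verified interactions. A minimal sketch follows, using invented toy data; the protein identifiers, database contents, and the helper names `pair` and `coverage` are hypothetical and do not reproduce the analysis in [29].

```python
def pair(a, b):
    """Represent an undirected interaction so that (A, B) equals (B, A)."""
    return frozenset((a, b))


def coverage(reference, *databases):
    """Fraction of reference interactions found in the union of the databases."""
    if not reference:
        raise ValueError("reference set must not be empty")
    found = set().union(*databases) & reference
    return len(found) / len(reference)


# Toy data: five "verified" interactions and three hypothetical databases.
gold = {pair("P1", "P2"), pair("P2", "P3"), pair("P3", "P4"),
        pair("P4", "P5"), pair("P1", "P5")}
db_a = {pair("P2", "P1"), pair("P2", "P3"), pair("P7", "P8")}
db_b = {pair("P3", "P2"), pair("P4", "P3")}
db_c = {pair("P5", "P4")}

if __name__ == "__main__":
    print("A alone:   ", coverage(gold, db_a))
    print("A + B:     ", coverage(gold, db_a, db_b))
    print("A + B + C: ", coverage(gold, db_a, db_b, db_c))
```

Because overlapping entries are counted only once, adding a database increases coverage only by the interactions it contributes uniquely, which is why combined coverage grows more slowly than the sum of individual coverages.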

Experimental Validation and Case Studies

Protocols for Metalloprotein Drug Discovery

Experimental validation of computational predictions for metalloprotein targets follows rigorous protocols:

Standard Scrapie Cell Assay (SSCA) for Prion Disease Targets:

- Cells are exposed to candidate compounds and passaged multiple times (typically six passages)

- Cells are collected and subjected to proteinase K (PK) digestion

- PrPSc levels are determined to evaluate antiprion activity [26]

- Western blot (WB) verification confirms inhibitory concentrations [26]

Binding Site Mutation Studies:

- Site-directed mutagenesis of predicted metal-binding residues (e.g., Asn159, Val189, Thr192, Lys194, Glu196 in PrPC) [26]

- Isothermal titration calorimetry (ITC) to measure binding affinity changes

- Functional assays to correlate metal binding with protein function

Crystallographic Validation:

- X-ray crystallography with resolution ≤ 2.0 Å for metal coordination assessment [30]

- Anomalous scattering to confirm metal identity (e.g., Zn, Cu, Mn)

- Validation tools like CheckMyMetal to assess metal binding site geometry [27]

Case Study: FMO-Driven Discovery of Natural Products for Prion Disease

The integration of FMO calculations with experimental validation successfully identified natural products with antiprion activity:

- Pharmacophore Development: FMO calculations on PrPC-GN8 complex identified key interacting residues (Arg136, Arg156, Tyr157, Pro158, Asn159, Gln160, His187, Lys194) [26]

- Virtual Screening: In-house natural product database screened against FMO-derived pharmacophore model

- Experimental Validation: Two compounds (BNP-03 and BNP-08) reduced PrPSc levels at 12.5 µM concentration in SSCA [26]

The FMO analysis provided critical insights into interaction energies, with dispersion components contributing significantly to binding (e.g., -14.64 kcal/mol for Glu196) despite repulsive electrostatic components [26].
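For orientation, FMO interaction energies of this kind are commonly reported through pair interaction energy decomposition analysis (PIEDA). The source does not state which decomposition scheme was used in [26], so the expression below is given as the standard PIEDA form under that assumption, for a fragment pair $I$, $J$ (for example, ligand and residue):

$$
\Delta E_{IJ}^{\mathrm{int}} = \Delta E_{IJ}^{\mathrm{ES}} + \Delta E_{IJ}^{\mathrm{EX}} + \Delta E_{IJ}^{\mathrm{CT+mix}} + \Delta E_{IJ}^{\mathrm{DI}}
$$

Here ES is the electrostatic term, EX the exchange-repulsion term, CT+mix the charge-transfer and mixing term, and DI the dispersion term. A residue can therefore be net attractive through a large negative $\Delta E^{\mathrm{DI}}$ even when $\Delta E^{\mathrm{ES}}$ is positive, which is the pattern reported for Glu196.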

Table 3: Key Research Reagent Solutions for Strong Correlation Studies

| Category | Specific Tools | Function | Application Context |

|---|---|---|---|

| Computational Tools | PinMyMetal [27] | Predicts transition metal localization and environment | Metalloprotein engineering, functional annotation |

| Computational Tools | FMO Software [25] [26] | Quantum mechanical calculation of protein-ligand interactions | Binding energy decomposition, SAR analysis |

| Databases | APID [28] | Unified protein interactomes with experimental evidence | PPI network analysis, target identification |

| Databases | STRING [29] | Integrated PPI database with confidence scoring | Pathway analysis, functional annotation |

| Databases | RCSB PDB [30] | Experimentally determined macromolecular structures | Metal binding site analysis, homology modeling |

| Experimental Resources | CheckMyMetal [27] | Validates metal binding sites in protein structures | Quality control for crystallographic data |

| Experimental Resources | Gold-standard PPI set [29] | Literature-curated experimentally proven PPIs | Method benchmarking, database validation |

Comparative Performance Analysis

Accuracy Metrics Across Methodologies

Table 4: Quantitative Performance Comparison of Prediction Methods

| Method | System Type | Accuracy Metric | Performance | Reference |

|---|---|---|---|---|

| PinMyMetal | Transition metal sites | Median position deviation | 0.19 Å (structural), 0.33 Å (catalytic), 0.36 Å (regulatory) | [27] |

| FMO | GPCR-ligand complexes | Correlation with experimental affinity | High correlation with measured values | [25] |

| Homology Modeling | Metal sites | Transfer accuracy | Limited for novel motifs without homologous templates | [27] |

| Geometric Predictors | Metal binding sites | Recall at IoUR ≥ 0.5 | >90% for most transition metals | [27] |

| Database Integration | PPI networks | Coverage of verified interactions | 84% with STRING+UniHI; 94% total PPIs with additional databases | [29] |

The performance data indicate that hybrid approaches combining physical principles with machine learning generally outperform single-method strategies. For metal binding site prediction, PinMyMetal achieves high accuracy by employing separate strategies for different coordination geometries and coordination numbers [27]. For PPIs, integrated database approaches provide the most comprehensive coverage [29].
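The median position deviation reported in Table 4 can be read as the median Euclidean distance between predicted and reference metal positions. The sketch below shows that calculation on invented coordinates; the function name and the data are hypothetical, and the evaluation protocol in [27] may differ in detail (for example, in how predictions are matched to reference sites).

```python
import math
from statistics import median


def median_position_deviation(predicted, reference):
    """Median Euclidean distance (same units as the inputs, e.g. Å)
    between paired predicted and reference metal coordinates."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must be paired one-to-one")
    return median(math.dist(p, r) for p, r in zip(predicted, reference))


if __name__ == "__main__":
    # Invented coordinates in Å, for illustration only.
    predicted = [(0.0, 0.0, 0.0), (1.0, 1.0, 1.0), (2.0, 0.0, 0.0)]
    reference = [(0.3, 0.0, 0.0), (1.0, 1.0, 1.1), (2.0, 0.2, 0.0)]
    print(median_position_deviation(predicted, reference))
```

The median is less sensitive than the mean to a few badly misplaced sites, which is one reason it is a natural summary for this kind of benchmark.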

Diagram 2: Decision framework for selecting computational methods based on target properties and correlation challenges.

The study of strongly correlated systems in drug discovery requires specialized approaches that go beyond conventional computational methods. For transition metal-containing targets, hybrid machine learning systems that account for coordination geometry and electronic effects show particular promise, with accuracy exceeding traditional homology-based methods. For protein-protein interactions, integrated database strategies provide the most comprehensive coverage, though experimental validation remains essential.

Future advancements will likely come from improved quantum mechanical methods that more efficiently handle strong correlation, better integration of machine learning with physical principles, and more comprehensive databases that incorporate structural, kinetic, and thermodynamic data. As these methods mature, they will increasingly enable the targeting of challenging biological systems that have previously been considered undruggable.

Computational Tools in Action: A Comparative Guide to Correlation Methods

Density Functional Theory (DFT) is a foundational computational method for predicting ground-state electronic properties in materials science and chemistry. This guide compares the accuracy and efficiency of various DFT software and methodologies, helping researchers select the optimal approach for calculating properties such as lattice constants, band structures, and formation energies.

Software and Functional Selection Guide

The choice of DFT software and exchange-correlation functional profoundly impacts the accuracy of ground-state property predictions. The table below summarizes leading DFT software options.

Table 1: Comparison of Representative DFT Software for Ground-State Calculations

| Software | Main Target System | Key Features | License |

|---|---|---|---|

| VASP [31] | Solid | Industry standard for solid-state/periodic system calculations | Paid |

| Quantum ESPRESSO [31] | Solid | Free, open-source software for solid-state calculations | Free |

| Gaussian [31] | Molecular | Industry standard for molecular system calculations; GUI available | Paid |

| GAMESS [31] | Molecular | Free software with active feature development | Free |

| ORCA [31] | Molecular | Strong in optical properties and high-precision calculations | Free for academic use; paid for commercial use |

The selection of the exchange-correlation functional is equally critical. Benchmark studies reveal the performance of different functionals for specific properties:

Table 2: Functional Performance for Key Ground-State Properties

| Functional | Typical Application | Performance & Accuracy Notes |

|---|---|---|

| PBE (GGA) [32] | General purpose, structural properties | Good for lattice constants but tends to overestimate them; often underestimates band gaps severely. |

| HSE06 (Hybrid) [32] | Band gaps, electronic properties | Significantly improves band gap accuracy and lattice parameter prediction over PBE. |

| PBE+U [32] | Systems with localized d/f electrons | Can improve properties for correlated electrons but may underestimate lattice parameters. |

| LDA [33] | - | Known to over-bind, typically underestimating lattice constants. |
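To make explicit where the functional enters, the Kohn-Sham total energy can be written in its standard textbook form (the notation here is generic and not taken from [32] or [33]):

$$
E[\rho] = T_s[\rho] + \int v_{\mathrm{ext}}(\mathbf{r})\,\rho(\mathbf{r})\,d\mathbf{r} + E_{\mathrm{H}}[\rho] + E_{\mathrm{xc}}[\rho]
$$

Only $E_{\mathrm{xc}}[\rho]$ must be approximated, and the functionals in Table 2 differ in how they do so. As one example, the HSE family replaces a fraction $a$ of short-range (SR) PBE exchange with short-range Hartree-Fock exchange, with $a = 1/4$ in HSE06 and $\omega$ the range-separation parameter:

$$
E_{\mathrm{xc}}^{\mathrm{HSE}} = a\,E_{\mathrm{x}}^{\mathrm{HF,SR}}(\omega) + (1-a)\,E_{\mathrm{x}}^{\mathrm{PBE,SR}}(\omega) + E_{\mathrm{x}}^{\mathrm{PBE,LR}}(\omega) + E_{\mathrm{c}}^{\mathrm{PBE}}
$$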

Experimental Protocols and Benchmarking Data

Benchmarking Lattice Constants and Band Gaps

A benchmark study on bulk MoS₂ provides a clear protocol for evaluating functional performance.

Computational Protocol [32]:

- Software & Method: Calculations performed using the Quantum ESPRESSO simulation package.

- Pseudopotentials: Optimized Norm-Conserving Vanderbilt (ONCV) pseudopotentials.

- Plane-Wave Cutoff: A kinetic energy cutoff of 80 Ry was used for the plane-wave basis set.

- k-point Grid: A 12×12×3 Monkhorst-Pack k-point grid sampled the Brillouin zone.

- Convergence: Structures were relaxed until the force on each atom was less than 0.001 eV/Å and the energy change between steps was below 10⁻⁸ eV.
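The protocol above can be translated into a partial Quantum ESPRESSO `pw.x` input. The sketch below is an assumption-laden fragment, not the input used in [32]: it assumes a variable-cell relaxation (`vc-relax`), assumes that `pw.x` expects `forc_conv_thr` and `etot_conv_thr` in Rydberg atomic units (Ry/Bohr and Ry), and omits the structure, pseudopotential files, and remaining namelists, which the source does not specify.

```python
RY_IN_EV = 13.605693122994         # 1 Ry expressed in eV
BOHR_IN_ANGSTROM = 0.529177210903  # 1 Bohr expressed in Å


def qe_thresholds(force_ev_per_ang, energy_ev):
    """Convert eV/Å and eV thresholds to Ry/Bohr and Ry."""
    forc = force_ev_per_ang * BOHR_IN_ANGSTROM / RY_IN_EV
    etot = energy_ev / RY_IN_EV
    return forc, etot


def protocol_fragment():
    """Build an incomplete pw.x input fragment from the stated protocol."""
    forc, etot = qe_thresholds(1e-3, 1e-8)
    return "\n".join([
        "&CONTROL",
        "  calculation = 'vc-relax'",   # assumption: cell was relaxed too
        f"  forc_conv_thr = {forc:.3e}",
        f"  etot_conv_thr = {etot:.3e}",
        "/",
        "&SYSTEM",
        "  ecutwfc = 80.0",             # plane-wave cutoff in Ry
        "/",
        "K_POINTS automatic",
        "  12 12 3 0 0 0",              # 12x12x3 Monkhorst-Pack grid, unshifted
    ])


if __name__ == "__main__":
    print(protocol_fragment())
```

The unit conversion matters in practice: a force threshold of 0.001 eV/Å is much tighter than the same number entered directly in Ry/Bohr.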

Quantitative Results [32]:

- PBE: Overestimated the lattice constant a of MoS₂ by approximately 0.5% compared to experimental data.

- HSE06: Reduced the percentage error in the lattice constant, providing a more accurate value, and delivered a much more accurate band gap.
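The percentage errors quoted for lattice constants are signed relative deviations from experiment. A minimal sketch of that arithmetic follows; the numerical values in the usage example are illustrative placeholders and are not taken from [32].

```python
def percent_error(calculated, experimental):
    """Signed percentage error; positive means the calculation overestimates."""
    if experimental == 0:
        raise ValueError("experimental value must be non-zero")
    return 100.0 * (calculated - experimental) / experimental


if __name__ == "__main__":
    # Placeholder lattice constants in Å, chosen only to illustrate the formula.
    a_experimental = 3.160
    a_calculated = 3.176
    print(f"{percent_error(a_calculated, a_experimental):.2f} %")
```

Keeping the sign makes it easy to tell the typical over-binding of one functional from the under-binding of another.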

Protocol for Enhancing DFT Efficiency

Reducing the computational cost of DFT without sacrificing accuracy is a key research area.

Efficiency Optimization Protocol [33]:

- Objective: Minimize the number of self-consistent field (SCF) iterations required for convergence by optimizing charge mixing parameters.

- Algorithm: Use Bayesian Optimization (BO), a data-efficient, derivative-free algorithm, to find the optimal parameter set.

- Implementation: This procedure can be applied alongside standard convergence tests for cutoff energy and k-points.

- Outcome: This approach demonstrated a significant reduction in SCF iterations and total simulation time for insulating, semiconducting, and metallic systems compared to default parameters in VASP [33].
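The optimization loop described above can be sketched with a small, dependency-free Bayesian optimization over a single mixing parameter. Everything here is an assumption for illustration: `mock_scf_iterations` is an invented stand-in for running a DFT code and counting SCF steps, the Gaussian-process surrogate uses a fixed RBF kernel, and the acquisition rule is a lower confidence bound on a coarse grid. It is not the implementation from [33].

```python
import math


def mock_scf_iterations(beta):
    """Hypothetical cost surface; a real study would run the DFT code here."""
    return 12.0 + 80.0 * (beta - 0.35) ** 2


def rbf(a, b, length=0.2):
    return math.exp(-0.5 * ((a - b) / length) ** 2)


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [b[i]] for i, row in enumerate(A)]
    for c in range(n):
        p = max(range(c, n), key=lambda r: abs(M[r][c]))
        M[c], M[p] = M[p], M[c]
        for r in range(c + 1, n):
            f = M[r][c] / M[c][c]
            for k in range(c, n + 1):
                M[r][k] -= f * M[c][k]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        s = sum(M[r][k] * x[k] for k in range(r + 1, n))
        x[r] = (M[r][n] - s) / M[r][r]
    return x


def gp_predict(xs, ys, x_new, noise=1e-4):
    """Posterior mean and standard deviation of a GP fitted to (xs, ys)."""
    mean = sum(ys) / len(ys)
    scale = math.sqrt(sum((y - mean) ** 2 for y in ys) / len(ys)) or 1.0
    z = [(y - mean) / scale for y in ys]  # standardize the observations
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    k_star = [rbf(a, x_new) for a in xs]
    mu = sum(k * w for k, w in zip(k_star, solve(K, z)))
    var = max(1.0 - sum(k * w for k, w in zip(k_star, solve(K, k_star))), 0.0)
    return mean + scale * mu, scale * math.sqrt(var)


def optimize_mixing(objective, n_iter=8, kappa=2.0):
    """Minimize objective(beta) on a grid in (0, 1] with a GP surrogate."""
    grid = [i / 20 for i in range(1, 21)]
    xs = [grid[0], grid[9], grid[18]]  # three initial evaluations
    ys = [objective(x) for x in xs]
    for _ in range(n_iter):
        def lcb(x):
            mu, sigma = gp_predict(xs, ys, x)
            return mu - kappa * sigma  # favor low mean or high uncertainty
        x_next = min((x for x in grid if x not in xs), key=lcb)
        xs.append(x_next)
        ys.append(objective(x_next))
    best = min(range(len(xs)), key=ys.__getitem__)
    return xs[best], ys[best]


if __name__ == "__main__":
    print(optimize_mixing(mock_scf_iterations))
```

The appeal of this approach is that each objective evaluation is a full SCF run, so a surrogate model that chooses the next trial carefully can pay for itself after only a handful of evaluations.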

The Rise of Machine Learning Potentials

Neural Network Potentials (NNPs) are emerging as a powerful alternative, offering near-DFT accuracy at a fraction of the computational cost.

Table 3: Emerging Machine Learning Potentials for Material Simulation

| Model/Platform | Description | Reported Performance |

|---|---|---|

| EMFF-2025 [34] | A general NNP for C, H, N, O-based high-energy materials. | Achieves DFT-level accuracy in predicting structures, mechanical properties, and decomposition pathways [34]. |

| Egret-1 (Rowan) [35] | An open-source family of NNPs. | Matches or exceeds quantum-mechanics-based simulation accuracy while running orders-of-magnitude faster [35]. |

| OMol25 NNPs [36] | NNPs (eSEN, UMA) trained on Meta's large-scale dataset. | Can predict charge-related properties (e.g., electron affinity) with accuracy comparable to or better than low-cost DFT methods for certain species [36]. |

Workflow and Logical Pathways

The following diagram illustrates a decision pathway for selecting a computational method based on project goals, system size, and required accuracy.

The Scientist's Toolkit

This table details essential computational "reagents" for performing DFT calculations.

Table 4: Essential Research Reagents for DFT Simulations

| Tool Category | Examples | Function & Purpose |

|---|---|---|

| DFT Software [31] | VASP, Quantum ESPRESSO, Gaussian, ORCA | Core engines that perform the electronic structure calculations by solving the Kohn-Sham equations. |

| Visualization & Modeling [31] | VESTA, Avogadro, GaussView | Used to build atomic structures, create input files, and visualize results like electron densities and molecular orbitals. |

| Pseudopotentials [37] | Ultrasoft, PAW, Norm-Conserving | Replace core electrons to reduce computational cost while accurately representing the effect of the nucleus and core electrons on valence electrons. |

| Basis Sets | Plane-Waves, Gaussian-Type Orbitals | Mathematical functions used to describe electron wavefunctions. Plane-waves are standard for solids, while Gaussian functions are common for molecules. |

| Exchange-Correlation Functional [33] [32] | PBE, HSE06, LDA, PBE+U | Approximates the quantum mechanical exchange and correlation energy, which is the key unknown in DFT that determines accuracy. |

| Machine Learning Potentials [34] [35] | EMFF-2025, Egret-1, OMol25 NNPs | Pre-trained models that learn from DFT data to predict energies and forces with high speed and accuracy, enabling large-scale simulations. |