Navigating Quantum Chemistry Benchmarks: From Theory to Drug Discovery Applications


Abstract

This article provides a comprehensive guide to quantum chemistry method accuracy benchmarking, essential for researchers and drug development professionals. It explores the foundational principles of benchmarking, examines current methodological frameworks and their real-world applications in areas like protein-ligand interactions, addresses common troubleshooting and optimization challenges, and presents validation strategies for reliable method selection. By synthesizing insights from recent benchmark studies, this work aims to equip scientists with the knowledge to make informed decisions in computational chemistry and materials science.

The Critical Role of Benchmarking in Quantum Chemistry

Why Benchmark? Establishing Reliability in Computational Chemistry

In computational chemistry, where theoretical models approximate complex quantum mechanical systems, benchmarking is not merely a best practice but a fundamental requirement for establishing reliability. It is the process of systematically evaluating the performance, accuracy, and computational cost of computational methods against well-defined reference data, often from high-level theory or experimental results. For researchers in quantum chemistry and drug development, benchmarking provides the essential evidence base needed to select appropriate methods for a given project, validate new protocols, and understand the limitations of theoretical approaches, thereby mitigating the risk of basing conclusions on inaccurate predictions.

The necessity of benchmarking is acutely felt in the Noisy Intermediate-Scale Quantum (NISQ) era, where hybrid quantum-classical algorithms show promise but must be rigorously validated. Furthermore, as machine learning potentials trained on massive datasets, such as Meta's OMol25, become more prevalent, benchmarking their performance against traditional computational chemistry workhorses like Density Functional Theory (DFT) is crucial for their adoption in high-stakes environments like drug development [1].

Key Benchmarking Studies in Focus

Benchmarking studies provide critical insights by systematically testing computational methods across different chemical systems and properties. The following examples highlight how this process establishes reliability and reveals methodological limitations.

Case Study 1: BenchQC and Variational Quantum Eigensolver (VQE)

The BenchQC benchmarking toolkit was used to evaluate the performance of the VQE algorithm for calculating the ground-state energies of aluminum clusters (Al⁻, Al₂, Al₃⁻) within a quantum-DFT embedding framework [2]. This study systematically varied key parameters, including classical optimizers, circuit types, and noise models, to assess their impact on performance. The results demonstrated that with optimized parameters, the VQE could achieve results with percent errors consistently below 0.02% when compared to benchmarks from the Computational Chemistry Comparison and Benchmark DataBase (CCCBDB) [2]. This establishes VQE's potential for reliable energy estimations in quantum chemistry simulations, provided careful parameter selection is undertaken.

Case Study 2: Traditional Methods and the Iminodiacetic Acid (IDA) Challenge

A benchmark study on iminodiacetic acid (IDA) illustrates the potential pitfalls in computational chemistry. The study investigated the performance of various methods, including B3LYP and Hartree-Fock with different basis sets, in predicting vibrational spectra and NMR chemical shifts [3]. While the methods performed reasonably well for NMR chemical shifts, they failed to predict vibrational frequencies above 2200 cm⁻¹, despite strong correlations at lower frequencies [3]. This finding shows that a method can succeed for one property and fail for another in the same system, which is why benchmarking is indispensable.

Case Study 3: Benchmarking Machine Learning Potentials

The recent release of large-scale datasets like Meta's Open Molecules 2025 (OMol25), containing over 100 million quantum chemical calculations, has enabled the training of sophisticated Neural Network Potentials (NNPs) [1]. In one benchmarking effort, NNPs trained on OMol25 were evaluated against experimental reduction-potential and electron-affinity data for various main-group and organometallic species. Surprisingly, these NNPs, which do not explicitly consider charge- or spin-based physics, were found to be as accurate or more accurate than low-cost DFT and semiempirical quantum mechanical (SQM) methods [4]. This demonstrates how benchmarking accelerates the adoption of innovative methods by objectively quantifying their performance against established techniques.

Comparative Performance Data

The table below summarizes the quantitative findings from the featured benchmarking studies, providing a clear, at-a-glance comparison of methodological performance.

Table 1: Summary of Benchmarking Results from Featured Studies

| Study Focus | Methods Benchmarked | Key Benchmark Metric | Reported Performance |
|---|---|---|---|
| VQE for Aluminum Clusters [2] | VQE with varying optimizers, circuits, and noise models | Percent error in ground-state energy vs. CCCBDB | Errors consistently < 0.02% |
| Vibrational Spectra of IDA [3] | B3LYP, HF, and semi-empirical methods (AM1, PM3, PM6) | Accuracy of predicted IR/Raman frequencies | Strong correlation at <2200 cm⁻¹; failure at >2200 cm⁻¹ |
| Machine Learning Potentials [4] | OMol25-trained NNPs vs. low-cost DFT and SQM | Accuracy predicting reduction potentials & electron affinities | NNPs as accurate or more accurate than DFT/SQM |

Detailed Experimental Protocols

To ensure the reliability and reproducibility of benchmarking studies, a rigorous and well-defined experimental protocol is essential. The following workflows are adapted from the cited research.

BenchQC Workflow for VQE Benchmarking

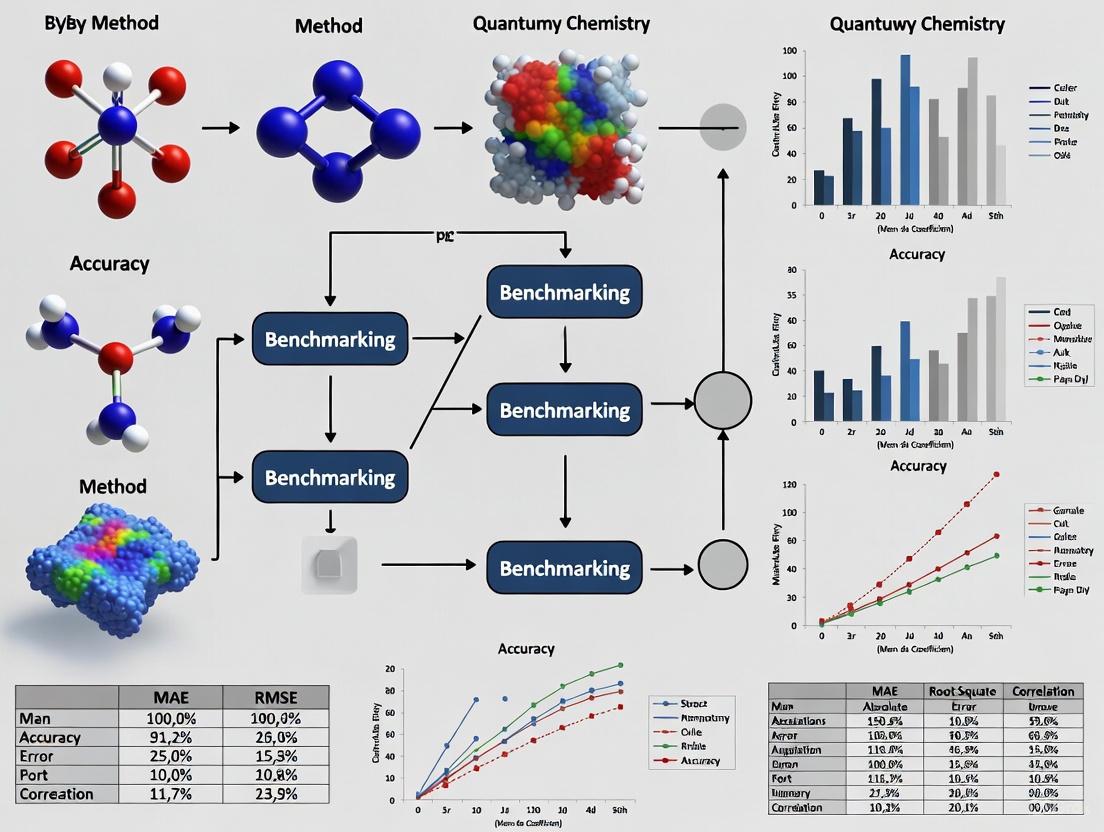

The following diagram illustrates the end-to-end workflow for benchmarking the Variational Quantum Eigensolver, from structure preparation to result analysis.

Figure 1: The BenchQC VQE benchmarking workflow for quantum chemistry simulations.

Methodology Details:

- Structure Generation: Pre-optimized molecular structures (e.g., for Al clusters) are obtained from databases like CCCBDB or JARVIS-DFT [2].

- Single-Point Calculation: The PySCF package, integrated within the Qiskit framework, is used to perform initial calculations on the structures to analyze molecular orbitals [2].

- Active Space Selection: The Active Space Transformer (Qiskit Nature) selects a subset of orbitals and electrons (e.g., 3 orbitals and 4 electrons) for the quantum computation, focusing on the chemically relevant region [2].

- Hamiltonian Mapping: The electronic Hamiltonian of the active space is mapped to a qubit representation using the Jordan-Wigner transformation [2].

- VQE Computation: The VQE algorithm is executed, typically on a quantum simulator. Key parameters such as the classical optimizer (e.g., SLSQP), the quantum circuit ansatz (e.g., EfficientSU2), and the number of repetitions are systematically varied [2].

- Analysis and Benchmarking: The computed ground-state energy is compared against reference data from exact diagonalization (using NumPy) or established databases (CCCBDB). The results are evaluated based on accuracy (percent error) and computational efficiency [2].
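The final analysis step and the size of the quantum register can be sketched in a few lines of Python. This is a minimal illustration, not the BenchQC implementation: the function names and the energies are hypothetical, and the only facts relied on are that the Jordan-Wigner mapping uses one qubit per spin orbital and that [2] reports percent errors below 0.02%.

```python
def qubits_for_active_space(n_spatial_orbitals: int) -> int:
    """Jordan-Wigner uses one qubit per spin orbital, i.e. two per spatial orbital."""
    return 2 * n_spatial_orbitals


def percent_error(computed: float, reference: float) -> float:
    """Absolute percent error of a computed energy relative to a reference energy."""
    if reference == 0.0:
        raise ValueError("reference energy must be non-zero")
    return abs(computed - reference) / abs(reference) * 100.0


# Hypothetical energies in hartree, chosen only to illustrate the arithmetic.
reference_energy = -242.000
vqe_energy = -241.970

n_qubits = qubits_for_active_space(3)   # 3 active orbitals, as in the example above
error = percent_error(vqe_energy, reference_energy)
within_threshold = error < 0.02          # threshold reported in [2]

print(f"{n_qubits} qubits, percent error = {error:.4f}%, below 0.02%: {within_threshold}")
```

Because total energies are large in magnitude, a small percent error can still correspond to a sizeable absolute error, so it is worth reporting both.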

Workflow for Benchmarking Molecular Properties

The protocol for benchmarking traditional and machine-learning methods against experimental properties involves a structured comparison of computed versus experimental values.

Figure 2: A general workflow for benchmarking computational methods against experimental data.

Methodology Details:

- Reference Data Curation: A dataset of reliable experimental values for target properties (e.g., reduction potentials, electron affinities, vibrational frequencies) is assembled [4] [3].

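- Computational Predictions: Multiple computational methods are used to predict the same set of properties for the molecules in the benchmark set. In the studies cited here, these include DFT functionals such as B3LYP, Hartree-Fock, semiempirical methods, and OMol25-trained NNPs [4] [3].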

- Statistical Comparison: The computed values are statistically compared to the experimental references. Common metrics include mean absolute error (MAE), root mean square error (RMSE), and correlation coefficients (R²) [4] [3].

- Performance Ranking: Methods are ranked based on their accuracy and computational cost, providing a guide for researchers on which method is best suited for predicting a specific property [4] [3].
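The statistical comparison and ranking steps above can be sketched as follows. The method names and all numbers are hypothetical placeholders, and R² is computed here as the squared Pearson correlation, which is one common convention; the cited studies may define it differently.

```python
import math


def mae(pred, ref):
    """Mean absolute error."""
    return sum(abs(p - r) for p, r in zip(pred, ref)) / len(ref)


def rmse(pred, ref):
    """Root mean square error."""
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))


def r_squared(pred, ref):
    """Squared Pearson correlation between predictions and references."""
    n = len(ref)
    mean_p, mean_r = sum(pred) / n, sum(ref) / n
    cov = sum((p - mean_p) * (r - mean_r) for p, r in zip(pred, ref))
    var_p = sum((p - mean_p) ** 2 for p in pred)
    var_r = sum((r - mean_r) ** 2 for r in ref)
    return cov * cov / (var_p * var_r)


# Hypothetical experimental references and predictions (arbitrary units).
experiment = [1.00, 2.00, 3.00, 4.00]
predictions = {
    "method_A": [1.10, 1.90, 3.20, 3.90],
    "method_B": [1.50, 2.60, 3.40, 4.70],
}

scores = {
    name: {
        "MAE": mae(values, experiment),
        "RMSE": rmse(values, experiment),
        "R2": r_squared(values, experiment),
    }
    for name, values in predictions.items()
}

# Rank by MAE; a real study would also weigh computational cost.
ranking = sorted(scores, key=lambda name: scores[name]["MAE"])
print(ranking)
```

Note that a method with a large systematic offset can still show a high R², which is why error metrics and correlation are usually reported together.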

This section details key computational tools and datasets that are foundational for modern benchmarking studies in computational chemistry.

Table 2: Essential Resources for Computational Chemistry Benchmarking

| Tool/Resource Name | Type | Primary Function in Benchmarking | Relevance to Drug Development |
|---|---|---|---|
| BenchQC [2] | Software Toolkit | Benchmarks quantum computing algorithms (e.g., VQE) for chemistry simulations. | Assessing quantum utility for molecular modeling. |
| OMol25 Dataset [1] | Training Dataset | A massive dataset of >100M calculations used to train and benchmark NNPs. | Provides high-quality data for biomolecules & metal complexes. |
| Neural Network Potentials (NNPs) [4] [1] | Computational Method | Fast, accurate energy predictions; benchmarked against DFT and experiment. | Enables rapid screening of large molecular libraries. |
| ORCA [3] | Quantum Chemistry Software | Performs ab initio and DFT calculations; used to generate reference data. | Workhorse for calculating molecular properties and energies. |
| CCCBDB [2] | Reference Database | Provides experimental and high-level computational reference data. | Source of ground-truth data for method validation. |
| JARVIS-DFT [2] | Materials Database | Contains pre-calculated DFT data for materials; used for validation. | Useful for materials-in-drug delivery and biomaterial studies. |

The accurate computational modeling of molecular systems is fundamental to advancements in drug design, materials science, and catalysis [5]. Quantum chemical methods provide the theoretical framework for predicting the structures, energies, and properties of molecules, from simple diatomics to complex biological ligands. However, these methods encompass a vast spectrum of approximations, each with distinct trade-offs between computational cost and predictive accuracy. Navigating this hierarchy is crucial for researchers to select the appropriate method for a given scientific problem. This guide provides an objective comparison of quantum chemical methods, framed within the context of modern benchmarking studies, to equip researchers with the knowledge to make informed decisions in their computational workflows.

The development of quantum chemistry has seen a quiet revolution, with electronic structure calculations becoming ubiquitous in chemical research [6]. This guide systematically examines the performance tiers of these methods, from highly accurate but computationally expensive coupled-cluster theories to efficient but approximate density functional methods and semi-empirical approaches. By presenting quantitative benchmarking data and detailed experimental protocols, we aim to establish a clear framework for understanding the relative strengths and limitations of each methodological rung in the quantum chemical ladder.

The Accuracy Hierarchy: From Gold Standards to Practical Workhorses

Method Classifications and Benchmarks

Quantum chemical methods can be organized into a hierarchy based on their underlying approximations and theoretical rigor. This ranking is essential for meaningful benchmarking and practical application. Wave-function-based methods follow a well-defined ordering, with coupled-cluster (CC) methods often serving as the "gold standard" for single-reference systems [6]. For density functional theory (DFT), the Jacob's Ladder classification scheme proposed by Perdew provides a conceptual framework for organizing functionals based on the ingredients used in their exchange-correlation kernels [6].
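As a rough illustration of the Jacob's Ladder idea, the rungs can be written down as a small lookup structure. The rung descriptions follow the usual textbook presentation, and the example functionals are common assignments added here for illustration; neither is taken from [6].

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Rung:
    level: int
    name: str
    ingredient: str       # what the functional adds at this rung
    examples: tuple       # illustrative functionals only, not an exhaustive list


JACOBS_LADDER = (
    Rung(1, "LDA", "local electron density", ("SVWN",)),
    Rung(2, "GGA", "density gradient", ("PBE", "BLYP")),
    Rung(3, "meta-GGA", "kinetic energy density", ("TPSS",)),
    Rung(4, "hybrid", "exact (Hartree-Fock) exchange", ("B3LYP", "PBE0", "TPSSh")),
    Rung(5, "double-hybrid", "unoccupied orbitals (MP2-type correlation)", ("B2PLYP", "PWPB95")),
)


def rung_of(functional: str) -> int:
    """Return the ladder rung of a functional listed in JACOBS_LADDER."""
    for rung in JACOBS_LADDER:
        if functional in rung.examples:
            return rung.level
    raise KeyError(f"{functional} is not in this illustrative table")


print(rung_of("PBE0"), rung_of("B2PLYP"))
```

Climbing the ladder generally raises cost, but benchmark results show it does not guarantee better accuracy for every property.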

The table below summarizes the key characteristics and typical application domains for the main classes of quantum chemical methods.

Table 1: Hierarchy of Quantum Chemical Methods and Their Characteristics

| Method Class | Theoretical Foundation | Computational Cost | Typical Application Domain | Key Benchmark Accuracy (where available) |
|---|---|---|---|---|
| Coupled Cluster (e.g., CCSD(T)) | Wave-function theory; Handles electron correlation systematically [7] | Very High to Prohibitive for large systems | Small molecules, benchmark studies, parameterization of lower-level methods [5] | MAE of 1.5 kcal/mol for spin-state energetics [7]; "Gold standard" [6] |
| Quantum Monte Carlo (QMC) | Stochastic solution of Schrödinger equation [5] | Very High | Benchmark interaction energies for complex systems [5] | Agreement within 0.5 kcal/mol with CC for ligand-pocket interactions [5] |
| Double-Hybrid DFT (e.g., PWPB95-D3) | DFT with mixture of HF and DFT exchange, and MP2 correlation [7] | High | Transition metal complexes, non-covalent interactions [7] | MAE < 3 kcal/mol for spin-state energetics [7] |
| Hybrid DFT (e.g., B3LYP, PBE0) | DFT with a mixture of HF exchange and DFT exchange-correlation [8] | Medium | Geometry optimizations, frequency calculations, general-purpose chemistry [5] [8] | Performance varies widely; RMSE ~0.05-0.07 V for redox potentials [8] |
| Meta-GGA DFT (e.g., TPSSh) | DFT with dependence on kinetic energy density or other meta-variables [7] | Low to Medium | Transition metal chemistry, solid-state physics [7] | MAE of 5–7 kcal/mol for spin-state energetics [7] |
| Semi-Empirical Methods (SEQM) | Approximate quantum mechanics with parameterized integrals [5] [8] | Low | High-throughput screening, molecular dynamics of large systems [8] | Requires improvement for non-covalent interactions [5] |
| Molecular Mechanics (Force Fields) | Classical potentials, no electronic structure [5] | Very Low | Molecular dynamics of proteins, polymers, and large assemblies [5] | Limited transferability; inaccurate for out-of-equilibrium geometries [5] |

Composite Methods: Aiming for Chemical Accuracy

Composite methods, such as the Gaussian-n (Gn) theories and the Feller-Peterson-Dixon (FPD) approach, are computational strategies designed to reach chemical accuracy (about 1 kcal/mol) by combining the results of several calculations [9]. These methods systematically approximate the results of a high-level calculation (e.g., CCSD(T)) at the complete basis set (CBS) limit through a series of additive corrections.

- Gaussian-2 (G2) Theory: This model chemistry combines calculations at multiple levels. It uses a QCISD(T)/6-311G(d) calculation as a baseline and adds corrections for diffuse functions, higher-level polarization functions, and a larger basis set at the MP2 level. An empirical "higher-level correction" (HLC) is applied based on the number of valence and unpaired electrons [9].

- Gaussian-4 (G4) Theory: An advancement over G3 theory, G4 introduces an extrapolation scheme for the Hartree-Fock energy limit, uses CCSD(T) for the highest-level correction, and employs DFT-optimized geometries and zero-point energies [9].

- Feller-Peterson-Dixon (FPD) Approach: This is a more flexible framework, not a single fixed recipe. It typically uses CCSD(T) with very large basis sets, extrapolated to the CBS limit, and adds corrections for core-valence correlation, scalar relativistic effects, and spin-orbit coupling. It is capable of achieving remarkable accuracy, with root-mean-square deviations of 0.30 kcal/mol for thermochemical properties [9].
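The additive structure shared by these recipes can be sketched as below. The two-point inverse-cubic extrapolation is a widely used formula for correlation energies and is assumed here purely for illustration; it is not necessarily the scheme used in G4 or in any particular FPD application. All energies and correction labels are hypothetical.

```python
def cbs_two_point(e_small: float, e_large: float, x_small: int, x_large: int) -> float:
    """Two-point X^-3 extrapolation of the correlation energy to the CBS limit.

    x_small and x_large are basis-set cardinal numbers (e.g. 3 for triple-zeta,
    4 for quadruple-zeta).
    """
    xs3, xl3 = x_small ** 3, x_large ** 3
    return (xl3 * e_large - xs3 * e_small) / (xl3 - xs3)


def composite_energy(base_energy: float, corrections: dict) -> float:
    """Sum a baseline energy and a set of additive corrections."""
    return base_energy + sum(corrections.values())


# Hypothetical values in hartree.
hf_energy = -76.060
e_corr_cbs = cbs_two_point(e_small=-0.300, e_large=-0.310, x_small=3, x_large=4)

corrections = {
    "correlation_cbs": e_corr_cbs,
    "core_valence": -0.002,
    "scalar_relativistic": -0.001,
}
total = composite_energy(hf_energy, corrections)
print(f"E_corr(CBS) = {e_corr_cbs:.6f} Eh, composite total = {total:.6f} Eh")
```

Keeping each correction as a named entry makes it easy to see which term dominates and to swap a single step for a higher-level calculation.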

Table 2: Overview of Select Composite Quantum Chemical Methods

| Method | Key Components | Additive Corrections | Target Accuracy | Typical System Size Limit |
|---|---|---|---|---|
| Gaussian-2 (G2) | QCISD(T), MP4, MP2 with various Pople-type basis sets [9] | Polarization, diffuse functions, HLC [9] | Chemical accuracy (~1 kcal/mol) for thermochemistry [9] | Medium-sized organic molecules |
| Gaussian-4 (G4) | CCSD(T), MP2 with customized large basis sets (G3large, G4large) [9] | CBS extrapolation (HF), core correlation, spin-orbit, HLC [9] | Improved accuracy over G3 [9] | Medium-sized organic molecules (main-group up to Kr) |
| ccCA | MP2/CBS, CCSD(T)/cc-pVTZ [9] | Higher-order correlation, core-valence, scalar relativity, ZPVE [9] | Near chemical accuracy without empirical HLC [9] | ~10 first/second row atoms [9] |
| FPD | CCSD(T)/CBS (using large correlation-consistent basis sets) [9] | Core-valence, scalar relativistic, higher-order correlation [9] | High accuracy (RMS ~0.3 kcal/mol) [9] | ~10 or fewer first/second row atoms [9] |

Benchmarking Studies and Performance Data

Benchmarking Non-Covalent Interactions in Drug-Relevant Systems

Non-covalent interactions (NCIs) are critical determinants of binding affinity in ligand-protein systems, a key area in drug design. The QUID (QUantum Interacting Dimer) benchmark framework was developed to assess the accuracy of quantum mechanical methods for these complex interactions [5]. This framework includes 170 molecular dimers modeling chemically diverse ligand-pocket motifs.

Robust benchmark data were established by achieving an agreement of 0.5 kcal/mol between two fundamentally different "gold standard" methods: Coupled Cluster (LNO-CCSD(T)) and Quantum Monte Carlo (FN-DMC). This tight agreement establishes a "platinum standard" for these systems [5]. The study revealed that several dispersion-inclusive density functional approximations provide accurate energy predictions. However, semi-empirical methods and empirical force fields require significant improvements in capturing NCIs, especially for out-of-equilibrium geometries [5].
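The cross-validation idea can be sketched as a simple check on two independently computed interaction energies. This is an illustration under stated assumptions: the supermolecular definition E_int = E_dimer - E_A - E_B is standard, but averaging the two values to form the reference is a choice made here, not something [5] is known to prescribe, and all energies are hypothetical.

```python
HARTREE_TO_KCAL_MOL = 627.5095


def interaction_energy(e_dimer: float, e_monomer_a: float, e_monomer_b: float) -> float:
    """Supermolecular interaction energy in kcal/mol from total energies in hartree."""
    return (e_dimer - e_monomer_a - e_monomer_b) * HARTREE_TO_KCAL_MOL


def cross_validated_reference(e_int_cc: float, e_int_dmc: float, tolerance: float = 0.5):
    """Return the mean of two reference values if they agree within `tolerance`, else None."""
    if abs(e_int_cc - e_int_dmc) <= tolerance:
        return 0.5 * (e_int_cc + e_int_dmc)
    return None


# Hypothetical total energies (hartree) from two different reference methods.
e_int_cc = interaction_energy(-100.0200, -60.0000, -40.0000)
e_int_dmc = interaction_energy(-100.0205, -60.0000, -40.0000)

reference = cross_validated_reference(e_int_cc, e_int_dmc)
print(f"CC: {e_int_cc:.2f}, DMC: {e_int_dmc:.2f}, reference: {reference}")
```

A dimer for which the two methods disagree by more than the tolerance returns `None` and would need further scrutiny before being used as a reference.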

Benchmarking Spin-State Energetics in Transition Metal Complexes

Accurately predicting the spin-state energetics of transition metal complexes is a formidable challenge with major implications for catalysis and (bio)inorganic chemistry. A 2024 benchmark study (SSE17) derived reference data from experimental measurements on 17 transition metal complexes [7].

The results demonstrated the high accuracy of the CCSD(T) method, which achieved a mean absolute error (MAE) of 1.5 kcal/mol and outperformed all tested multireference methods (CASPT2, MRCI+Q). Regarding DFT, the best-performing functionals were double-hybrids (e.g., PWPB95-D3(BJ), B2PLYP-D3(BJ)) with MAEs below 3 kcal/mol. In contrast, popular hybrid functionals like B3LYP*-D3(BJ) and TPSSh-D3(BJ), often recommended for spin states, performed significantly worse, with MAEs of 5–7 kcal/mol and maximum errors exceeding 10 kcal/mol [7].

Performance for Redox Potential Prediction in Energy Storage

High-throughput computational screening is vital for discovering novel electroactive compounds for organic redox flow batteries. A systematic study evaluated the performance of various methods for predicting the redox potentials of quinone-based molecules [8].

The study found that using low-level theories (e.g., GFN2-xTB or PM7) for geometry optimization, followed by single-point energy (SPE) DFT calculations with an implicit solvation model, offered accuracy comparable to high-level DFT methods at a significantly lower computational cost [8]. For example, the PBE functional, when used with gas-phase optimized geometries and SPE in solution, achieved an RMSE of 0.072 V (R² = 0.954). Notably, performing full geometry optimizations with implicit solvation did not improve accuracy but increased computational cost [8].
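For orientation, the thermodynamic link between a reaction free energy and a redox potential can be written out explicitly. The relations below are the standard textbook form rather than equations quoted from [8]; the symbols n (electrons transferred), F (Faraday constant), E°_ref (absolute potential of the reference electrode), and the fit parameters a and b are notation introduced here.

```latex
\Delta G^{\circ}_{\mathrm{rxn}} = -nF\left(E^{\circ} + E^{\circ}_{\mathrm{ref}}\right)
\quad\Longrightarrow\quad
E^{\circ} = -\frac{\Delta G^{\circ}_{\mathrm{rxn}}}{nF} - E^{\circ}_{\mathrm{ref}},
\qquad
E^{\circ}_{\mathrm{pred}} \approx a\,\Delta E_{\mathrm{rxn}} + b
```

Because the potential is linear in the reaction free energy, a linear calibration against experiment can absorb the constant factors and offsets, which helps explain why the reaction energy alone can serve as a descriptor.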

Experimental Protocols for Key Benchmarks

Protocol: The QUID Benchmark for Ligand-Pocket Interactions

The QUID framework was designed to provide robust benchmarks for non-covalent interactions relevant to drug binding [5].

- System Selection: Nine large, flexible, drug-like molecules (up to ~50 atoms) were selected from the Aquamarine dataset. Two small ligand motifs were chosen: benzene and imidazole [5].

- Dimer Generation: Initial dimer conformations were created by aligning the aromatic ring of the small monomer with a binding site on the large monomer at a distance of 3.55 ± 0.05 Å [5].

- Geometry Optimization: The dimers were optimized at the PBE0+MBD level of theory, resulting in 42 equilibrium dimers classified as 'Linear', 'Semi-Folded', or 'Folded' based on the conformation of the large monomer [5].

- Non-Equilibrium Structures: A subset of 16 equilibrium dimers was used to generate 128 non-equilibrium structures by sampling along the dissociation pathway (8 intermonomer distances) [5].

- Reference Energy Calculation: Highly accurate interaction energies (E_int) were computed using both LNO-CCSD(T) and FN-DMC methods, with agreement to within 0.5 kcal/mol deemed the benchmark "platinum standard" [5].

- Method Assessment: The performance of various DFT functionals, semi-empirical methods, and force fields was evaluated by comparison to this reference data [5].
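The non-equilibrium sampling step can be pictured as a rigid displacement of one monomer along the axis joining the two monomers. The sketch below assumes a centroid-to-centroid axis, no geometry relaxation, and a hypothetical set of eight scale factors; the actual distances and procedure used in [5] may differ, and the coordinates are toy values.

```python
def centroid(coords):
    """Geometric centre of a list of (x, y, z) tuples."""
    n = len(coords)
    return tuple(sum(atom[i] for atom in coords) / n for i in range(3))


def scale_separation(monomer_a, monomer_b, scale):
    """Rigidly shift monomer B so the centroid-centroid distance is multiplied by `scale`."""
    ca, cb = centroid(monomer_a), centroid(monomer_b)
    shift = tuple((scale - 1.0) * (b - a) for a, b in zip(ca, cb))
    return [tuple(x + s for x, s in zip(atom, shift)) for atom in monomer_b]


# Hypothetical scale factors: eight points from compressed to stretched geometries.
SCALE_FACTORS = (0.90, 0.95, 1.00, 1.05, 1.10, 1.25, 1.50, 2.00)

# Toy coordinates in angstrom: a two-atom "pocket" and a one-atom "ligand" 3.55 A above it.
monomer_a = [(0.0, 0.0, 0.0), (1.4, 0.0, 0.0)]
monomer_b = [(0.7, 0.0, 3.55)]

structures = [scale_separation(monomer_a, monomer_b, s) for s in SCALE_FACTORS]
for s, geom in zip(SCALE_FACTORS, structures):
    print(f"scale {s:.2f}: ligand z = {geom[0][2]:.3f} A")
```

Applying eight displacements to each of 16 equilibrium dimers yields the 128 non-equilibrium structures mentioned above.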

Protocol: Performance for Redox Potential Prediction

The computational workflow for evaluating methods for predicting redox potentials of quinones was as follows [8]:

- Initial Structure Generation: The SMILES string of a molecule is converted to a 3D structure, which is optimized using the OPLS3e force field to find the lowest-energy conformer [8].

- Geometry Optimization (Variable Methods): The FF geometry is further optimized in the gas phase using different levels of theory: Semi-Empirical (e.g., GFN2-xTB), DFTB, and DFT. Some methods also perform optimization in an implicit aqueous phase [8].

- Single-Point Energy (SPE) Calculation: The energy of each optimized geometry is recalculated using various DFT functionals (e.g., PBE, B3LYP, M08-HX). This step is performed for both gas-phase and, crucially, with an implicit solvation model (Poisson-Boltzmann PBF) to simulate the aqueous environment [8].

- Descriptor Calculation: The redox potential is predicted using the reaction energy (ΔE_rxn) of the redox reaction as the primary descriptor. The inclusion of zero-point energy and thermal corrections to obtain ΔU_rxn or ΔG°_rxn was found to offer only marginal improvement [8].

- Calibration and Validation: The computed ΔE_rxn values are linearly calibrated against experimentally measured redox potentials. Performance is assessed using metrics like Root Mean Square Error (RMSE) and coefficient of determination (R²) [8].
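The calibration step can be sketched with an ordinary least-squares line fitted to computed reaction energies and measured potentials. The data below are invented for illustration and the function names are hypothetical; R² is computed as 1 - SS_res/SS_tot for the fitted line.

```python
import math


def linear_fit(x, y):
    """Ordinary least-squares slope and intercept for y = slope * x + intercept."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxx = sum((xi - mean_x) ** 2 for xi in x)
    sxy = sum((xi - mean_x) * (yi - mean_y) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def fit_quality(x, y, slope, intercept):
    """RMSE and coefficient of determination of the calibrated predictions."""
    pred = [slope * xi + intercept for xi in x]
    mean_y = sum(y) / len(y)
    ss_res = sum((yi - pi) ** 2 for yi, pi in zip(y, pred))
    ss_tot = sum((yi - mean_y) ** 2 for yi in y)
    return math.sqrt(ss_res / len(y)), 1.0 - ss_res / ss_tot


# Hypothetical computed reaction energies (eV) and measured redox potentials (V).
delta_e_rxn = [-1.0, -2.0, -3.0, -4.0]
e_exp = [0.10, 0.32, 0.48, 0.70]

slope, intercept = linear_fit(delta_e_rxn, e_exp)
rmse_v, r2 = fit_quality(delta_e_rxn, e_exp, slope, intercept)
print(f"E_pred = {slope:.3f} * dE_rxn + {intercept:.3f}; RMSE = {rmse_v:.3f} V, R2 = {r2:.3f}")
```

In practice the fit should be validated on molecules that were not used for calibration, since the RMSE on the calibration set alone is optimistic.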

Visualizing the Quantum Chemical Workflow

The following diagram illustrates a generalized computational workflow for a quantum chemical benchmarking study, integrating elements from the protocols described above.

Diagram 1: General QM Benchmarking Workflow

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Computational Tools and Resources for Quantum Chemical Benchmarking

| Tool/Resource | Type | Primary Function in Benchmarking | Example/Reference |
|---|---|---|---|
| Coupled Cluster with Single, Double, and Perturbative Triple Excitations (CCSD(T)) | Wave-Function Method | Provides "gold standard" reference energies for systems where it is computationally feasible [5] [7]. | LNO-CCSD(T) in QUID benchmark [5]. |
| Quantum Monte Carlo (QMC) | Stochastic Wave-Function Method | Provides high-accuracy benchmark energies via an approach fundamentally different from CC, used for validation [5]. | FN-DMC in QUID benchmark [5]. |
| Density Functional Theory (DFT) | Electronic Structure Method | Workhorse method for geometry optimizations and property calculations; performance is benchmarked against higher-level methods [8]. | PBE, B3LYP, M08-HX for redox potentials [8]. |
| Symmetry-Adapted Perturbation Theory (SAPT) | Energy Decomposition Method | Decomposes interaction energies into physical components (electrostatics, exchange, induction, dispersion), aiding interpretation of NCIs [5]. | Used to analyze coverage of non-covalent motifs in QUID [5]. |
| Implicit Solvation Models | Computational Solvation Method | Approximates the effect of a solvent (e.g., water) on molecular structure and energy, crucial for predicting solution-phase properties [8]. | Poisson-Boltzmann PBF model in redox potential studies [8]. |
| Benchmark Datasets | Curated Data | Provides reliable reference data (theoretical or experimental) for validating quantum chemical methods. | QUID [5], SSE17 [7], GMTKN30 [6]. |

Accurate computational prediction of molecular properties is a cornerstone of modern chemical research, with profound implications for drug discovery and materials design. The central challenge lies in validating theoretical quantum chemistry methods against reliable reference data. This guide examines two dominant benchmarking paradigms: one that relies on cross-validation against other, higher-level theoretical methods (the "theory" standard) and another that uses data derived from experimental measurements (the "experimental" standard) [5] [7]. Each approach offers distinct advantages and limitations, influencing how researchers assess method accuracy for critical applications like predicting protein-ligand binding affinities in pharmaceutical development [5] or spin-state energetics in transition metal catalysis [7].

Theoretical Reference Benchmarks

Methodology and Common Approaches

Theoretical benchmarks establish reference data by employing quantum chemical methods considered nearly exact for the system under study. The "gold standard" is typically the coupled-cluster with single, double, and perturbative triple excitations (CCSD(T)) method, especially when extrapolated to the complete basis set (CBS) limit [5] [7]. Other high-level methods like quantum Monte Carlo (QMC) are also used to provide robust reference points [5]. The process involves:

- System Selection: Choosing a set of molecules or complexes representative of the chemical space of interest.

- High-Level Calculation: Computing target properties (e.g., interaction energies, reaction barriers) using high-accuracy methods like CCSD(T)/CBS or FN-DMC.

- Benchmarking: Evaluating the performance of more approximate methods (e.g., Density Functional Theory or force fields) by comparing their results against the theoretical reference data.

Benchmark sets such as S22, S66, and the newer QUID (QUantum Interacting Dimer) framework are built using this paradigm, providing reference interaction energies for non-covalent complexes [5].

Case Study: The QUID Framework

The QUID benchmark exemplifies the modern theoretical benchmark. It contains 170 molecular dimers modeling ligand-pocket interactions, with systems of up to 64 atoms [5]. Its "platinum standard" is established by achieving tight agreement (within 0.5 kcal/mol) between two completely different "gold standard" methods: LNO-CCSD(T) and FN-DMC [5]. This cross-validation significantly reduces uncertainty in the reference data. The workflow for creating and using such a benchmark is systematic, as shown in the diagram below.

Diagram: Workflow for creating and using a theoretical quantum chemistry benchmark.

Performance Data: Theoretical Benchmarks

Table 1: Performance of various quantum chemistry methods on the theoretical QUID benchmark for non-covalent interactions [5], shown alongside the experimentally derived SSE17 benchmark for spin-state energetics [7] for comparison. MAE = Mean Absolute Error.

| Method Category | Specific Method | Benchmark Set | Key Metric | Performance |
|---|---|---|---|---|
| Coupled Cluster | CCSD(T)/CBS | QUID (NCI Energetics) | Agreement with FN-DMC | ~0.5 kcal/mol [5] |
| Coupled Cluster | CCSD(T) | SSE17 (Spin-State Energetics) | MAE vs. Experimental Ref. | 1.5 kcal/mol [7] |
| Double-Hybrid DFT | PWPB95-D3(BJ) | SSE17 (Spin-State Energetics) | MAE vs. Experimental Ref. | <3 kcal/mol [7] |
| Popular Hybrid DFT | B3LYP*-D3(BJ) | SSE17 (Spin-State Energetics) | MAE vs. Experimental Ref. | 5-7 kcal/mol [7] |

Experimental Reference Benchmarks

Methodology and Derivation from Experiment

This paradigm derives its reference data directly from experimental measurements, such as spin-crossover enthalpies, energies of spin-forbidden absorption bands, or vibrationally-corrected formation energies [7]. The process is often more complex:

- Data Curation: Collecting reliable, well-contextualized experimental data from the literature.

- Back-Correction: Critically processing raw experimental data to isolate the electronic energy component of interest. This often involves correcting for vibrational energies, environmental effects (e.g., solvation), and other factors to derive a "theoretical" value comparable to quantum chemistry calculations [7].

- Benchmarking: Testing computational methods against this experimentally-derived reference data.
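The back-correction step can be sketched as subtracting the non-electronic contributions from the measured quantity. The sign convention (corrections defined as the contribution they make to the experimental value), the function name, and every number below are assumptions for illustration; [7] should be consulted for the actual procedure.

```python
def back_corrected_reference(exp_value: float, vib_correction: float, env_correction: float) -> float:
    """Electronic energy difference obtained by removing vibrational and environmental terms.

    All quantities share one unit (kcal/mol here). Each correction is taken to be the
    amount that effect contributes to the experimental value.
    """
    return exp_value - vib_correction - env_correction


# Hypothetical spin-crossover example in kcal/mol.
reference = back_corrected_reference(exp_value=4.0, vib_correction=-1.2, env_correction=0.5)

# Hypothetical computed electronic spin-state splittings from two methods.
computed = {"method_A": 5.5, "method_B": 9.9}
errors = {name: value - reference for name, value in computed.items()}

print(f"reference = {reference:.2f} kcal/mol")
for name, err in errors.items():
    print(f"{name}: signed error = {err:+.2f} kcal/mol")
```

Since the corrections are themselves computed or estimated, their uncertainty carries over into the reference value and should be reported with it.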

This approach directly answers the question: "Can this method predict what we actually measure in the lab?"

Case Study: The SSE17 Benchmark Set

The SSE17 benchmark is a prime example of using experimental references. It provides spin-state energetics for 17 first-row transition metal complexes (Fe, Co, Mn, Ni) derived from experimental spin-crossover enthalpies or absorption band energies [7]. The key methodological step is the careful back-correction of the experimental data to remove vibrational and environmental contributions, leaving a robust reference value for the purely electronic spin-state energy splitting. The diagram below outlines this crucial process.

Diagram: Workflow for creating a benchmark from experimental data, highlighting the critical back-correction steps.

Performance Data: Experimental Benchmarks

Table 2: Performance of quantum chemistry methods on the experimentally-derived SSE17 benchmark for spin-state energetics. MAE = Mean Absolute Error vs. experimental reference [7].

| Method Category | Specific Method | Performance (MAE) | Key Insight |
|---|---|---|---|
| Coupled Cluster | CCSD(T) | 1.5 kcal/mol | Outperformed all tested multireference methods [7] |
| Double-Hybrid DFT | PWPB95-D3(BJ), B2PLYP-D3(BJ) | <3 kcal/mol | Best performing DFT functionals [7] |
| Popular Hybrid DFT | B3LYP*-D3(BJ), TPSSh-D3(BJ) | 5-7 kcal/mol | Previously recommended, but show larger errors [7] |
| Multireference | CASPT2, MRCI+Q | >1.5 kcal/mol | Underperformed versus CCSD(T) in this study [7] |

Comparative Analysis: Strengths and Limitations

Direct Comparison of Paradigms

Table 3: Comparative analysis of the two primary benchmarking paradigms in quantum chemistry.

| Aspect | Theoretical Reference Paradigm | Experimental Reference Paradigm |
|---|---|---|
| Reference Data Source | High-level ab initio theory (e.g., CCSD(T), QMC) [5] | Curated and back-corrected experimental measurements [7] |
| Primary Strength | Provides a precise, well-defined target for electronic energy; vast dataset generation possible [10] | Directly tests real-world predictive power; ultimate validation [7] |
| Key Limitation | Inherits systematic errors of the reference method; may not reflect experimental reality [5] | Scarce for large/complex systems; back-correction introduces uncertainty [5] [7] |
| Ideal Use Case | Rapid screening and development of new methods; studying systems with no experimental data | Final validation before application to experimental prediction; drug candidate scoring [5] |
| Data Volume | Can generate 100M+ data points (e.g., OMol25) [10] | Typically smaller, focused sets (e.g., 17 complexes in SSE17) [7] |

Successful benchmarking requires both computational tools and curated data resources. The following table details key solutions for researchers in this field.

Table 4: Essential "Research Reagent Solutions" for Quantum Chemistry Benchmarking.

| Tool/Resource Name | Type | Primary Function | Relevance to Benchmarking |

|---|---|---|---|

| QUID Dataset [5] | Benchmark Data | Provides "platinum standard" interaction energies for ligand-pocket model systems. | Validates methods on non-covalent interactions critical to drug binding. |

| SSE17 Dataset [7] | Benchmark Data | Provides experimental-derived spin-state energetics for transition metal complexes. | Tests method accuracy for challenging open-shell systems in catalysis. |

| JARVIS-Leaderboard [11] | Platform | An integrated platform for benchmarking AI, electronic structure, and force-field methods. | Allows centralized comparison of method performance across diverse tasks and data. |

| LNO-CCSD(T) [5] | Software Method | A highly accurate coupled cluster method for large molecules. | Used to generate reliable theoretical reference data for complex systems. |

| FN-DMC [5] | Software Method | A Quantum Monte Carlo method for high-accuracy electronic structure. | Provides an independent theoretical reference to validate other high-level methods. |

| ORCA/Q-Chem [10] | Software Suite | Comprehensive quantum chemistry packages for DFT and wavefunction calculations. | Workhorse tools for running calculations on benchmark sets. |

The rigorous benchmarking of quantum chemistry methods remains indispensable for progress in computational drug discovery and materials science. Both theoretical and experimental reference paradigms are essential, serving complementary roles. The theoretical paradigm enables the large-scale data generation needed for modern AI training [10], while the experimental paradigm provides the crucial, final reality check [7]. The emergence of "platinum standards" from method agreement [5] and large-scale, integrated platforms like JARVIS-Leaderboard [11] points toward a future of more robust, reproducible, and trustworthy computational chemistry. For researchers, the optimal strategy involves using theoretical benchmarks for method development and initial screening, followed by final validation on the more scarce, but ultimately definitive, experimental benchmarks.

The predictive power of computational chemistry methods is foundational to modern scientific discovery, influencing fields from drug design to materials science. The accuracy of these methods, however, is not inherent; it must be rigorously validated against reliable reference data. This necessity has catalyzed the development of specialized benchmark datasets that serve as trusted rulers for measuring the performance of quantum chemistry approaches. Early benchmarking efforts were hampered by a scarcity of high-quality reference data, often leading to method validation on limited or non-representative chemical systems. The field has since evolved through several generations of increasingly sophisticated benchmarks, from pioneering sets like S22 to comprehensive collections such as GMTKN55, and more recently, to highly specialized and expansive datasets designed to probe specific chemical domains or leverage machine learning. These datasets provide the essential foundation for assessing whether computational methods produce physically sound results that researchers can confidently use in scientific investigations. This guide examines the key benchmark datasets that define the state-of-the-art, providing researchers with the knowledge to select appropriate validation tools for their specific applications.

The Evolution of Benchmark Datasets

The development of benchmark datasets in quantum chemistry reflects a continuous effort to address more complex chemical problems with greater accuracy and broader chemical diversity, with each generation of datasets building on its predecessors to cover increasingly sophisticated challenges.

This evolution demonstrates a clear trajectory from small, focused datasets to large-scale resources that enable both rigorous validation and machine learning model training. The community's understanding of what constitutes a robust benchmark has significantly matured, with modern datasets emphasizing not only size but also chemical diversity, balanced representation of interaction types, and rigorous curation to eliminate problematic reference data.

Comparative Analysis of Major Benchmark Datasets

Table 1: Key Characteristics of Major Benchmark Datasets

| Dataset | Primary Focus | Size (# data points) | Level of Theory | Key Strengths | Primary Applications |

|---|---|---|---|---|---|

| S66 [12] [13] | Non-covalent interactions | 66 equilibrium complexes (528 points in the S66x8 extension) | CCSD(T)/CBS | Well-balanced representation of dispersion & electrostatic contributions | Biomolecular interaction accuracy; Force field validation |

| S66x8 [12] [13] | Non-covalent interaction potential energy surfaces | 528 (8 points × 66 complexes) | CCSD(T)/CBS | Systematic exploration of dissociation curves; non-equilibrium geometries | Testing functional behavior beyond equilibrium |

| GMTKN55 [14] | General main-group chemistry | >1500 across 55 subsets | Mixed (curated CCSD(T)/CBS) | Extremely broad coverage; diverse chemical properties | Comprehensive functional evaluation; Method development |

| GSCDB138 [14] | Comprehensive functional validation | 8,383 across 138 subsets | Gold-standard CCSD(T) | Rigorous curation; updated values; property-focused sets | Stringent DFA validation; ML functional training |

| QUID [5] | Ligand-pocket interactions | 170 dimers (42 equilibrium + 128 non-equilibrium) | CCSD(T) & Quantum Monte Carlo | "Platinum standard" agreement between CC & QMC; biologically relevant | Drug design; protein-ligand binding affinity prediction |

| SSE17 [7] | Transition metal spin-state energetics | 17 transition metal complexes | Experimental-derived | Experimental reference data; diverse metals & ligands | Computational catalysis; (bio)inorganic chemistry |

| OMol25 [15] [1] | Broad ML training | >100 million molecular snapshots | ωB97M-V/def2-TZVPD | Unprecedented size & diversity; includes biomolecules & electrolytes | Training ML interatomic potentials; Materials discovery |

| QCML [16] | ML training foundation | 33.5M DFT + 14.7B semi-empirical calculations | DFT & semi-empirical | Systematic chemical space coverage; hierarchical organization | Training universal quantum chemistry ML models |

Table 2: Chemical Domain Coverage Across Benchmark Datasets

| Dataset | Non-covalent Interactions | Reaction Energies | Barrier Heights | Transition Metals | Biomolecular Systems | Molecular Properties |

|---|---|---|---|---|---|---|

| S66 | Extensive | Limited | No | No | Indirect | No |

| GMTKN55 | Comprehensive | Extensive | Extensive | Limited | Limited | Limited |

| GSCDB138 | Comprehensive | Extensive | Extensive | Good | Limited | Extensive |

| QUID | Specialized | No | No | No | Extensive (ligand-pocket) | Limited |

| SSE17 | No | No | No | Exclusive (spin states) | Indirect | No |

| OMol25 | Extensive | Indirect | Indirect | Good | Extensive | Indirect |

| QCML | Extensive | Extensive | Indirect | Limited | Limited | Extensive |

Detailed Dataset Profiles and Experimental Protocols

S66 & S66x8: The Non-covalent Interaction Standards

The S66 dataset and its extension S66x8 were specifically designed to address limitations in earlier non-covalent interaction (NCI) benchmarks like S22. While S22 heavily favored nucleic acid-like structures, S66 provides a more balanced representation of interaction motifs relevant to biomolecules, with careful attention to ensuring comparable representation of dispersion and electrostatic contributions [13]. The dataset comprises 66 molecular complexes at their equilibrium geometries, covering hydrogen-bonded, dispersion-dominated, and mixed-character complexes. The experimental protocol for generating reference values employs an estimated CCSD(T)/CBS (coupled cluster with single, double, and perturbative triple excitations at the complete basis set limit) approach, which combines extrapolated MP2/CBS results with CCSD(T) corrections calculated using smaller basis sets [13]. This protocol achieves an accuracy sufficient for benchmarking while maintaining computational feasibility for medium-sized complexes.
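The composite scheme described above can be sketched in a few lines. This is an illustration under stated assumptions, not the S66 authors' exact recipe: the two-point X⁻³ extrapolation of the correlation energy is one common choice, and the function names, argument names, and numerical inputs are hypothetical.

```python
def cbs_two_point(e_corr_x, e_corr_y, x, y):
    """Two-point X^-3 extrapolation of correlation energies.

    x and y are basis-set cardinal numbers (e.g. 3 for triple-zeta,
    4 for quadruple-zeta) with x < y. Assumes E(X) = E_CBS + A * X**-3.
    """
    return (y**3 * e_corr_y - x**3 * e_corr_x) / (y**3 - x**3)


def estimated_ccsdt_cbs(e_hf_large, e_mp2_corr_x, e_mp2_corr_y, x, y,
                        e_ccsdt_small, e_mp2_small):
    """Estimated CCSD(T)/CBS = MP2/CBS + [CCSD(T) - MP2] in a small basis."""
    mp2_cbs = e_hf_large + cbs_two_point(e_mp2_corr_x, e_mp2_corr_y, x, y)
    delta_ccsdt = e_ccsdt_small - e_mp2_small   # higher-order correction
    return mp2_cbs + delta_ccsdt


# Hypothetical energies in hartree, constructed to follow the X^-3 model.
e_cbs_corr = cbs_two_point(-0.3 + 0.1 / 27, -0.3 + 0.1 / 64, 3, 4)
total = estimated_ccsdt_cbs(-100.0, -0.3 + 0.1 / 27, -0.3 + 0.1 / 64, 3, 4,
                            e_ccsdt_small=-100.285, e_mp2_small=-100.280)
print(f"extrapolated correlation energy: {e_cbs_corr:.6f} Eh")
print(f"estimated CCSD(T)/CBS total:     {total:.6f} Eh")
```

The design rests on the observation that the CCSD(T) minus MP2 difference converges with basis-set size much faster than either energy alone, so it can be evaluated in a smaller basis.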

The S66x8 extension systematically explores dissociation curves for each of the 66 complexes at 8 geometrically defined points (0.90, 0.95, 1.00, 1.05, 1.10, 1.25, 1.50, and 2.00 times the equilibrium separation), providing 528 total data points that capture both equilibrium and non-equilibrium interactions [12] [13]. This design enables researchers to assess how methods perform across the potential energy surface, not just at minimum-energy configurations. The dataset has been instrumental in demonstrating the importance of dispersion corrections in density functional theory and validating the accuracy of double-hybrid functionals for NCIs [12].
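To make the idea of scaling the equilibrium separation concrete, the following sketch rigidly translates one monomer so that the distance between the two monomer centroids is multiplied by a chosen factor. The centroid-to-centroid axis is a simplifying assumption made here for illustration; the published dataset defines the dissociation coordinate per complex. The helper names and coordinates are hypothetical.

```python
def centroid(coords):
    """Geometric centre of a list of (x, y, z) tuples."""
    n = len(coords)
    return tuple(sum(atom[i] for atom in coords) / n for i in range(3))


def scale_separation(coords_a, coords_b, factor):
    """Rigidly shift monomer B so the centroid distance becomes factor * original."""
    ca, cb = centroid(coords_a), centroid(coords_b)
    shift = tuple((factor - 1.0) * (cb[i] - ca[i]) for i in range(3))
    return [tuple(atom[i] + shift[i] for i in range(3)) for atom in coords_b]


# Hypothetical two-fragment geometry in angstrom (not a real S66 complex).
monomer_a = [(0.0, 0.0, 0.0)]
monomer_b = [(0.0, 0.0, 3.0), (0.0, 0.0, 4.0)]

for factor in (0.90, 1.00, 2.00):
    shifted = scale_separation(monomer_a, monomer_b, factor)
    print(factor, round(centroid(shifted)[2], 3))
```

Internal monomer geometries are left untouched, so each point on the curve probes only the intermolecular interaction.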

GMTKN55 and GSCDB138: Comprehensive Functional Evaluation

The GMTKN55 database represents a significant scaling of benchmark scope, integrating 55 separate datasets with over 1500 individual data points covering general main-group thermochemistry, kinetics, and noncovalent interactions [14]. Its "superdatabase" approach allows for comprehensive functional evaluation across diverse chemical domains, helping to identify whether improved performance in one area comes at the expense of accuracy in another. The experimental protocol for GMTKN55 incorporates reference values from multiple high-level theoretical sources, primarily CCSD(T)/CBS calculations, though the specific level of theory varies across subdatasets.

GSCDB138 (Gold-Standard Chemical Database 138) is a recently introduced benchmark that advances beyond GMTKN55 through rigorous curation and expanded coverage [14]. It contains 138 datasets with 8,383 individual data points requiring 14,013 single-point energy calculations. The experimental protocol emphasizes "gold-standard" accuracy through several key steps: updating legacy data from GMTKN55 and MGCDB84 to contemporary best reference values, removing redundant or spin-contaminated data points, adding new property-focused sets (including dipole moments, polarizabilities, electric-field response energies, and vibrational frequencies), and significantly expanding transition metal data from realistic organometallic reactions [14]. This meticulous approach aims to provide a more reliable platform for functional validation and development.

QUID: Benchmarking Ligand-Pocket Interactions

The QUID (QUantum Interacting Dimer) benchmark framework addresses a critical gap in evaluating methods for biological systems, particularly ligand-protein interactions [5]. It contains 170 non-covalent dimers (42 equilibrium and 128 non-equilibrium) modeling chemically and structurally diverse ligand-pocket motifs with up to 64 atoms. The experimental protocol establishes what the authors term a "platinum standard" through exceptional agreement between two fundamentally different high-level methods: LNO-CCSD(T) (local natural orbital coupled cluster) and FN-DMC (fixed-node diffusion Monte Carlo) [5]. This convergence significantly reduces uncertainty in reference values for larger systems.

The dataset generation protocol involves: (1) selecting nine flexible chain-like drug molecules from the Aquamarine dataset as large monomers representing pockets; (2) probing these with benzene and imidazole as small monomer ligands; (3) optimizing dimer geometries at the PBE0+MBD level; (4) classifying resulting dimers as Linear, Semi-Folded, or Folded based on pocket geometry; and (5) generating non-equilibrium conformations along dissociation pathways for a representative subset [5]. This systematic approach produces interaction energies ranging from -24.3 to -5.5 kcal/mol, effectively capturing the energetics relevant to drug binding.
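The interaction energies quoted above follow the usual supermolecular definition: the energy of the dimer minus the energies of its two monomers. A minimal sketch, assuming all three energies are computed at the same level of theory with the monomers in their dimer geometries (the input values are hypothetical, and corrections such as counterpoise are not shown):

```python
HARTREE_TO_KCAL_PER_MOL = 627.509474  # standard conversion factor


def interaction_energy(e_dimer, e_monomer_a, e_monomer_b):
    """Supermolecular interaction energy in kcal/mol from energies in hartree."""
    return (e_dimer - e_monomer_a - e_monomer_b) * HARTREE_TO_KCAL_PER_MOL


# Hypothetical total energies in hartree (not QUID data).
e_int = interaction_energy(-500.020, -300.000, -200.000)
print(f"E_int = {e_int:.2f} kcal/mol")
```

A negative value indicates net attraction, consistent with the sign convention of the range reported for the QUID equilibrium dimers.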

SSE17: Transition Metal Spin-State Energetics

The SSE17 benchmark addresses the particularly challenging problem of predicting spin-state energetics for transition metal complexes, which has enormous implications for modeling catalytic mechanisms and computational materials discovery [7]. Unlike other benchmarks that rely on theoretical reference data, SSE17 derives its reference values from experimental measurements, including spin crossover enthalpies and energies of spin-forbidden absorption bands, carefully back-corrected for vibrational and environmental effects [7]. The experimental protocol involves 17 first-row transition metal complexes (Fe(II), Fe(III), Co(II), Co(III), Mn(II), and Ni(II)) with chemically diverse ligands, providing adiabatic or vertical spin-state splittings for method benchmarking.

This experimental foundation makes SSE17 particularly valuable, as it avoids potential uncertainties in theoretical reference methods for these challenging electronic structures. Benchmarking results using SSE17 have revealed that double-hybrid functionals (PWPB95-D3(BJ), B2PLYP-D3(BJ)) outperform the typically recommended DFT methods for spin states, with mean absolute errors below 3 kcal/mol compared to 5-7 kcal/mol for popular functionals like B3LYP*-D3(BJ) [7].

OMol25 and QCML: Datasets for Machine Learning

OMol25 (Open Molecules 2025) represents a paradigm shift in dataset scale, comprising over 100 million 3D molecular snapshots with properties calculated at the ωB97M-V/def2-TZVPD level of theory [15] [1]. The experimental protocol consumed approximately 6 billion CPU hours (over ten times more than previous datasets) to generate configurations with up to 350 atoms from across most of the periodic table, including challenging heavy elements and metals [15]. The dataset specifically focuses on biomolecules, electrolytes, and metal complexes, incorporating and recalculating existing community datasets at a consistent level of theory [1]. This resource enables training of machine learning interatomic potentials (MLIPs) that can achieve DFT-level accuracy at speeds approximately 10,000 times faster, making previously impossible simulations of scientifically relevant systems feasible [15].

The QCML dataset takes a complementary approach, systematically covering chemical space with small molecules (up to 8 heavy atoms) but generating an enormous volume of calculations: 33.5 million DFT and 14.7 billion semi-empirical entries [16]. The experimental protocol uses a hierarchical organization: chemical graphs sourced from existing databases and systematically generated; conformer search and normal mode sampling to generate both equilibrium and off-equilibrium 3D structures; and property calculation including energies, forces, multipole moments, and matrix quantities like Kohn-Sham matrices [16]. This systematic coverage of local bonding patterns enables trained ML models to extrapolate to larger structures.

Table 3: Key Research Reagent Solutions for Quantum Chemistry Benchmarking

| Resource | Type | Primary Function | Application Context |

|---|---|---|---|

| S66/S66x8 | Benchmark dataset | Validate NCI accuracy | Biomolecular simulations; Force field development |

| GMTKN55/GSCDB138 | Benchmark database | Comprehensive functional evaluation | Method selection; Functional development |

| QUID | Specialized benchmark | Assess ligand-pocket interaction accuracy | Drug design; Protein-ligand binding studies |

| SSE17 | Experimental-derived benchmark | Validate spin-state energetics | Computational catalysis; Inorganic chemistry |

| OMol25 | ML training dataset | Train neural network potentials | Large-scale atomistic simulations; Materials discovery |

| QCML | ML training dataset | Train universal quantum chemistry models | Foundation model development; Chemical space exploration |

| CCSD(T)/CBS | Computational method | Generate gold-standard reference data | Benchmark creation; High-accuracy calculations |

| ωB97M-V/def2-TZVPD | Density functional | Generate high-quality training data | ML dataset creation; Accurate property prediction |

| DFT-D3 | Dispersion correction | Account for van der Waals interactions | Improved NCI prediction across functionals |

The landscape of quantum chemistry benchmarking has evolved from fragmented, limited validation efforts to sophisticated, comprehensive resources that enable rigorous method evaluation across virtually all chemical domains of interest. Established datasets like S66 and GMTKN55 continue to provide valuable service for specific validation needs, while next-generation resources like GSCDB138 offer improved curation and expanded property coverage. Simultaneously, highly specialized benchmarks like QUID for ligand-pocket interactions and SSE17 for transition metal spin states address critical application areas where accurate prediction remains challenging.

A significant trend emerges toward massive-scale datasets like OMol25 and QCML, which serve dual purposes of enabling machine learning potential development while providing extensive validation opportunities. As computational chemistry increasingly integrates machine learning approaches, the distinction between benchmarks for traditional method validation and datasets for model training continues to blur. Future benchmarking efforts will likely place greater emphasis on uncertainty quantification, automated curation processes, and coverage of increasingly complex chemical phenomena, such as reactive processes in condensed phases and excited-state dynamics. By understanding the strengths and limitations of these essential benchmarking resources, researchers can make informed decisions about method selection and validation, ultimately increasing the reliability and predictive power of computational chemistry across scientific disciplines.

Modern Benchmarking Frameworks and Their Scientific Applications

The advancement of quantum computing and its application to scientific fields like chemistry and materials science necessitates robust methods to evaluate performance and accuracy. Within this context, specialized benchmarking toolkits are critical for assessing whether emerging computational methods genuinely capture domain-specific knowledge and provide reliable results. This guide examines two distinct toolkits, BenchQC and QuantumBench, which address different aspects of this challenge. BenchQC provides an application-centric framework for benchmarking the performance of quantum algorithms on computational chemistry problems, specifically evaluating how algorithm parameters impact the accuracy of physical property calculations [17] [18] [19]. In contrast, QuantumBench serves as an evaluation dataset for assessing the conceptual understanding and reasoning capabilities of large language models (LLMs) within the quantum domain [20] [21]. This comparison will detail their methodologies, experimental protocols, and performance data, providing researchers with a clear understanding of their respective roles in validating tools for quantum-enhanced discovery.

The following table summarizes the core attributes and applications of BenchQC and QuantumBench, highlighting their distinct focuses within quantum science benchmarking.

Table 1: Fundamental Characteristics of BenchQC and QuantumBench

| Feature | BenchQC | QuantumBench |

|---|---|---|

| Primary Purpose | Benchmarking quantum algorithm performance for computational chemistry [22] [18] | Evaluating LLM understanding of quantum science concepts [20] |

| Core Function | Application-centric performance assessment [17] | Knowledge and reasoning evaluation [20] |

| Target Technology | Variational Quantum Eigensolver (VQE) and quantum simulators/hardware [18] [2] | Large Language Models (LLMs) [20] |

| Domain of Study | Quantum chemistry, materials science [19] [23] | Quantum mechanics, computation, field theory, and related subfields [20] |

| Key Deliverable | Energy estimation accuracy and parameter optimization guidance [22] | Model performance scores across quantum subdomains [20] |

Experimental Protocols and Methodologies

BenchQC: Benchmarking Quantum Chemistry Workflows

The BenchQC methodology employs a structured workflow to evaluate the Variational Quantum Eigensolver (VQE) within a quantum-DFT embedding framework. This workflow systematically assesses how different parameters affect the accuracy of ground-state energy calculations for molecular systems [18] [2].

Table 2: Key Research Reagents in the BenchQC Workflow

| Reagent / Tool | Function in the Protocol | Source/Implementation |

|---|---|---|

| Aluminum Clusters (Al⁻, Al₂, Al₃⁻) | Well-characterized model systems for benchmarking [18] | CCCBDB, JARVIS-DFT [18] [2] |

| Quantum-DFT Embedding | Hybrid approach: DFT for core electrons, quantum computation for valence electrons [18] | Qiskit, PySCF [18] [2] |

| Active Space Transformer | Selects the crucial orbitals for quantum computation, ensuring efficiency [2] | Qiskit Nature [18] [2] |

| Parameterized Quantum Circuit (Ansatz) | Forms the trial wavefunction for the VQE algorithm [18] | EfficientSU2 circuit in Qiskit [18] [2] |

| Classical Optimizers | Minimizes the energy calculated by the quantum circuit [22] [18] | SLSQP, COBYLA, L-BFGS-B, etc. [22] |

| Noise Models | Simulates the effect of imperfect quantum hardware [18] [2] | IBM device noise models [18] |

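In outline, the BenchQC workflow retrieves a reference structure (CCCBDB, JARVIS-DFT), runs a DFT calculation, selects an active space for the quantum computation, and solves for the ground-state energy with VQE using a parameterized ansatz and a classical optimizer, optionally under a device noise model. The resulting energy is then compared against classical benchmarks.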
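The variational loop at the heart of this workflow can be illustrated without any quantum SDK. The sketch below is a toy, not BenchQC's implementation: it uses a hypothetical one-qubit Hamiltonian H = aZ + bX, a single-parameter Ry ansatz in place of EfficientSU2, and plain gradient descent with the parameter-shift rule standing in for optimizers such as SLSQP or COBYLA. The exact ground-state energy, -sqrt(a² + b²), plays the role of the classical benchmark.

```python
import math


def energy(theta, a, b):
    """Expectation value of a*Z + b*X in the state Ry(theta)|0>."""
    c, s = math.cos(theta / 2), math.sin(theta / 2)  # amplitudes of |0>, |1>
    exp_z = c * c - s * s
    exp_x = 2 * c * s
    return a * exp_z + b * exp_x


def parameter_shift_gradient(theta, a, b):
    """Exact gradient for a single Ry rotation via the parameter-shift rule."""
    plus = energy(theta + math.pi / 2, a, b)
    minus = energy(theta - math.pi / 2, a, b)
    return 0.5 * (plus - minus)


def toy_vqe(a, b, theta0=0.1, learning_rate=0.4, steps=200):
    """Minimise the energy over theta with plain gradient descent."""
    theta = theta0
    for _ in range(steps):
        theta -= learning_rate * parameter_shift_gradient(theta, a, b)
    return theta, energy(theta, a, b)


a, b = 1.0, 0.5                      # hypothetical Hamiltonian coefficients
exact = -math.hypot(a, b)            # lowest eigenvalue of a*Z + b*X
theta_opt, e_vqe = toy_vqe(a, b)
print(f"VQE energy   = {e_vqe:.8f}")
print(f"exact energy = {exact:.8f}")
print(f"abs. error   = {abs(e_vqe - exact):.2e}")
```

In a real study the energy evaluation would run on a simulator or device, which is where ansatz choice, optimizer, basis set, and noise model each start to affect the final error.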

QuantumBench: Evaluating Large Language Models

QuantumBench was constructed to systematically probe the understanding of quantum science by LLMs. Its methodology focuses on curating a high-quality, human-authored dataset from authoritative educational sources [20].

Table 3: Key Research Reagents in QuantumBench

| Reagent / Tool | Function in the Protocol | Source/Implementation |

|---|---|---|

| Source Materials | Provides expert-authored questions and answers for the benchmark [20] | MIT OCW, TU Delft OCW, LibreTexts [20] |

| Question-Answer Pairs | The fundamental unit for testing knowledge and reasoning [20] | 769 undergraduate-level problems [20] |

| Multiple-Choice Format | Enables scalable and consistent evaluation of LLMs [20] | 8 options per question (1 correct, 7 plausible distractors) [20] |

| Subfield Categorization | Allows for granular analysis of model performance across topics [20] | 9 categories, e.g., Quantum Mechanics, Quantum Computation [20] |

| Problem Type Tags | Facilitates analysis of reasoning type required [20] | Algebraic Calculation, Numerical Calculation, Conceptual Understanding [20] |

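In outline, problems are collected from the source materials, cast as eight-option multiple-choice questions, and tagged by subfield and problem type; LLM answers are then scored against the correct option.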
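Scoring a multiple-choice benchmark of this kind reduces to per-category accuracy compared against the random-guess baseline, which is 1/8 for eight options. A minimal sketch, in which the record layout and the sample answers are hypothetical rather than taken from QuantumBench:

```python
from collections import defaultdict

N_OPTIONS = 8                      # options per question, as stated in the text
RANDOM_BASELINE = 1 / N_OPTIONS    # expected accuracy of uniform guessing


def accuracy_by_subfield(records):
    """records: iterable of (subfield, predicted_option, correct_option)."""
    hits = defaultdict(int)
    totals = defaultdict(int)
    for subfield, predicted, correct in records:
        totals[subfield] += 1
        hits[subfield] += int(predicted == correct)
    return {name: hits[name] / totals[name] for name in totals}


# Hypothetical model answers (not real evaluation results).
records = [
    ("Quantum Mechanics", "A", "A"),
    ("Quantum Mechanics", "C", "B"),
    ("Quantum Chemistry", "H", "H"),
    ("Quantum Chemistry", "D", "D"),
]

scores = accuracy_by_subfield(records)
for name, acc in scores.items():
    print(f"{name}: {acc:.1%} (random baseline {RANDOM_BASELINE:.1%})")
```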

Performance and Results Analysis

BenchQC Quantitative Performance Data

BenchQC benchmarking studies provide quantitative data on the impact of various parameters on VQE performance. The following tables consolidate key experimental findings from assessments on aluminum clusters.

Table 4: Impact of BenchQC Parameters on VQE Performance [22] [18] [2]

| Parameter Varied | Key Finding | Impact on Accuracy/Performance |

|---|---|---|

| Classical Optimizer | SLSQP and L-BFGS-B showed efficient convergence [22] | Directly affects convergence efficiency and resource use [22] |

| Circuit Type (Ansatz) | Hardware-efficient ansatzes (e.g., EfficientSU2) were tested [18] | Significant impact on accuracy; choice balances expressivity and noise [18] |

| Basis Set | Higher-level sets (e.g., cc-pVQZ) closely matched classical data [22] [18] | Major impact; higher-level sets increase accuracy toward classical benchmarks [22] |

| Noise Models | IBM noise models were applied to simulate real hardware [18] | Results remained within 0.2% error of CCCBDB benchmarks, showing noise resilience [18] [19] |

Table 5: Representative BenchQC Results for Aluminum Clusters [18] [19] [2]

| Molecular System | BenchQC Result (VQE Energy) | Classical Benchmark (NumPy/CCCBDB) | Reported Percent Error |

|---|---|---|---|

| Al⁻ | - | - | < 0.2% [19] |

| Al₂ | - | - | < 0.2% [19] |

| Al₃⁻ | - | - | < 0.2% [19] |
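The percent-error metric reported above is the absolute deviation from the reference divided by the magnitude of the reference. A short sketch with hypothetical energies (the table does not list the underlying values, so none are assumed here):

```python
def percent_error(computed, reference):
    """Absolute percent error of a computed value relative to a reference."""
    if reference == 0:
        raise ValueError("reference must be non-zero")
    return 100.0 * abs(computed - reference) / abs(reference)


# Hypothetical total energies in hartree (NOT BenchQC results).
e_vqe, e_ref = -241.90, -242.00
err_pct = percent_error(e_vqe, e_ref)
err_abs = abs(e_vqe - e_ref)
print(f"percent error  = {err_pct:.4f}%")
print(f"absolute error = {err_abs:.4f} Eh")
```

Because total energies are large in magnitude, a small percent error can still correspond to a sizeable absolute error, so reporting both quantities is a reasonable habit.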

QuantumBench Performance Evaluation

QuantumBench serves as a diagnostic tool, revealing the strengths and limitations of various LLMs in the quantum domain. The benchmark evaluates performance across different subfields and problem types.

Table 6: QuantumBench Problem Distribution by Subfield and Type [20]

| Subfield | Algebraic Calculation | Numerical Calculation | Conceptual Understanding | Total |

|---|---|---|---|---|

| Quantum Mechanics | 177 | 21 | 14 | 212 |

| Quantum Chemistry | 16 | 64 | 6 | 86 |

| Quantum Computation | 54 | 1 | 5 | 60 |

| Quantum Field Theory | 104 | 1 | 2 | 107 |

| Optics | 101 | 41 | 15 | 157 |

| Other (Math, Photonics, etc.) | 123 | 16 | 8 | 147 |

| Total | 575 | 144 | 50 | 769 |
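The distribution in the table above can be checked and summarized programmatically. The sketch below re-enters the counts from Table 6, verifies the row and column totals, and derives the share of each problem type (variable names are illustrative):

```python
# Counts per subfield: (algebraic, numerical, conceptual), copied from Table 6.
COUNTS = {
    "Quantum Mechanics": (177, 21, 14),
    "Quantum Chemistry": (16, 64, 6),
    "Quantum Computation": (54, 1, 5),
    "Quantum Field Theory": (104, 1, 2),
    "Optics": (101, 41, 15),
    "Other": (123, 16, 8),
}
TYPES = ("Algebraic Calculation", "Numerical Calculation", "Conceptual Understanding")

row_totals = {name: sum(row) for name, row in COUNTS.items()}
column_totals = tuple(sum(row[i] for row in COUNTS.values()) for i in range(3))
grand_total = sum(column_totals)

shares = {t: column_totals[i] / grand_total for i, t in enumerate(TYPES)}
for problem_type, share in shares.items():
    print(f"{problem_type}: {share:.1%}")
```

Roughly three quarters of the problems are algebraic, so an aggregate score is dominated by that category; per-type reporting avoids masking weaker conceptual performance.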

Evaluation results from QuantumBench indicate that LLM performance is sensitive to problem difficulty and format. While some models demonstrate capability, performance generally drops as problems require deeper reasoning [21] [24]. The benchmark effectively highlights that even advanced models can struggle with the nuanced conceptual and mathematical challenges inherent to quantum science [20].

BenchQC and QuantumBench address the critical need for domain-specific benchmarking in quantum science from two complementary angles. BenchQC provides a rigorous, application-centric framework that quantifies the performance and guides the optimization of quantum algorithms for computational chemistry. Its systematic parameter studies offer reproducible insights into achieving accurate results, such as consistently maintaining errors below 0.2% for ground-state energy calculations, which is crucial for reliable materials discovery and drug development [18] [19]. QuantumBench, on the other hand, establishes a foundational standard for evaluating the cognitive capabilities of AI research agents in the quantum domain. By diagnosing how well LLMs understand quantum concepts, it helps ensure that AI tools used for tasks like literature synthesis or experimental planning are built on a foundation of correct scientific knowledge [20].

For researchers in quantum chemistry and related fields, the concurrent use of both toolkits is recommended. BenchQC should be employed to validate and tune the performance of quantum computational workflows intended for simulating molecular systems. Meanwhile, QuantumBench can serve as a critical check on the conceptual reliability of AI models that may be used to assist in research design, code generation, or data interpretation. Together, they provide a more comprehensive assurance of quality, covering both the execution of quantum calculations and the scientific intelligence guiding the research. As the field progresses, these specialized benchmarks will be indispensable for distinguishing genuine advancements from speculative claims, thereby accelerating the path toward practical quantum advantage.

Accurate computational prediction of protein-ligand binding affinities is a cornerstone of modern drug discovery, yet achieving quantum-mechanical accuracy for biologically relevant systems has remained persistently challenging. The flexibility of ligand-pocket motifs arises from a complex interplay of attractive and repulsive electronic interactions during binding, including hydrogen bonding, π–π stacking, and dispersion forces [5]. Accurately accounting for all these interactions requires robust quantum-mechanical (QM) benchmarks that have been scarce for ligand-pocket systems. Compounding this challenge, historical disagreement between established "gold standard" quantum methods has cast doubt on the reliability of existing benchmarks for larger non-covalent systems [25]. Within this context, the Quantum Interacting Dimer (QUID) framework emerges as a transformative solution—a benchmark framework specifically designed to redefine accuracy standards for biological ligand-pocket interactions by establishing a new "platinum standard" through agreement between complementary high-level quantum methods [5].

QUID addresses a critical gap in computational drug design by providing reliable benchmark data for the development and validation of faster, more approximate methods used in high-throughput virtual screening. Even small errors of 1 kcal/mol can lead to erroneous conclusions about relative binding affinities, potentially derailing drug discovery pipelines [5]. The framework's comprehensive approach enables researchers to move beyond traditional limitations, offering insights not only into binding energies but also into the atomic forces and molecular properties that govern ligand binding mechanisms. By spanning both equilibrium and non-equilibrium geometries, QUID captures the dynamic nature of binding processes, making it an indispensable tool for advancing computational methods in structure-based drug design [5] [25].
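To see why 1 kcal/mol matters, recall that a binding free energy difference ΔΔG maps onto a ratio of binding constants through exp(ΔΔG / RT). The sketch below evaluates that ratio at room temperature; the function name and default temperature are illustrative choices, and the error in an interaction energy is treated here as if it carried over directly into the free energy.

```python
import math

R_KCAL_PER_MOL_K = 0.0019872041   # gas constant in kcal/(mol*K)


def affinity_ratio(ddg_kcal_per_mol, temperature_k=298.15):
    """Ratio of binding constants implied by a free-energy difference."""
    return math.exp(ddg_kcal_per_mol / (R_KCAL_PER_MOL_K * temperature_k))


for ddg in (0.5, 1.0, 2.0):
    print(f"error of {ddg:.1f} kcal/mol -> factor {affinity_ratio(ddg):.1f} in affinity")
```

At 298 K an error of 1 kcal/mol shifts the predicted binding constant by a factor of about five, enough to reorder closely ranked candidates.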

QUID Framework Design and Composition

Systematic Construction of Model Systems

The QUID framework comprises 170 chemically diverse molecular dimers, including 42 equilibrium and 128 non-equilibrium systems, with molecular sizes of up to 64 atoms [5]. This systematic construction begins with the selection of large, flexible, chain-like drug molecules from the Aquamarine dataset, representing host "pockets" that incorporate most atom types of interest for drug discovery (H, N, C, O, F, P, S, and Cl) [5]. The selection of ligand-pocket motifs was achieved through exhaustive exploration of different binding sites of nine large flexible drug molecules, each systematically probed with two small monomer ligands: benzene (C₆H₆) and imidazole (C₃H₄N₂) [5]. These small monomers represent common fragments in drug design: benzene as the quintessential aromatic compound present in phenylalanine side-chains, and imidazole as a more reactive motif present in histidine and commonly used drug compounds [5].

The initial dimer conformations were constructed with the aromatic ring of the small monomer aligned with that of the binding site at a distance of 3.55 ± 0.05 Å, similar to the established S66 dimers, followed by optimization at the PBE0+MBD level of theory [5]. Post-optimization, the resulting 42 equilibrium dimers were categorized into three structural classes based on the configuration of the large monomer: 'Linear' (retaining original chain-like geometry), 'Semi-Folded' (partially bent sections), and 'Folded' (encapsulating the smaller monomer) [5]. This classification models pockets with different packing densities, from crowded binding pockets to more open surface pockets [5].

Comprehensive Sampling of Binding Motifs and Geometries

QUID's design ensures broad coverage of non-covalent binding motifs prevalent in biological systems. Analysis using symmetry-adapted perturbation theory (SAPT) confirms that the framework comprehensively covers diverse non-covalent interactions and their energetic contributions, including exchange-repulsion, electrostatic, induction, and dispersion components [25]. The resulting complexes represent the three most frequent interaction types found in over 100,000 interactions within PDB structures: aliphatic-aromatic, H-bonding, and π-stacking interactions, with many dimers exhibiting mixed character that simultaneously combines multiple interaction types [5].

For enhanced utility in studying binding processes, a representative selection of 16 dimers was used to construct non-equilibrium conformations sampled along the dissociation pathway of the non-covalent bond [5]. These conformations were generated at eight non-equilibrium distances, each defined by a dimensionless multiplicative factor q applied to the equilibrium separation (q = 0.90, 0.95, 1.05, 1.10, 1.25, 1.50, 1.75, and 2.00), with q = 1.00 corresponding to the equilibrium dimer geometry [5]. Sampling 16 dimers at eight distances each accounts for the 128 non-equilibrium systems. This systematic approach to sampling both equilibrium and dissociation geometries provides detailed insight into the behavior of non-covalent interactions across the binding process, offering valuable data for method development beyond single-point energy calculations.

Table: QUID Framework System Composition and Characteristics

| Category | Number of Systems | Description | Interaction Energy Range (kcal/mol) | Key Features |
|---|---|---|---|---|
| Equilibrium Dimers | 42 | Optimized structures at PBE0+MBD level | -24.3 to -5.5 [5] | Linear, Semi-Folded, and Folded geometries |
| Non-Equilibrium Dimers | 128 | Dissociation pathways for 16 selected dimers | Varies with distance [5] | 8 points along dissociation coordinate (q=0.90-2.00) |
| Small Monomers | 2 | Benzene and Imidazole | N/A | Representative ligand fragments |
| Large Monomers | 9 | Drug-like molecules from Aquamarine dataset | N/A | 50 atoms, flexible chain-like structures |

Experimental Methodology and Benchmarking Protocol

Establishing the Platinum Standard through Methodological Consensus

The cornerstone of QUID's benchmarking approach is the establishment of what the developers term a "platinum standard" for ligand-pocket interaction energies, achieved through tight agreement between two fundamentally different high-level quantum methods: Local Natural Orbital Coupled Cluster (LNO-CCSD(T)) and Fixed-Node Diffusion Monte Carlo (FN-DMC) [5] [25]. This dual-methodology approach significantly reduces the uncertainty that has plagued previous benchmark efforts for larger non-covalent systems. The consensus-based strategy is particularly valuable given the historical disagreement between coupled cluster and quantum Monte Carlo methods that had cast doubt on existing benchmarks [25]. The remarkable agreement of 0.3-0.5 kcal/mol between these independent methodologies provides unprecedented reliability for benchmarking studies [25].

The benchmarking protocol employs a multi-layered validation strategy beginning with the generation of reference interaction energies at the platinum standard level. These reference values then serve as benchmarks for evaluating more approximate methods, including various density functional approximations, semiempirical methods, and classical force fields [5]. The evaluation encompasses not only interaction energies but also atomic forces and molecular properties, providing a comprehensive assessment of method performance across multiple dimensions relevant to drug discovery applications. The robust and reproducible binding energies obtained through this protocol establish QUID as a reliable foundation for method development and validation in computational drug design.

Computational Workflow and Validation Procedures

The experimental workflow for QUID benchmark generation follows a systematic procedure that ensures reliability and reproducibility. The process begins with structure generation and optimization using PBE0+MBD, followed by single-point energy calculations using both LNO-CCSD(T) and FN-DMC methods [5]. The critical validation step involves comparing results from these two independent methodologies to ensure they fall within the tight agreement threshold of 0.5 kcal/mol [5]. For systems meeting this criterion, the reference values are established as the platinum standard benchmark.
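The consensus check described here reduces to a simple comparison. The sketch below assumes the 0.5 kcal/mol threshold quoted above. Taking the mean of the two values as the reference is an assumption made for illustration, since the source does not state how the final reference number is chosen, and the energies in the example are hypothetical.

```python
AGREEMENT_THRESHOLD = 0.5  # kcal/mol, the agreement threshold quoted in the text

def consensus_reference(e_cc, e_dmc, threshold=AGREEMENT_THRESHOLD):
    """Compare LNO-CCSD(T) and FN-DMC interaction energies (kcal/mol).

    Returns (agrees, reference). The reference is the mean of the two
    values, which is an illustrative choice rather than the QUID recipe.
    """
    if abs(e_cc - e_dmc) <= threshold:
        return True, 0.5 * (e_cc + e_dmc)
    return False, None

# Hypothetical energies for two dimers.
print(consensus_reference(-10.0, -10.25))  # within threshold
print(consensus_reference(-10.0, -11.0))   # outside threshold
```

In practice FN-DMC results carry a statistical error bar, so a fuller check would compare the difference against the combined uncertainty rather than a fixed threshold alone.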

The subsequent evaluation phase subjects a wide range of computational methods to testing against these benchmark values, analyzing not only quantitative accuracy in energy prediction but also performance across different interaction types, system sizes, and geometric distortions [5]. Special attention is paid to the performance of methods for non-equilibrium geometries, which represent snapshots of the binding process and are particularly challenging for many approximate methods [5]. The comprehensive validation includes analysis of forces and molecular properties, providing insights that extend beyond energy comparisons to address the fundamental physical interactions governing binding affinity.
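An evaluation of this kind typically reports error statistics separately for each class of geometry. The following sketch computes mean absolute error, root-mean-square error, and maximum absolute error per category. The record layout and the sample numbers are hypothetical, chosen only to show the bookkeeping.

```python
import math
from collections import defaultdict

def error_stats(records):
    """Group signed errors by category and summarize them.

    records: iterable of (category, predicted, reference) in kcal/mol.
    """
    grouped = defaultdict(list)
    for category, predicted, reference in records:
        grouped[category].append(predicted - reference)

    stats = {}
    for category, errors in grouped.items():
        n = len(errors)
        stats[category] = {
            "mae": sum(abs(e) for e in errors) / n,
            "rmse": math.sqrt(sum(e * e for e in errors) / n),
            "max_abs": max(abs(e) for e in errors),
        }
    return stats

# Hypothetical method results against hypothetical reference energies.
sample = [
    ("equilibrium", -9.0, -10.0),
    ("equilibrium", -11.0, -10.0),
    ("non-equilibrium", -2.0, -4.0),
]
for category, values in error_stats(sample).items():
    print(category, values)
```

Splitting the statistics this way makes it visible when a method that looks accurate at equilibrium degrades along the dissociation pathway.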

Performance Comparison with Alternative Approaches

Quantitative Assessment Across Computational Methods

The QUID framework enables systematic evaluation of diverse computational methodologies, revealing distinct performance patterns across different classes of methods. Several dispersion-inclusive density functional approximations demonstrate promising accuracy for energy predictions, achieving close agreement with the platinum standard reference values [5] [25]. However, more detailed analysis reveals that even accurate DFT methods exhibit significant discrepancies in the magnitude and orientation of atomic van der Waals forces, which could substantially influence molecular dynamics simulations and binding pathway predictions [25].
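Differences in force magnitude and orientation can be quantified per atom. The sketch below is one plausible way to do so, not the analysis used in the cited work: it returns the difference in vector norm and the angle between the test and reference force on each atom. It assumes that no force vector is exactly zero, and the example forces are hypothetical.

```python
import math

def force_discrepancy(f_test, f_ref):
    """Per-atom (magnitude difference, angle in degrees) between two force sets.

    f_test, f_ref: sequences of (fx, fy, fz) tuples in the same units.
    Assumes every force vector has a non-zero norm.
    """
    results = []
    for ft, fr in zip(f_test, f_ref):
        norm_t = math.sqrt(sum(c * c for c in ft))
        norm_r = math.sqrt(sum(c * c for c in fr))
        cos_angle = sum(a * b for a, b in zip(ft, fr)) / (norm_t * norm_r)
        cos_angle = max(-1.0, min(1.0, cos_angle))  # guard against rounding
        results.append((norm_t - norm_r, math.degrees(math.acos(cos_angle))))
    return results

# Hypothetical forces on two atoms.
reference_forces = [(1.0, 0.0, 0.0), (0.0, 2.0, 0.0)]
test_forces = [(0.0, 1.0, 0.0), (0.0, 1.0, 0.0)]
for d_norm, angle in force_discrepancy(test_forces, reference_forces):
    print(f"magnitude difference = {d_norm:+.2f}, angle = {angle:.1f} deg")
```

The first atom in this toy example has the correct magnitude but the wrong direction, while the second has the correct direction but half the magnitude. An energy-only comparison would not distinguish these two kinds of error.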

Semiempirical methods and widely used empirical force fields show notable limitations, particularly in capturing non-covalent interactions in out-of-equilibrium geometries [5] [25]. These methods require substantial improvements before they can reliably model the complex interaction landscape encountered in real binding processes. The QUID dataset also spans a wide range of molecular dipole moments and polarizabilities, which illustrates how pocket structures can be designed to achieve desired binding properties and offers guidance for rational drug design [25].

Table: Performance Comparison of Computational Methods on QUID Benchmark

| Method Category | Representative Methods | Accuracy (vs. Platinum Standard) | Strengths | Limitations |
|---|---|---|---|---|
| Platinum Standard | LNO-CCSD(T), FN-DMC | Reference (0.3-0.5 kcal/mol agreement) [25] | Highest accuracy, methodological consensus | Extreme computational cost |
| DFT with Dispersion | PBE0+MBD, other dispersion-inclusive functionals | Variable, several with good accuracy [5] | Balanced accuracy/efficiency, good for energies | Inaccurate van der Waals forces [25] |
| Semiempirical Methods | Various SE approaches | Requires improvement [5] | Computational efficiency | Poor performance for NCIs, especially non-equilibrium [5] |
| Empirical Force Fields | Standard MM force fields | Requires improvement [5] | High throughput, suitable for MD | Inadequate treatment of polarization and dispersion [5] |

Comparison with Other Benchmarking and Generative Approaches

While QUID focuses on quantum-mechanical benchmarking of interaction energies, alternative approaches in the computational drug design landscape address complementary challenges. Structure-based generative models like PoLiGenX employ equivariant diffusion models to design novel ligands conditioned on protein pocket structures [26]. These methods have demonstrated capabilities in generating shape-similar ligands with enhanced binding affinities, lower strain energies, and reduced steric clashes compared to reference molecules [26]. Similarly, DiffSMol represents another generative AI approach that creates 3D binding molecules based on ligand shapes, achieving a 61.4% success rate in generating molecules resembling ligand shapes when incorporating shape guidance [27].

The Folding-Docking-Affinity (FDA) framework offers a different strategy by integrating deep learning-based protein folding (ColabFold), docking (DiffDock), and affinity prediction (GIGN) to predict binding affinities when experimental structures are unavailable [28]. This approach performs comparably to state-of-the-art docking-free methods and demonstrates enhanced generalizability in challenging test scenarios where proteins and ligands in the test set have no overlap with the training set [28].

QUID distinguishes itself from these approaches by providing rigorous quantum-mechanical validation data essential for developing and refining such methods. Whereas generative models and affinity prediction frameworks focus on novel compound design or rapid screening, QUID establishes the fundamental physical accuracy necessary to ensure the reliability of all such computational approaches in drug discovery pipelines.

Research Reagent Solutions: Essential Tools for Implementation

Successful implementation and utilization of the QUID framework require specific computational tools and methodologies. The following research reagent solutions represent essential components for researchers working with this benchmark system or developing similar benchmarking approaches.

Table: Essential Research Reagents for QUID Framework Implementation

| Research Reagent | Category | Function | Implementation Notes |
|---|---|---|---|
| LNO-CCSD(T) | High-Level Quantum Chemistry | Provides coupled cluster reference energies with reduced computational cost [5] | Uses local natural orbital approximations for larger systems |
| FN-DMC | Quantum Monte Carlo | Provides benchmark energies through stochastic quantum approach [5] | Fixed-node approximation required for fermionic systems |
| SAPT Analysis | Energy Decomposition | Decomposes interaction energies into physical components [5] [25] | Essential for understanding interaction contributions |
| PBE0+MBD | Density Functional Theory | Structure optimization and initial energy assessment [5] | Includes many-body dispersion corrections |
| QUID Dataset | Benchmark Data | 170 molecular dimers for method validation [5] | Openly available in GitHub repository [25] |

The QUID framework represents a significant advancement in the quantum-mechanical benchmarking of biological ligand-pocket interactions, establishing a new platinum standard through methodological consensus between coupled cluster and quantum Monte Carlo approaches. Its comprehensive design, encompassing both equilibrium and non-equilibrium geometries across chemically diverse systems, provides an unprecedented resource for validating computational methods in drug discovery. The framework's rigorous validation protocol reveals distinctive performance patterns across method classes, highlighting the accuracy of certain dispersion-inclusive density functionals while identifying critical limitations in semiempirical methods and force fields, particularly for non-equilibrium geometries.

Future developments will likely focus on expanding the chemical space covered by QUID, incorporating additional biologically relevant molecular fragments and interaction types. The integration of machine learning approaches with benchmark-quality data holds particular promise for developing next-generation force fields and semiempirical methods that maintain accuracy while achieving computational efficiency. Furthermore, the principles established by QUID (methodological consensus, comprehensive system coverage, and rigorous validation) provide a template for developing benchmarks in related areas, such as solvated systems or membrane-protein interactions. As computational methods continue to evolve in sophistication and application scope, robust benchmarking frameworks like QUID will remain essential for ensuring their reliability in accelerating drug discovery and advancing our understanding of biomolecular interactions.


SSE17: Benchmarking Spin-State Energetics with Experimental Data

Table of Contents

- Introduction

- The SSE17 Benchmark Set

- Methodology Overview

- Performance of Wave Function Methods

- Performance of Density Functional Theory Methods

- Research Toolkit

- Conclusions and Recommendations

Accurate prediction of spin-state energetics is one of the most compelling challenges in computational transition metal chemistry. These predictions are crucial for modeling catalytic reaction mechanisms, interpreting spectroscopic data, and computational discovery of materials [29]. However, computed spin-state energies are notoriously method-dependent, and the scarcity of credible reference data has made it difficult to assess the accuracy of quantum chemistry methods conclusively [7] [29]. To address this, a novel benchmark set known as SSE17 (Spin-State Energetics of 17 complexes) was developed, deriving reference values from carefully curated and corrected experimental data [7] [30]. This guide provides a comparative analysis of the performance of various quantum chemistry methods against the SSE17 benchmark, offering researchers evidence-based recommendations for method selection.

The SSE17 Benchmark Set

The SSE17 set comprises 17 first-row transition metal complexes, selected for their chemical diversity and the reliability of their associated experimental data [7] [29].

- Metal Ions: The set includes Fe(II), Fe(III), Co(II), Co(III), Mn(II), and Ni(II) ions.

- Ligand Diversity: The complexes feature a wide range of ligands, ensuring a broad representation of ligand-field strengths and coordination architectures.

- Data Sources: The reference spin-state energetics are derived from two types of experimental data:

  - Spin Crossover (SCO) Enthalpies: For 9 complexes, adiabatic energy differences were obtained from spin-crossover enthalpies.

  - Spin-Forbidden Absorption Bands: For the remaining 8 complexes, vertical energy differences were obtained from energies of spin-forbidden d-d absorption bands in reflectance spectra.

- Data Curation: A critical aspect of SSE17 is the application of back-corrections to the raw experimental data to account for vibrational effects (changes in vibrational frequencies between spin states) and environmental effects (perturbations from solvation or crystal packing). This yields reference values for electronic energy differences that are directly comparable to the outputs of quantum chemistry calculations on isolated molecules [29].
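The back-correction step can be written as simple arithmetic. The sketch below assumes that the vibrational and environmental contributions are additive and are subtracted from the experimental value. The sign convention, the function name `electronic_reference`, and the numbers are hypothetical illustrations, not values or definitions taken from the SSE17 study.

```python
def electronic_reference(delta_exp, vib_correction, env_correction):
    """Back-correct an experimental spin-state energy difference.

    delta_exp:       experimental value (e.g. an SCO enthalpy), kcal/mol
    vib_correction:  vibrational contribution contained in delta_exp
    env_correction:  environmental (solvation or crystal packing) contribution

    Assumes additive contributions; returns the electronic energy
    difference comparable to a calculation on the isolated molecule.
    """
    return delta_exp - vib_correction - env_correction

# Hypothetical numbers for a single complex.
delta_e = electronic_reference(delta_exp=5.0, vib_correction=1.5, env_correction=0.5)
print(f"Reference electronic energy difference: {delta_e:.1f} kcal/mol")
```

Because the corrections are themselves computed quantities, their uncertainty propagates into the reference value and should be kept in mind when judging small differences between methods.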

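The SSE17 benchmark provides a standardized platform for evaluating method accuracy. Its creation and application follow three steps: experimental data are curated, back-corrected for vibrational and environmental effects, and then compared with electronic energy differences computed by the methods under test.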

Performance of Wave Function Methods

Wave function theory (WFT) methods are considered high-level and are often used for benchmarking. The SSE17 study evaluated several popular WFT approaches [7] [29].

Table 1: Performance of Wave Function Methods on the SSE17 Benchmark