Fermionic Antisymmetry: Overcoming Computational Challenges in Quantum Simulations

Abstract

The antisymmetric nature of fermionic wavefunctions presents a fundamental and persistent challenge in computational physics and quantum chemistry, hindering the simulation of systems ranging from drug molecules to novel materials. This article explores the core of this problem, known as the Fermion Sign Problem (FSP), which causes an exponential decay in the signal-to-noise ratio in key methods like Quantum Monte Carlo. We survey the latest methodological breakthroughs, from neural-network-based global optimization techniques to novel quantum algorithms and tensor decompositions, that effectively mitigate these issues. Furthermore, we provide a comparative analysis of these emerging strategies, evaluating their scalability, accuracy, and potential to enable reliable, first-principles simulations for biomedical and clinical research.

The Fermion Sign Problem: A Foundational Barrier in Quantum Simulation

Defining Fermionic Antisymmetry and Its Physical Origins

Frequently Asked Questions (FAQs)

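1. What is the fundamental reason fermionic wavefunctions must be antisymmetric? The requirement stems from the spin-statistics theorem of relativistic quantum field theory: half-integer-spin fields must be quantized with anticommutators, because quantizing them with commutators would give a Hamiltonian whose energy spectrum is not bounded from below. In non-relativistic quantum mechanics, antisymmetry is imposed as a postulate, and its consequence, the Pauli exclusion principle, is what keeps bulk matter stable: if electrons were described by symmetric wavefunctions, the ground-state energy would decrease faster than linearly with particle number and matter would collapse [1] [2].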

2. Are there any alternative representations that avoid explicit antisymmetrization? Yes, recent theoretical work shows fermionic wavefunctions can be exactly represented using symmetric functions in an enlarged space with auxiliary coordinates. This "fermion-boson transformation" encodes the antisymmetry through a special mapping, though it typically requires a more complicated many-particle Hamiltonian [3] [4].

3. What computational challenges arise from antisymmetry? The antisymmetric nature causes the Fermion Sign Problem (FSP) in Quantum Monte Carlo (QMC) methods. This leads to an exponential decay of the signal-to-noise ratio as system size increases, making large-scale simulations of fermionic systems numerically unstable and computationally expensive [5].

4. How does antisymmetry physically affect electron behavior in atoms and molecules?

- Pauli Exclusion: No two electrons can occupy the same quantum state (the same spatial orbital with the same spin) [6].

- Spatial Correlations: In a spatially antisymmetric state, two electrons have zero probability of being at the same location and are on average further apart, lowering their electrostatic repulsion energy [7].

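- Magnetic Properties: Because a spatially antisymmetric two-electron state pairs with a symmetric (parallel-spin, triplet) spin state, parallel spins carry this lower repulsion energy. This explains Hund's rule in atomic physics, where electrons occupy degenerate orbitals with parallel spins before pairing, and the same exchange mechanism underlies ferromagnetism [7].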
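The spatial-correlation point can be checked numerically with a small sketch. It assumes two non-interacting particles in a 1D box of unit length occupying the two lowest orbitals (Coulomb repulsion itself is not computed) and compares the symmetric and antisymmetric spatial combinations. The antisymmetric one vanishes on the diagonal x₁ = x₂, and its mean-square separation exceeds the symmetric one by the exchange term 4|⟨φ₁|x|φ₂⟩|². Grid size and function names are arbitrary illustrative choices.

```python
import numpy as np

def box_orbital(n, x):
    """Normalized particle-in-a-box orbital on [0, 1]."""
    return np.sqrt(2.0) * np.sin(n * np.pi * x)

M = 400                                   # grid points per coordinate (midpoint rule)
x = (np.arange(M) + 0.5) / M
x1, x2 = np.meshgrid(x, x, indexing="ij")

# Two particles in the n = 1 and n = 2 orbitals
a1, b1 = box_orbital(1, x1), box_orbital(2, x1)
a2, b2 = box_orbital(1, x2), box_orbital(2, x2)
psi_sym = (a1 * b2 + b1 * a2) / np.sqrt(2.0)    # spatially symmetric combination
psi_anti = (a1 * b2 - b1 * a2) / np.sqrt(2.0)   # spatially antisymmetric combination

def mean_sq_separation(psi):
    """<(x1 - x2)^2> under the density |psi|^2 (uniform grid weights cancel)."""
    density = psi**2
    return np.sum(density * (x1 - x2) ** 2) / np.sum(density)

sep_sym = mean_sq_separation(psi_sym)
sep_anti = mean_sq_separation(psi_anti)
diag_anti = np.max(np.abs(np.diag(psi_anti)))   # amplitude where x1 == x2
print(f"<(x1-x2)^2>: symmetric {sep_sym:.4f}, antisymmetric {sep_anti:.4f}")
```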

5. How is the antisymmetry condition mathematically enforced for N fermions?

For N fermions, the wavefunction is constructed as a Slater determinant [7]:

\[
\psi(\mathbf{r}_1, \dots, \mathbf{r}_N) = \frac{1}{\sqrt{N!}}
\begin{vmatrix}
\phi_1(\mathbf{r}_1) & \phi_2(\mathbf{r}_1) & \cdots & \phi_N(\mathbf{r}_1) \\
\phi_1(\mathbf{r}_2) & \phi_2(\mathbf{r}_2) & \cdots & \phi_N(\mathbf{r}_2) \\
\vdots & \vdots & \ddots & \vdots \\
\phi_1(\mathbf{r}_N) & \phi_2(\mathbf{r}_N) & \cdots & \phi_N(\mathbf{r}_N)
\end{vmatrix}
\]

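This determinant changes sign whenever two particle coordinates \(\mathbf{r}_i\) and \(\mathbf{r}_j\) are swapped, because exchanging two rows of a determinant flips its sign; this enforces the antisymmetry. It also vanishes when two rows or two columns coincide, i.e., when two electrons share the same coordinates or two occupied orbitals are identical, which is the Pauli exclusion principle.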
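Both properties are easy to check numerically. In this sketch the three 1D orbitals are arbitrary Gaussian-times-polynomial functions chosen for illustration (the sign flip holds for any orbitals), and `slater_value` is a hypothetical helper that evaluates the determinant with NumPy.

```python
import math
import numpy as np

def slater_value(orbitals, positions):
    """Psi(r_1, ..., r_N) = det[phi_j(r_i)] / sqrt(N!) for 1D coordinates."""
    n = len(orbitals)
    m = np.array([[phi(r) for phi in orbitals] for r in positions])  # M_ij = phi_j(r_i)
    return np.linalg.det(m) / math.sqrt(math.factorial(n))

# Illustrative orbitals (not normalized; normalization does not affect the checks)
orbitals = [
    lambda r: np.exp(-r**2),
    lambda r: r * np.exp(-r**2),
    lambda r: (2 * r**2 - 1) * np.exp(-r**2),
]
r = np.array([0.3, -0.7, 1.1])

psi = slater_value(orbitals, r)
psi_swapped = slater_value(orbitals, r[[1, 0, 2]])                   # exchange electrons 1 and 2
psi_coincident = slater_value(orbitals, np.array([0.3, 0.3, 1.1]))  # electrons 1 and 2 coincide
print(psi, psi_swapped, psi_coincident)
```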

Troubleshooting Common Computational Challenges

| Challenge | Underlying Cause | Potential Mitigation Strategies |
|---|---|---|
| Fermion Sign Problem [5] | Antisymmetry causes positive and negative amplitudes to cancel in QMC simulations. | Explore the fixed-node approximation, constrained-path methods, or novel manifold learning techniques [5] [8]. |
| High Computational Scaling | Evaluating a Slater determinant scales as \(O(N^3)\) with system size \(N\) [9]. | Use a FermiNet-style neural network with a pairwise antisymmetry construction scaling as \(O(N^2)\) [9], or tensor decomposition techniques [8]. |
| Optimization Difficulties | Long-range parity flips from antisymmetry create complex, non-local patterns in the amplitudes that are hard to learn during variational Monte Carlo (VMC) optimization [8]. | Initialize with a physically motivated guess (e.g., Hartree-Fock orbitals), use states with built-in antisymmetry (Slater determinants), or apply symmetry-breaking and restoration techniques [8]. |

Experimental and Computational Protocols

Protocol 1: Constructing a Properly Antisymmetrized Multi-Fermion Wavefunction

This is a foundational methodology for initializing many computational simulations.

- Identify Single-Particle States: For your system (e.g., a molecule), compute or choose a set of single-particle spin-orbitals \(\{\phi_\mu(\mathbf{r})\}\), where each \(\phi_\mu\) incorporates both spatial and spin components [7] [6].

- Assign Occupations: For \(N\) fermions, occupy \(N\) of these orbitals according to the Aufbau principle or your specific system's needs.

- Build the Slater Determinant: Construct the multi-particle wavefunction \(\Psi\) as the determinant of the \(N \times N\) matrix \(M_{ij} = \phi_j(\mathbf{r}_i)\), where \(i\) indexes electrons and \(j\) indexes occupied orbitals [7].

- Normalize: The prefactor \(1/\sqrt{N!}\) ensures the wavefunction is normalized, provided the occupied spin-orbitals are orthonormal.
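The four steps can be sketched on a toy system. Assumptions, all illustrative: a 1D box of unit length, spin-orbitals formed as a box orbital times a spin label s = ±1, and N = 2 electrons placed by the Aufbau principle in the lowest spatial orbital with opposite spins. Summing |Ψ|² over both spin labels and integrating over a grid should give 1, which checks the 1/√N! prefactor for orthonormal spin-orbitals.

```python
import itertools
import math
import numpy as np

def box_orbital(n, x):
    """Spatial orbital chi_n(x) of a unit 1D box."""
    return np.sqrt(2.0) * np.sin(n * np.pi * x)

# Step 1: spin-orbitals (n, spin), meaning phi(x, s) = chi_n(x) if s == spin else 0
spin_orbitals = [(n, spin) for n in (1, 2, 3) for spin in (+1, -1)]

# Step 2: Aufbau occupation for N = 2 -> both electrons in n = 1 with opposite spins
N = 2
occupied = spin_orbitals[:N]

def spin_orbital_value(orb, x, s):
    n, spin = orb
    return box_orbital(n, x) if s == spin else np.zeros_like(x)

# Steps 3 and 4: Psi = det[M] / sqrt(N!), with M_ij = phi_j(x_i, s_i)
def psi(coords, spins):
    rows = [np.stack([spin_orbital_value(orb, x, s) for orb in occupied], axis=-1)
            for x, s in zip(coords, spins)]
    m = np.stack(rows, axis=-2)             # shape (..., electrons, orbitals)
    return np.linalg.det(m) / math.sqrt(math.factorial(N))

# Normalization check: sum over spin labels, midpoint-rule integral over [0, 1]^2
G = 200
grid = (np.arange(G) + 0.5) / G
dx = 1.0 / G
x1, x2 = (g.ravel() for g in np.meshgrid(grid, grid, indexing="ij"))
norm = sum(np.sum(psi((x1, x2), spins) ** 2) * dx * dx
           for spins in itertools.product((+1, -1), repeat=N))
print(f"norm = {norm:.6f}")
```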

Protocol 2: Implementing a Neural Network Backflow Ansatz (FermiNet-style)

This modern approach enhances a simple Slater determinant with neural networks to capture correlations [8] [9].

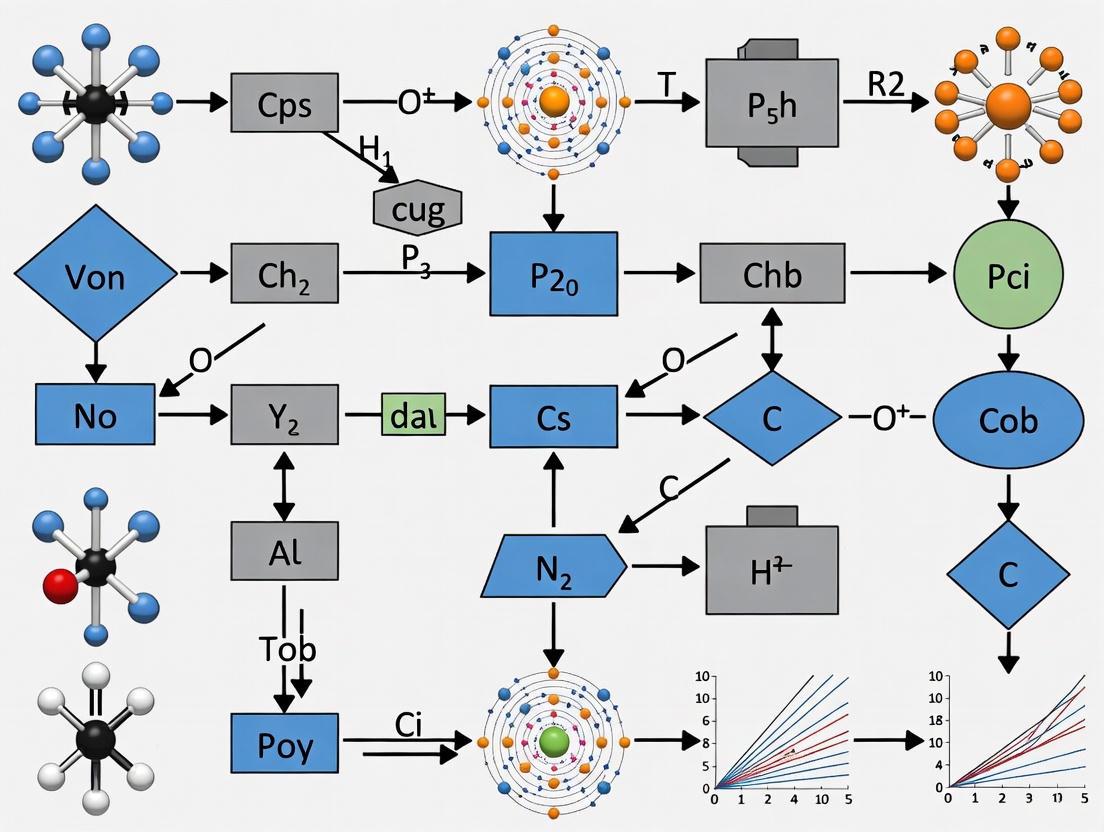

Diagram: Neural Network Backflow Workflow

- Input Electron Configuration: Feed the coordinates of all \(N\) electrons into the network [9].

- Permutation-Equivariant Processing: Process the coordinates through a permutation-equivariant neural network: permuting the input electrons permutes the per-electron outputs in the same way, and each electron's output depends on the other electrons only through symmetric (permutation-invariant) combinations [9].

- Generate Backflow-Transformed Orbitals: Use the network's output to create a set of "backflow" orbitals. Each single-electron orbital now depends on the positions of all other electrons [8].

- Enforce Antisymmetry: Assemble the final wavefunction by applying a single Slater determinant or a more efficient pairwise antisymmetrization function to the backflow-transformed orbitals [9].
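The pipeline above can be sketched in a few lines. This is a deliberately tiny, untrained toy, not FermiNet itself: it assumes 1D coordinates, random fixed weights, and one equivariant layer that combines each electron's own coordinate with the mean over all electrons. Each orbital φ_k(x_i; {x_j}) therefore depends on every electron, yet exchanging two electrons only permutes rows of the orbital matrix, so the determinant flips sign. Layer sizes and names are hypothetical.

```python
import numpy as np

rng = np.random.default_rng(0)
N_ELEC, HIDDEN = 3, 8
W_self = rng.normal(size=(1, HIDDEN))      # acts on each electron's own coordinate
W_mean = rng.normal(size=(1, HIDDEN))      # acts on the permutation-invariant mean
W_orb = rng.normal(size=(HIDDEN, N_ELEC))  # maps features to N orbital values

def backflow_orbitals(x):
    """M[i, k] = phi_k(x_i; {x_j}) for 1D coordinates x of shape (N_ELEC,)."""
    h_self = x[:, None] @ W_self                         # per-electron term, (N, HIDDEN)
    h_mean = np.full((len(x), 1), x.mean()) @ W_mean     # identical for every electron
    h = np.tanh(h_self + h_mean)                         # permutation-equivariant layer
    envelope = np.exp(-x**2)[:, None]                    # decay at large |x|
    return envelope * (h @ W_orb)

def sign_and_logdet(x):
    """Antisymmetric wavefunction Psi = det M, returned as (sign, log|Psi|)."""
    return np.linalg.slogdet(backflow_orbitals(x))
```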

| Category | Item | Function |
|---|---|---|
| Core Mathematical Objects | Slater Determinant | The standard and exact method to construct an antisymmetric wavefunction from a set of single-particle orbitals, ensuring the Pauli exclusion principle [7]. |
| | Jastrow Factor | A symmetric, multiplicative factor applied to the wavefunction to explicitly capture electron-electron correlation effects beyond the mean-field level [8]. |
| Advanced Ansätze | Neural Network Backflow (FermiNet) | Replaces the single-particle orbitals in a determinant with complex functions parameterized by a neural network, allowing orbitals to depend on the configuration of all electrons [8] [9]. |
| | Tensor-Decomposed Backflow | Uses a CANDECOMP/PARAFAC (CP) tensor factorization to represent the backflow transformation, offering a systematically improvable and often more compact parameterization [8]. |
| Efficient Antisymmetrizers | Pairwise Antisymmetry Construction | An alternative to the full Slater determinant that reduces the computational cost of enforcing antisymmetry from \(O(N^3)\) to \(O(N^2)\) [9]. |
| | Signature Encoder / Auxiliary Coordinates | A mathematical mapping that allows fermionic wavefunctions to be represented as symmetric functions in an enlarged space, transferring the complexity from the wavefunction to the Hamiltonian [4]. |

The Fermion Sign Problem (FSP) in Quantum Monte Carlo (QMC) Simulations

Frequently Asked Questions (FAQs)

Q1: What is the Fermion Sign Problem (FSP) and why does it occur?

The Fermion Sign Problem (FSP) is a fundamental computational challenge that arises in Quantum Monte Carlo (QMC) simulations of fermionic systems. It stems from the antisymmetric nature of fermionic wavefunctions, which underlies the Pauli exclusion principle: when two fermions are exchanged, the wavefunction must change sign. In QMC simulations, this means the sampling weight ρ[σ] of a configuration σ is no longer guaranteed to be non-negative (it can be negative or even complex), so ρ[σ] cannot be interpreted as a probability distribution, as standard Monte Carlo methods require. The problem manifests as an exponential decay of the signal-to-noise ratio as the system size or inverse temperature increases, leading to numerical instabilities [5] [10].

Q2: In which specific research areas does the FSP hinder progress? The FSP is a major unsolved problem that impedes progress in multiple fields of physics involving strongly interacting fermions [10]:

- Condensed Matter Physics: It prevents the numerical solution of systems with a high density of strongly correlated electrons, such as the doped Hubbard model relevant for high-temperature superconductivity [11] [10].

- Nuclear Physics: It limits ab initio calculations of properties of nuclear matter and our understanding of nuclei and neutron stars [10].

- Quantum Field Theory: It prevents the use of lattice QCD to predict the phases and properties of quark matter at finite baryon density [5] [10].

- Quantum Chemistry: It hinders accurate first-principles simulations of molecular systems [5].

Q3: Is the Fermion Sign Problem a solved problem? No, the Fermion Sign Problem is not solved in general. A generic solution has been shown to be NP-hard [12]: a method that removed the sign problem in polynomial time for every model would also solve NP-complete problems efficiently, so such a method is not expected to exist. However, this does not mean all is lost. Researchers have developed many strategies and workarounds that mitigate the problem for specific classes of systems or extend the range of accessible parameters, but a universal solution remains elusive [5] [10] [12].

Q4: What are the most common strategies for mitigating the Sign Problem? Several approaches have been developed to mitigate the FSP [10]:

- Reweighting: Incorporating the sign or complex phase of the weight into the observable being measured. However, this often fails for large systems as the average sign becomes exponentially small [10].

- Contour Deformation: Complexifying the field space and deforming the integration path into the complex plane to a region where the sign problem is milder [10].

- Fixed-Node Approximation: Fixing the nodes (zeros) of the many-body wavefunction based on a trial wavefunction and using QMC to estimate the energy subject to that constraint [10].

- Diagrammatic Monte Carlo: Stochastically sampling Feynman diagrams, which can sometimes render the sign problem more tractable [11] [10].

- Using a Reference System: Designing a perturbation theory around a "sign-problem-free" reference system (e.g., the half-filled, particle-hole symmetric Hubbard model) and calculating corrections to it [11].

Troubleshooting Guide: Mitigation Strategies and Protocols

This guide provides detailed methodologies for implementing selected FSP mitigation strategies.

Strategy: Perturbative Solution via a Reference System

This approach leverages a sign-problem-free Hamiltonian as a starting point for perturbations [11].

Detailed Protocol:

- Define Target System: Identify the fermionic Hamiltonian you wish to solve (e.g., a doped `t-t'-U` Hubbard model with `U = 8t`, `t' = -0.3t`, and chemical potential `μ = -2.0t`) [11].

- Choose Reference System: Select a related system that is free from the sign problem. A canonical example is the half-filled (`μ = 0`), particle-hole symmetric (`t' = 0`) Hubbard model. Perform a numerically exact lattice QMC simulation (e.g., using Continuous-Time QMC with the interaction expansion, CT-INT) for this reference system on a sufficiently large periodic cluster (e.g., 8×8) to obtain its properties [11].

- Calculate Two-Particle Green's Function: Within the QMC simulation of the reference system, compute the two-particle Green's function, or equivalently, the four-leg vertex function. This dynamic vertex contains information about the correlations in the reference system [11].

- Apply Perturbation: Use a diagrammatic technique (e.g., the dual fermion approach) to compute the first-order perturbative corrections in the parameters that take you from the reference system to the target system. In our example, these are the chemical potential `μ` and the next-nearest-neighbor hopping `t'` [11].

- Compute Spectral Properties: From the perturbed Green's function, obtain the electronic spectral function of the target doped system. This may require analytical continuation from imaginary to real frequencies using methods like the Maximum Entropy (MaxEnt) scheme [11].

- Expected Outcome: This protocol can reveal the formation of a pseudogap, nodal-antinodal dichotomy, and other strong correlation features in the spectral function of the doped system, which are challenging to access with straightforward QMC [11].
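For reference, one common way to write the target Hamiltonian of this protocol is shown below. It assumes nearest-neighbor bonds ⟨ij⟩, next-nearest-neighbor bonds ⟨⟨ij⟩⟩, and a particle-hole-symmetric form of the interaction so that, at t' = 0, μ = 0 corresponds to half filling. Sign conventions for t' and μ vary between papers, so check them against [11] before reusing the parameter values above. Setting t' = 0 and μ = 0 recovers the reference system; the t' and μ terms are the perturbations treated in the "Apply Perturbation" step.

\[
\begin{aligned}
H ={}& -t \sum_{\langle ij \rangle, \sigma} \left( c^\dagger_{i\sigma} c_{j\sigma} + \text{h.c.} \right)
      - t' \sum_{\langle\langle ij \rangle\rangle, \sigma} \left( c^\dagger_{i\sigma} c_{j\sigma} + \text{h.c.} \right) \\
    & + U \sum_i \left( n_{i\uparrow} - \tfrac{1}{2} \right) \left( n_{i\downarrow} - \tfrac{1}{2} \right)
      - \mu \sum_{i, \sigma} n_{i\sigma}
\end{aligned}
\]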

Strategy: The Reweighting Procedure

This is a common but often limited method to handle non-positive weights [10].

Detailed Protocol:

- Sample with Positive Weight: Define a positive sampling weight `p[σ] = |ρ[σ]|` and perform the Monte Carlo sampling using this weight [10].

- Measure the Average Sign: During the sampling, compute the average value of the sign (or phase), `⟨sign⟩ = ⟨ρ[σ] / p[σ]⟩_p`. This is a critical quantity [10].

- Compute the Physical Observable: Calculate the expectation value of any physical observable `A` using the reweighting formula `⟨A⟩_ρ = ⟨A ⋅ sign⟩_p / ⟨sign⟩_p`, where `⟨...⟩_p` denotes the average taken with the positive weight `p[σ]` [10].

- Troubleshooting: The primary issue is the exponential decay of the average sign `⟨sign⟩`. Because `⟨sign⟩` is the ratio of the partition functions of the signed and the absolute-value ensembles, it typically scales as `⟨sign⟩ ∝ exp(-fV/T)`, where `V` is the volume, `T` is the temperature, and `f` is the free-energy density difference between the two ensembles. For large systems or low temperatures, `⟨sign⟩` becomes vanishingly small, so `⟨A⟩_ρ` is a ratio of two very small, noisy numbers with an uncontrollable uncertainty [10]. If the average sign is consistently zero within error bars, this method is not viable for your system.
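A toy model shows the decay directly. Assume, purely for illustration, V independent two-state sites with signed weights w(+1) = 1 and w(−1) = −r, so that ρ[σ] = ∏ᵢ w(σᵢ). Sampling from p[σ] = |ρ[σ]| is then exact site by site, the average sign is known in closed form, ⟨sign⟩_p = ((1 − r)/(1 + r))^V, and the reweighted mean site value has the exact value (1 + r)/(1 − r). This mirrors ⟨sign⟩ ∝ exp(−fV/T) with f = ln((1 + r)/(1 − r)) per site and no temperature. Function names and parameters are hypothetical.

```python
import numpy as np

def reweighting_estimate(V, r, n_samples, seed=0):
    """Toy weight rho[sigma] = prod_i w(sigma_i) with w(+1) = 1, w(-1) = -r."""
    rng = np.random.default_rng(seed)
    # Sample p[sigma] = |rho[sigma]| exactly: each site is -1 with probability r / (1 + r)
    down = rng.random((n_samples, V)) < r / (1.0 + r)
    sigma = np.where(down, -1.0, 1.0)
    sign = np.prod(sigma, axis=1)            # sign of rho[sigma] = (-1)^(number of -1 sites)
    A = sigma.mean(axis=1)                   # observable: mean site value
    avg_sign = sign.mean()
    sign_err = sign.std() / np.sqrt(n_samples)
    return avg_sign, sign_err, np.mean(A * sign) / avg_sign

def exact_average_sign(V, r):
    return ((1.0 - r) / (1.0 + r)) ** V

r = 0.5
for V in (1, 2, 4, 8):
    s, err, a_est = reweighting_estimate(V, r, n_samples=200_000)
    print(f"V={V}: <sign> = {s:.2e} +/- {err:.1e} (exact {exact_average_sign(V, r):.2e}), "
          f"<A> = {a_est:.2f} (exact {(1 + r) / (1 - r):.2f})")
```

With a fixed number of samples the statistical error on ⟨sign⟩ stays near 1/√n_samples while the exact value shrinks geometrically; at V = 8 the exact ⟨sign⟩ (about 1.5 × 10⁻⁴) is already below the error of 200,000 samples, which is the failure mode described in the Troubleshooting step.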

Research Reagent Solutions: Computational Tools

The table below lists key computational "reagents" — algorithms and theoretical constructs — essential for research into the Fermion Sign Problem.

| Research Reagent | Function & Purpose |
|---|---|
| Reference System | A sign-problem-free Hamiltonian (e.g., half-filled Hubbard model) serving as the starting point for perturbation theories [11]. |
| Dual Fermion Formalism | A diagrammatic technique that maps a problem with strong local correlations to a more weakly coupled problem on a lattice, facilitating perturbation around a reference solution [11]. |
| Continuous-Time QMC (CT-QMC) | A class of impurity solvers (like CT-INT) used to solve the reference system and compute higher-order correlation functions [11]. |
| Fixed-Node Approximation | Constrains the sampling to regions where the trial wavefunction's sign is constant, effectively solving a sign-free problem at the cost of a bias from the chosen nodes [10]. |
| Meron-Cluster Algorithms | A technique that identifies clusters ("merons") in the configuration space whose contributions can be summed analytically, potentially leading to an exponential speed-up for specific models [10]. |

The following table summarizes the main approaches to tackling the FSP, their core ideas, and their limitations.

| Approach | Core Idea | Key Limitations |
|---|---|---|
| Reweighting [10] | Sample with the absolute value of the weight ρ[σ] and incorporate its sign into the measured observables. | The average sign decays exponentially with system size and inverse temperature, making large systems and low temperatures intractable. |
| Reference System + Perturbation [11] | Perturb away from a sign-problem-free point using diagrammatic techniques (e.g., Dual Fermions). | Accuracy is limited by the order of perturbation and the quality of the chosen reference system. |
| Fixed-Node Monte Carlo [10] | Use an approximate trial wavefunction to fix the nodes of the true wavefunction, making the sampling weight positive. | Results are biased by the quality of the trial wavefunction's nodes; the method is not exact. |
| Contour Deformation [10] | Deform the integration contour into the complex plane, toward a region where the sign problem is milder. | Finding the optimal contour is non-trivial and not a generic solution. |
| Meron-Cluster Algorithms [10] | Decompose configurations into clusters that can be flipped independently without changing the magnitude of the weight, so sign cancellations can be summed analytically. | Only applicable to a specific class of models; not general for, e.g., the repulsive Hubbard model or QCD. |
| Diagrammatic Monte Carlo [11] [10] | Sample Feynman diagrams of a perturbation series directly. | The series may diverge at strong coupling, limiting applicability to weaker interactions [11]. |

# Frequently Asked Questions

What causes the exponential decay of the Signal-to-Noise Ratio (SNR) in fermionic simulations? The exponential decay of SNR is a direct consequence of the Fermion Sign Problem (FSP). In simulations of fermionic systems (such as electrons in materials or quantum chemistry), the wavefunction must be antisymmetric. This antisymmetric nature means that the contributions of different quantum paths in a simulation (e.g., in Quantum Monte Carlo or QMC methods) cancel each other out with positive and negative signs. As the system size or simulation complexity grows, this cancellation leads to an exponential decay of the measurable signal relative to the background noise [5].

Which computational methods are most affected by this SNR decay? The Fermion Sign Problem specifically hinders Quantum Monte Carlo (QMC) methods. It makes applying these methods to fermionic systems numerically unstable for large-scale simulations, affecting research in Fermi liquids, quantum chemistry, nuclear matter, and lattice QCD [5].

Are there any promising strategies to mitigate this challenge? While a universal solution remains elusive, recent research focuses on innovative mitigation strategies. These include developing new QMC methodologies, algorithmic innovations, and theoretical treatments of the sign problem. The field is actively exploring these approaches to reduce the impact of the FSP [5].

# Troubleshooting Guides

# Diagnosing SNR Decay in Your Simulations

| Symptom | Possible Cause | Next Steps for Verification |
|---|---|---|
| Exponential growth of error bars with increasing system size or inverse temperature. | The Fermion Sign Problem (FSP) in fermionic QMC simulations [5]. | Check if your system contains identical fermions requiring an antisymmetric wavefunction. |
| Inability to converge results or numerical instability in large-scale fermionic system simulations. | The signal is overwhelmed by noise due to the FSP [5]. | Measure the average sign as a function of system size and inverse temperature; an exponential decay confirms that the cost of reaching a fixed accuracy grows exponentially with these parameters. |
| Inconsistent or physically impossible results (e.g., negative probabilities, violation of known physical laws). | Severe signal cancellation from the antisymmetric nature of fermionic wavefunctions [5]. | Validate your results against small, tractable systems or alternative methods where the FSP is absent. |

# Guide to Mitigation Strategies

| Strategy | Core Principle | Best-Suited For |
|---|---|---|
| Algorithmic Antisymmetrization | Deterministically constructs antisymmetric states to handle exchange statistics efficiently [13] [14]. | First-quantized simulations where the number of single-particle states far exceeds the number of particles [13]. |
| Maximum Likelihood (ML) Signal Decomposition | Uses ML optimization to robustly estimate signal components in high-noise environments, offering lower sensitivity to noise and clipping compared to classical methods like Prony's [15]. | Analyzing multi-exponential signals (e.g., from dynamic testing) that are corrupted by noise and other distortions [15]. |
| Qubitization for Phase Estimation | Encodes the Hamiltonian in a quantum walk operator that is implemented exactly, so the evolution used for phase estimation carries no approximation (e.g., Trotter) error, improving the precision of eigenstate preparation [14]. | Preparing Hamiltonian eigenstates (like the ground state) with high precision for quantum simulations [14]. |

# Experimental Protocols & Methodologies

# Protocol 1: Verifying Lightly Restricted Diffusion in a Single Compartment

This experiment demonstrates that a multi-exponential signal decay can originate from a single physical compartment, cautioning against over-interpretation of data [16].

Objective: To measure the diffusion signal decay in a single cylindrical tube and fit the data to a bi-exponential model.

Materials:

- Sample Vessel: Single smooth silica tube (e.g., inner radii of 50.5 μm or 160 μm).

- Sample Fluid: Water with a small amount of CuSO₄ (to shorten T1 for faster averaging).

- NMR/MRI System: A system with a stimulated echo sequence and diffusion-gradient pulses (e.g., a 4.7 T magnet) [16].

Procedure:

- Fill the tube with the prepared fluid.

- Orient the tube perpendicular to the diffusion gradient direction.

- Use a stimulated echo sequence to minimize signal loss from background field gradients.

- Set the duration of the diffusion-gradient pulses (δ) to 3.0 ms and the separation between gradients (Δ) to values such as 54 ms or 138 ms to achieve a small value of the parameter `a = √(D₀Δ)/r`, where D₀ is the free diffusion coefficient of the fluid and r is the tube radius [16].

- Acquire signal data across a wide range of b-values (from 0.15 to 6000 s/mm²) to capture both rapid and slow decay components.

- Fit the signal decay data to the bi-exponential model \(S = \zeta e^{-bD_S} + (1-\zeta)e^{-bD_F}\), where \(\zeta\) is the relative amplitude of the slow component, \(D_S\) is the slow apparent diffusion coefficient, and \(D_F\) is the fast apparent diffusion coefficient [16].

Expected Outcome: A successful experiment will yield a signal decay that is well-modeled by a bi-exponential function, despite the spins being housed in a single, uniform compartment. The parameters from the fit (e.g., \(D_S/D_F \approx 0.2\), \(\zeta\) between about 0.02 and 0.04) should agree with theoretical predictions for lightly restricted diffusion [16].
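The fitting step can be sketched without a nonlinear optimizer by variable projection: for each trial pair (D_S, D_F) on a grid, the model is linear in ζ, so ζ follows from one-dimensional least squares, and the pair with the smallest residual wins. The data below are synthetic, with assumed values D_F = 2.0 × 10⁻³ mm²/s, D_S/D_F = 0.2, ζ = 0.03, and Gaussian noise of 10⁻⁴, chosen only to match the magnitudes quoted above; b is in s/mm² and D in mm²/s. A real analysis would use measured data, a finer grid or a local refinement, and noise-aware error estimates.

```python
import numpy as np

def biexp(b, zeta, d_slow, d_fast):
    """S(b) = zeta * exp(-b D_S) + (1 - zeta) * exp(-b D_F), normalized so S(0) = 1."""
    return zeta * np.exp(-b * d_slow) + (1.0 - zeta) * np.exp(-b * d_fast)

def fit_biexp_grid(b, s, d_grid):
    """Grid search over pairs D_S < D_F; zeta is solved by linear least squares per pair."""
    best = None
    for d_fast in d_grid:
        e_fast = np.exp(-b * d_fast)
        for d_slow in d_grid[d_grid < d_fast]:
            # S - e_fast = zeta * (e_slow - e_fast) is linear in zeta
            basis = np.exp(-b * d_slow) - e_fast
            zeta = np.dot(s - e_fast, basis) / np.dot(basis, basis)
            sse = np.sum((s - e_fast - zeta * basis) ** 2)
            if best is None or sse < best[0]:
                best = (sse, zeta, d_slow, d_fast)
    _, zeta, d_slow, d_fast = best
    return zeta, d_slow, d_fast

# Synthetic example (assumed values, not measurements)
rng = np.random.default_rng(1)
b = np.linspace(0.15, 6000.0, 60)                 # s/mm^2
s = biexp(b, 0.03, 0.4e-3, 2.0e-3) + rng.normal(0.0, 1e-4, b.size)
d_grid = np.arange(1, 61) * 0.05e-3               # 0.05e-3 ... 3.0e-3 mm^2/s
zeta, d_slow, d_fast = fit_biexp_grid(b, s, d_grid)
print(f"zeta={zeta:.3f}, D_S={d_slow:.2e}, D_F={d_fast:.2e}, D_S/D_F={d_slow / d_fast:.2f}")
```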

# Protocol 2: Decomposing Multi-Exponential Signals Using Maximum Likelihood Estimation

This methodology is used to measure individual components of a distorted multi-exponential signal, which is common in dynamic testing and calibration [15].

Objective: To accurately estimate the parameters (amplitudes and time constants) of a multi-exponential signal in the presence of noise and other distortions.

Materials:

- Signal Source: A device under test (e.g., an RC circuit generating an exponential stimulus signal).

- Reference Waveform Recorder (RWR): A data acquisition system with known nonlinearity error parameters.

- Software: Optimization software (e.g., in LabVIEW) capable of implementing the Maximum Likelihood (ML) method and, for comparison, Prony's method [15].

Procedure:

- Acquire the multi-exponential signal using the RWR. The signal model is \(x_s(t) = \sum_{i=1}^{n} A_i e^{-B_i t} + C\), where \(A_i\) are amplitudes, \(B_i\) are decay constants, and \(C\) is a DC offset [15].

- Initialization: Use an analytical method like Prony's method to get an initial estimate of the signal parameters \((A_i, B_i)\). This serves as the starting point for the ML optimization [15].

- Optimization: Implement a Maximum Likelihood estimation procedure. This involves a multidimensional optimization that finds the parameter set which maximizes the likelihood of the recorded data, taking into account the known nonlinearity of the RWR. For independent Gaussian noise and an ideal recorder, maximizing the likelihood reduces to minimizing the sum of squared residuals.

- Comparison: Evaluate the performance of the ML method against Prony's method in terms of robustness to superimposed noise, quantization noise, and signal clipping [15].

Expected Outcome: The ML method is expected to show superior performance compared to Prony's method, particularly in conditions of low signal-to-noise ratio and when dealing with non-ideal signal distortions [15].
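A compact sketch of the two-stage procedure follows, under loud simplifying assumptions: uniform sampling starting at t = 0, independent Gaussian noise, and an ideal recorder (the RWR nonlinearity model of [15] is omitted), in which case maximizing the likelihood is equivalent to minimizing the sum of squared residuals. Prony's method treats the offset C as an extra exponential with root z = 1; the refinement is a plain Gauss-Newton loop with step halving. Function names and the parameter layout [A_1, B_1, ..., A_n, B_n, C] are illustrative choices.

```python
import numpy as np

def model(t, theta):
    """x(t) = sum_i A_i exp(-B_i t) + C, with theta = [A_1, B_1, ..., A_n, B_n, C]."""
    amps, rates = theta[0:-1:2], theta[1:-1:2]
    return (amps[:, None] * np.exp(-rates[:, None] * t)).sum(axis=0) + theta[-1]

def jacobian(t, theta):
    """Columns: dx/dA_i and dx/dB_i for each term, then dx/dC."""
    cols = []
    for a, b in zip(theta[0:-1:2], theta[1:-1:2]):
        e = np.exp(-b * t)
        cols += [e, -a * t * e]
    cols.append(np.ones_like(t))
    return np.column_stack(cols)

def prony(x, n, dt):
    """Least-squares Prony estimate of n decays plus a DC offset (root z = 1)."""
    p, k = n + 1, len(x)
    # Linear prediction x[j] = sum_m c_m x[j - m] for j >= p
    h = np.column_stack([x[p - m:k - m] for m in range(1, p + 1)])
    c = np.linalg.lstsq(h, x[p:], rcond=None)[0]
    z = np.roots(np.concatenate(([1.0], -c))).real   # assumes real roots (low noise)
    # Amplitudes from the Vandermonde system x[j] = sum_i amp_i z_i^j
    vander = z[None, :] ** np.arange(k)[:, None]
    amp = np.linalg.lstsq(vander, x, rcond=None)[0]
    dc = int(np.argmin(np.abs(z - 1.0)))             # root closest to 1 is the offset
    idx = [i for i in range(p) if i != dc]
    rates = -np.log(z[idx]) / dt
    theta = []
    for j in np.argsort(-rates):                     # fastest decay first
        theta += [amp[idx[j]], rates[j]]
    return np.array(theta + [amp[dc]])

def refine_least_squares(t, x, theta0, n_iter=100):
    """Gauss-Newton with step halving; the ML estimate for i.i.d. Gaussian noise."""
    theta = np.asarray(theta0, dtype=float).copy()
    sse = np.sum((x - model(t, theta)) ** 2)
    for _ in range(n_iter):
        residual = x - model(t, theta)
        step = np.linalg.lstsq(jacobian(t, theta), residual, rcond=None)[0]
        lam = 1.0
        while lam > 1e-8:
            trial = theta + lam * step
            trial_sse = np.sum((x - model(t, trial)) ** 2)
            if trial_sse < sse:
                theta, sse = trial, trial_sse
                break
            lam *= 0.5
        else:
            break                                    # no decrease found: converged
    return theta

# Usage on synthetic data (assumed parameters, not measurements)
dt = 0.05
t = np.arange(121) * dt
true_theta = np.array([1.0, 4.0, 0.5, 1.0, 0.2])
theta_prony = prony(model(t, true_theta), 2, dt)          # noise-free Prony check
rng = np.random.default_rng(2)
x_noisy = model(t, true_theta) + rng.normal(0.0, 5e-4, t.size)
theta_ml = refine_least_squares(t, x_noisy, 1.2 * true_theta)
```

The refinement starts here from a deliberately perturbed guess; with measured data the Prony estimate plays that role, but plain least-squares Prony can be strongly biased at low signal-to-noise ratio, which is one reason the ML stage is used.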

# Essential Visualizations

# Fermionic Antisymmetry to SNR Decay Pathway

This diagram illustrates the logical relationship between the fundamental property of fermions and the resulting computational challenge.

# Maximum Likelihood Estimation Workflow

This workflow details the process for robust multi-exponential signal decomposition, a key tool for mitigating SNR-related issues in data analysis.

# The Scientist's Toolkit: Research Reagent Solutions

The following table lists key computational and methodological "reagents" essential for research in this field.

| Research Reagent | Function & Explanation |
|---|---|
| Quantum Monte Carlo (QMC) | A class of stochastic algorithms for studying quantum many-body systems. It is directly hampered by the Fermion Sign Problem when applied to fermions [5]. |
| Sorting-Based Antisymmetrization | A deterministic quantum algorithm that prepares antisymmetric states by applying the reverse of a sorting network to a sorted quantum array. It is crucial for initializing fermionic simulations in first quantization [14]. |
| Maximum Likelihood (ML) Estimation | An optimization-based method for measuring components of a multi-exponential signal. It is more robust to noise and distortions compared to analytical methods like Prony's, making it valuable for analyzing noisy decay data [15]. |
| Prony's Method | An analytical technique for the identification of signal components in a multi-exponential model. It is often used to generate initial parameter guesses for more robust optimization routines like ML [15]. |
| Qubitization | A quantum algorithm technique that encodes the Hamiltonian in an exactly implemented quantum walk operator, so the evolution used for phase estimation carries no approximation (e.g., Trotter) error, enhancing the precision of eigenstate preparation [14]. |
| Stimulated Echo Sequence | An NMR pulse sequence used in diffusion experiments. It minimizes signal losses from background field gradients, allowing for cleaner measurement of the diffusion signal decay [16]. |

Troubleshooting Guide: Fermionic Antisymmetry in Computational Methods

This guide addresses common challenges researchers face when dealing with fermionic antisymmetry in computational simulations across quantum chemistry, condensed matter, and warm dense matter physics.

Frequently Asked Questions (FAQs)

Q: My Quantum Monte Carlo (QMC) simulations exhibit exponentially decaying signal-to-noise ratios with increasing system size. What is the cause and how can I mitigate it?

A: You are likely experiencing the Fermion Sign Problem (FSP), a fundamental issue originating from the antisymmetric nature of fermionic wavefunctions [5]. This problem is particularly severe in QMC simulations of Fermi liquids, quantum chemistry systems, nuclear matter, and lattice QCD [5].

- Mitigation Strategy: While a universal solution remains elusive, emerging QMC methodologies show promise. For systems like liquid ³He and warm dense matter, recent algorithmic innovations have effectively reduced the FSP's impact. Focus on developing and applying problem-specific mitigation strategies rather than seeking a general-purpose solution [5].

Q: When using first quantization mapping on a quantum computer, my initial states are not properly antisymmetrized. How can I correctly prepare fermionic Slater determinants?

A: This is a common issue in first-quantization approaches where antisymmetry is not inherent. You can use a deterministic recursive antisymmetrization algorithm [13].

- Solution Protocol: This algorithm iteratively builds antisymmetric states for 2, 3, ..., up to \(N\) particles. It initializes each particle's state independently and does not require ordered input states, unlike sorting-based methods. For a system of \(N\) particles and \(N_s\) single-particle states, it prepares antisymmetrized states using \(O(N^2\sqrt{N_s})\) T-gates, outperforming alternatives when \(N \lesssim \sqrt{N_s}\) [13].

Q: My classical DFT calculations fail for systems with strong static correlation, such as bond-breaking or transition metal complexes. What is a more accurate yet computationally feasible approach?

A: Traditional Kohn-Sham DFT often fails for strongly correlated systems. Consider transitioning to Multiconfiguration Pair-Density Functional Theory (MC-PDFT) [17].

- Implementation Steps: MC-PDFT calculates the total energy by splitting it into:
  - Classical energy (kinetic, nuclear attraction, Coulomb): obtained from a multiconfigurational wave function.
  - Nonclassical energy (exchange-correlation): approximated using a density functional based on the electron density and the on-top pair density.

- Recommended Functional: Use the new MC23 functional, which incorporates kinetic energy density for a more accurate description of electron correlation, significantly improving performance for spin splitting, bond energies, and multiconfigurational systems [17].
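In equation form, the split described above is commonly written as follows. The notation is assumed here rather than taken from [17]: \(V_{nn}\) is the nuclear repulsion, \(h_{pq}\) and \(g_{pqrs}\) are one- and two-electron integrals, \(D_{pq}\) is the one-particle density matrix of the multiconfigurational wave function, and \(E_{\text{ot}}\) is the on-top functional of the density \(\rho\) and the on-top pair density \(\Pi\). The first three terms form the classical energy; in MC23, \(E_{\text{ot}}\) additionally depends on the kinetic energy density \(\tau\).

\[
E_{\text{MC-PDFT}} = V_{nn} + \sum_{pq} h_{pq} D_{pq} + \frac{1}{2} \sum_{pqrs} g_{pqrs} D_{pq} D_{rs} + E_{\text{ot}}[\rho, \Pi]
\]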

Advanced Troubleshooting: Quantum Computing Algorithms

Issue: High gate overhead in fermionic simulations using Jordan-Wigner transformation.

- Diagnosis: The Jordan-Wigner encoding maps fermionic creation/annihilation operators to qubit operators, but introduces non-local string operators (\(\prod_k Z_k\)), which increase circuit depth and gate count [18].

- Solution: Implement a Fermionic SWAP (FSWAP) network [18]. An FSWAP gate exchanges two adjacent fermionic modes together with the required fermionic sign, so a network of them can reorder the modes until interacting pairs are adjacent in the Jordan-Wigner ordering. This avoids long \(Z\) strings and significantly reduces the overall gate complexity required to simulate electron dynamics in molecules.
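A small numerical check of both ingredients, as a sketch under stated conventions: qubit state |1⟩ means an occupied mode, qubit 0 is the leftmost Kronecker factor, and all matrices are real, so the transpose is the adjoint. It builds Jordan-Wigner annihilation operators with their Z strings, verifies the canonical anticommutation relations, and confirms that a two-qubit FSWAP gate exchanges the labels of adjacent modes (a₀ → a₁), the elementary move an FSWAP network repeats. Helper names are illustrative.

```python
from functools import reduce
import numpy as np

I2 = np.eye(2)
Z = np.diag([1.0, -1.0])
LOWER = np.array([[0.0, 1.0], [0.0, 0.0]])  # |0><1|: removes a fermion from the mode

def jw_annihilation(j, n_modes):
    """a_j = Z (x) ... (x) Z (j factors) (x) |0><1| (x) I (x) ... (Jordan-Wigner)."""
    factors = [Z] * j + [LOWER] + [I2] * (n_modes - j - 1)
    return reduce(np.kron, factors)

n = 3
a = [jw_annihilation(j, n) for j in range(n)]
dim = 2 ** n

# Canonical anticommutation relations: {a_i, a_j^dag} = delta_ij, {a_i, a_j} = 0
car_ok = all(
    np.allclose(a[i] @ a[j].T + a[j].T @ a[i], np.eye(dim) * (i == j))
    and np.allclose(a[i] @ a[j] + a[j] @ a[i], 0.0)
    for i in range(n) for j in range(n)
)

# FSWAP on qubits 0 and 1: SWAP with a -1 phase on |11>
FSWAP = np.array([[1, 0, 0, 0],
                  [0, 0, 1, 0],
                  [0, 1, 0, 0],
                  [0, 0, 0, -1]], dtype=float)
F = np.kron(FSWAP, I2)
swap_ok = np.allclose(F @ a[0] @ F.T, a[1]) and np.allclose(F @ a[2] @ F.T, a[2])
print("CAR hold:", car_ok, "| FSWAP relabels modes 0 and 1:", swap_ok)
```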

Issue: Preparing antisymmetric states on quantum hardware with minimal qubit overhead.

- Diagnosis: Projection-based antisymmetrization techniques can have low overlap with the target state for systems with more than a few particles, making them inefficient [13].

- Solution: Employ the measurement-based variant of the recursive antisymmetrization algorithm [13]. This variant preserves a 100% success probability while reducing the quantum circuit's gate cost by roughly a factor of two compared to its non-measurement counterpart.

Comparison of Antisymmetrization Algorithms for Quantum Computers

The following table summarizes the resource requirements for different quantum algorithms that handle fermionic antisymmetry, crucial for selecting the right approach for your experiment.

| Algorithm / Method | Key Principle | Qubit Scaling | Gate Scaling (T-gates) | Best-Suited For |
|---|---|---|---|---|
| Recursive Antisymmetrization [13] | Iteratively builds antisymmetric states from independent particle states. | Requires \(O(\sqrt{N_s})\) dirty ancillae. | \(O(N^2\sqrt{N_s})\) | Systems where \(N \lesssim \sqrt{N_s}\); no need for ordered inputs. |
| Sorting-Based Antisymmetrization [13] | Requires input states where particle labels are ordered (e.g., \(r_1 < \dots < r_N\)). | - | - | Compatible with state-preparation procedures for Slater determinants. |
| Jordan-Wigner Encoding [18] | Maps fermionic operators to qubits via non-local string operators. | Linear in the number of single-particle states \(K\). | High due to non-local \(Z\) strings. | Standard approach in second quantization; foundational for many algorithms. |
| FSWAP Networks [18] | Rearranges qubit ordering to mitigate non-locality of JW strings. | - | Reduces gate complexity relative to JW. | Essential for reducing gate overhead in molecular simulations. |

Performance of Classical Electronic Structure Methods

This table compares the accuracy and computational cost of various classical methods, highlighting where new approaches like MC-PDFT provide advantages.

| Computational Method | Handling of Strong Static Correlation | Typical Computational Cost | Key Limitations |
|---|---|---|---|
| Kohn-Sham DFT (KS-DFT) [17] | Often fails or is inaccurate. | Low to Moderate | Inaccurate for transition metals, bond-breaking, and systems with near-degenerate states. |
| Wave Function-Based Methods [17] | Accurate but computationally prohibitive. | Very High | Infeasible for large systems like proteins or complex materials. |
| Multiconfiguration Pair-Density FT (MC-PDFT) [17] | Excellent, hybrid approach. | Moderate | More complex than KS-DFT but enables studies of large, correlated systems. |
| MC23 Functional [17] | Superior, includes kinetic energy density. | Moderate (similar to MC-PDFT) | Newest method, requires broader adoption and testing. |

Experimental Protocols

Protocol: Deterministic Preparation of Antisymmetric States on a Quantum Computer

Objective: Prepare a fully antisymmetric state of $N$ fermions in a first-quantization mapping, starting from a product state of orthogonal single-particle orbitals [13].

Methodology:

- Initialization: Initialize the quantum register to a product state in which each of the $N$ particles occupies an independent, orthogonal single-particle orbital. No ordering of states is required.

- Ancilla Allocation: Allocate $N-1$ ancilla qubits for intermediate calculations. For larger systems, $O(\sqrt{N_s})$ dirty ancillae are required to achieve the favorable $O(N^2\sqrt{N_s})$ T-gate scaling.

- Recursive Construction:

  - Begin by constructing the properly antisymmetrized state for the first two particles.

  - Iteratively add the $k$-th particle (for $k = 3$ to $N$), each time applying a controlled operation that correctly antisymmetrizes the new particle with the already antisymmetrized state of the first $k-1$ particles.

- Ancilla Disentanglement: After each iterative step, apply unitary operations to disentangle the ancilla qubits from the system qubits. This returns the ancillae to their initial state without the need for measurement.

- Measurement-Based Variant (Alternative): To reduce the gate cost by approximately half, use the measurement-based variant of the algorithm. This variant still succeeds deterministically, i.e., with 100% success probability [13].
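When testing this protocol on a statevector simulator, it helps to know exactly which state the circuit should produce. For orthonormal orbitals $\phi_j$ on an $N_s$-point register, the target has amplitudes $\Psi(r_1,\dots,r_N) = \det[\phi_j(r_i)]/\sqrt{N!}$. The NumPy sketch below builds that reference tensor classically, with one tensor axis per particle register. It is a checking aid under these assumptions, not the recursive circuit of [13]; the function name and the random orbitals are illustrative, and the loop over all $N_s^N$ amplitudes only makes sense for tiny registers.

```python
import itertools
import math

import numpy as np


def antisymmetric_target_state(orbitals: np.ndarray) -> np.ndarray:
    """Reference first-quantized antisymmetric state (hypothetical helper).

    orbitals: shape (N, N_s); row j holds the amplitudes phi_j(r) of one
    orthonormal single-particle orbital on an N_s-point register.
    Returns a tensor of shape (N_s,) * N with
    psi[r_1, ..., r_N] = det[phi_j(r_i)] / sqrt(N!).
    """
    n_particles, n_states = orbitals.shape
    psi = np.zeros((n_states,) * n_particles, dtype=complex)
    for idx in itertools.product(range(n_states), repeat=n_particles):
        # Row i of m holds phi_1(r_i), ..., phi_N(r_i) for particle i at r_i.
        m = orbitals[:, list(idx)].T
        psi[idx] = np.linalg.det(m)
    return psi / math.sqrt(math.factorial(n_particles))


# Example: 3 fermions on an 8-state register (3 qubits per particle).
rng = np.random.default_rng(0)
q, _ = np.linalg.qr(rng.normal(size=(8, 8)) + 1j * rng.normal(size=(8, 8)))
orbitals = q[:, :3].T  # three orthonormal orbitals stored as rows
psi = antisymmetric_target_state(orbitals)
print(np.vdot(psi, psi).real)  # ≈ 1.0 for orthonormal orbitals
```

Repeated positions give two identical rows and hence a zero amplitude, which is the Pauli exclusion principle appearing directly in the reference state.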

Protocol: Calculating Atomic Forces with Quantum-Classical AFQMC (QC-AFQMC)

Objective: Accurately compute atomic-level forces, not only total energies, to model chemical reactivity and reaction pathways in systems such as carbon capture materials [19].

Methodology:

- System Setup: Identify critical points on the potential energy surface where significant changes occur (e.g., near transition states).

- QC-AFQMC Execution: Run the Quantum-Classical Auxiliary-Field Quantum Monte Carlo algorithm on quantum hardware to evaluate the electronic energy and wavefunction at these points.

- Force Calculation: Compute the nuclear forces as the negative gradient of the potential energy with respect to the nuclear coordinates, $\mathbf{F}_I = -\nabla_{\mathbf{R}_I} E$. In the cited demonstration, forces obtained this way were more accurate than those derived from purely classical methods [19].

- Integration into Classical Workflow: Feed the calculated quantum-level forces into a classical molecular dynamics (MD) simulation workflow. This guides the MD simulation along more accurate reaction pathways.

- Analysis: Use the improved trajectories to estimate more accurate reaction rates and aid in the design of efficient materials, such as those for carbon capture [19].
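The force step amounts to $F = -\partial E / \partial R$. When only energies at displaced geometries are available, a central finite difference is the simplest estimator. In the sketch below, a Morse potential with made-up parameters stands in for the QC-AFQMC energy evaluation (`diatomic_morse_energy` is a hypothetical placeholder), so the finite-difference result can be compared with an analytic derivative.

```python
import numpy as np


def diatomic_morse_energy(coords: np.ndarray, d_e: float = 0.17,
                          a: float = 1.0, r_e: float = 1.4) -> float:
    """Hypothetical stand-in for an energy evaluation E(R) (hartree, bohr)."""
    r = np.linalg.norm(coords[0] - coords[1])
    return d_e * (1.0 - np.exp(-a * (r - r_e))) ** 2


def finite_difference_forces(energy_fn, coords: np.ndarray,
                             h: float = 1e-4) -> np.ndarray:
    """F = -dE/dR for every nuclear coordinate via central differences (error O(h^2))."""
    coords = np.asarray(coords, dtype=float)
    forces = np.zeros_like(coords)
    for index in np.ndindex(coords.shape):
        plus, minus = coords.copy(), coords.copy()
        plus[index] += h
        minus[index] -= h
        forces[index] = -(energy_fn(plus) - energy_fn(minus)) / (2.0 * h)
    return forces


# Two atoms on the z axis, stretched beyond the assumed equilibrium r_e = 1.4 bohr.
coords = np.array([[0.0, 0.0, 0.0], [0.0, 0.0, 1.8]])
forces = finite_difference_forces(diatomic_morse_energy, coords)
print(forces)  # equal and opposite z-forces pulling the atoms back together
```

Each force evaluation costs $2 \times 3N_{\text{atoms}}$ energy calls, and with stochastic (Monte Carlo) energies the statistical noise in each difference is amplified by roughly $1/h$, so a larger step or a dedicated gradient estimator may be needed in practice.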

Workflow Visualization

Antisymmetrization Algorithm Strategy

The diagram below illustrates the logical workflow for selecting the appropriate antisymmetrization strategy in quantum simulations, based on the specific requirements of the problem.

Quantum-Classical Force Calculation

This workflow details the hybrid quantum-classical protocol for calculating precise atomic forces, a critical capability for modeling chemical reactions.

The Scientist's Toolkit: Research Reagent Solutions

This table catalogs key computational "reagents" — algorithms, theories, and functionals — essential for tackling fermionic antisymmetry challenges.

| Research 'Reagent' | Function / Purpose | Field of Application |
|---|---|---|
| Recursive Antisymmetrization Algorithm [13] | Deterministically prepares antisymmetric fermionic states in first quantization. | Quantum Computing, Nuclear Physics, Few-Body Systems. |
| MC23 Functional [17] | A density functional for highly accurate simulation of strongly correlated electron systems. | Quantum Chemistry, Materials Science, Catalysis Research. |
| Quantum-Classical AFQMC (QC-AFQMC) [19] | Accurately calculates atomic-level forces for reaction modeling, surpassing classical accuracy. | Molecular Dynamics, Drug Discovery, Carbon Capture Material Design. |
| Fermionic SWAP (FSWAP) Network [18] | Reduces gate complexity in quantum simulations by efficiently rearranging qubits. | Quantum Algorithm Development, Quantum Computational Chemistry. |
| Jordan-Wigner Encoding [18] | Foundational mapping from fermionic operators to qubit operators for quantum simulation. | Quantum Computing, Quantum Chemistry Simulations. |

Emerging Methodologies: From Neural Networks to Quantum Circuits

Frequently Asked Questions (FAQs)

Q1: What is the core advantage of using a global spacetime optimization approach over traditional methods for solving the Time-Dependent Schrödinger Equation (TDSE)?

The primary advantage lies in its non-sequential formulation. Traditional methods like time-dependent variational principle (TDVP) or real-time time-dependent density functional theory (RT-TDDFT) rely on step-by-step time propagation, where numerical errors accumulate over the simulation period, degrading long-time accuracy [20]. In contrast, the global spacetime approach, such as the Fermionic Antisymmetric Spatio-Temporal Network (FAST-Net), treats time as an explicit input variable alongside spatial coordinates [21] [20]. This frames the TDSE as a single global optimization problem over the entire spacetime domain, enabling highly parallelizable training and mitigating the issue of cumulative errors associated with sequential propagation [21] [20].

Q2: How do neural network methods enforce the antisymmetry requirement for fermionic wavefunctions?

Enforcing fermionic antisymmetry is a central challenge. Modern neural network approaches employ several strategies:

- Antisymmetric Feature Encoding: Methods like the one proposed by Fu introduce a parity-graded representation, lifting the antisymmetric wavefunction into an enlarged space using antisymmetric feature variables (denoted $\eta$) that encode the exchange statistics. The wavefunction is then represented as a continuous function of both symmetric and antisymmetric features, guaranteeing antisymmetry by construction [4].

- Slater Determinant Framework: Prominent architectures like the FermiNet use neural networks to learn complex, permutation-equivariant functions that are then fed into a Slater determinant structure. This structure automatically ensures the wavefunction is antisymmetric under particle exchange [22] [20].

- Spin Mappings: An alternative approach involves mapping the fermionic problem to an equivalent spin system using transformations like the Jordan-Wigner or Bravyi-Kitaev encoding. A neural network quantum state (e.g., a Restricted Boltzmann Machine) can then represent the state of the spin system [22].
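A minimal illustration of the Slater-determinant strategy, without any neural network: evaluate $N$ orbitals at $N$ particle positions, form $\Phi_{ij} = \phi_j(x_i)$, and take the determinant. Exchanging two particles exchanges two rows of $\Phi$, so the sign flip comes for free. The 1D orbitals $x^j e^{-x^2/2}$ used here are hypothetical stand-ins; in FermiNet-like models each orbital also depends, in a permutation-equivariant way, on the positions of the other electrons, which keeps the determinant antisymmetric.

```python
import numpy as np


def orbital_matrix(x: np.ndarray, n_orb: int) -> np.ndarray:
    """Hypothetical 1D orbitals phi_j(x) = x**j * exp(-x**2 / 2); entry [i, j] = phi_j(x_i)."""
    powers = np.arange(n_orb)
    return x[:, None] ** powers[None, :] * np.exp(-x[:, None] ** 2 / 2)


def slater_psi(x: np.ndarray) -> float:
    """Antisymmetric wavefunction psi(x_1, ..., x_N) = det[phi_j(x_i)]."""
    return np.linalg.det(orbital_matrix(x, len(x)))


x = np.array([-0.7, 0.2, 1.1])
swapped = x[[1, 0, 2]]          # exchange particles 1 and 2
coincident = np.array([0.5, 0.5, 1.0])
print(slater_psi(x), slater_psi(swapped))  # equal magnitude, opposite sign
print(slater_psi(coincident))              # ~0: two particles at the same point
```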

Q3: What are the common failure modes when the training loss for a spacetime network fails to converge?

Non-convergence can stem from several sources:

- Inadequate Network Expressivity: The neural network may lack the capacity to represent the complex, high-frequency patterns of the target wavefunction. This can be addressed by increasing the network's width or depth, though this must be balanced against computational cost [23].

- Incorrect Antisymmetry Handling: Improper implementation of the antisymmetric constraint can lead to unphysical wavefunctions and failed training. Verifying the antisymmetry property of the network output for permuted inputs is crucial [5] [4].

- Poorly Conditioned Loss Landscape: The global spacetime loss function, often based on the PDE residual, can be difficult to optimize. Techniques like loss weighting (e.g., assigning higher weights to initial conditions) or gradient clipping may be necessary to stabilize training [20].

- Insufficient Sampling: The training may not adequately sample critical regions of the spacetime domain, such as areas where the potential changes rapidly, leading to an incomplete solution.

Q4: Can these methods be applied to quantum systems relevant to drug discovery, such as large organic molecules?

While the field is advancing rapidly, applying these methods to large molecules used in drug discovery remains a significant challenge. Current demonstrations, like the simulation of a laser-driven H₂ molecule, show promise for small systems [21] [20]. However, the computational cost grows with the number of electrons and the complexity of their interactions. The fermionic sign problem presents a fundamental bottleneck, causing an exponential decay in the signal-to-noise ratio for large systems [5]. Research is focused on developing more efficient representations and more scalable algorithms, but simulating large biomolecules is currently beyond the reach of most ab initio neural network methods.

Troubleshooting Guides

Training Instability and Divergence

Problem: The loss value oscillates wildly or diverges to infinity during training.

| Possible Cause | Diagnostic Steps | Solution |
|---|---|---|
| Improper loss function scaling | Check the relative magnitude of different loss components (e.g., PDE residual vs. initial condition). | Apply adaptive loss weighting to balance the contributions from the TDSE residual, initial conditions, and boundary conditions [20]. |
| Exploding gradients | Monitor gradient norms during training. | Implement gradient clipping or use optimization algorithms designed to handle unstable gradients. |
| High learning rate | Observe if loss increases monotonically. | Reduce the learning rate or use a learning rate scheduler. |
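Two of the fixes in this table, loss weighting and gradient clipping, are simple enough to show directly. The framework-free sketch below combines loss components with weights and rescales a list of gradient arrays so that their joint (global) L2 norm does not exceed a threshold. The weighting rule shown, inverse of each term's current magnitude, is only one hypothetical choice of adaptive weighting, not the scheme used in [20]; all function names are illustrative.

```python
import numpy as np


def weighted_loss(terms: dict, weights: dict) -> float:
    """Weighted sum of loss components, e.g. {'pde': ..., 'ic': ..., 'bc': ...}."""
    return sum(weights[name] * value for name, value in terms.items())


def inverse_magnitude_weights(terms: dict, eps: float = 1e-12) -> dict:
    """Hypothetical adaptive rule: weight each term by 1 / its current value,
    rescaled so the weights sum to the number of terms. Every weighted term
    then contributes (almost) equally to the total loss."""
    raw = {name: 1.0 / (value + eps) for name, value in terms.items()}
    scale = len(raw) / sum(raw.values())
    return {name: w * scale for name, w in raw.items()}


def clip_by_global_norm(grads: list, max_norm: float) -> list:
    """Rescale all gradient arrays together if their joint L2 norm exceeds max_norm."""
    total = np.sqrt(sum(np.sum(np.abs(g) ** 2) for g in grads))
    if total <= max_norm:
        return grads
    factor = max_norm / total
    return [g * factor for g in grads]


terms = {"pde": 10.0, "ic": 0.1, "bc": 1.0}
weights = inverse_magnitude_weights(terms)
print(weighted_loss(terms, weights))
clipped = clip_by_global_norm([np.array([3.0, 4.0]), np.array([12.0])], max_norm=1.3)
```

In an autodiff framework such weights would normally be treated as constants (no gradient flows through them) and refreshed only every few steps, so the optimizer does not simply shrink the weights instead of the residuals.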

Violation of Physical Symmetries

Problem: The simulated wavefunction does not respect fermionic antisymmetry, leading to unphysical results.

Diagnostic Flowchart:

Solutions:

- Architecture Fix: Ensure your network uses a proven antisymmetric architecture. For a minimal representation, implement a parity-graded network that takes symmetric ($\xi$) and antisymmetric ($\eta$) features as input [4]. Alternatively, use a Slater determinant of neural-network-generated orbitals [22] [20].

- Feature Verification: If using a custom antisymmetric feature map ($\eta$), verify that it is continuous and satisfies $\eta(r_1, r_2) = -\eta(r_2, r_1)$ for a 2-particle system. A faulty feature map will not properly encode the fermionic statistics [4].
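For two particles in one dimension, this verification is easy to script. A minimal choice is the antisymmetric feature $\eta = x_1 - x_2$ together with symmetric features $\xi = (x_1 + x_2, (x_1 - x_2)^2)$; any wavefunction of the form $\psi = \eta \, f(\xi)$ is then antisymmetric by construction, whatever $f$ is. This is a hypothetical toy that mirrors the structure described in [4], not the construction from that work; `f` below stands in for a neural network.

```python
import numpy as np


def features(x1, x2):
    """Return symmetric features xi and the antisymmetric feature eta."""
    xi = np.stack([x1 + x2, (x1 - x2) ** 2], axis=-1)  # unchanged by x1 <-> x2
    eta = x1 - x2                                       # flips sign under x1 <-> x2
    return xi, eta


def f(xi):
    """Stand-in for an arbitrary network acting on symmetric features."""
    return np.tanh(xi @ np.array([0.3, -0.8]) + 0.1) + 2.0


def psi(x1, x2):
    xi, eta = features(x1, x2)
    return eta * f(xi)


rng = np.random.default_rng(1)
x1, x2 = rng.normal(size=100), rng.normal(size=100)
xi_a, eta_a = features(x1, x2)
xi_b, eta_b = features(x2, x1)
print(np.allclose(eta_a, -eta_b), np.allclose(xi_a, xi_b))  # True True
print(np.allclose(psi(x1, x2), -psi(x2, x1)))               # True
```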

Inaccurate Long-Time Dynamics

Problem: The simulation is accurate for short times but deviates significantly from expected behavior at longer times.

| Issue | Mitigation Strategy |
|---|---|
| Insufficient network capacity | Increase the size (width/depth) of the network or the number of determinants in a FermiNet-like approach to enhance its expressive power [22]. |
| Inadequate training sampling | Ensure that the training points (collocation points) in the spacetime domain sufficiently cover regions of dynamical interest, especially at later times. |
| Residual cumulative error | While global optimization reduces error accumulation, it does not eliminate it. Consider using a hybrid approach or breaking the long-time simulation into smaller, coupled spacetime windows [20]. |

High Memory and Computational Demand

Problem: The model requires more GPU/CPU memory than is available, or training is prohibitively slow.

Action Plan:

- Reduce Batch Size: Lower the batch size of collocation points during training. This is the most straightforward way to reduce memory usage.

- Use Mixed Precision: Train the model using 16-bit floating-point precision (if supported by your hardware) to halve memory consumption and potentially speed up computation.

- Distributed Training: Implement model or data parallelism to distribute the computational load across multiple GPUs.

- Optimize Network: Explore more parameter-efficient network architectures. The search for minimal representations, such as those with feature dimensions scaling as $D \sim N$, is an active area of research that can alleviate computational burdens [4].

Research Reagent Solutions

The table below lists key computational "reagents" and their functions for implementing global spacetime optimization for the fermionic TDSE.

| Reagent / Component | Function & Explanation |
|---|---|
| Spatiotemporal Network (e.g., FAST-Net) | The core neural network architecture that takes spatial coordinates and time (r, t) as input and outputs the complex-valued wavefunction. It provides a unified representation for the entire dynamics [21] [20]. |
| Antisymmetric Feature Map ($\eta$) | A mathematically defined function that encodes the fermionic exchange statistics. It maps particle coordinates to a space where the wavefunction's sign structure can be represented simply, acting as a "signature" for particle permutations [4]. |
| Global Spacetime Loss | The objective function for training, typically the squared L2 norm of the TDSE residual. It measures how well the network satisfies the quantum dynamics law across the entire domain, avoiding sequential time-stepping [21] [20]. |
| Fermion-to-Spin Mapper | An algorithmic tool (e.g., Jordan-Wigner transformation) that converts the fermionic Hamiltonian into an equivalent spin Hamiltonian. This allows the use of powerful spin-based neural network states [22]. |
| Adaptive Sampler | A method for selecting collocation points (r, t) within the spacetime domain during training. Effective sampling is critical for efficiently learning the solution, especially in regions with strong interactions [23]. |
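One common way to build an adaptive sampler is residual-based resampling: draw a large pool of candidate collocation points, evaluate the PDE residual on them, and keep points with probability proportional to a power of the residual magnitude. The sketch below assumes a user-supplied residual function (here a hypothetical `toy_residual`) and mixes in a fraction of uniform points so that low-residual regions are never abandoned entirely. It is one possible design, not the sampler of [23].

```python
import numpy as np


def adaptive_collocation(residual_fn, low, high, n_points, pool_factor=10,
                         power=1.0, uniform_fraction=0.2, rng=None):
    """Draw collocation points with probability proportional to |residual|**power.

    low, high: corners of the box-shaped spacetime domain, e.g. (x1, x2, t).
    residual_fn: callable mapping an (M, d) array of points to M residuals.
    The last n_uniform returned points are purely uniform.
    """
    rng = np.random.default_rng() if rng is None else rng
    low, high = np.asarray(low, dtype=float), np.asarray(high, dtype=float)
    n_uniform = int(round(uniform_fraction * n_points))
    n_adaptive = n_points - n_uniform

    pool = rng.uniform(low, high, size=(pool_factor * n_adaptive, low.size))
    weights = np.abs(residual_fn(pool)) ** power + 1e-12  # avoid zero probabilities
    chosen = rng.choice(len(pool), size=n_adaptive, replace=False,
                        p=weights / weights.sum())
    uniform = rng.uniform(low, high, size=(n_uniform, low.size))
    return np.concatenate([pool[chosen], uniform], axis=0)


def toy_residual(points):
    """Fake residual that is large near the final time t = 1."""
    return np.exp(-((points[:, 2] - 1.0) ** 2) / 0.01)


low, high = [-5.0, -5.0, 0.0], [5.0, 5.0, 1.0]
points = adaptive_collocation(toy_residual, low, high, n_points=1000,
                              rng=np.random.default_rng(0))
print(points.shape, points[:800, 2].mean())  # adaptive points cluster near t = 1
```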

Experimental Protocol: Benchmarking on a Model System

This protocol outlines how to benchmark a global spacetime optimization method on two interacting fermions in a time-dependent harmonic trap, the test system used in foundational studies [21] [20].

Workflow Diagram:

Step-by-Step Procedure:

System Definition and Reference Data Generation

- Hamiltonian: Set up the Hamiltonian for two fermions in a 1D harmonic trap with potential $V(x) = \frac{1}{2} m\,\omega(t)^2 x^2$, where $\omega(t)$ is a time-varying frequency.

- Initial State: Prepare the initial wavefunction as the antisymmetric ground state of the trap at $t = 0$.

- Reference Data: Use a highly accurate conventional numerical solver (e.g., split-operator or high-order TDCI) to propagate the system up to a final time $T$. Store the wavefunction and observables (e.g., density, energy) at several time points; these serve as the ground truth for benchmarking [20].
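For the initial state, a small grid calculation is enough to produce a reference. The sketch below discretizes each coordinate on a uniform grid (three-point finite differences, units $\hbar = m = 1$), adds an optional soft-Coulomb repulsion $g/\sqrt{(x_1 - x_2)^2 + a^2}$ as an assumed interaction model, and diagonalizes the Hamiltonian restricted to the antisymmetric subspace spanned by $(|i,j\rangle - |j,i\rangle)/\sqrt{2}$ with $i < j$. The grid size, box length, and interaction form are illustrative choices, and the dense matrices limit this to small grids.

```python
import numpy as np


def antisymmetric_ground_state(n_grid=40, box=6.0, omega=1.0, g=0.0, a=1.0):
    """Lowest antisymmetric eigenstate of two particles in a 1D harmonic trap.

    Units: hbar = m = 1. The soft-Coulomb term g / sqrt((x1 - x2)**2 + a**2) is an
    assumed interaction model; g = 0 gives non-interacting fermions.
    Returns (energy, x, psi) with psi[i, j] ~ Psi(x_i, x_j), normalized on the grid.
    """
    x = np.linspace(-box, box, n_grid)
    dx = x[1] - x[0]
    off = np.full(n_grid - 1, 1.0)
    lap = (np.diag(off, -1) - 2.0 * np.eye(n_grid) + np.diag(off, 1)) / dx**2
    h1 = -0.5 * lap + np.diag(0.5 * omega**2 * x**2)  # three-point stencil

    eye = np.eye(n_grid)
    x1, x2 = np.meshgrid(x, x, indexing="ij")
    v_int = g / np.sqrt((x1 - x2) ** 2 + a**2)
    # Two-particle H on the flattened index i * n_grid + j.
    h2 = np.kron(h1, eye) + np.kron(eye, h1) + np.diag(v_int.ravel())

    # Orthonormal antisymmetric basis vectors (|i,j> - |j,i>) / sqrt(2), i < j.
    pairs = [(i, j) for i in range(n_grid) for j in range(i + 1, n_grid)]
    basis = np.zeros((n_grid * n_grid, len(pairs)))
    for col, (i, j) in enumerate(pairs):
        basis[i * n_grid + j, col] = 1.0 / np.sqrt(2.0)
        basis[j * n_grid + i, col] = -1.0 / np.sqrt(2.0)

    energies, vecs = np.linalg.eigh(basis.T @ h2 @ basis)
    psi = (basis @ vecs[:, 0]).reshape(n_grid, n_grid)
    return energies[0], x, psi / dx  # sum(|psi|**2) * dx**2 == 1


e0, x, psi0 = antisymmetric_ground_state()
e_int, _, _ = antisymmetric_ground_state(g=1.0)
print(e0, e_int)
```

For $g = 0$ the energy should be close to the exact non-interacting value $0.5 + 1.5 = 2$ (in units of $\hbar\omega$), which is a useful sanity check before turning on the interaction or the time dependence of $\omega(t)$.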

Network Implementation

- Architecture: Implement a neural network $\Psi(r_1, r_2, t; W)$, where $W$ denotes the network parameters. The architecture must enforce antisymmetry, $\Psi(r_1, r_2, t) = -\Psi(r_2, r_1, t)$. This can be achieved using an antisymmetric feature map [4] or, for this small system, a simple Slater determinant structure.

- Loss Function: Define the loss $L$ as

  $$L = \lVert i\,\partial_t \Psi - H\Psi \rVert^2 + \lambda_{ic} \lVert \Psi(t=0) - \Psi_0 \rVert^2 + \lambda_{bc} L_{bc},$$

  where $L_{bc}$ is the boundary-condition loss and $\lambda_{ic}$ and $\lambda_{bc}$ are weighting coefficients for the initial and boundary conditions, respectively [21] [20].
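The loss above can be prototyped without a neural network by plugging in a known solution. The sketch assumes a static trap ($\omega = 1$), no interaction, and units $\hbar = m = 1$, and uses the exact stationary state $\Psi(x_1, x_2, t) = (x_1 - x_2)\, e^{-(x_1^2 + x_2^2)/2}\, e^{-iEt}$ with $E = 2$. Central finite differences stand in for the automatic differentiation a real implementation would use, the boundary term is omitted, and all function names are hypothetical. A candidate with the right initial state but the wrong phase velocity is included to show that the residual term detects it.

```python
import numpy as np


def exact_psi(x1, x2, t, energy=2.0):
    """(x1 - x2) exp(-(x1^2 + x2^2)/2) exp(-i E t): exact for E = 2 (unnormalized)."""
    return (x1 - x2) * np.exp(-(x1**2 + x2**2) / 2) * np.exp(-1j * energy * t)


def tdse_residual(psi, x1, x2, t, omega=1.0, h=1e-3):
    """i dPsi/dt - H Psi with H = sum_k [-(1/2) d^2/dx_k^2 + (1/2) omega^2 x_k^2].

    Central finite differences stand in for automatic differentiation.
    """
    center = psi(x1, x2, t)
    dpsi_dt = (psi(x1, x2, t + h) - psi(x1, x2, t - h)) / (2 * h)
    lap = (psi(x1 + h, x2, t) + psi(x1 - h, x2, t)
           + psi(x1, x2 + h, t) + psi(x1, x2 - h, t) - 4 * center) / h**2
    h_psi = -0.5 * lap + 0.5 * omega**2 * (x1**2 + x2**2) * center
    return 1j * dpsi_dt - h_psi


def spacetime_loss(psi, psi0, x1, x2, t, lambda_ic=10.0):
    """Monte Carlo estimate of ||i dPsi/dt - H Psi||^2 + lambda_ic ||Psi(t=0) - Psi_0||^2."""
    pde = np.mean(np.abs(tdse_residual(psi, x1, x2, t)) ** 2)
    ic = np.mean(np.abs(psi(x1, x2, 0.0) - psi0(x1, x2)) ** 2)
    return pde + lambda_ic * ic


def psi0(x1, x2):
    return exact_psi(x1, x2, 0.0)


def wrong_psi(x1, x2, t):
    """Correct initial state, wrong phase velocity (E = 1.5 instead of 2)."""
    return exact_psi(x1, x2, t, energy=1.5)


rng = np.random.default_rng(0)
x1, x2 = rng.uniform(-4.0, 4.0, size=(2, 2000))  # both particle coordinates
t = rng.uniform(0.0, 5.0, size=2000)
print(spacetime_loss(exact_psi, psi0, x1, x2, t))  # ~0: only finite-difference error
print(spacetime_loss(wrong_psi, psi0, x1, x2, t))  # clearly non-zero
```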

Training Loop

- Sampling: Randomly sample a large batch of points $(r_1, r_2, t)$ from the entire spacetime domain $[X_{min}, X_{max}]^2 \times [0, T]$.

- Optimization: Use a gradient-based optimizer (e.g., Adam) to minimize the loss $L$ with respect to the network parameters $W$. The gradients are computed via automatic differentiation.

Validation and Analysis

- Quantitative Comparison: Calculate the mean absolute error (MAE) of the particle density $n(x, t)$ and the total energy $E(t)$ between the network prediction and the reference data.

- Symmetry Check: Explicitly verify that the final learned wavefunction is antisymmetric by testing with permuted inputs.

- Long-time Stability: Assess the accuracy of the simulation at the final time $T$ to evaluate how well the method mitigates error accumulation.
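The validation metrics can be computed directly on a grid. For a normalized two-fermion wavefunction $\psi(x_1, x_2)$, the one-body density is $n(x) = 2\int |\psi(x, x')|^2\,dx'$, which integrates to 2. The sketch below evaluates it at one time slice, computes the density MAE, and measures the violation of $\psi(x_1, x_2) = -\psi(x_2, x_1)$. The grid, the reference (the non-interacting ground state with $\omega = 1$), and the distorted "model" state are illustrative assumptions; repeating the calculation over the stored time points gives the $n(x, t)$ error up to $T$.

```python
import numpy as np


def one_body_density(psi: np.ndarray, dx: float) -> np.ndarray:
    """n(x_i) = 2 * sum_j |psi[i, j]|^2 dx for a normalized two-fermion grid state."""
    return 2.0 * np.sum(np.abs(psi) ** 2, axis=1) * dx


def density_mae(psi_model: np.ndarray, psi_ref: np.ndarray, dx: float) -> float:
    """Mean absolute error of n(x) at one time slice, averaged over grid points."""
    return float(np.mean(np.abs(one_body_density(psi_model, dx)
                                - one_body_density(psi_ref, dx))))


def antisymmetry_error(psi: np.ndarray) -> float:
    """max |psi(x1, x2) + psi(x2, x1)| / max |psi|; zero for an exactly fermionic state."""
    return float(np.max(np.abs(psi + psi.T)) / np.max(np.abs(psi)))


# Reference: non-interacting ground state on a grid; "model": a slightly distorted copy.
x = np.linspace(-6.0, 6.0, 121)
dx = x[1] - x[0]
x1, x2 = np.meshgrid(x, x, indexing="ij")
ref = (x1 - x2) * np.exp(-(x1**2 + x2**2) / 2)
ref /= np.sqrt(np.sum(ref**2) * dx**2)
model = ref * (1.0 + 0.05 * np.exp(-(x1**2 + x2**2)))  # symmetric factor: still antisymmetric
model /= np.sqrt(np.sum(model**2) * dx**2)
print(density_mae(model, ref, dx), antisymmetry_error(model))
```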

Frequently Asked Questions (FAQs)

Q1: What is the primary purpose of using an explicitly antisymmetrized neural network layer in variational Monte Carlo (VMC) simulations? The primary purpose is to serve as a diagnostic tool to better understand and improve the expressiveness of the antisymmetric part of a neural wavefunction ansatz. Replacing the standard determinant layer in architectures like the FermiNet with a generic antisymmetric (GA) layer, which is the explicit antisymmetrization of a neural network, allows researchers to probe the limitations of conventional sum-of-determinants structures. It was found that while a generic antisymmetric layer can accurately model ground states, a factorized version does not outperform the standard FermiNet, suggesting the sum-of-products structure itself is a key limiting factor [24] [25].

Q2: My model's energy estimate is consistently higher than the exact ground state. Is the error likely from the antisymmetric layer or the equivariant backflow? Pinpointing the source of error can be challenging. However, you can perform an ablation study using a Generic Antisymmetric (GA) block. The GA block is constructed to be a universal approximator for antisymmetric functions by design. If replacing your standard antisymmetric layer with a GA block (while keeping the backflow the same) significantly reduces the energy error, it indicates that the expressiveness of your original antisymmetric layer was a primary bottleneck. If the error persists, the issue likely lies in the quality of the permutation-equivariant features provided by the backflow transformation [24].

Q3: Are there ways to implement antisymmetrization that are more efficient than the factorial-cost explicit sum over permutations? Yes, recent research explores alternative approaches. One line of inquiry shows that the function expressed by a 2-layer GA block can be decomposed into a sum of determinants, potentially offering a more computationally tractable implementation [25]. Furthermore, in the context of quantum computing for first-quantized simulations, deterministic recursive algorithms have been devised that prepare antisymmetric states without a full factorial scaling, though they require ancilla qubits [13]. For classical VMC, using a single full determinant instead of a product of smaller determinants has also been shown to improve performance significantly, sometimes outperforming models with many standard determinants [24].
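The factorial cost mentioned in this answer comes from the explicit antisymmetrizer $\mathcal{A}[f](r_1, \dots, r_N) = \sum_{\sigma} \operatorname{sgn}(\sigma)\, f(r_{\sigma(1)}, \dots, r_{\sigma(N)})$ (up to normalization), which is what a generic antisymmetric layer computes. The sketch below implements it for an arbitrary Python function; `f` is a hypothetical stand-in for a neural network, and the loop over all $N!$ permutations makes the cost explicit.

```python
import itertools

import numpy as np


def permutation_sign(perm) -> int:
    """Sign of a permutation given as a tuple of indices (via cycle decomposition)."""
    perm = list(perm)
    sign, seen = 1, [False] * len(perm)
    for start in range(len(perm)):
        if seen[start]:
            continue
        length, j = 0, start
        while not seen[j]:
            seen[j] = True
            j = perm[j]
            length += 1
        if length % 2 == 0:  # even-length cycles are odd permutations
            sign = -sign
    return sign


def antisymmetrize(f, coords: np.ndarray) -> float:
    """Explicit antisymmetrization: signed sum over all N! orderings of the particle rows."""
    n = coords.shape[0]
    return sum(permutation_sign(p) * f(coords[list(p)])
               for p in itertools.permutations(range(n)))


rng = np.random.default_rng(0)
w = rng.normal(size=12)


def f(coords):
    """Hypothetical non-symmetric 'network': tanh of a fixed linear map."""
    return np.tanh(coords.ravel() @ w)


coords = rng.normal(size=(4, 3))  # 4 particles in 3D: 4! = 24 terms
value = antisymmetrize(f, coords)
swapped = antisymmetrize(f, coords[[1, 0, 2, 3]])
print(value, swapped)  # equal magnitude, opposite sign
```

Already at $N = 10$ this is 3,628,800 network evaluations per configuration, which is why determinant-based decompositions such as the one in [25] are attractive.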

Q4: Can a single neural network wavefunction be applied to multiple system configurations, such as different molecular geometries or supercell sizes? Yes, the development of transferable neural wavefunctions is an active and promising area of research. By conditioning a single neural network ansatz not only on electron positions but also on system parameters (like nuclear coordinates, boundary conditions, or supercell size), you can optimize one wavefunction across multiple related systems. This approach can drastically reduce the total optimization steps required. For example, a network pretrained on a 2x2x2 supercell can be fine-tuned for a 3x3x3 supercell with 50x fewer steps than training from scratch [26].

Q5: How does the "full determinant mode" in FermiNet differ from the standard implementation, and when should I use it? In the standard FermiNet, each term of the determinant expansion is a product of determinants, one for each spin channel. The full determinant mode replaces each such product with a single, combined determinant that includes orbitals for all electrons. This structure is more expressive. Consider using it especially for strongly correlated systems, where the standard FermiNet struggles even with multiple determinants. For instance, a full single-determinant FermiNet has been shown to outperform a standard 64-determinant FermiNet when simulating a nitrogen molecule at a dissociating bond length [24].
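The relationship between the two modes can be checked numerically. In the product mode, spin-up orbitals are evaluated only on spin-up electrons and spin-down orbitals only on spin-down electrons, which is equivalent to one $N \times N$ determinant whose off-diagonal spin blocks are zero; the full determinant mode allows those blocks to be non-zero, so it contains the product mode as a special case. The random matrix below is a hypothetical stand-in for network-generated orbital values at a single electron configuration.

```python
import numpy as np

rng = np.random.default_rng(0)
n_up, n_down = 3, 2
n = n_up + n_down

# Orbital matrix: rows = electrons (spin-up first), columns = orbitals.
full = rng.normal(size=(n, n))

# Product mode: only the diagonal spin blocks are used.
up_block = full[:n_up, :n_up]
down_block = full[n_up:, n_up:]
product_mode = np.linalg.det(up_block) * np.linalg.det(down_block)

# The same value as a single determinant with the off-diagonal blocks zeroed.
block_diag = full.copy()
block_diag[:n_up, n_up:] = 0.0
block_diag[n_up:, :n_up] = 0.0
print(np.isclose(product_mode, np.linalg.det(block_diag)))  # True

# Full determinant mode: the off-diagonal blocks also contribute.
full_mode = np.linalg.det(full)
print(product_mode, full_mode)
```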

Troubleshooting Guides

Problem: High Variance or Instability in Energy Calculations

Symptoms: The estimated energy during VMC optimization fluctuates wildly, fails to converge, or is significantly off benchmark values.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Insufficient expressivity of the antisymmetric layer | Replace your antisymmetric layer with a simple Generic Antisymmetric (GA) block for a small system. A drop in energy suggests this was the issue [24]. | Switch to a more expressive antisymmetric layer, such as the full determinant mode [24], or increase the number of determinants in a standard ansatz. |
| Poorly optimized equivariant backflow | Check the convergence of the features entering the determinant/antisymmetric layer. | Ensure the backflow network is deep enough and that training is stable. Using transfer learning from a pre-trained model on a smaller system can provide a better initialization [26]. |
| Inadequate sampling | Monitor the statistical uncertainty of your energy estimates. | Increase the number of Monte Carlo samples per optimization step. Check for pathologies in the electron configurations being sampled. |

Problem: Prohibitive Computational Cost in Large Systems

Symptoms: Optimization steps take too long, making the study of larger molecules or solids impractical.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Factorial scaling of explicit antisymmetrization | Profile your code to confirm the antisymmetrization step is the bottleneck. | For a GA block, utilize its theoretical equivalence to a sum of determinants for a more efficient implementation [25]. |
| Redundant re-optimization for similar systems | You are running separate calculations for each geometry, supercell size, or twist angle. | Implement a transferable wavefunction approach. Train a single network that takes system parameters as additional input, then fine-tune for specific cases [26]. |
| Inefficient determinant calculation | The cost of calculating numerous large determinants dominates runtime. | Explore the use of a full determinant instead of a product of determinants, as a single, more expressive determinant can be more efficient than many smaller ones [24]. |

Problem: Failure to Capture Strong Correlation

Symptoms: The model fails to accurately describe bond dissociation, systems with near-degeneracies, or other strongly correlated phenomena.

| Potential Cause | Diagnostic Steps | Solution |
|---|---|---|
| Limitations of the sum-of-products structure | Compare the performance of a single-determinant FermiNet vs. its full-determinant variant on your system. | Adopt the full determinant mode in your architecture. This has been shown to be highly effective for challenging systems like dissociating N₂ [24]. |
| Lack of multi-reference character | The model may be collapsing to a single Slater determinant reference. | Increase the number of determinants (K) in your ansatz. Alternatively, a well-designed GA block can inherently capture more complex correlations [24] [25]. |

Experimental Protocols & Data

Protocol: Benchmarking Antisymmetric Layers on a Small Molecule

This protocol outlines how to compare the performance of different antisymmetric layers on a diatomic molecule like Nitrogen (N₂) at a dissociating bond length.

1. System Setup

- Molecule: N₂

- Geometry: Set the bond length to a dissociating value, e.g., 4.0 Bohr [24].

- Reference Method: A highly accurate method such as r12-MR-ACPF, used to obtain the reference energy.

2. Wavefunction Ansätze

- Ansatz A: Standard FermiNet with a large number of determinants (e.g., 64).

- Ansatz B: FermiNet with a full determinant mode (single, combined determinant).

- Ansatz C: FermiNet with a Generic Antisymmetric (GA) block.

3. Optimization Procedure

- Use the VMC method to optimize the parameters of each ansatz.

- For each, record the final energy estimate and the number of optimization steps required to reach convergence.

4. Quantitative Results Table

The table below summarizes expected outcomes from such a benchmark, based on published findings [24].

| Wavefunction Ansatz | Number of Determinants / Antisymmetrizers | Final Energy (a.u.) | Error vs. Reference (kcal/mol) | Relative Optimization Cost |
|---|---|---|---|---|
| Standard FermiNet | 64 | -109.45 | >1.0 | 1.0x (Baseline) |
| Full Determinant FermiNet | 1 (Full) | -109.49 | ~0.4 | ~1.0x |
| FermiNet with GA-2 Block | 1 (Antisymmetrized NN) | ~-109.50 | <0.4 | >1.0x (but system-dependent) |

Protocol: Implementing a Transferable Wavefunction for Solids

This protocol describes how to train a single neural wavefunction for a solid (e.g., LiH) that is transferable across different supercell sizes [26].

1. System Setup

- Solid: Lithium Hydride (LiH).

- Supercells: 2x2x2 (32 electrons) and 3x3x3 (108 electrons) supercells.

- Boundary Conditions: Include multiple twist angles (e.g., a Monkhorst-Pack grid) in the training.

2. Network Architecture

- Design a neural network ansatz that takes as input not only the electron positions r but also system parameters p. The parameter vector p should encode:

  - Supercell size

  - Twist vector k

  - Ionic positions (or lattice vectors)

3. Two-Stage Optimization

- Stage 1 (Pretraining): Optimize the network on all available data from the smaller 2x2x2 supercells and multiple twists.

- Stage 2 (Fine-tuning): Take the pretrained network and fine-tune it on the larger 3x3x3 supercell.

4. Expected Outcome

- The fine-tuned model for the 3x3x3 supercell should reach a low-energy, accurate state in a fraction (e.g., 1/50th) of the optimization steps required if training from scratch [26].

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in the Context of Antisymmetric Neural Wavefunctions |
|---|---|
| Generic Antisymmetric (GA) Block | A diagnostic "reagent": The explicit antisymmetrization of a neural network used to test if the antisymmetric layer is a bottleneck in expressivity [24] [25]. |
| Full Determinant Mode | An architectural "reagent": Replaces the product-of-determinants in ansatzes like FermiNet with a single, large determinant, often improving performance for strongly correlated systems without increasing the determinant count [24]. |
| Transferable Wavefunction Framework | An efficiency "reagent": A single neural network conditioned on system parameters (geometry, supercell size, twist), enabling transfer learning and drastic reduction in optimization cost for new system configurations [26]. |
| Permutation-Equivariant Backflow | A foundational "reagent": A neural network that transforms single-particle coordinates into a set of permutation-equivariant features, ensuring the input to the antisymmetric layer respects the indistinguishability of electrons [24] [26]. |
| Determinant Decomposition Theorem | A theoretical "reagent": The result showing that a 2-layer GA block can be represented as a sum of determinants, providing a potential path to implement general antisymmetrization without factorial cost [25]. |

Troubleshooting Guide: Common Implementation Challenges

Problem 1: Algorithm Fails to Produce Antisymmetric Output State

- Symptoms: The final wavefunction does not change sign under particle exchange; measurements of observables yield results inconsistent with fermionic statistics.

- Potential Causes & Solutions:

  - Incorrect Ancilla Management: The algorithm requires $O(\sqrt{N_s})$ dirty ancilla qubits for intermediate calculations. Ensure these are properly allocated and that the disentanglement steps at the end of each iteration are correctly implemented [13].

  - Non-Orthogonal Input States: The algorithm requires the input single-particle orbitals to be orthogonal. Verify the orthogonality of your prepared single-particle states before applying the antisymmetrization procedure [13].

Problem 2: Excessive T-gate Count During Compilation

- Symptoms: Circuit compilation results in a T-gate count that scales poorly with the number of particles ($N$) or single-particle states ($N_s$).

- Potential Causes & Solutions:

  - Suboptimal Scaling: The expected T-gate scaling is $O(N^2\sqrt{N_s})$, which is most efficient when $N \lesssim \sqrt{N_s}$ [13]. If your system falls outside this regime, consider alternative algorithms.

  - Inefficient Oracle Implementation: The cost is heavily influenced by the implementation of oracles that encode the single-particle states. Leverage specific knowledge of the states (e.g., spatial locality in grid-based simulations) to optimize these oracles and reduce resource overhead [13] [27].

Problem 3: High Error Rates in Noisy Intermediate-Scale Quantum (NISQ) Simulations

- Symptoms: Simulation results are dominated by noise, making the antisymmetrized state unrecognizable.

- Potential Causes & Solutions:

  - Deep Circuit Depth: The recursive algorithm involves multiple iterative steps. Consider using the measurement-based variant of the algorithm, which reduces the gate cost by roughly a factor of two, thereby shortening the circuit and potentially mitigating error accumulation [13].

  - Insufficient Error Mitigation: For the specific case of validating antisymmetrization, one can introduce a perturbation via a permutation Hamiltonian $H_{\text{perm}} = \sum_{i<j} P_{ij}$ and use quantum phase estimation to check that the prepared state lies in the correct antisymmetric symmetry sector, which should have the lowest energy, $-N(N-1)/2$ [13].
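This check works because every transposition $P_{ij}$ has eigenvalues $\pm 1$ and acts as $-1$ on an antisymmetric state, so $\langle H_{\text{perm}} \rangle$ reaches its minimum, $-N(N-1)/2$, only in the fully antisymmetric sector. On a statevector simulator, the expectation value can be computed directly, as in the NumPy sketch below. The state is stored as a tensor with one axis per particle register, a layout chosen for illustration; `permutation_energy` is a hypothetical helper, not part of [13].

```python
import itertools

import numpy as np


def permutation_energy(psi: np.ndarray) -> float:
    """<psi| sum_{i<j} P_ij |psi> for a normalized first-quantized state.

    psi has one tensor axis per particle; P_ij swaps axes i and j.
    """
    total = 0.0
    for i, j in itertools.combinations(range(psi.ndim), 2):
        total += np.vdot(psi, np.swapaxes(psi, i, j)).real
    return total


# Example: antisymmetrized basis state |0,1,2> on N_s = 4 states per particle.
n_s = 4
psi = np.zeros((n_s,) * 3)
for p in itertools.permutations(range(3)):
    psi[p] = np.linalg.det(np.eye(3)[list(p)])  # sign of the permutation
psi /= np.linalg.norm(psi)
print(permutation_energy(psi))  # ≈ -3.0 = -N(N-1)/2 for N = 3
```

A fully symmetric state such as $|0,0,0\rangle$ gives $+3$ instead, so the two sectors are well separated.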

Frequently Asked Questions (FAQs)

Q1: Why should I use this recursive algorithm over sorting-based antisymmetrization methods?

A1: The primary advantage is flexibility in the input states. Sorting-based methods require the input state to be a superposition of ordered basis states (|r₁, ..., r_N⟩ where r₁ < ... < r_N). In contrast, this recursive algorithm can initialize the state of each particle independently and works for any set of orthogonal single-particle orbitals, simultaneously handling antisymmetrization and state preparation [13].

Q2: In what scenarios is the first-quantization mapping preferred for fermionic simulation?

A2: First-quantization is more qubit-efficient when the number of single-particle states (N_s) is much larger than the number of particles (N). The qubit count scales as O(N log N_s), which is preferable to second-quantization's O(N_s) scaling for problems requiring high resolution, such as simulations of chemical reactions or nucleon scattering [13] [27].
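A quick way to see when first quantization pays off is to compare the sizes of the system registers: $N$ particle registers of $\lceil \log_2 N_s \rceil$ qubits each, versus one qubit per single-particle state in a second-quantized occupation-number encoding. The sketch counts only system qubits; it ignores ancillae and the constant factors hidden in the big-O notation.

```python
def first_quantized_qubits(n_particles: int, n_states: int) -> int:
    """N registers, each wide enough to index N_s states: N * ceil(log2(N_s))."""
    return n_particles * (n_states - 1).bit_length()


def second_quantized_qubits(n_states: int) -> int:
    """One qubit per single-particle state (occupation-number encoding)."""
    return n_states


for n_particles, n_states in [(2, 16), (10, 1024), (10, 2**20)]:
    fq = first_quantized_qubits(n_particles, n_states)
    sq = second_quantized_qubits(n_states)
    print(f"N={n_particles:>3}  N_s={n_states:>8}  first={fq:>4}  second={sq:>8}")
```

For $N = 10$ particles on a $2^{20}$-point grid this gives 200 system qubits in first quantization against 1,048,576 in second quantization.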

Q3: What is the role of the "dirty ancilla" qubits, and can I use any available qubits for this?

A3: The O(√N_s) dirty ancillae are used for intermediate calculations and are needed to achieve the favorable O(N²√N_s) T-gate scaling. "Dirty" means they may start in an arbitrary, unknown state, so otherwise idle qubits can be borrowed for this role; however, each must be returned to exactly its initial state (and thus disentangled from the system) by the end of the computation. They are an integral part of the algorithm, and their allocation must be managed carefully [13].

Q4: How does this algorithm integrate into a full chemical reaction simulation workflow?

A4: This algorithm serves as the state preparation module. A complete simulator, like the "crsQ" chemical reaction simulator, would chain this antisymmetrization circuit with a time-evolution circuit based on Suzuki-Trotter decomposition and a final measurement circuit to extract physical observables [27].

Experimental Protocol: Validating the Algorithm for a Three-Particle System

This protocol outlines the steps to implement and validate the recursive antisymmetrization algorithm for a small-scale system on a quantum simulator.

1. Objective: Prepare and verify the antisymmetric state of three fermions in distinct, non-trivial single-particle orbitals.

2. Prerequisites:

- A quantum computing framework (e.g., Qiskit, Cirq).

- Access to a quantum simulator (statevector preferred for validation).

3. Procedure:

- Step 1 — Initialize Registers: Allocate three registers of n = ⌈log₂ N_s⌉ qubits each to represent the coordinates of the three particles. Allocate O(√N_s) ancilla qubits as required [13].

- Step 2 — Prepare Single-Particle Orbitals: Apply sub-circuits to load the desired single-particle wave functions (e.g., Gaussian orbitals or Hartree-Fock orbitals) into the particle registers. The orbitals must be orthogonal [13] [27].

- Step 3 — Apply Recursive Antisymmetrization:

- Construct the antisymmetrized state for particles 1 and 2.

- Recursively incorporate particle 3 into the antisymmetrized state, following the algorithm's construction for the three-particle case [13].

- Step 4 — Disentangle Ancillae: Implement the reverse operations to cleanly disentangle all ancilla qubits from the system register [13].

- Step 5 — Verification:

- Method A (Direct Inspection): Use the simulator to output the final statevector. Check that the amplitudes on the particle registers match the Slater determinant built from the three loaded orbitals, and that the state picks up a factor of -1 under the exchange of any two particle labels.

- Method B (Observable Measurement): Measure the expectation value of a two-body operator (e.g., a Coulomb-like interaction). Compare the result against the value computed classically for the known antisymmetric state to confirm correctness.

4. Expected Outcome: A successfully prepared three-fermion antisymmetric state, with the circuit exhibiting the expected T-gate count and ancilla usage for the chosen N_s.
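Before running on a simulator, it can help to have a classical reference for Step 5. The NumPy sketch below builds the expected three-fermion state directly as the Slater determinant of randomly generated orthonormal orbitals on N_s = 8 grid points. It then performs both checks: the sign flip under every pairwise exchange (Method A), and a comparison of the two-body expectation value against the Slater–Condon direct-minus-exchange formula (Method B). The pair potential `v` and all helper names are illustrative assumptions. To apply the same checks to a simulator's output, the particle-register amplitudes would first have to be reshaped into an (N_s, N_s, N_s) array, with care taken over the framework's qubit ordering.

```python
import itertools
import math

import numpy as np


def permutation_sign(perm):
    """+1 for even permutations, -1 for odd ones (counted via inversions)."""
    inversions = sum(1 for i, j in itertools.combinations(range(len(perm)), 2)
                     if perm[i] > perm[j])
    return -1 if inversions % 2 else 1


def slater_tensor(orbitals):
    """psi[x1, ..., xN] = det[phi_a(x_i)] / sqrt(N!) for orthonormal rows phi_a."""
    n, n_s = orbitals.shape
    psi = np.zeros((n_s,) * n, dtype=complex)
    for perm in itertools.permutations(range(n)):
        term = orbitals[perm[0]]
        for k in range(1, n):
            term = np.multiply.outer(term, orbitals[perm[k]])  # phi_{perm(k)}(x_k)
        psi += permutation_sign(perm) * term
    return psi / math.sqrt(math.factorial(n))


rng = np.random.default_rng(7)
n_particles, n_s = 3, 8
q, _ = np.linalg.qr(rng.normal(size=(n_s, n_particles))
                    + 1j * rng.normal(size=(n_s, n_particles)))
orbitals = q.T  # rows are orthonormal single-particle orbitals
psi = slater_tensor(orbitals)

# Method A: exchanging any two particle labels must flip the sign.
exchange_ok = all(np.allclose(np.swapaxes(psi, i, j), -psi)
                  for i, j in itertools.combinations(range(n_particles), 2))

# Method B: <V> for V = sum_{i<j} v(x_i, x_j), evaluated from the state itself...
grid = np.arange(n_s)
v = 1.0 / (1.0 + np.abs(grid[:, None] - grid[None, :]))  # symmetric toy potential
prob = np.abs(psi) ** 2
v_state = (np.einsum("xyz,xy->", prob, v) + np.einsum("xyz,xz->", prob, v)
           + np.einsum("xyz,yz->", prob, v))

# ...and from the orbitals alone via the Slater-Condon rule: sum_{a<b} (J_ab - K_ab).
v_orbitals = 0.0
for a, b in itertools.combinations(range(n_particles), 2):
    pa, pb = orbitals[a], orbitals[b]
    direct = np.einsum("x,y,xy->", np.abs(pa) ** 2, np.abs(pb) ** 2, v)
    exchange = np.einsum("x,y,xy->", pa.conj() * pb, pb.conj() * pa, v)
    v_orbitals += (direct - exchange).real

print(exchange_ok, np.isclose(v_state, v_orbitals))
```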

Algorithm Workflow and Logical Structure

The following diagram illustrates the recursive procedure for building the antisymmetric state.
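The recursion can also be made concrete with a purely classical NumPy analogue. This analogue is not the quantum circuit of [13]. It relies on the identity that the m-particle antisymmetrizer equals (1 − Σ_{j<m} P_{jm}) applied after the (m−1)-particle antisymmetrizer. Each new particle is therefore appended as a product factor and then exchanged, with a minus sign, against every particle already present. The function name and the random test orbitals are assumptions for illustration.

```python
import itertools
import math

import numpy as np


def recursive_antisymmetrize(orbitals):
    """Build psi[x1..xN] for the Slater determinant of the rows of `orbitals`,
    adding one particle at a time. For m = 2..N:
        psi_m = (1 - sum_{j<m} P_jm) (psi_{m-1} (x) phi_m) / sqrt(m)
    where P_jm swaps the coordinates of particles j and m.
    """
    psi = orbitals[0].astype(complex)                   # one particle: just phi_1
    for k in range(1, orbitals.shape[0]):               # k = 0-based index of new particle
        product = np.multiply.outer(psi, orbitals[k])   # new particle on the last axis
        new_psi = product.copy()
        for j in range(k):
            new_psi -= np.swapaxes(product, j, k)       # subtract P_jk applied to it
        psi = new_psi / math.sqrt(k + 1)                # keep the state normalized
    return psi


rng = np.random.default_rng(1)
q, _ = np.linalg.qr(rng.normal(size=(5, 3)))
orbitals = q.T                                          # 3 orthonormal orbitals, N_s = 5
psi = recursive_antisymmetrize(orbitals)

# Cross-check against the determinant formula at every grid point.
matches = all(
    np.isclose(psi[idx], np.linalg.det(orbitals[:, list(idx)]) / math.sqrt(6))
    for idx in itertools.product(range(5), repeat=3)
)
print(psi.shape, matches, np.isclose(np.vdot(psi, psi).real, 1.0))
```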

The Scientist's Toolkit: Research Reagent Solutions

The table below details the key "research reagents" — the fundamental quantum resources and components — required to implement the recursive antisymmetrization algorithm.

| Resource / Component | Function & Explanation |
|---|---|
| Particle Register Qubits | Represents the coordinates of each particle. The total number scales as O(N log N_s), providing the qubit efficiency of the first-quantization approach [13] [27]. |
| Dirty Ancilla Qubits | Temporary work qubits required for intermediate calculations. Their O(√N_s) scaling is crucial for achieving the algorithm's low T-gate complexity [13]. |
| Single-Particle State Oracles | Quantum circuits that load the specific single-particle orbitals (e.g., Gaussian packets, Hartree-Fock orbitals) into the particle registers. Their complexity directly impacts the overall gate cost [13]. |
| Clifford + T Gates | The universal gate set used for circuit decomposition and resource estimation. The T-gate count is a primary metric for the algorithm's cost on fault-tolerant hardware [13]. |
| Permutation Hamiltonian (H_perm) | A diagnostic tool defined as ∑_{i<j} P_{ij}, where P_{ij} permutes particles i and j. Used with phase estimation to verify the antisymmetry of the prepared state [13]. |
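For an exactly antisymmetric N-particle state, every P_ij has eigenvalue −1. The state is therefore an eigenvector of H_perm with eigenvalue −N(N−1)/2, which is −3 for three particles. This is the eigenvalue that phase estimation is expected to resolve, up to the chosen time scaling. The classical check below confirms this for a three-particle Slater determinant and shows that a plain product state is not an eigenvector. The helper names are illustrative.

```python
import itertools
import math

import numpy as np


def slater_state(orbitals):
    """psi[x1, ..., xN] = det[phi_a(x_i)] / sqrt(N!) for orthonormal rows phi_a."""
    n, n_s = orbitals.shape
    psi = np.empty((n_s,) * n)
    for idx in itertools.product(range(n_s), repeat=n):
        psi[idx] = np.linalg.det(orbitals[:, list(idx)])
    return psi / math.sqrt(math.factorial(n))


def apply_h_perm(psi):
    """H_perm psi = sum_{i<j} P_ij psi, with P_ij swapping particle axes i and j."""
    pairs = itertools.combinations(range(psi.ndim), 2)
    return sum(np.swapaxes(psi, i, j) for i, j in pairs)


rng = np.random.default_rng(3)
q, _ = np.linalg.qr(rng.normal(size=(6, 3)))
orbitals = q.T
psi = slater_state(orbitals)
print(np.allclose(apply_h_perm(psi), -3 * psi))      # eigenvalue -N(N-1)/2 = -3

product_state = np.einsum("x,y,z->xyz", *orbitals)   # no antisymmetrization
h_prod = apply_h_perm(product_state)
overlap = np.vdot(product_state, h_prod)
print(np.allclose(h_prod, overlap * product_state))  # False: not an eigenvector
```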

Frequently Asked Questions (FAQs)

Q1: What is the core innovation of the CP-decomposed backflow ansatz for fermionic systems?

The core innovation is the application of a CANDECOMP/PARAFAC (CP) tensor decomposition to a general backflow transformation in second quantization [8] [28]. This creates a simple, compact, and systematically improvable variational wave function that directly encodes N-body correlations. Unlike some other tensor decompositions, it does this without introducing an ordering dependence, thereby simplifying the treatment of fermionic antisymmetry [8].

Q2: What specific fermionic challenges does this method help to overcome?

This approach addresses several key challenges:

- Antisymmetry: It provides a way to build explicit many-body correlations into the wave function while maintaining the required antisymmetry [8].

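- Sign Problem: Because the ansatz is optimized with Variational Monte Carlo, which samples configurations from the non-negative distribution |Ψ_θ|², the method is free of the sign problem that plagues Quantum Monte Carlo (QMC) in frustrated and fermionic systems [29].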

- High Dimensions: It demonstrates potential for application in systems beyond one dimension, where methods like Density Matrix Renormalization Group (DMRG) are less effective [29].

Q3: My VMC optimization is converging slowly or to a poor energy. What could be the issue?

Slow or poor optimization is a known challenge in Variational Monte Carlo (VMC), even with simple ansätze [28]. Consider the following:

- Sampling: Increase the sampling in your VMC optimization. Inadequate sampling is a common cause for not reaching the expressibility limit of the ansatz [28].

- Initialization: The choice of initial parameters is critical. Poor initialization can lead to convergence in local minima [8].

- Rank Truncation: The chosen rank of the CP decomposition may be too low to capture the necessary correlations. Systematically increasing the rank can lead to improvement [8].
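The effect of sampling can be seen even in a toy problem unrelated to the CP ansatz. Consider variational Monte Carlo for a 1D harmonic oscillator (ħ = m = ω = 1) with trial function ψ_α(x) = exp(−αx²), whose exact variational energy α/2 + 1/(8α) is known. The Metropolis walk samples |ψ_α|², and the local energy is E_L(x) = α + x²(1/2 − 2α²). The naive error bar shrinks roughly as 1/√M with the number of samples M. It ignores autocorrelation between successive Metropolis samples, so it underestimates the true uncertainty, and a blocking analysis would be needed in practice. At α = 1/2 the trial function is exact and E_L is constant (zero variance), which is a useful sanity check. All names and parameters are illustrative.

```python
import numpy as np


def exact_energy(alpha):
    """Exact <H> for psi_alpha(x) = exp(-alpha x^2) in the 1D oscillator."""
    return alpha / 2.0 + 1.0 / (8.0 * alpha)


def vmc_energy(alpha, n_samples, step=1.0, n_burn=1_000, seed=0):
    """Metropolis VMC estimate of <E_L> and its naive standard error."""
    rng = np.random.default_rng(seed)
    x = 0.0
    local = np.empty(n_samples)
    for i in range(n_burn + n_samples):
        x_new = x + step * rng.uniform(-1.0, 1.0)
        # Accept with probability min(1, |psi(x_new)|^2 / |psi(x)|^2).
        if rng.random() < np.exp(-2.0 * alpha * (x_new**2 - x**2)):
            x = x_new
        if i >= n_burn:
            local[i - n_burn] = alpha + x**2 * (0.5 - 2.0 * alpha**2)  # E_L(x)
    # Naive error bar: ignores autocorrelation between successive samples.
    return local.mean(), local.std(ddof=1) / np.sqrt(n_samples)


for alpha, n_samples in [(0.4, 1_000), (0.4, 100_000), (0.5, 10_000)]:
    mean, err = vmc_energy(alpha, n_samples)
    print(f"alpha={alpha}, M={n_samples}: E = {mean:.4f} +/- {err:.4f} "
          f"(exact {exact_energy(alpha):.4f})")
```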

Q4: How do I control the computational cost of the method for larger systems?

The computational scaling can be systematically reduced through controllable truncations [8] [28]:

- Rank Reduction: Lower the rank (R) of the CP decomposition.

- Correlation Range: Truncate the spatial range of the backflow correlations, ignoring long-range interactions that contribute less.

- Energy Screening: Screen out local energy contributions that are below a certain threshold.
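A spatial cut-off on the backflow correlations can be expressed as a mask on the correlation tensor. The sketch below assumes, for illustration only, a backflow tensor `V[p, alpha, q]` that couples the occupation of site q to orbital coefficient (p, alpha) on an L × L square lattice. It keeps only entries with Euclidean distance |r_p − r_q| ≤ r_cut. The tensor layout and names are hypothetical and are not the parameterization of [8].

```python
import numpy as np


def distance_mask(L, r_cut):
    """Boolean (L*L, L*L) mask: True where sites p and q lie within r_cut."""
    coords = np.array([(i, j) for i in range(L) for j in range(L)], dtype=float)
    dist = np.linalg.norm(coords[:, None, :] - coords[None, :, :], axis=-1)
    return dist <= r_cut + 1e-12


L, n_elec, r_cut = 4, 8, 1.0
mask = distance_mask(L, r_cut)                # each site plus its nearest neighbours
rng = np.random.default_rng(0)
V = rng.normal(size=(L * L, n_elec, L * L))   # hypothetical backflow tensor
V_truncated = V * mask[:, None, :]            # zero out long-range couplings

print(mask.sum(), "of", mask.size, "site pairs kept")
print(np.count_nonzero(V_truncated), "of", V.size, "parameters remain")
```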

Q5: What is the role of the CP decomposition in this ansatz?

The CP decomposition factorizes a tensor into a sum of rank-one components [30]. In this context, it is used to factorize the backflow transformation, providing a simple and efficient parameterization for the configuration-dependent orbitals [8] [31]. This decomposition is key to the method's compactness, systematic improvability, and ability to directly encode many-body correlations.
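The role of the factorization can be made concrete with a simplified, hypothetical backflow. In this sketch the orbital coefficients shift linearly with the occupation vector n, φ̃_{pα}(n) = φ_{pα} + Σ_q V_{pαq} n_q, and the backflow tensor is CP-factorized as V_{pαq} = Σ_r A_{pr} B_{αr} C_{qr}. The amplitude is the determinant of the rows of φ̃ for the occupied sites, so antisymmetry comes from the determinant. This illustrates the CP parameterization and is not necessarily the exact functional form used in [8]. All names are assumptions.

```python
import numpy as np


def cp_backflow_amplitude(n, phi, A, B, C):
    """Psi(n) = det(phi_bf[occupied, :]) with phi_bf = phi + sum_q V[:, :, q] n_q,
    where V[p, a, q] = sum_r A[p, r] B[a, r] C[q, r] is never formed explicitly."""
    s = C.T @ n                      # s_r = sum_q C[q, r] n_q, cost O(sites * R)
    phi_bf = phi + (A * s) @ B.T     # sum_r A[p, r] s_r B[a, r]
    occupied = np.flatnonzero(n)
    return np.linalg.det(phi_bf[occupied, :])


n_sites, n_elec, rank = 8, 4, 3
rng = np.random.default_rng(2)
phi = rng.normal(size=(n_sites, n_elec))
A, B, C = (rng.normal(scale=0.1, size=(d, rank)) for d in (n_sites, n_elec, n_sites))
n = np.array([1, 0, 1, 1, 0, 0, 1, 0], dtype=float)   # 4 electrons on 8 sites

# Cross-check against the explicitly reconstructed (dense) backflow tensor.
V = np.einsum("pr,ar,qr->paq", A, B, C)
dense = np.linalg.det((phi + np.einsum("paq,q->pa", V, n))[np.flatnonzero(n), :])
print(np.isclose(cp_backflow_amplitude(n, phi, A, B, C), dense))
print("CP parameters:", rank * (2 * n_sites + n_elec), "dense:", V.size)
```

The CP form needs R(2N_sites + N_elec) parameters instead of N_sites² N_elec, and raising R is the "systematic improvement" knob.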

Troubleshooting Guides

Issue: Handling Fermionic Anti-commutation in Implementation

Problem: The anti-commutation properties of fermion operators make the implementation of fermionic tensor network states (f-TNS) particularly complex [29].

Solution: Two primary strategies can be employed to manage this complexity:

- Fermi-Arrow Method: Redefine fermion tensors by introducing a "fermi-arrow" to define the order of fermion operators and establish specific operation rules for contraction, transposition, and decomposition [29].

- Grassmann Tensor Method: A similar approach that uses Grassmann algebra to consistently handle the anti-commutative relations [29].
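The core rule behind both approaches fits in a few lines. In the Grassmann (Z₂-graded) convention, each index value on a tensor leg carries a parity, and exchanging two legs multiplies each element by (−1)^{p_a p_b}. A general permutation picks up this factor for every pair of legs whose order it reverses. The function below is a minimal NumPy sketch of that rule. It is not the API of TNSP or of any other library, and the names are assumptions.

```python
import itertools

import numpy as np


def graded_transpose(tensor, parities, perm):
    """Permute the legs of a Z2-graded (fermionic) tensor.

    parities[k] holds the parity (0 = even, 1 = odd) of each index value on leg k;
    perm follows np.transpose, i.e. new leg m is old leg perm[m].
    Every pair of legs whose relative order is reversed contributes (-1)^(p_a * p_b).
    """
    ndim = tensor.ndim
    new_position = {old: new for new, old in enumerate(perm)}
    sign = np.ones(tensor.shape, dtype=int)
    for a, b in itertools.combinations(range(ndim), 2):
        if new_position[a] > new_position[b]:          # legs a and b cross
            shape_a = [1] * ndim
            shape_a[a] = -1
            shape_b = [1] * ndim
            shape_b[b] = -1
            pa = np.asarray(parities[a]).reshape(shape_a)
            pb = np.asarray(parities[b]).reshape(shape_b)
            sign = sign * (1 - 2 * pa * pb)             # +1, or -1 if both odd
    new_parities = [parities[k] for k in perm]
    return np.transpose(tensor * sign, perm), new_parities


rng = np.random.default_rng(0)
T = rng.normal(size=(2, 3))
parities = [np.array([0, 1]), np.array([0, 1, 1])]
T_swapped, swapped_parities = graded_transpose(T, parities, (1, 0))
print(np.isclose(T_swapped[2, 1], -T[1, 2]))  # odd-odd entry changes sign
print(np.isclose(T_swapped[2, 0], T[0, 2]))   # even-odd entry does not
T_back, _ = graded_transpose(T_swapped, swapped_parities, (1, 0))
print(np.allclose(T_back, T))                 # swapping twice is the identity
```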


Issue: Managing Computational Scaling in Ab Initio Systems

Problem: The computational cost of the method becomes prohibitive for large ab initio systems with realistic long-range Coulomb interactions.

Solution: Implement a multi-faceted truncation strategy to control scaling, reducing it to approximately \( \mathcal{O}(N^3) \) to \( \mathcal{O}(N^4) \) [8].

Protocol:

- Rank Truncation: Systematically reduce the number of components (R) in the CP decomposition.

- Correlation Range Truncation: Impose a spatial cut-off on the backflow correlations, neglecting interactions beyond a certain distance.

- Local Energy Screening: Identify and filter out Hamiltonian terms that contribute negligibly to the local energy during VMC sampling.
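Local energy screening can be sketched for a small model in which the Hamiltonian is available as a dense matrix over configurations. The local energy is E_L(n) = Σ_{n'} H_{nn'} Ψ(n')/Ψ(n), and off-diagonal terms are dropped when |H_{nn'}| falls below a threshold ε. Three simplifying assumptions make the unscreened result checkable against the exact Rayleigh quotient: a random Hamiltonian, a positive trial vector, and exact enumeration over all configurations instead of Monte Carlo sampling.

```python
import numpy as np


def local_energy(H, psi, n, eps=0.0):
    """E_L(n) = sum_{n'} H[n, n'] psi[n'] / psi[n], skipping |H[n, n']| < eps."""
    row = H[n]
    keep = np.abs(row) >= eps
    keep[n] = True                      # never screen the diagonal term
    return row[keep] @ psi[keep] / psi[n], int(keep.sum())


def variational_energy(H, psi, eps=0.0):
    """Average E_L over |psi|^2 (exact enumeration); also count evaluated terms."""
    weights = psi**2 / np.sum(psi**2)
    results = [local_energy(H, psi, n, eps) for n in range(len(psi))]
    energy = sum(w * e for w, (e, _) in zip(weights, results))
    return energy, sum(terms for _, terms in results)


rng = np.random.default_rng(5)
dim = 64
# Matrix elements spanning four orders of magnitude, symmetrized to be Hermitian.
X = rng.normal(size=(dim, dim)) * 10.0 ** rng.uniform(-4, 0, size=(dim, dim))
H = X + X.T
psi = rng.uniform(0.5, 1.5, size=dim)  # positive, nodeless toy trial state

exact = psi @ H @ psi / (psi @ psi)
for eps in (0.0, 1e-3, 1e-2):
    energy, terms = variational_energy(H, psi, eps)
    print(f"eps={eps:g}: E={energy:.6f} (exact {exact:.6f}), {terms} of {dim * dim} terms")
```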

The following table summarizes the benchmarking results of the CPD backflow ansatz against other methods across different systems:

Table 1: Benchmarking Performance of CPD Backflow Ansatz

| System Type | Comparison Method | Performance Result | Key Citation |
|---|---|---|---|
| Fermi-Hubbard Models | Other Neural Quantum State (NQS) models | Displays improved accuracy | [8] [28] |
| Small Molecular Systems | Standard quantum chemistry (e.g., CCSD, FCI) | Shows improvement over comparable methods | [8] [28] |
| 2D Hydrogenic Lattices | Density Matrix Renormalization Group (DMRG) | Achieves competitive accuracy | [8] [28] |

Issue: Low-Rank Tensor Approximation is Insufficient

Problem: When data such as a single image is represented directly as a tensor, that tensor may not be sufficiently low-rank for effective restoration or completion [32].

Solution (from Image Processing): Adopt a sub-image strategy to enhance the low-rank property. This involves sampling the original image to create a set of sub-images, which collectively form a tensor with a stronger low-rank characteristic than the original single image [32].
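One way to read the sub-image construction is as strided sampling. For a stride s, the s² sub-images img[a::s, b::s] are stacked along a third axis, and the original image is recovered by writing them back. For a smooth image, neighbouring pixels are similar, so the sub-images are close to copies of one another. Presumably this is what strengthens the low-rank structure. This is a minimal sketch under that assumption: [32] may sample differently, and the function names are hypothetical.

```python
import numpy as np


def to_subimage_tensor(img, s):
    """Stack the s*s strided sub-images of a 2D image into an (H/s, W/s, s*s) tensor."""
    h, w = img.shape
    if h % s or w % s:
        raise ValueError("image sides must be divisible by the stride")
    return np.stack([img[a::s, b::s] for a in range(s) for b in range(s)], axis=-1)


def from_subimage_tensor(tensor, s):
    """Inverse of to_subimage_tensor: interleave the sub-images back into one image."""
    h_s, w_s, _ = tensor.shape
    img = np.empty((h_s * s, w_s * s), dtype=tensor.dtype)
    for a in range(s):
        for b in range(s):
            img[a::s, b::s] = tensor[:, :, a * s + b]
    return img


img = np.arange(64, dtype=float).reshape(8, 8)
T = to_subimage_tensor(img, 2)
print(T.shape)                                   # (4, 4, 4)
print(np.array_equal(from_subimage_tensor(T, 2), img))
```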


Experimental Protocols

Protocol: Benchmarking on Fermi-Hubbard and Molecular Systems

Objective: To evaluate the performance of the CP-decomposed backflow ansatz against established methods like coupled-cluster (CCSD) and exact diagonalization (FCI) for small systems, and DMRG for extended systems [8] [28].

Methodology:

- Ansatz Construction: Implement the wave function using a CP tensor decomposition of the backflow transformation in second quantization [8].

- VMC Optimization: Use the Variational Monte Carlo framework to optimize the parameters of the model by minimizing the energy \( E_\theta = \langle \Psi_\theta | \hat{H} | \Psi_\theta \rangle / \langle \Psi_\theta | \Psi_\theta \rangle \) [8] [28].

- Systematic Comparison: Calculate the ground state energy for the target systems and compare the accuracy and computational efficiency against reference methods.

Protocol: Applying Controllable Truncations for Scaling Reduction

Objective: To systematically reduce the computational scaling of the method to between \( \mathcal{O}(N^3) \) and \( \mathcal{O}(N^4) \) for application to larger systems [8].

Methodology:

- Vary CP Rank: Run calculations with increasing rank (R) of the CP decomposition to find the point of diminishing returns.

- Impose Spatial Cut-off: Limit the range of backflow correlations, ignoring electron interactions beyond a defined spatial radius.

- Screen Hamiltonian Terms: In the VMC calculation, implement a threshold to neglect local energy contributions from Hamiltonian terms with magnitudes below a chosen value.
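The rank scan in the first step can be prototyped on any three-way tensor with a plain alternating-least-squares (ALS) CP fit. The sketch below is a generic CP-ALS, not the VMC optimization used in [8]. It fits a synthetic tensor that is exactly rank 3 plus small noise and reports the relative reconstruction error for R = 1 to 5. The error should drop sharply up to the true rank and then level off, which is the kind of diminishing-returns point the protocol looks for. ALS can occasionally stall, so several random starts are advisable in practice. All names are illustrative.

```python
import numpy as np


def khatri_rao(X, Y):
    """Column-wise Kronecker product: row (i * len(Y) + j) holds X[i] * Y[j]."""
    return (X[:, None, :] * Y[None, :, :]).reshape(-1, X.shape[1])


def cp_als(T, rank, n_iter=300, seed=0):
    """Fit T[i,j,k] ~ sum_r A[i,r] B[j,r] C[k,r]; return the relative error."""
    rng = np.random.default_rng(seed)
    I, J, K = T.shape
    A, B, C = (rng.normal(size=(n, rank)) for n in (I, J, K))
    T1 = T.reshape(I, J * K)                      # mode-1 unfolding
    T2 = T.transpose(1, 0, 2).reshape(J, I * K)   # mode-2 unfolding
    T3 = T.transpose(2, 0, 1).reshape(K, I * J)   # mode-3 unfolding
    for _ in range(n_iter):
        # Each update is the exact least-squares solution with the other two fixed.
        A = T1 @ khatri_rao(B, C) @ np.linalg.pinv((B.T @ B) * (C.T @ C))
        B = T2 @ khatri_rao(A, C) @ np.linalg.pinv((A.T @ A) * (C.T @ C))
        C = T3 @ khatri_rao(A, B) @ np.linalg.pinv((A.T @ A) * (B.T @ B))
    approx = np.einsum("ir,jr,kr->ijk", A, B, C)
    return np.linalg.norm(T - approx) / np.linalg.norm(T)


rng = np.random.default_rng(4)
factors = [rng.normal(size=(n, 3)) for n in (6, 7, 8)]
T = np.einsum("ir,jr,kr->ijk", *factors)
T += 1e-3 * rng.normal(size=T.shape)   # small noise on top of an exactly rank-3 tensor

errors = [cp_als(T, rank) for rank in range(1, 6)]
for rank, err in enumerate(errors, start=1):
    print(f"R = {rank}: relative error {err:.2e}")
```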

The Scientist's Toolkit

Table 2: Essential Research Reagents and Computational Tools

| Item/Tool | Function in Research | Relevance to Field |
|---|---|---|
| CP Tensor Decomposition | Factorizes the backflow transformation, enabling a compact and systematically improvable wave function ansatz [8]. | Core innovation of the method; directly encodes N-body correlations. |
| Variational Monte Carlo (VMC) | A stochastic optimization framework used to find the ground state energy by minimizing \( E_\theta \) with respect to the ansatz's parameters [8] [28]. | Enables the use of powerful, non-perturbative ansätze for strongly correlated systems. |
| Fermi-Arrow / Grassmann Methods | Provides a consistent set of rules for handling the anti-commutation relations of fermion operators within tensor network algorithms [29]. | Crucial for the correct and efficient implementation of fermionic tensor network states (f-TNS). |
| TNSP Framework | A software package designed to support the development of tensor network state methods, including those for fermionic systems with various symmetries [29]. | Facilitates implementation by providing a uniform interface for tensor operations, reducing development effort. |

Frequently Asked Questions (FAQs)

Q1: What is the core computational challenge when simulating fermionic systems like liquid ³He, and what is a promising new method to address it?

A1: The core challenge is the fermionic sign problem, which causes an exponential decay of the signal-to-noise ratio in Quantum Monte Carlo (QMC) simulations, making calculations for large systems or low temperatures intractable [5] [33]. A promising method involves using a parametrized partition function with a variable, ξ [33]. Simulations are performed for ξ ≥ 0 (where the sign problem is absent), and the results are then extrapolated to the physical fermionic case at ξ = -1 [33].
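The extrapolation idea can be tried on a toy model where everything is known in closed form: two non-interacting particles in a 1D harmonic trap (ħω = k_B = 1). The single-particle partition function is Z₁(β) = 1/(2 sinh(β/2)), and the two-particle partition function with parameter ξ is Z(ξ) = [Z₁(β)² + ξ Z₁(2β)]/2. This interpolates between bosons (ξ = 1), distinguishable particles (ξ = 0), and fermions (ξ = −1). The sketch evaluates E(ξ) = −∂ ln Z/∂β at ξ = 0, 0.5, 1, fits a quadratic, and extrapolates to ξ = −1. The quadratic fit is an illustrative assumption. At β = 1 it gives about 2.63, against an exact fermionic value of about 2.895, which shows why the choice of fit form and temperature range matters.

```python
import numpy as np


def z1(beta):
    """Single-particle partition function of a 1D oscillator (hbar*omega = 1)."""
    return 1.0 / (2.0 * np.sinh(beta / 2.0))


def dz1(beta):
    """Derivative dZ1/dbeta."""
    return -np.cosh(beta / 2.0) / (4.0 * np.sinh(beta / 2.0) ** 2)


def energy(xi, beta):
    """E(xi) = -d ln Z/d beta with Z = [Z1(beta)^2 + xi * Z1(2 beta)] / 2."""
    z = z1(beta) ** 2 + xi * z1(2.0 * beta)
    dz = 2.0 * z1(beta) * dz1(beta) + xi * 2.0 * dz1(2.0 * beta)
    return -dz / z


beta = 1.0
xis = np.array([0.0, 0.5, 1.0])                 # sign-problem-free region
coeffs = np.polyfit(xis, energy(xis, beta), 2)  # quadratic fit (an assumption)
extrapolated = np.polyval(coeffs, -1.0)
exact_fermion = energy(-1.0, beta)              # available here in closed form

print(f"extrapolated E(-1) = {extrapolated:.4f}, exact = {exact_fermion:.4f}")
print(f"check: E_F - E_B = {exact_fermion - energy(1.0, beta):.6f}")  # exactly 1 for this trap
```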

Q2: For modeling the electron dynamics in molecules like H₂ under intense lasers, why are common methods like RT-TDCIS or RT-TDDFT sometimes insufficient?

A2: Real-Time Time-Dependent Configuration Interaction Singles (RT-TDCIS) completely neglects dynamical electron-electron correlation, which is crucial for accurately describing strong-field phenomena [34]. Real-Time Time-Dependent Density Functional Theory (RT-TDDFT) can include correlation but, as a single-determinant approach, it cannot correctly describe transitions to open-shell singlet states that are essential for processes like High-Harmonic Generation (HHG) in closed-shell molecules [34].

Q3: What is a key computational strategy for making the more accurate RT-TDCISD method feasible for laser-driven dynamics?