Effective Hamiltonian Methods and Embedding Techniques: A 2025 Guide for Computational Drug Discovery


Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive overview of embedding techniques and effective Hamiltonian methods. It covers foundational quantum mechanical principles, explores advanced methodological approaches like the NextHAM framework and quantum computing pipelines, addresses key optimization challenges for biomolecular systems, and validates performance against established computational chemistry standards. The content synthesizes the latest 2025 research to offer a practical guide for applying these powerful simulations to accelerate drug design for complex targets, including covalent inhibitors and metalloenzymes.

Quantum Foundations: Core Principles of Hamiltonian Mechanics and Embedding Theory

The electronic-structure Hamiltonian is a mathematical representation of the energy interactions within a molecular system. It is the cornerstone for predicting chemical properties, from reaction rates to spectroscopic behavior, by describing how electrons and nuclei interact under quantum mechanics. The exact, or first-quantized, form of the molecular Hamiltonian in atomic units is given by:

$$ H = -\sum_{i}\frac{\nabla^{2}_{\mathbf{R}_{i}}}{2M_{i}} - \sum_{i}\frac{\nabla^{2}_{\mathbf{r}_{i}}}{2} - \sum_{i,j}\frac{Z_{i}}{|\mathbf{R}_{i} - \mathbf{r}_{j}|} + \sum_{i,j>i}\frac{Z_{i}Z_{j}}{|\mathbf{R}_{i} - \mathbf{R}_{j}|} + \sum_{i,j>i}\frac{1}{|\mathbf{r}_{i} - \mathbf{r}_{j}|} $$

where $\mathbf{R}_{i}$, $M_{i}$, and $Z_{i}$ are the position, mass, and charge of the nuclei, respectively, and $\mathbf{r}_{i}$ denotes the position of the electrons [1]. Solving the Schrödinger equation for this Hamiltonian is computationally intractable for all but the smallest systems: the problem is classified as NP-hard, with the resources for an exact solution scaling exponentially with electron count [2]. This necessitates a range of approximations, such as fixing the nuclear positions and expanding the electronic problem in a finite orbital basis, leading to the second-quantized formalism,

$$ H = \sum_{p,q} h_{pq} c_p^\dagger c_q + \frac{1}{2} \sum_{p,q,r,s} h_{pqrs} c_p^\dagger c_q^\dagger c_r c_s $$

where $c^\dagger$ and $c$ are fermionic creation and annihilation operators, and $h_{pq}$ and $h_{pqrs}$ are the one- and two-electron integrals evaluated in a chosen basis set of molecular orbitals [3]. This form is particularly amenable to both classical computational chemistry methods and emerging quantum algorithms.
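One reason the second-quantized form suits quantum algorithms is that each fermionic operator can be rewritten as a sum of Pauli strings. The following is a minimal sketch of the Jordan-Wigner mapping in plain Python, assuming the convention that qubit state |1> means "occupied" and that the leftmost character of a string acts on qubit 0. The helper names (`pauli_product`, `multiply`, `add`, `creation`, `annihilation`) are illustrative and do not belong to any library.

```python
from itertools import product

# Cyclic single-qubit products: X*Y = iZ, Y*Z = iX, Z*X = iY.
_CYCLIC = {("X", "Y"): "Z", ("Y", "Z"): "X", ("Z", "X"): "Y"}

def pauli_product(a, b):
    """Return (phase, pauli) such that a * b = phase * pauli."""
    if a == "I":
        return 1, b
    if b == "I":
        return 1, a
    if a == b:
        return 1, "I"
    if (a, b) in _CYCLIC:
        return 1j, _CYCLIC[(a, b)]
    return -1j, _CYCLIC[(b, a)]  # reversed order flips the sign

def multiply(op_a, op_b):
    """Product of two operators stored as {pauli_string: coefficient}."""
    result = {}
    for (sa, ca), (sb, cb) in product(op_a.items(), op_b.items()):
        phase, chars = 1, []
        for a, b in zip(sa, sb):
            ph, c = pauli_product(a, b)
            phase *= ph
            chars.append(c)
        key = "".join(chars)
        result[key] = result.get(key, 0) + ca * cb * phase
    return {k: v for k, v in result.items() if abs(v) > 1e-12}

def add(op_a, op_b):
    """Sum of two operators, dropping terms that cancel."""
    result = dict(op_a)
    for k, v in op_b.items():
        result[k] = result.get(k, 0) + v
    return {k: v for k, v in result.items() if abs(v) > 1e-12}

def annihilation(p, n):
    """Jordan-Wigner image of c_p on n qubits: Z_0...Z_{p-1} (X_p + iY_p)/2."""
    prefix, suffix = "Z" * p, "I" * (n - p - 1)
    return {prefix + "X" + suffix: 0.5, prefix + "Y" + suffix: 0.5j}

def creation(p, n):
    """Jordan-Wigner image of c_p^dagger: Z_0...Z_{p-1} (X_p - iY_p)/2."""
    prefix, suffix = "Z" * p, "I" * (n - p - 1)
    return {prefix + "X" + suffix: 0.5, prefix + "Y" + suffix: -0.5j}

# Number operator on orbital 1 of a 2-qubit register.
number_1 = multiply(creation(1, 2), annihilation(1, 2))
# Anticommutator {c_0, c_1^dagger}, which must vanish for fermions.
anticommutator = add(multiply(annihilation(0, 2), creation(1, 2)),
                     multiply(creation(1, 2), annihilation(0, 2)))
print(number_1, anticommutator)
```

The number operator comes out as (I - Z)/2 on qubit 1, and the anticommutator is empty. The chain of Z operators in front of each ladder operator is what enforces the fermionic sign structure.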

Computational Protocols for Hamiltonian Construction

Protocol: Building a Molecular Hamiltonian with PennyLane

This protocol details the construction of a qubit-representation of a molecular Hamiltonian using the PennyLane quantum chemistry library, suitable for subsequent quantum simulation [3].

- Objective: To generate the second-quantized electronic Hamiltonian for a molecule and map it to a qubit operator via the Jordan-Wigner transformation.

- Primary Software: Python with PennyLane and QChem module.

Step-by-Step Procedure:

- Define Molecular Structure: Input the atomic symbols and their nuclear coordinates (in atomic units). Alternatively, read the structure from an external `.xyz` file: `symbols, coordinates = qchem.read_structure("path/to/file.xyz")`.

- Create a Molecule Object: Instantiate the `Molecule` class with the defined structure.

- Construct the Qubit Hamiltonian: Call the `molecular_hamiltonian()` function. This single step encapsulates several automated sub-steps:

  - Hartree-Fock Calculation: A differentiable HF solver is run to obtain the molecular orbitals.

  - Integral Evaluation: The one- and two-electron integrals ($h_{pq}$, $h_{pqrs}$) are computed in the molecular orbital basis.

  - Qubit Mapping: The fermionic Hamiltonian is mapped to a linear combination of Pauli strings using the Jordan-Wigner transformation.

Troubleshooting Tip: For larger molecules, the number of qubits required can become prohibitive. The number of spin-orbitals (and thus qubits) is determined by the size of the atomic basis set used in the HF calculation.
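To make the qubit-count concern concrete, the sketch below estimates the Jordan-Wigner register size from the basis set alone. It assumes the minimal STO-3G basis (1 function for H and He, 5 for Li to Ne, 9 for Na to Ar) and two spin-orbitals per spatial orbital. The function name and the optional `active_orbitals` argument are hypothetical helpers for illustration, not part of PennyLane.

```python
# Contracted STO-3G basis functions per atom (first three rows only).
STO3G_FUNCTIONS = {
    **{el: 1 for el in ("H", "He")},
    **{el: 5 for el in ("Li", "Be", "B", "C", "N", "O", "F", "Ne")},
    **{el: 9 for el in ("Na", "Mg", "Al", "Si", "P", "S", "Cl", "Ar")},
}

def jordan_wigner_qubits(symbols, active_orbitals=None):
    """Qubits needed under Jordan-Wigner: one per spin-orbital."""
    spatial = sum(STO3G_FUNCTIONS[s] for s in symbols)
    if active_orbitals is not None:
        # Restricting to an active space caps the number of spatial orbitals.
        spatial = min(spatial, active_orbitals)
    return 2 * spatial

print(jordan_wigner_qubits(["H", "H"]))                        # H2
print(jordan_wigner_qubits(["O", "H", "H"]))                   # H2O
print(jordan_wigner_qubits(["C"] * 6 + ["H"] * 6))             # benzene
print(jordan_wigner_qubits(["O", "H", "H"], active_orbitals=4))
```

Even in a minimal basis the count grows quickly with molecule size, which is why restricting the calculation to an active space is a common first remedy.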

Protocol: Extracting an Effective Spin Hamiltonian with EOM-CC

This protocol uses Equation-of-Motion Coupled-Cluster (EOM-CC) theory to extract a low-energy effective Hamiltonian, such as a Heisenberg or Hubbard model, from an ab initio calculation. This is a key embedding technique for studying magnetic systems and strongly correlated materials [4].

- Objective: To construct a coarse-grained effective Hamiltonian from EOM-CC wave functions for a selected model space.

- Primary Software: Q-Chem electronic structure package.

Step-by-Step Procedure:

- Input File Preparation: Create a Q-Chem input file (`input.dat`) with the following key sections:

  - `$molecule`: Specify the molecular geometry, charge, and multiplicity.

  - `$rem`: Set calculation parameters.

  - `$eff_ham`: Define the states and model for the effective Hamiltonian.

- Run the Calculation: Execute the job: `qchem input.dat output.dat`.

- Output Analysis: Upon completion, Q-Chem produces the effective Hamiltonian in two forms:

  - Bloch's Form: A non-Hermitian effective Hamiltonian.

  - des Cloizeaux's Form: A Hermitian effective Hamiltonian derived from Bloch's form.

  The output includes the matrix representation of the effective Hamiltonian in the specified model space, which can be directly compared to experimental parameters.

Troubleshooting Tip: The localization procedure for the open-shell orbitals (CC_OSFNO) may fail if multiple orbitals reside on the same radical center, making the Boys localization ill-conditioned.
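The relationship between the two output forms can be illustrated on a small matrix. The sketch below is a generic textbook construction using NumPy, not Q-Chem's implementation: the 4x4 matrix `h_toy` is an invented stand-in for the full Hamiltonian, and the model space is taken to be its first two basis states.

```python
import numpy as np

def effective_hamiltonians(h_full, model_idx):
    """Bloch and des Cloizeaux effective Hamiltonians for the lowest states.

    The number of target states equals the size of the model space.
    """
    n = len(model_idx)
    energies, vectors = np.linalg.eigh(h_full)
    e_target = np.diag(energies[:n])
    # Columns: target eigenvectors projected onto the model space.
    c = vectors[model_idx, :n]
    # Bloch form: built from the non-orthogonal projections, so it is
    # generally non-Hermitian but reproduces the target energies.
    h_bloch = c @ e_target @ np.linalg.inv(c)
    # des Cloizeaux form: symmetrically orthonormalize the projections first.
    s_vals, s_vecs = np.linalg.eigh(c.T @ c)
    c_orth = c @ s_vecs @ np.diag(s_vals ** -0.5) @ s_vecs.T
    h_dc = c_orth @ e_target @ c_orth.T
    return h_bloch, h_dc, energies[:n]

# Hypothetical toy Hamiltonian: two low-lying states weakly coupled to two high ones.
h_toy = np.array([[0.0, 0.2, 0.3, 0.1],
                  [0.2, 0.1, 0.1, 0.4],
                  [0.3, 0.1, 2.0, 0.2],
                  [0.1, 0.4, 0.2, 2.5]])

h_bloch, h_dc, exact = effective_hamiltonians(h_toy, [0, 1])
print(h_bloch)
print(h_dc)
print(exact)
```

Both 2x2 matrices have the two lowest exact energies as eigenvalues. Only the des Cloizeaux form is symmetric, which is what makes it directly comparable to a Heisenberg- or Hubbard-type model.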

Performance and Method Comparison

The choice of method for Hamiltonian generation involves a trade-off between accuracy and computational cost. The table below summarizes key metrics for several prominent approaches.

Table 1: Comparison of Electronic Structure Methods for Hamiltonian Construction

| Method | Theoretical Scaling | Typical System Size | Key Output | Primary Application Context |

|---|---|---|---|---|

| Density Functional Theory (DFT) [5] [6] | $\mathcal{O}(N^3)$ | 100s of atoms | Ground-state energy, electron density | High-throughput screening of materials and large molecules. |

| Coupled Cluster (CCSD(T)) [6] | $\mathcal{O}(N^7)$ | ~10 atoms | High-accuracy energies & properties | "Gold standard" for small molecules; benchmark for ML models. |

| Deep Learning (NextHAM) [7] | $\mathcal{O}(N)$ (after training) | 10,000s of atoms (materials) | Real- & k-space Hamiltonian | Rapid, DFT-accurate prediction for diverse materials. |

| Variational Quantum Eigensolver (VQE) [1] | Circuit depth dependent | ~10s of qubits (small molecules) | Ground-state energy estimate | Quantum hardware simulation of small molecules. |

| PennyLane (QChem Module) [3] | $\mathcal{O}(N^4)$ (integral eval.) | ~10s of atoms (small molecules) | Qubit Hamiltonian | Quantum algorithm development and simulation. |

Recent advancements in machine learning (ML) are dramatically altering this landscape. ML models like NextHAM can predict the entire Hamiltonian with DFT-level accuracy but at a fraction of the computational cost, achieving errors as low as 1.417 meV in real-space and suppressing spin-orbit coupling block errors to the sub-$\mu$eV scale [7]. Similarly, models like MEHnet, trained on CCSD(T) data, can extrapolate to predict properties of molecules with thousands of atoms at CCSD(T)-level accuracy, far exceeding the traditional limits of the method [6].

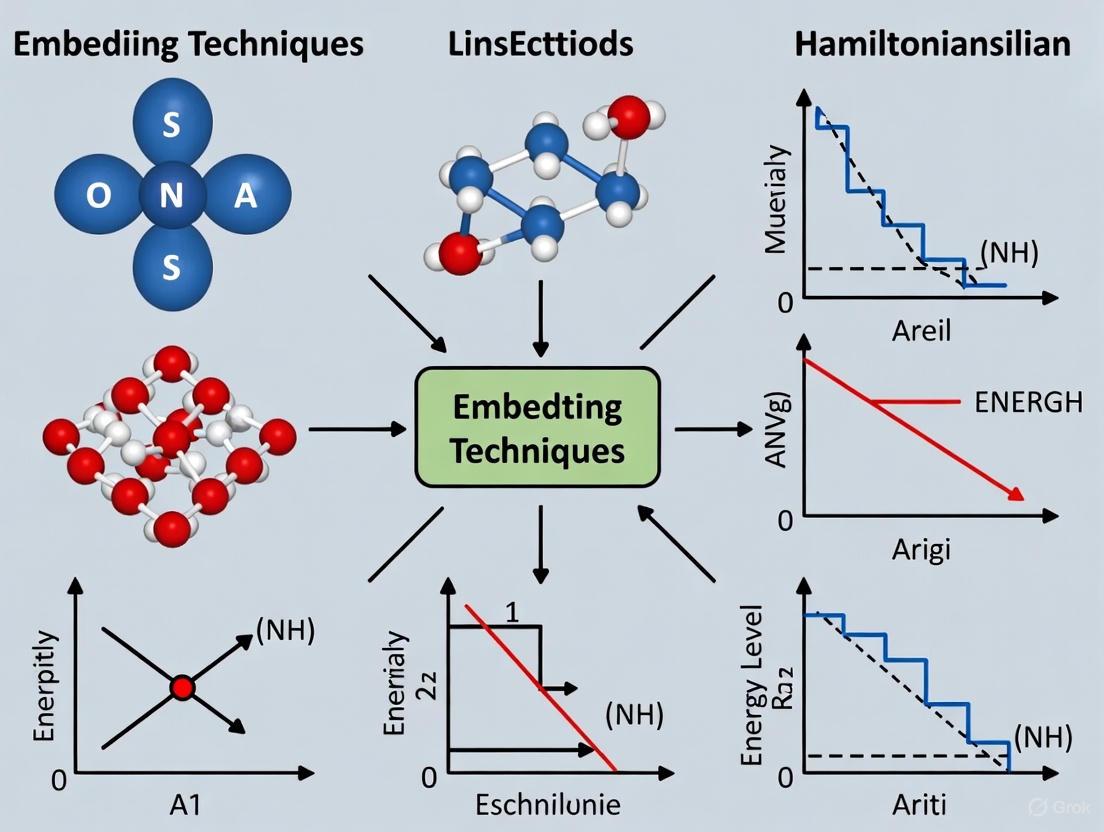

Workflow Visualization

The following diagram illustrates the two primary computational pathways for obtaining and utilizing electronic-structure Hamiltonians, integrating both classical and quantum approaches.

Essential Research Reagents and Computational Tools

A successful computational research program in this field relies on a suite of software and theoretical "reagents." The table below catalogs key resources for constructing and leveraging electronic-structure Hamiltonians.

Table 2: Key Research Reagents and Computational Tools

| Tool / Concept | Type | Primary Function | Relevance to Hamiltonian Methods |

|---|---|---|---|

| Atomic Orbital Basis Set | Theoretical Basis | Expands molecular orbitals as a linear combination of atomic functions. | Determines the dimensionality and accuracy of the second-quantized Hamiltonian [3]. |

| Pseudopotential | Computational Approximation | Replaces core electrons with an effective potential. | Reduces computational cost for heavier elements; crucial for materials with many atoms [7]. |

| E(3)-Equivariant Neural Network | Machine Learning Architecture | A network that respects Euclidean symmetries (rotation, translation, reflection). | Ensures predicted Hamiltonians correctly transform under symmetry operations, guaranteeing physical soundness [7] [6]. |

| Jordan-Wigner Transformation | Algorithm | Maps fermionic creation/annihilation operators to Pauli spin operators. | Encodes the electronic Hamiltonian onto a quantum computer's qubits [3] [1]. |

| Unitary Coupled Cluster (UCC) Ansatz | Quantum Circuit Template | A parameterized quantum circuit inspired by coupled-cluster theory. | Forms the ansatz for the VQE algorithm to prepare molecular wavefunctions on quantum hardware [1]. |

| Zeroth-Step Hamiltonian ($H^{(0)}$) | Physical Descriptor | Constructed from initial electron density without self-consistent cycles. | Serves as an informative input and initial guess for ML models, simplifying the learning task [7]. |

| Bloch's Formalism | Mathematical Framework | A theory for projecting the full Hamiltonian into a reduced model space. | The foundation for extracting effective Hamiltonians from high-level wavefunctions like EOM-CC [4]. |

The Role of Embedding Techniques in Multi-Scale Quantum Simulations

Embedding techniques have emerged as a pivotal strategy for enabling quantum simulations of chemically and biologically relevant systems on contemporary noisy intermediate-scale quantum (NISQ) devices. These methods address a fundamental challenge: the systems of greatest scientific interest, such as proteins in drug discovery or materials with specific quantum defects, are far too large to be treated directly on current quantum hardware [8]. Embedding methods strategically partition a large system, applying high-accuracy quantum computational resources only to a critical subregion, while treating the surrounding environment with more efficient classical methods [9]. This multi-scale approach is crucial for achieving quantum utility—solving problems beyond the reach of classical computers—in practical applications. By systematically reducing the quantum resource requirements, these techniques provide a realistic pathway for applying near-term quantum computers to significant problems in chemistry and materials science [9] [8] [10].

Embedding techniques can be broadly categorized by their approach to partitioning the physical system and the level of theory used for each segment. The following table summarizes the primary methods discussed in the literature.

Table 1: Key Embedding Techniques for Quantum Simulations

| Method | Primary Partitioning Strategy | Embedding Theory | Key Advantage | Example Application |

|---|---|---|---|---|

| QM/MM [9] [8] | Chemical intuition; region of interest vs. environment | Quantum Mechanics in Molecular Mechanics | Allows inclusion of large, complex biomolecular environments. | Proton transfer in water; protein-ligand binding [9] [8]. |

| Projection-Based Embedding (PBE) [9] | Chemically-motivated orbital partitioning | Quantum Mechanics in Quantum Mechanics (e.g., high-level in DFT) | Allows different QM theories within a single calculation. | Active subsystem treatment within a larger QM region [9]. |

| Density Matrix Embedding Theory (DMET) [9] [8] | Schmidt decomposition; fragment + bath orbitals | Quantum Mechanics in Quantum Mechanics | Systematically captures entanglement with the environment. | Hydrogen rings; Hubbard models [8]. |

| Bootstrap Embedding (BE) [8] | Overlapping fragments of the system | Quantum Mechanics in Quantum Mechanics | Robust recovery of local correlation effects for large QM regions. | Drug binding energy calculations [8]. |

| Quantum Defect Embedding Theory (QDET) [10] | Active region (defect) vs. bulk | Strongly-correlated methods in Density Functional Theory | Enables calculation of strongly-correlated states in materials. | Spin defects in diamond, SiC, and MgO [10]. |

These methods can be nested to create powerful multi-layered workflows. For instance, a large biological system can first be partitioned via QM/MM. The resulting QM region, which may still be too large for a quantum computer, can be further reduced using BE or DMET, finally yielding a fragment small enough for simulation on NISQ hardware [9] [8].

Detailed Experimental Protocol: A QM/QM/MM Workflow

This protocol details a multi-scale embedding workflow for calculating the binding energy of a ligand to a protein, a critical task in drug development, by coupling QM/MM with Bootstrap Embedding (BE) [8].

Preparation of the Molecular System

- System Setup: Obtain the atomic coordinates for the protein-ligand complex (e.g., from a crystal structure, PDB code 19G for MCL-1 inhibitor 19G). Place the solvated protein-ligand system in a simulation box using classical molecular dynamics (MD) software such as GROMACS or AMBER.

- Classical MD Simulation: Run an MD simulation to sample thermally accessible configurations of the complex. This generates an ensemble of structures, which is essential for calculating averaged binding energies.

- Configuration Selection: Extract multiple snapshots from the equilibrated portion of the MD trajectory for subsequent quantum embedding calculations.

QM/MM Region Partitioning

- Automated QM Region Selection: For each snapshot, automatically select the QM region. This typically includes the ligand and key protein residues involved in binding (e.g., via covalent bonds, electrostatic interactions, or hydrogen bonding). The rest of the protein and solvent constitutes the MM region.

- Energy Calculation with Electrostatic Embedding: Perform a QM/MM calculation for the entire system. The total energy in the electrostatic embedding scheme is given by:

$$ E_{QM/MM}^{Total} = E_{QM}^{QM} + E_{MM}^{MM} + E_{QM-MM} $$

Here, $E_{QM}^{QM}$ is the energy of the QM region calculated with a quantum mechanical method, $E_{MM}^{MM}$ is the energy of the MM region from a force field, and $E_{QM-MM}$ describes the interaction between the two regions. A critical component is that the point charges of the MM atoms are included as one-electron terms in the QM Hamiltonian, polarizing the QM wavefunction [9] [8].

Bootstrap Embedding of the QM Region

The QM region from the previous step is often too large. BE is used to break it into manageable fragments.

- Fragment Generation: Partition the orbitals of the QM region into multiple, overlapping fragments. Common strategies are based on localized orbitals.

- Fragment Calculation & Bath Construction: For each fragment:

- Construct the bath orbitals from the rest of the system using a Schmidt decomposition.

- Form the embedded fragment Hamiltonian, which includes the fragment and its bath orbitals.

- Self-Consistent Loop: Solve for the ground state of each embedded fragment Hamiltonian using a high-level solver (e.g., CCSD on a classical computer or VQE/QPE on a quantum computer). The individual fragment solutions are used to update a global chemical potential, which is included in each fragment Hamiltonian to ensure particle conservation across the system. Iterate until the chemical potential and fragment densities converge [8].

- Total Energy Assembly: The total energy for the QM region is assembled from the converged fragment calculations. This energy then serves as the QM-region term of the overarching QM/MM energy expression given under QM/MM Region Partitioning.
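The role of the global chemical potential in the self-consistent loop can be sketched with a toy model. Here each high-level fragment solve is replaced by a stand-in function that fills fixed energy levels with a smooth occupation. That stand-in, the level values, and the bisection bounds are all assumptions made for illustration. In a real BE calculation the electron counts would come from the CCSD or VQE fragment solutions.

```python
import math

def fragment_electrons(levels, mu, temperature=0.05):
    """Toy stand-in for a fragment solver: smeared occupation of fixed levels."""
    return sum(2.0 / (1.0 + math.exp((e - mu) / temperature)) for e in levels)

def solve_chemical_potential(fragments, n_target, lo=-5.0, hi=5.0, tol=1e-8):
    """Bisect on a global mu until fragment electron counts sum to n_target."""
    mu, total = lo, 0.0
    for _ in range(200):
        mu = 0.5 * (lo + hi)
        total = sum(fragment_electrons(levels, mu) for levels in fragments)
        if abs(total - n_target) < tol:
            break
        if total < n_target:
            lo = mu   # too few electrons: raise the chemical potential
        else:
            hi = mu   # too many electrons: lower it
    return mu, total

# Three hypothetical fragments, each with one low and one high level (hartree).
fragments = [[-1.0, 0.2], [-0.8, 0.3], [-0.9, 0.25]]
mu, total = solve_chemical_potential(fragments, n_target=6)
print(mu, total)
```

The same value of `mu` is applied to every fragment, so particle conservation is enforced for the system as a whole rather than fragment by fragment.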

Binding Energy Calculation

- Perform the above procedure (system preparation, QM/MM region partitioning, and Bootstrap Embedding) for the protein-ligand complex, the isolated protein, and the isolated ligand.

- Calculate the binding energy as:

$$ \Delta E_{Bind} = E_{Complex} - E_{Protein} - E_{Ligand} $$

- Average the binding energy values obtained from all analyzed MD snapshots to get a final, statistically robust estimate.
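The final averaging step can be sketched as follows. The snapshot energies are invented placeholder values in hartree, not results from any calculation, and the function names are illustrative.

```python
import statistics

def binding_energy(e_complex, e_protein, e_ligand):
    """Binding energy of one snapshot: complex minus separated partners."""
    return e_complex - e_protein - e_ligand

def average_binding_energy(snapshots):
    """Mean binding energy and standard error of the mean over MD snapshots."""
    values = [binding_energy(*s) for s in snapshots]
    mean = statistics.mean(values)
    if len(values) > 1:
        sem = statistics.stdev(values) / len(values) ** 0.5
    else:
        sem = float("nan")
    return mean, sem

# Hypothetical (E_complex, E_protein, E_ligand) triples, one per snapshot.
snapshots = [
    (-1250.431, -1100.210, -150.190),
    (-1250.428, -1100.208, -150.191),
    (-1250.435, -1100.211, -150.189),
]
mean, sem = average_binding_energy(snapshots)
print(f"binding energy = {mean:.4f} +/- {sem:.4f} hartree")
```

Reporting the standard error alongside the mean shows whether the number of snapshots is sufficient for the precision required.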

The following workflow diagram illustrates this multi-scale protocol:

Table 2: Key Resources for Multi-Scale Quantum Embedding Experiments

| Resource Category | Item / Software / Code | Function / Purpose |

|---|---|---|

| Simulation Software | GROMACS, AMBER | Performs classical Molecular Dynamics (MD) to generate ensemble of system configurations. |

| Quantum Chemistry Codes | PySCF, Q-Chem, WEST | Performs electronic structure calculations (DFT, CCSD); implements embedding methods (e.g., DMET, QDET). |

| Quantum Algorithm Frameworks | Qiskit, Cirq, PennyLane | Implements VQE and other quantum algorithms; provides interfaces for quantum hardware/simulators. |

| High-Performance Computing (HPC) | CPU/GPU Clusters (e.g., SuperMUC-NG) | Executes classical parts of the workflow: MD, MM, and quantum circuit simulation/control. |

| Quantum Processing Units (QPUs) | Superconducting (e.g., IQM 20-qubit), Photonic | Hardware for executing the quantum core of the calculation (e.g., fragment Hamiltonian in BE). |

Quantum Embedding and Effective Hamiltonians

At the heart of many embedding techniques lies the construction of an effective Hamiltonian that describes the physics within a targeted subspace. The general goal is to find a simpler Hamiltonian, H_eff, whose low-energy eigenvalues and eigenvectors approximate those of the full, intractable system Hamiltonian, H_full.

The process of deriving and solving an effective Hamiltonian for a fragment in Density Matrix Embedding Theory (DMET) or Bootstrap Embedding (BE) can be visualized as follows:

A specific and powerful approach for generating effective Hamiltonians, particularly for spin defects, involves a generalized Schrieffer-Wolff transformation [11]. This method aims to derive an effective spin-Hamiltonian acting on a subspace of the full electronic Hilbert space.

Protocol: Deriving an Effective Spin-Hamiltonian via Generalized Schrieffer-Wolff Transformation

- Identify Spin-Like Orbitals: For a given molecule or solid-state defect, analyze the full electronic Hamiltonian. A metric is optimized to identify the optimal linear combination of orbitals that best represent the system's significant spin degrees of freedom [11].

- Define the Target Subspace: Define the low-energy subspace ($P$) where the charge degrees of freedom for electrons in the identified spin-orbitals are frozen. The complementary high-energy space is $Q$.

- Apply the Transformation: Perform a unitary transformation $\tilde{H} = e^{S} H e^{-S}$, with $S$ anti-Hermitian, such that the transformed Hamiltonian $\tilde{H}$ has no matrix elements connecting the $P$ and $Q$ subspaces. To lowest order, the generator $S$ of this transformation is found by solving the equation $[H_0, S] = V_{PQ}$, where $H_0$ is the diagonal part of the Hamiltonian and $V_{PQ}$ is the off-diagonal coupling. With this sign, the first-order term $[S, H_0]$ in the expansion of $\tilde{H}$ cancels $V_{PQ}$.

- Project the Effective Hamiltonian: The effective Hamiltonian acting within the spin subspace is then given by $H_{eff} = P \tilde{H} P$. This $H_{eff}$ will typically take the form of a spin-bath model (e.g., Heisenberg or XYZ model with external fields), which is much more amenable to simulation and analysis, both on classical and quantum computers [11].

This approach is vital for focusing quantum computational resources on the most relevant—and often most quantum—aspects of a system's behavior.

Embedding techniques represent a pragmatic and powerful paradigm for harnessing the potential of quantum computing to address real-world scientific problems. By strategically combining different levels of theory—from classical force fields to density functional theory to high-level wavefunction methods on quantum processors—these multi-scale approaches effectively bridge the gap between the scale of current quantum hardware and the complexity of systems in chemistry, materials science, and drug discovery. The development of robust experimental protocols, such as the coupled QM/QM/MM workflow and the use of effective Hamiltonian methods, provides a clear roadmap for researchers aiming to achieve quantum utility. As quantum hardware continues to mature, these embedding strategies will undoubtedly evolve, further expanding the frontiers of what is possible in computational simulation.

The journey from the Schrödinger equation to Density Functional Theory (DFT) represents a cornerstone of modern computational physics and chemistry, enabling the prediction of material and molecular properties from first principles. This theoretical foundation is particularly crucial within embedding technique and effective Hamiltonian research, which aims to make quantum mechanical simulations of large, complex systems computationally tractable. The fundamental challenge in quantum chemistry involves solving the many-body Schrödinger equation for systems with interacting electrons; while theoretically precise, this approach becomes computationally intractable for all but the smallest molecules due to its exponential scaling with system size. This computational barrier motivated the development of DFT, which reformulates the problem using the electron density as the fundamental variable instead of the many-body wavefunction, dramatically reducing the computational complexity while maintaining quantum accuracy.

Embedding techniques and effective Hamiltonian methods represent a logical extension of this philosophical approach, creating powerful multiscale simulations where different spatial regions of a system are treated at different levels of theoretical rigor. For researchers and drug development professionals, these methods enable the precise quantum mechanical treatment of a critical active site, such as a drug binding pocket or catalytic center, while embedding it within a larger environment treated with less computationally expensive methods. This practical compromise makes it feasible to study biologically relevant systems with quantum accuracy, bridging the gap between theoretical physics and applied pharmaceutical research. The following sections detail the formal theoretical foundations, contemporary computational frameworks, and practical experimental protocols that make these advanced simulations possible.

Theoretical Foundations: From First Principles to Effective Models

The Schrödinger Equation and its Computational Challenges

The time-independent Schrödinger equation, ĤΨ = EΨ, provides the complete non-relativistic quantum mechanical description of a molecular system. Here, Ĥ represents the Hamiltonian operator, Ψ is the many-electron wavefunction, and E is the total energy. The Hamiltonian encompasses all kinetic energy contributions from electrons and nuclei, as well as all potential energy contributions arising from electron-electron, nucleus-nucleus, and electron-nucleus interactions. The wavefunction Ψ depends on the spatial coordinates and spins of all electrons, making it an incredibly complex mathematical object.

For any system containing more than a few electrons, an exact solution of the Schrödinger equation is out of reach because of its computational scaling. The coupled nature of electron motions, known as electron correlation, requires sophisticated wavefunction-based methods whose cost grows steeply with system size N: from polynomial, O(N⁵) to O(N⁷), for perturbative and coupled-cluster treatments, up to exponential, O(e^N), for exact diagonalization. This scaling wall fundamentally limits the application of accurate ab initio quantum chemistry to small molecules, creating a pressing need for alternative approaches that can deliver quantitative accuracy for larger, chemically and biologically relevant systems.

Density Functional Theory: The Electron Density as Fundamental Variable

Density Functional Theory bypasses the complexity of the many-electron wavefunction by using the electron density ρ(r) as the central quantity. The Hohenberg-Kohn theorems provide the rigorous foundation for this approach: the first theorem establishes a one-to-one mapping between the ground-state electron density and the external potential, meaning all system properties are, in principle, determined by the density alone. The second theorem provides a variational principle for the energy functional E[ρ], guaranteeing that the exact density minimizes this functional to yield the ground-state energy.

The practical implementation of DFT occurs through the Kohn-Sham scheme, which introduces a fictitious system of non-interacting electrons that exactly reproduces the density of the true, interacting system. The Kohn-Sham equations resemble Schrödinger-like single-particle equations:

[-½∇² + v_eff(r)] φ_i(r) = ε_i φ_i(r)

where v_eff(r) = v_ext(r) + ∫(ρ(r′)/|r-r′|)dr′ + v_XC(r) is an effective potential, and φ_i(r) are the Kohn-Sham orbitals, from which the density is rebuilt as ρ(r) = Σ_i |φ_i(r)|² over the occupied orbitals. The critical, and unknown, component is the exchange-correlation potential v_XC(r), the functional derivative of the exchange-correlation energy functional E_XC[ρ], which must account for all quantum mechanical effects not captured by the other terms. The accuracy of a DFT calculation hinges on the approximation used for this functional. Modern functional families (e.g., LDA, GGA, meta-GGA, hybrid) represent different trade-offs between computational cost and accuracy for various chemical properties.
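As a concrete instance of the simplest approximation, the sketch below evaluates the exchange part of the local density approximation (Dirac/Slater exchange) in atomic units. It then checks numerically that the exchange potential is the derivative of the exchange energy density with respect to the density. Correlation is omitted, and the function names are illustrative.

```python
import math

# Dirac exchange constant: e_x(rho) = -C_X * rho^(4/3), atomic units.
C_X = 0.75 * (3.0 / math.pi) ** (1.0 / 3.0)

def lda_exchange_energy_density(rho):
    """Exchange energy per unit volume of a uniform electron gas."""
    return -C_X * rho ** (4.0 / 3.0)

def lda_exchange_potential(rho):
    """v_x = d e_x / d rho = -(3 rho / pi)^(1/3)."""
    return -((3.0 * rho / math.pi) ** (1.0 / 3.0))

rho, h = 0.3, 1e-6
finite_difference = (lda_exchange_energy_density(rho + h)
                     - lda_exchange_energy_density(rho - h)) / (2 * h)
print(lda_exchange_potential(rho), finite_difference)
```

More elaborate functionals follow the same pattern, with an energy expression and its derivative, but depend on density gradients or orbital-dependent quantities as well.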

Table 1: Comparison of Quantum Chemical Methods and Their Scaling

| Method | Fundamental Variable | Computational Scaling | Key Limitations |

|---|---|---|---|

| Wavefunction Theory | Many-electron Wavefunction | O(e^N) to O(N⁷) | Computationally prohibitive for large systems |

| Density Functional Theory | Electron Density | O(N³) | Accuracy depends on approximate exchange-correlation functional |

| Deep-Learning Hamiltonians | Structure → Hamiltonian | O(N) after training | Requires extensive training data; transferability concerns |

Effective Hamiltonians and Embedding Philosophies

Effective Hamiltonian methods continue the theme of computational expedience by strategically reducing the complexity of the quantum mechanical problem. The core idea involves projecting the full Hamiltonian onto a significantly smaller, physically relevant subspace of the complete Hilbert space. This projection produces an effective Hamiltonian H_eff that operates only within this targeted subspace but incorporates the physical influence of the excluded degrees of freedom. For example, in studying magnetism, one might derive an effective spin Hamiltonian (e.g., Heisenberg model) where the electronic charge degrees of freedom have been integrated out, leaving only spin operators [11].

Embedding techniques operationalize this concept spatially by partitioning a system into multiple domains treated at different levels of theory. The total system energy in such a hybrid quantum mechanics/molecular mechanics (QM/MM) framework can be expressed through an additive scheme:

$$ E_{QM/MM}^{(add)} = E_{QM}^{QM} + E_{MM}^{MM} + E_{QM/MM}^{full} $$

where $E_{QM}^{QM}$ is the quantum mechanical energy of the core region, $E_{MM}^{MM}$ is the molecular mechanics energy of the environment, and $E_{QM/MM}^{full}$ captures the interaction energy between them [9]. These interactions can be treated with varying sophistication, from simple mechanical embedding (using MM force fields for cross-terms) to electrostatic embedding (including MM point charges in the QM Hamiltonian) and polarizable embedding (allowing for mutual polarization between regions).

Computational Frameworks and Deep Learning Advances

Modern Deep Learning Approaches for Electronic Structure

Recent breakthroughs have married DFT with deep learning to overcome the traditional accuracy-efficiency dilemma. The DeepH method represents a pioneering approach that uses message-passing neural networks to learn the mapping from atomic structure {R} to the DFT Hamiltonian H_DFT({R}) [12]. This method respects the gauge covariance of the Hamiltonian matrix—its transformation under changes of coordinate system or basis functions—through the use of local coordinates and atomic-centered orbitals. By learning this mapping, DeepH and similar models can bypass the expensive self-consistent field procedure of DFT, reducing the computational cost from O(N³) per structure to O(N) after training.

The NextHAM framework further advances this paradigm by introducing several key innovations [7]. It uses the zeroth-step Hamiltonian H⁽⁰⁾, constructed from the initial electron density without self-consistency, as both an informative physical descriptor for the network input and as a baseline for correction. The network then predicts ΔH = H⁽ᵀ⁾ - H⁽⁰⁾ rather than the full Hamiltonian H⁽ᵀ⁾, significantly simplifying the learning task. NextHAM also employs a joint optimization framework that simultaneously refines both real-space (R-space) and reciprocal-space (k-space) Hamiltonians, preventing error amplification and the emergence of unphysical "ghost states" that can occur when only the real-space Hamiltonian is considered.
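The point about error amplification between real space and reciprocal space can be illustrated with a one-orbital, one-dimensional tight-binding chain, where H(k) = Σ_R exp(ikR) H(R). The chain, its hopping value, and the 1 meV perturbation are illustrative assumptions unrelated to the NextHAM benchmark data.

```python
import cmath
import math

def bloch_hamiltonian(h_real, k):
    """H(k) = sum_R exp(i k R) H(R) for a single-orbital 1D chain (a = 1)."""
    return sum(cmath.exp(1j * k * r) * h for r, h in h_real.items())

def max_band_error(h_ref, h_pred, n_k=101):
    """Largest deviation of the predicted band from the reference band."""
    ks = [-math.pi + 2 * math.pi * i / (n_k - 1) for i in range(n_k)]
    return max(abs(bloch_hamiltonian(h_pred, k) - bloch_hamiltonian(h_ref, k))
               for k in ks)

# Real-space blocks keyed by lattice vector R, energies in eV (hypothetical).
h_ref = {0: 0.0, 1: -1.0, -1: -1.0}
h_pred = {0: 0.0, 1: -1.001, -1: -1.001}   # each hopping is off by 1 meV

print(max_band_error(h_ref, h_pred))
```

Each real-space element is wrong by only 1 meV, yet the band is wrong by about 2 meV at the zone centre and boundary because the errors from different lattice vectors add in phase. With many neighbours and orbitals the accumulation can be much larger, which motivates monitoring the k-space Hamiltonian directly.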

Table 2: Deep Learning Frameworks for Hamiltonian Prediction

| Method | Key Innovation | Architecture | Reported Accuracy |

|---|---|---|---|

| DeepH [12] | Learns gauge-covariant DFT Hamiltonian | Message-Passing Neural Network | Millielectronvolt scale errors |

| NextHAM [7] | Correction approach using zeroth-step Hamiltonian | E(3)-Equivariant Transformer | 1.417 meV error; spin-off-diagonal blocks <1μeV |

| QM/MM with Quantum Computing [9] | Embeds quantum computation in classical MD | Hybrid HPC + QPU workflow | Enabled 77-qubit scale quantum simulations |

Hybrid Quantum-Classical Computing Platforms

The integration of quantum processing units (QPUs) with conventional high-performance computing (HPC) creates hybrid platforms that strategically deploy quantum resources where they provide maximum benefit. Current quantum algorithms like the variational quantum eigensolver (VQE) and quantum-selected configuration interaction (QSCI) have enabled simulations up to 77 qubits, but these have been largely limited to gas-phase calculations of small molecules [9].

The QM/MM framework provides a practical pathway for integrating these quantum computations into workflows for studying realistic chemical systems in condensed phases. In this approach, a quantum computational method (e.g., QSCI) treats the electronically complex core region, while the extensive environment is handled with classical molecular mechanics. This layered strategy was demonstrated in a proof-of-concept study of proton transfer in water, where quantum computation was deployed within a larger classical molecular dynamics simulation [9]. Additional resource reduction techniques, such as qubit tapering and the contextual subspace method, can further reduce qubit requirements to make the quantum computation feasible on near-term hardware.

Experimental Protocols and Application Notes

Protocol: Deep-Learning Hamiltonian Prediction with NextHAM

Purpose: To predict electronic-structure Hamiltonians and derived properties (e.g., band structures) with DFT-level accuracy but dramatically improved computational efficiency.

Principles: The protocol learns the mapping from atomic structure to the final DFT Hamiltonian after self-consistent convergence, using a correction approach that simplifies the learning task.

Procedure:

- Dataset Curation: Compile a diverse set of material structures encompassing the chemical elements and structural types of interest. For the Materials-HAM-SOC benchmark, 17,000 structures spanning 68 elements were used, with DFT calculations explicitly including spin-orbit coupling effects [7].

- Input Feature Preparation: For each structure, compute the zeroth-step Hamiltonian

H⁽⁰⁾directly from the initial electron densityρ⁽⁰⁾(r)(the sum of atomic charge densities) without performing self-consistent iterations. - Network Training:

- Use an E(3)-equivariant Transformer architecture that respects physical symmetries (translations, rotations, inversions).

- Train the network to predict the correction ΔH = H⁽ᵀ⁾ - H⁽⁰⁾ rather than the full Hamiltonian H⁽ᵀ⁾.

- Employ a joint loss function that optimizes both real-space Hamiltonian accuracy and reciprocal-space (band structure) accuracy.

- Inference and Validation:

- For new structures, compute H⁽⁰⁾ and pass it through the trained network to obtain ΔH_predicted.

- Reconstruct the full Hamiltonian as H⁽ᵀ⁾_predicted = H⁽⁰⁾ + ΔH_predicted.

- Validate predictions against reference DFT calculations for both Hamiltonian matrix elements and derived electronic properties.

Troubleshooting: If band structure accuracy is unsatisfactory despite good real-space Hamiltonian accuracy, increase the weight of the k-space loss component during training. If generalization across diverse elements is poor, ensure the training dataset adequately represents the chemical diversity and consider increasing model capacity.
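The correction target and the joint loss from the procedure above can be illustrated with a small NumPy sketch. It is not the NextHAM implementation: the one-dimensional two-orbital chain, the numbers in `h_ref` and `h0`, and the names `joint_loss` and `k_weight` are all assumptions chosen for illustration. The sketch only shows how a real-space error and a band-energy error can be combined, and how raising `k_weight` shifts emphasis toward k-space accuracy.

```python
import numpy as np

def bloch_hamiltonian(h_real, k):
    """Sum real-space blocks H(R) into H(k) = sum_R H(R) * exp(i k R) on a 1D lattice."""
    return sum(block * np.exp(1j * k * R) for R, block in h_real.items())

def band_energies(h_real, k_points):
    """Eigenvalues of H(k) at each k-point (ascending order from eigvalsh)."""
    return np.array([np.linalg.eigvalsh(bloch_hamiltonian(h_real, k)) for k in k_points])

def joint_loss(h_pred, h_ref, k_points, k_weight=1.0):
    """Real-space mean absolute error plus k_weight times band-energy mean absolute error."""
    real_term = np.mean([np.abs(h_pred[R] - h_ref[R]).mean() for R in h_ref])
    band_term = np.abs(band_energies(h_pred, k_points) - band_energies(h_ref, k_points)).mean()
    return real_term + k_weight * band_term

# Toy reference Hamiltonian: on-site block H(0) and hoppings H(+1), H(-1) = H(+1)^T.
hop = np.array([[0.0, 0.5], [0.2, 0.0]])
h_ref = {0: np.diag([-1.0, 1.0]), 1: hop, -1: hop.T}

# Hypothetical zeroth-step guess H0 and the correction a network would be trained on.
h0 = {R: 0.9 * block for R, block in h_ref.items()}
delta_h_target = {R: h_ref[R] - h0[R] for R in h_ref}

# Reconstruction step: H_pred = H0 + delta_H (here with a perfect correction).
h_pred = {R: h0[R] + delta_h_target[R] for R in h_ref}

k_points = np.linspace(-np.pi, np.pi, 9)
loss_perfect = joint_loss(h_pred, h_ref, k_points)
loss_h0_only = joint_loss(h0, h_ref, k_points, k_weight=2.0)
print(loss_perfect, loss_h0_only)
```

In a real training loop the two terms would be evaluated on network outputs and differentiated automatically; the weighting between them is the knob referred to in the troubleshooting note.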

Protocol: Multiscale Workflow with Quantum-Classical Embedding

Purpose: To deploy quantum computational resources for studying electronically complex regions embedded within large-scale classical environments.

Principles: The workflow layers multiple embedding techniques (classical QM/MM, projection-based embedding, and qubit subspace methods) to progressively reduce the problem size until it is feasible on near-term quantum hardware [9].

Procedure:

- System Preparation: Obtain an initial configuration of the full system (e.g., a solute in solvent, enzyme with substrate) through classical molecular dynamics simulation.

- QM/MM Partitioning:

- Identify the chemically active region (e.g., reaction center, catalytic site) for quantum treatment.

- Treat the remaining environment with molecular mechanics force fields.

- Implement electrostatic embedding by including MM point charges as one-electron terms in the quantum Hamiltonian:

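$H_{\text{embed}} = H_{\text{QM}} - \sum_i \frac{q_i}{|\mathbf{r} - \mathbf{R}_i|}$ for an electron at position $\mathbf{r}$ (atomic units, electron charge $-1$), where $q_i$ are the MM point charges located at $\mathbf{R}_i$.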

- Projection-Based Embedding (PBE):

- Within the QM region, further partition into an active subsystem (containing the strongly correlated electrons) and its environment.

- Use PBE to embed the high-level quantum calculation (e.g., QSCI on quantum processor) within a lower-level DFT description of the environmental orbitals.

- Qubit Subspace Reduction:

- Identify and exploit approximate symmetries in the embedded active subsystem Hamiltonian.

- Apply qubit tapering or contextual subspace methods to reduce the number of qubits required for the quantum computation.

- Quantum Computation:

- Execute quantum-selected configuration interaction on the reduced Hamiltonian using available QPU resources.

- Integrate the quantum-computed energy and properties back through the embedding layers to obtain the full system description.

Validation: Compare energy differences (e.g., reaction barriers, binding affinities) against classical high-level ab initio benchmarks where computationally feasible. Verify consistency across different embedding boundary placements when possible.
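The electrostatic embedding term from the QM/MM partitioning step can be sketched as follows. This is a minimal illustration, assuming atomic units and an explicit electron charge of -1, so the potential energy of an electron at a point is minus the sum of q_i over distance. The function name `embedding_potential` and the water-like charges and coordinates are hypothetical; a production code would evaluate this operator in the basis set of the QM region rather than at isolated points.

```python
import numpy as np

def embedding_potential(points, mm_positions, mm_charges):
    """Potential energy of an electron (charge -1, atomic units) at each point
    due to MM point charges: v(r) = -sum_i q_i / |r - R_i|."""
    diff = points[:, None, :] - mm_positions[None, :, :]   # shape (n_points, n_mm, 3)
    dist = np.linalg.norm(diff, axis=-1)                   # shape (n_points, n_mm)
    return -(mm_charges[None, :] / dist).sum(axis=1)

# Hypothetical MM water molecule (positions in bohr, illustrative partial charges).
mm_positions = np.array([[0.0, 0.0, 5.0],
                         [1.43, 0.0, 6.1],
                         [-1.43, 0.0, 6.1]])
mm_charges = np.array([-0.834, 0.417, 0.417])

# Two sample points inside the QM region.
points = np.array([[0.0, 0.0, 0.0],
                   [0.0, 0.0, 2.0]])
v_embed = embedding_potential(points, mm_positions, mm_charges)
print(v_embed)
```

Because the term depends only on electron coordinates, it enters the quantum Hamiltonian as a one-electron operator and leaves the two-electron integrals unchanged.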

The Scientist's Toolkit: Essential Research Reagents and Computational Materials

Table 3: Essential Computational Tools for Effective Hamiltonian Research

| Tool/Resource | Type | Function/Purpose | Example Applications |

|---|---|---|---|

| Zeroth-Step Hamiltonian H⁽⁰⁾ [7] | Physical Descriptor | Provides initial electronic structure estimate without SCF cycles; simplifies learning target for deep neural networks | Input feature for the NextHAM method and baseline from which the correction ΔH is regressed |

| E(3)-Equivariant Neural Networks [7] | Algorithmic Framework | Maintains physical symmetry constraints (rotation, translation, inversion) during Hamiltonian prediction | DeepH, NextHAM, and other symmetry-aware deep learning models |

| Projection-Based Embedding (PBE) [9] | Embedding Method | Enables different levels of theory within a quantum mechanical region | Coupling high-level quantum methods with DFT in active space studies |

| Quantum-Selected CI (QSCI) [9] | Quantum Algorithm | Provides high-accuracy solutions for strongly correlated electronic systems on quantum processors | Embedded quantum computations for active sites in enzymes |

| Qubit Tapering Techniques [9] | Resource Reduction | Exploits symmetries to reduce qubit requirements for quantum simulations | Enables larger active space calculations on limited-qubit QPUs |

| Materials-HAM-SOC Dataset [7] | Benchmark Data | Diverse collection of 17,000 material structures with high-quality DFT Hamiltonians | Training and evaluation of generalizable deep learning models |

Applications in Drug Development and Materials Science

The application of these advanced quantum embedding and effective Hamiltonian methods spans from fundamental materials science to practical pharmaceutical development. In drug discovery, these techniques enable quantum-accurate modeling of drug-receptor interactions, enzymatic reaction mechanisms, and spectroscopic properties of biological molecules—systems far too large for conventional quantum chemical treatment. The ability to embed a high-level quantum description of an active site within its protein and solvent environment provides unprecedented insight into molecular recognition and catalytic processes.

In materials science, deep-learning Hamiltonian approaches like DeepH and NextHAM have demonstrated remarkable success in studying complex material systems such as twisted van der Waals heterostructures, where subtle interlayer interactions and moiré patterns give rise to novel electronic phenomena [12]. The computational efficiency of these methods—delivering DFT-level precision with dramatically reduced computational cost—opens opportunities for high-throughput screening of candidate materials for energy storage, catalysis, and quantum information applications. The sub-μeV accuracy achieved for spin-orbit coupling interactions in NextHAM is particularly relevant for designing spintronic materials and understanding magnetic properties [7].

The continued development of these embedding techniques, particularly their integration with emerging quantum computing resources, promises to further expand the boundaries of quantum mechanical simulation. As quantum hardware matures, the hierarchical embedding strategies described in these protocols will enable researchers to tackle increasingly complex chemical and biological systems, potentially transforming the design processes for new pharmaceuticals and advanced functional materials.

The Evolution of Effective Hamiltonians in Computational Chemistry

The effective Hamiltonian method is a cornerstone of computational chemistry and materials science: it makes simulation tractable for complex quantum systems that are out of reach for direct first-principles approaches. Traditionally, these methods reduce the complexity of many-body quantum problems by retaining only the most relevant degrees of freedom. The field is now being reshaped by advances in machine learning (ML) and quantum computing (QC), which change how effective Hamiltonians are constructed, parameterized, and deployed, and which remove the earlier dependence on manual parameterization and predefined interaction terms. Two developments stand out: hybrid ML approaches for automatic Hamiltonian construction, and quantum embedding techniques that enable efficient simulation on early quantum hardware. Together they extend the accessible scale and complexity of quantum simulations, including super-large-scale atomic structures and quantum materials.

The Paradigm Shift in Parameterization and Construction

From Manual Fitting to Automated Machine Learning

The traditional parameterization of effective Hamiltonians has relied on manually fitting coupling parameters to first-principles calculations for structures with specific distortions, a process often described as "tricky and complex" that sometimes requires approximations leading to uncertainties or manual adjustment to reproduce experimental results [13]. This paradigm is being displaced by active machine learning approaches that automate and enhance this process. For instance, Bayesian linear regression is now employed for on-the-fly parameterization of general effective Hamiltonians during molecular dynamics simulations [13]. This method actively predicts energy, forces, stress, and their uncertainties at each simulation step, intelligently deciding whether to invoke costly first-principles calculations to retrain parameters, thereby ensuring reliability while minimizing computational expense [13].

A notable advancement in this domain is the Lasso-GA Hybrid Method (LGHM), which combines Lasso regression with genetic algorithms to rapidly construct effective Hamiltonian models without requiring manually predefined interaction terms [14]. This approach offers broad applicability to both magnetic systems (e.g., spin Hamiltonians) and atomic displacement models. The methodology has been successfully validated on monolayer CrI₃ and Fe₃GaTe₂, where it not only identified key interaction terms with high fitting accuracy but also reproduced experimental magnetic ground states and Curie temperatures through subsequent Monte Carlo simulations [14].

Table 1: Comparison of Traditional and Machine Learning Approaches for Effective Hamiltonian Construction

| Aspect | Traditional Approaches | Modern ML Approaches |

|---|---|---|

| Parameterization | Manual fitting with predefined interactions [13] | Automated active learning (e.g., Bayesian regression) [13] |

| Interaction Terms | Manually predefined, limiting flexibility [14] | Automatically identified via Lasso-GA Hybrid Method [14] |

| Computational Cost | High, requiring many first-principles calculations [13] | Reduced via on-the-fly uncertainty quantification [13] |

| Applicability | Limited to systems with known interactions | Broad applicability to complex and novel systems [14] |

Protocol: Active Learning for Effective Hamiltonian Parameterization

Objective: To parameterize an effective Hamiltonian for super-large-scale atomic structures (>10⁷ atoms) using an active machine learning approach [13].

Materials and System Setup:

- Software Requirements: Molecular dynamics package with machine learning capabilities.

- Hardware Requirements: High-performance computing cluster for first-principles calculations.

- System Preparation: Define the reference structure with high symmetry (e.g., cubic perovskite for ABX₃ compounds). Initialize the supercell with appropriate boundary conditions.

Procedure:

- Initialize General Effective Hamiltonian: Formulate the Hamiltonian to include local modes ({s₁}, {s₂}, ⋯), homogeneous strain tensor η, and atomic occupation variables {σ} [13].

- Define Potential Energy Terms: Structure the potential energy (E_pot) to include:

- E_single: Self-energies of each mode (single-site terms)

- E_strain: Strain tensor-related energies

- E_inter: Two-body interactions between different local modes

- E_spring: Spring terms for alloying effects [13]

- Run Molecular Dynamics with Uncertainty Quantification:

- At each MD step, predict energy, forces, and stress using current Hamiltonian parameters.

- Calculate uncertainties of these predictions.

- If uncertainties exceed a predefined threshold, perform first-principles calculations to generate new training data.

- Update Hamiltonian parameters using Bayesian linear regression with the expanded dataset [13].

- Validation: Compare simulated properties (e.g., phase transitions, domain structures) with experimental data and conventional first-principles calculations to validate the parameterized Hamiltonian.

Troubleshooting Tips:

- For systems with complex interactions, ensure the general Hamiltonian includes sufficient higher-order coupling terms.

- Adjust the uncertainty threshold to balance computational cost and accuracy.

- For perovskites, explicitly include dipolar mode vector {u}, antiferrodistortive pseudovector {ω}, and acoustic mode {v} as the fundamental modes [13].
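The uncertainty-gated loop in the procedure above can be sketched with a small Bayesian linear regression. This is an illustrative toy rather than the method of [13]: the polynomial `descriptors`, the stand-in `reference_energy` (which replaces a first-principles call), the prior and noise precisions `alpha` and `beta`, and the `threshold` value are all assumptions. Random sampling of a single mode amplitude stands in for the molecular dynamics trajectory.

```python
import numpy as np

class BayesianLinearModel:
    """Bayesian linear regression with a Gaussian prior (precision alpha) and
    Gaussian noise (precision beta); returns predictive mean and standard deviation."""

    def __init__(self, n_features, alpha=1e-2, beta=1e4):
        self.beta = beta
        self.precision = alpha * np.eye(n_features)  # posterior precision matrix
        self.xty = np.zeros(n_features)              # accumulated beta * X^T y

    def update(self, x, y):
        """Add one training sample (feature vector x, energy y)."""
        self.precision += self.beta * np.outer(x, x)
        self.xty += self.beta * y * x

    def predict(self, x):
        cov = np.linalg.inv(self.precision)
        mean = x @ cov @ self.xty
        std = np.sqrt(1.0 / self.beta + x @ cov @ x)
        return mean, std

def descriptors(u):
    """Toy energy basis for one local-mode amplitude u: [u^2, u^4, u^6]."""
    return np.array([u**2, u**4, u**6])

def reference_energy(x):
    """Stand-in for a first-principles calculation (hypothetical parameters)."""
    return x @ np.array([0.5, -1.2, 2.0])

rng = np.random.default_rng(0)
model = BayesianLinearModel(n_features=3)
threshold = 0.05          # uncertainty above this triggers a reference calculation
n_reference_calls = 0

for step in range(200):
    x = descriptors(rng.uniform(-1.0, 1.0))
    energy, sigma = model.predict(x)
    if sigma > threshold:
        model.update(x, reference_energy(x))   # retrain on new reference data
        n_reference_calls += 1

print(n_reference_calls)
```

Lowering `threshold` increases the number of reference calculations and tightens the fit; raising it does the opposite, which is the cost and accuracy trade-off noted in the troubleshooting tips.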

Expanding Applications to Complex Materials Systems

Bridging Scales from Molecules to Materials

Effective Hamiltonian methods have dramatically expanded their applicability across multiple scales and material classes. In quantum chemistry, coupled cluster theory—a cornerstone of molecular electronic structure calculation—has been successfully extended to real metals through effective Hamiltonian techniques, overcoming the challenge of extremely large supercells previously needed to capture long-range electronic correlation effects [15]. This approach utilizes the transition structure factor, which maps electronic excitations from the Hartree-Fock wavefunction, to create an effective Hamiltonian with significantly fewer finite-size effects than conventional periodic boundary conditions [15]. This advancement not only enables accurate quantum chemical treatment of metals but also reduces computational costs by two orders of magnitude compared to previous methods [15].

For complex perovskites and ferroelectric materials, effective Hamiltonians now successfully describe systems with intricate couplings between various order parameters. The modern generalized effective Hamiltonian incorporates multiple degrees of freedom including local dipolar modes, antiferrodistortive (AFD) pseudovectors, inhomogeneous strain vectors (acoustic modes), and atomic occupation variables [13]. This comprehensive approach has enabled the discovery and explanation of complex polar textures such as ferroelectric vortices, labyrinthine domains, skyrmions, and merons in perovskite systems [13].

Table 2: Key Research Reagent Solutions for Effective Hamiltonian Applications

| Research Reagent | Function/Description | Application Examples |

|---|---|---|

| Local Mode Basis | Represents local collective atomic displacements in specified patterns [13] | Dipolar modes in perovskites, phonon modes |

| Transition Structure Factor | Maps electronic excitations from reference wavefunction [15] | Coupled cluster calculations for metals |

| Bayesian Linear Regression | Active learning algorithm for parameter uncertainty quantification [13] | On-the-fly Hamiltonian parameterization |

| Lasso-GA Hybrid (LGHM) | Machine learning method combining Lasso and genetic algorithms [14] | Automatic identification of interaction terms |

| Genetic Algorithms | Optimization method for selecting optimal interactions [14] | Hamiltonian term selection and parameter fitting |

Protocol: Lasso-GA Hybrid Method (LGHM) for Spin Hamiltonians

Objective: To construct an effective spin Hamiltonian for magnetic materials using the Lasso-GA Hybrid Method [14].

Computational Resources:

- Software: Python with scikit-learn for Lasso regression, custom genetic algorithm implementation.

- Hardware: Workstation or computing cluster for Monte Carlo simulations.

- Data Requirements: First-principles calculations or experimental data for training.

Methodology:

- System Preparation:

- Generate training data from first-principles calculations or experimental measurements for the target system.

- For magnetic systems like monolayer CrI₃ or Fe₃GaTe₂, calculate energies for various spin configurations [14].

- Feature Space Construction:

- Create an extensive library of possible interaction terms (Heisenberg exchange, single-ion anisotropy, Dzyaloshinskii-Moriya, higher-order interactions).

- Avoid manual preselection to maintain flexibility in capturing complex interactions [14].

- Lasso Regression Phase:

- Apply Lasso regression to the training data with the full feature library.

- Utilize L1 regularization to drive coefficients of irrelevant interaction terms to zero.

- Identify a subset of potentially important interactions for further optimization [14].

- Genetic Algorithm Optimization:

- Implement a genetic algorithm with the interaction terms as genes.

- Define fitness function based on prediction accuracy for energies and properties.

- Evolve populations of Hamiltonian models through selection, crossover, and mutation.

- Converge to an optimal set of interaction terms and parameters [14].

- Validation with Monte Carlo Simulation:

- Use the optimized Hamiltonian in Monte Carlo simulations to calculate experimental observables.

- Verify reproduction of experimental properties (e.g., magnetic ground state, Curie temperature) [14].

- For Fe₃GaTe₂, confirm that single-ion anisotropy and Heisenberg interaction yield out-of-plane ferromagnetic ground state, with fourth-order interactions contributing significantly to high Curie temperature [14].
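The Monte Carlo validation step can be illustrated with a minimal Metropolis sampler. The sketch below uses a two-dimensional nearest-neighbor Ising model as a stand-in for a fitted spin Hamiltonian; the lattice size, coupling, temperatures, and the function name `metropolis_magnetization` are assumptions for illustration and do not correspond to CrI₃ or Fe₃GaTe₂. It shows the qualitative signature one would look for: a magnetized state at low temperature and a disordered one at high temperature.

```python
import math
import random

def metropolis_magnetization(size, temperature, coupling=1.0, sweeps=200, seed=0):
    """Mean absolute magnetization per site of a 2D Ising model, E = -J * sum s_i s_j,
    sampled with single-spin-flip Metropolis updates and periodic boundaries."""
    rng = random.Random(seed)
    spins = [[1] * size for _ in range(size)]   # start from the ordered state
    samples = []
    for sweep in range(sweeps):
        for _ in range(size * size):
            i, j = rng.randrange(size), rng.randrange(size)
            neighbors = (spins[(i + 1) % size][j] + spins[(i - 1) % size][j]
                         + spins[i][(j + 1) % size] + spins[i][(j - 1) % size])
            delta_e = 2.0 * coupling * spins[i][j] * neighbors   # energy cost of flipping
            if delta_e <= 0 or rng.random() < math.exp(-delta_e / temperature):
                spins[i][j] = -spins[i][j]
        if sweep >= sweeps // 2:                                  # discard first half as burn-in
            samples.append(abs(sum(map(sum, spins))) / size**2)
    return sum(samples) / len(samples)

m_low = metropolis_magnetization(size=8, temperature=1.0)
m_high = metropolis_magnetization(size=8, temperature=10.0)
print(m_low, m_high)
```

Scanning the temperature between these two limits and locating the drop in magnetization gives a rough estimate of the ordering temperature for the model being validated.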

Key Considerations:

- The hybrid approach overcomes limitations of using either Lasso or genetic algorithms alone.

- Balance model complexity with predictive accuracy through careful regularization.

- Ensure training data adequately samples the relevant configuration space.
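The two-stage idea (Lasso screening followed by a genetic search over term subsets) can be sketched on synthetic data. This is not the LGHM code of [14]: the Ising ring, the candidate term library (`J1` to `J5`, `K4`), the true coefficients, the hand-written coordinate-descent solver, and all hyperparameters are assumptions for illustration. In practice scikit-learn's `Lasso` could replace `lasso_cd`.

```python
import numpy as np

rng = np.random.default_rng(0)
spins = rng.choice([-1, 1], size=(200, 12))          # 200 random configurations, 12-site ring

def pair_term(s, shift):
    """Sum over sites of s_i * s_(i+shift) with periodic boundaries."""
    return (s * np.roll(s, -shift, axis=1)).sum(axis=1)

def four_spin_term(s):
    """Sum over sites of s_i * s_(i+1) * s_(i+2) * s_(i+3)."""
    return (s * np.roll(s, -1, 1) * np.roll(s, -2, 1) * np.roll(s, -3, 1)).sum(axis=1)

names = ["J1", "J2", "J3", "J4", "J5", "K4"]
X = np.column_stack([pair_term(spins, k) for k in range(1, 6)] + [four_spin_term(spins)]).astype(float)
y = X @ np.array([-1.0, 0.3, 0.0, 0.0, 0.0, -0.2]) + rng.normal(0.0, 0.02, len(X))

def lasso_cd(X, y, alpha, n_iter=200):
    """Coordinate descent for (1/2n) * ||y - Xw||^2 + alpha * ||w||_1."""
    n, p = X.shape
    w, z = np.zeros(p), (X ** 2).sum(axis=0) / n
    for _ in range(n_iter):
        for j in range(p):
            rho = X[:, j] @ (y - X @ w + X[:, j] * w[j]) / n
            w[j] = np.sign(rho) * max(abs(rho) - alpha, 0.0) / z[j]   # soft threshold
    return w

def fitness(mask, X, y, penalty=0.01):
    """Lower is better: RMSE of a least-squares refit plus a penalty per retained term."""
    if not mask.any():
        return np.sqrt(np.mean(y ** 2))
    coef, *_ = np.linalg.lstsq(X[:, mask], y, rcond=None)
    return np.sqrt(np.mean((y - X[:, mask] @ coef) ** 2)) + penalty * mask.sum()

def genetic_search(X, y, n_pop=12, n_gen=20):
    """Evolve boolean masks over candidate terms with elitism, crossover, and mutation."""
    p = X.shape[1]
    pop = rng.random((n_pop, p)) < 0.5
    pop[0] = True                                     # seed with the full candidate set
    for _ in range(n_gen):
        order = np.argsort([fitness(m, X, y) for m in pop])
        parents = pop[order[: n_pop // 2]]
        children = [pop[order[0]].copy()]             # elitism: keep the current best
        while len(children) < n_pop:
            a, b = parents[rng.integers(len(parents), size=2)]
            child = np.where(rng.random(p) < 0.5, a, b)   # uniform crossover
            child ^= rng.random(p) < 0.1                   # bit-flip mutation
            children.append(child)
        pop = np.array(children)
    return pop[np.argmin([fitness(m, X, y) for m in pop])]

w_lasso = lasso_cd(X, y, alpha=0.1)
candidates = np.flatnonzero(np.abs(w_lasso) > 1e-8)   # terms surviving L1 screening
selected = candidates[genetic_search(X[:, candidates], y)]
coef, *_ = np.linalg.lstsq(X[:, selected], y, rcond=None)
model = dict(zip([names[i] for i in selected], coef))
print(model)
```

The Lasso stage narrows the library cheaply, and the genetic stage then trades fit quality against the number of terms through the `penalty` in `fitness`, which is one simple way to balance complexity and accuracy.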

Quantum Computing and Hamiltonian Embedding Techniques

Hamiltonian Embedding for Quantum Simulation

A groundbreaking development in effective Hamiltonian theory is the emergence of Hamiltonian embedding techniques for quantum computation. This approach simulates a desired sparse Hamiltonian by embedding it into the evolution of a larger, more structured quantum system that can be efficiently manipulated using hardware-efficient operations [16] [17] [18]. Unlike theoretically appealing but impractical black-box quantum algorithms, Hamiltonian embedding leverages both the sparsity structure of the input data and the resource efficiency of underlying quantum hardware, enabling deployment of interesting quantum applications on current quantum computers [16].

This technique fundamentally expands the hardware-efficiently manipulable Hilbert space by embedding target Hamiltonians as blocks within larger, more structured Hamiltonians that are easier to implement on physical devices [17] [18]. By evolving this larger system using native hardware operations, the desired simulation occurs naturally within a protected subspace, bypassing inefficient compilation steps and significantly reducing computational overhead [18]. This approach has successfully demonstrated experimental realization of quantum walks on complicated graphs (e.g., binary trees, glued-tree graphs), quantum spatial search, and simulation of real-space Schrödinger equations on current trapped-ion and neutral-atom platforms [17] [18].
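The block-embedding idea can be illustrated numerically. In the sketch below, a 2×2 target Hamiltonian sits in the upper-left block of a 4×4 matrix, and a large energy penalty on the remaining states keeps the dynamics inside the target subspace. The penalty construction, the matrices, and the function names are assumptions made for this illustration; they are not the hardware-specific embeddings of [16] [17] [18]. The point shown is only that evolution in the larger system, restricted to the subspace, approaches the target evolution as the penalty grows.

```python
import numpy as np

def evolve(hamiltonian, state, time):
    """Apply exp(-i H t) to a state using the eigendecomposition of a Hermitian H."""
    energies, vectors = np.linalg.eigh(hamiltonian)
    return vectors @ (np.exp(-1j * energies * time) * (vectors.conj().T @ state))

def embedding_error(target, coupling, penalty_strength, time=1.0):
    """Distance between the exact target evolution and the embedded evolution
    projected back onto the target block."""
    n, m = target.shape[0], coupling.shape[1]
    embedded = np.block([[target, coupling],
                         [coupling.conj().T, penalty_strength * np.eye(m)]])
    psi_target = np.zeros(n, dtype=complex)
    psi_target[0] = 1.0
    psi_embedded = np.zeros(n + m, dtype=complex)
    psi_embedded[0] = 1.0                      # same state, padded with zeros
    exact = evolve(target, psi_target, time)
    approx = evolve(embedded, psi_embedded, time)[:n]
    return np.linalg.norm(exact - approx)

target = np.array([[0.0, 1.0], [1.0, 0.0]])    # toy target Hamiltonian (hypothetical)
coupling = 0.5 * np.eye(2)                      # unwanted coupling out of the subspace

err_weak = embedding_error(target, coupling, penalty_strength=10.0)
err_strong = embedding_error(target, coupling, penalty_strength=1000.0)
print(err_weak, err_strong)
```

With zero coupling the two evolutions agree to numerical precision; with nonzero coupling the deviation shrinks as the penalty increases, which is the sense in which the subspace is protected in this toy model.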

Advanced Algorithmic Approaches

Beyond basic embedding, sophisticated product formulas like the Trotter Heuristic Resource Improved Formulas for Time-dynamics (THRIFT) have been developed for quantum simulation of systems with hierarchical energy scales [19]. These algorithms generate decompositions of the evolution operator into products of simple unitaries directly implementable on quantum computers, achieving better error scaling than standard Trotter formulas—O(α²t²) for first-order THRIFT compared to O(αt²) for standard first-order formulas, where α represents the scale of the smaller Hamiltonian component [19]. This improved scaling is particularly valuable for simulating systems with strong short-range interactions and weaker long-range interactions, or systems subject to weak external perturbations [19].
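The baseline scaling quoted above, O(αt²) for a standard first-order product formula, can be checked numerically on a single qubit. The sketch below does not implement THRIFT; it only measures the error of the ordinary first-order Trotter splitting for H = H₀ + αH₁, with the Pauli matrices, the time step, and the function names chosen as illustrative assumptions. Halving α should roughly halve the error, whereas a formula with O(α²t²) scaling would reduce it by about a factor of four.

```python
import numpy as np

def propagator(hamiltonian, time):
    """exp(-i H t) for a Hermitian matrix via its eigendecomposition."""
    energies, vectors = np.linalg.eigh(hamiltonian)
    return (vectors * np.exp(-1j * energies * time)) @ vectors.conj().T

def first_order_trotter_error(h_large, h_small, alpha, time):
    """Spectral-norm error of exp(-i H0 t) exp(-i alpha H1 t) against the exact propagator."""
    exact = propagator(h_large + alpha * h_small, time)
    trotter = propagator(h_large, time) @ propagator(alpha * h_small, time)
    return np.linalg.norm(exact - trotter, ord=2)

pauli_z = np.array([[1, 0], [0, -1]], dtype=complex)   # dominant term H0
pauli_x = np.array([[0, 1], [1, 0]], dtype=complex)    # weak term H1
time = 0.1

errors = {alpha: first_order_trotter_error(pauli_z, pauli_x, alpha, time)
          for alpha in (0.1, 0.05, 0.025)}
ratio = errors[0.1] / errors[0.05]
print(errors, ratio)
```

The leading error term is proportional to the commutator of the two parts, which carries one factor of α; this is why the ratio stays close to 2 for small α and t.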

For practical implementation, comprehensive benchmarking frameworks have been established to evaluate quantum Hamiltonian simulation performance across various hardware platforms and algorithmic approaches [20]. These frameworks employ multiple fidelity assessment methods including comparison with noiseless simulators, exact diagonalization results, and scalable mirror circuit techniques to evaluate hardware performance beyond classical simulation capabilities [20]. Such systematic benchmarking reveals crucial crossover points where quantum hardware begins to outperform classical CPU/GPU simulators, providing valuable guidance for resource allocation in computational chemistry research [20].

Protocol: Hamiltonian Embedding for Quantum Simulation

Objective: To implement Hamiltonian embedding for sparse Hamiltonian simulation on quantum hardware [16] [17].

Hardware and Software Requirements:

- Quantum Hardware: Trapped-ion or neutral-atom platform with native operations.

- Software: Quantum programming framework (e.g., Qiskit, Amazon Braket SDK) [16].

- Classical Computation: Resource estimation tools for circuit compilation.

Implementation Steps:

- Circuit Compilation:

- Embedding Configuration:

- Select appropriate embedding strategy based on sparsity structure of target Hamiltonian.

- Configure the larger structured system to contain target Hamiltonian as a block [17] [18].

- For specific applications (quantum walk, spatial search, real-space simulation), use dedicated subdirectories and scripts [16].

- Resource Estimation:

- Execution and Validation:

Application Notes:

- Hamiltonian embedding is particularly effective for quantum walks on complicated graphs and real-space Schrödinger equation simulation [17].

- The technique significantly reduces resource requirements compared to standard binary encoding approaches [16].

- Current implementations focus on expanding the horizon of implementable quantum advantages in the NISQ era [18].

The evolution of effective Hamiltonians in computational chemistry represents a paradigm shift from empirically parameterized models to automated, physically rigorous frameworks capable of describing quantum systems across unprecedented scales. The integration of machine learning has transformed Hamiltonian construction from a manually intensive process to an automated, adaptive procedure, while quantum embedding techniques have opened pathways for exploiting emerging quantum hardware. These advances collectively address the dual challenges of accuracy and computational feasibility, enabling first-principles-quality modeling of systems from complex perovskites to real metals. As these methodologies continue to mature, they promise to further expand the frontiers of computational chemistry, providing increasingly powerful tools for understanding and designing complex materials and molecular systems with applications spanning drug development, energy storage, and quantum materials engineering.

Challenges in Achieving Generalization Across Diverse Material and Molecular Systems

The pursuit of generalized models—those capable of accurate prediction across diverse, unseen material and molecular systems—represents a central challenge in computational science. For researchers and drug development professionals, the ability to extrapolate beyond narrow training data is paramount for accelerating the design of novel materials and therapeutic compounds. This application note frames these challenges within the broader thesis of embedding techniques and effective Hamiltonian methods, which offer promising pathways to enhanced generalizability. We detail specific, quantifiable obstacles, provide actionable experimental protocols for model evaluation and development, and visualize key methodologies to equip scientists with the tools to advance this critical frontier.

Quantified Challenges in Model Generalization

The obstacles to achieving generalization are not merely theoretical; they manifest as measurable performance gaps in practical applications. The table below summarizes the core challenges and their documented impact on model performance.

Table 1: Core Challenges in Generalization for Material and Molecular Systems

| Challenge | Description | Quantitative Impact & Evidence |

|---|---|---|

| Data Scarcity & Cost | Key data modalities (e.g., microstructure images from SEM) are expensive and complex to acquire, leading to incomplete datasets. [21] | Models often lack crucial structural information, limiting predictive accuracy for real-world material systems. [21] |

| Distribution Shifts | Differences in the distribution of sequences or properties between training data and new, unseen datasets. [22] | A study of 19 state-of-the-art models showed a consistent reduction in performance as similarity between train and test data decreased. [22] |

| Multiscale Complexity | Material properties emerge from interactions across scales (composition, processing, structure, properties). [21] | Integrating multiscale features is crucial for accurate representation but remains a significant modeling challenge. [21] |

| Generalization Gap in Generative Models | Generative models for molecular systems can fail to sample all relevant configurations, struggling with data efficiency. [23] | Simple systems can remain out of reach for current generative models, highlighting a gap between theory and practice. [23] |

Protocols for Assessing and Improving Generalization

To systematically address the challenges outlined in Table 1, researchers can adopt the following detailed experimental protocols.

Protocol: Evaluating Generalizability with the Spectra Framework

This protocol provides a robust method for moving beyond traditional train-test splits to comprehensively evaluate a model's generalizability, particularly for molecular sequencing data [22].

- I. Objective: To characterize a model's performance as a function of the similarity between its training and test data, providing a more complete picture of its generalizability.

- II. Materials/Software:

- Molecular sequencing dataset with associated phenotypes (e.g., from benchmarks like PEER, ProteinGym, or TAPE).

- The machine learning model to be evaluated (e.g., CNN, GNN, LLM).

- Computational resources for model training and inference.

- (Optional) Spectra framework implementation.

- III. Step-by-Step Procedure:

- Define Spectral Property (SP): Identify a molecular sequence property (e.g., GC content, 3D protein structure) expected to influence generalizability for the specific task.

- Construct Spectral Property Graph: Calculate the defined SP for all sequences. Build a graph where nodes represent sequences and edges connect sequences that share the spectral property.

- Generate Adaptive Splits: Create a spectrum of train-test splits from the graph by varying an internal spectral parameter from 0 to 1. This produces splits with systematically decreasing cross-split overlap (the proportion of test samples that share an SP with the training set).

- Train and Test Model: For each generated split, train the model on the training set and evaluate its performance on the test set.

- Plot Spectral Performance Curve (SPC): Plot the model's performance (e.g., accuracy, F1-score) against the spectral parameter (or cross-split overlap).

- Calculate AUSPC: Compute the Area Under the Spectral Performance Curve (AUSPC) to obtain a single, summary metric of model generalizability.

- IV. Analysis and Interpretation:

- A model that maintains high performance even at low cross-split overlap (resulting in a flatter SPC and higher AUSPC) has superior generalizability.

- Compare AUSPC values across different models to select the most robust one for the task.

- The SPC reveals how performance degrades with increasing data distribution shifts, informing the model's safe operating domain.
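The two quantities used in this protocol, cross-split overlap and AUSPC, reduce to short computations. The sketch below is not the Spectra implementation of [22]; the function names, the edge-list representation of the spectral property graph, and the example numbers are assumptions for illustration. The area is computed with the trapezoidal rule over the spectral parameter.

```python
def cross_split_overlap(train_ids, test_ids, edges):
    """Fraction of test samples that share a spectral property (an edge)
    with at least one training sample."""
    train = set(train_ids)
    linked = {a for a, b in edges if b in train} | {b for a, b in edges if a in train}
    return sum(1 for t in test_ids if t in linked) / len(test_ids)

def auspc(spectral_parameters, performances):
    """Area under the spectral performance curve by the trapezoidal rule."""
    points = sorted(zip(spectral_parameters, performances))
    area = 0.0
    for (x0, y0), (x1, y1) in zip(points, points[1:]):
        area += 0.5 * (y0 + y1) * (x1 - x0)
    return area

# Hypothetical spectral property graph: an edge means two sequences share the property.
edges = [("a", "b"), ("c", "d")]
overlap = cross_split_overlap(train_ids=["a", "c"], test_ids=["b", "e"], edges=edges)

# Hypothetical model performance measured at three spectral parameter values.
area = auspc([0.0, 0.5, 1.0], [0.9, 0.7, 0.5])
print(overlap, area)
```

Here one of the two test samples is linked to the training set, so the overlap is 0.5, and the example curve gives an area of 0.7. Because the spectral parameter runs from 0 to 1, the AUSPC can be read as an average performance across the spectrum of splits.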

Protocol: Implementing a Multimodal Learning Framework for Robust Property Prediction

This protocol leverages multimodal learning to mitigate data scarcity and integrate multiscale knowledge, improving property prediction even when key modalities are missing. [21]

- I. Objective: To train a model that fuses information from multiple data types (e.g., processing parameters and microstructure images) to enable accurate material property prediction in the absence of complete data.

- II. Materials/Software:

- A multimodal material dataset (e.g., processing parameters and corresponding SEM images).

- Computational framework (e.g., Python, PyTorch/TensorFlow).

- Encoder networks (e.g., MLP for tabular data, CNN or ViT for images).

- III. Step-by-Step Procedure:

- Data Preparation: Compile a dataset where each sample consists of multiple modalities (e.g., Processing Parameters and SEM Image) and a target Property value.

- Structure-Guided Pre-training (SGPT):

a. Use separate encoders to project each modality (Processing, Structure, and their fusion) into latent representations.

b. Employ a contrastive learning loss to align these representations in a joint latent space. Use the fused representation as an anchor, pulling same-sample unimodal representations (positives) closer and pushing different-sample representations (negatives) apart. [21]

- Downstream Prediction:

a. Freeze the pre-trained encoders.

b. Attach a trainable predictor (e.g., a multi-layer perceptron) to the fused (or available unimodal) representation.

c. Train the predictor to regress or classify the target material property.

- IV. Analysis and Interpretation:

- Evaluate the model on test samples where one or more modalities (e.g., SEM images) are missing. The aligned latent space should allow the available modalities to provide a robust prediction.

- Compare performance against models trained only on single modalities to quantify the benefit of multimodal learning.
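The contrastive alignment step of the pre-training stage can be sketched with an InfoNCE-style loss in NumPy. This is a minimal illustration, not the MatMCL code of [21]: the use of InfoNCE specifically, the `temperature` value, the random stand-in embeddings, and the function names `info_nce` and `sgpt_loss` are assumptions. The fused representation acts as the anchor, the same-sample unimodal embedding is the positive, and embeddings from other samples in the batch are negatives.

```python
import numpy as np

def info_nce(anchors, candidates, temperature=0.1):
    """Mean InfoNCE loss: row i of `candidates` is the positive for anchor i,
    and every other row serves as a negative."""
    a = anchors / np.linalg.norm(anchors, axis=1, keepdims=True)
    c = candidates / np.linalg.norm(candidates, axis=1, keepdims=True)
    logits = a @ c.T / temperature                       # cosine similarities, scaled
    logits = logits - logits.max(axis=1, keepdims=True)  # numerical stability
    log_prob = logits - np.log(np.exp(logits).sum(axis=1, keepdims=True))
    return -np.mean(np.diag(log_prob))

def sgpt_loss(z_fused, z_processing, z_structure):
    """Align both unimodal embeddings with the fused anchor."""
    return info_nce(z_fused, z_processing) + info_nce(z_fused, z_structure)

rng = np.random.default_rng(0)
z_fused = rng.normal(size=(8, 16))                       # stand-in fused embeddings

# Well-aligned case: unimodal embeddings are small perturbations of the fused ones.
z_proc = z_fused + 0.05 * rng.normal(size=z_fused.shape)
z_struct = z_fused + 0.05 * rng.normal(size=z_fused.shape)
loss_aligned = sgpt_loss(z_fused, z_proc, z_struct)

# Misaligned case: each anchor is paired with another sample's embedding.
z_shuffled = np.roll(z_fused, 1, axis=0)
loss_shuffled = sgpt_loss(z_fused, z_shuffled, z_shuffled)
print(loss_aligned, loss_shuffled)
```

A lower loss for the aligned case is what training would drive toward; once the spaces are aligned, a predictor attached to one modality's embedding can still be used when the other modality is missing.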

Protocol: Incorporating Physics-Based Models into Generative Networks

This protocol outlines a strategy for integrating physics-based knowledge to improve the generalization and data efficiency of generative models for molecular systems. [23]

- I. Objective: To enhance a generative neural network's ability to produce statistically likely and physically valid molecular configurations by incorporating physics-based constraints.

- II. Materials/Software:

- A dataset of molecular configurations.

- A generative neural network (e.g., Normalizing Flow, Diffusion Model).

- Access to the potential energy function $U(x)$ for the molecular system.

- III. Step-by-Step Procedure:

- Base Model Training: Train a generative model to learn the equilibrium probability distribution $\varrho_{eq}(x) = Z^{-1} e^{-\beta U(x)}$ from the training data. [23]

- Physics-Based Coarse-Graining: Implement a physics-based coarse-graining (CG) map that reduces the dimensionality of the atomistic system, focusing on essential degrees of freedom. [23]

- Latent Space Dynamics: Carry out sampling or dynamics in this lower-dimensional latent (CG) space, which is more computationally efficient.

- Backmapping: Use the generative model to recover the full atomistic configuration from the sampled CG representation.

- Physics-Informed Loss: Augment the training loss function with a term that penalizes configurations with high potential energy $U(x)$, steering the model toward physically plausible configurations.

- IV. Analysis and Interpretation:

- Evaluate the generated ensembles by comparing statistical properties (e.g., radial distribution functions, free energy differences) against those obtained from traditional molecular dynamics simulations.

- Assess whether the model can discover rare events or stable molecular configurations not present in the original training data.
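The equilibrium distribution and the energy penalty used in this protocol can be made concrete on a one-dimensional toy system. The double-well `potential`, the grid, the value of `beta`, and the `energy_weight` are assumptions for illustration; a real application would evaluate the system's actual potential energy function on generated molecular configurations.

```python
import math

def potential(x):
    """Toy double-well potential U(x) = (x^2 - 1)^2 (hypothetical system)."""
    return (x * x - 1.0) ** 2

def boltzmann_density(grid, beta):
    """rho_eq(x) = exp(-beta * U(x)) / Z on a uniform grid, with Z from a Riemann sum."""
    dx = grid[1] - grid[0]
    weights = [math.exp(-beta * potential(x)) for x in grid]
    z = sum(weights) * dx
    return [w / z for w in weights]

def physics_informed_loss(data_loss, generated_samples, energy_weight=0.1):
    """Data-fitting loss plus a penalty on the mean potential energy of generated samples."""
    mean_energy = sum(potential(x) for x in generated_samples) / len(generated_samples)
    return data_loss + energy_weight * mean_energy

grid = [-3.0 + 0.01 * i for i in range(601)]
density = boltzmann_density(grid, beta=2.0)
total_probability = sum(density) * (grid[1] - grid[0])

loss_at_minima = physics_informed_loss(1.0, [1.0, -1.0])   # samples in the wells
loss_at_barrier = physics_informed_loss(1.0, [0.0, 0.0])   # samples on the barrier
print(total_probability, loss_at_minima, loss_at_barrier)
```

Samples placed on the barrier are penalized relative to samples in the wells, mirroring how the Boltzmann density itself is lower there. Too large an `energy_weight` would bias the generator toward minima and suppress thermally accessible states, so the weight is a tuning choice rather than a fixed constant.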

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential computational tools and methodological components critical for research in this domain.

Table 2: Essential Research Reagents and Tools for Generalization Studies

| Item/Tool | Function & Application | Relevance to Generalization |

|---|---|---|

| Spectra Framework [22] | A spectral framework for comprehensive model evaluation. | Provides a rigorous metric (AUSPC) for assessing generalizability across data distribution shifts, moving beyond simplistic train-test splits. |

| MatMCL Framework [21] | A multimodal learning framework for material science. | Addresses data scarcity and missing modalities by aligning multiscale information, enabling robust prediction on incomplete data. |

| Hamiltonian Embedding [17] [18] | A quantum simulation technique that embeds a target Hamiltonian into a larger, more structured system. | Enables more efficient simulation of complex systems on near-term hardware, expanding the scope of verifiable physical models. |

| Physics-Based Coarse-Graining [23] | A dimensionality reduction technique using physical principles. | Improves data efficiency of generative models by guiding sampling in a lower-dimensional, physically-relevant latent space. |

| Cross-Validation (K-fold, LOOCV) [24] | A resampling method to assess model performance on limited data. | Fundamental technique for estimating how a model will generalize to an independent dataset, preventing overfitting. |

| Regularization (Dropout, L2) [24] | Techniques that constrain model complexity during training. | Directly improves generalization ability by preventing the model from overfitting to noise in the training data. |
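
As a minimal sketch of the K-fold resampling listed in the table, the following standard-library Python generates train/validation index splits. The function name `k_fold_indices` and its parameters are hypothetical; production code would more commonly rely on an established library implementation.

```python
import random

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs; each sample lands in exactly one validation fold."""
    if not 2 <= k <= n_samples:
        raise ValueError("k must satisfy 2 <= k <= n_samples")
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    # Spread the remainder so fold sizes differ by at most one.
    fold_sizes = [n_samples // k + (1 if i < n_samples % k else 0) for i in range(k)]
    start = 0
    for size in fold_sizes:
        val_idx = indices[start:start + size]
        train_idx = indices[:start] + indices[start + size:]
        yield train_idx, val_idx
        start += size

folds = list(k_fold_indices(n_samples=10, k=3))
# Leave-one-out cross-validation (LOOCV) is the special case k == n_samples.
loocv = list(k_fold_indices(n_samples=5, k=5))
```

Averaging the validation metric over all folds gives the generalization estimate; LOOCV trades a lower-bias estimate for `n_samples` training runs.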

Advanced Methodologies and Real-World Drug Discovery Applications

The accurate prediction of molecular properties and the generation of novel drug candidates are central challenges in modern computational drug discovery. Traditional methods, often reliant on quantum chemistry calculations like Density Functional Theory (DFT), provide high fidelity but are computationally prohibitive for high-throughput screening [25] [26]. Integrating E(3)-equivariance, a property ensuring that model outputs rotate, translate, and reflect in unison with their inputs, into deep learning architectures addresses this trade-off. Combined with the expressive power of Transformer architectures, it provides a robust framework for learning from 3D molecular structures. These models embed physical symmetries as inductive biases, which leads to better data efficiency, generalization, and physical meaningfulness than non-equivariant models [27] [25]. This document details the application of these frameworks, placing them within the research context of embedding techniques and effective Hamiltonian methods, which aim to create computationally efficient yet accurate representations of complex quantum systems.

Core Architectural Principles and Applications

E(3)-equivariant models are engineered to respect the symmetries of Euclidean space, making them inherently suited for modeling atomic systems where physical laws are invariant to rotation and translation. When this geometric prior is integrated with the self-attention mechanism of Transformers, it results in architectures capable of capturing both local atomic interactions and long-range dependencies within molecular graphs.

Key Architectural Formulations

The core of these models lies in constraining their operations to be equivariant. For a group $G$ (e.g., the rotation group SO(3)) with group actions $T_g$ on the input space and $T'_g$ on the output space, a layer $\Phi$ is equivariant if it satisfies the commutation relation $\Phi(T_g[f]) = T'_g[\Phi(f)]$ for all inputs $f$ and group elements $g \in G$ [27]. In practice, this is achieved through several key mechanisms:

- Steerable Features and Irreducible Representations (Irreps): Atomic features are elevated from simple scalars to geometric tensors (e.g., scalars, vectors, higher-order tensors) that transform predictably under group actions. Operations are then defined using tools from group theory, such as Clebsch-Gordan tensor products, to ensure equivariance [27].

- Equivariant Attention: The standard self-attention mechanism is re-engineered. Queries, keys, and values are projected using equivariant linear layers, and attention scores are computed via group-invariant inner products, such as the dot product of equivariant vector features [27]. The Platonic Transformer offers an efficient alternative by defining attention relative to reference frames derived from Platonic solid symmetry groups, achieving equivariance without altering the standard Transformer's computational graph [28].

- Equivariant Nonlinearities: Standard activation functions like ReLU are replaced with equivariant alternatives. A common technique is norm-gating, where the magnitudes of higher-order features are scaled by gating signals derived from invariant scalar features [27].
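
The commutation relation and two of the mechanisms above (invariant attention scores and norm-gating) can be checked numerically. The following NumPy sketch is illustrative only: `norm_gate` and `invariant_attention` are simplified, hypothetical stand-ins restricted to scalar and vector features, not the layers of any cited model.

```python
import numpy as np

def random_rotation(rng):
    """Random proper rotation matrix (det = +1) built from a QR decomposition."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))   # fix column signs of the orthogonal factor
    if np.linalg.det(q) < 0:
        q[:, 0] = -q[:, 0]        # flip one axis to turn a reflection into a rotation
    return q

def norm_gate(scalars, vectors):
    """Scale each vector feature by a sigmoid gate computed from an invariant scalar."""
    gate = 1.0 / (1.0 + np.exp(-scalars))
    return vectors * gate[:, None]

def invariant_attention(queries, keys):
    """Softmax attention weights from dot products of vector features."""
    scores = queries @ keys.T
    scores = scores - scores.max(axis=1, keepdims=True)  # numerical stability
    weights = np.exp(scores)
    return weights / weights.sum(axis=1, keepdims=True)

rng = np.random.default_rng(0)
R = random_rotation(rng)
s = rng.normal(size=5)        # invariant scalar features, one per atom
v = rng.normal(size=(5, 3))   # vector features, one per atom

# Equivariance: gating the rotated input equals rotating the gated output.
gate_error = np.abs(norm_gate(s, v @ R.T) - norm_gate(s, v) @ R.T).max()
# Invariance: attention weights do not change when all vectors rotate together.
attn_error = np.abs(invariant_attention(v @ R.T, v @ R.T) - invariant_attention(v, v)).max()
```

Both errors should vanish up to floating-point round-off. A plain ReLU applied component-wise to `v` would not pass the first check, which is why gated nonlinearities are used instead.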

Quantitative Performance Benchmarks

The empirical superiority of E(3)-equivariant Transformer models is evidenced by their performance across diverse molecular tasks. The following table summarizes key benchmarks, demonstrating their advantages in accuracy and data efficiency.

Table 1: Performance Benchmarks of E(3)-Equivariant Models on Molecular Tasks

| Model | Task / Dataset | Key Metric | Performance | Comparison vs. Baseline |

|---|---|---|---|---|

| EnviroDetaNet [25] | Multiple molecular properties (QM9) | Mean Absolute Error (MAE) | Superior across 8 properties | 39-52% error reduction vs. DetaNet on Hessian, polarizability |

| EnviroDetaNet (50% Data) [25] | Multiple molecular properties (QM9) | Mean Absolute Error (MAE) | Near-state-of-the-art | Error reduction vs. baseline DetaNet on 7/8 properties |

| DiffGui [29] | Target-aware molecule generation (PDBbind) | Binding affinity (Vina Score), Structure quality | State-of-the-art | Higher binding affinity, better chemical structure & properties |

| Platonic Transformer [28] | Molecular property prediction (QM9) | MAE | Competitive | Achieves equivariance with no added computational cost over a standard Transformer |

| Equivariant Transformer (ET) [27] | Molecular dynamics (N-body) | Stability & Equivariance Error | Superior | Stable performance, exact equivariance error |

Application Notes in Drug Discovery

E(3)-equivariant Transformers are now used in several computer-aided drug design pipelines, from de novo molecule generation to precise property prediction.

Target-Aware 3D Molecular Generation

Generating novel, synthetically accessible molecules that bind strongly to a specific protein target is a primary goal. Diffusion models built on E(3)-equivariant graph neural networks have emerged as the state-of-the-art. DiffGui is one such model that addresses key challenges: it concurrently generates both atoms and bonds through a combined atom and bond diffusion process, mitigating the generation of unrealistic ring structures and strained molecules. Furthermore, it explicitly incorporates property guidance (e.g., for binding affinity, drug-likeness QED, synthetic accessibility SA) during sampling, ensuring the generated ligands are not only high-affinity but also drug-like [29]. Another model, PoLiGenX, employs a latent-conditioned equivariant diffusion process conditioned on a reference molecule. This is particularly valuable for hit expansion, as it generates novel ligands that retain the shape and key interactions of a promising initial hit while exploring novel chemical space to improve properties like binding affinity or reduce strain energy [30].

Molecular Property and Spectral Prediction

Predicting quantum chemical properties directly from 3D structure is critical for screening. Models like EnviroDetaNet demonstrate the impact of incorporating rich atomic environment information. This E(3)-equivariant message-passing network integrates intrinsic atomic properties, spatial coordinates, and molecular environment embeddings, allowing it to capture both local and global information effectively. Its performance, especially under data-scarce conditions (e.g., with a 50% reduction in training data), highlights its robustness and superior generalization [25]. Similarly, the LGT framework (Local and Global Transformer) addresses the limitations of standard GNNs (which struggle with long-range interactions) and pure Transformers (which lose original graph structure). By fusing a graph convolution-based Local Transformer with a Global Transformer that captures long-range dependencies using inter-atomic distances, it achieves strong results on benchmarks like QM9 and ZINC [26].

Protein Representation Learning

Learning robust representations of protein structures is essential for function annotation and binding site prediction. The E^3former model addresses the challenge of noise in experimental and AlphaFold-predicted structures. It uses energy function-based receptive fields to construct proximity graphs and incorporates an equivariant high-tensor-elastic selective State Space Model (SSM) within a Transformer. This hybrid architecture allows it to adapt to complex atom interactions and extract geometric features with a high signal-to-noise ratio, leading to state-of-the-art performance on tasks like inverse folding [31].

Experimental Protocols

Protocol 1: Target-Aware Molecular Generation with DiffGui

Objective: To generate novel, drug-like molecular ligands for a specified protein binding pocket using the DiffGui equivariant diffusion model.

Background: This protocol leverages a non-autoregressive E(3)-equivariant diffusion process to generate 3D molecular structures in the context of a protein pocket, explicitly optimizing for binding affinity and chemical validity [29].

Materials:

- Software: Python, PyTorch, RDKit, DiffGui codebase.

- Data: A prepared structure of the target protein (e.g., from PDB or AlphaFold).

- Hardware: GPU (e.g., NVIDIA A100) with ≥40 GB VRAM recommended.

Procedure:

- Pocket Preparation:

- Identify the binding site of the target protein from a co-crystallized ligand or via pocket detection algorithms.

- Extract the pocket residues, defining a molecular surface or a set of atoms within a cutoff (e.g., 5-10 Å) from the expected ligand center.

- Model Configuration:

- Initialize the DiffGui model with pre-trained weights.

- Configure the sampling parameters: the number of denoising steps (e.g., 500-1000) and the guidance scales for the target properties (Vina Score, QED, SA).

- Conditional Generation:

- Feed the prepared protein pocket graph into the model as the conditioning input.

- Initiate the reverse diffusion process from a prior Gaussian distribution of atom coordinates and types.

- At each denoising step, the E(3)-equivariant GNN predicts the clean atom coordinates, types, and bond types, guided by the target property predictors.

- Ligand Assembly and Validation:

- Assemble the final denoised atom positions and bond types into a complete molecule.

- Validate the generated molecule for chemical stability and correctness using RDKit (e.g., check for invalid valences, unstable rings).

- Evaluate key metrics: binding affinity via docking (e.g., Vina Score), quantitative estimate of drug-likeness (QED), and synthetic accessibility (SA) score.
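
The cutoff-based selection in the Pocket Preparation step can be sketched with NumPy as follows. The function `extract_pocket`, the coordinates, and the 8 Å cutoff are hypothetical illustrations; this is not part of the DiffGui codebase, and a real workflow would read atom coordinates from a PDB or AlphaFold structure file.

```python
import numpy as np

def extract_pocket(protein_coords, ligand_center, cutoff=8.0):
    """Return indices of protein atoms within `cutoff` angstroms of the ligand center."""
    protein_coords = np.asarray(protein_coords, dtype=float)
    ligand_center = np.asarray(ligand_center, dtype=float)
    distances = np.linalg.norm(protein_coords - ligand_center, axis=1)
    return np.flatnonzero(distances <= cutoff)

# Hypothetical atom coordinates in angstroms.
protein_coords = np.array([
    [0.0, 0.0, 0.0],    # at the ligand center
    [3.0, 4.0, 0.0],    # 5 angstroms away
    [6.0, 8.0, 0.0],    # 10 angstroms away
    [20.0, 0.0, 0.0],   # 20 angstroms away
])
ligand_center = np.array([0.0, 0.0, 0.0])

pocket_idx = extract_pocket(protein_coords, ligand_center, cutoff=8.0)
wide_pocket_idx = extract_pocket(protein_coords, ligand_center, cutoff=10.0)
```

Widening the cutoff from 8 Å to 10 Å adds the third atom to the pocket, which shows how the choice within the 5-10 Å range changes the size of the conditioning input.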

Troubleshooting:

- Issue: Generated molecules are chemically invalid.

- Solution: Adjust the bond diffusion guidance strength and validate the bond type prediction module.

- Issue: Molecules show poor predicted binding affinity.

- Solution: Increase the guidance weight for the Vina Score during sampling.

Protocol 2: Molecular Property Prediction with EnviroDetaNet

Objective: To predict quantum chemical properties (e.g., polarizability, dipole moment) for a set of organic molecules using the EnviroDetaNet model.

Background: This protocol uses an E(3)-equivariant message-passing network that incorporates molecular environment information for highly accurate and data-efficient regression of molecular properties [25].

Materials:

- Software: Python, PyTorch, PyTorch Geometric, RDKit.

- Data: A dataset of molecules with known 3D geometries (e.g., QM9). Molecular geometries should be pre-optimized.

- Hardware: GPU (e.g., NVIDIA V100 or newer).

Procedure:

- Data Preprocessing:

- Generate 3D conformers for each molecule in the dataset if not already available.

- Standardize the molecular structures and compute their intrinsic atomic features (e.g., atomic number, chirality).

- Compute molecular environment embeddings for each atom using a pre-trained model (e.g., Uni-Mol) to provide global context.

- Model Training/Inference:

- For a new dataset, split the data into training, validation, and test sets (e.g., 80/10/10).

- Configure EnviroDetaNet: specify the number of layers, hidden feature dimensions, and the type of irreducible representations.

- Train the model using a regression loss (e.g., Mean Absolute Error) with an optimizer like Adam.

- For inference, load the trained model and pass the featurized molecular graph through the network to obtain the predicted property value.

- Validation and Analysis:

- Evaluate predictions against ground-truth quantum chemistry values using MAE and R² scores.

- Perform ablation studies to confirm the importance of environmental embeddings by comparing performance to a variant without them (DetaNet-Atom) [25].
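
The 80/10/10 split and the MAE and R² metrics named in the procedure above can be sketched in a few lines of NumPy. The helper names (`split_indices`, `mean_absolute_error`, `r2_score`) and the toy values are hypothetical and independent of the EnviroDetaNet code.

```python
import numpy as np

def split_indices(n_samples, fractions=(0.8, 0.1, 0.1), seed=0):
    """Shuffle indices and split them into train/validation/test sets."""
    rng = np.random.default_rng(seed)
    perm = rng.permutation(n_samples)
    n_train = round(fractions[0] * n_samples)
    n_val = round(fractions[1] * n_samples)
    # The test set takes whatever remains after the train and validation slices.
    return perm[:n_train], perm[n_train:n_train + n_val], perm[n_train + n_val:]

def mean_absolute_error(y_true, y_pred):
    """Mean of |y_true - y_pred|."""
    return float(np.mean(np.abs(np.asarray(y_true) - np.asarray(y_pred))))

def r2_score(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return float(1.0 - ss_res / ss_tot)

train_idx, val_idx, test_idx = split_indices(100)

# Toy reference values and predictions (not real QM9 data).
y_true = np.array([1.0, 2.0, 3.0, 4.0])
y_pred = np.array([1.5, 2.0, 2.5, 4.0])
mae = mean_absolute_error(y_true, y_pred)   # 0.25
r2 = r2_score(y_true, y_pred)               # 0.9
```

Reporting both metrics is useful because MAE is expressed in the units of the property, while R² is scale-free and easier to compare across properties.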

Troubleshooting:

- Issue: Model performance plateaus during training.

- Solution: Introduce learning rate scheduling or adjust the dimensionality of the environmental embeddings.

- Issue: Poor generalization to larger molecules.

- Solution: Ensure the training set includes a diverse range of molecular sizes and functional groups.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Data Resources for E(3)-Equivariant Modeling

| Name / Resource | Type | Primary Function | Relevance to E(3)-Models |

|---|---|---|---|