DFT vs Post-Hartree-Fock: A Practical Guide to Accuracy in Computational Chemistry and Drug Discovery

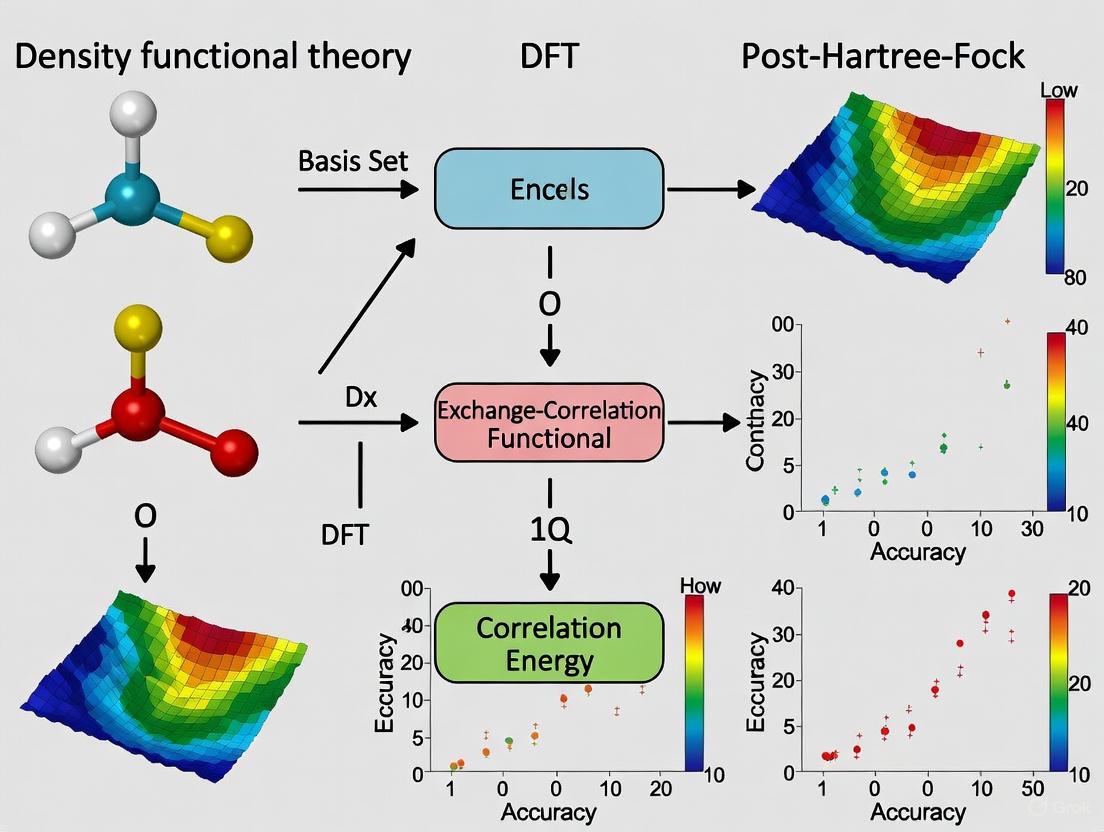

Abstract

This article provides a comprehensive comparison of Density Functional Theory (DFT) and post-Hartree-Fock (post-HF) methods, with a focus on accuracy, written for researchers and professionals in drug development. We explore the foundational principles of both approaches, detailing how each addresses electron correlation. The review covers key methodological applications, from drug formulation design to spin-state energetics in transition metal complexes, and examines current challenges such as scalability and environmental modeling. We also discuss troubleshooting strategies, including machine learning corrections and hybrid multiscale models. Finally, we present a validation framework based on benchmark studies against experimental data, offering guidance for selecting the appropriate method to achieve chemical accuracy in biomedical research.

Core Principles: How DFT and Post-HF Methods Tackle Electron Correlation

A central challenge in quantum chemistry is computing the electronic structure of molecules and materials both accurately and efficiently. Two fundamentally distinct theoretical frameworks address this problem: wavefunction theory (WFT) and density functional theory (DFT). The interplay and competition between them is a core theme in modern computational chemistry and materials science. Both start from the same N-electron Hamiltonian, but they diverge in their fundamental principles and practical implementation. Wavefunction-based methods, including Hartree-Fock (HF) and post-Hartree-Fock approaches, work explicitly with the many-electron wavefunction; post-HF methods improve systematically on HF by accounting for electron correlation. DFT instead reformulates the problem in terms of the electron density alone, offering computational efficiency but relying on approximate exchange-correlation functionals whose accuracy cannot be improved systematically. This guide compares the two paradigms, examining their theoretical foundations, performance characteristics, and suitability for different applications in chemical research and drug development.

Theoretical Foundations: Contrasting Formalisms

Wavefunction Theory: A First-Principles Approach

Wavefunction theory approaches the many-electron problem by directly solving the electronic Schrödinger equation. The fundamental quantity is the N-electron wavefunction, Ψ(x₁, x₂, ..., xₙ), which contains the complete information about the electronic system. A critical feature is that the wavefunction must be antisymmetric with respect to particle exchange, satisfying the Pauli exclusion principle. In practice, the wavefunction is typically constructed as a linear combination of Slater determinants, which are antisymmetrized products of one-electron spin orbitals [1].
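As a minimal sketch of the antisymmetry property, the Python snippet below evaluates a normalized Slater determinant for same-spin electrons in one dimension. The particle-in-a-box orbitals, the helper names (`det`, `box_orbital`, `slater`), and the omission of the spin coordinate are illustrative assumptions, not part of any particular quantum chemistry code. Swapping two electron coordinates flips the sign of Ψ, and placing two electrons in the same orbital makes the determinant vanish, which is the Pauli exclusion principle in determinantal form.

```python
import math


def det(matrix):
    """Determinant via Gaussian elimination with partial pivoting."""
    m = [row[:] for row in matrix]
    n = len(m)
    result = 1.0
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-14:
            return 0.0  # singular: linearly dependent rows or columns
        if pivot != col:
            m[col], m[pivot] = m[pivot], m[col]
            result = -result  # each row swap flips the sign
        result *= m[col][col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n):
                m[r][c] -= factor * m[col][c]
    return result


def box_orbital(k, length=1.0):
    """Hypothetical 1D orbital: particle-in-a-box eigenfunction k."""
    return lambda x: math.sqrt(2.0 / length) * math.sin(k * math.pi * x / length)


def slater(orbitals, coords):
    """Normalized Slater determinant; rows are electrons, columns are orbitals."""
    n = len(orbitals)
    matrix = [[phi(x) for phi in orbitals] for x in coords]
    return det(matrix) / math.sqrt(math.factorial(n))


orbitals = [box_orbital(1), box_orbital(2), box_orbital(3)]
psi = slater(orbitals, [0.10, 0.35, 0.80])
psi_swapped = slater(orbitals, [0.35, 0.10, 0.80])  # electrons 1 and 2 exchanged
psi_pauli = slater([box_orbital(1), box_orbital(1)], [0.20, 0.60])  # same orbital twice
print(psi, psi_swapped, psi_pauli)
```

Representing Ψ as a determinant builds antisymmetry in automatically, because exchanging two rows of any matrix changes the sign of its determinant.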

The simplest wavefunction method is Hartree-Fock (HF), which approximates the wavefunction as a single Slater determinant. The HF equations are derived by varying the orbitals to minimize the energy expectation value. However, HF fails to account for electron correlation—the tendency of electrons to avoid each other beyond what is required by the antisymmetry of the wavefunction. This limitation led to the development of post-Hartree-Fock methods such as Møller-Plesset perturbation theory (MP2, MP4), configuration interaction (CI), coupled-cluster (CC) theory, and complete active space self-consistent field (CASSCF), which systematically improve upon HF by incorporating electron correlation effects [2] [1].

Density Functional Theory: The Density as Fundamental Variable

Density functional theory takes a radically different approach, reformulating the many-electron problem using the electron density n(r) as the fundamental variable instead of the wavefunction. This reformulation is justified by the Hohenberg-Kohn theorems, which establish that: (1) the ground-state electron density uniquely determines the external potential (up to an additive constant) and thus all properties of the system, and (2) a universal functional F[n(r)] exists such that the energy can be variationally minimized with respect to the density [3] [1].

In practice, DFT is implemented through the Kohn-Sham scheme, which introduces a fictitious system of non-interacting electrons that has the same density as the real system. The complexity of the many-body problem is buried in the exchange-correlation functional, which must be approximated. The accuracy of a DFT calculation depends almost entirely on the quality of this functional, leading to the development of various approximations including the local density approximation (LDA), generalized gradient approximation (GGA), meta-GGAs, and hybrid functionals [1].
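To make the role of the approximate functional concrete, here is a minimal sketch (an illustrative assumption, not taken from the cited sources) that evaluates the LDA (Dirac/Slater) exchange energy, E_x^LDA = -(3/4)(3/π)^(1/3) ∫ n(r)^(4/3) dr, for the exact hydrogen 1s density n(r) = e^(-2r)/π in atomic units, using Simpson's rule on a radial grid. For simplicity the density is treated as spin-unpolarized. The result, about -0.213 Ha, can be compared with the exact exchange energy of the hydrogen atom, -5/16 = -0.3125 Ha, which exactly cancels the electron's self-repulsion; a spin-polarized (LSDA) treatment narrows this gap but does not close it.

```python
import math

# Dirac/Slater exchange prefactor for a spin-unpolarized density (atomic units)
C_X = -0.75 * (3.0 / math.pi) ** (1.0 / 3.0)


def hydrogen_density(r):
    """Exact ground-state density of the hydrogen atom, n(r) = exp(-2r)/pi."""
    return math.exp(-2.0 * r) / math.pi


def lda_exchange_energy(density, r_max=30.0, n_steps=30000):
    """E_x^LDA = C_X * integral of n^(4/3) over all space, via radial Simpson's rule."""
    h = r_max / n_steps  # n_steps must be even for Simpson's rule
    total = 0.0
    for k in range(n_steps + 1):
        r = k * h
        weight = 1 if k in (0, n_steps) else (4 if k % 2 else 2)
        total += weight * 4.0 * math.pi * r * r * density(r) ** (4.0 / 3.0)
    return C_X * total * h / 3.0


e_x_lda = lda_exchange_energy(hydrogen_density)
e_x_exact = -5.0 / 16.0  # exact exchange = minus the self-Hartree energy for one electron
print(f"LDA exchange: {e_x_lda:.4f} Ha, exact exchange: {e_x_exact:.4f} Ha")
```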

Table 1: Fundamental Comparison of Theoretical Frameworks

| Feature | Wavefunction Theory | Density Functional Theory |
|---|---|---|
| Fundamental variable | N-electron wavefunction Ψ(x₁,x₂,...,xₙ) | Electron density n(r) |
| Theoretical foundation | Variational principle, Hartree-Fock, post-HF methods | Hohenberg-Kohn theorems, Kohn-Sham equations |
| Treatment of electron correlation | Explicit via configuration interaction, perturbation theory, coupled-cluster | Approximated via exchange-correlation functional |
| Computational scaling | HF: N⁴, MP2: N⁵, CCSD(T): N⁷ | ~N³ for local and semilocal functionals (formally N⁴ for hybrids) |
| Systematic improvability | Yes (through higher excitation levels, larger active spaces, and larger basis sets) | No (results depend on the choice of functional) |
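The scaling exponents in Table 1 translate directly into how cost grows with system size. A minimal sketch, assuming a pure power law t ∝ N^p and ignoring prefactors, memory limits, and integral screening (all of which matter in practice):

```python
# Formal scaling exponents from Table 1 (DFT value for local/semilocal functionals)
SCALING_EXPONENTS = {"DFT (semilocal)": 3, "HF": 4, "MP2": 5, "CCSD(T)": 7}


def cost_ratio(exponent, size_factor):
    """Relative cost when system size grows by size_factor, assuming t ~ N**exponent."""
    return size_factor ** exponent


for method, p in SCALING_EXPONENTS.items():
    print(f"{method:16s} 2x larger system -> ~{cost_ratio(p, 2):5.0f}x cost, "
          f"10x larger -> ~{cost_ratio(p, 10):.0e}x cost")
```

Doubling the system multiplies the cost by about 8 for semilocal DFT but by about 128 for CCSD(T), which is the arithmetic behind the accuracy-versus-size trade-off discussed throughout this guide.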

Accuracy Comparison: Benchmarking Against Experimental Data

Quantitative Performance Across Chemical Systems

The performance of wavefunction and density-based methods varies significantly across different chemical systems and properties. Wavefunction methods typically provide more systematic accuracy but at substantially higher computational cost, while DFT offers a favorable accuracy-to-cost ratio but with functional-dependent performance.

Table 2: Accuracy Comparison for Different Chemical Systems (approximate mean absolute errors in kcal/mol; excitation energies in eV where stated)

| Method | General Main-Group Chemistry | Transition Metals | Dispersion Interactions | Reaction Barriers | Excitation Energies |
|---|---|---|---|---|---|
| HF | ~80-150 | >100 | >100 | >50 | >100 |
| MP2 | ~5-10 | Variable | ~1-5 (with correction) | ~5-10 | Not applicable |
| CCSD(T) | ~1-2 | ~3-5 | ~0.5-1 | ~1-3 | Requires EOM-CC |
| DFT (GGA) | ~5-10 | ~10-20 | Poor (no dispersion) | ~5-10 | Poor |
| DFT (Hybrid) | ~3-7 | ~5-15 | ~2-5 (with correction) | ~3-7 | ~0.3-0.5 eV |
| DFT (Range-separated) | ~2-5 | Variable | ~1-3 | ~2-5 | ~0.2-0.3 eV |

Case Studies Highlighting the Divide

Gold Clusters (Au₁₀): The Au₁₀ cluster represents a challenging system where the transition from 2D to 3D structures occurs. Studies have shown significant discrepancies between different theoretical methods. While many DFT functionals predict a planar D₂h structure for Au₁₀, second-order Møller-Plesset (MP2) calculations predict a 3D C₂v arrangement. However, the validity of single-reference MP2 for near-metallic systems remains questionable. Coupled-cluster calculations with perturbative triple corrections (CCSD(T)) change the order of cluster stability, making the onset of the 2D to 3D transition in gold clusters elusive [4].

Zwitterionic Systems: For zwitterionic molecules like pyridinium benzimidazolates, HF theory has demonstrated superior performance over many DFT functionals in reproducing experimental dipole moments. In one comprehensive study, HF produced a dipole moment of 10.33 D for a specific zwitterion, matching the experimental value exactly, while various DFT functionals showed significant deviations. The study attributed HF's better performance to its more appropriate handling of the localization issue in these charge-separated systems, where DFT tends to over-delocalize electrons [2].

Color Centers in Semiconductors: In-gap states of point defects in semiconductors often have significant multideterminant character, which makes them particularly challenging for DFT. For the NV⁻ center in diamond, the widely applied Kohn-Sham DFT is inherently a single-determinant method for ground-state calculations and is limited in describing states of strongly multideterminant character. Wavefunction methods such as CASSCF and NEVPT2 provide a more accurate treatment of both the static and dynamic correlation effects that are crucial for predicting the magneto-optical properties of these quantum bits [5].

Computational Requirements and Protocols

Methodological Implementation Details

Wavefunction Theory Workflow:

- Basis Set Selection: Choose appropriate Gaussian-type or plane-wave basis sets

- Hartree-Fock Calculation: Obtain reference wavefunction and molecular orbitals

- Electron Correlation Treatment: Apply selected post-HF method (MP2, CCSD, CASSCF, etc.)

- Property Calculation: Compute energies, gradients, and molecular properties

- Geometry Optimization: Iteratively minimize energy with respect to nuclear coordinates
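The Hartree-Fock step of this workflow can be illustrated with a minimal restricted HF (Roothaan-Hall) SCF loop. This sketch assumes the textbook minimal-basis (STO-3G) H₂ molecule at R = 1.4 bohr and hard-codes the atomic-orbital integrals (in hartree) as tabulated, rounded to four decimals, in Szabo and Ostlund's "Modern Quantum Chemistry"; a real program would compute these integrals for the chosen basis set. Löwdin symmetric orthogonalization turns the generalized eigenvalue problem FC = SCε into an ordinary 2×2 one. The helper names are hypothetical.

```python
import math

# Minimal-basis (STO-3G) H2 at R = 1.4 bohr. AO integrals in hartree, rounded,
# as tabulated in Szabo & Ostlund (assumed inputs, not computed here).
S12 = 0.6593                                       # overlap <1|2>
H_CORE = [[-1.1204, -0.9584], [-0.9584, -1.1204]]  # kinetic + nuclear attraction
E_NUC = 1.0 / 1.4                                  # nuclear repulsion


def eri(p, q, r, s):
    """(pq|rs) in chemists' notation for the two equivalent 1s AOs (0 and 1)."""
    first_mixed, second_mixed = p != q, r != s
    if first_mixed and second_mixed:
        return 0.2970                      # (12|12)
    if first_mixed or second_mixed:
        return 0.4441                      # (21|11)
    return 0.7746 if p == r else 0.5697    # (11|11) or (11|22)


def eig2(a, b, d):
    """Ascending eigenpairs of the symmetric matrix [[a, b], [b, d]]."""
    if abs(b) < 1e-12:
        return sorted([(a, (1.0, 0.0)), (d, (0.0, 1.0))])
    mean, half_gap = 0.5 * (a + d), math.hypot(0.5 * (a - d), b)
    pairs = []
    for lam in (mean - half_gap, mean + half_gap):
        v = (b, lam - a)                   # solves (A - lam I) v = 0
        norm = math.hypot(*v)
        pairs.append((lam, (v[0] / norm, v[1] / norm)))
    return pairs


def rhf(max_iter=50, tol=1e-10):
    # Symmetric orthogonalizer X = S^(-1/2) for S = [[1, s], [s, 1]]
    inv_plus, inv_minus = (1.0 + S12) ** -0.5, (1.0 - S12) ** -0.5
    x_d, x_o = 0.5 * (inv_plus + inv_minus), 0.5 * (inv_plus - inv_minus)
    X = [[x_d, x_o], [x_o, x_d]]
    P = [[0.0, 0.0], [0.0, 0.0]]           # zero density => core-Hamiltonian guess
    e_old, eps = None, None
    for iteration in range(1, max_iter + 1):
        # F_mn = H_mn + sum_rs P_rs [(mn|sr) - 0.5 (mr|sn)]
        F = [[H_CORE[m][n] + sum(P[r][s] * (eri(m, n, s, r) - 0.5 * eri(m, r, s, n))
                                 for r in range(2) for s in range(2))
              for n in range(2)] for m in range(2)]
        e_elec = 0.5 * sum(P[m][n] * (H_CORE[m][n] + F[m][n])
                           for m in range(2) for n in range(2))
        if e_old is not None and abs(e_elec - e_old) < tol:
            return e_elec, eps, iteration
        e_old = e_elec
        # F' = X^T F X, diagonalize, back-transform C = X C'
        FX = [[sum(F[m][k] * X[k][n] for k in range(2)) for n in range(2)] for m in range(2)]
        Fp = [[sum(X[k][m] * FX[k][n] for k in range(2)) for n in range(2)] for m in range(2)]
        (eps_occ, c_prime), (eps_virt, _) = eig2(Fp[0][0], Fp[0][1], Fp[1][1])
        c = [sum(X[m][k] * c_prime[k] for k in range(2)) for m in range(2)]
        P = [[2.0 * c[m] * c[n] for n in range(2)] for m in range(2)]  # doubly occupied
        eps = (eps_occ, eps_virt)
    raise RuntimeError("SCF did not converge")


e_elec, (eps_g, eps_u), n_iter = rhf()
print(f"E_elec = {e_elec:.4f}, E_total = {e_elec + E_NUC:.4f} Ha after {n_iter} iterations")
print(f"orbital energies: {eps_g:.4f}, {eps_u:.4f} Ha")
```

In this symmetric molecule the orbitals are fixed by symmetry after the first diagonalization, so the loop converges almost immediately; less symmetric systems usually need damping or DIIS extrapolation.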

Density Functional Theory Workflow:

- Functional Selection: Choose appropriate exchange-correlation functional

- Basis Set Selection: Select plane-wave basis or Gaussian-type orbitals

- Self-Consistent Field Calculation: Solve Kohn-Sham equations iteratively

- Convergence Testing: Ensure convergence of energy, density, and forces

- Property Evaluation: Calculate derived properties from electron density

Hybrid and Machine Learning Approaches

Recent advances have focused on combining the strengths of both approaches. Δ-DFT is a machine learning approach that predicts coupled-cluster energies from DFT densities, reaching quantum chemical accuracy (errors below 1 kcal·mol⁻¹). Rather than learning the coupled-cluster energy directly, the method learns the difference between DFT and coupled-cluster energies as a functional of the input DFT densities, which significantly reduces the amount of training data required. The robustness of Δ-DFT has been demonstrated by correcting DFT-based molecular dynamics simulations "on the fly" to obtain trajectories with coupled-cluster accuracy [3].
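The Δ-learning idea can be sketched in a few lines. This is a toy illustration under stated assumptions: a single scalar descriptor `x` stands in for the DFT density, the "low-level" and "high-level" energy curves are synthetic functions invented for the demonstration (not real DFT or coupled-cluster data), and the correction is fit with Gaussian-kernel ridge regression, one common choice for this kind of model. Only the difference Δ(x) = E_high(x) - E_low(x) is learned; the prediction adds it back to the cheap low-level energy.

```python
import math


def solve(A, b):
    """Solve A x = b by Gaussian elimination with partial pivoting."""
    n = len(b)
    M = [row[:] + [bi] for row, bi in zip(A, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[pivot] = M[pivot], M[col]
        for r in range(col + 1, n):
            f = M[r][col] / M[col][col]
            for c in range(col, n + 1):
                M[r][c] -= f * M[col][c]
    x = [0.0] * n
    for r in range(n - 1, -1, -1):
        x[r] = (M[r][n] - sum(M[r][c] * x[c] for c in range(r + 1, n))) / M[r][r]
    return x


def e_low(x):   # synthetic "cheap" energy curve (assumption)
    return (x - 1.0) ** 2


def e_high(x):  # synthetic "accurate" energy curve (assumption)
    return (x - 1.0) ** 2 + 0.1 * math.sin(x)


def kernel(x1, x2, sigma=0.5):
    """Gaussian kernel between two scalar descriptors."""
    return math.exp(-((x1 - x2) ** 2) / (2.0 * sigma ** 2))


def fit_delta(xs, lam=1e-6):
    """Kernel ridge regression on Delta = E_high - E_low: (K + lam I) alpha = Delta."""
    deltas = [e_high(x) - e_low(x) for x in xs]
    K = [[kernel(a, b) + (lam if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    return solve(K, deltas)


def predict_high(x, xs, alpha):
    """Corrected energy: low-level value plus the learned correction."""
    return e_low(x) + sum(a * kernel(x, xi) for a, xi in zip(alpha, xs))


train_x = [0.2 * k for k in range(11)]       # 0.0, 0.2, ..., 2.0
alpha = fit_delta(train_x)
x_test = 0.9
print("low-level error:", abs(e_low(x_test) - e_high(x_test)))
print("delta-corrected error:", abs(predict_high(x_test, train_x, alpha) - e_high(x_test)))
```

Because the correction is usually smoother and smaller than the total energy, it is easier to learn from few samples, which is the motivation behind learning the difference rather than the high-level energy itself.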

Another innovative approach is the DFT-CPP (correlation-polarization potential) method, which extends DFT to study positron binding in molecules and materials. This method combines molecular electrostatic and correlation-polarization potentials, with the short-range correlation described by density functionals and the asymptotic long-range behavior represented by an attractive polarization potential [6].

Table 3: Key Computational Methods and Their Applications

| Method/Software | Theoretical Foundation | Primary Applications | Key Strengths | Notable Limitations |
|---|---|---|---|---|
| Gaussian | WFT & DFT | Molecular electronics, drug design | Comprehensive method coverage, user-friendly | Limited periodic systems support |
| VASP | DFT (plane-wave) | Materials science, surface chemistry | Excellent periodic boundary conditions | Primarily DFT-focused |
| NWChem | WFT & DFT | Large-scale parallel calculations | Strong coupled-cluster implementation | Steeper learning curve |
| CP2K | DFT (mixed Gaussian and plane-wave, GPW) | Biomolecular systems, liquids | Efficient for large systems | Limited high-level WFT |
| Psi4 | WFT & DFT | Benchmark calculations, method development | Excellent WFT capabilities | Smaller user community |
| ABINIT | DFT (plane-wave) | Materials properties, phonons | Strong periodic capabilities | Limited molecular focus |

The fundamental divide between wavefunction and density-based approaches represents a continuing tension in computational chemistry between accuracy and efficiency. For systems where high accuracy is paramount—such as spectroscopy, reaction barrier prediction, and properties dominated by strong electron correlation—wavefunction methods (particularly coupled-cluster and multireference approaches) remain the gold standard. However, for large systems, molecular dynamics simulations, and high-throughput screening where computational efficiency is essential, density functional theory offers an unparalleled balance of cost and accuracy.

The emerging trend of combining these approaches through embedding schemes, machine learning corrections, and multi-scale methods represents the most promising direction for the field. Methods like Δ-DFT that leverage machine learning to extract high-level wavefunction accuracy from DFT calculations may eventually bridge the historical divide, offering a path toward quantum chemical accuracy for complex systems currently beyond the reach of conventional computational approaches [3] [5].

Understanding the Electron Correlation Problem in Quantum Chemistry

Electron correlation energy is a fundamental concept in quantum mechanics: it is the part of the energy of a many-electron system that a mean-field treatment such as the Hartree-Fock method cannot describe, conventionally defined as E_corr = E_exact - E_HF (a negative quantity, because the variational HF energy lies above the exact energy) [7]. Correlation arises from the instantaneous electrostatic repulsion between electrons, which the mean-field picture replaces by an average, and it gives rise to complex many-body effects that cannot be captured by simple mathematical models [7]. Accurately calculating this correlation energy remains one of the most significant challenges in quantum chemistry, with critical implications for predicting molecular properties, reaction pathways, and materials behavior in fields ranging from drug development to materials science.

The quest to solve the electron correlation problem has spawned two fundamentally different philosophical approaches: the parameterized functionals of Density Functional Theory (DFT) and the systematic, wavefunction-based post-Hartree-Fock (post-HF) methods. This guide provides a comprehensive comparison of these competing paradigms, examining their theoretical foundations, accuracy across different chemical systems, computational demands, and applicability to real-world research problems. Understanding the relative strengths and limitations of each approach is essential for researchers selecting appropriate computational tools for predicting molecular properties and interactions.

Theoretical Foundations and Methodological Approaches

Density Functional Theory (DFT) Approaches

DFT operates on the fundamental principle that all ground-state properties of a many-electron system can be determined from its electron density [8]. The theory bypasses the need for the complex many-electron wavefunction by using functionals (mappings that assign a number to a whole function) that depend only on the spatially varying electron density. The key advantage of DFT is its favorable scaling with system size, making it applicable to larger molecules and materials that are computationally prohibitive for wavefunction-based methods [8].

The accuracy of DFT calculations critically depends on the approximation used for the exchange-correlation functional, which encapsulates all quantum mechanical effects not captured by the non-interacting kinetic energy and the classical Hartree term. Recent developments have introduced increasingly sophisticated functionals:

New Ionization Energy-Dependent Functional (2024): This approach builds in the dependence of the density on the ionization energy, deriving a correlation functional from theory and combining it with a previously reported ionization energy-dependent exchange functional [7]. The functional demonstrates minimal mean absolute error when tested on 62 molecules for properties including total energy, bond energy, dipole moment, and zero-point energy [7].

Chachiyo Functional: A simpler correlation functional based on the hypothesis that "correlation energy favors uniform electron distribution" [9]. It employs a gradient-suppressing factor designed to attenuate the energy from its maximum value when the density gradient is zero [9]. Despite its mathematical simplicity, it achieves accuracy competitive with established functionals like B3LYP and PBE [9].

Information-Theoretic Approach (ITA): This innovative method uses density-based descriptors like Shannon entropy and Fisher information to predict post-Hartree-Fock electron correlation energies at the cost of Hartree-Fock calculations [10] [11]. By treating the electron density as a continuous probability distribution, ITA introduces physically interpretable descriptors that encode global and local features of electron distribution [10].
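As a minimal illustration of the kind of descriptor ITA builds on, the sketch below numerically evaluates two of them for the hydrogen-atom 1s density n(r) = e^(-2r)/π: the Shannon entropy S_S = -∫ n ln n dr and the Fisher information I_F = ∫ |∇n|²/n dr. The hydrogen density and the radial Simpson integrator are assumptions chosen because closed forms exist for checking (S_S = 3 + ln π ≈ 4.145 and I_F = 4 in atomic units); ITA studies evaluate such quantities from molecular Hartree-Fock densities.

```python
import math


def hydrogen_density(r):
    """Hydrogen 1s density in atomic units."""
    return math.exp(-2.0 * r) / math.pi


def hydrogen_density_slope(r):
    """dn/dr for the 1s density; |grad n| = |dn/dr| for a spherical density."""
    return -2.0 * hydrogen_density(r)


def radial_integral(f, r_max=40.0, n_steps=40000):
    """Integral of f(r) over all space (4*pi*r^2 dr) by Simpson's rule; n_steps even."""
    h = r_max / n_steps
    total = 0.0
    for k in range(n_steps + 1):
        r = k * h
        weight = 1 if k in (0, n_steps) else (4 if k % 2 else 2)
        total += weight * 4.0 * math.pi * r * r * f(r)
    return total * h / 3.0


shannon = radial_integral(lambda r: -hydrogen_density(r) * math.log(hydrogen_density(r)))
fisher = radial_integral(lambda r: hydrogen_density_slope(r) ** 2 / hydrogen_density(r))
print(f"Shannon entropy S_S = {shannon:.4f} (closed form {3 + math.log(math.pi):.4f})")
print(f"Fisher information I_F = {fisher:.4f} (closed form 4)")
```

The Shannon entropy measures how spread out the density is, while the Fisher information is sensitive to its gradients; that complementary global and local information is what makes such quantities usable as regression features.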

Post-Hartree-Fock Wavefunction Methods

Post-HF methods have the primary goal of capturing the part of electron correlation missing in the original HF formulation [12]. These approaches work directly with the many-electron wavefunction rather than the electron density, and can be broadly grouped into the following families:

Perturbation Theory Methods: Møller-Plesset (MP) perturbation theory introduces correlation as a correction to the HF Hamiltonian, with MP2 (second-order) being the most popular variant [12]. MP2 captures a considerable amount of dynamical correlation at computational cost not significantly higher than HF, though it can produce poor results for systems with strong correlation effects like transition metal compounds [12].

Configuration Interaction Methods: CI constructs the many-electron wavefunction as a linear combination of Slater determinants (electron configurations) built from the HF orbitals [12]. Full CI provides the exact solution for a given basis set but is computationally intractable for most systems. Truncated approaches like CISD (CI singles and doubles) offer practical compromises [12].

Coupled-Cluster Methods: These methods, particularly CCSD and CCSD(T) (the "gold standard" of quantum chemistry), describe electron correlation with high accuracy but at dramatically increased computational cost [10].

Multiconfigurational Methods: CASSCF (complete active space self-consistent field) performs a full CI calculation within a selected set of active orbitals, making it particularly suitable for systems with strong static correlation, such as transition metal complexes and molecules with degenerate or near-degenerate states [12].

Table 1: Fundamental Characteristics of Electron Correlation Methods

| Method Category | Theoretical Basis | Key Strengths | Inherent Limitations |
|---|---|---|---|
| DFT Functionals | Electron density | Favorable scaling to larger systems; Computational efficiency; Good for main-group chemistry | Inexact functional problem; Dispersion interaction challenges; Systematic error difficult to improve |
| MP Perturbation Theory | Rayleigh-Schrödinger perturbation theory | Size-consistent; More affordable than CC methods; Good for dynamical correlation | Not variational; Can diverge for strong correlation; Poor for open-shell systems |
| Configuration Interaction | Linear combination of Slater determinants | Variational; Systematic improvability; Conceptual simplicity | Truncated CI not size-consistent (only full CI is); Full CI cost scales exponentially |
| Coupled-Cluster | Exponential wavefunction ansatz | Size-consistent; High accuracy (especially CCSD(T)); Gold standard for single-reference systems | High computational cost; Not variational |

Performance Comparison Across Chemical Systems

Accuracy Metrics and Benchmarking Approaches

Evaluating the performance of electron correlation methods requires careful benchmarking against reliable experimental data or high-level theoretical references. Key metrics include:

Mean Absolute Error (MAE): The average absolute difference between computed and reference values, providing an overall measure of accuracy [7].

Root Mean Square Deviation (RMSD): Measures the spread of errors, with higher penalties for larger deviations [10].

Linear Correlation Coefficient (R²): Quantifies how well computational results track with reference data across a series of related systems [10].
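The three metrics above are straightforward to compute. A minimal sketch; the arrays are hypothetical numbers chosen only to show one property worth keeping in mind: a constant offset leaves R² at 1 while MAE and RMSD expose it, which is why R² is reported alongside error metrics rather than instead of them. Here R² is taken as the square of the Pearson correlation coefficient, matching the "linear correlation" reading above.

```python
import math


def mae(computed, reference):
    """Mean absolute error."""
    return sum(abs(c - r) for c, r in zip(computed, reference)) / len(reference)


def rmsd(computed, reference):
    """Root-mean-square deviation; squaring makes large deviations weigh more."""
    return math.sqrt(sum((c - r) ** 2 for c, r in zip(computed, reference)) / len(reference))


def r_squared(computed, reference):
    """Square of the Pearson linear correlation coefficient."""
    n = len(reference)
    mc, mr = sum(computed) / n, sum(reference) / n
    cov = sum((c - mc) * (r - mr) for c, r in zip(computed, reference))
    var_c = sum((c - mc) ** 2 for c in computed)
    var_r = sum((r - mr) ** 2 for r in reference)
    return cov * cov / (var_c * var_r)


reference = [0.0, 2.0, 2.0, 4.0]               # hypothetical reference values
shifted = [r + 1.0 for r in reference]         # systematic +1 offset
scattered = [1.0, 1.0, 4.0, 2.0]               # errors of +1, -1, +2, -2

print(mae(shifted, reference), rmsd(shifted, reference), r_squared(shifted, reference))
print(mae(scattered, reference), rmsd(scattered, reference), r_squared(scattered, reference))
```

For the scattered set, RMSD (about 1.58) exceeds MAE (1.5) because the two larger errors are penalized quadratically.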

Recent studies have employed diverse test sets including the 24 octane isomers, polymeric structures (polyyne, polyene, all-trans-polymethineimine, acene), and various molecular clusters (metallic Beₙ and Mgₙ, covalent Sₙ, hydrogen-bonded protonated water clusters H⁺(H₂O)ₙ, and dispersion-bound carbon dioxide (CO₂)ₙ and benzene (C₆H₆)ₙ clusters) [10]. These diverse systems provide rigorous testing across different bonding regimes and electronic environments.

Quantitative Performance Data

Table 2: Accuracy Comparison of Correlation Methods Across Molecular Systems

| Method/System | Total Energy MAE | Bond Energy MAE | Dipole Moment MAE | Zero-Point Energy MAE |
|---|---|---|---|---|
| New Ionization DFT (62 molecules) | Minimal (exact values not specified) [7] | Minimal [7] | Minimal [7] | Minimal [7] |
| Chachiyo Functional | Competitive with B3LYP, PBE [9] | Competitive with B3LYP, PBE [9] | Information not specified | Information not specified |
| B3LYP | Reference standard [7] [9] | Reference standard [7] [9] | Reference standard [7] | Reference standard [7] |
| PBE | Higher error than B3LYP [7] | Higher error than B3LYP [7] | Higher error than B3LYP [7] | Higher error than B3LYP [7] |
| ITA (Octane Isomers) | RMSD <2.0 mH for MP2 correlation energies [10] | Information not specified | Information not specified | Information not specified |

Table 3: Performance for Complex Systems and Scaling Considerations

| Method/System Type | Correlation Energy Accuracy | Computational Scaling | Key Limitations |
|---|---|---|---|
| ITA-Predicted (Polymeric systems) | RMSD ~1.5 mH (polyyne) to ~11 mH (acene) with R² ≈ 1.000 [10] | Cost of HF calculations [10] | Accuracy varies with system type; Single descriptor may be insufficient |
| ITA-Predicted (3D metallic clusters) | R² > 0.990 but RMSD ~17-42 mH [10] | Cost of HF calculations [10] | Quantitative prediction challenging for complex 3D systems |
| MP2 | Good for dynamical correlation [12] | O(N⁵) | Poor for strong correlation, transition metals [12] |
| CCSD(T) | Near-chemical accuracy [10] | O(N⁷) | Prohibitively expensive for large systems [10] |
| CASSCF | Excellent for static correlation [12] | Exponential with active space size | Requires careful active space selection [12] |

Experimental Protocols and Methodologies

Protocol for DFT Functional Development and Testing

The development and validation of new DFT functionals follows a systematic protocol:

Functional Design: Formulate mathematical expressions based on physical principles, such as the ionization energy dependence [7] or the tendency toward uniform electron distribution [9].

Parameter Optimization: Determine optimal parameters through fitting to reference data, which may include accurate Quantum Monte Carlo computations or experimental results [7].

Benchmarking: Test the functional on standard molecular sets (e.g., 62 diverse molecules) for properties including total energy, bond energy, dipole moment, and zero-point energy [7].

Comparison: Evaluate performance against reference data (e.g., QMC) and established functionals (e.g., PBE, B3LYP, Chachiyo) using metrics such as Mean Absolute Error [7].

Validation: Apply to more complex systems to assess transferability and limitations [10].

Information-Theoretic Approach Protocol

The LR(ITA) protocol for predicting post-HF correlation energies involves:

Descriptor Calculation: Compute a set of 11 information-theoretic quantities from Hartree-Fock electron density, including Shannon entropy (SS), Fisher information (IF), Ghosh, Berkowitz, and Parr entropy (SGBP), Onicescu information energy (E2 and E3), relative Rényi entropy (R2r and R3r), relative Shannon entropy (IG) and relative Fisher information (G1, G2, and G3) [10].

Linear Regression: Establish linear relationships between ITA quantities and high-level correlation energies (MP2, CCSD, CCSD(T)) using a training set of molecules [10].

Prediction: Apply the linear regression equations to predict correlation energies for new systems using only HF-level ITA quantities [10].

Validation: Compare predicted correlation energies with explicitly calculated post-HF values and assess accuracy using RMSD and R² metrics [10].
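The regression, prediction, and validation steps of this protocol amount to ordinary least squares followed by a held-out check. A minimal sketch, assuming a single descriptor per molecule; the training and test values are hypothetical placeholders constructed to lie on an assumed line, not ITA results from the cited work. In practice each ITA quantity would be regressed against explicitly computed MP2, CCSD, or CCSD(T) correlation energies.

```python
import math


def fit_linear(x, y):
    """Ordinary least-squares fit y = slope * x + intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx


def predict(model, x_new):
    """Apply the fitted linear model to new descriptor values."""
    slope, intercept = model
    return [slope * xi + intercept for xi in x_new]


def rmsd(pred, ref):
    return math.sqrt(sum((p - r) ** 2 for p, r in zip(pred, ref)) / len(ref))


# Hypothetical data: descriptor values and "post-HF" correlation energies (hartree)
# generated from the assumed relation E_corr = -0.02 * descriptor - 0.10
train_desc = [1.0, 2.0, 3.0, 4.0]
train_ecorr = [-0.02 * d - 0.10 for d in train_desc]
test_desc = [5.0, 6.0]
test_ecorr_reference = [-0.02 * d - 0.10 for d in test_desc]

model = fit_linear(train_desc, train_ecorr)              # linear regression
test_pred = predict(model, test_desc)                    # prediction
print(model, rmsd(test_pred, test_ecorr_reference))     # validation
```

With real data the points scatter around the line, and the held-out RMSD, not the training fit, is the number that indicates whether the descriptor transfers to new systems.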

Experimental Validation in Complex Systems

Recent experimental advances enable direct testing of correlation models in extreme conditions:

X-ray Thomson Scattering: Probe plasmon dispersion in shock-compressed materials (e.g., aluminum at 3.75-4.5 g/cm³ density and ~0.6 eV temperature) [13].

Comparison with Theory: Compare experimental results with predictions from time-dependent DFT, mean-field models, and static local field correction models [13].

Model Assessment: Identify which theoretical approaches successfully reproduce observed plasmon energies and spectral shapes [13].

The Scientist's Toolkit: Essential Research Reagents

Table 4: Computational Tools for Electron Correlation Studies

| Tool/Resource | Function/Role | Application Context |
|---|---|---|
| 6-311++G(d,p) Basis Set | Standard polarized triple-zeta basis set with diffuse functions | Balanced accuracy/efficiency for main-group elements; Used in benchmark studies [10] |
| Quantum Monte Carlo (QMC) | Provides accurate reference data for uniform electron gas | Fitting parameters for LDA functionals [7] |
| Generalized Energy-Based Fragmentation (GEBF) | Linear-scaling method for large systems | Accuracy benchmark for molecular clusters [10] [11] |
| Information-Theoretic Descriptors | Density-based quantities (Shannon entropy, Fisher information) | Predict correlation energies from HF calculations [10] [11] |
| Local Field Corrections | Describe response of uniform electron gas | Testing against experimental data in warm dense matter [13] |

The comparison between DFT and post-HF methods for solving the electron correlation problem reveals a complex landscape where no single approach dominates across all chemical systems and accuracy requirements. DFT functionals offer the compelling advantage of computational efficiency and applicability to larger systems, with recent developments like ionization energy-dependent functionals and information-theoretic approaches pushing the boundaries of accuracy within the DFT framework [7] [10]. Post-HF methods provide systematically improvable accuracy and better performance for strongly correlated systems but at dramatically increased computational cost [12].

Future research directions likely include the further development of multi-scale and hybrid approaches that leverage the strengths of both paradigms, such as embedding high-level wavefunction calculations within DFT frameworks for critical regions of molecular systems. Machine learning and information-theoretic approaches show particular promise for predicting correlation energies at reduced computational cost [10] [11]. As experimental validation techniques advance [13], the feedback between theory and experiment will continue to drive the development of more accurate, efficient, and broadly applicable solutions to the electron correlation problem in quantum chemistry.

Post-Hartree-Fock (post-HF) methods are advanced computational techniques in quantum chemistry that systematically improve upon the Hartree-Fock approximation by incorporating electron correlation effects. This guide provides a comprehensive comparison of three principal post-HF approaches—Configuration Interaction (CI), Møller-Plesset Perturbation Theory (MP2), and Coupled Cluster (CCSD(T))—within the broader context of density functional theory (DFT) accuracy research. For researchers and drug development professionals, understanding the trade-offs between computational cost and accuracy among these methods is crucial for reliable predictions of molecular properties, reaction energies, and non-covalent interactions in complex systems.

The fundamental challenge of quantum chemistry lies in solving the Schrödinger equation for many-electron systems, a problem that grows exponentially in complexity with system size. While Density Functional Theory (DFT) offers an attractive balance between computational efficiency and accuracy for many systems, its approximations in the exchange-correlation functional lead to known limitations and systematic failures for certain chemical problems [14] [15]. Hartree-Fock (HF) theory provides the foundational wavefunction-based approach but neglects electron correlation entirely, leading to quantitatively inaccurate results for most chemical applications.

Post-HF methods bridge this gap by adding electron correlation to the HF foundation through different mathematical frameworks, offering systematically improvable accuracy at increased computational cost [16]. These methods are particularly valuable for benchmarking DFT performance and for applications where DFT approximations prove inadequate, such as in modeling dispersion interactions, transition states, strongly correlated systems, and accurate thermochemical predictions [17].

Theoretical Foundations of Post-HF Methods

The Electron Correlation Problem

Electron correlation encompasses two distinct physical phenomena: static correlation (also called strong correlation or near-degeneracy effects), which arises when multiple electronic configurations contribute significantly to the ground state, and dynamic correlation, which accounts for the instantaneous Coulombic repulsion between electrons. Post-HF methods differ in their ability to handle these different correlation types:

- Static correlation dominates in bond-breaking situations, diradicals, and systems with degenerate or near-degenerate frontier orbitals

- Dynamic correlation is always present and particularly important for accurate prediction of binding energies, reaction barriers, and molecular properties

The exact solution to the Schrödinger equation within a given basis set, the Full Configuration Interaction (FCI), includes all possible electron excitations but remains computationally prohibitive for all but the smallest systems [16].

Mathematical Frameworks

Post-HF approaches employ different mathematical formulations to approximate the electron correlation energy:

- Wavefunction expansion (CI): Constructs the wavefunction as a linear combination of Slater determinants with variationally optimized coefficients

- Perturbation theory (MP): Treats electron correlation as a small perturbation to the HF Hamiltonian

- Exponential ansatz (CC): Uses an exponential cluster operator to generate excited determinants, ensuring size extensivity

Methodological Deep Dive

Configuration Interaction (CI) Methods

Theoretical Basis

The CI wavefunction is constructed as a linear combination of Slater determinants:

\[ \Psi_{\text{CI}} = c_0 \Phi_0 + \sum_{i,a} c_i^a \Phi_i^a + \sum_{i>j,\, a>b} c_{ij}^{ab} \Phi_{ij}^{ab} + \cdots \]

where \(\Phi_0\) is the HF reference determinant, \(\Phi_i^a\) are singly excited determinants, \(\Phi_{ij}^{ab}\) are doubly excited determinants, and the coefficients \(c\) are determined variationally by minimizing the energy [16].

Truncated CI and Its Limitations

In practice, the CI expansion is truncated at a certain excitation level due to computational constraints:

- CIS: Includes only single excitations; improves excited states but not ground-state correlation

- CISD: Includes single and double excitations; most common truncated variant

- CISDT: Includes single, double, and triple excitations; computationally demanding

A critical limitation of truncated CI methods is their lack of size consistency and size extensivity [16]: the truncated-CI energy of two non-interacting fragments computed as one system does not equal the sum of the fragment energies computed separately. This leads to significant errors in calculated properties of larger systems and in dissociation energies.
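The size-consistency failure can be seen in a classic textbook model: N non-interacting minimal-basis H₂ molecules treated with doubles-only CI (DCI). For one molecule, the 2×2 DCI matrix [[0, K], [K, 2Δ]] is exact within the basis, where K is the σ_g/σ_u exchange integral and 2Δ the energy of the doubly excited determinant relative to HF. For N molecules the reference couples only to the normalized sum of the N local double excitations, with strength √N·K, so the DCI correlation energy grows like √N instead of N. The molecular-orbital values below are assumed inputs, the rounded minimal-basis H₂ values tabulated by Szabo and Ostlund.

```python
import math

# Minimal-basis H2 molecular-orbital quantities in hartree (assumed inputs,
# rounded values as tabulated by Szabo & Ostlund)
EPS_G, EPS_U = -0.5782, 0.6703               # occupied / virtual orbital energies
J_GG, J_UU, J_GU, K_GU = 0.6746, 0.6975, 0.6636, 0.1813

# 2*DELTA: energy of the doubly excited determinant relative to Hartree-Fock
DELTA = (EPS_U - EPS_G) + 0.5 * (J_GG + J_UU - 4.0 * J_GU + 2.0 * K_GU)


def dci_correlation(n_molecules):
    """Lowest eigenvalue of [[0, sqrt(N) K], [sqrt(N) K, 2 DELTA]]."""
    coupling = math.sqrt(n_molecules) * K_GU
    return DELTA - math.sqrt(DELTA ** 2 + coupling ** 2)


exact_single = dci_correlation(1)  # DCI is full CI for a single H2 in this basis
for n in (1, 2, 10, 100):
    print(f"N = {n:3d}: DCI = {dci_correlation(n):.4f}, size-consistent = {n * exact_single:.4f}")
```

The DCI correlation energy per molecule shrinks as N grows, even though the molecules do not interact, which is exactly the unphysical behavior described above.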

Full CI and Benchmarking

Full CI includes all possible excitations from the reference determinant and provides the exact solution within the chosen basis set. While computationally feasible only for small systems with limited basis sets, it serves as a valuable benchmark for assessing more approximate methods [16].
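The cost of full CI follows from simple counting: with n_α and n_β electrons distributed over M spatial orbitals there are C(M, n_α)·C(M, n_β) determinants, whereas CISD keeps only the reference plus single and double excitations. A minimal sketch of that counting, assuming a spin-restricted orbital set and no spatial-symmetry reduction; the 10-electron cases are hypothetical examples of a small molecule in increasingly large basis sets.

```python
from math import comb


def n_fci_determinants(n_orbitals, n_alpha, n_beta):
    """Number of Slater determinants in full CI (no symmetry reduction)."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


def n_cisd_determinants(n_orbitals, n_alpha, n_beta):
    """Reference + all single and double excitations (spin-orbital counting)."""
    v_alpha, v_beta = n_orbitals - n_alpha, n_orbitals - n_beta
    singles = n_alpha * v_alpha + n_beta * v_beta
    doubles = (comb(n_alpha, 2) * comb(v_alpha, 2)      # alpha-alpha
               + comb(n_beta, 2) * comb(v_beta, 2)      # beta-beta
               + n_alpha * v_alpha * n_beta * v_beta)   # alpha-beta
    return 1 + singles + doubles


for n_orb in (24, 50, 100):
    print(n_orb, n_cisd_determinants(n_orb, 5, 5), n_fci_determinants(n_orb, 5, 5))
```

For 10 electrons in 24 orbitals, CISD needs 12,636 determinants while full CI needs 1,806,590,016; for two electrons the counts coincide, which is why CISD equals full CI for H₂.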

Møller-Plesset Perturbation Theory (MP2)

Theoretical Foundation

MP perturbation theory treats electron correlation as a perturbation to the HF Hamiltonian. The Hamiltonian is partitioned as (H = F + \lambda V), where (F), the sum of one-electron Fock operators, is the zeroth-order Hamiltonian and (V) is the perturbation potential [16]. The MP energy expansion is:

[ E = E^{(0)} + E^{(1)} + E^{(2)} + E^{(3)} + \cdots ]

where (E^{(0)}) is the sum of occupied HF orbital energies and (E^{(1)}) is the first-order correction that brings the total to the HF energy ((E^{(0)} + E^{(1)} = E_{\text{HF}})). The terms from (E^{(2)}) onward represent correlation contributions.
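The order-by-order structure of the expansion can be checked on a small matrix. This is a sketch of generic Rayleigh-Schrödinger perturbation theory rather than an MP implementation. A diagonal `H0` stands in for the Fock operator and `V` is an arbitrary small coupling. E(0) + E(1) then equals the expectation value of H in the reference state, which is the analogue of the HF energy.

```python
import numpy as np

# Diagonal zeroth-order Hamiltonian (stand-in for the Fock operator) and a small
# symmetric coupling V. All numbers are arbitrary illustrative values.
H0 = np.diag([0.0, 1.0, 2.0, 3.0])
V = 0.05 * np.array([[0.2, 1.0, 0.5, 0.3],
                     [1.0, -0.4, 0.2, 0.1],
                     [0.5, 0.2, 0.3, -0.6],
                     [0.3, 0.1, -0.6, 0.1]])
H = H0 + V

e0 = H0[0, 0]    # E(0): zeroth-order energy of the reference state
e1 = V[0, 0]     # E(1): first-order correction; e0 + e1 = <ref|H|ref>
# E(2) = sum over n != 0 of |V_0n|^2 / (E_0 - E_n); always negative for the lowest state
e2 = sum(V[0, n] ** 2 / (H0[0, 0] - H0[n, n]) for n in range(1, 4))
exact = np.linalg.eigvalsh(H)[0]

print(f"E0+E1    = {e0 + e1:.6f}")
print(f"E0+E1+E2 = {e0 + e1 + e2:.6f}")
print(f"exact    = {exact:.6f}")
```

Second order recovers most of the gap between the reference expectation value and the exact lowest eigenvalue. The remaining error is of third order in V.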

MP2 Practical Implementation

Second-order Møller-Plesset (MP2) theory includes the (E^{(2)}) term, which accounts for the majority of the correlation energy. For canonical HF orbitals, the MP2 correlation energy is calculated as [18]:

[ E_{\text{MP2}} = \frac{1}{4} \sum_{ijab} \frac{|\langle ab||ij \rangle|^2}{\varepsilon_i + \varepsilon_j - \varepsilon_a - \varepsilon_b} ]

where (\langle ab||ij \rangle) are antisymmetrized two-electron integrals over spin-orbitals, (i, j) label occupied and (a, b) virtual orbitals, and (\varepsilon) are orbital energies.

MP2 calculations typically employ the frozen-core approximation, excluding core orbitals from the correlation treatment to reduce computational cost without significant accuracy loss for valence properties.
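The MP2 expression and the frozen-core approximation map directly onto array operations. The sketch below makes three assumptions. Real spin-orbital integrals are stored as an array `g[i, j, a, b]` = ⟨ab||ij⟩ with occupied indices first. Orbital energies come from a converged HF calculation. A hypothetical integer `n_frozen` counts the lowest occupied spin-orbitals to exclude. Random numbers stand in for real integrals, so the energies only check the algebra.

```python
import numpy as np

def antisymmetrize(g):
    """Impose <ab||ij> = -<ba||ij> = -<ab||ji> on an array indexed [i, j, a, b]."""
    return g - g.transpose(1, 0, 2, 3) - g.transpose(0, 1, 3, 2) + g.transpose(1, 0, 3, 2)

def mp2_energy(g, eps_occ, eps_vir, n_frozen=0):
    """Spin-orbital MP2 correlation energy: 1/4 sum |<ab||ij>|^2 / (e_i + e_j - e_a - e_b)."""
    g = g[n_frozen:, n_frozen:]          # frozen core: drop the lowest occupied spin-orbitals
    e_occ = eps_occ[n_frozen:]
    denom = (e_occ[:, None, None, None] + e_occ[None, :, None, None]
             - eps_vir[None, None, :, None] - eps_vir[None, None, None, :])
    return 0.25 * np.sum(g ** 2 / denom)

rng = np.random.default_rng(0)
n_occ, n_vir = 4, 6
g = antisymmetrize(rng.normal(scale=0.05, size=(n_occ, n_occ, n_vir, n_vir)))
eps_occ = np.sort(rng.uniform(-20.0, -0.5, n_occ))   # lowest entries mimic core orbitals
eps_vir = np.sort(rng.uniform(0.1, 2.0, n_vir))

e_all = mp2_energy(g, eps_occ, eps_vir)
e_fc = mp2_energy(g, eps_occ, eps_vir, n_frozen=2)
print(e_all, e_fc)
```

The factor 1/4 compensates for counting each unique pair (i<j, a<b) four times in the unrestricted sum. Every term is non-positive, so freezing orbitals can only make the correlation energy less negative.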

Coupled Cluster Methods

Exponential Ansatz

Coupled cluster theory expresses the wavefunction using an exponential ansatz:

[ \Psi_{\text{CC}} = e^{\hat{T}} \Phi_0 ]

where (\hat{T} = \hat{T}_1 + \hat{T}_2 + \hat{T}_3 + \cdots) is the cluster operator, composed of single (\hat{T}_1), double (\hat{T}_2), triple (\hat{T}_3), and higher excitation operators [16].

CCSD and CCSD(T)

CCSD truncates the cluster operator to single and double excitations, (\hat{T} = \hat{T}_1 + \hat{T}_2), and solves for the cluster amplitudes by projecting onto singly and doubly excited determinants:

[ \langle \Phi_i^a | \bar{H} | \Phi_0 \rangle = 0, \quad \langle \Phi_{ij}^{ab} | \bar{H} | \Phi_0 \rangle = 0 ]

where (\bar{H} = e^{-\hat{T}} H e^{\hat{T}}) is the similarity-transformed Hamiltonian [18].
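The exponential ansatz is what restores size extensivity, and a hypothetical toy model shows this; the model is not taken from [16] or [18]. It has N non-interacting two-electron pairs, each with one double excitation, so the cluster operator is T = t·Σ_p E_p, where E_p excites pair p. Each E_p squares to zero and the E_p commute, so e^T is exactly the product of (1 + t·E_p). The amplitude is solved from the projected equation ⟨Φ_D|(H - E)e^T|Φ_0⟩ = 0. For this doubles-only model that equation is equivalent to the similarity-transformed condition above. The sketch checks that the resulting energy equals N times the single-pair energy and matches full CI.

```python
import numpy as np

def embed(op, site, n_pairs):
    """Place a 2x2 single-pair operator on `site` of an n_pairs tensor-product space."""
    out = np.array([[1.0]])
    for q in range(n_pairs):
        out = np.kron(out, op if q == site else np.eye(2))
    return out

def ccd_pair_model(n_pairs, delta, k, n_iter=100):
    """CCD energy and amplitude t for n_pairs identical, non-interacting two-level pairs."""
    h_pair = np.array([[0.0, k], [k, delta]])
    H = sum(embed(h_pair, p, n_pairs) for p in range(n_pairs))
    excite = np.array([[0.0, 0.0], [1.0, 0.0]])          # |ref> -> |doubly excited> on one pair
    excitation_ops = [embed(excite, p, n_pairs) for p in range(n_pairs)]
    ref = np.zeros(2 ** n_pairs)
    ref[0] = 1.0
    doubly_excited = excitation_ops[0] @ ref             # projection target (all pairs equivalent)

    def cc_state(t):
        # e^T |ref> with T = t * sum_p E_p; exact as a product since E_p^2 = 0 and the E_p commute
        psi = ref.copy()
        for e_p in excitation_ops:
            psi = psi + t * (e_p @ psi)
        return psi

    t = 0.0
    for _ in range(n_iter):
        psi = cc_state(t)
        energy = ref @ H @ psi                                  # E = <ref| H e^T |ref>
        residual = doubly_excited @ (H @ psi - energy * psi)    # <Phi_D| (H - E) e^T |ref>
        t -= residual / delta                                   # Jacobi-style update
    return ref @ H @ cc_state(t), t

for n in range(1, 5):
    e, t = ccd_pair_model(n, 1.0, 0.1)
    print(n, e / n, t)    # energy per pair and amplitude are independent of n
```

Contrast this with truncated CI in the same model. The exponential generates the products of pair excitations (quadruples and beyond) automatically, without additional amplitudes.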

CCSD(T) adds a perturbative treatment of connected triple excitations and is often called the "gold standard" of quantum chemistry for single-reference systems [16] [18]. The (T) correction is computed as:

[ E_{(T)} = \sum_{ijkabc} \frac{(4W_{ijk}^{abc} + W_{ijk}^{bca} + W_{ijk}^{cab})(V_{ijk}^{abc} - V_{ijk}^{cba})}{\varepsilon_i + \varepsilon_j + \varepsilon_k - \varepsilon_a - \varepsilon_b - \varepsilon_c} ]

where (W) and (V) are intermediate quantities derived from the CCSD amplitudes [18].

Comparative Analysis of Performance

Table 1: Computational Scaling and Resource Requirements

| Method | Computational Scaling | Memory Requirements | Typical System Size Limit | Key Advantages |
|---|---|---|---|---|
| HF | O(N³)-O(N⁴) | Low | 1000+ atoms | Foundation for post-HF methods |
| MP2 | O(N⁵) | Moderate | 500+ atoms | Good for non-covalent interactions |
| CCSD | O(N⁶) | High | 50-100 atoms | High accuracy for dynamic correlation |
| CCSD(T) | O(N⁷) | Very high | 20-50 atoms | Gold standard accuracy |
| Full CI | Factorial | Extreme | <10 atoms | Exact within basis set |
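Formal scaling exponents translate directly into cost multipliers when the system grows. The sketch uses only the exponents from Table 1, taking N⁴ for HF. It deliberately ignores prefactors, integral screening, and memory or disk bottlenecks, so real timings can differ substantially.

```python
# Formal scaling exponents from Table 1 (HF taken as N^4; full CI omitted because its
# cost grows factorially rather than polynomially).
SCALING = {"HF": 4, "MP2": 5, "CCSD": 6, "CCSD(T)": 7}

def cost_multiplier(method, size_ratio):
    """Factor by which formal cost grows when system size N grows by `size_ratio`."""
    return size_ratio ** SCALING[method]

for method in SCALING:
    print(f"{method:8s} doubling N multiplies formal cost by {cost_multiplier(method, 2)}x")
```

Doubling N multiplies the formal cost by 16, 32, 64, and 128 for HF, MP2, CCSD, and CCSD(T), respectively. This is why the practical size limits in the table drop so steeply.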

Accuracy Across Chemical Properties

Table 2: Accuracy Comparison for Molecular Properties

| Method | Bond Lengths | Vibrational Frequencies | Reaction Energies | Non-covalent Interactions | Transition Metals |
|---|---|---|---|---|---|
| HF | Poor | Poor | Poor | Very Poor | Poor |
| MP2 | Good | Good | Moderate | Good (but tends to overbind) | Variable |
| CCSD | Very Good | Very Good | Good | Very Good | Good |
| CCSD(T) | Excellent | Excellent | Excellent | Excellent | Very Good |

Basis Set Dependence and Convergence

The accuracy of post-HF methods is strongly dependent on basis set quality. The basis set incompleteness error (BSIE) arises because the correlation energy converges slowly with basis set size, particularly for methods like MP2 [18]. Two primary approaches address this limitation:

- Explicitly correlated (F12) methods: Incorporate the interelectronic distance (r_{12}) directly into the wavefunction, dramatically improving basis set convergence [18]

- Density-based basis-set correction: Uses range-separated DFT to correct for BSIE, providing improved accuracy at lower computational cost than F12 methods [18]
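Another widely used way to deal with BSIE, not among the two approaches listed above, is two-point extrapolation of the correlation energy to the complete-basis-set (CBS) limit. The sketch assumes the common model E_X = E_CBS + A·X^-3, where X is the cardinal number of cc-pVXZ (3 for TZ, 4 for QZ). The input energies are synthetic values generated from that model, not results for any molecule.

```python
def cbs_two_point(e_x, x, e_y, y):
    """Two-point X^-3 extrapolation of correlation energies from cc-pVXZ and cc-pVYZ (Y > X)."""
    return (y**3 * e_y - x**3 * e_x) / (y**3 - x**3)

# Synthetic correlation energies (hartree) built from the assumed model E_X = E_CBS + A / X^3.
E_CBS_TRUE, A = -0.300, 0.250
e_tz = E_CBS_TRUE + A / 3**3
e_qz = E_CBS_TRUE + A / 4**3

print("remaining QZ error:", e_qz - E_CBS_TRUE)        # slow convergence even at QZ
print("extrapolated:", cbs_two_point(e_tz, 3, e_qz, 4))
```

When the data follow the assumed form exactly, the extrapolation recovers E_CBS. Real correlation energies follow it only approximately, so the result carries a residual error.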

Table 3: Recommended Basis Sets for Post-HF Calculations

| Basis Set | Tier | Recommended Use | Computational Cost |
|---|---|---|---|
| cc-pVDZ | Double-zeta (smallest cc tier) | Screening calculations | Low |
| cc-pVTZ | Triple-zeta | Most production work | Moderate |
| cc-pVQZ | Quadruple-zeta | High-accuracy needs | High |
| aug-cc-pVXZ | Diffuse-augmented | Anions, weak bonds | Higher than cc-pVXZ |
| cc-pVXZ-F12 | Optimized for F12 | Explicitly correlated methods | Similar to standard |

Experimental Protocols and Benchmarking

Standard Benchmarking Protocols

Rigorous assessment of post-HF methods requires comparison against reliable experimental or theoretical reference data:

- Thermochemical benchmarks (e.g., G2/97, W4-17): Assess reaction energies, atomization energies, and barrier heights

- Non-covalent interaction benchmarks (e.g., S66, HSG): Evaluate performance for dispersion, hydrogen bonding, and π-π interactions

- Spectroscopic benchmarks: Compare calculated vibrational frequencies, bond lengths, and rotational constants with experimental data

- Solid-state benchmarks: Assess performance for periodic systems and materials properties

Case Study: Monochalcogenide Diatomic Molecules

A comparative study of XSe and XTe molecules (where X = N, P, As) illustrates the performance differences between post-HF and DFT methods [17]:

Computational Protocol:

- Methods compared: CCSD(T), MP2, CAS-SCF, and various DFT functionals (B3LYP, PBE0, TPSS)

- Basis sets: Correlation-consistent (cc-pVXZ) with relativistic effective core potentials for heavy atoms

- Calculated properties: Bond lengths, vibrational frequencies, ionization potentials, dissociation energies

Key Findings:

- CCSD(T) provided the most reliable results across all molecular properties

- MP2 showed good performance for structural parameters but larger errors for dissociation energies

- The popular B3LYP functional performed surprisingly well, often coming close to CCSD(T) accuracy for certain properties

- Basis set effects were more pronounced for wavefunction methods than for DFT

Research Reagent Solutions: Computational Tools

Table 4: Essential Computational Tools for Post-HF Calculations

| Tool Category | Specific Examples | Primary Function | Considerations |
|---|---|---|---|
| Quantum Chemistry Packages | Gaussian, ORCA, CFOUR, Molpro, PSI4 | Implementation of electronic structure methods | Varying capabilities for high-level methods |
| Basis Set Libraries | Basis Set Exchange, EMSL Basis Set Library | Provide standardized basis sets | Ensure consistency across calculations |
| Analysis & Visualization | Multiwfn, ChemCraft, GaussView | Analyze wavefunctions and properties | Critical for interpreting results |
| High-Performance Computing | Linux clusters with high-speed interconnects | Enable computationally demanding calculations | Memory and processor core requirements scale with method |

Method Selection Guide

The choice between CI, MP2, and CCSD(T) depends on the specific research requirements:

- For rapid screening or large systems: MP2 offers the best balance of cost and accuracy for non-covalent interactions and preliminary geometry optimizations

- For high-accuracy single-point energies: CCSD(T) remains the gold standard for systems within its computational reach, particularly for thermochemical properties

- For multireference systems: CAS-SCF followed by MRCI or NEVPT2 is preferable over single-reference methods like CCSD(T)

- When DFT fails: CCSD(T) provides crucial benchmarking for developing and validating new density functionals

Future Perspectives and Emerging Trends

Machine learning approaches are being developed to correct for fundamental errors in density functional approximations, potentially bridging the gap between DFT efficiency and post-HF accuracy [14]. Quantum computing also holds promise for extending computational reach beyond classical limitations, particularly for strongly correlated systems in drug discovery applications [15].

The development of composite methods (e.g., CBS-QB3, G4) that combine different levels of theory provides practical pathways to high accuracy with reduced computational cost. Continued algorithmic improvements and hardware advances will further expand the applicability of accurate post-HF methods to larger, more chemically relevant systems.

Visual Guide to Post-HF Method Relationships

Post-HF Method Relationships and Accuracy Hierarchy: This diagram illustrates the progression from Hartree-Fock to increasingly accurate post-HF methods, with color coding indicating methodological families (yellow for CI, green for MP, blue for CC, red for the gold-standard CCSD(T)).

Basis Set Convergence Techniques

Addressing Basis Set Convergence Challenges: This workflow illustrates strategies to overcome the slow basis set convergence in conventional post-HF methods, highlighting explicitly correlated and density-based correction approaches that enable higher accuracy with smaller basis sets.

Density Functional Theory (DFT) represents one of the most widely used computational approaches in quantum chemistry, materials science, and drug development research. Its theoretical foundation rests upon the groundbreaking Hohenberg-Kohn theorems, which established that all electronic properties of a many-body system can be uniquely determined from its ground-state electron density alone—a remarkable simplification compared to traditional wavefunction-based methods that depend on 3N variables for an N-electron system [19] [20]. This theoretical framework eliminates the need for the complex N-electron wavefunction, replacing it with the significantly simpler electron density as the fundamental variable, thereby offering a computationally efficient pathway to studying molecular structures and properties.

The practical implementation of DFT occurs primarily through the Kohn-Sham scheme, which introduces a fictitious system of non-interacting electrons that generates the same density as the true interacting system [21]. Within this approach, the critical challenge becomes the accurate description of the exchange-correlation functional, which must account for all quantum mechanical effects not captured by the classical electrostatic and non-interacting kinetic energy terms. The development of increasingly sophisticated approximations for this functional—from the Local Density Approximation (LDA) to Generalized Gradient Approximations (GGA) and meta-GGAs—has positioned DFT as an indispensable tool for researchers investigating molecular systems across chemistry, physics, and biology [22] [23].

Theoretical Foundations: The Hohenberg-Kohn Theorems

The First Hohenberg-Kohn Theorem

The first Hohenberg-Kohn theorem establishes a fundamental one-to-one correspondence between the external potential acting on a system of interacting electrons and the ground-state electron density [19] [20]. Formally, the theorem states that for a given electron-electron interaction, the external potential V(r) is uniquely determined, up to an additive constant, by the ground state electron density n₀(r). This represents a profound simplification, as it demonstrates that the electron density—a function of only three spatial coordinates—contains exactly the same information as the external potential, which in turn uniquely determines the Hamiltonian and thus all properties of the system, including excited states [19].

This theorem allows the total energy of the system to be expressed as a functional of the density:

[ E_0 = E[n_0(\mathbf{r})] = F_{\mathrm{HK}}[n_0(\mathbf{r})] + V[n_0(\mathbf{r})] ]

where ( V[n_0(\mathbf{r})] = \int v(\mathbf{r}) n_0(\mathbf{r}) \, \mathrm{d}^3\mathbf{r} ) represents the interaction with the external potential, and ( F_{\mathrm{HK}}[n_0(\mathbf{r})] = T[n_0(\mathbf{r})] + U[n_0(\mathbf{r})] ) is the universal Hohenberg-Kohn functional comprising the kinetic energy (T) and electron-electron interaction (U) functionals [19]. The universality of (F_{\mathrm{HK}}[n]) means it has the same functional form for all systems, independent of the specific external potential.

The Second Hohenberg-Kohn Theorem and Variational Principle

The second Hohenberg-Kohn theorem establishes a variational principle for the energy functional [19] [20]. It states that the functional E[n(r)] assumes its minimum value at the exact ground-state density for a given external potential. This provides a theoretical justification for using variational methods to determine the ground-state density and energy. The theorem can be formally expressed as:

[ E_0 \leq E[\tilde{n}(\mathbf{r})] ]

where E₀ is the true ground-state energy and ñ(r) is any trial density that is v-representable [19]. The v-representability requirement, that the trial density be the ground-state density of some external potential v(r), posed significant challenges for practical implementations. It was later relaxed to the weaker condition of N-representability, that the density come from some antisymmetric N-electron wavefunction [19].

The constrained search formulation of Levy and Lieb extended the formal framework to N-representable densities, defining the universal functional as [19]:

[ F[n(\mathbf{r})] = \min_{\Psi \to n(\mathbf{r})} \langle \Psi | \hat{T} +\hat{U}| \Psi \rangle ]

This formulation searches over all wavefunctions Ψ that yield the density n(r) and selects the one that minimizes the expectation value of the kinetic and electron-electron repulsion operators [19]. The minimization condition for the energy functional can be expressed using a Lagrange multiplier μ (the chemical potential) that preserves the number of electrons:

[ \delta \Big[ E[n(\mathbf{r})] + \mu \Big( N - \int n(\mathbf{r}) \, d^3\mathbf{r} \Big) \Big] = 0 ]

This leads to the stationary condition: ( \mu = v(\mathbf{r}) + \frac{\delta F[n(\mathbf{r})]}{\delta n(\mathbf{r})} ) [19].

Figure 1: Logical progression from the Hohenberg-Kohn theorems to practical computational schemes.

The Kohn-Sham Equations: Bridging Theory and Computation

The Kohn-Sham Ansatz

The Kohn-Sham approach, introduced in 1965, provides a practical computational framework that circumvents the need to directly approximate the difficult components of the universal functional F[n] [21]. The fundamental insight was to introduce a fictitious system of non-interacting electrons that exactly reproduces the density of the true interacting system [24] [21]. The kinetic energy of this non-interacting system, Tₛ[n], is the largest part of the true kinetic energy and can be computed exactly from its orbitals. The small remaining kinetic contribution and all non-classical electron-interaction effects are relegated to the exchange-correlation functional.

In the Kohn-Sham scheme, the total energy functional is partitioned as:

[ E_V[n] = T_s[n] + V[n] + U[n] + E_{xc}[n] ]

where Tₛ[n] is the kinetic energy of the non-interacting system, V[n] is the external potential energy, U[n] is the classical electrostatic Hartree energy, and Eₓc[n] is the exchange-correlation energy that captures all remaining quantum mechanical effects [21]. The corresponding Kohn-Sham equations take the form of single-particle Schrödinger equations:

[ \left[-\frac{\hbar^2}{2m}\nabla^2 + v_s(\mathbf{r})\right] \phi_i(\mathbf{r}) = \varepsilon_i \phi_i(\mathbf{r}) ]

where the effective Kohn-Sham potential v_s(r) is given by:

[ v_s[n](\mathbf{r}) = v(\mathbf{r}) + \int d^3\mathbf{r}' \frac{n(\mathbf{r}')}{|\mathbf{r}-\mathbf{r}'|} + v_{xc}[n](\mathbf{r}) ]

and the exchange-correlation potential is the functional derivative vₓc[n](r) = δEₓc[n]/δn(r) [24]. The electron density is constructed from the occupied Kohn-Sham orbitals: n(r) = Σᵢ |φᵢ(r)|² [24]. These equations must be solved self-consistently because the potential depends on the density, which in turn depends on the orbitals.

Computational Workflow in Kohn-Sham DFT

Figure 2: Self-consistent cycle for solving Kohn-Sham equations.
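The self-consistent cycle in Figure 2 can be sketched on a one-dimensional toy system. Everything here is an illustrative assumption rather than a production scheme:

- atomic units and a harmonic external potential;
- a soft-Coulomb kernel replacing 1/|r - r'|;
- the 3D LDA exchange potential -(3n/pi)^(1/3), used purely as a stand-in for v_xc;
- simple linear density mixing.

The loop follows the cycle. It builds v_s from the current density, solves the single-particle equations, rebuilds the density from the occupied orbitals, mixes, and repeats until input and output densities agree.

```python
import numpy as np

def kohn_sham_1d(n_electrons=2, omega=2.0, n_grid=201, box=10.0, mix=0.2, tol=1e-8, max_iter=500):
    """Toy closed-shell Kohn-Sham SCF on a 1D grid (atomic units). Not a production method."""
    if n_electrons % 2:
        raise ValueError("closed-shell sketch: n_electrons must be even")
    x = np.linspace(-box / 2, box / 2, n_grid)
    dx = x[1] - x[0]
    v_ext = 0.5 * omega**2 * x**2                        # external potential v(r): harmonic well
    # Kinetic operator -1/2 d^2/dx^2 from second-order finite differences
    off = np.ones(n_grid - 1)
    kinetic = -0.5 * (np.diag(off, -1) - 2.0 * np.eye(n_grid) + np.diag(off, 1)) / dx**2
    # Soft-Coulomb kernel 1/sqrt((x - x')^2 + 1) stands in for 1/|r - r'| (assumption)
    kernel = 1.0 / np.sqrt((x[:, None] - x[None, :]) ** 2 + 1.0)
    n_occ = n_electrons // 2
    density = np.full(n_grid, n_electrons / (n_grid * dx))     # uniform initial guess
    for iteration in range(1, max_iter + 1):
        v_hartree = (kernel @ density) * dx                    # classical (Hartree) potential
        v_xc = -(3.0 / np.pi * density) ** (1.0 / 3.0)        # 3D LDA-exchange form, toy stand-in
        eps, phi = np.linalg.eigh(kinetic + np.diag(v_ext + v_hartree + v_xc))
        phi = phi / np.sqrt(dx)                                # normalize: sum |phi|^2 dx = 1
        new_density = 2.0 * np.sum(phi[:, :n_occ] ** 2, axis=1)    # two electrons per orbital
        change = np.max(np.abs(new_density - density))
        density = (1.0 - mix) * density + mix * new_density    # linear density mixing
        if change < tol:
            return eps[:n_occ], density, x, iteration
    raise RuntimeError("SCF did not converge; try a smaller `mix` or a larger `max_iter`")

eps_occ, density, x, n_iter = kohn_sham_1d()
print(f"converged in {n_iter} iterations; occupied eigenvalue {eps_occ[0]:.6f} hartree")
```

Smaller `mix` values converge more slowly but more robustly. Production codes typically replace linear mixing with more sophisticated schemes such as Pulay (DIIS) mixing.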

Exchange-Correlation Functionals: The Key Approximation

The Hierarchy of Approximations

The accuracy of DFT calculations critically depends on the approximation used for the exchange-correlation functional Eₓc[n]. Over decades, a hierarchy of increasingly sophisticated functionals has been developed, each with characteristic strengths and limitations [22].

Local Density Approximation (LDA) represents the simplest approach, where the exchange-correlation energy at each point in space is that of a homogeneous electron gas with the same density:

[ E_{xc}^{\mathrm{LDA}}[\rho] = \int \rho(\mathbf{r}) \, \epsilon_{xc}(\rho(\mathbf{r})) \, \mathrm{d}\mathbf{r} ]

where εₓc(ρ(r)) is the exchange-correlation energy per particle of a homogeneous electron gas [23]. For exchange, this has a simple analytical form: Eₓ ∝ ∫ρ(r)⁴/³ dr, while correlation is typically obtained from quantum Monte Carlo simulations [23]. LDA often overbinds molecules and solids but provides reasonable lattice parameters and structural properties [23] [21].
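As a quick numerical check of the LDA exchange expression, the sketch below integrates E_x = -C_x ∫ρ^(4/3) dr for the hydrogen 1s density ρ(r) = e^(-2r)/π on a radial grid. It then compares the result with the closed-form integral. The sketch assumes the standard spin-unpolarized Dirac constant C_x = (3/4)(3/π)^(1/3). A one-electron atom would properly be treated spin-polarized, so the value tests the quadrature, not the physics.

```python
import numpy as np

C_X = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)     # Dirac/Slater exchange constant (spin-unpolarized)

def lda_exchange_energy(density, r):
    """E_x^LDA = -C_x * integral of rho^(4/3) d^3r for a spherical density on a radial grid."""
    integrand = 4.0 * np.pi * r**2 * density ** (4.0 / 3.0)
    # Trapezoidal rule written out explicitly to avoid version-specific NumPy helpers
    return -C_X * np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(r))

r = np.linspace(0.0, 40.0, 40001)
rho_1s = np.exp(-2.0 * r) / np.pi             # hydrogen 1s density (atomic units)
numeric = lda_exchange_energy(rho_1s, r)
# Closed form: 4*pi * pi^(-4/3) * integral of r^2 exp(-8r/3) dr = 4 pi^(-1/3) * 2 / (8/3)^3
analytic = -C_X * 4.0 * np.pi ** (-1.0 / 3.0) * 2.0 / (8.0 / 3.0) ** 3
print(numeric, analytic)
```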

Generalized Gradient Approximations (GGA) improve upon LDA by including the density gradient as an additional variable:

[ E_{xc}^{\mathrm{GGA}}[\rho] = \int \epsilon_{xc}(\rho(\mathbf{r}), \nabla\rho(\mathbf{r})) \, \rho(\mathbf{r}) \, \mathrm{d}\mathbf{r} ]

This allows the functional to account for inhomogeneities in the electron density [22]. Popular GGA functionals include PBE (Perdew-Burke-Ernzerhof) and BLYP (Becke-Lee-Yang-Parr), which typically improve binding energies compared to LDA [22] [21].
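The gradient dependence of a GGA is usually expressed through two quantities. The first is the dimensionless reduced gradient s. The second is an exchange enhancement factor F_x(s), which multiplies the LDA exchange energy density. The sketch uses the published PBE exchange form and constants (κ = 0.804, μ ≈ 0.21951) in the spin-unpolarized expression. It applies them to the hydrogen 1s density for illustration only.

```python
import numpy as np

KAPPA, MU = 0.804, 0.21951                    # PBE exchange parameters
C_X = 0.75 * (3.0 / np.pi) ** (1.0 / 3.0)     # LDA (Dirac) exchange constant, spin-unpolarized

def reduced_gradient(n, grad_n):
    """Dimensionless gradient s = |grad n| / (2 (3 pi^2)^(1/3) n^(4/3))."""
    return np.abs(grad_n) / (2.0 * (3.0 * np.pi**2) ** (1.0 / 3.0) * n ** (4.0 / 3.0))

def pbe_enhancement(s):
    """PBE exchange enhancement factor; F_x(0) = 1 recovers LDA exchange, F_x -> 1 + kappa."""
    return 1.0 + KAPPA - KAPPA / (1.0 + MU * s**2 / KAPPA)

def radial_integral(f, r):
    """Trapezoidal integral of a spherically symmetric function f(r) over all space."""
    g = 4.0 * np.pi * r**2 * f
    return np.sum(0.5 * (g[1:] + g[:-1]) * np.diff(r))

r = np.linspace(0.0, 30.0, 30001)
n = np.exp(-2.0 * r) / np.pi                  # hydrogen 1s density; dn/dr = -2n
s = reduced_gradient(n, -2.0 * n)
e_x_lda = -C_X * n ** (4.0 / 3.0)             # LDA exchange energy per unit volume
ratio = radial_integral(e_x_lda * pbe_enhancement(s), r) / radial_integral(e_x_lda, r)
print(f"E_x(GGA form) / E_x(LDA) = {ratio:.3f}")   # > 1: gradient correction deepens exchange
```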

Meta-GGAs incorporate additional information through the kinetic energy density:

[ \tau(\mathbf{r}) = \frac{1}{2}\sum_{i}^{\mathrm{occ}} |\nabla\phi_i(\mathbf{r})|^2 ]

This allows the functional to detect the local bonding character (e.g., metallic, covalent, or weak bonds) and provides improved accuracy for reaction energies and lattice constants [22]. The MS-B86bl meta-GGA, for instance, has shown excellent performance for predicting surface reaction probabilities [22].
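One way a meta-GGA uses τ to detect bonding character is the dimensionless indicator α = (τ - τ_W)/τ_unif employed by SCAN. Here τ_W = |∇n|²/(8n) is the von Weizsäcker kinetic energy density and τ_unif is that of the uniform electron gas. The indicator takes three characteristic values:

- α = 0 in single-orbital regions such as covalent bonds;
- α ≈ 1 in slowly varying, metallic-like regions;
- α ≫ 1 between closed shells, where weak bonds form.

The sketch checks the first case numerically for the hydrogen 1s orbital on an assumed radial grid.

```python
import numpy as np

def iso_orbital_indicator(n, grad_n, tau):
    """alpha = (tau - tau_W) / tau_unif, the meta-GGA ingredient used by SCAN-type functionals."""
    tau_w = grad_n**2 / (8.0 * n)                                         # von Weizsaecker
    tau_unif = 0.3 * (3.0 * np.pi**2) ** (2.0 / 3.0) * n ** (5.0 / 3.0)  # uniform electron gas
    return (tau - tau_w) / tau_unif

# Single occupied orbital: hydrogen 1s, phi = exp(-r)/sqrt(pi), in atomic units.
r = np.linspace(0.2, 3.0, 2801)
phi = np.exp(-r) / np.sqrt(np.pi)
n = phi**2
dphi = np.gradient(phi, r, edge_order=2)          # numerical radial derivatives
dn = np.gradient(n, r, edge_order=2)
tau = 0.5 * dphi**2                               # tau = 1/2 sum_occ |grad phi_i|^2 (one orbital)
alpha = iso_orbital_indicator(n, dn, tau)
print(np.max(np.abs(alpha)))                      # close to zero: a one-orbital region
```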

Hybrid Functionals mix a fraction of exact Hartree-Fock exchange with DFT exchange, which partially reduces the self-interaction error and typically improves band gap predictions. The machine-learned DM21mu functional, which incorporates physical constraints including the homogeneous electron gas, has demonstrated improved band structure predictions for semiconductors like silicon compared to standard semi-local functionals [22].

Performance Comparison of Exchange-Correlation Functionals

Table 1: Characteristics of major classes of exchange-correlation functionals

| Functional Type | Dependence | Key Examples | Strengths | Limitations |
|---|---|---|---|---|
| LDA | Local density only | SVWN | Reasonable structures, computational efficiency | Overbinding, poor for weak interactions |
| GGA | Density and its gradient | PBE, BLYP | Improved binding energies, widely applicable | Underestimation of band gaps |
| meta-GGA | Density, gradient, and kinetic energy density | SCAN, MS-B86bl | Detects bonding character, better for diverse systems | Increased computational cost |
| Hybrid | Includes exact exchange | B3LYP, PBE0 | Improved band gaps, molecular properties | High computational cost, empirical mixing |
| Machine Learned | Complex parameterization | DM21mu, MCML | High accuracy for training data | Transferability concerns |

Table 2: Performance assessment for different chemical systems

| System Type | LDA Performance | GGA Performance | meta-GGA Performance | Hybrid Performance |
|---|---|---|---|---|
| Metals/Bulk Solids | Reasonable lattice parameters | Good structural properties | Improved simultaneously for surfaces and bulk | Often overcorrects, computationally expensive |
| Molecules/Surfaces | Overbinding, inaccurate energetics | Improved but variable accuracy | Good for surface chemistry (e.g., MCML) | Good but system-dependent |
| Band Gaps | Severe underestimation | Underestimation | Moderate improvement | Significant improvement (e.g., DM21mu for Si) |
| Transition Metal Oxides | Incorrect metallic ground state | Often fails for strongly correlated systems | Limited improvement for strong correlation | Requires +U corrections for localized states |
| Dispersion/van der Waals | Poor description | Poor without corrections | Poor without corrections | Requires explicit non-local corrections |

Comparative Assessment: DFT vs. Post-Hartree-Fock Methods

Methodological Comparisons

The performance of DFT must be understood in relation to wavefunction-based quantum chemical methods, particularly post-Hartree-Fock approaches. Traditional Hartree-Fock (HF) theory includes exact exchange but completely neglects electron correlation, leading to systematic errors in bond energies and molecular properties [2]. Post-HF methods address this limitation by adding increasingly sophisticated treatments of electron correlation: MP2 (Møller-Plesset perturbation theory to second order), CCSD (Coupled Cluster with Single and Double excitations), CASSCF (Complete Active Space Self-Consistent Field), and others [2].

One study comparing HF and DFT for zwitterionic systems found that HF could outperform many DFT functionals in reproducing experimental dipole moments, with CCSD, CASSCF, CISD, and QCISD giving results very similar to HF [2]. This highlights the delocalization error in DFT: the excessive delocalization of electrons in many functionals leads to poor performance for systems with significant charge separation [2]. In such cases, HF's tendency to over-localize electrons proved less harmful than DFT's delocalization error for correctly describing structure-property correlations in zwitterionic systems [2].

For reaction energies and barrier heights, modern DFT functionals often provide reasonable accuracy at substantially lower computational cost than high-level wavefunction methods. However, multi-reference systems—where a single Slater determinant provides an inadequate description—remain challenging for conventional DFT approximations [2].

Performance in Specific Applications

Surface Chemistry and Catalysis: The MCML (multi-purpose, constrained, and machine-learned) meta-GGA functional has demonstrated excellent performance for surface chemistry applications, showing the lowest mean absolute error for chemisorption and physisorption binding energies on transition metal surfaces compared to other GGA and meta-GGA functionals [22]. Similarly, the MS-B86bl meta-GGA has shown remarkable accuracy in predicting D₂ sticking probabilities on Cu(111), in close agreement with experimental results [22].

Semiconductor Band Structures: Traditional semi-local functionals like PBE severely underestimate band gaps, a fundamental limitation for semiconductor applications [22]. The machine-learned DM21mu functional, which incorporates training from molecular quantum chemistry data while maintaining the homogeneous electron gas as a physical constraint, provides significantly improved band gaps for materials like silicon while reducing overall bandwidth [22].

Dispersion Interactions: For systems where van der Waals interactions are crucial, such as the interaction of graphene with nickel, the VCML-rVV10 functional (which optimizes both semi-local exchange and a non-local vdW kernel) shows excellent agreement with experimental estimates for both chemisorption minima and long-range vdW behavior [22]. Bayesian ensemble approaches applied to this functional allow quantification of uncertainty in computed predictions [22].

Computational Protocols and Research Tools

Essential Computational Methodologies

Software Packages: Multiple software packages implement DFT with various numerical approaches. FHI-aims employs numerical atomic orbitals and has been benchmarked on various processor architectures including A64FX and GRACE [25]. Other widely used packages include Gaussian, VASP, Quantum ESPRESSO, and CASTEP, each with particular strengths for molecular or periodic systems [2] [23].

Basis Sets: Two primary approaches exist for representing Kohn-Sham orbitals: plane-wave basis sets (common for periodic systems) and localized atomic-orbital basis sets (often preferred for molecular systems). The choice significantly impacts computational efficiency and convergence properties.

Core Computational Steps:

- Geometry Optimization: Finding the minimum energy structure through iterative updates of atomic positions until forces fall below a specified threshold.

- Electronic Structure Calculation: Self-consistent solution of the Kohn-Sham equations to obtain the ground-state density, energy, and related properties.

- Property Evaluation: Computation of derived properties including vibrational frequencies, NMR chemical shifts, and electronic excitation energies (the latter typically requiring time-dependent DFT).

Research Reagent Solutions: Computational Toolkit

Table 3: Essential computational tools for DFT research

| Tool Category | Specific Examples | Primary Function | Application Context |
|---|---|---|---|
| DFT Software Packages | FHI-aims, Gaussian, VASP, Quantum ESPRESSO | Solve Kohn-Sham equations numerically | Molecular and materials simulation |
| Wavefunction Analysis | Multiwfn, Bader Analysis | Analyze electron density distributions | Bonding analysis, charge transfer studies |
| Visualization Tools | VESTA, JMol, ChemCraft | Visualize molecular structures and orbitals | Results interpretation and presentation |
| Benchmark Databases | GMTKN55, S22, AE6 | Provide benchmark sets for method validation | Functional development and validation |
| High-Performance Computing | A64FX, GRACE processors | Enable computationally demanding simulations | Large systems and high-throughput studies |

Density Functional Theory, founded upon the rigorous Hohenberg-Kohn theorems and implemented through the practical Kohn-Sham scheme, provides a powerful framework for computational studies across chemistry, materials science, and drug development. The accuracy of DFT calculations remains intrinsically tied to the approximation used for the exchange-correlation functional, with different classes of functionals exhibiting characteristic strengths and limitations across various chemical systems.

The ongoing development of increasingly sophisticated functionals—particularly machine-learned approaches trained on high-quality reference data while incorporating physical constraints—promises to extend the accuracy and applicability of DFT. For researchers in drug development and materials design, careful selection of functional appropriate to the specific system and properties of interest remains essential for obtaining reliable computational predictions. As functional development continues and computational resources expand, DFT is positioned to maintain its central role in the computational elucidation of molecular structure and properties.

The pursuit of accurate predictions of molecular structure and properties is a central endeavor in computational chemistry, with profound implications for drug discovery and materials science. This pursuit is fundamentally constrained by the accuracy-speed trade-off, a computational scaling relationship that dictates the balance between predictive reliability and practical feasibility. Researchers must navigate this trade-off when selecting between two dominant families of electronic structure methods: density functional theory (DFT) and post-Hartree-Fock (post-HF) methods.

DFT methods achieve computational efficiency by utilizing the electron density as the fundamental variable, typically scaling more favorably with system size (often as O(N³)) compared to post-HF methods [26]. However, this speed comes with potential accuracy limitations due to the approximate nature of exchange-correlation functionals, which can lead to delocalization errors and poor performance for systems with strong correlation effects [2] [27]. In contrast, post-HF methods systematically approach the exact solution of the Schrödinger equation by accounting for electron correlation through more computationally demanding approaches, with scaling that can range from O(N⁵) for MP2 to O(N⁷) or higher for coupled-cluster methods like CCSD(T) [2] [17].

This guide objectively compares the performance of these methodological approaches, providing experimental data and protocols to inform method selection for research applications, particularly in pharmaceutical development where both accuracy and computational feasibility are critical concerns.

Theoretical Framework and Computational Scaling

The theoretical foundations of DFT and post-HF methods differ significantly, leading to their characteristic accuracy-speed trade-offs. DFT, grounded in the Hohenberg-Kohn theorems, determines ground-state properties from the electron density rather than the many-electron wavefunction [26]. The accuracy of DFT depends almost entirely on the approximation used for the exchange-correlation functional, with "Jacob's Ladder" representing a hierarchy of functional complexity and potential accuracy [26].

Post-HF methods, in contrast, start from the Hartree-Fock wavefunction and systematically improve upon it by adding electron correlation effects. These methods include Møller-Plesset perturbation theory (MP2, MP4), coupled-cluster (CCSD, CCSD(T)), configuration interaction (CISD), and multiconfigurational (CASSCF) approaches [2] [17]. The computational cost of these methods increases dramatically with system size and the level of theory, but they offer a more systematic path to high accuracy, particularly for challenging electronic structures.

Table: Computational Scaling of Electronic Structure Methods

| Method | Computational Scaling | Key Applications | System Size Limitations |
|---|---|---|---|
| HF | O(N⁴) | Small molecules, initial guesses | Medium (50-100 atoms) |
| DFT (GGA) | O(N³) | Geometry optimization, medium systems | Large (100-1000 atoms) |
| DFT (Hybrid) | O(N⁴) | Accurate energetics, reaction barriers | Medium-Large (50-500 atoms) |
| MP2 | O(N⁵) | Correlation energy, weak interactions | Small-Medium (10-100 atoms) |
| CCSD(T) | O(N⁷) | Benchmark accuracy, small systems | Very Small (5-20 atoms) |
| CASSCF | Exponential in active-space size | Multiconfigurational systems, active spaces | Small (5-50 atoms) |

Quantitative Performance Comparison

Accuracy Benchmarks for Molecular Properties

Comparative studies reveal significant differences in method performance across various molecular properties. For zwitterionic systems, HF has demonstrated surprising superiority over many DFT functionals in reproducing experimental dipole moments. In one study, HF achieved nearly exact agreement with the experimental dipole moment of 10.33 D for a pyridinium benzimidazolate zwitterion, while many DFT functionals showed substantial deviations [2].

Table: Accuracy Comparison for Molecular Properties

| Method | Dipole Moment Accuracy (Zwitterions) | Bond Length Error (Å) | Vibrational Frequency Error (%) | Dissociation Energy Error (kcal/mol) |
|---|---|---|---|---|
| HF | Excellent (matches 10.33 D experimental) | Variable | Variable | Often overestimated |
| B3LYP | Moderate deviation | ~0.01-0.02 | ~1-3 | ~2-5 |
| PBE0 | Moderate deviation | ~0.01-0.02 | ~1-3 | ~2-5 |
| CCSD(T) | High accuracy | ~0.005-0.015 | ~0.5-2 | ~0.5-2 |
| MP2 | Good accuracy | ~0.01-0.03 | ~1-4 | ~1-3 |

For monochalcogenide diatomic molecules, studies have shown that the popular B3LYP functional exhibits performance often close to CCSD(T) for certain properties, and sometimes even superior for dissociation energies and equilibrium bond lengths [17]. However, the performance depends significantly on the chemical system, with DFT generally performing better for main-group elements than for transition metals or systems with strong static correlation.

Timing and Computational Efficiency

The computational cost difference between methods becomes dramatic as system size increases. While a DFT calculation on a medium-sized organic molecule (20-30 atoms) might complete in hours on a standard workstation, a CCSD(T) calculation on the same system could require days or weeks on high-performance computing resources [17]. This efficiency gap widens substantially for larger systems relevant to drug discovery, such as protein-ligand complexes or supramolecular assemblies.

Experimental Protocols and Methodologies

Benchmarking Protocol for Method Validation

To ensure reliable comparisons between computational methods, researchers should implement standardized benchmarking protocols:

System Selection and Preparation

- Select diverse molecular systems with high-quality experimental reference data

- Include molecules with varying electronic challenges: zwitterions, transition states, systems with weak interactions

- Obtain or optimize initial geometries using medium-level theory (B3LYP/6-31G* or similar)

Computational Methodology

- Employ consistent, high-quality basis sets (def2-TZVP, cc-pVTZ, or similar)

- Perform geometry optimizations at each level of theory being compared

- Conduct frequency calculations to confirm minima (no imaginary frequencies) or transition states (one imaginary frequency)

- Apply consistent treatment of solvation effects if relevant (e.g., PCM, SMD models)
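The frequency check in the list above reduces to counting imaginary modes, which most programs (Gaussian included) print as negative wavenumbers. The sketch below assumes the input already contains only the 3N−6 (3N−5 for linear molecules) vibrational frequencies; the function name and the optional noise tolerance for tiny spurious imaginary modes are illustrative assumptions, not a standard threshold.

```python
def classify_stationary_point(frequencies_cm1, tolerance_cm1=0.0):
    """Classify a stationary point from its vibrational frequencies (cm^-1).

    Imaginary modes are assumed to be reported as negative numbers. Modes with
    -tolerance_cm1 <= f < 0 are treated as numerical noise (hypothetical knob).
    Returns (label, number_of_imaginary_modes).
    """
    n_imag = sum(1 for f in frequencies_cm1 if f < -tolerance_cm1)
    if n_imag == 0:
        return "minimum", n_imag
    if n_imag == 1:
        return "transition state", n_imag
    return "higher-order saddle point", n_imag


print(classify_stationary_point([-412.7, 180.3, 955.1]))  # one imaginary mode
```

A transition state found this way should still be checked by following the imaginary mode (for example with an IRC calculation) to confirm it connects the intended minima.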

Accuracy Assessment

- Compare computed values (structures, energies, properties) with experimental reference data

- Calculate statistical measures: mean absolute error, root mean square deviation, maximum error

- Assess computational cost: CPU time, memory requirements, disk usage
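The statistical measures in the accuracy assessment can be computed with a few lines of standard-library Python. This is a minimal sketch; the mean signed error is added alongside the listed measures because it exposes systematic over- or underestimation, and the sample numbers are placeholders rather than benchmark data.

```python
import math


def error_statistics(computed, reference):
    """Mean signed error, mean absolute error, RMSD and maximum absolute error."""
    if len(computed) != len(reference) or not computed:
        raise ValueError("need two non-empty sequences of equal length")
    errors = [c - r for c, r in zip(computed, reference)]  # signed: computed - reference
    n = len(errors)
    return {
        "MSE": sum(errors) / n,                      # systematic bias
        "MAE": sum(abs(e) for e in errors) / n,      # typical error size
        "RMSD": math.sqrt(sum(e * e for e in errors) / n),  # penalizes outliers more
        "MaxAE": max(abs(e) for e in errors),        # worst case
    }


# Placeholder values (e.g., dipole moments in D) purely for illustration
print(error_statistics([1.0, 2.5, 3.0], [1.2, 2.0, 3.1]))
```

Reporting the maximum error next to the MAE matters in practice: a method with a small MAE can still fail badly on exactly the difficult cases (zwitterions, transition states) that the protocol deliberately includes.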

Case Study: Zwitterionic Systems Protocol

The study demonstrating HF superiority for zwitterions employed this methodology [2]:

Systems: Pyridinium benzimidazolates synthesized by Boyd (1966) and characterized by Alcalde et al. (1987)

Methods Compared: HF, multiple DFT functionals (B3LYP, CAM-B3LYP, BMK, B3PW91, TPSSh, LC-ωPBE, M06-2X, M06-HF, ωB97X-D), post-HF methods (MP2, CASSCF, CCSD, QCISD, CISD), and semi-empirical methods

Computational Details:

- Software: Gaussian 09

- Geometry optimization: No symmetry restrictions

- Property calculation: Dipole moments, structural parameters

- Validation: Comparison with experimental crystal structures and dipole measurements
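The study's actual input files are not reproduced here. As a hypothetical sketch of how such a comparison could be set up consistently, the helper below writes a Gaussian-style optimization input for any method/basis string with the `NoSymm` keyword (matching the "no symmetry restrictions" setting). The function name, default resources, and the `extra_keywords` parameter are assumptions; correlated dipole moments (e.g., MP2, CCSD) are usually requested with an additional keyword such as `Density=Current`, which should be verified against the Gaussian manual.

```python
def gaussian_input(title, method_basis, charge, multiplicity, atoms,
                   nprocs=4, memory="8GB", extra_keywords=""):
    """Return the text of a Gaussian-style geometry-optimization input.

    atoms: list of (element_symbol, (x, y, z)) tuples, coordinates in Å.
    method_basis: e.g. "HF/cc-pVTZ" or "B3LYP/6-31G(d)".
    """
    route = f"#P {method_basis} Opt NoSymm {extra_keywords}".rstrip()
    lines = [
        f"%NProcShared={nprocs}",  # Link 0: number of shared-memory cores
        f"%Mem={memory}",          # Link 0: memory limit
        route,                     # NoSymm: do not use molecular symmetry
        "",
        title,
        "",
        f"{charge} {multiplicity}",
    ]
    for symbol, (x, y, z) in atoms:
        lines.append(f"{symbol:<2} {x:12.6f} {y:12.6f} {z:12.6f}")
    lines.append("")  # blank line terminating the molecule specification
    return "\n".join(lines) + "\n"


water = [("O", (0.0, 0.0, 0.0)), ("H", (0.0, 0.757, 0.587)), ("H", (0.0, -0.757, 0.587))]
for method in ["HF/cc-pVTZ", "B3LYP/cc-pVTZ"]:
    print(gaussian_input(f"water {method}", method, 0, 1, water))
```

Generating every input from one geometry and one template keeps the basis set, charge, and symmetry settings identical across methods, so differences in the results reflect the methods themselves.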

Key Finding: HF reproduced the experimental dipole moments of zwitterions more accurately than most DFT functionals, with post-HF methods (CCSD, CASSCF, CISD, QCISD) confirming HF's reliability for these systems [2]

Diagram: Method Benchmarking Workflow for evaluating computational chemistry approaches.

Successful computational chemistry research requires both theoretical knowledge and practical resources. The table below outlines essential components for research on the accuracy-speed trade-off.

Table: Essential Computational Research Resources

| Resource Category | Specific Tools | Function/Purpose | Key Considerations |
|---|---|---|---|
| Quantum Chemistry Software | Gaussian 09, GAMESS, ORCA, Q-Chem, NWChem | Perform electronic structure calculations | License cost, parallel efficiency, feature set |
| Basis Sets | Pople-style (6-31G*), Dunning (cc-pVXZ), Karlsruhe (def2) | Functions used to expand molecular orbitals | Balance between accuracy and computational cost |
| DFT Functionals | B3LYP, PBE0, ωB97X-D, M06-2X, TPSSh | Approximate the exchange-correlation energy | Functional choice depends on the chemical system |
| Post-HF Methods | MP2, CCSD(T), CASSCF, QCISD | High-accuracy treatment of electron correlation | Computational scaling limits the feasible system size |
| Computational Hardware | Multi-core CPUs, high-memory nodes, GPU accelerators | Provide computational power for calculations | Balance among CPU speed, memory, and storage |
| Visualization & Analysis | GaussView, Avogadro, VMD, Jupyter notebooks | Molecular visualization and data analysis | Interpretation of computational results |

Implications for Drug Development and Materials Design

The accuracy-speed trade-off has direct practical implications for computational drug development. While high-level post-HF methods like CCSD(T) often provide benchmark accuracy for binding energies and reaction barriers, their computational cost precludes application to most drug-sized molecules [17]. Instead, pharmaceutical researchers typically employ a multi-level strategy:

Virtual Screening: DFT or even faster semi-empirical methods screen large compound libraries to identify promising candidates based on geometric and electronic properties.

Lead Optimization: Higher-accuracy methods (hybrid DFT, double-hybrid DFT, or MP2) refine predictions for selected compounds, providing more reliable binding affinities and reaction profiles.

Benchmarking: Selectively applied high-level calculations validate the performance of more efficient methods for specific molecular classes relevant to the drug discovery program.
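The first two tiers of this strategy form a funnel: a cheap score ranks the whole library, and only the survivors receive the more expensive treatment. The sketch below shows that control flow only; the scoring callables stand in for, say, a semi-empirical and a DFT-level estimate and are hypothetical, as is the convention that a lower score is better. The benchmarking tier is not a filter and is therefore not modeled.

```python
from typing import Callable, Hashable, Iterable, List, Sequence, Tuple

# (stage name, scoring function, number of candidates to keep)
Stage = Tuple[str, Callable[[Hashable], float], int]


def multilevel_funnel(candidates: Iterable[Hashable], stages: Sequence[Stage]):
    """Rank candidates stage by stage, keeping only the best at each level.

    Lower scores are assumed to be better (e.g., a more negative binding energy).
    Returns the final pool and a history of (stage, n_scored, n_kept).
    """
    pool: List[Hashable] = list(candidates)
    history = []
    for name, score, keep in stages:
        ranked = sorted(pool, key=score)  # each stage only scores the survivors
        history.append((name, len(ranked), min(keep, len(ranked))))
        pool = ranked[:keep]
    return pool, history


# Hypothetical precomputed scores standing in for cheap and refined calculations
cheap = {"A": 3.0, "B": 1.0, "C": 2.0, "D": 4.0}
refined = {"A": 0.0, "B": 2.0, "C": 1.0, "D": 0.0}
print(multilevel_funnel("ABCD", [("screen", cheap.get, 3), ("refine", refined.get, 1)]))
```

Note that candidate D, which the refined score would rank highly, is lost at the screening stage; validating the cheap method on the relevant molecular class (the benchmarking tier) is what keeps such losses acceptable.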

For materials design, where system sizes are typically larger, DFT remains the workhorse method, though careful functional selection is crucial. Systems with significant electron correlation, such as transition metal complexes or radical species, may require more advanced methods like CASSCF or DMRG even for qualitative accuracy [2] [27].

Diagram: Multi-level Computational Strategy for drug discovery that balances speed and accuracy.

The accuracy-speed trade-off presents both a challenge and an opportunity for computational chemistry. No single method universally outperforms others across all chemical systems and properties. Instead, researchers must make informed choices based on:

Chemical System Complexity: Simple organic molecules may be treated adequately with DFT, while multiconfigurational systems require post-HF approaches [2].

Target Properties: Energetic properties often demand higher-level theory than structural parameters [17].

Available Resources: Computational budget and time constraints frequently dictate methodological choices.

Error Cancellation: Sometimes, lower-level methods benefit from fortuitous error cancellation for specific applications [27].

Emerging approaches like density-corrected DFT (DC-DFT) and range-separated hybrids attempt to bridge the gap between these methodological families [27]. Additionally, the development of more efficient implementations and the increasing availability of computational resources continue to shift the balance, making higher-accuracy methods applicable to larger, more chemically relevant systems.

For drug development professionals, the most effective strategy involves understanding the limitations of chosen computational methods and implementing validation protocols specific to their molecular classes of interest. By carefully considering the accuracy-speed trade-off, researchers can maximize predictive reliability while working within practical computational constraints.

Practical Applications: Where DFT and Post-HF Methods Excel in Research

The development of new pharmaceutical formulations has traditionally relied on empirical trial-and-error approaches, a process that is both time-consuming and resource-intensive. This paradigm is shifting rapidly with the adoption of computational pharmaceutics, particularly Density Functional Theory (DFT), which enables design at the molecular level by elucidating the electronic nature of molecular interactions [28]. DFT addresses a critical challenge in modern drug development: more than 60% of formulation failures for Biopharmaceutics Classification System (BCS) class II/IV drugs are attributed to unforeseen molecular interactions between active pharmaceutical ingredients (APIs) and excipients [28]. By solving the Kohn-Sham equations, for which ref. [28] cites an accuracy of approximately 0.1 kcal/mol, DFT reconstructs molecular orbital interactions and provides detailed insight into drug-excipient composite systems [28]. This review examines the pivotal role of DFT in modeling molecular interactions and ensuring formulation stability, contextualizing its performance within the broader landscape of post-Hartree-Fock quantum chemical methods.

Theoretical Foundations: DFT in Context

Fundamental Principles of DFT

DFT is a computational method based on the principles of quantum mechanics that describes the properties of multi-electron systems through the electron density rather than the wavefunction. Its theoretical framework rests on two cornerstones: the Hohenberg-Kohn theorems, which establish that the ground-state properties of a system are uniquely determined by its electron density, and the Kohn-Sham formalism, which replaces the interacting many-electron problem with a more tractable set of single-electron equations for a fictitious non-interacting system that reproduces the same density [28] [1]. The accuracy of DFT depends critically on the choice of exchange-correlation functional, which approximates the quantum mechanical exchange and correlation effects not captured by the classical Coulomb (Hartree) term [28].

DFT typically employs the self-consistent field (SCF) method, which iteratively optimizes the Kohn-Sham orbitals until convergence is achieved, yielding ground-state electronic structure parameters including molecular orbital energies, geometric configurations, vibrational frequencies, and dipole moments [28]. These parameters provide essential support for analyzing structure-activity relationships in drug molecules.
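The self-consistent loop described above (build an effective one-electron matrix from the current density, diagonalize, occupy the lowest orbitals, rebuild the density, repeat) can be illustrated on a toy model. The sketch below is explicitly not Kohn-Sham DFT: it applies a closed-shell mean-field treatment to a hypothetical Hubbard-like lattice, where the "density" is just the site occupations and the on-site repulsion `U`, the damping factor, and the tolerance are illustrative choices. Only the structure of the iteration carries over to real SCF codes.

```python
import numpy as np


def toy_scf(h_core, n_electrons, U=2.0, damping=0.5, tol=1e-10, max_iter=500):
    """Closed-shell mean-field SCF for a toy Hubbard-like lattice (not Kohn-Sham DFT).

    h_core: symmetric one-electron matrix (site energies on the diagonal, hoppings off it).
    Returns orbital energies, the converged density matrix and the iteration count.
    """
    n_sites = h_core.shape[0]
    n_occ = n_electrons // 2  # closed shell: each spatial orbital holds two electrons
    occ = np.full(n_sites, n_electrons / n_sites)  # initial guess: uniform site occupations
    for iteration in range(1, max_iter + 1):
        # Effective one-electron matrix: each site is shifted by U times the opposite-spin density
        fock = h_core + np.diag(U * occ / 2.0)
        energies, orbitals = np.linalg.eigh(fock)  # eigenvalues in ascending order
        c_occ = orbitals[:, :n_occ]
        density = 2.0 * c_occ @ c_occ.T  # doubly occupy the lowest n_occ orbitals
        new_occ = np.diag(density).copy()
        if np.max(np.abs(new_occ - occ)) < tol:  # input and output densities agree
            return energies, density, iteration
        # Damped (linear) mixing stabilizes the fixed-point iteration
        occ = damping * occ + (1.0 - damping) * new_occ
    raise RuntimeError("SCF did not converge within max_iter iterations")


# Four-site chain with alternating site energies (hypothetical parameters)
h = np.array([[0.0, -1.0, 0.0, 0.0],
              [-1.0, 0.5, -1.0, 0.0],
              [0.0, -1.0, 0.0, -1.0],
              [0.0, 0.0, -1.0, 0.5]])
energies, density, n_iter = toy_scf(h, 4)
print(n_iter, np.round(np.diag(density), 4))
```

Production codes replace simple damping with more sophisticated convergence accelerators such as DIIS, but the convergence criterion is the same idea: the density that goes in must match the density that comes out.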

DFT Versus Post-Hartree-Fock Methodologies

The fundamental distinction between DFT and Hartree-Fock (HF) methodologies lies in their approach to electron correlation. HF approximates the wavefunction as a single Slater determinant and neglects Coulomb correlation (it includes only the exchange, or Fermi, correlation between same-spin electrons); by the variational principle, its energy therefore always lies above the exact energy of the system [1]. In contrast, DFT reformulates the many-body problem in terms of the electron density and, through the Hohenberg-Kohn theorems, obtains ground-state properties from that density; its exchange-correlation functional captures, approximately, correlation effects that HF omits [1].

While post-Hartree-Fock methods (such as MP2, CCSD, and CASSCF) generally provide more accurate results by systematically accounting for electron correlation, they remain computationally expensive and often prohibitive for medium to large systems [2]. This computational constraint has made DFT the predominant method for pharmaceutical applications involving drug-sized molecules, although HF occasionally performs better for specific systems such as zwitterions, where its tendency toward charge localization proves advantageous relative to the delocalization error of approximate density functionals [2].

Table 1: Comparison of Quantum Chemical Methods in Drug Design Applications

| Method | Theoretical Basis | Electron Correlation | Formal Computational Cost (N = system size) | Typical Applications in Drug Design |
|---|---|---|---|---|
| Hartree-Fock | Wavefunction (single determinant) | None (exchange only) | O(N⁴) | Initial geometry optimization; systems with strong localization [2] |
| DFT | Electron density | Approximate, via exchange-correlation functionals | O(N³) to O(N⁴) | Most drug design applications; geometry, electronic properties [28] |
| MP2 | Wavefunction (perturbation theory) | Approximate (second order) | O(N⁵) | Small drug molecules; interaction energies [2] |
| CCSD | Wavefunction (coupled cluster) | High accuracy | O(N⁶) | Benchmark calculations for small systems [2] |
| CASSCF | Wavefunction (multiconfigurational) | Static correlation within a chosen active space | Very high (exponential in active-space size) | Complex electronic structures; excited states [2] |
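The formal scaling exponents in the table translate directly into how quickly cost grows with system size: if cost ∝ N^p, multiplying N by a factor s multiplies the cost by s^p. The sketch below uses the table's exponents plus the well-known N⁷ formal scaling of CCSD(T); it ignores prefactors and the reduced scaling of local-correlation or density-fitting implementations, so it describes trends rather than wall-clock times.

```python
# Formal scaling exponents p in cost ~ N**p (prefactors and local approximations ignored)
FORMAL_SCALING = {
    "DFT, lower bound": 3,
    "HF": 4,
    "DFT, upper bound": 4,
    "MP2": 5,
    "CCSD": 6,
    "CCSD(T)": 7,
}


def relative_cost(exponent: int, size_ratio: float) -> float:
    """Factor by which formal cost grows when system size is multiplied by size_ratio."""
    return size_ratio ** exponent


for method, p in FORMAL_SCALING.items():
    print(f"{method:<18} x{relative_cost(p, 2.0):>5.0f} when N doubles")
```

Doubling the system multiplies the formal cost of a hybrid DFT calculation by 16 but that of CCSD(T) by 128, so the gap between the two widens by a further factor of 8 with every doubling, which is why the accuracy-speed trade-off becomes decisive for drug-sized molecules.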

DFT Applications in Drug Formulation Design

Solid Dosage Forms and Co-Crystal Engineering

In solid dosage forms, DFT has proven invaluable for elucidating the electronic driving forces governing API-excipient co-crystallization. By leveraging Fukui functions to predict reactive sites, DFT guides stability-oriented co-crystal design through systematic analysis of molecular electrostatic potential (MEP) maps and average local ionization energy (ALIE) [28]. These approaches identify electron-rich (nucleophilic) and electron-deficient (electrophilic) regions critical for predicting drug-target binding sites [28]. The ability to model these interactions at quantum mechanical precision has substantially reduced experimental validation cycles in preformulation studies.
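A common way to turn Fukui functions into per-atom reactivity indices is the condensed, finite-difference form: compute atomic charges (from some population analysis) for the N−1, N, and N+1 electron systems at the neutral geometry and take differences. The sketch below implements that finite-difference recipe; the choice of charge scheme is left to the user, the function name is hypothetical, and the charges in the example are made-up numbers, not results for any real molecule.

```python
def condensed_fukui(q_cation, q_neutral, q_anion):
    """Condensed Fukui indices from atomic charges (finite difference, frozen geometry).

    q_cation: charges of the N-1 electron system; q_neutral: N; q_anion: N+1.
    With charges q = Z - population:
      f+ = q(N) - q(N+1)   (susceptibility to nucleophilic attack)
      f- = q(N-1) - q(N)   (susceptibility to electrophilic attack)
      f0 = (f+ + f-) / 2   (radical attack)
    """
    if not (len(q_cation) == len(q_neutral) == len(q_anion)):
        raise ValueError("charge lists must have one entry per atom")
    f_plus = [qn - qa for qn, qa in zip(q_neutral, q_anion)]
    f_minus = [qc - qn for qc, qn in zip(q_cation, q_neutral)]
    f_zero = [(fp + fm) / 2.0 for fp, fm in zip(f_plus, f_minus)]
    return {"f_plus": f_plus, "f_minus": f_minus, "f_zero": f_zero}


# Made-up charges for a three-atom fragment, for illustration only
indices = condensed_fukui([0.5, 0.3, 0.2], [0.1, -0.2, 0.1], [-0.3, -0.5, -0.2])
print(indices)
```

Because one electron is added or removed in total, each set of indices sums to one over the atoms, which is a useful sanity check on the charges fed in.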

Nanodelivery Systems Optimization

For nanodelivery systems, DFT enables the calculation of van der Waals interactions and π-π stacking energies (provided dispersion-corrected functionals are used, since common functionals such as B3LYP do not capture dispersion on their own), facilitating the engineering of carriers with tailored surface charge distributions [28]. These calculations help optimize targeting efficiency by predicting the interaction strength between drug molecules and nanocarriers. In a recent study of benzodiazepines with 2-hydroxypropyl-β-cyclodextrin (2HPβCD), DFT calculations complemented molecular dynamics simulations by providing insights into electronic interactions that enhance drug delivery efficiency [29]. The research found negative binding free energies for all studied drugs, indicating thermodynamically favorable complexation and improved solubility [29].
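Why a negative binding free energy signals favorable complexation follows from the relation K = exp(−ΔG°/RT): any ΔG° below zero gives an association constant above one. The sketch below assumes the computed value can be treated as a standard binding free energy, which is a simplification for free energies estimated from simulations; the function name is hypothetical.

```python
import math

R_KCAL = 1.987204e-3  # gas constant in kcal/(mol·K)


def association_constant(delta_g_kcal, temperature_k=298.15):
    """K = exp(-ΔG°/RT); ΔG° < 0 gives K > 1 (complex favored at the standard state)."""
    return math.exp(-delta_g_kcal / (R_KCAL * temperature_k))


for dg in (-1.0, -3.0, -5.0):
    print(f"ΔG° = {dg:5.1f} kcal/mol -> K ≈ {association_constant(dg):.3g}")
```

The exponential dependence also explains the demand for accuracy: at room temperature an error of about 1.4 kcal/mol in ΔG° changes the predicted association constant by roughly a factor of ten.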

Solvation Modeling and Release Kinetics