Beyond Single-Reference Models: Advanced Quantum Chemistry Methods for Strongly Correlated Systems

Abstract

Strong electron correlation presents a significant challenge in quantum chemistry, rendering standard density functional theory (DFT) and single-reference wavefunction methods inadequate for systems like open-shell transition metal complexes, diradicals, and bond-breaking processes. This article provides a comprehensive overview for researchers and drug development professionals, exploring the foundational principles of strong correlation and its implications in biochemical systems. It details state-of-the-art multireference and local correlation methods, alongside emerging quantum computing approaches, that offer accurate solutions. The content further delivers practical guidance on method selection, troubleshooting, and optimization, and concludes with a comparative analysis of modern methods, highlighting their validation, performance, and growing role in enabling predictive simulations for drug discovery and materials science.

The Strong Correlation Problem: From Quantum Mysteries to Real-World Materials

In quantum chemistry, the electron correlation problem represents a fundamental challenge in accurately describing the behavior of many-electron systems. Electron correlation is formally defined as the energy difference between the exact, non-relativistic solution of the Schrödinger equation and the Hartree-Fock (HF) approximation: E_corr = E_exact − E_HF [1]. While HF theory recovers approximately 99% of the total energy of a system using a mean-field approach in which each electron experiences an average potential, the missing 1% of correlation energy is chemically significant, often corresponding to the energy scales of chemical reactions and bonding [1].
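To make the size of E_corr concrete, the short Python sketch below uses two well-established literature values for the helium atom: the Hartree-Fock limit (about −2.86168 Ha) and the exact non-relativistic energy (about −2.90372 Ha). These are standard reference numbers, not data taken from [1].

```python
# Correlation energy of the helium atom from literature reference values (hartree).
HARTREE_TO_KCAL = 627.509  # same conversion factor used later in this document

E_HF_LIMIT = -2.86168   # Hartree-Fock limit energy of He
E_EXACT = -2.90372      # exact non-relativistic energy of He


def correlation_energy(e_exact: float, e_hf: float) -> float:
    """E_corr = E_exact - E_HF; negative because correlation lowers the energy."""
    return e_exact - e_hf


e_corr = correlation_energy(E_EXACT, E_HF_LIMIT)
fraction = abs(e_corr / E_EXACT)

print(f"E_corr = {e_corr:.5f} Ha = {e_corr * HARTREE_TO_KCAL:.1f} kcal/mol")
print(f"fraction of total energy = {100 * fraction:.2f} %")
```

The result, roughly −0.042 Ha, is only about 1.4% of the total energy, yet it amounts to about 26 kcal/mol, the same order as the energy differences that decide chemical reactivity.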

The distinction between weak and strong electron correlation is primarily determined by the adequacy of single-reference wavefunctions. Strong electron correlation manifests when a single Slater determinant provides a qualitatively incorrect description of the electronic structure, necessitating a multi-reference approach. This occurs in numerous chemically important scenarios including bond dissociation, transition metal complexes, open-shell systems, and conjugated molecular chains [2] [1]. In restricted Hartree-Fock (RHF), this limitation becomes apparent during bond breaking, where the wavefunction dissociates to an incorrect limit, highlighting the critical need for methods that capture strong correlation effects [1].

Quantitative Assessment of Correlation Methods

Theoretical Scaling of Computational Methods

Table 1: Computational scaling and application scope of electronic structure methods

| Method | Computational Scaling | Strength in Electron Correlation | Typical Applications |
|---|---|---|---|
| Hartree-Fock (HF) | N⁴ | None (mean-field) | Reference wavefunction; starting point for correlated methods [2] |
| Density Functional Theory (DFT) | N³ to N⁴ | Weak to moderate (depending on functional) | Ground state properties of medium-sized molecules [2] [1] |
| Møller-Plesset Perturbation (MP2) | N⁵ | Weak to moderate | Initial correlation energy estimates; larger systems [2] |
| Coupled Cluster (CCSD) | N⁶ | High for dynamic correlation; breaks down for strong (multireference) correlation | Accurate thermochemistry; single-reference systems [2] |
| Configuration Interaction (CISD) | N⁶ | Moderate (not size-consistent) | Small molecules; multireference variants (MRCI) with small active spaces [2] |
| Full CI | Factorial | Exact (within basis set) | Benchmark calculations; small molecules [2] |
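The scaling column can be made concrete with a small illustrative sketch: a polynomial O(N^p) method becomes 2^p times more expensive when the system size doubles, whereas the number of determinants in full CI, counted here as C(n, n_α)·C(n, n_β) without using spatial symmetry, grows combinatorially with the number of orbitals n.

```python
from math import comb


def fci_determinants(n_orbitals: int, n_alpha: int, n_beta: int) -> int:
    """Number of Slater determinants in a full CI expansion (no symmetry used)."""
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


def cost_ratio(exponent: int, size_factor: float) -> float:
    """Relative cost increase of an O(N^exponent) method when N grows by size_factor."""
    return size_factor ** exponent


# Doubling the system size for the polynomial scalings listed in Table 1.
for name, p in [("HF", 4), ("MP2", 5), ("CCSD", 6)]:
    print(f"{name:5s} x{cost_ratio(p, 2.0):.0f}")

# Full CI determinant counts at half filling (n_alpha = n_beta = n / 2).
for n in (4, 8, 16, 24):
    print(n, "orbitals:", fci_determinants(n, n // 2, n // 2))
```

At 16 spatial orbitals with 8 α and 8 β electrons the expansion already holds about 1.7 × 10⁸ determinants, which is why full CI is limited to benchmarks and why active-space methods restrict the number of correlated orbitals.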

Correlation Energy Magnitudes

Table 2: Correlation energy contributions for two-electron systems

| System | Hartree-Fock Energy (E_HF) | Exact Energy (E_exact) | Correlation Energy (E_corr) | Remarks |
|---|---|---|---|---|
| Helium-like ions (Z=2-18) | Varies with Z | Varies with Z | ~1% of total energy | Accuracy improves with HF basis [1] |
| Critical nuclear charge (Z_c) | Z_c^HF ≈ 1.031 | Z_c^exact ≈ 0.911 | Stabilization of anion | Correlation essential for anion stability [1] |

The data in Table 2 illustrate that while correlation energy constitutes a small percentage of the total energy, its contribution is chemically significant. This is particularly evident in the case of the critical nuclear charge (Z_c), where electron correlation stabilizes systems that would otherwise be unbound at the HF level [1]. The hydride ion H⁻ (Z = 1) is a concrete example: because 0.911 < 1 < 1.031, it is bound in the exact treatment but unbound at the HF level.

Computational Protocols for Strong Correlation

Protocol 1: Ab Initio Downfolding for Correlated Materials

Purpose: To derive accurate, material-specific many-body Hamiltonians for strongly-correlated systems while maintaining computational tractability [3].

Principle: This technique combines density functional theory with quantum many-body methods to create effective Hamiltonians that capture essential correlation effects in a reduced orbital space [3].

Procedure:

- Initial DFT Calculation: Perform a full density functional theory calculation of the target material's electronic structure using appropriate exchange-correlation functionals [3].

- Active Space Selection: Identify the correlated subspaces (e.g., d-orbitals in transition metals, f-orbitals in lanthanides) most relevant to the strongly-correlated behavior [3].

- Hamiltonian Downfolding: Derive a many-body Hamiltonian (e.g., Hubbard-type model) through systematic downfolding procedures that integrate out high-energy degrees of freedom while preserving the low-energy physics [3].

- Quantum Solver Implementation: Apply variational quantum eigensolvers or classical tensor network methods to solve the downfolded Hamiltonian and obtain ground state properties [3].

- Property Calculation: Compute observable properties including spectral functions, charge orders, and magnetic correlations from the obtained wavefunctions [3].
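To illustrate step 4 on the smallest possible case, the sketch below solves a two-site Hubbard model by exact diagonalization with NumPy. It is an illustrative stand-in under stated assumptions: the hopping t and on-site repulsion U are free parameters rather than values downfolded from any material, and dense exact diagonalization replaces the VQE or tensor-network solver, which only becomes necessary for larger orbital spaces.

```python
import numpy as np


def two_site_hubbard_sz0(t: float, U: float) -> np.ndarray:
    """Hamiltonian of the half-filled two-site Hubbard model in the S_z = 0 sector.

    Basis order: |up, dn>, |dn, up>, |updn, 0>, |0, updn>. The hopping signs follow
    one consistent fermionic ordering convention; the spectrum does not depend on it.
    """
    return np.array([
        [0.0, 0.0, -t, -t],
        [0.0, 0.0, t, t],
        [-t, t, U, 0.0],
        [-t, t, 0.0, U],
    ])


def ground_state_energy(t: float, U: float) -> float:
    # Exact diagonalization: eigvalsh returns eigenvalues in ascending order.
    return float(np.linalg.eigvalsh(two_site_hubbard_sz0(t, U))[0])


for U in (0.0, 4.0, 20.0):
    closed_form = 0.5 * (U - np.sqrt(U**2 + 16.0))  # analytic ground state for t = 1
    print(f"U/t = {U:4.1f}  E0 = {ground_state_energy(1.0, U):.6f}  "
          f"closed form = {closed_form:.6f}")
```

For U ≫ t the ground-state energy approaches −4t²/U, the energy scale of antiferromagnetic superexchange between the two sites.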

Validation: Compare predicted states with experimental observations such as antiferromagnetic behavior in one-dimensional cuprates or excitonic ground states in monolayer WTe₂ [3].

Protocol 2: Machine Learning for Molecular Wave Functions

Purpose: To accurately and transferably compute electronic energies and geometries by learning complex molecular wave functions across diverse molecular sizes and compositions [4].

Principle: Machine learning models can approximate the high-dimensional mapping from molecular structure to electronic wave functions, aiming to avoid the exponential cost of exact (full CI-type) treatments [4].

Procedure:

- Training Set Construction: Generate a diverse set of molecular structures and their corresponding high-level quantum chemistry references (e.g., from CCSD(T) or quantum Monte Carlo) [4].

- Feature Engineering: Develop molecular descriptors that uniquely represent atomic configurations while maintaining invariance to symmetry operations [4].

- Model Architecture Selection: Implement neural network architectures capable of representing complex wave functions, such as FermiNet or PauliNet [4].

- Wave Function Learning: Train models to predict electronic energies and wave functions, either variationally (minimizing the energy or its variance in variational Monte Carlo, as FermiNet and PauliNet do) or by maximizing the overlap with reference data [4].

- Transferability Assessment: Validate model performance on molecular systems not included in the training set, particularly for bond dissociation and formation processes [4].
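Neural-network ansätze of the kind named above are optimized with variational Monte Carlo (VMC). The sketch below runs the same variational loop on a deliberately tiny case: a one-parameter trial function ψ = exp(−αr) for the hydrogen atom replaces the neural network (a swapped-in toy, not either architecture). It shows the two properties the training step relies on: the sampled energy is an upper bound to the exact energy, and at the exact wavefunction the local-energy variance vanishes (the zero-variance principle).

```python
import numpy as np


def local_energy(r, alpha):
    """Local energy H psi / psi for hydrogen with psi = exp(-alpha r), in hartree."""
    return -0.5 * alpha**2 + (alpha - 1.0) / r


def vmc(alpha, n_samples=20000, n_burn=2000, step=1.0, seed=0):
    """Metropolis sampling of |psi|^2; returns mean and variance of the local energy."""
    rng = np.random.default_rng(seed)
    pos = np.array([0.5, 0.5, 0.5])
    r = np.linalg.norm(pos)
    energies = []
    for i in range(n_burn + n_samples):
        trial = pos + step * rng.uniform(-1.0, 1.0, size=3)
        r_trial = np.linalg.norm(trial)
        # Acceptance ratio |psi(trial)|^2 / |psi(pos)|^2 = exp(-2 alpha (r_trial - r)).
        if rng.random() < np.exp(-2.0 * alpha * (r_trial - r)):
            pos, r = trial, r_trial
        if i >= n_burn:  # discard the burn-in part of the random walk
            energies.append(local_energy(r, alpha))
    energies = np.array(energies)
    return energies.mean(), energies.var()


for alpha in (0.7, 0.85, 1.0, 1.15):
    e, var = vmc(alpha)
    print(f"alpha = {alpha:.2f}  E = {e:.4f} Ha  variance = {var:.2e}")
```

For α ≠ 1 the sampled energies scatter around the analytic value α²/2 − α, which lies above the exact −0.5 Ha; at α = 1 every local energy equals −0.5 Ha, so the variance is exactly zero.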

Applications: This protocol is particularly valuable for studying chemical reactions involving bond dissociation and formation, critical for understanding catalysis and chemical transformations [4].

Protocol 3: Configuration Interaction for Strong Correlation

Purpose: To systematically improve beyond the mean-field approximation by incorporating multiple electronic configurations [2] [1].

Principle: The wavefunction is constructed as a linear combination of Slater determinants representing both the ground and excited electron configurations, allowing explicit treatment of electron correlation [2].

Procedure:

- Reference Wavefunction: Obtain a Hartree-Fock reference wavefunction as the starting point [2].

- Excitation Generation: Create singly, doubly, triply, etc. excited determinants by promoting electrons from occupied to virtual orbitals [2].

- Hamiltonian Diagonalization: Construct and diagonalize the Hamiltonian matrix in the basis of these determinants to obtain correlated wavefunctions and energies [2].

- Truncation Scheme Selection: Implement appropriate truncation such as CISD (single and double excitations) or CASSCF (complete active space) based on system size and correlation strength [2].

- Property Evaluation: Compute expectation values of operators using the correlated wavefunction to obtain improved molecular properties [2].
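The expansion and diagonalization steps can be illustrated with the smallest multireference case: a reference determinant coupled to one doubly excited determinant, as in minimal-basis H₂. The matrix elements below are hypothetical numbers chosen only to show the trend, not computed integrals.

```python
import numpy as np


def two_determinant_ci(e_ref, e_double, coupling):
    """Diagonalize a 2x2 CI matrix between a reference and one doubly excited determinant.

    Returns the lowest eigenvalue and the weights (squared coefficients) of both determinants.
    """
    h = np.array([[e_ref, coupling],
                  [coupling, e_double]])
    energies, vectors = np.linalg.eigh(h)  # eigenvalues in ascending order
    ground = vectors[:, 0]
    return energies[0], ground**2


# Hypothetical matrix elements (hartree): the gap between the two determinants
# shrinks as a bond is stretched, while the coupling is held fixed for simplicity.
for gap in (1.0, 0.3, 0.0):
    e0, weights = two_determinant_ci(0.0, gap, 0.1)
    print(f"gap = {gap:.1f}  E0 = {e0:+.4f}  weights = {np.round(weights, 3)}")
```

With a large gap the reference dominates and the energy lowering is small (weak correlation); at degeneracy both determinants carry a weight of 0.5, the situation in which any single-determinant description is qualitatively wrong.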

Limitations: Full CI scales exponentially (factorially) with system size, and truncated expansions such as CISD are not size-consistent; selected CI approaches and active space methods can extend the applicability of CI [2].

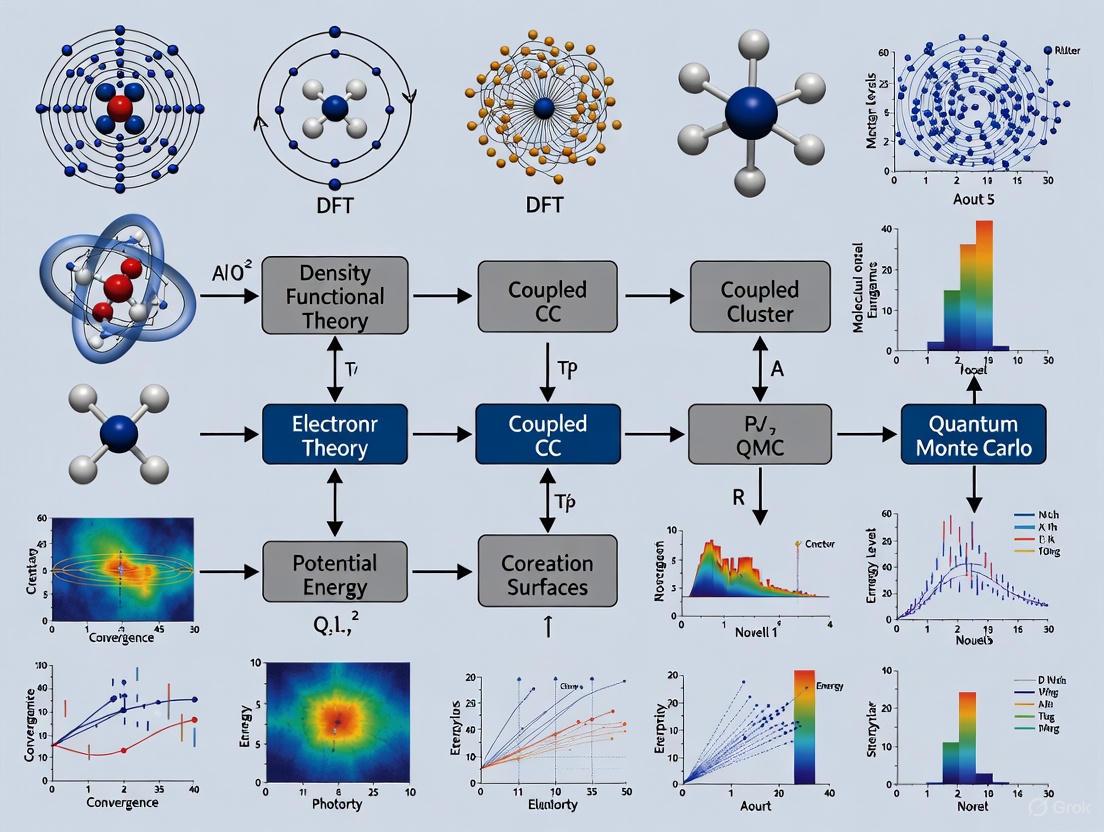

Visualization of Methodologies

Quantum Chemistry Method Decision Pathway

The Scientist's Toolkit

Research Reagent Solutions for Strong Correlation Studies

Table 3: Essential computational tools for strong electron correlation research

| Tool/Resource | Category | Function | Application Context |
|---|---|---|---|
| Variational Quantum Eigensolver (VQE) | Quantum Algorithm | Finds ground states of quantum systems using hybrid quantum-classical approach [3] | Solving downfolded Hamiltonians for correlated materials [3] |
| Complete Active Space SCF (CASSCF) | Ab Initio Method | Multideterminant approach for clear multireference cases [2] | Biradicals, transition states, systems with near-degenerate HOMO-LUMO [2] |
| Density Functional Theory (DFT) | Electronic Structure Method | Provides starting point for downfolding with approximate XC functional [3] [1] | Initial electronic structure assessment of correlated materials [3] |
| Coupled Cluster (CCSD(T)) | High-Accuracy Method | "Gold standard" for single-reference correlation [2] | Benchmarking and training machine learning models [4] [2] |
| Machine Learning Wavefunction Models | Emerging Technology | Learns complex wavefunctions from data; transferable across molecules [4] | Large systems where traditional methods are computationally prohibitive [4] |
| Symmetry-Adapted Cluster CI (SAC-CI) | Specialized Method | Accurate description of ground/excited states with correlation [2] | Excited and ionized states of correlated systems [2] |

The field of strong electron correlation continues to evolve with several promising research directions emerging. Quantum computing approaches are showing increasing potential for handling the exponential complexity of strongly-correlated systems, particularly when combined with ab initio downfolding techniques [3]. Future work may focus on developing more flexible ansatz designs for variational approaches, implementing rigorous treatments of dynamic Coulomb interactions, and investigating how different DFT starting points influence the downfolding process [3].

Machine learning methodologies offer another promising avenue, enabling the accurate computation of electronic energies and geometries by learning complex molecular wave functions [4]. These data-driven approaches have been reported to transfer across molecules of different sizes and compositions, potentially addressing key limitations of traditional quantum chemical methods [4].

As these advanced methods mature, incorporating lattice effects and understanding how atomic movements influence electron screening will further enhance the accuracy of derived Hamiltonians [3]. The continued synergy between theoretical advances, computational implementations, and experimental validation promises to unlock increasingly complex strongly-correlated systems, with significant implications for materials design, catalysis, and fundamental understanding of quantum phenomena in molecular systems.

Strong electron correlation presents a significant challenge in modern electronic structure theory, arising when the behavior of electrons cannot be effectively described as non-interacting entities within a mean-field approximation [5]. This phenomenon is crucial in diverse chemical contexts, including transition-metal chemistry, bond-breaking processes, and systems with near-degenerate electronic states such as diradicals [6] [7]. In these systems, multiple electronic configurations contribute substantially to the ground or excited states, rendering standard quantum chemical methods like restricted Hartree-Fock (RHF) theory or conventional Kohn-Sham density functional theory (KS-DFT) inadequate [7] [8]. The essential feature of strongly correlated materials is that their electronic properties—such as metal-insulator transitions (Mott insulation), heavy fermion behavior, and spin-charge separation—emerge from complex electron interactions that require advanced theoretical treatments beyond single-reference descriptions [5] [9].

The quantitative measure of correlation strength can be defined through the reduction of electron number fluctuations on an atomic site. A suitable metric is the parameter Σ(i), which represents the normalized mean-square deviation of electron number compared to the uncorrelated Hartree-Fock description [9]. For systems with strongly correlated electrons, such as La₂CuO₄, Σ values approach 0.8, indicating substantial suppression of charge fluctuations compared to the independent-electron picture [9]. This perspective article details the key electronic structures prone to strong correlation, provides quantitative characterization data, and outlines robust experimental and computational protocols for their investigation within quantum chemistry research.
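Written out (following Fulde's formulation; whether [9] uses exactly this normalization is an assumption), Σ(i) compares the mean-square fluctuation of the electron number n_i on site i in the correlated ground state |Ψ₀⟩ with that in the SCF (Hartree-Fock) determinant |Φ_SCF⟩:

```latex
\Sigma(i) = \frac{\langle \Phi_{\mathrm{SCF}} | (\delta n_i)^2 | \Phi_{\mathrm{SCF}} \rangle
               - \langle \Psi_0 | (\delta n_i)^2 | \Psi_0 \rangle}
              {\langle \Phi_{\mathrm{SCF}} | (\delta n_i)^2 | \Phi_{\mathrm{SCF}} \rangle},
\qquad \delta n_i = n_i - \langle n_i \rangle
```

Σ(i) = 0 means correlations leave the charge fluctuations at their mean-field value, and Σ(i) = 1 means they are completely suppressed; Σ(Cu) ≈ 0.8 in La₂CuO₄ therefore corresponds to removing roughly 80% of the SCF mean-square fluctuation.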

Electronic Structure Classes and Quantitative Data

Transition Metal Complexes and Oxides

Transition metal compounds represent a major class of strongly correlated materials characterized by incompletely filled d- or f-electron shells with narrow energy bands [5]. Their distinctive electronic properties arise from the interplay between localized d/f electrons and delocalized conduction electrons, leading to phenomena such as high-temperature superconductivity in cuprates, Mott insulation, and colossal magnetoresistance [5] [9].

Table 1: Characteristic Properties of Selected Strongly Correlated Transition-Metal Oxides and Heavy-Fermion Compounds

| Material | Electronic Character | Key Phenomenon | Correlation Strength Σ | Notable Features |
|---|---|---|---|---|
| La₂CuO₄ | Mott Insulator | Antiferromagnetism | Σ(Cu) ≈ 0.8 [9] | Parent compound for high-Tc cuprates |
| NiO | Charge-Transfer Insulator | Metal-Insulator Transition | N/A | Would be metallic without correlations [5] |
| CeAl₃ | Heavy Fermion System | Kondo screening | Σ(Ce) > Σ(Cu) in La₂CuO₄ [9] | Enhanced effective electron mass |
| Fe₃O₄ | Mixed-Valence System | Verwey Transition [9] | N/A | Charge ordering at low temperature |

The correlation effects in these materials are quantified through configuration probabilities. For La₂CuO₄, correlated ground states show nearly complete suppression of d⁸ configurations (P(d⁸) ≈ 0.0), with probabilities shifting to P(d¹⁰) = 0.29 and P(d⁹) = 0.70, contrasting sharply with Hartree-Fock predictions [9]. This reconfiguration demonstrates how strong correlations significantly alter electronic structure.
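The configuration probabilities translate directly into the site-occupation fluctuation that enters Σ(i). The sketch below uses hypothetical helper names and assumes Σ(i) is the fractional reduction of the mean-square deviation of the occupation relative to its SCF value. It computes the mean occupation and its mean-square deviation from a probability table, here filled with the correlated La₂CuO₄ values quoted above.

```python
def occupation_stats(probabilities):
    """Mean and mean-square deviation of the site occupation from configuration probabilities.

    `probabilities` maps an occupation number (e.g. 10 for d^10) to its probability;
    the values are renormalized in case they do not sum exactly to one.
    """
    total = sum(probabilities.values())
    mean = sum(n * p for n, p in probabilities.items()) / total
    msd = sum(p * (n - mean) ** 2 for n, p in probabilities.items()) / total
    return mean, msd


def correlation_strength(msd_scf, msd_correlated):
    """Sigma(i): fractional reduction of the mean-square charge fluctuation relative to SCF."""
    return (msd_scf - msd_correlated) / msd_scf


# Correlated Cu-site probabilities for La2CuO4 quoted above (they sum to 0.99).
mean_corr, msd_corr = occupation_stats({10: 0.29, 9: 0.70, 8: 0.0})
print(f"<n_d> = {mean_corr:.3f}, <(dn)^2> = {msd_corr:.3f}")
```

Evaluating Σ(i) itself also requires the same fluctuation for the SCF reference, whose configuration probabilities are not listed here; `correlation_strength` is included only to make the definition concrete.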

Bond Dissociation Processes

Bond dissociation represents a fundamental process where strong correlation effects dominate, particularly as bonds are stretched toward breaking points [7]. The dissociation of simple diatomic molecules like H₂ illustrates the core challenge: the restricted Hartree-Fock (RHF) wave function maintains inappropriate ionic terms (H⁺H⁻) at large internuclear separations, leading to dramatically overestimated energies [7].

Table 2: Bond Dissociation Energies (BDEs) for Representative Bonds

| Bond | Molecule | BDE (kcal/mol) | BDE (kJ/mol) | Computational Note |
|---|---|---|---|---|
| C-H | CH₄ | 103.011 (298 K) [10] | 431 (298 K) [10] | Strong aliphatic bond |
| C-C | Ethane | 83-90 [11] | 347-377 [11] | Typical alkane single bond |
| O-H | Water | 119 [11] | 497 [11] | First O-H dissociation |
| H-H | H₂ | 104.1539 [11] | 435.780 [11] | High-precision reference |
| Si-F | F₃Si-F | 166 [11] | 695 [11] | One of the strongest single bonds |

The bond dissociation energy is defined as the standard enthalpy change when a bond A-B is cleaved by homolysis to give fragments A and B, which are typically radical species [11]. Accurate computation requires careful methodology selection, as standard quantum chemical approaches fail to describe the multiconfigurational character of the dissociation products [7].

Diradicals and Multiconfigurational Systems

Diradicals represent prototypical strongly correlated systems characterized by two unpaired electrons in degenerate or near-degenerate molecular orbitals [7]. These systems exhibit significant near-degeneracy correlation, where multiple electronic configurations contribute nearly equally to the wave function, making single-reference methods qualitatively incorrect.

The electronic structure of diradicals shares conceptual similarities with bond dissociation processes, as both involve near-degenerate electronic states [7]. In diradicals, the ground-state wavefunction requires a balanced weighting of covalent and ionic configurations, intermediate between the RHF description (equal covalent and ionic weights) and the purely covalent Heitler-London wavefunction for H₂ [9]. These systems are particularly prevalent in reaction intermediates, excited states, and materials with unusual magnetic properties.

Computational Protocols

Computational Protocol for Bond Dissociation Energy Calculation

Objective: Calculate the C-H bond dissociation energy in methane (CH₄ → CH₃• + H•) using Gaussian16 software [10].

Step-by-Step Procedure:

Geometry Optimization and Frequency Calculation:

- Create input files for methane (CH₄) and the methyl radical (CH₃•).

- Use the appropriate level of theory (e.g., wB97XD/cc-pVDZ as referenced [10]).

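- Include the opt and freq keywords in the route section.

- For the methyl radical, set charge = 0 and spin = doublet (charge/multiplicity line "0 2" in the input file). The hydrogen atom, also a doublet, requires its own calculation at the same level of theory to obtain H(H•).

- Run the calculations and verify convergence and the absence of imaginary frequencies (confirming a true minimum).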

Thermochemical Data Extraction:

- For each optimized structure, locate the "Sum of electronic and thermal Enthalpies" value in the output file.

- Record these values for CH₄, CH₃•, and H•.

BDE Calculation:

- Apply the formula: BDE = H(CH₃•) + H(H•) - H(CH₄)

- Convert the resulting enthalpy difference from Hartrees to kcal/mol or kJ/mol using standard conversion factors (1 Ha = 627.509 kcal/mol).

- For calculations at specific temperatures, include the temperature=XXX keyword in the route section (XXX in kelvin).

Critical Notes: The exact non-relativistic electronic energy of the hydrogen atom is −0.5 Ha, but practical DFT calculations with finite basis sets yield slightly different values [10]. Use the same functional, basis set, and settings for all species.
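The BDE arithmetic above can be scripted as follows. This is a minimal sketch: the function name is hypothetical, it expects the three "Sum of electronic and thermal Enthalpies" values in hartree, and the numbers in the example call are placeholders rather than Gaussian output.

```python
HARTREE_TO_KCAL = 627.509  # conversion factor given in the protocol
KCAL_TO_KJ = 4.184         # thermochemical calorie


def bond_dissociation_enthalpy(h_ch3, h_h, h_ch4):
    """BDE = H(CH3.) + H(H.) - H(CH4) with enthalpies in hartree.

    Returns the BDE in kcal/mol and kJ/mol.
    """
    delta_h = h_ch3 + h_h - h_ch4
    kcal = delta_h * HARTREE_TO_KCAL
    return kcal, kcal * KCAL_TO_KJ


# Placeholder enthalpies for illustration only; replace them with values read from
# the CH4, CH3 and H output files computed at one consistent level of theory.
kcal, kj = bond_dissociation_enthalpy(h_ch3=-39.335, h_h=-0.5, h_ch4=-40.0)
print(f"BDE = {kcal:.1f} kcal/mol = {kj:.0f} kJ/mol")
```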

Protocol for Multiconfiguration Pair-Density Functional Theory (MC-PDFT)

Objective: Calculate accurate potential energy surfaces for strongly correlated systems using MC-PDFT [6] [8].

Step-by-Step Procedure:

Complete Active Space Self-Consistent Field (CASSCF) Calculation:

- Select an active space appropriate for the system (e.g., (2e,2o) for bond breaking, larger spaces for transition metals).

- Perform CASSCF calculation to obtain a multiconfigurational reference wavefunction.

Energy Evaluation with MC-PDFT:

- Using the CASSCF wavefunction, compute the total energy with MC-PDFT.

- The total energy is separated into:

- Classical energy (kinetic, nuclear attraction, Coulomb) from the multiconfigurational wavefunction.

- Nonclassical energy (exchange-correlation) approximated using a density functional based on electron density and on-top pair density [8].

- For improved accuracy, consider the recently developed MC23 functional, which incorporates the kinetic energy density for an improved description of electron correlation [8].
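In its standard form (written here from the general MC-PDFT literature as an aid to reading the energy split above, not quoted from [6] or [8]), the MC-PDFT total energy is:

```latex
E_{\mathrm{MC\text{-}PDFT}} = V_{nn} + \sum_{pq} h_{pq} D_{pq}
  + \frac{1}{2} \sum_{pqrs} g_{pqrs} D_{pq} D_{rs}
  + E_{\mathrm{ot}}[\rho, \Pi]
```

Here V_nn is the nuclear repulsion, h_pq the one-electron (kinetic plus nuclear-attraction) integrals, g_pqrs the two-electron integrals, D_pq the one-electron density matrix of the CASSCF wavefunction, and E_ot the on-top functional of the density ρ and on-top pair density Π. The first three terms form the classical energy; E_ot replaces the exchange-correlation part.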

Result Analysis:

- Examine potential energy curves for bond dissociation or reaction pathways.

- Compare spin densities and electronic properties with experimental data when available.

Applications: This protocol is particularly effective for transition metal complexes, bond-breaking processes, and diradicals where static correlation dominates [6] [8].

Visualization of Computational Workflows

Research Reagent Solutions

Table 3: Essential Computational Tools for Strong Correlation Problems

| Research Reagent | Function | Application Context |
|---|---|---|
| Gaussian16 | General-purpose quantum chemistry software | BDE calculation, geometry optimization, frequency analysis [10] |
| CASSCF | Multiconfigurational wavefunction method | Reference calculation for MC-PDFT, active space treatment [7] |
| MC-PDFT | Hybrid wavefunction-DFT method | Strongly correlated systems at lower computational cost [6] [8] |
| MC23 Functional | Optimized density functional for MC-PDFT | Improved accuracy for spin splitting and bond energies [8] |
| Nitrogen-Vacancy Center Sensors | Diamond-based quantum sensors | Experimental measurement of magnetic fluctuations in materials [12] |
| DMRG | Density Matrix Renormalization Group | Handling extremely large active spaces for complex systems [6] |

Relativistic Effects and Their Role in Heavy Element Chemistry

Relativistic effects become significant for heavy elements and substantially influence their chemical properties. These effects are critical for accurately modeling superheavy elements (SHEs), where relativistic calculations are essential for predicting behavior and understanding the limits of the Periodic Table [13]. Relativistic quantum chemistry methods are indispensable for studying compounds containing heavy atoms such as the heavier halogens (bromine, iodine) and for properties like NMR chemical shifts, even for light atoms bonded to heavy ones [14].

Theoretical Background

Origins of Relativistic Effects

Relativistic effects in heavy elements arise from the high velocities of inner-shell electrons. As the nuclear charge increases, the innermost electrons reach average speeds that are a substantial fraction of the speed of light. This leads to two primary consequences:

- Orbital Contraction and Stabilization: The mass increase of core electrons causes a radial contraction of s and p orbitals (direct relativistic effect).

- Orbital Expansion and Destabilization: The enhanced shielding of the nuclear charge by contracted core electrons leads to the expansion and destabilization of d and f orbitals (indirect relativistic effect) [13].
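A common back-of-the-envelope estimate (a textbook argument, not taken from [13]) makes the direct effect quantitative: in atomic units the average speed of a 1s electron is about Z, and c ≈ 137.036, so v/c ≈ Z/137. The relativistic mass increase γ = 1/√(1 − (v/c)²) then shrinks the 1s radius by roughly a factor 1/γ, because the Bohr radius is inversely proportional to the electron mass.

```python
import math

C_AU = 137.035999  # speed of light in atomic units


def one_s_gamma(z: int) -> float:
    """Lorentz factor for a 1s electron using the estimate v ~ Z (atomic units)."""
    beta = z / C_AU
    return 1.0 / math.sqrt(1.0 - beta**2)


for symbol, z in [("Ag", 47), ("Au", 79), ("Hg", 80), ("Og", 118)]:
    g = one_s_gamma(z)
    print(f"{symbol:2s} Z={z:3d}  v/c={z / C_AU:.2f}  gamma={g:.3f}  "
          f"1s contraction ~{100 * (1 - 1 / g):.0f}%")
```

For gold this gives γ ≈ 1.22 and a 1s contraction of roughly 18%. The estimate ignores the details of the Dirac equation, but it captures the steep growth of relativistic effects with Z.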

Scalar and Spin-Orbit Relativistic Effects

Modern relativistic calculations typically incorporate effects through two main approaches:

- Scalar Relativistic Effects: These include the contraction of s orbitals and the expansion of d and f orbitals, influencing molecular geometries and bond energies.

- Spin-Orbit Coupling: This interaction splits atomic energy levels and significantly affects spectroscopic properties and the electronic structure of open-shell systems [14].

For accurate NMR parameters, both scalar relativistic and spin-orbit coupling can have large effects, especially for heavy atoms or light elements close to a heavy atom [14].

Computational Protocols

Quantifying Relativistic Effects

The contribution of relativity to a computed property is quantified by performing two separate calculations: one that includes a relativistic Hamiltonian and another that is non-relativistic, then taking the difference. The most straightforward method is using the same decontracted basis set for both the nonrelativistic and relativistic calculations [15]. The X2C (exact two-component) Hamiltonian is recommended over older Douglas-Kroll (DK) methods as it is superior "in every conceivable way" [15].

Protocol: NMR Chemical Shifts with Relativistic DFT

This protocol details the calculation of NMR chemical shifts for hydrogen halides (HF, HCl, HBr, HI), illustrating the effect of spin-orbit coupling [14].

Research Reagent Solutions

| Reagent / Material | Function in Calculation |
|---|---|
| AMSinput Software | Graphical user interface for building molecular structures and setting up calculations [14]. |
| ADF Engine | Computational engine performing the density functional theory (DFT) calculations [14]. |
| PBE Functional | GGA (Generalized Gradient Approximation) exchange-correlation functional for geometry optimization [14]. |
| PBE0 Functional | Hybrid exchange-correlation functional, recommended for more accurate NMR calculations [14]. |
| QZ4P Basis Set | All-electron quadruple-zeta basis set with four polarization functions for high accuracy [14]. |
| ZORA Hamiltonian | Relativistic Hamiltonian (Scalar or Spin-Orbit) to account for relativistic effects [14]. |

Step-by-Step Workflow

Geometry Optimization:

- Build the molecule (e.g., HF) in AMSinput.

- In the Main panel, set the Task to Geometry Optimization.

- Select the XC functional (e.g., GGA:PBE).

- Choose an all-electron basis set (e.g., QZ4P).

- Set Frozen core to none.

- Set Relativity to Scalar.

- Set Numerical Quality to Good.

NMR Property Calculation Setup:

- Navigate to Properties → NMR.

- Select the atoms (e.g., the H atom) for the shielding calculation.

- Check the boxes for Isotropic shielding constants and Full shielding tensors.

Systematic Variation:

- For each molecule (HF, HCl, HBr, HI), perform four calculations combining:

  - XC functionals: GGA:PBE and Hybrid:PBE0

  - Relativistic treatments: Scalar and Spin-Orbit

- Save each input file with a different name.

Result Analysis with ADFreport:

- In AMSjobs, select Tools → New Report Template.

- Create a custom report to extract the NMR Shieldings and the distance between atoms 1 and 2.

- Select all jobs and generate the report for comparative analysis [14].

Figure 1: Computational workflow for relativistic NMR calculations.
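The systematic-variation step produces 4 × 2 × 2 = 16 jobs. A small bookkeeping sketch such as the following (a hypothetical helper, not part of AMS, and it does not generate AMS input syntax) can enumerate the combinations and give each input file a distinct name.

```python
from itertools import product

MOLECULES = ["HF", "HCl", "HBr", "HI"]
XC_FUNCTIONALS = ["GGA:PBE", "Hybrid:PBE0"]
RELATIVITY = ["Scalar", "Spin-Orbit"]


def job_matrix():
    """All molecule / functional / relativity combinations with a distinct file stem each."""
    jobs = []
    for mol, xc, rel in product(MOLECULES, XC_FUNCTIONALS, RELATIVITY):
        # e.g. "HBr_PBE0_SpinOrbit"; the naming scheme is only a suggestion
        stem = f"{mol}_{xc.split(':')[1]}_{rel.replace('-', '')}"
        jobs.append({"molecule": mol, "xc": xc, "relativity": rel, "name": stem})
    return jobs


for job in job_matrix():
    print(job["name"])
```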

Data Analysis and Comparison to Experiment

The NMR chemical shift (δ_i) is calculated as the difference between the isotropic shielding of a reference compound (σ_ref) and that of the compound of interest (σ_i): δ_i = σ_ref − σ_i. For the hydrogen halide series, HF is used as the reference (δ(HF) = 0.0 ppm). The experimental ¹H NMR chemical shifts for the series are [14]:

| Compound | Experimental ¹H δ (ppm) | Experimental Bond Distance (Å) |
|---|---|---|
| HF | 0.00 (by definition) | 0.91680 |
| HCl | -2.58 | 1.27455 |
| HBr | -6.43 | 1.41443 |
| HI | -15.34 | 1.60916 |

Comparison of calculated versus experimental results shows that spin-orbit coupling is necessary to achieve reasonable agreement for the NMR chemical shifts, and the PBE0 functional generally provides better geometries than PBE [14].
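Once the shieldings are collected, the shifts and their deviations from experiment follow from δ_i = σ_ref − σ_i. In the sketch below the experimental shifts are those in the table above, while the shielding values are hypothetical placeholders for numbers read from the report.

```python
def chemical_shifts(shieldings, reference="HF"):
    """delta_i = sigma_ref - sigma_i (ppm), using one compound of the series as reference."""
    sigma_ref = shieldings[reference]
    return {name: sigma_ref - sigma for name, sigma in shieldings.items()}


# Experimental 1H shifts from the table above (ppm, HF reference).
EXPERIMENT = {"HF": 0.00, "HCl": -2.58, "HBr": -6.43, "HI": -15.34}


def deviations(calculated, experiment=EXPERIMENT):
    """Calculated minus experimental shift for every compound present in both sets."""
    return {k: calculated[k] - experiment[k] for k in calculated if k in experiment}


# Hypothetical isotropic shieldings (ppm) standing in for the ADFreport output.
sigma = {"HF": 29.0, "HCl": 31.0, "HBr": 34.5, "HI": 43.0}
delta = chemical_shifts(sigma)
print(delta)
print(deviations(delta))
```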

Application to Superheavy Elements (SHEs)

Relativistic electronic structure theory is crucial for predicting properties of SHEs and their compounds, profoundly influencing their chemical behavior and placement in the Periodic Table [13]. Key effects include:

- Stabilization of SHE Valence Orbitals: Relativistic effects can stabilize the 7s and 7p₁/₂ orbitals in SHEs.

- Spin-Orbit Splitting: Large spin-orbit splitting of p, d, and f orbitals leads to ground-state electron configurations that differ from non-relativistic predictions.

- Relativistic Deshielding of Divalent Rn: A noted example where relativity is essential for accurate property prediction [13].

These effects can cause deviations from standard periodic trends, influencing volatility, bonding, and reactivity, which are critical for designing experiments given the low production rates and short half-lives of SHEs [13].

Figure 2: How relativistic effects influence superheavy element chemistry.

Relativistic effects are fundamental in heavy element chemistry. They are necessary for accurate prediction of molecular properties such as geometry and NMR parameters, and for understanding the chemical behavior of superheavy elements. Modern computational protocols using relativistic DFT, with methods like X2C and ZORA, provide powerful tools for researchers to incorporate these critical effects, bridging the gap between non-relativistic quantum chemistry and experimental observations for heavy systems.

In the realm of quantum materials, high-temperature superconductivity and strange metals represent two of the most challenging and fascinating phenomena arising from strong electron correlations. These systems fall under the classification of strongly correlated materials, where electron-electron interactions dominate over the individual kinetic energy of electrons, making their behavior impossible to describe with conventional single-particle theories like standard density functional theory or the nearly-free-electron model [16] [17]. In such materials, the motion of one electron becomes highly dependent on the positions and states of other electrons, leading to extraordinary emergent properties including Mott insulating behavior, unconventional superconductivity, and the peculiar charge transport characteristics observed in strange metals [17].

The fundamental challenge in understanding these materials lies in solving the complex many-body Hamiltonian that describes their electronic behavior. The full many-body Hamiltonian, which includes all electronic and nuclear degrees of freedom, is nearly impossible to solve exactly due to its complexity [17]. This theoretical challenge forms the core of the quantum chemistry problem for strongly correlated systems and drives the development of advanced computational and experimental approaches discussed in this application note.

Strange Metals: Characterization and Experimental Protocols

Fundamental Properties and Identification

Strange metals constitute a class of quantum materials that defy the Fermi-liquid theory describing conventional metals like copper or gold. Their defining characteristic is a linear temperature dependence of the electrical resistivity (ρ ∝ T) that extends down to very low temperatures. In conventional metals, by contrast, the resistivity follows ρ = ρ₀ + AT² at low temperatures and levels off at the residual value ρ₀ set by impurity scattering [18] [19]. This anomalous behavior indicates the absence of well-defined quasiparticles, which are the fundamental excitations in ordinary metals.
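Whether a data set follows ρ ∝ T or ρ ∝ T² can be checked by fitting the exponent n in ρ = ρ₀ + A·Tⁿ. The sketch below does this on a log-log scale with NumPy, assuming the residual resistivity ρ₀ is already known; the two data sets are synthetic and serve only to show that the fit recovers n = 2 and n = 1.

```python
import numpy as np


def resistivity_exponent(temperature, resistivity, rho0):
    """Fit rho = rho0 + A * T**n on a log-log scale and return (n, A).

    Assumes the residual resistivity rho0 is known (e.g. from a low-T extrapolation).
    """
    slope, intercept = np.polyfit(np.log(temperature), np.log(resistivity - rho0), 1)
    return slope, np.exp(intercept)


T = np.linspace(2.0, 50.0, 25)        # kelvin
rho_fermi_liquid = 1.0 + 0.01 * T**2  # synthetic Fermi-liquid data (arbitrary units)
rho_strange = 1.0 + 0.5 * T           # synthetic T-linear data (arbitrary units)

for label, rho in [("Fermi liquid", rho_fermi_liquid), ("strange metal", rho_strange)]:
    n, a = resistivity_exponent(T, rho, rho0=1.0)
    print(f"{label:14s} n = {n:.2f}  A = {a:.3g}")
```

With real data the result is sensitive to the choice of ρ₀ and of the temperature window, so the exponent is often tracked as a local slope d ln(ρ − ρ₀)/d ln T over temperature.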

Another remarkable feature of strange metals is their universal scattering rate. Research led by Debanjan Chowdhury at Cornell has revealed that in strange metals at low temperatures, the interval between successive electron collisions is unusually short and is precisely determined by the temperature of the system and Planck's constant [19]. This behavior holds true regardless of the strange metal's chemical composition, suggesting a fundamental universal physics underlying these materials that transcends their specific microscopic details.
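The "Planckian" time scale usually associated with this result is τ ≈ ħ/(k_B T), up to a material-dependent prefactor of order one; the exact prefactor reported in [19] is not reproduced here. The sketch evaluates ħ/(k_B T) with CODATA constants to show how short it is.

```python
HBAR = 1.054571817e-34  # J s (CODATA 2018)
K_B = 1.380649e-23      # J / K (exact in the 2019 SI)


def planckian_time(temperature_k: float) -> float:
    """Planckian scattering time tau = hbar / (k_B T), in seconds."""
    return HBAR / (K_B * temperature_k)


for T in (1.0, 10.0, 100.0, 300.0):
    print(f"T = {T:6.1f} K  tau_P = {planckian_time(T):.2e} s")
```

At 1 K the Planckian time is about 7.6 × 10⁻¹² s, and it shortens as 1/T.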

Experimental Synthesis and Measurement Protocols

Synthesis of Kagome-Based Strange Metals

Recent breakthroughs at MIT have demonstrated a novel approach to creating strange metals through quantum geometric engineering. The protocol involves fabricating materials with atoms arranged in a kagome lattice structure, which resembles a repeating pattern of sheriff's stars or Japanese basket-weaving motifs [20]. The experimental workflow can be summarized as follows:

- Crystal Growth: Synthesize single crystals of kagome metals (e.g., CsV₃Sb₅) using flux growth techniques or chemical vapor deposition.

- Geometric Engineering: Utilize the inherent flat electronic bands in the kagome structure at the Fermi level, where electrons become heavily correlated due to reduced kinetic energy.

- Electronic Structure Confirmation: Employ angle-resolved photoemission spectroscopy (ARPES) to verify the presence of Dirac fermions and flat bands in the synthesized materials.

- Strange Metal State Activation: Apply external perturbations such as high pressure (up to several GPa) and magnetic fields to drive the system into the strange metal regime where electron interactions dominate.
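The flat band invoked in the geometric-engineering step already appears in the simplest model of the lattice. The sketch below assumes one orbital per site and nearest-neighbour hopping t only. This is a textbook toy model, not a band structure of CsV₃Sb₅, and the lattice vectors and sign convention are our own choices. With hopping −t, one of the three bands is exactly dispersionless at E = +2t.

```python
import numpy as np


def kagome_bands(kx, ky, t=1.0):
    """Eigenvalues of a nearest-neighbour kagome tight-binding model at (kx, ky).

    Assumed conventions: lattice vectors a1 = (1, 0), a2 = (1/2, sqrt(3)/2);
    sublattices A, B, C at 0, a1/2, a2/2; hopping -t on every nearest-neighbour bond.
    """
    k = np.array([kx, ky])
    a1 = np.array([1.0, 0.0])
    a2 = np.array([0.5, np.sqrt(3) / 2])
    # Each pair of sublattices is connected by bonds at +/- d, giving 2 cos(k . d)
    c_ab = np.cos(k @ a1 / 2)
    c_ac = np.cos(k @ a2 / 2)
    c_bc = np.cos(k @ (a2 - a1) / 2)
    h = -2 * t * np.array([[0.0, c_ab, c_ac],
                           [c_ab, 0.0, c_bc],
                           [c_ac, c_bc, 0.0]])
    return np.linalg.eigvalsh(h)  # sorted ascending


rng = np.random.default_rng(0)
ks = rng.uniform(-2 * np.pi, 2 * np.pi, size=(200, 2))
bands = np.array([kagome_bands(kx, ky) for kx, ky in ks])
print("top band min/max:", bands[:, 2].min(), bands[:, 2].max())   # both equal 2t: flat
print("bottom band width:", bands[:, 0].max() - bands[:, 0].min())  # dispersive
```

Adding longer-range hopping or spin-orbit coupling gives the flat band a finite width. This is one reason real kagome metals show only approximately flat bands.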

The discovery that kagome metals can host strange metal behavior provides researchers with a tunable platform for exploring the relationship between quantum geometry and strong correlations [20].

Quantum Entanglement Measurement Protocol

A groundbreaking methodological approach developed by Rice University physicists enables direct probing of electron entanglement in strange metals using quantum information science tools [21]:

- Sample Preparation: Prepare high-quality single crystals of candidate strange metal materials (e.g., heavy fermion compounds or cuprates).

- Quantum Fisher Information (QFI) Measurement: Apply QFI, a concept from quantum metrology, to measure how electron interactions evolve under extreme conditions.

- Neutron Scattering Validation: Perform inelastic neutron scattering experiments to correlate QFI measurements with atomic-level material properties.

- Entanglement Mapping: Track the evolution of electron spin entanglement across quantum critical points by monitoring the loss of well-defined quasiparticles.

This protocol has revealed that electron entanglement peaks precisely at the quantum critical point—the transition between two distinct states of matter—providing direct evidence for the quantum origin of strange metal behavior [21].
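The QFI step is commonly carried out with a fluctuation-dissipation relation that links the QFI to the imaginary part of the dynamical susceptibility χ''(ω), which is what inelastic neutron scattering measures: F_Q(T) = (4/π) ∫₀^∞ dω tanh(ω/2T) χ''(ω), in units where ħ = k_B = 1. The sketch below assumes this form, ignores momentum dependence and normalization prefactors (these conventions vary between papers), and uses a hypothetical damped-oscillator χ''. It is not data or code from [21]. Bounds on the normalized QFI act as witnesses of multipartite entanglement.

```python
import numpy as np


def chi_imag(omega, omega0=1.0, gamma=0.3, amplitude=1.0):
    """Hypothetical damped-harmonic-oscillator spectrum; chi''(omega) > 0 for omega > 0."""
    return amplitude * gamma * omega / ((omega**2 - omega0**2) ** 2 + (gamma * omega) ** 2)


def quantum_fisher_information(temperature, omega_max=200.0, n_points=400_001):
    """F_Q(T) = (4/pi) * integral_0^inf tanh(omega / 2T) chi''(omega) d(omega), hbar = k_B = 1."""
    omega = np.linspace(0.0, omega_max, n_points)
    integrand = np.tanh(omega / (2.0 * temperature)) * chi_imag(omega)
    # Trapezoidal rule written out explicitly (avoids np.trapz / np.trapezoid version differences)
    return 4.0 / np.pi * np.sum(0.5 * (integrand[1:] + integrand[:-1]) * np.diff(omega))


temps = [0.05, 0.2, 0.5, 1.0, 2.0]
fq = [quantum_fisher_information(t) for t in temps]
for t, f in zip(temps, fq):
    print(f"T = {t:4.2f}  F_Q = {f:.4f}")
```

Because tanh(ω/2T) decreases with T for every ω > 0, the QFI extracted from a fixed spectrum can only decrease on heating. A peak in measured QFI therefore reflects changes in χ''(ω) itself, for example near a quantum critical point.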

Table 1: Key Characterization Techniques for Strange Metals

| Technique | Measured Property | Key Signature of Strange Metal |
|---|---|---|
| Electrical Transport | Resistivity vs. temperature | Linear ρ(T) extending to the lowest measured temperatures |
| Quantum Fisher Information | Electron entanglement | Peak at the quantum critical point |
| Inelastic Neutron Scattering | Dynamic spin structure factor S(q, ω) | Absence of well-defined quasiparticles |
| Angle-Resolved Photoemission | Electronic band structure | Broad, incoherent spectral features instead of sharp quasiparticle peaks |

Theoretical Framework and Universal Model

A universal theory proposed by Patel and colleagues at the Flatiron Institute explains strange metal behavior through the combination of two key properties: widespread quantum entanglement and atomic-scale nonuniformity [18]. In this model, electrons in strange metals become quantum mechanically entangled over long distances, binding their fates together. Simultaneously, the patchwork-like arrangement of atoms in these materials means that electron entanglements vary spatially based on where in the material the entanglement occurred.

This combination introduces randomness into the electrons' momenta as they move through the material and interact. Instead of flowing collectively, electrons scatter in all directions, producing the characteristic temperature-linear resistivity. In the model, the scattering rate behind this linear-in-T resistivity is set by the fundamental constants h (Planck's constant) and k_B (Boltzmann's constant), which explains the universal behavior observed across different strange metal compounds [18].
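The simple Drude formula ρ = m*/(n e² τ) shows why a Planckian scattering rate gives a linear resistivity. Inserting 1/τ = α k_B T/ħ yields ρ = α m* k_B T/(n e² ħ), which is linear in T. This is a textbook Drude estimate, not the Patel et al. model. The carrier density n, effective mass m*, and factor α below are placeholder assumptions.

```python
# Drude resistivity with a Planckian scattering rate (SI units, CODATA constants).
HBAR = 1.054571817e-34   # J s
K_B = 1.380649e-23       # J/K
E_CHARGE = 1.602176634e-19  # C
M_E = 9.1093837015e-31   # kg


def planckian_drude_resistivity(temperature_k, n_carriers=1e28, m_eff=1.0, alpha=1.0):
    """rho = m* / (n e^2 tau) with 1/tau = alpha k_B T / hbar; returns ohm m.

    n_carriers (m^-3), m_eff (units of the electron mass) and alpha are illustrative inputs.
    """
    scattering_rate = alpha * K_B * temperature_k / HBAR   # 1/tau in s^-1
    return m_eff * M_E * scattering_rate / (n_carriers * E_CHARGE**2)


for t in (10, 100, 300):
    rho_uohm_cm = planckian_drude_resistivity(t) * 1e8   # 1 ohm m = 1e8 micro-ohm cm
    print(f"T = {t:3d} K  rho = {rho_uohm_cm:.2f} micro-ohm cm")
```

With n = 10²⁸ m⁻³, m* = m_e and α = 1, the slope is about 0.046 μΩ·cm/K.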

High-Temperature Superconductivity: Materials and Methods

Ambient-Pressure Stabilization Protocols

A significant experimental advancement in high-temperature superconductivity research comes from the development of pressure-quench synthesis techniques. Researchers at the University of Houston have established a protocol to stabilize superconducting materials at ambient pressure, overcoming a major limitation for practical applications [22]:

- High-Pressure Synthesis: Prepare composite materials (e.g., bismuth-antimony-tellurium systems) under extreme pressure conditions (2-3 GPa) where superconducting phases are stable.

- Pressure Quenching: Rapidly release the applied pressure while maintaining low temperature conditions (below 150K).

- Metastable Phase Preservation: Characterize the retained superconducting properties at ambient pressure using transport measurements and magnetic susceptibility.

- Material Optimization: Systematically vary chemical composition and quenching parameters to enhance superconducting critical temperature (Tc) and volume fraction.

This protocol has enabled the stabilization of superconducting phases outside of high-pressure environments, opening new pathways for material discovery and practical application [22].

Computational Discovery Framework

The HTSC-2025 benchmark dataset is designed to support AI-driven superconductor discovery [23]. It compiles superconducting materials predicted by theoretical physicists between 2023 and 2025 within the BCS (electron-phonon) framework and spans several prominent material classes:

- X₂YH₆ systems (e.g., hydrides with rare-earth and transition metals)

- Perovskite MXH₃ systems

- M₃XH₈ systems

- Cage-like BCN-doped metal atomic systems derived from LaH₁₀ structural evolution

- Two-dimensional honeycomb-structured systems evolving from MgB₂

The dataset implementation protocol involves:

- Data Curation: Collect and standardize structural, electronic, and superconducting properties from theoretical publications.

- Feature Engineering: Compute relevant descriptors, including electron-phonon coupling strengths, the density of states at the Fermi level, and vibrational properties.

- Model Training: Implement machine learning algorithms (neural networks, gradient boosting) to predict critical temperatures from material descriptors.

- Experimental Validation: Prioritize synthetic targets for experimental verification based on computational predictions.

This benchmark enables fair comparison between different AI algorithms and accelerates the discovery of new superconducting materials [23].
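Because the dataset is built around BCS (electron-phonon) predictions, the Allen-Dynes modified McMillan formula makes a useful physics baseline for any learned Tc model: Tc = (ω_log/1.2) exp[−1.04(1 + λ)/(λ − μ*(1 + 0.62λ))]. It uses the same electron-phonon descriptors listed under feature engineering. The sketch omits the Allen-Dynes strong-coupling correction factors. The λ, ω_log, and μ* values are illustrative assumptions, not entries from HTSC-2025.

```python
import math


def allen_dynes_tc(lam, omega_log_k, mu_star=0.10):
    """Allen-Dynes (modified McMillan) Tc estimate in kelvin, without the f1*f2 corrections.

    lam: electron-phonon coupling constant; omega_log_k: logarithmic average phonon
    frequency in kelvin; mu_star: Coulomb pseudopotential.
    """
    denom = lam - mu_star * (1.0 + 0.62 * lam)
    if denom <= 0:
        return 0.0  # the formula predicts no superconductivity in this regime
    return omega_log_k / 1.2 * math.exp(-1.04 * (1.0 + lam) / denom)


for lam in (0.5, 1.0, 1.5, 2.0):
    print(f"lambda = {lam:.1f}, omega_log = 1000 K  ->  Tc ~ {allen_dynes_tc(lam, 1000.0):.1f} K")
```

In an ML workflow, such an estimate can serve as an extra descriptor or as a sanity check on model predictions. It should not be treated as ground truth.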

Table 2: Major High-Temperature Superconductor Material Classes

| Material Class | Representative Compounds | Reported Tc (representative compound) | Pressure Requirement |
|---|---|---|---|
| Cuprates | YBa₂Cu₃O₇₋ₓ | ~90 K | Ambient |
| Iron-Based | Ba₁₋ₓKₓFe₂As₂ | ~38 K | Ambient |
| Hydrides | H₃S (from compressed H₂S), LaH₁₀ | 203 K (H₃S) [24]; ~250 K (LaH₁₀) | High (>100 GPa) |
| Carbon Structures | Doped carbyne chains | 115 K (predicted) [24] | Ambient |
| Kagome Metals | CsV₃Sb₅ | ~3 K | Ambient |

Low-Dimensional Carbon Structure Design

Theoretical and experimental work on low-dimensional carbon systems has revealed promising pathways to high-temperature superconductivity. A systematic protocol for designing and optimizing carbon-based superconductors involves [24]:

- Dimensionality Engineering: Start with known carbon allotropes (graphene, nanotubes) and systematically reduce dimensionality.

- Van Hove Singularity Enhancement: Design structures that enhance singularities in the electronic density of states through quantum confinement.

- Electron-Phonon Coupling Optimization: Tune structural parameters (bond lengths, kink structures) to maximize coupling strength while maintaining metallicity.

- Array Fabrication: Arrange one-dimensional elements (chains, nanotubes) in parallel arrays with controlled spacing to suppress phase fluctuations while preserving 1D electronic characteristics.

This approach has predicted Tc values up to 115K for optimized carbon ring structures, demonstrating the potential of carbon-based materials for high-temperature superconductivity without extreme pressure requirements [24].

Advanced Computational Methodologies for Strong Correlation

Beyond Standard Density Functional Theory

Strongly correlated materials present fundamental challenges for conventional computational methods. Standard density functional theory (DFT) with local or semilocal functionals often fails to describe these systems accurately because of self-interaction (delocalization) errors and an inadequate treatment of strong electron correlation [17]. The limitations are particularly severe for materials with localized d- and f-electrons, where electron-electron repulsion dominates the electronic behavior.

To address these challenges, researchers have developed advanced computational frameworks that extend beyond standard DFT:

DFT+U Method: Incorporates an on-site Coulomb repulsion term (U) to better describe localized electrons, providing improved treatment of Mott insulating behavior and band gap predictions.

Dynamical Mean Field Theory (DMFT): Maps the lattice problem to an impurity model coupled to a self-consistent electron bath, capturing local dynamic correlations and temperature-dependent effects missing in static DFT approaches.

Density Matrix Renormalization Group (DMRG): Provides highly accurate solutions for one-dimensional and quasi-1D systems by variationally optimizing a matrix product state representation of the wavefunction, effectively capturing entanglement in low-dimensional geometries [17].
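Matrix product states work well in one dimension because ground-state entanglement across any cut stays bounded. The illustration below assumes an 8-site open spin-1/2 Heisenberg chain solved by exact diagonalization, not by DMRG. The Schmidt values obtained from an SVD across the central bond are the quantities an MPS truncation keeps, and the discarded weight shows how quickly a modest bond dimension D becomes sufficient.

```python
import numpy as np

# Spin-1/2 operators
SX = np.array([[0.0, 0.5], [0.5, 0.0]])
SY = np.array([[0.0, -0.5j], [0.5j, 0.0]])
SZ = np.array([[0.5, 0.0], [0.0, -0.5]])


def site_op(op, i, n):
    """Embed a single-site operator at site i of an n-site chain (site 0 = leftmost factor)."""
    mats = [np.eye(2)] * n
    mats[i] = op
    out = mats[0]
    for m in mats[1:]:
        out = np.kron(out, m)
    return out


def heisenberg_chain(n, j=1.0):
    """Open-boundary antiferromagnetic Heisenberg Hamiltonian, sum_i J S_i . S_{i+1}."""
    h = np.zeros((2**n, 2**n), dtype=complex)
    for i in range(n - 1):
        for op in (SX, SY, SZ):
            h += j * site_op(op, i, n) @ site_op(op, i + 1, n)
    return h


n = 8
energies, states = np.linalg.eigh(heisenberg_chain(n))
psi = states[:, 0]

# Schmidt decomposition across the middle bond = SVD of the reshaped wavefunction
schmidt = np.linalg.svd(psi.reshape(2 ** (n // 2), 2 ** (n // 2)), compute_uv=False)
p = schmidt**2
p = p[p > 1e-14]
entropy = -np.sum(p * np.log(p))

# Weight discarded if an MPS keeps only the D largest Schmidt values on this bond
for d in (1, 2, 4, 8, 16):
    print(f"D = {d:2d}: discarded weight = {1 - np.sum(schmidt[:d] ** 2):.2e}")
print("ground-state energy:", energies[0], " entanglement entropy:", entropy)
```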

The DFT+DMFT framework has proven particularly powerful for studying realistic materials, combining first-principles DFT calculations with many-body DMFT treatment of correlated orbitals. This approach has revealed complex phenomena such as the dual nature of polarons in Li-doped V₂O₅ and orbital-selective Mott transitions in cobaltates [17].

Quantum Embedding Strategies

For complex material systems with multiple correlated sites, quantum embedding theories provide a powerful hierarchical approach:

- Wannier Hamiltonian Construction: From DFT band structures, construct localized Wannier functions for correlated subspaces.

- Impurity Model Definition: Identify correlated sites and embed them in a self-consistent bath representing the rest of the material.

- Impurity Solver Application: Employ continuous-time quantum Monte Carlo (CT-QMC) or exact diagonalization to solve the impurity problem.

- Self-Consistency Cycle: Update the bath Green's function and repeat until convergence of the self-energy.

This framework enables first-principles calculations of real materials while capturing the essential strong correlation physics responsible for strange metal behavior and high-temperature superconductivity.
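The self-consistency cycle above can be made concrete with a deliberately crude toy. This sketch makes several assumptions. It uses a Bethe lattice with half-bandwidth 2t at half filling on the Matsubara axis, where the self-consistency condition reduces to G₀⁻¹(iωₙ) = iωₙ − t² G(iωₙ). In place of a CT-QMC or exact-diagonalization solver, it uses the atomic-limit (Hubbard-I-like) self-energy Σ(iωₙ) = U²/(4iωₙ), which ignores the bath entirely. It shows the structure of the loop only, not a production DMFT.

```python
import numpy as np


def toy_dmft_bethe(u, t=1.0, beta=10.0, n_freq=200, mixing=0.5, tol=1e-10, max_iter=5000):
    """Toy DMFT loop: Bethe lattice, half filling, Matsubara axis.

    The 'impurity solver' is the atomic-limit self-energy U^2 / (4 i w_n)
    (Hartree term absorbed into the chemical potential), a stand-in for CT-QMC or ED.
    """
    wn = (2 * np.arange(n_freq) + 1) * np.pi / beta   # positive fermionic Matsubara frequencies
    iw = 1j * wn
    g_loc = 1.0 / iw                                  # starting guess
    for iteration in range(1, max_iter + 1):
        g0_inv = iw - t**2 * g_loc                    # Bethe self-consistency: Weiss field
        sigma = u**2 / (4.0 * iw)                     # placeholder solver output
        g_new = 1.0 / (g0_inv - sigma)                # Dyson equation: impurity G = local G
        if np.max(np.abs(g_new - g_loc)) < tol:
            return wn, g_new, iteration
        g_loc = mixing * g_new + (1.0 - mixing) * g_loc  # linear mixing for stability
    raise RuntimeError("DMFT loop did not converge")


wn, g_u0, _ = toy_dmft_bethe(u=0.0)
analytic = 1j * (wn - np.sqrt(wn**2 + 4.0)) / 2.0     # semicircular DOS, t = 1
_, g_u6, _ = toy_dmft_bethe(u=6.0)
print("max |G - G_exact| at U = 0:", np.max(np.abs(g_u0 - analytic)))
print("-Im G(i w_0):  U = 0 ->", -g_u0[0].imag, "  U = 6 ->", -g_u6[0].imag)
```

At U = 0, the loop reproduces the exact semicircular Green's function. At large U, the suppressed −Im G(iω₀) signals the loss of spectral weight at the Fermi level. A real calculation replaces the placeholder self-energy with the solver output and projects a Wannier Hamiltonian instead of using the Bethe-lattice shortcut.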

Research Reagent Solutions

Table 3: Essential Research Materials and Reagents

| Material/Reagent | Function in Research | Application Examples |
|---|---|---|
| Kagome Metal Crystals | Platform for geometric frustration and flat band physics | CsV₃Sb₅, FeSn, YMn₆Sn₆ |
| High-Pressure Cells | Synthesis of metastable phases | Diamond anvil cells, multi-anvil presses |
| Quantum Critical Materials | Study of entanglement at phase transitions | YbAl₃, CeCoIn₅, Cr-doped V₂O₃ |
| Hydride Precursors | High-Tc superconductor synthesis | H₂S, LaH₁₀, YH₆ |
| Moiré Heterostructure Materials | Tunable strongly correlated platforms | Twisted bilayer graphene, transition metal dichalcogenides |
| Low-Dimensional Carbon Allotropes | Light-element high-Tc candidates | Carbyne chains, carbon nanotubes, graphene nanoribbons |

Integrated Workflow and Future Directions

The study of high-temperature superconductivity and strange metals requires an integrated approach combining materials synthesis, advanced characterization, and sophisticated computational modeling. A comprehensive research workflow connects these elements through iterative feedback between prediction, synthesis, measurement, and theoretical refinement.

Future directions in the field include:

Moiré Material Engineering: Utilizing twisted van der Waals heterostructures to create tunable strongly correlated systems where the relative strength of interactions versus kinetic energy can be precisely controlled by twist angle [19].

Quantum Information Cross-Pollination: Further application of quantum information concepts (entanglement measures, quantum Fisher information) to characterize and classify correlated electron states [21] [18].

High-Throughput Computational Discovery: Leveraging benchmark datasets like HTSC-2025 in combination with machine learning to accelerate the identification of new superconducting material candidates [23].

Strange Metal Theory Unification: Developing a comprehensive theoretical framework that explains the universal properties of strange metals and their connection to high-temperature superconductivity across different material classes [18] [19].

These research avenues hold the promise of not only solving fundamental puzzles in quantum materials but also enabling transformative technologies through the development of room-temperature superconductors and novel quantum devices.

Research Workflow for Strongly Correlated Materials

Theoretical Framework for Strong Correlation Phenomena

The Inert Pair Effect and Other Chemical Anomalies Explained by Correlation

The "inert pair effect," a concept introduced by Nevil Sidgwick in 1927, describes the tendency of the outermost s-orbital electron pair in heavier p-block elements to remain unshared in their compounds, leading to a prevalence of oxidation states two lower than the group valence [25] [26]. For decades, this was primarily a descriptive phenomenon. However, modern quantum chemistry reveals that this chemical anomaly, along with others like the unexpected insulating behavior of certain transition metal oxides, is a manifestation of strong electron correlation [17] [5]. Strongly correlated systems are those in which the behavior of electrons cannot be accurately described by models that treat electrons as independent, non-interacting particles moving in an average field; instead, the motion of each electron is highly dependent on the positions and states of all others [16] [17]. This article details how advanced computational protocols can elucidate the role of electron correlation in explaining the inert pair effect and related material properties, providing a crucial toolkit for researchers tackling strong correlation problems.

Quantitative Data: Energetics of the Inert Pair Effect

The stability of lower oxidation states in heavier elements such as thallium (Tl), lead (Pb), and bismuth (Bi) can be quantified through thermodynamic data. The following tables summarize key energetic parameters that underpin the inert pair effect [25].

Table 1: Promotion Energies and Bond Dissociation Energies for Group 13 and 14 Elements [25]

| Element | Promotion Energy (s²pⁿ → s¹pⁿ⁺¹) (kJ/mol) | M–X Bond Dissociation Energy (kJ/mol) | Difference (Bond Energy - Promotion Energy) (kJ/mol) |
|---|---|---|---|
| Aluminum (Al) | ~400 | ~580 | ~+180 |
| Gallium (Ga) | ~470 | ~540 | ~+70 |
| Indium (In) | ~420 | ~460 | ~+40 |
| Thallium (Tl) | ~520 | ~380 | ~-140 |

Note: The values are approximate, compiled from various literature sources. The trend shows that for thallium, the energy required for electron promotion is no longer compensated by the energy released from forming two additional bonds.

Table 2: Ionization Energies (kJ/mol) for Group 13 Elements [26]

| Element | 1st I.E. | 2nd I.E. | 3rd I.E. | Sum (2nd + 3rd I.E.) |
|---|---|---|---|---|
| Boron (B) | 800 | 2,427 | 3,659 | 6,086 |
| Aluminum (Al) | 577 | 1,816 | 2,744 | 4,560 |
| Gallium (Ga) | 578 | 1,979 | 2,963 | 4,942 |
| Indium (In) | 558 | 1,820 | 2,704 | 4,524 |
| Thallium (Tl) | 589 | 1,971 | 2,878 | 4,849 |

Note: The higher-than-expected sum of the second and third ionization energies for thallium compared with indium indicates the increased difficulty of removing the "inert" 6s electron pair. This is partly attributable to relativistic effects and to poor shielding by the filled 4f and 5d subshells [26].
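The arithmetic behind the two tables above can be reproduced in a few lines. This is a convenient sanity check when the tables are extended to other elements. The values are transcribed directly from the tables (approximate, kJ/mol).

```python
# Ionization energies (kJ/mol), transcribed from the ionization-energy table: (IE1, IE2, IE3)
ionization = {
    "B": (800, 2427, 3659),
    "Al": (577, 1816, 2744),
    "Ga": (578, 1979, 2963),
    "In": (558, 1820, 2704),
    "Tl": (589, 1971, 2878),
}
# Approximate promotion energy and M-X bond dissociation energy (kJ/mol)
promotion_vs_bond = {
    "Al": (400, 580),
    "Ga": (470, 540),
    "In": (420, 460),
    "Tl": (520, 380),
}

ie_sums = {el: ie2 + ie3 for el, (_, ie2, ie3) in ionization.items()}
net = {el: bond - promo for el, (promo, bond) in promotion_vs_bond.items()}

for el in promotion_vs_bond:
    verdict = "promotion compensated" if net[el] > 0 else "promotion NOT compensated"
    print(f"{el:2s}  IE2+IE3 = {ie_sums[el]:5d}  bond - promotion = {net[el]:+5d}  -> {verdict}")
```

Only thallium ends up with a negative balance. This matches the table notes: forming the two extra bonds no longer pays for unpairing the 6s² pair.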

Protocol 1: Investigating the Inert Pair Effect via DFT+U

This protocol outlines a computational methodology for analyzing the electronic structure of compounds exhibiting the inert pair effect, using thallium(I) and thallium(III) halides as an example.

Materials and Computational Reagents

Table 3: Research Reagent Solutions for Computational Analysis

| Item | Function & Specification |
|---|---|
| Crystal Structure File | Input geometry for the calculation. Format: .cif or .xyz. Source: Materials Project (MP) or Inorganic Crystal Structure Database (ICSD). |
| DFT Code | Primary computational engine. Examples: VASP, Quantum ESPRESSO. |
| Pseudopotential/PAW Library | Describes electron-ion interactions. Must be consistent with the chosen DFT code (e.g., GBRV, PSLibrary). |
| U Parameter | Hubbard correction, often treated as an empirical parameter; here determined via linear response. Value range: 3-7 eV for Tl 6s/p orbitals. |
| Structural Optimization Script | Automates geometry relaxation (e.g., a Bash/Python script controlling DFT code input/output). |

Step-by-Step Procedure

System Setup and Initialization

- Obtain the crystal structures of TlX and TlX₃ (X = F, Cl, Br, I) from a database like the ICSD.

- Generate the input files for your DFT code, specifying the calculation parameters: a plane-wave cutoff energy of 520 eV, a k-point mesh with a spacing of 0.03 Å⁻¹, and the Perdew-Burke-Ernzerhof (PBE) generalized gradient approximation (GGA) functional.

Parameter Calibration (U)

- Perform a linear response calculation on a simple compound, such as Tl₂O₃, to determine the optimal Hubbard U parameter for the Tl 6s and 6p orbitals [17].

- As a cross-check, run a series of single-point energy calculations with U values ranging from 0 to 10 eV.

- Plot the total energy and the occupation matrices of the relevant orbitals against U. Confirm that the selected U yields a stable electronic structure with localized 6s electrons before using it in subsequent steps.
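The linear-response calibration follows the Cococcioni-de Gironcoli scheme, in which U = χ₀⁻¹ − χ⁻¹. Here χ₀ and χ are the bare (non-self-consistent) and self-consistent responses of the site occupation to a small local potential shift α. The following sketch covers only the post-processing. It assumes the occupations have already been extracted from the DFT runs; how to apply α and read the occupations is code-specific and not shown. The data below are hypothetical, chosen so that U = 3 eV.

```python
import numpy as np


def linear_response_u(alphas, n_bare, n_scf):
    """U = 1/chi0 - 1/chi from occupation responses to a local potential shift alpha (eV).

    n_bare: site occupations after the first (non-self-consistent) step
    n_scf:  site occupations after full self-consistency
    """
    chi0 = np.polyfit(alphas, n_bare, 1)[0]   # bare response dn/d(alpha)
    chi = np.polyfit(alphas, n_scf, 1)[0]     # screened response dn/d(alpha)
    return 1.0 / chi0 - 1.0 / chi


# Hypothetical occupation data (not from a real Tl2O3 calculation)
alphas = np.array([-0.10, -0.05, 0.0, 0.05, 0.10])
n_bare = 1.90 - 0.50 * alphas
n_scf = 1.90 - 0.20 * alphas
print(f"U = {linear_response_u(alphas, n_bare, n_scf):.2f} eV")
```

Fitting a slope over several α values, rather than using a single finite difference, makes the estimate less sensitive to numerical noise in the occupations.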

Geometry Optimization and Electronic Structure Analysis

- Perform a full geometry optimization of all structures using the calibrated U parameter. Convergence criteria should be set to 10⁻⁵ eV for electronic steps and 0.01 eV/Å for ionic forces.

- From the optimized structure, calculate the electronic density of states (DOS) and project it onto the atomic orbitals of Tl (Projected DOS - PDOS).

- Analyze the PDOS to identify the energy and localization of the Tl 6s states. Compare the stability and electronic structure of Tl(I) versus Tl(III) compounds.
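The PDOS analysis can be made quantitative by broadening the projected states and computing the first moment (band center) of the Tl 6s PDOS. The sketch assumes that Kohn-Sham eigenvalues and Tl-6s projection weights have already been parsed from the DFT output; parsing is code-specific and omitted. The two data sets are hypothetical, chosen only to mimic a deep, localized 6s band versus one spread into the bonding region.

```python
import numpy as np


def gaussian_pdos(eigenvalues, weights, energy_grid, sigma=0.1):
    """Gaussian-broadened projected DOS: sum_k w_k * G_sigma(E - e_k), in states/eV."""
    diff = energy_grid[:, None] - eigenvalues[None, :]
    gauss = np.exp(-0.5 * (diff / sigma) ** 2) / (sigma * np.sqrt(2.0 * np.pi))
    return gauss @ weights


def band_center(energy_grid, pdos):
    """First moment of the PDOS, i.e. its weighted mean energy (eV)."""
    return float(np.sum(energy_grid * pdos) / np.sum(pdos))


grid = np.linspace(-15.0, 5.0, 4001)                 # 5 meV spacing
# Hypothetical eigenvalues (eV, relative to E_F) and Tl-6s projection weights
eig_tl1 = np.array([-9.6, -9.4, -9.2, -4.0, -2.5])
w_tl1 = np.array([0.30, 0.35, 0.25, 0.05, 0.05])     # 6s weight concentrated in a deep band
eig_tl3 = np.array([-9.0, -7.5, -5.0, -3.0, -1.5])
w_tl3 = np.array([0.15, 0.20, 0.25, 0.20, 0.20])     # 6s weight spread into the bonding region

center_tl1 = band_center(grid, gaussian_pdos(eig_tl1, w_tl1, grid))
center_tl3 = band_center(grid, gaussian_pdos(eig_tl3, w_tl3, grid))
print(f"Tl 6s band center: Tl(I)-like {center_tl1:.2f} eV, Tl(III)-like {center_tl3:.2f} eV")
```

The second moment (band width) can be computed in the same way as a measure of how localized the 6s states are.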

Data Interpretation

- The calculated DOS is expected to show that the 6s states in Tl(III) compounds lie higher in energy and participate more in bonding, whereas in stable Tl(I) compounds they form a deep, localized band, consistent with their "inert" character.

- Because TlX and TlX₃ differ in stoichiometry, compare their stability through the decomposition energy ΔE = E(TlX) + E(X₂) - E(TlX₃) per formula unit, computed with the same functional and U. A negative ΔE confirms the higher stability of the lower oxidation state.

The logical flow of this computational investigation is summarized below.

Protocol 2: Probing Strong Correlation in Transition Metal Oxides via DFT+DMFT

This protocol applies to materials where strong correlation leads to dramatic phenomena, such as the Mott insulating behavior of NiO, which nonmagnetic standard DFT incorrectly predicts to be a metal [5].

Materials and Computational Reagents

Table 4: Research Reagent Solutions for Advanced Correlation Studies

| Item | Function & Specification |
|---|---|
| Wannier90 Code | Generates maximally localized Wannier functions (MLWFs) from DFT output. |
| DFT+DMFT Software | Runs the DFT+DMFT self-consistency and interfaces with impurity solvers. Examples: TRIQS, EDMFTF. |
| Continuous-Time Quantum Monte Carlo (CT-QMC) Solver | Used within DMFT to solve the quantum impurity model. |
| Double Counting Correction | Removes the part of the electron interactions already described by DFT. Common choice: "fully localized limit" (FLL). |
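For the FLL choice listed in the table, the double-counting energy and potential take a closed form for a paramagnetic correlated shell with N electrons (N↑ = N↓ = N/2): E_dc = U N(N − 1)/2 − J N(N − 2)/4 and V_dc = dE_dc/dN = U(N − 1/2) − J(N − 1)/2. The sketch below assumes rotationally averaged U and J. The d⁸ occupancy and the U = 8 eV, J = 1 eV inputs are placeholders for illustration, not recommended NiO parameters.

```python
def fll_double_counting(n_corr, u, j):
    """Fully-localized-limit double counting for a paramagnetic shell (N_up = N_dn = N/2).

    Returns (E_dc, V_dc) in the units of U and J:
      E_dc = U N (N - 1) / 2 - J N (N - 2) / 4
      V_dc = dE_dc/dN = U (N - 1/2) - J (N - 1) / 2
    """
    e_dc = u * n_corr * (n_corr - 1) / 2 - j * n_corr * (n_corr - 2) / 4
    v_dc = u * (n_corr - 0.5) - j * (n_corr - 1) / 2
    return e_dc, v_dc


# Illustrative Ni d^8 shell; U and J are placeholders, not fitted NiO values
e_dc, v_dc = fll_double_counting(n_corr=8.0, u=8.0, j=1.0)
print(f"E_dc = {e_dc:.2f} eV, V_dc = {v_dc:.2f} eV")
```

In practice, N is usually taken from the non-integer DFT (or updated DMFT) occupation of the correlated orbitals, and V_dc is subtracted from the Wannier Hamiltonian's on-site energies.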

Step-by-Step Procedure

Initial DFT Calculation

- Perform a high-precision, non-magnetic DFT calculation for the target material (e.g., NiO) using its experimental crystal structure.

- Confirm the incorrect metallic state in the DFT DOS as a baseline.

Wannier Hamiltonian Construction

- Use the Wannier90 code to construct a localized basis set (MLWFs) for the relevant transition metal d-orbitals [17].

- The output is a tight-binding Hamiltonian (H_ref) that accurately reproduces the DFT band structure near the Fermi level.

DMFT Self-Consistency Loop

- Define the Hubbard Model: Set up the Hamiltonian with the hopping parameters from H_ref and the electron-electron interaction term (Hubbard U, Hund's coupling J).

- Impurity Solver: Use the CT-QMC solver to compute the local Green's function for the impurity problem.

- Self-Consistency: The DMFT self-consistent loop updates the hybridization function until the local Green's function converges (typically 10⁻⁶ tolerance). This process maps the lattice problem onto a self-consistently determined quantum impurity problem and back.

Spectral Function and Property Analysis

- After convergence, compute the analytically continued spectral function to obtain the DFT+DMFT density of states.

- Analyze the spectral function for the opening of a Mott gap and the appearance of characteristic upper and lower Hubbard bands, which explain the insulating state.

- Calculate the momentum-resolved spectral function to compare with Angle-Resolved Photoemission Spectroscopy (ARPES) data.

The intricate workflow of the DFT+DMFT method, which is critical for accurately simulating such systems, is outlined below.

The inert pair effect, once a descriptive chemical curiosity, and the Mott insulating behavior of transition metal oxides are unified under the framework of strong electron correlation. The computational protocols detailed here, built on DFT+U and DFT+DMFT, provide researchers with a clear pathway to move beyond qualitative explanations. By quantitatively modeling the localization of electron pairs and the emergence of correlation-driven band gaps, these tools are indispensable for the rational design of next-generation materials, from tailored catalysts exploiting stable low-valent states to novel Mottronic devices.

A Practical Toolkit: Multireference, Local Correlation, and Quantum Computing Methods

The Complete Active Space Self-Consistent Field (CASSCF) method represents a cornerstone in quantum chemistry for treating systems with strong electron correlation. Developed by Björn Roos and colleagues in 1980, CASSCF provides a completely general approach for even-handed treatment of all types of electronic structures, independent of open shell character, spin multiplicity, or bond-breaking situations [27]. Unlike single-reference methods such as Hartree-Fock or Density Functional Theory (DFT), which often fail for multiconfigurational problems, CASSCF offers a robust framework for studying diradicals, transition metal complexes, excited states, and chemical reactions where multiple electronic configurations contribute significantly to the wavefunction [27] [28].

The fundamental strength of CASSCF lies in its ability to treat the nondynamical part of electron-electron correlation explicitly through a multideterminantal wavefunction [27]. This makes it particularly valuable for molecular systems where static correlation effects dominate, including bond dissociation processes, conical intersections in photochemistry, and open-shell systems that are prevalent in catalytic and biochemical processes [28]. As quantum chemistry expands into increasingly complex molecular systems and interacts with emerging fields like quantum computing and polaritonic chemistry, CASSCF continues to provide the foundational multireference description upon which more accurate treatments are built.

Theoretical Framework and Computational Protocol

CASSCF Wavefunction and Orbital Spaces

The CASSCF wavefunction is constructed as a linear combination of Configuration State Functions (CSFs) adapted to total spin S [29]:

\[ \left| \Psi_I^S \right\rangle = \sum_{k} C_{kI} \left| \Phi_k^S \right\rangle \]

The molecular orbital space is partitioned into three distinct subspaces [29]:

- Inactive orbitals: Doubly occupied in all CSFs

- Active orbitals: Variable occupation numbers across CSFs

- External orbitals: Unoccupied in all CSFs

The key variational parameters are the molecular orbital coefficients \(c_{\mu i}\) and the CI expansion coefficients \(C_{kI}\). The energy is made stationary with respect to variations in both sets of parameters, satisfying the conditions [29]:

\[ \frac{\partial E(\mathbf{c},\mathbf{C})}{\partial c_{\mu i}} = 0, \quad \frac{\partial E(\mathbf{c},\mathbf{C})}{\partial C_{kI}} = 0 \]
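Counting the CSFs in the expansion above shows why the active space is the bottleneck. The Weyl-Paldus formula gives the number of spin-adapted CSFs for N active electrons in n active orbitals with total spin S: N_CSF = (2S + 1)/(n + 1) · C(n + 1, N/2 − S) · C(n + 1, N/2 + S + 1). The short pure-Python sketch below compares this with the number of Slater determinants.

```python
from math import comb


def n_csfs(n_elec: int, n_orb: int, two_s: int) -> int:
    """Weyl-Paldus count of CSFs for CAS(n_elec, n_orb) with total spin S = two_s / 2."""
    if (n_elec - two_s) % 2 or two_s > n_elec or n_elec > 2 * n_orb:
        return 0
    low = (n_elec - two_s) // 2          # N/2 - S
    high = (n_elec + two_s) // 2 + 1     # N/2 + S + 1
    return (two_s + 1) * comb(n_orb + 1, low) * comb(n_orb + 1, high) // (n_orb + 1)


def n_determinants(n_elec: int, n_orb: int, two_ms: int = 0) -> int:
    """Number of Slater determinants with the given M_S in the active space."""
    n_alpha = (n_elec + two_ms) // 2
    n_beta = n_elec - n_alpha
    return comb(n_orb, n_alpha) * comb(n_orb, n_beta)


for n in (2, 6, 10, 14, 18):
    print(f"CAS({n},{n}): {n_csfs(n, n, 0):>14,d} singlet CSFs, {n_determinants(n, n):>16,d} determinants")
```

The factorial growth (about 4.5 × 10⁸ singlet CSFs already for CAS(18,18)) is why conventional CI solvers are limited to active spaces of roughly this size. DMRG-type solvers are used beyond it.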

Table 1: CASSCF Orbital Space Specifications

| Orbital Space | Electron Occupation | Indices | Role in Wavefunction |
|---|---|---|---|
| Inactive | Fixed double occupation | i, j, k, l | Core electron description |
| Active | Variable occupation (0-2) | t, u, v, w | Nondynamical correlation |
| External | Unoccupied | a, b, c, d | Virtual orbitals |

Active Space Selection Protocol

The selection of active electrons and orbitals constitutes the most critical step in CASSCF calculations. The procedure involves:

- Identify correlated regions: Locate molecular orbitals involved in bond breaking/forming, open-shell electrons, or near-degeneracies [29] [28]

- Determine active electrons: Count electrons participating in correlation effects

- Select active orbitals: Include orbitals with expected occupation numbers between 0.02 and 1.98 for optimal convergence [29]

- Validate selection: Verify active space provides balanced description of all states/geometries of interest
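The occupation-number criterion can be applied mechanically once approximate natural-orbital occupations are available, for example from a UHF, MP2, or small CASSCF calculation. The minimal sketch below assumes such occupations are given as a list. The values are hypothetical but mimic N₂ near equilibrium and recover a (6,6) active space.

```python
import numpy as np


def select_active_space(occupations, lower=0.02, upper=1.98):
    """Pick active orbitals whose natural occupations lie strictly between lower and upper.

    Orbitals with n >= upper are treated as inactive (doubly occupied), n <= lower as external.
    Returns (active orbital indices, number of inactive orbitals, number of active electrons).
    """
    occ = np.asarray(occupations, dtype=float)
    active = np.flatnonzero((occ > lower) & (occ < upper))
    n_inactive = int(np.count_nonzero(occ >= upper))
    n_electrons = int(round(occ.sum()))
    return active, n_inactive, n_electrons - 2 * n_inactive


# Hypothetical natural occupations for N2 (14 electrons), ordered by orbital index
occupations = [2.0, 2.0, 1.99, 1.985, 1.96, 1.93, 1.93, 0.07, 0.07, 0.04, 0.015, 0.01]
active, n_inactive, n_act_el = select_active_space(occupations)
print(f"active orbitals {active.tolist()}, {n_inactive} inactive, CAS({n_act_el},{len(active)})")
```

Orbitals just outside the window (here the 1.99 and 1.985 orbitals) are the ones to revisit when validating the selection across geometries. Their occupations can drift into the window as bonds stretch.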

For challenging systems, the following strategies are recommended:

- Use initial DFT or HF calculations to identify frontier orbitals

- Employ atomic orbital analysis for transition metal systems

- Consider state-averaging when multiple electronic states are involved

- Implement automated active space selection tools (e.g., AVAS, DMRG-SCF) for complex systems

Application Notes: CASSCF in Chemical Research

Strong Correlation in Molecular Systems

CASSCF has demonstrated exceptional capability for studying strongly correlated molecular systems. Recent applications include:

Transition Metal Complexes: CASSCF/CASPT2 provided one of the first successful descriptions of the chemical bond in Cr₂, addressing the complex interplay of covalent, ionic, and dispersion contributions [27]. For lanthanide and actinide compounds, CASSCF with spin-orbit coupling has revealed unique bonding in the U₂ dimer, leading to a renaissance of interest in fundamental chemical bonding concepts [27].

Photoreceptor Proteins: Polarizable embedding CASSCF/MM approaches have been applied to photoreceptors like the Dronpa variant of green fluorescent protein and the orange carotenoid protein [30]. These studies investigate how protein environments impact structural and photophysical properties of embedded chromophores, with particular attention to hydrogen-bonding interactions and polarization effects [30].

CASSCF for Quantum Error Mitigation

The multireference character of CASSCF has inspired novel quantum error mitigation (QEM) strategies for quantum computation of chemistry. Multireference-state error mitigation (MREM) extends reference-state error mitigation by systematically capturing quantum hardware noise in strongly correlated ground states using multireference states [31].

MREM employs Givens rotations to efficiently construct quantum circuits generating multireference states and uses compact wavefunctions composed of dominant Slater determinants [31]. This approach balances circuit expressivity and noise sensitivity, demonstrating significant improvements for molecular systems H₂O, N₂, and F₂ compared to original REM methods [31].

Table 2: CASSCF Performance in Quantum Error Mitigation

| Molecule | Active Space | REM Error (Hartree) | MREM Error (Hartree) | Improvement Factor |
|---|---|---|---|---|
| H₂O | (6,5) | 0.0124 | 0.0038 | 3.26× |
| N₂ | (6,6) | 0.0217 | 0.0062 | 3.50× |
| F₂ | (6,6) | 0.0341 | 0.0089 | 3.83× |
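The intuition behind MREM can be illustrated with a purely classical toy model. It is not the implementation of [31]. The sketch assumes three things: (i) a hypothetical 3-determinant CI Hamiltonian; (ii) a single real Givens rotation that mixes the Hartree-Fock determinant with a doubly excited one to form the compact multireference state; and (iii) a global depolarizing channel of equal strength p on the reference and target circuits. In practice the deeper multireference circuit is noisier, which is the expressivity/noise trade-off noted above. Under these assumptions, the (M)REM-corrected energy is off by exactly p(E_ref − E_target), so a reference closer to the target leaves a smaller residual error.

```python
import numpy as np

# Hypothetical 3-determinant CI Hamiltonian (Hartree) in the basis |HF>, |D1>, |D2>
H = np.array([[-1.00, 0.30, 0.05],
              [0.30, -0.60, 0.10],
              [0.05, 0.10, 0.20]])


def energy(psi):
    return float(psi @ H @ psi)


def givens(theta, i=0, j=1, dim=3):
    """Real Givens rotation mixing basis states i and j."""
    g = np.eye(dim)
    c, s = np.cos(theta), np.sin(theta)
    g[i, i], g[j, j], g[i, j], g[j, i] = c, c, -s, s
    return g


hf = np.array([1.0, 0.0, 0.0])
thetas = np.linspace(-np.pi / 2, np.pi / 2, 4001)
theta_opt = thetas[np.argmin([energy(givens(t) @ hf) for t in thetas])]
mr = givens(theta_opt) @ hf                   # compact two-determinant reference
e_target = np.linalg.eigvalsh(H)[0]           # ideal energy of the target circuit

p = 0.05                                      # assumed global depolarizing strength
e_mixed = np.trace(H) / H.shape[0]            # energy of the maximally mixed state


def noisy(e_ideal):
    return (1 - p) * e_ideal + p * e_mixed


def mitigate(e_ref):
    """(M)REM: shift the noisy target by the error measured on a classically solvable reference."""
    return noisy(e_target) - (noisy(e_ref) - e_ref)


err_rem = abs(mitigate(energy(hf)) - e_target)
err_mrem = abs(mitigate(energy(mr)) - e_target)
print(f"REM error  (HF reference):    {err_rem:.2e} Ha")
print(f"MREM error (2-det reference): {err_mrem:.2e} Ha")
```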

Real-Time Electron Dynamics

Real-time CASSCF (Ehrenfest) dynamics enables modeling of electron dynamics in organic semiconductors, providing mechanistic insight at the electronic structure level [32]. This approach couples all-electron dynamics to classical nuclear dynamics for studying charge carrier dynamics, spin density dynamics, and the effects of crystal structure on charge migration [32].

Applications to π-stacked ethylene models and bisdithiazolyl/bisdiselenazolyl radicals have revealed that charge migration cannot propagate across entire systems with molecular slippage; instead, coherence is limited to 3 molecular units [32]. This has profound implications for designing organic semiconductors with enhanced charge transport properties.

Advanced Methodologies and Extensions

CASSCF/MM with Polarizable Force Fields

The integration of CASSCF with polarizable molecular mechanics (MM) force fields like AMOEBA enables realistic modeling of molecules in complex environments [30]. The Lagrangian formulation incorporates mutual polarization between QM and MM regions [30]:

\[ L(\kappa, c, \mu_d, \mu_p) = E_{\mathrm{CAS}}(\kappa, c) + E_{\mathrm{self}}(M) + E_{\mathrm{ele}}(\kappa, c, M) + E_{\mathrm{pol}}(\kappa, c, M) + \frac{1}{2}\langle \mu_p, T\mu_d - E(\kappa, c) - E_d(M) \rangle \]

This approach accounts for environment polarization effects on CASSCF energies and gradients, which is particularly important for excited states and charge transfer processes [30]. The implementation in frameworks like OpenMMPol coupled with CFour provides analytical gradients for geometry optimizations of ground and excited states [30].

QED-CASSCF for Polaritonic Chemistry

The recent extension of CASSCF to quantum electrodynamics environments (QED-CASSCF) enables investigation of molecules strongly interacting with quantized electromagnetic fields in optical cavities [28]. This approach captures how multireference effects are induced or reduced by quantum fields, opening possibilities for manipulating molecular properties through non-intrusive field controls [28].

QED-CASSCF is particularly valuable for studying polariton formation, where photons and molecular states hybridize, generating new states with mixed molecular and photonic character [28]. The method has been tested on benchmark multireference problems and applied to investigate field-induced effects on electronic structure in multiconfigurational processes [28].

Hybrid Quantum Computing Approaches

CASSCF concepts are being adapted for hybrid quantum-classical computing pipelines in drug discovery [33]. These approaches use variational quantum eigensolvers (VQE) to prepare molecular wavefunctions on quantum devices, with CASCI energies serving as exact solutions under active space approximations [33].

Applications include precise determination of Gibbs free energy profiles for prodrug activation involving covalent bond cleavage and simulation of covalent bond interactions in drug-target systems like KRAS inhibitors [33]. This demonstrates the potential for quantum computing to enhance computational drug discovery for complex electronic structures.

Table 3: Research Reagent Solutions for CASSCF Calculations

| Tool/Category | Specific Examples | Function/Purpose |
|---|---|---|
| Software Packages | ORCA, CFour, MOLCAS, Gaussian | Implement CASSCF with various CI solvers and extensions |
| Active Space Tools | AVAS, DMRG-SCF, ICE-CI | Assist in active space selection and handle large active spaces |
| QM/MM Frameworks and Force Fields | OpenMMPol, AMOEBA | Enable polarizable embedding for complex environments |
| Analysis Utilities | Molden, Jupyter notebooks | Orbital visualization and computational data analysis |
| Quantum Computing | VQE, MREM | Quantum error mitigation and hybrid algorithms |

Computational Protocols

Standard CASSCF Optimization Protocol

Initial Orbital Generation:

- Obtain starting orbitals from HF or DFT calculations

- Transform to appropriate basis for active space selection

- For excited states, consider starting from orbitals of a preliminary state-averaged calculation that describes all target states

Active Space Definition:

- Select active electrons and orbitals based on chemical intuition

- Verify that active-orbital occupation numbers will fall between 0.02 and 1.98

- For problematic cases, use automated selection tools

Wavefunction Optimization:

- Employ a super-CI algorithm for orbital optimization

- Use a direct CI solver for the full configuration interaction problem within the active space

- Monitor convergence of energy, gradients, and density matrices
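The convergence-monitoring step amounts to a check over three criteria. The threshold values and the `ConvergenceThresholds` container below are assumptions for illustration. Production codes such as those listed in Table 3 define their own criteria and defaults.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ConvergenceThresholds:
    """Hypothetical thresholds; real programs ship their own defaults."""
    energy: float = 1e-8     # hartree, change between macroiterations
    gradient: float = 1e-5   # norm of the orbital-rotation gradient
    density: float = 1e-6    # largest change in an active-space 1-RDM element


def check_convergence(e_prev, e_curr, grad_norm, rdm_prev, rdm_curr,
                      thr=ConvergenceThresholds()):
    """Return (converged, per-criterion report) for one CASSCF macroiteration."""
    d_energy = abs(e_curr - e_prev)
    d_density = max(
        abs(a - b)
        for row_prev, row_curr in zip(rdm_prev, rdm_curr)
        for a, b in zip(row_prev, row_curr)
    )
    report = {
        "energy": d_energy < thr.energy,
        "gradient": grad_norm < thr.gradient,
        "density": d_density < thr.density,
    }
    return all(report.values()), report


# Hypothetical values from two successive macroiterations.
rdm_old = [[1.95, 0.01], [0.01, 0.05]]
rdm_new = [[1.9500002, 0.01], [0.01, 0.0499998]]
ok, report = check_convergence(-109.2500000, -109.2500000049, 3e-6, rdm_old, rdm_new)
print(ok, report)
```

Requiring all three criteria guards against a stalled energy that hides a still-changing orbital or density.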

Analysis and Validation:

- Examine natural orbitals and occupation numbers

- Check that the active space and results remain consistent across geometries

- Compare with experimental or higher-level computational data

CASSCF/MM with Polarizable Embedding Protocol

System Preparation:

- Partition the system into QM (CASSCF) and MM (AMOEBA) regions

- Define link-atom handling if the QM/MM boundary cuts covalent bonds

- Generate the MM topology with polarizable parameters

Lagrangian Implementation:

Self-Consistent Optimization:

- Solve the CASSCF equations in the presence of the polarizable environment

- Update the induced dipoles based on the current QM density

- Iterate until the QM and MM components are mutually converged
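The MM half of this loop, updating induced dipoles until they are self-consistent, can be sketched with point polarizabilities. This is a minimal sketch under stated assumptions. The QM field `e_qm` at each site is held fixed here, whereas a real CASSCF/MM cycle recomputes it from the current CASSCF density at every macroiteration. The Thole damping and permanent multipoles used by AMOEBA are omitted. All quantities are in atomic units, and the function names are illustrative.

```python
def dipole_field_tensor(r_i, r_j):
    """T_ij = (3 r r^T - |r|^2 I) / |r|^5 with r = r_i - r_j (atomic units)."""
    r = [a - b for a, b in zip(r_i, r_j)]
    r2 = sum(x * x for x in r)
    r5 = r2 ** 2.5
    return [[(3.0 * r[a] * r[b] - (r2 if a == b else 0.0)) / r5
             for b in range(3)] for a in range(3)]


def solve_induced_dipoles(sites, alphas, e_qm, tol=1e-10, max_iter=500):
    """Jacobi iteration for mu_i = alpha_i (E_qm,i + sum_{j != i} T_ij mu_j)."""
    n = len(sites)
    tensors = {(i, j): dipole_field_tensor(sites[i], sites[j])
               for i in range(n) for j in range(n) if i != j}
    # Initial guess: direct polarization by the QM field only.
    mu = [[alphas[i] * e for e in e_qm[i]] for i in range(n)]
    for iteration in range(1, max_iter + 1):
        new_mu = []
        for i in range(n):
            field = list(e_qm[i])
            for j in range(n):
                if j == i:
                    continue
                t = tensors[(i, j)]
                for a in range(3):
                    field[a] += sum(t[a][b] * mu[j][b] for b in range(3))
            new_mu.append([alphas[i] * f for f in field])
        change = max(abs(x - y) for new, old in zip(new_mu, mu) for x, y in zip(new, old))
        mu = new_mu
        if change < tol:
            return mu, iteration
    raise RuntimeError("induced dipoles did not converge; check geometry or damping")


# Hypothetical pair of polarizable sites 4 bohr apart in a uniform QM field.
mu, n_iter = solve_induced_dipoles(
    [(0.0, 0.0, 0.0), (0.0, 0.0, 4.0)], [1.0, 1.0],
    [(0.0, 0.0, 0.01), (0.0, 0.0, 0.01)])
print(mu, n_iter)
```

For this symmetric pair the iteration should converge to the analytic result μ_z = αE/(1 − 2α/R³). Without damping, sites that are too close can make the iteration diverge.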

CASSCF methodology continues to evolve, addressing increasingly complex chemical problems while integrating with emerging computational paradigms. Future developments will likely focus on:

- Handling larger active spaces through efficient CI solvers and density matrix renormalization group (DMRG) techniques

- Improved treatments of dynamical correlation through multireference perturbation theory (e.g., CASPT2) and multireference coupled cluster methods

- Tighter integration with quantum computing platforms for enhanced simulation capabilities

- Advanced embedding schemes for complex biological and materials systems

The foundational role of CASSCF in addressing strong correlation problems ensures its continued relevance as quantum chemistry expands to tackle more challenging chemical systems and processes.

Multireference Configuration Interaction (MRCI) is a cornerstone of high-accuracy quantum chemistry, providing robust solutions for molecular systems where single-reference methods fail. These strongly correlated systems—characterized by nearly degenerate electronic configurations—include diradicals, transition metal complexes, dissociative structures, and molecules at conical intersections [34]. MRCI addresses this challenge by constructing wavefunctions from multiple reference determinants, simultaneously capturing nondynamic (static) and dynamic electron correlation effects that are crucial for quantitative accuracy [35] [34].

The method has evolved significantly since its initial development by Buenker and Peyerimhoff in the 1970s as Multi-Reference Single and Double Configuration Interaction (MRSDCI) [36]. Subsequent innovations, such as the internally contracted MRCI by Werner and Knowles, streamlined the methodology and expanded its applicability [36] [37]. Today, MRCI remains the gold standard for calculating accurate potential energy surfaces, excitation energies, and spectroscopic properties for small molecules and complex systems containing heavy elements [37] [38].

Theoretical Foundation and Computational Approaches

Core Methodology of MRCI

The MRCI method expands the electronic wavefunction as a linear combination of Slater determinants generated by exciting electrons from a set of reference configurations. In practice, the expansion is typically truncated at single and double excitations (MRCISD), providing a favorable balance between accuracy and computational cost [36] [34]. The references are usually selected from a prior Complete Active Space Self-Consistent Field (CASSCF) calculation that describes the static correlation.
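The notion of "all single and double excitations from a set of references" can be made concrete by enumerating the uncontracted MRCISD space as a union of per-reference excitation sets. The sketch below works with spin-orbital determinants represented as sets of occupied indices. As simplifying assumptions, it ignores spin adaptation to configuration state functions, spatial symmetry, frozen cores, and the selection schemes used by real programs. The reference occupations are hypothetical.

```python
from itertools import combinations


def singles_and_doubles(reference, n_spin_orbitals):
    """Determinants reachable from one reference by 0, 1 or 2 substitutions.

    A determinant is a frozenset of occupied spin-orbital indices.
    """
    ref = frozenset(reference)
    occupied = sorted(ref)
    virtual = sorted(set(range(n_spin_orbitals)) - ref)
    space = {ref}
    for rank in (1, 2):
        for holes in combinations(occupied, rank):
            for particles in combinations(virtual, rank):
                space.add((ref - frozenset(holes)) | frozenset(particles))
    return space


def mrcisd_space(references, n_spin_orbitals):
    """Union of the SD spaces of all references (duplicates counted once)."""
    space = set()
    for ref in references:
        space |= singles_and_doubles(ref, n_spin_orbitals)
    return space


# Hypothetical two-reference case: 4 electrons in 12 spin-orbitals,
# the second reference being a double excitation of the first.
refs = [{0, 1, 2, 3}, {0, 1, 4, 5}]
print(len(singles_and_doubles(refs[0], 12)), len(mrcisd_space(refs, 12)))
```

The union is smaller than the sum of the per-reference spaces because the spaces overlap. It also contains determinants that are triple or quadruple excitations relative to any single reference, which is how MRCISD reaches beyond single-reference CISD.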

A critical aspect of any MRCI implementation is how the configuration interaction space is handled. Two primary approaches exist:

- Internally contracted (ic) MRCI: Constructs the CI expansion in a basis contracted with respect to the reference functions, significantly reducing the number of configuration state functions (CSFs) [37].

- Uncontracted MRCI: Uses all configurations explicitly, as implemented in the COLUMBUS program package utilizing the Graphical Unitary Group Approach (GUGA) for efficient Hamiltonian matrix element evaluation [37].

Addressing Methodological Challenges

Despite its accuracy, conventional MRCI faces two significant challenges:

Size Inconsistency: Like all truncated CI methods, MRCI is not size-consistent: the energy computed for two infinitely separated fragments treated as a single system does not equal the sum of the fragment energies computed separately [36] [35]. This limitation can be partially mitigated by corrections such as the Davidson correction (+Q), which approximates the effect of unlinked quadruple excitations [35].
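For a multireference expansion, the Davidson correction is commonly written as ΔE_Q = (1 − Σ_ref c_i²)(E_MRCISD − E_ref), where the sum runs over the reference configurations in the normalized MRCISD wavefunction. Below is a minimal sketch with hypothetical energies and reference weight. Several variants of the correction exist (for example, renormalized forms), and this sketch implements only the basic one.

```python
def davidson_correction(e_ref, e_mrcisd, ref_weight):
    """Basic multireference Davidson (+Q) correction.

    e_ref: energy of the reference (e.g. CASSCF) wavefunction, hartree
    e_mrcisd: MRCISD energy, hartree
    ref_weight: sum of squared reference coefficients in the normalized
                MRCISD wavefunction
    Returns (E_MRCISD+Q, delta_E_Q).
    """
    if not 0.0 < ref_weight <= 1.0:
        raise ValueError("reference weight must lie in (0, 1]")
    delta_q = (1.0 - ref_weight) * (e_mrcisd - e_ref)
    return e_mrcisd + delta_q, delta_q


# Hypothetical energies (hartree) and reference weight.
e_q, dq = davidson_correction(e_ref=-109.10, e_mrcisd=-109.35, ref_weight=0.92)
print(f"+Q shift: {dq:.4f} Eh, MRCISD+Q: {e_q:.4f} Eh")
```

The correction grows as the reference weight drops, which is also why it becomes less reliable for very large systems or poor reference spaces.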

Computational Scaling: The computational cost of MRCISD scales steeply with system size, limiting applications to smaller molecules unless approximations are introduced [35]. Modern approaches address this through:

- Parallel computing algorithms for constructing potential energy surfaces [39].

- Local correlation methods that exploit the short-range nature of dynamic correlation.

- Integration with density matrix renormalization group (DMRG) for handling large active spaces [40] [41].
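The combinatorial growth that makes large active spaces expensive is easy to quantify. A CAS with n electrons in N orbitals contains C(N, n_α)·C(N, n_β) determinants for a given M_S. The sketch below counts them; the helper name is illustrative. Spin adaptation and point-group symmetry reduce these numbers but not their combinatorial growth, which is why DMRG-based solvers are used for the largest active spaces.

```python
from math import comb


def n_cas_determinants(n_electrons, n_orbitals, two_s=0):
    """Number of Slater determinants with M_S = S in a CAS(n_electrons, n_orbitals).

    two_s is 2S (0 for singlets, 2 for triplets, ...). Spin adaptation to CSFs
    and spatial symmetry, which reduce the count, are ignored here.
    """
    if (n_electrons + two_s) % 2:
        raise ValueError("n_electrons and 2S must have the same parity")
    n_alpha = (n_electrons + two_s) // 2
    n_beta = n_electrons - n_alpha
    if n_beta < 0 or n_alpha > n_orbitals:
        raise ValueError("spin state does not fit in the active space")
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


for n in (8, 12, 16, 20):
    print(f"CAS({n},{n}): {n_cas_determinants(n, n):,} determinants")
```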

Performance Data and Method Comparison

The quantitative performance of MRCI methods is well established across various chemical systems. Table 1 summarizes the main characteristics of common MRCI variants, and Table 2 lists representative applications.

Table 1: Performance Characteristics of MRCI Method Variants

| Method | Computational Scaling | Key Features | Typical Applications | Limitations |
|---|---|---|---|---|
| MRCISD | Very high | High accuracy for excited states and bond breaking [36] [35] | Potential energy surfaces for small molecules [35] | Size inconsistency; High computational cost [35] |
| MRCI+Q | Very high (similar to MRCISD) | Davidson correction improves size consistency [35] | Transition metal complexes; Diradicals | Empirical correction; Variable performance |
| DMRG-MRCI | High | Combines DMRG active space with MRCI correlation [40] [41] | Large active spaces (>30 orbitals) [41] | Implementation complexity; Reference reconstruction |
| MR-AQCC | High | Size-extensive modification of MRCI [37] | Multistate dynamics; Analytic gradients | Less established than MRCISD |

Table 2: Representative MRCI Applications and Results

| System | Method | Key Results | Reference |
|---|---|---|---|
| GeB molecule | CASSCF/MRCISD | Characterized 17 doublet/quartet states; ground state ⁴Σ⁻; Dₑ = 2.97 eV | [38] |
| Cr₂ | DMRG-ec-MRCI | Accurate potential curve for challenging dimer | [41] |
| n-Acenes | DMRG-ec-MRCI | Singlet-triplet gaps in large conjugated systems | [41] |
| Heme enzymes | DDCI+Q | A2u/A1u gap ~1.9 kcal/mol | [35] |

Computational Protocols

Standard MRCI Protocol for Diatomic Molecules

This protocol outlines the characterization of low-lying electronic states for diatomic molecules like GeB [38]:

Reference Space Selection

- Use the Wigner-Witmer rules to determine which molecular states correlate with the relevant dissociation channels, and identify their dominant configurations.

- Perform a CASSCF calculation with an active space encompassing the valence molecular orbitals.

- For GeB, use a quintuple-ζ basis set with relativistic effective core potentials.

MRCI Calculation Setup

- Generate all single and double excitations from the reference configurations.

- Include scalar relativistic effects via the Douglas-Kroll-Hess Hamiltonian.

- For spin-orbit coupling, use a state-interaction approach with the full MRCI wavefunctions.

Property Evaluation

- Compute potential energy curves by scanning the internuclear distance.

- Extract spectroscopic parameters (Tₑ, ωₑ, rₑ) by fitting the curves to Morse potentials or Dunham expansions.

- Evaluate transition properties (Einstein coefficients, radiative lifetimes) from the transition dipole moments.
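The last two property steps can be illustrated with two small pieces. The first is a harmonic estimate of rₑ and ωₑ from three points near the minimum of a computed curve. The second is the standard expression for the Einstein A coefficient, A = ω³|μ|²/(3πε₀ħc³), evaluated from a transition energy and transition dipole moment. This is a sketch under stated assumptions. A three-point parabola replaces the Morse or Dunham fit described above, so no anharmonicity is obtained. Degeneracy factors for Λ-doubled or spin-orbit components are omitted, and the function names and example inputs are hypothetical. Constants are CODATA 2018 values.

```python
import math

HARTREE_TO_CM = 219474.63136320  # 1 hartree in cm^-1
AMU_TO_ME = 1822.888486209       # 1 dalton in electron masses

# SI constants (CODATA 2018)
H_PLANCK = 6.62607015e-34        # J s
HBAR = H_PLANCK / (2.0 * math.pi)
C_LIGHT = 299792458.0            # m s^-1
EPS0 = 8.8541878128e-12          # F m^-1
E_CHARGE = 1.602176634e-19       # C
BOHR = 5.29177210903e-11         # m


def harmonic_constants(points, mu_amu):
    """Parabola through three (r [bohr], E [hartree]) points around the minimum.

    Returns (r_e in bohr, omega_e in cm^-1); harmonic estimate only.
    """
    (r0, e0), (r1, e1), (r2, e2) = points
    denom = (r0 - r1) * (r0 - r2) * (r1 - r2)
    a = (r2 * (e1 - e0) + r1 * (e0 - e2) + r0 * (e2 - e1)) / denom
    b = (r2**2 * (e0 - e1) + r1**2 * (e2 - e0) + r0**2 * (e1 - e2)) / denom
    if a <= 0.0:
        raise ValueError("points do not bracket a minimum")
    r_e = -b / (2.0 * a)
    force_constant = 2.0 * a                      # hartree / bohr^2
    omega_au = math.sqrt(force_constant / (mu_amu * AMU_TO_ME))
    return r_e, omega_au * HARTREE_TO_CM


def einstein_a(delta_e_cm, tdm_au):
    """A = omega^3 |mu|^2 / (3 pi eps0 hbar c^3) in s^-1 (no degeneracy factors)."""
    omega = 2.0 * math.pi * C_LIGHT * delta_e_cm * 100.0   # angular frequency, rad/s
    mu_si = tdm_au * E_CHARGE * BOHR                       # C m
    return omega**3 * mu_si**2 / (3.0 * math.pi * EPS0 * HBAR * C_LIGHT**3)


def radiative_lifetime(a_coefficients):
    """tau = 1 / (sum of A coefficients to all lower states), in s."""
    return 1.0 / sum(a_coefficients)


# Hypothetical inputs: three points on a computed curve and one transition.
r_e, w_e = harmonic_constants([(3.9, -0.1210), (4.0, -0.1230), (4.1, -0.1225)], mu_amu=9.4)
a21 = einstein_a(delta_e_cm=12000.0, tdm_au=0.5)
print(f"r_e = {r_e:.3f} bohr, omega_e = {w_e:.1f} cm^-1, tau = {radiative_lifetime([a21]):.3e} s")
```

As a sanity check, `einstein_a` reproduces the familiar shortcut A ≈ 2.026 × 10⁻⁶ ν̃³|μ|² s⁻¹, with ν̃ in cm⁻¹ and μ in atomic units.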

Data Analysis

- Apply the Davidson correction (+Q) to approximately restore size consistency.

- Analyze the wavefunction composition to characterize each state.

- Compare with experimental data or isovalent systems (BSi, BC) for validation.

DMRG-ec-MRCI Protocol for Large Active Spaces

This specialized protocol integrates DMRG with MRCI for systems requiring large active spaces [41]:

DMRG Reference Calculation

- Perform a DMRG calculation with a large active space (e.g., 30 to 50 orbitals).

- Use the entropy-driven genetic algorithm (EDGA) to reconstruct a compact CASCI-type reference from the DMRG wavefunction.

External Contraction Scheme

- Generate the MRCI expansion from the reconstructed references.

- Apply external contraction (ec) to avoid computing high-order reduced density matrices.

Dynamic Correlation Treatment

- Use Epstein-Nesbet partitioning for the external-space Hamiltonian.

- Iteratively solve for the wave-operator matrices that connect the primary and external spaces.
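Epstein-Nesbet partitioning uses the diagonal elements H_kk as the zeroth-order energies of external configurations. The sketch below shows this partitioning in its simplest setting: a second-order correction E2 = Σ_k |⟨Ψ0|H|k⟩|² / (E0 − H_kk) for a fixed, normalized multireference primary vector. It is not the iterative wave-operator solver of DMRG-ec-MRCI [41]. The function name and the small Hamiltonians in the usage line are hypothetical.

```python
def en_pt2(h, ref_coeffs, tol=1e-8):
    """Second-order Epstein-Nesbet energy for a fixed primary-space vector.

    h: real symmetric Hamiltonian (list of lists); the first len(ref_coeffs)
       basis states span the primary (P) space, the rest the external space.
    ref_coeffs: normalized reference vector in the P space.
    Returns (E0, E2).
    """
    p = len(ref_coeffs)
    norm = sum(c * c for c in ref_coeffs)
    if abs(norm - 1.0) > tol:
        raise ValueError("reference coefficients must be normalized")
    e0 = sum(ref_coeffs[i] * h[i][j] * ref_coeffs[j] for i in range(p) for j in range(p))
    e2 = 0.0
    for k in range(p, len(h)):
        coupling = sum(ref_coeffs[i] * h[i][k] for i in range(p))  # <Psi0|H|k>
        # EN partitioning: the zeroth-order energy of |k> is its diagonal H_kk.
        e2 += coupling ** 2 / (e0 - h[k][k])
    return e0, e2


# Hypothetical single-reference and two-reference examples.
print(en_pt2([[0.0, 0.1], [0.1, 1.0]], [1.0]))
print(en_pt2([[0.0, 0.0, 0.1], [0.0, 0.0, 0.1], [0.1, 0.1, 1.0]], [2 ** -0.5, 2 ** -0.5]))
```

When an external diagonal H_kk approaches E0 the denominator nearly vanishes (an intruder state), which is one motivation for the iterative, non-perturbative treatment used in ec-MRCI.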

Result Validation

- Compare with conventional MRCI for small systems where it remains feasible.

- Check convergence with active space size and number of reference configurations.

Workflow Visualization

Figure 1: Standard MRCI calculation workflow for molecular systems, illustrating the sequential steps from initial structure to final results.

The Scientist's Toolkit

Table 3: Essential Computational Tools for MRCI Calculations

| Tool/Component | Function | Implementation Notes |
|---|---|---|
| CASSCF | Determines reference space and orbitals | Prerequisite for most MRCI calculations [38] |
| GUGA (Graphical Unitary Group Approach) | Efficiently handles CI Hamiltonian matrix elements [37] | Core of COLUMBUS program package [37] |
| Davidson Correction (+Q) | Approximates quadruple excitations for size consistency [35] | Empirical correction; suffix "+Q" or "(Q)" [35] |
| DMRG Integration | Handles large active spaces beyond conventional CAS [40] [41] | Uses entropy-driven genetic algorithm for reference reconstruction [41] |
| Analytic Gradients | Calculates energy derivatives for geometry optimization [37] | Available in COLUMBUS for MRCI and MR-AQCC [37] |

Emerging Developments and Future Directions

The field of MRCI methodology continues to evolve with several promising directions:

Hybrid DMRG-MRCI Methods: New approaches like DMRG-ec-MRCI bypass the bottleneck of computing high-order reduced density matrices by reconstructing compact reference wavefunctions from DMRG solutions [41]. This enables treatment of active spaces with over 30 orbitals and large basis sets, as demonstrated in applications to Cr₂ and higher n-acenes.

Efficient Parallel Algorithms: Recent developments focus on parallel procedures for constructing potential energy surfaces, moving beyond traditional sequential calculations to leverage modern computing architectures [39]. These approaches maintain reliability while significantly improving computational efficiency for mapping complex electronic landscapes.

Extended Applications: MRCI methods are increasingly applied to complex systems including lanthanide and actinide compounds through fully variational uncontracted spin-orbit MRCI implementations [37]. The availability of analytic nonadiabatic couplings further enables sophisticated studies of nonadiabatic dynamics and diabatization procedures.

Local Correlation Approaches: To address the steep computational scaling, local electron correlation MRCI methods are being developed that exploit the short-range nature of dynamic correlation, promising to extend the applicability of MRCI to larger molecular systems.

These advances collectively push the boundaries of MRCI applications, making highly accurate calculations possible for increasingly complex and larger molecular systems in both ground and excited states.

Multireference perturbation theories are a cornerstone of modern quantum chemistry and rank among its most accurate methods for systems with significant static and dynamical electron correlation. They are particularly indispensable for investigating entire potential energy surfaces, bond dissociation processes, and excited electronic states, where single-reference methods fail. Their strength lies in a hybrid variational-perturbational formulation: a smaller reference set is treated variationally, and large numbers of configuration state functions (CSFs) are then included perturbatively, capturing large amounts of both dynamical and static correlation. [42]

In the hierarchy of quantum chemical methods, multireference perturbation theories occupy a crucial niche between purely variational methods like multireference configuration interaction (MRCI) and more approximate single-reference approaches. Unlike MRCI, which treats all configurations variationally and whose computational demand grows steeply with the size of the reference and orbital spaces, perturbative methods offer a more computationally efficient pathway to high accuracy. This efficiency arises from treating higher excitations through perturbation theory rather than full variational optimization, making these methods applicable to a much wider range of chemical problems, including complex systems with delocalized electrons, multi-radicals, and transition metal complexes. [42]