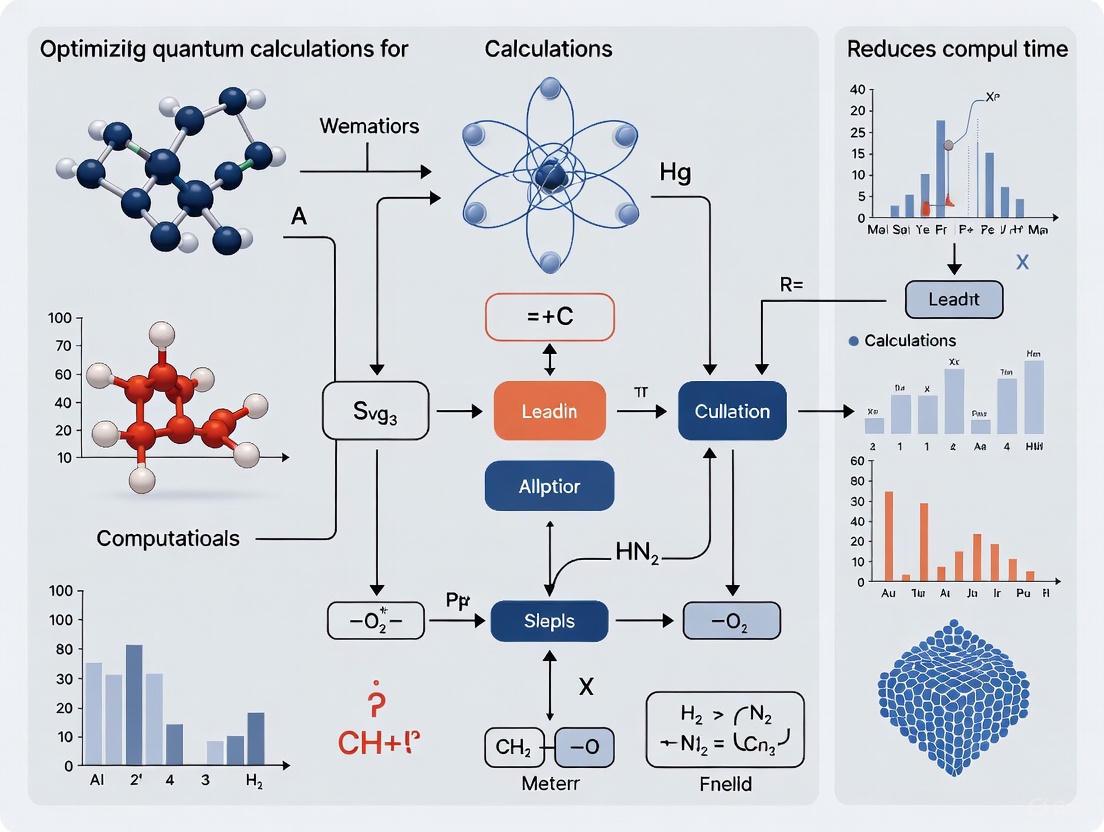

Accelerating Discovery: Strategies to Optimize Quantum Chemical Calculations and Reduce Computational Time

Abstract

This article provides researchers, scientists, and drug development professionals with a comprehensive guide to the latest strategies for reducing the computational time of quantum chemical calculations. We explore the foundational shift from purely classical to hybrid quantum-classical computing, detailing breakthroughs in quantum hardware and error correction. The piece offers a practical examination of cutting-edge methodological approaches, including variational algorithms and deep learning-inspired techniques, and provides actionable insights for troubleshooting noise and resource bottlenecks. Finally, we present a comparative analysis of current quantum and quantum-inspired solutions, validating their performance against classical methods and outlining a future where these tools significantly accelerate biomedical research and clinical application development.

The Quantum Leap: From Classical Bottlenecks to Hybrid Computing Foundations

Electron correlation refers to the interaction between electrons in a quantum system that goes beyond the simple mean-field approximation. In essence, it measures how much the movement of one electron is influenced by the presence of all other electrons [1]. Accurately capturing these correlated electron motions is one of the most significant challenges in computational chemistry and physics.

The correlation energy is formally defined as the difference between the exact energy of a system (within the Born-Oppenheimer approximation) and the energy calculated by the Hartree-Fock (HF) method [1] [2]. While the HF method provides a good starting point and accounts for Pauli correlation (preventing electrons with parallel spin from occupying the same point in space), it neglects Coulomb correlation—the correlation in electron positions due to their electrostatic repulsion [1]. This missing correlation is crucial for predicting chemically important phenomena, including London dispersion forces, reaction energies, and the properties of transition metal complexes [1] [2].
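
In symbols, the definition above can be written as follows. The labels are notation chosen here for illustration: E_exact is the exact non-relativistic Born-Oppenheimer energy and E_HF is the Hartree-Fock energy, both taken in the same basis. Because HF is variational, the correlation energy defined this way is never positive.

```latex
E_{\text{corr}} = E_{\text{exact}} - E_{\text{HF}} \le 0
```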

- Dynamical Correlation arises from the local, short-range repulsion between electrons as they avoid each other. It can be systematically approximated by including excited electron configurations from a single reference state [3].

- Static (or Non-Dynamical) Correlation becomes important when a system's ground state cannot be described by a single dominant electron configuration, often occurring in molecules with (near-)degenerate frontier orbitals, such as diradicals or in bond-breaking situations [1]. Treating such systems requires a multi-reference description from the outset.

Neglecting these effects, as the standard HF method does, leads to substantial inaccuracies in predicting key molecular properties like bond lengths, vibrational frequencies, and binding energies [2]. Overcoming this "Computational Wall" is therefore essential for achieving chemical accuracy in simulations.

Troubleshooting Guides: Identifying and Solving Correlation Problems

Guide 1: Diagnosing Common Symptoms of Poor Electron Correlation Treatment

| Symptom | Underlying Cause | Recommended Solution |
|---|---|---|
| Systematically underestimated binding energies (e.g., for non-covalent interactions) [4] | HF's neglect of long-range dispersion forces, a direct consequence of missing dynamic correlation [4]. | Apply empirical dispersion corrections (e.g., D3, D4) [5] or switch to a method that describes dispersion, such as CCSD(T) or MP2 [4]. |
| Inaccurate dissociation curves (e.g., bond breaking gives qualitatively wrong results) | Lack of static correlation; a single determinant reference state is insufficient [1]. | Use a multi-reference method like MCSCF or CASSCF as a starting point [1]. |
| Large errors in reaction energies [2] | Inadequate treatment of correlation energy changes during bond formation/breaking. | Employ a correlated method like CCSD(T) or a high-level DFT functional for the entire reaction pathway [2]. |
| Poor prediction of electronic spectra | Imbalanced treatment of correlation between ground and excited states [1]. | Use multi-reference configuration interaction (MRCI) or high-level coupled-cluster (e.g., EOM-CCSD) methods [1]. |

Guide 2: Selecting the Right Computational Method

Choosing an appropriate method is critical to overcoming the computational wall. The table below compares the scalability and applicability of common approaches.

| Method | Handles Dynamical Correlation? | Handles Static Correlation? | Computational Scaling | Ideal Use Case |
|---|---|---|---|---|
| Hartree-Fock (HF) [4] [2] | No (only Pauli correlation) | No | O(N⁴) | Fast baseline calculation; starting point for post-HF methods. |
| Density Functional Theory (DFT) [6] [4] | Yes (approximate, via XC functional) | Limited (standard functionals fail for strong correlation) | O(N³) to O(N⁴) | Workhorse for large molecules (100-500 atoms); ground-state properties [4]. |
| Møller-Plesset Perturbation (MP2) [1] | Yes (2nd order perturbation) | No | O(N⁵) | Accounting for dispersion interactions at a lower cost than CCSD(T). |
| Coupled Cluster (CCSD(T)) [5] [2] | Yes (highly accurate) | No (single-reference) | O(N⁷) | "Gold standard" for single-reference systems where applicable [5]. |
| Multi-configurational SCF (MCSCF) [1] | No | Yes | High (depends on active space) | Bond dissociation, diradicals, and other multi-reference ground states. |
| Hybrid AI/QM (AIQM1) [5] | Yes (via NN trained on CCSD(T)* data) | Limited by underlying SQM method | ~SQM cost (Very Fast) | Rapid screening of organic, neutral closed-shell molecules with coupled-cluster level accuracy [5]. |
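
To make the scaling column concrete, the following minimal sketch estimates how much the formal cost grows when the system size N is multiplied by some factor. It uses only the exponents listed in the table (taking the upper bound for DFT); prefactors, integral screening, and density fitting are ignored, so the numbers are rough guides and not timings. The names `SCALING_EXPONENT` and `cost_growth` are illustrative.

```python
# Formal scaling exponents from the table above (DFT uses its upper bound, O(N^4)).
SCALING_EXPONENT = {"HF": 4, "DFT": 4, "MP2": 5, "CCSD(T)": 7}


def cost_growth(method: str, size_factor: float) -> float:
    """Factor by which the formal cost grows when N is multiplied by size_factor."""
    return size_factor ** SCALING_EXPONENT[method]


# Doubling the system size: 16x for HF, 32x for MP2, 128x for CCSD(T).
for method in SCALING_EXPONENT:
    print(f"{method:8s} cost grows by about {cost_growth(method, 2):.0f}x when N doubles")
```

The steep growth for CCSD(T) is why it is usually reserved for small systems or used to generate reference data.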

Guide 3: Advanced Protocol: Dynamic Correlation Treatment with a Large Active Space

For large, strongly correlated systems (e.g., involving lanthanides), treating dynamic correlation beyond a large active space is a frontier challenge. The following protocol outlines a modern approach [3].

Objective: To accurately describe dynamic electron correlation without the prohibitive cost of handling high-order reduced density matrices from a large active space calculation.

Workflow Summary: The process begins with a multi-reference calculation to build a large active space and capture static correlation, then incorporates dynamic correlation from the external space using advanced methods, and finally produces a highly accurate total energy.

Methodology Details:

- Initial Wavefunction: Perform a multi-configurational self-consistent field (MCSCF) calculation to generate a reference wavefunction that captures the essential static correlation. This involves selecting an active space (e.g., 10 electrons in 10 orbitals, denoted as (10e,10o)), which is often the computational bottleneck [3].

- Dynamic Correlation Treatment: Use a method that adds dynamic correlation on top of the multi-reference wavefunction without directly computing high-order reduced density matrices (RDMs). Promising categories of methods include [3]:

- Multi-Reference Perturbation Theory (MRPT2)

- Internally Contracted approaches

- Incremental or Embedding schemes

- Validation: Benchmark the calculated potential energy curves or properties against experimental data or higher-level theoretical results. A case study on neodymium oxide (NdO) demonstrates the application of such protocols [3].

Key Considerations:

- The choice of the active space is critical and must include orbitals essential for the chemical process under study.

- The computational cost scales factorially with the size of the active space, making it the primary limitation.

- These methods are computationally demanding and require specialized software and expertise.
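
The growth of the active space can be illustrated by counting Slater determinants. The sketch below assumes a complete active space with a fixed spin projection, in which case the count is a product of two binomial coefficients; the function name and the `spin_2s` parameter (twice M_S) are illustrative choices.

```python
from math import comb


def cas_determinants(n_electrons: int, n_orbitals: int, spin_2s: int = 0) -> int:
    """Number of Slater determinants in CAS(n_electrons, n_orbitals) with M_S = spin_2s / 2."""
    n_alpha = (n_electrons + spin_2s) // 2
    n_beta = n_electrons - n_alpha
    # Alpha and beta electrons are distributed independently over the active orbitals.
    return comb(n_orbitals, n_alpha) * comb(n_orbitals, n_beta)


for size in (10, 16, 20):
    print(f"CAS({size}e,{size}o): {cas_determinants(size, size):,} determinants")
```

For the (10e,10o) example mentioned above this gives 63,504 determinants, while (20e,20o) already exceeds 34 billion.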

Frequently Asked Questions (FAQs)

Q1: If Hartree-Fock is so inaccurate, why is it still used? Hartree-Fock (HF) theory provides a computationally efficient starting point that recovers about 99% of the total energy of a system. Its orbitals and energy form the foundational reference for most post-HF correlation methods like Configuration Interaction (CI), Møller-Plesset Perturbation Theory (MP2), and Coupled Cluster (CC) [2]. It is also used for generating initial guesses for DFT calculations and for systems where qualitative trends are sufficient.

Q2: What is the fundamental difference between DFT and wavefunction-based methods in treating correlation? Wavefunction-based methods (like CI, MP2, CC) explicitly build electron correlation into the many-electron wavefunction by considering combinations of excited electron configurations [6] [1]. In contrast, Density Functional Theory (DFT) incorporates correlation implicitly through an approximate exchange-correlation (XC) functional of the electron density [6] [4]. The accuracy of DFT is therefore entirely dependent on the quality of the chosen XC functional, while wavefunction methods can be systematically improved towards an exact solution [2].

Q3: My DFT calculations are failing for dispersion-bound complexes. What should I do? This is a classic symptom of standard DFT functionals failing to describe long-range electron correlation. The standard solution is to employ empirical dispersion corrections, such as the D3 or D4 methods, which add a semi-classical dispersion energy term to the DFT total energy [5]. These corrections are now widely available in most quantum chemistry software packages.
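
As a rough guide to what such a correction looks like, the two-body term of a D3-style correction is commonly written as below. The notation is chosen here for illustration: C_n^{AB} are pair dispersion coefficients, R_AB is the interatomic distance, s_n are functional-specific scaling factors, and f_damp,n is a damping function whose exact form depends on the variant (for example zero damping or Becke-Johnson damping).

```latex
E_{\text{disp}} = -\sum_{A<B} \sum_{n=6,8} s_n \, \frac{C_n^{AB}}{R_{AB}^{\,n}} \, f_{\text{damp},n}(R_{AB})
```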

Q4: When is it absolutely necessary to go beyond single-reference methods like CCSD(T)? CCSD(T), while being the gold standard, is a single-reference method. It fails when the underlying HF reference is qualitatively wrong, which occurs in situations with significant static correlation. Examples include [1]:

- Bond-breaking processes (e.g., F₂ dissociation)

- Diradical species (e.g., O₂)

- Systems with near-degenerate frontier orbitals

- Many transition metal complexes and lanthanide/actinide compounds [3] [2]

In these cases, multi-reference methods like CASSCF are required for a qualitatively correct description.

Q5: Are there new computing paradigms that can solve the electron correlation problem? Yes, quantum computing and artificial intelligence (AI) are emerging as powerful paradigms.

- Quantum Computing: The Variational Quantum Eigensolver (VQE) is a hybrid quantum-classical algorithm designed to efficiently estimate molecular ground-state energies by leveraging quantum circuits to represent entangled wavefunctions, potentially overcoming the exponential scaling of classical full CI [7].

- Artificial Intelligence: Hybrid AI-QM methods like AIQM1 combine fast semi-empirical quantum methods with neural network (NN) corrections trained on high-level (e.g., CCSD(T)) data. This approach can achieve coupled-cluster level accuracy at a fraction of the computational cost, though its transferability is currently limited to specific classes of molecules [5].

The Scientist's Toolkit: Essential Research Reagents & Materials

This table details key computational "reagents" and software components used in advanced electron correlation studies.

| Item / "Reagent" | Function & Explanation |
|---|---|
| Gaussian-Type Orbital (GTO) Basis Sets | A set of mathematical functions (approximated by Gaussians) used to represent atomic and molecular orbitals. The size and quality of the basis set (e.g., cc-pVTZ, def2-TZVPP) directly control the accuracy and cost of the calculation [5]. |
| Exchange-Correlation (XC) Functional (for DFT) | The key approximation in DFT that defines how exchange and correlation energy are calculated from the electron density. Examples include B3LYP and PBE0. The choice of functional dictates DFT's performance [4] [2]. |
| Active Space (for MCSCF) | A selection of molecular orbitals and electrons that are most relevant to the chemical process being studied (e.g., bonding/antibonding pairs, frontier orbitals). It is the central concept in multi-reference calculations for capturing static correlation [1] [3]. |
| Neural Network (NN) Potentials (e.g., in AIQM1) | A machine learning model trained on high-level quantum mechanical data. It acts as a correction to a lower-level method, enabling the prediction of energies and forces with high accuracy and low computational cost [5]. |
| Parameterized Quantum Circuit (Ansatz) (for VQE) | A specific arrangement of quantum gates on a quantum computer, designed to prepare a trial wavefunction for a molecule. Chemically inspired ansatze like UCCSD are used to efficiently explore the Hilbert space [7]. |
| Dispersion Correction (e.g., D4) | An add-on correction for DFT or semi-empirical methods that adds a damped, long-range dispersion energy term, crucial for accurately modeling van der Waals interactions and non-covalent binding [5]. |

Quantum advantage represents a critical milestone in computational science, where a quantum computer solves a problem that is practically infeasible for any classical computer to tackle. For researchers in computational chemistry and drug development, this isn't merely an academic curiosity—it heralds a fundamental shift in what's computationally possible. Quantum advantage stems from the unique properties of qubits, which can exist in superposition states and become entangled, enabling a form of parallel computation that classical bits cannot achieve. Where classical computing power grows linearly with additional bits, quantum systems can offer exponential scaling advantages for specific problem classes, potentially reducing calculation times from years to minutes for complex molecular simulations and optimization challenges central to pharmaceutical research.

Core Concepts: Frequently Asked Questions

What exactly is quantum advantage?

Quantum advantage occurs when a quantum computer can solve a problem that would be practically impossible for any classical computer to solve within a reasonable timeframe. This advantage can manifest as exponential scaling, where as the problem size increases, the quantum computer's performance gap over classical methods grows dramatically. Unlike simple speed improvements, this scaling advantage means that for each additional variable in a problem, the quantum benefit roughly doubles, making it increasingly impossible for classical systems to compete as problems grow more complex [8].

How do qubits enable exponential speedups?

Qubits enable exponential speedups through two fundamental quantum mechanical phenomena:

- Superposition: A qubit can exist in a combination of both 0 and 1 states simultaneously, unlike classical bits that must be either 0 or 1. This allows quantum computers to process multiple computational paths at once.

- Entanglement: When qubits become entangled, their measurement outcomes are correlated in ways that cannot be described one qubit at a time, regardless of physical separation. Describing n entangled qubits requires up to 2^n amplitudes, which creates an exponentially large computational space that can be manipulated with relatively few quantum operations [9].

For example, in solving Simon's problem (a theoretical precursor to practical quantum algorithms), a quantum computer requires only O(w log n) queries to find a hidden pattern, while the best classical algorithms need Ω(n^{w/2}) queries, demonstrating a clear exponential separation [9].
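
The gap between the two query bounds quoted above can be tabulated with a few lines of Python. This is a sketch that evaluates the leading terms w·log₂(n) and n^(w/2) only; the constants hidden by the O and Ω notation are ignored, so the values indicate growth rates and not actual query counts. The function names are illustrative.

```python
from math import log2


def quantum_queries(n: int, w: int) -> float:
    """Leading term of the quantum bound, O(w log n)."""
    return w * log2(n)


def classical_queries(n: int, w: int) -> float:
    """Leading term of the classical lower bound, Omega(n^(w/2))."""
    return n ** (w / 2)


n = 64  # bitstring length
for w in (2, 4, 6, 8):  # Hamming weight of the hidden bitstring
    print(f"w={w}: quantum ~{quantum_queries(n, w):.0f}, classical ~{classical_queries(n, w):.3g}")
```

With n fixed, the quantum term grows linearly in w while the classical term grows exponentially in w, which is the separation the experiment measures.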

What problems currently show quantum advantage?

Several problem classes have demonstrated quantum advantage in recent experiments:

Table: Problems Demonstrating Quantum Advantage

| Problem Type | Quantum System Used | Performance Advantage | Relevance to Chemistry/Drug Discovery |
|---|---|---|---|
| Simon's Problem | 127-qubit IBM Quantum Eagle | Exponential scaling advantage proven up to 58 qubits [9] | Foundation for period-finding in quantum algorithms |
| Quantum Echoes (OTOC(2)) | Google's Willow processor (65 qubits used) | 13,000x faster than Frontier supercomputer [10] | Probing quantum chaos; extends NMR spectroscopy capabilities |
| Medical Device Simulation | IonQ 36-qubit computer | 12% performance improvement over classical HPC [11] | Direct application to biomedical simulations |
| MaxCut Optimization | Quantinuum H2-1 (56 qubits) | Meaningful results beyond classical simulation capability [12] | Combinatorial optimization relevant to molecular conformation |

What are the main challenges in achieving quantum advantage?

The primary challenges include:

- Quantum Decoherence: Qubits gradually lose their quantum state due to environmental interactions, limiting computation time.

- Gate Errors: Imperfect quantum operations accumulate during computations.

- Circuit Depth Limitations: Current hardware constraints restrict the number of sequential operations possible.

- Verification Complexity: Confirming quantum results classically becomes infeasible for beyond-classical problems [13].

Recent advances in error mitigation techniques like dynamical decoupling and measurement error mitigation have extended the coherent computation window, enabling demonstrations of advantage on today's noisy intermediate-scale quantum (NISQ) devices [9].

Troubleshooting Quantum Advantage Experiments

Issue: Results degrade as circuit depth increases

Solution: Implement a combination of error mitigation strategies:

- Dynamical Decoupling: Apply carefully designed microwave pulse sequences to idle qubits to reverse the effects of environmental noise and crosstalk. Research from USC demonstrated this technique significantly improved results, yielding scaling curves much closer to ideal quantum performance [9].

- Circuit Optimization: Use transpilation tools (like those in Qiskit) to reduce quantum logic operations and create shallower circuits. The USC team relied heavily on existing Qiskit functionality to compress the number of required operations [9].

- Measurement Error Mitigation: Characterize and correct for readout errors through calibration techniques. This should be applied after dynamical decoupling to address remaining measurement imperfections [8].
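
Measurement error mitigation can be sketched for a single qubit as the inversion of a calibrated confusion matrix. The example below is a minimal illustration under stated assumptions: readout errors are independent of the circuit, the two calibration probabilities have been measured beforehand, and the function names are hypothetical. Production toolkits typically handle many qubits and constrain the result to valid probabilities.

```python
def apply_readout_noise(p_true, p0_given_0, p1_given_1):
    """Forward model: distort ideal probabilities [P(0), P(1)] with readout errors."""
    noisy0 = p0_given_0 * p_true[0] + (1.0 - p1_given_1) * p_true[1]
    noisy1 = (1.0 - p0_given_0) * p_true[0] + p1_given_1 * p_true[1]
    return [noisy0, noisy1]


def mitigate_readout(p_noisy, p0_given_0, p1_given_1):
    """Invert the 2x2 confusion matrix M[i][j] = P(measure i | prepared j)."""
    m00, m01 = p0_given_0, 1.0 - p1_given_1
    m10, m11 = 1.0 - p0_given_0, p1_given_1
    det = m00 * m11 - m01 * m10
    if abs(det) < 1e-12:
        raise ValueError("confusion matrix is singular; calibration is unusable")
    q0 = (m11 * p_noisy[0] - m01 * p_noisy[1]) / det
    q1 = (-m10 * p_noisy[0] + m00 * p_noisy[1]) / det
    return [q0, q1]


# Example calibration values (assumed): 97% correct readout of |0>, 92% of |1>.
noisy = apply_readout_noise([0.7, 0.3], 0.97, 0.92)
print("noisy:", noisy, "mitigated:", mitigate_readout(noisy, 0.97, 0.92))
```

With finite sampling noise the inverted probabilities can fall slightly outside [0, 1], which is why practical implementations often use constrained least squares instead of a direct inverse.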

Issue: Difficulty verifying quantum results classically

Solution: Implement efficient verification protocols:

- For analog quantum simulations, use the protocol developed by JQI and University of Maryland researchers that combines low-energy state preparation with constant-sample verification, dramatically reducing the number of repetitions needed [13].

- For digital quantum computations, leverage problems with efficient classical verification, such as the second-order out-of-time-order correlator (OTOC(2)) used in Google's Quantum Echoes algorithm, which produces verifiable predictions that can be cross-checked against experimental data or run on different quantum computers [10].

Issue: Maintaining coherence at scale

Solution: Optimize experimental design for your hardware's strengths:

- For trapped-ion systems (Quantinuum, IonQ): Leverage their all-to-all connectivity and high fidelity for fully connected problems but be mindful of slower gate times. The Quantinuum H2-1 maintained coherent computation on a 56-qubit MaxCut problem with over 4,600 two-qubit gates [12].

- For superconducting qubits (IBM, Google): Utilize faster gate times and parallel operations but implement advanced error mitigation. IBM's Heron processors with fractional gates reduced two-qubit operations by half, enabling deeper circuits with less error accumulation [12].

Experimental Protocols for Demonstrating Quantum Advantage

Protocol 1: Modified Simon's Problem (Oracle-Based Advantage)

Based on USC experiments demonstrating unconditional exponential quantum scaling advantage [9]

Objective: Demonstrate exponential scaling advantage for finding a hidden bitstring.

Methodology:

- Problem Encoding:

- Choose a secret bitstring \( b \in \{0,1\}^n \) with fixed Hamming weight \( w \) (the number of 1s is restricted to limit circuit complexity).

- Define a function \( f \) where \( f(x) = f(y) \) if and only if \( x = y \) or \( x = y \oplus b \).

- Quantum Circuit Implementation:

- Construct a quantum oracle that implements \( f \) using an optimized sequence of quantum gates.

- Apply Hadamard transforms to create superposition states.

- Query the oracle in superposition to extract information about \( b \).

- Key Optimization Techniques:

- Apply dynamical decoupling sequences to idle qubits.

- Use measurement error mitigation during readout.

- Implement circuit transpilation to minimize gate count and depth.

Metrics: Compare Number of Oracle Queries to Solution (NTS) between quantum and classical approaches. Quantum advantage is demonstrated when quantum NTS scales as \( O(w \log n) \) versus classical \( \Omega(n^{w/2}) \).
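
The classical post-processing that turns measurement outcomes into the secret bitstring can be sketched as follows. This is an illustration of textbook Simon's algorithm, not the USC team's code: the quantum circuit is replaced by a classical stand-in (`sample_measurement`) that returns random bitstrings y satisfying y·b = 0 (mod 2), and the Hamming-weight restriction of the modified problem is not used. All function names are hypothetical.

```python
import random


def parity(x: int) -> int:
    """Parity of the number of set bits in x."""
    return bin(x).count("1") & 1


def sample_measurement(secret: int, n: int, rng: random.Random) -> int:
    """Stand-in for one quantum run: uniformly random y with y . b = 0 (mod 2)."""
    while True:
        y = rng.getrandbits(n)
        if parity(y & secret) == 0:
            return y


def add_to_basis(basis: dict, y: int) -> bool:
    """Gaussian elimination over GF(2); basis maps pivot bit -> row vector."""
    while y:
        pivot = y.bit_length() - 1
        if pivot not in basis:
            basis[pivot] = y
            return True  # y was linearly independent of the stored rows
        y ^= basis[pivot]
    return False


def solve_secret(basis: dict, n: int) -> int:
    """Recover the nonzero b orthogonal to n-1 independent rows."""
    rows = dict(basis)
    pivots = sorted(rows)
    # Back-substitution: clear each pivot bit from every row with a higher pivot.
    for p in pivots:
        for q in pivots:
            if q > p and (rows[q] >> p) & 1:
                rows[q] ^= rows[p]
    free = next(bit for bit in range(n) if bit not in rows)
    b = 1 << free
    for p in pivots:
        if (rows[p] >> free) & 1:
            b |= 1 << p
    return b


def simons_algorithm(secret: int, n: int, seed: int = 0):
    """Collect samples until n-1 independent equations exist, then solve for b."""
    rng = random.Random(seed)
    basis, queries = {}, 0
    while len(basis) < n - 1:
        add_to_basis(basis, sample_measurement(secret, n, rng))
        queries += 1
    return solve_secret(basis, n), queries


recovered, queries = simons_algorithm(0b10110, 5)
print(f"recovered b = {recovered:05b} after {queries} simulated oracle queries")
```

The number of simulated queries stays close to n because each sample is independent of the previous ones with probability at least one half, which is the source of the quantum query advantage.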

Simon's Problem Experimental Workflow

Protocol 2: Quantum Echoes Algorithm (Physics-Based Advantage)

Based on Google Quantum AI's demonstration of 13,000x speedup [10]

Objective: Measure out-of-time-order correlators (OTOC(2)) to probe quantum chaos and information scrambling.

Methodology:

- System Initialization: Prepare a 65-qubit system in a known initial state.

- Time Evolution:

- Evolve the system forward in time with a chaotic Hamiltonian.

- Apply a small "butterfly" perturbation to specific qubits.

- Evolve the system backward in time (time-reversal).

- Measurement: Detect the "butterfly effect" on faraway qubits by measuring interference patterns.
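
The forward, perturb, backward structure of these steps can be reproduced on a laptop for a handful of qubits. The sketch below is a toy model under explicit assumptions: a random unitary stands in for evolution under a chaotic Hamiltonian, the butterfly is a Pauli X on the first qubit, and the probe is a Pauli Z on the last qubit. It computes a simple echo expectation value, not the second-order OTOC(2) measured in the experiment, and it assumes NumPy is installed.

```python
import numpy as np


def random_unitary(dim: int, rng: np.random.Generator) -> np.ndarray:
    """Random unitary from the QR decomposition of a complex Gaussian matrix."""
    z = rng.normal(size=(dim, dim)) + 1j * rng.normal(size=(dim, dim))
    q, _ = np.linalg.qr(z)
    return q


def embed(op: np.ndarray, qubit: int, n_qubits: int) -> np.ndarray:
    """Tensor a single-qubit operator into the full n-qubit space."""
    full = np.array([[1.0 + 0j]])
    for k in range(n_qubits):
        full = np.kron(full, op if k == qubit else np.eye(2))
    return full


def echo_signal(U, butterfly, probe, psi0) -> float:
    """Evolve forward, apply the butterfly, evolve backward, then measure the probe."""
    psi = U @ psi0
    psi = butterfly @ psi
    psi = U.conj().T @ psi
    return float(np.real(np.vdot(psi, probe @ psi)))


n = 4
rng = np.random.default_rng(7)
U = random_unitary(2**n, rng)
X = np.array([[0, 1], [1, 0]], dtype=complex)
Z = np.array([[1, 0], [0, -1]], dtype=complex)
psi0 = np.zeros(2**n, dtype=complex)
psi0[0] = 1.0  # |0000>
probe = embed(Z, n - 1, n)  # observable on the far qubit

no_kick = echo_signal(U, np.eye(2**n), probe, psi0)
kicked = echo_signal(U, embed(X, 0, n), probe, psi0)
print(f"echo without butterfly: {no_kick:.3f}, with butterfly: {kicked:.3f}")
```

Without the perturbation the backward evolution undoes the forward one exactly and the probe returns its initial value of 1; any deviation with the butterfly applied reflects how far the perturbation has spread.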

Application to Chemistry: This protocol can be adapted for Hamiltonian learning—extracting unknown parameters governing quantum system evolution—which has direct applications to molecular simulation and NMR spectroscopy enhancement.

Quantum Echoes Algorithm Workflow

Quantum Hardware Performance Comparison

Table: Quantum Processing Unit Benchmark Data (2024-2025)

| Quantum Processor | Qubit Count | Architecture | Key Performance Metric | Optimal Use Cases |
|---|---|---|---|---|
| Quantinuum H2-1 | 56 (effective) | Trapped Ion (QCCD) | Maintained coherence on 56-qubit MaxCut with 4,620 two-qubit gates [12] | Fully connected problems, high-fidelity simulations |
| IBM Fez (Heron) | 100+ | Superconducting | Handled up to 10,000 LR-QAOA layers (~1M gates) before thermalization [12] | Deep circuits, optimization problems |
| Google Willow | 105 (65 used in this experiment) | Superconducting | 13,000x speedup on OTOC(2) vs. Frontier supercomputer [10] | Quantum chaos simulation, Hamiltonian learning |
| IBM Eagle | 127 | Superconducting | Demonstrated exponential scaling advantage up to 58 qubits [9] | Algorithm development, foundational experiments |

Table: Key Research Reagent Solutions for Quantum Advantage Experiments

| Tool/Technique | Function | Example Implementation |
|---|---|---|
| Dynamical Decoupling | Suppresses dephasing noise in idle qubits | Applying microwave pulse sequences to reverse environmental noise effects [9] |
| Measurement Error Mitigation | Corrects readout inaccuracies | Calibration protocols that find and correct measurement imperfections [8] |
| Circuit Transpilation | Optimizes quantum circuits for specific hardware | Using Qiskit to reduce gate count and depth while preserving functionality [9] |
| Probabilistic Error Cancellation (PEC) | Removes bias from noisy quantum circuits | Advanced classical post-processing with reduced sampling overhead [14] |
| Linear-Ramp QAOA | Benchmarking quantum optimization performance | Fixed-parameter implementation for combinatorial problems like MaxCut [12] |

The demonstrations of quantum advantage from 2024-2025 mark a significant transition from theoretical promise to tangible computational capability. For researchers in computational chemistry and drug development, these advances signal that quantum computing is evolving from a speculative technology to a potentially transformative tool. While current advantage demonstrations remain largely in specialized domains rather than practical applications, the exponential scaling relationships now being empirically validated suggest that broader utility for molecular simulation, reaction modeling, and drug discovery is approaching rapidly. As error correction techniques improve and hardware coherence times increase, the quantum advantage boundary will continue to expand toward directly addressing the exponential complexity challenges that limit classical computational chemistry methods.

Frequently Asked Questions (FAQs)

Q1: Our quantum phase estimation (QPE) experiments are becoming prohibitively expensive as we include more orbitals for dynamic correlation. How can we mitigate this? The computational cost of QPE, dominated by the Hamiltonian 1-norm, often scales quadratically with the number of molecular orbitals. A highly effective strategy is to use Frozen Natural Orbitals (FNOs) derived from a larger basis set. This approach focuses resources on the most important virtual orbitals. Research shows this can reduce the number of orbitals required by 55% and lower the key cost driver (the 1-norm, λ) by up to 80%, without sacrificing chemical accuracy [15].

Q2: For simulating drug-like molecules, which quantum algorithms are currently most feasible on available hardware? For near-term experiments on noisy hardware, hybrid quantum-classical algorithms are the most practical.

- The Variational Quantum Eigensolver (VQE) is widely used for estimating ground-state energies of small molecules like hydrogen, lithium hydride, and iron-sulfur clusters [16].

- Algorithms are also being developed for specific tasks, such as computing forces between atoms and simulating simple chemical dynamics, moving beyond static energy calculations [16].

Q3: We need to simulate a large biomolecule. Is this possible with current quantum resources? Simulating large biomolecules in full detail remains a future goal. However, pioneering demonstrations are underway. For example, researchers have used a 16-qubit computer to find potential drugs that inhibit the KRAS protein (linked to cancers), and others have simulated the folding of a 12-amino-acid chain—the largest protein-folding demonstration on quantum hardware to date [16]. These feats are currently achieved by using quantum processors alongside powerful classical supercomputers in a hybrid model [16].

Q4: What is the most significant bottleneck for applying quantum computing to real-world chemistry problems? The primary bottleneck is qubit stability and error correction. Current quantum processors are prone to errors due to the fragile nature of qubits. While algorithms like VQE are designed to be somewhat resilient to noise, larger, more accurate simulations will require error-corrected logical qubits. Estimates suggest that simulating complex industrial targets like the FeMoco cofactor for nitrogen fixation could require anywhere from nearly 100,000 to millions of physical qubits [16]. Significant efforts are underway to make Quantum Error Correction (QEC) a practical reality [17].

Troubleshooting Guides

Problem 1: High 1-Norm in Quantum Phase Estimation Calculations

- Symptoms: Projected runtime for QPE is intractable, especially when trying to incorporate dynamic correlation by expanding the active space.

- Solution: Implement a Frozen Natural Orbital (FNO) active space.

- Start with a large basis set: Contrary to intuition, starting with a dense basis set (e.g., cc-pVTZ) is crucial [15].

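- Perform a classical reference calculation: Run a Hartree-Fock or Density Functional Theory (DFT) calculation with the large basis set, followed by an inexpensive correlated step (e.g., MP2). A mean-field density alone has no occupation in the virtual space, so it cannot rank the virtual orbitals.

- Generate Natural Orbitals: Diagonalize the virtual-virtual block of the correlated one-particle reduced density matrix to obtain natural orbitals and their occupation numbers.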

- Select and freeze: Truncate the virtual orbital space by retaining only the most important natural orbitals based on their occupation numbers. Studies show this can reduce orbital count by 55% [15].

- Construct the Hamiltonian: Build your active space Hamiltonian using the retained FNOs for the subsequent QPE calculation.

Problem 2: Algorithm Failure on Noisy Hardware

- Symptoms: The quantum algorithm (e.g., VQE) does not converge, or the results are too noisy to be useful.

- Solution: Adopt a tiered workflow that integrates classical and quantum resources.

- Classical Pre-processing: Use classical high-performance computing (HPC) and AI to pre-screen the problem. Identify which part of the calculation (e.g., the strongly correlated electrons in a specific fragment) truly requires the quantum computer [18].

- Problem Formulation: Ensure the Hamiltonian is encoded efficiently using techniques like double factorization to minimize the number of quantum gates required [15].

- Error Mitigation: Apply advanced error mitigation techniques tailored to your specific hardware platform.

- Classical Post-processing: Use classical computing to refine, analyze, and validate the results from the quantum processor [18].

Problem 3: Inefficient Catalyst Screening

- Symptoms: The process of identifying catalytic active sites and predicting catalyst performance is too slow for high-throughput screening.

- Solution: Leverage topological quantum chemistry descriptors for rapid pre-screening.

- Identify a Topological Descriptor: Use theory to find a descriptor like Obstructed Surface States (OSSs), which are intrinsic metallic surface states that often coincide with high catalytic activity [19].

- Database Screening: Rapidly scan crystal structure databases (e.g., the Inorganic Crystal Structure Database) for materials that exhibit OSSs. This can quickly identify over 400 potential high-activity catalysts [19].

- Targeted Quantum Simulation: Use more precise (but expensive) quantum chemical calculations only on the shortlisted candidate materials to confirm and refine their properties.

The following table summarizes resource reductions achieved by advanced methods in quantum computational chemistry, providing benchmarks for your experiments.

Table 1: Benchmarking Resource Reductions in Quantum Computational Chemistry

| Method / Strategy | Key Performance Metric | Reported Improvement/Reduction | Application Context |
|---|---|---|---|
| Frozen Natural Orbital (FNO) Active Space [15] | Hamiltonian 1-norm (λ) | Up to 80% reduction | QPE for dynamical correlation |
| Frozen Natural Orbital (FNO) Active Space [15] | Number of orbitals | 55% reduction | QPE for dynamical correlation |
| Optimized Gaussian Basis Sets [15] | Hamiltonian 1-norm (λ) | Up to 10% reduction (system-dependent) | General Hamiltonian representation |
| Improved VQE Algorithm [16] | Computational Speed | ~9x faster than classical method | Modeling nitrogen fixation reactions |

Experimental Protocols

Protocol 1: Implementing a Frozen Natural Orbital (FNO) Workflow for QPE

Objective: To reduce the computational cost of Quantum Phase Estimation by constructing a compact and accurate active space.

- Initial Calculation:

- Perform a correlated classical calculation (e.g., MP2 or CCSD) on your molecular system using a large parent basis set (e.g., cc-pVTZ).

- Generate Density Matrix:

- Obtain the one-particle reduced density matrix from the initial calculation.

- Diagonalize:

- Diagonalize the virtual-virtual block of the density matrix to obtain natural orbitals and their occupation numbers.

- Orbital Selection:

- Sort the natural orbitals in descending order of their occupation numbers.

- Retain only the orbitals with occupation numbers above a predefined threshold (e.g., 0.02). This truncated set forms your FNO active space.

- Hamiltonian Construction:

- Transform the one- and two-electron integrals into the FNO basis.

- Use this transformed Hamiltonian for the subsequent QPE algorithm.
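
The diagonalization and selection steps can be sketched with NumPy as below. The sketch assumes that the virtual-virtual block of a correlated one-particle density matrix (for example from MP2) is already available as a real symmetric array; obtaining it from a quantum chemistry package is outside the scope of the example. The function names are illustrative, and the two-electron integral transformation is omitted for brevity.

```python
import numpy as np


def select_fnos(dm_virt: np.ndarray, threshold: float):
    """Diagonalize the virtual-virtual density block and keep well-occupied natural orbitals.

    Returns (occupations, coefficients), sorted by descending occupation number.
    """
    dm_virt = 0.5 * (dm_virt + dm_virt.T)      # enforce symmetry against round-off
    occ, vecs = np.linalg.eigh(dm_virt)        # eigenvalues come back in ascending order
    order = np.argsort(occ)[::-1]              # reorder to descending occupation
    occ, vecs = occ[order], vecs[:, order]
    keep = occ > threshold                     # truncate the virtual space
    return occ[keep], vecs[:, keep]


def transform_one_electron(h_virt: np.ndarray, fno_coeffs: np.ndarray) -> np.ndarray:
    """Rotate a one-electron matrix from the canonical virtual basis into the FNO basis."""
    return fno_coeffs.T @ h_virt @ fno_coeffs


# Toy 3x3 density block with made-up occupations, for illustration only.
dm = np.diag([0.05, 0.001, 0.03])
occ, coeffs = select_fnos(dm, threshold=0.02)
print("retained occupations:", occ, "| retained orbitals:", coeffs.shape[1])
```

The threshold directly trades accuracy against qubit count, so it is worth checking the resulting energy against the untruncated calculation for a small test system before fixing it.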

Protocol 2: Hybrid Quantum-Classical Simulation of a Reaction Pathway

Objective: To map a chemical reaction pathway using a variational quantum algorithm.

- Define Reaction Coordinates: Classically, identify the key internal coordinates (e.g., bond lengths, angles) that define the reaction path from reactants to products.

- Generate Molecular Structures: Generate a series of molecular geometries along the reaction coordinate.

- Quantum Resource Preparation: For each geometry, prepare the qubit Hamiltonian using an encoding method like Jordan-Wigner or Bravyi-Kitaev.

- Execute VQE Loop: For each geometry, run the VQE algorithm to find the ground-state energy. A parameterized quantum circuit (ansatz) is prepared on the quantum processor, the energy expectation value is measured, and a classical optimizer adjusts the circuit parameters to minimize the energy.

- Construct Potential Energy Surface (PES): Compile the ground-state energies for all geometries to map the reaction PES, identifying the transition state and energy barrier.
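The loop structure of Protocol 2 can be illustrated with a deliberately tiny model. The sketch below assumes a hypothetical one-qubit Hamiltonian H(r) = a(r) Z + b X (not a real molecule) and the ansatz Ry(θ)|0⟩, for which the energy is a·cos θ + b·sin θ. It evaluates that expression analytically where a real run would estimate it from repeated measurements on a quantum processor. The names `toy_hamiltonian`, `energy`, and `vqe` are illustrative only.

```python
import math

def toy_hamiltonian(r):
    """Hypothetical one-qubit Hamiltonian H(r) = a(r) Z + b X (not a real molecule)."""
    return r - 1.5, 0.5          # coefficients (a, b)

def energy(theta, a, b):
    """<psi(theta)|H|psi(theta)> for the ansatz Ry(theta)|0>.
    On hardware this value would be estimated from repeated measurements."""
    return a * math.cos(theta) + b * math.sin(theta)

def vqe(a, b, theta=0.0, lr=0.4, steps=200):
    """Classical loop: parameter-shift gradient plus plain gradient descent."""
    for _ in range(steps):
        grad = 0.5 * (energy(theta + math.pi / 2, a, b)
                      - energy(theta - math.pi / 2, a, b))
        theta -= lr * grad
    return energy(theta, a, b)

geometries = [0.5 + 0.25 * i for i in range(9)]          # reaction coordinate values
pes = [vqe(*toy_hamiltonian(r)) for r in geometries]     # one VQE run per geometry

i_ts = max(range(len(pes)), key=pes.__getitem__)         # highest point on the path
barrier = pes[i_ts] - pes[0]                             # relative to the first geometry
print(geometries[i_ts], round(barrier, 3))
```

In this toy model the highest point of the scanned path plays the role of the transition state. For a real molecule each geometry would need its own qubit Hamiltonian, and the path would normally be refined around the maximum.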

Workflow and System Diagrams

Diagram 1: Tiered Quantum-Classical Workflow. This illustrates the hybrid approach recommended for efficient resource use, where classical computers handle pre- and post-processing, and the quantum processor is reserved for tasks where it holds a potential advantage. [18]

The Scientist's Toolkit

Table 2: Essential Research Reagents & Computational Tools

| Tool / Resource | Category | Primary Function |

|---|---|---|

| Frozen Natural Orbitals (FNOs) [15] | Method & Protocol | Creates a compact, high-quality active space to dramatically reduce QPE costs. |

| Variational Quantum Eigensolver (VQE) [16] | Quantum Algorithm | A hybrid algorithm for finding molecular ground-state energies on noisy hardware. |

| Density Functional Theory (DFT) [20] | Classical Method | Provides the initial electron density and orbitals for generating FNOs and other properties. |

| Obstructed Surface States (OSSs) [19] | Theoretical Descriptor | A topological descriptor for rapidly identifying potential catalytic active sites in crystalline materials. |

| Topological Quantum Chemistry [19] | Theoretical Framework | A framework for high-throughput screening of material properties based on symmetry and topology. |

| Quantum Error Correction (QEC) [17] | Hardware/Software Stack | A set of techniques to correct errors during computation, essential for future large-scale simulations. |

In the pursuit of optimizing quantum chemical calculations, the integration of quantum and classical computing resources has emerged as a foundational strategy. Hybrid quantum-classical algorithms are designed to leverage the unique strengths of both computational paradigms: quantum processors handle specific tasks where quantum mechanics offers a potential advantage, such as preparing complex quantum states, while classical computers manage control processes, error correction, and data analysis [21] [22]. This cooperative approach is particularly vital for current quantum hardware, which often faces limitations due to noise, error rates, and qubit coherence times, making it not yet fully capable of running complete quantum algorithms independently [21]. The core of this paradigm often involves a feedback loop, where a quantum processor performs a computation, sends the results to a classical computer for processing, and the system iterates based on the classical optimization's output [21] [22].

This hybrid imperative is powerfully illustrated in a 2025 study from Caltech and IBM, which used a quantum-centric supercomputing approach to study the electronic energy levels of a complex [4Fe-4S] molecular cluster—a system crucial for biological processes like nitrogen fixation [23]. The research team used an IBM quantum device, powered by a Heron processor with up to 77 qubits, to identify the most important components of a massive Hamiltonian matrix. This quantum-refined matrix was then fed into the RIKEN Fugaku supercomputer to solve for the exact wave function [23]. This workflow demonstrates how hybrid approaches can tackle problems of a scale that was previously infeasible, moving the field closer to practical quantum advantage in computational chemistry.

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the primary advantages of using a hybrid approach for quantum chemical calculations? Hybrid approaches offer several key benefits for quantum chemistry:

- Scalability: They enable researchers to tackle larger, more complex molecular systems than could be handled by either classical or quantum systems alone [21].

- Reduced Resource Demand: Quantum processors are used selectively for the most computationally intense tasks, minimizing hardware requirements [21].

- Error Mitigation: Classical processors can correct quantum errors, making hybrid approaches more stable and reliable for practical applications [21].

- Rigorous State Preparation: Quantum computers can replace classical heuristics to more rigorously identify the most important components in a Hamiltonian matrix, as demonstrated in the [4Fe-4S] cluster study [23].

Q2: Which hybrid algorithms are most relevant for quantum chemistry applications? The Variational Quantum Eigensolver (VQE) is a prominent hybrid algorithm particularly useful for quantum chemistry and material science [21] [22]. In VQE, the quantum processor prepares a parameterized trial state and measures its energy, and a classical optimizer varies the circuit parameters to find the molecular ground state [22]. Other relevant algorithms include the Quantum Approximate Optimization Algorithm (QAOA) for combinatorial problems and hybrid approaches in Quantum Machine Learning (QML) [21].

Q3: My quantum chemistry simulation is experiencing long queue times or failing on hardware targets. What should I check? When submitting jobs to quantum hardware, follow these diagnostic steps [24]:

- Check the target processor's status and availability via your provider's portal. Jobs submitted to targets with a "Degraded" status may experience significant delays or timeouts.

- Use the get_results() method with your job object to retrieve detailed output or error messages.

- For failed jobs, check your provider's job management console for specific error codes and messages that can guide troubleshooting.

Common Error Codes and Resolutions

Table: Common Quantum Chemistry Job Errors and Solutions

| Error Code / Message | Likely Cause | Solution |

|---|---|---|

| Operation returned an invalid status code 'Unauthorized' | Insufficient permissions for the storage account linked to the quantum workspace [24]. | In the Azure Portal, verify your account has 'Owner' or 'Contributor' role for the workspace and that the storage account allows public network access [24]. |

| Operation returned an invalid status code 'Forbidden' | Incomplete role assignment during workspace creation, often from closing the browser tab prematurely [24]. | In the storage account's Access Control (IAM), manually add the workspace as a 'Contributor,' or create a new workspace and wait for full creation [24]. |

| Compiler error: "Wrong number of gate parameters" | Use of a comma "," as a decimal separator in QASM code, which is common in many locales but not supported [24]. | Replace all non-period decimal separators with periods "." in the quantum circuit code (e.g., rx(1.57) q[0];) [24]. |

| "Algorithm requires at least one T state or measurement to estimate resources" (Resource Estimator) | The input quantum program contains no T gates, rotation gates, or measurement operations [24]. | Introduce the necessary quantum operations (T gates, rotations, or measurements) into the algorithm so the Resource Estimator can map it to logical qubits [24]. |

| Job fails after updating the azure-quantum package with "ModuleNotFoundError: No module named qiskit.tools" | Deprecation of the qiskit.tools module in Qiskit 1.0 [24]. | Replace job_monitor() with job.wait_for_final_state() to wait for job completion, or use result = job.result() to get results [24]. |
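For the decimal-separator error in the table above, one way to avoid the problem at the source is to generate gate parameters with locale-independent formatting. The helper below is a hypothetical sketch (the name `rx_line` is not part of any SDK). It relies on the fact that Python's fixed-point format specifier always writes a period, regardless of the system locale.

```python
import math

def rx_line(theta, qubit):
    """Format an OpenQASM rx gate. The 'f' format type ignores the system
    locale, so the decimal separator is always a period."""
    return f"rx({theta:.6f}) q[{qubit}];"

line = rx_line(math.pi / 2, 0)
print(line)   # rx(1.570796) q[0];
```

Locale-aware formatting routes (for example the `n` format type or `locale.format_string`) are the ones that can introduce commas and are best avoided when emitting circuit text.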

Experimental Protocols for Optimizing Calculations

Protocol: Quantum-Centric Supercomputing for Electronic Structure

This protocol is based on the 2025 methodology used to study the [4Fe-4S] molecular cluster, demonstrating a practical hybrid workflow [23].

Objective: To determine the ground state energy and wave function of a complex molecular system by leveraging both quantum and classical high-performance computing (HPC) resources.

Materials and Setup:

- Quantum Processing Unit (QPU): Access to a quantum device (e.g., an IBM Heron processor).

- Classical HPC: Access to a supercomputer (e.g., RIKEN's Fugaku).

- Software Stack: Appropriate quantum programming and classical computational chemistry tools.

Methodology:

- System Preparation: Input all known information about the molecular system (atomic positions, number of electrons, etc.) to classically generate the full Hamiltonian matrix.

- Quantum Subroutine: Use the quantum processor to analyze the Hamiltonian and identify the most important components, effectively pruning the matrix to a more manageable subset. This step replaces classical heuristics with a more rigorous quantum-based selection [23].

- Classical Processing: Feed the refined, smaller Hamiltonian matrix to the supercomputer to solve for the exact wave function and ground state energy.

- Iteration and Analysis: The process may involve iteration between the quantum and classical components. Analyze the resulting ground state for chemical properties like reactivity and stability.
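The classical processing step can be pictured as diagonalizing the Hamiltonian restricted to the configurations flagged as important. The sketch below is only an illustration of that idea under strong assumptions: the 64x64 matrix is random rather than chemical, the `sampled` index list is a hypothetical stand-in for quantum-processor output, and `subspace_ground_energy` is an invented helper, not the method of [23].

```python
import numpy as np

def subspace_ground_energy(h_full, selected):
    """Diagonalize H restricted to the selected basis configurations."""
    idx = np.asarray(sorted(set(selected)))
    h_sub = h_full[np.ix_(idx, idx)]         # pruned Hamiltonian
    return np.linalg.eigvalsh(h_sub)[0]      # lowest eigenvalue of the subspace

# Toy symmetric "Hamiltonian" over 64 configurations (random, for illustration only).
rng = np.random.default_rng(1)
m = rng.normal(size=(64, 64))
h = (m + m.T) / 2 + np.diag(np.arange(64.0))

# Hypothetical stand-in for QPU output: indices of configurations judged important.
sampled = [0, 1, 2, 3, 5, 8, 13, 1, 0]

e_sub = subspace_ground_energy(h, sampled)
e_exact = np.linalg.eigvalsh(h)[0]
print(e_sub, e_exact)
```

Because the subspace is a restriction of the full problem, its lowest eigenvalue is a variational upper bound on the exact ground-state energy. Enlarging or improving the selected set can only lower that bound, which is what motivates iterating between the quantum and classical stages.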

Protocol: Basis Set Optimization with Frozen Natural Orbitals

This protocol details a strategy to significantly reduce the computational cost of the Quantum Phase Estimation (QPE) algorithm, a promising method for achieving chemical accuracy in ground-state energy calculations [15].

Objective: To reduce the Hamiltonian 1-norm (a key cost driver in QPE) and the number of orbitals required for a calculation, without compromising the accuracy of the ground state energy.

Materials and Setup:

- Software: Electronic structure software capable of generating and processing natural orbitals.

- Computational Resources: Adequate classical computing resources for preliminary density functional theory (DFT) calculations.

Methodology:

- Initial Calculation with Large Basis Set: Perform a high-level DFT calculation (e.g., at the ωB97M-V/def2-TZVPD level of theory) on the target molecule using a large, dense basis set [25] [15].

- Generate Frozen Natural Orbitals (FNOs): From the initial calculation, derive the frozen natural orbitals. These orbitals are obtained by diagonalizing the virtual-virtual block of the one-body reduced density matrix, which orders the orbitals by their contribution to the electron correlation.

- Truncate Virtual Space: Truncate the virtual orbital space by retaining only the most important FNOs. Studies show this can lead to a ~55% reduction in the number of orbitals and up to an 80% reduction in the Hamiltonian 1-norm (λ) [15].

- Execute QPE: Use the truncated, high-quality active space constructed from the FNOs to run the QPE algorithm with tractable resource requirements.
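To make the 1-norm metric concrete: for a qubit Hamiltonian written as a weighted sum of Pauli strings, λ is commonly taken as the sum of the absolute values of the coefficients. The sketch below assumes that definition and additionally assumes the identity term is excluded (conventions differ). The two dictionaries are invented toy Hamiltonians, not FNO results from [15].

```python
def one_norm(pauli_terms):
    """Sum of |coefficient| over all non-identity Pauli terms."""
    return sum(abs(c) for p, c in pauli_terms.items() if set(p) != {"I"})

# Hypothetical qubit Hamiltonians before and after truncating the virtual space.
full = {"IIII": -1.0, "ZIII": 0.4, "IZII": 0.4, "IIZI": 0.1, "IIIZ": 0.1,
        "XXYY": 0.05, "YYXX": 0.05, "ZZII": 0.2, "IIZZ": 0.1}
truncated = {"II": -0.9, "ZI": 0.4, "IZ": 0.4, "ZZ": 0.2}

lam_full, lam_trunc = one_norm(full), one_norm(truncated)
reduction = 1 - lam_trunc / lam_full
print(lam_full, lam_trunc, reduction)
```

Comparing λ before and after truncation in this way gives a quick, purely classical estimate of how much a smaller active space is likely to help before any quantum resources are committed.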

The following workflow diagram illustrates the two key experimental protocols for optimizing quantum chemical calculations:

Table: Key Resources for Hybrid Quantum-Chemical Research

| Resource / Tool | Function / Description | Relevance to Hybrid Calculations |

|---|---|---|

| Variational Quantum Eigensolver (VQE) [21] [22] | A hybrid algorithm where a quantum computer evaluates a parameterized wave function (prepares a state) and a classical computer optimizes the parameters to minimize the energy. | The leading algorithm for finding molecular ground states on near-term quantum devices; ideal for leveraging current hardware with limited qubit counts. |

| Frozen Natural Orbitals (FNOs) [15] | Orbitals derived from a correlated density matrix, used to truncate the virtual orbital space while preserving dynamical correlation energy. | Critical for reducing the resource cost (qubits, gates, 1-norm) of quantum algorithms like QPE, enabling the study of larger, more correlated systems. |

| High-Accuracy Molecular Datasets (e.g., OMol25) [25] | Massive datasets of quantum chemical calculations (e.g., >100 million calculations) run at high levels of theory (e.g., ωB97M-V/def2-TZVPD) for diverse chemical structures. | Provides training data for neural network potentials and benchmark results for validating new hybrid algorithms and computational methods. |

| Neural Network Potentials (NNPs) [25] | Machine-learned models trained on quantum chemistry data that provide fast, accurate approximations of molecular potential energy surfaces. | Can be used for preliminary exploration or in conjunction with quantum computations to accelerate molecular dynamics and property prediction. |

| Quantum Resource Estimator [24] | A tool (e.g., part of the Azure Quantum service) that estimates the physical resources required to run a quantum algorithm, such as qubit counts and T-state factories. | Essential for planning and budgeting computational campaigns, allowing researchers to assess the feasibility of a QPE or VQE calculation before execution. |

For researchers focused on quantum chemical calculations, the hardware landscape in 2025 is defined by rapid scaling and a clear industry-wide push toward fault tolerance. The following table summarizes the key roadmap milestones from leading hardware developers, illustrating the anticipated progression in qubit counts and capabilities.

| Company | Approach | 2025 Status / Near-term (2025-2026) | Mid-term (2027-2029) | Long-term (2030+) |

|---|---|---|---|---|

| IBM [14] [26] | Superconducting | 120-qubit Nighthawk; Heron (3rd revision); Roadmap: 1,386-qubit Kookaburra multi-chip processor [11]. | 200 logical qubit Starling system (planned for 2029) [26]. | Quantum-centric supercomputers with 100,000+ qubits by 2033 [11]. |

| Pasqal [27] | Neutral Atoms | Orion Gamma (>140 physical qubits); Target: 1,000 physical qubits; Roadmap: 250-qubit QPU for advantage demonstrations in 2026. | Vela (200+ physical qubits, 2027); Centaurus (early FTQC, 2028); Lyra (impactful FTQC, 2029). | 200 high-fidelity logical qubits by 2030. |

| Google [11] | Superconducting | 105-qubit Willow chip demonstrating exponential error reduction. | - | - |

| Atom Computing [11] | Neutral Atoms | Collaboration with Microsoft demonstrated 28 logical qubits encoded onto 112 atoms. | Plans to scale systems substantially by 2026 [11]. | - |

| Microsoft [11] | Topological / Partnerships | Majorana 1 topological qubit; 4D geometric codes with 1,000-fold error reduction. | - | - |

Troubleshooting Guides for Quantum Experiments

When running quantum chemistry simulations, researchers often face challenges related to hardware noise and computational efficiency. The following guides address common issues.

Issue 1: Managing Noise and Errors in Quantum Simulations

Problem: Results from quantum processing units (QPUs) are skewed by high error rates, making outputs unreliable for precise chemical modeling.

Troubleshooting Steps:

- Employ Dynamic Circuits: If your hardware supports it, use dynamic circuits that incorporate classical operations mid-circuit. A demo using this for a 46-site Ising model showed a 58% reduction in two-qubit gates and up to 25% more accurate results [14].

- Integrate Advanced Error Mitigation: Leverage software packages like the samplomatic package in Qiskit. It allows you to apply techniques like Probabilistic Error Cancellation (PEC) to specific circuit regions, which can decrease the sampling overhead of PEC by 100x [14].

- Utilize Accelerated Classical Decoding: For advanced error correction, use GPU-accelerated tools. Researchers using NVIDIA's CUDA-Q achieved a 50x boost in decoding speed with improved accuracy for quantum error correction codes [28].

Issue 2: Optimizing Circuit Compilation and Execution

Problem: Quantum circuits, especially for complex molecules, become too large or inefficient to run on current hardware.

Troubleshooting Steps:

- Leverage High-Performance SDKs: Use high-performing, open-source SDKs like Qiskit, which benchmarks have shown to be 83x faster at transpiling than some alternatives [14].

- Use Hardware-Specific Compilation: Implement hardware-aware compilation tools. A GPU-accelerated layout selection method called ∆-Motif has demonstrated speedups of up to 600x in quantum compilation tasks by optimizing qubit layout on the physical chip [28].

- Adopt a Hybrid Quantum-Classical Approach: Frame your problem to use the QPU only for specific, quantum-native subroutines (like calculating a part of the Hamiltonian). Use the C++ interface for Qiskit to achieve deeper integration with HPC systems, enabling more efficient hybrid workflows [14].

Frequently Asked Questions (FAQs)

Q1: We keep hearing about "quantum advantage." Has it been achieved for chemistry problems in 2025?

While definitive, universally accepted quantum advantage has not yet been claimed, 2025 has seen significant milestones. Enterprises are building "potentially useful quantum-powered alternatives" to classical methods [14]. For instance, IonQ and Ansys ran a medical device simulation that outperformed classical high-performance computing by 12%, an early documented case of practical advantage in an application [11]. The community is actively tracking these candidates through open initiatives like the Quantum Advantage Tracker [14].

Q2: What is the most significant bottleneck for scaling quantum hardware today?

The primary bottleneck is quantum error correction. While physical qubit counts are rising, these qubits are noisy. Progress hinges on grouping many physical qubits into a single, stable "logical qubit" that is resistant to errors. Breakthroughs in 2025, such as Google's Willow chip demonstrating exponential error reduction and Microsoft's novel codes that reduce error rates 1,000-fold, are directly targeted at this challenge [11] [29].

Q3: How can I reduce the computational time of my quantum chemistry simulations today?

A multi-pronged approach is most effective:

- Use Hybrid Algorithms: Implement variational algorithms like the Variational Quantum Eigensolver (VQE) that split work between quantum and classical processors [14] [30].

- Apply Error Mitigation: As detailed in the troubleshooting guide, use techniques like PEC to extract more accurate results from noisy hardware without the overhead of full error correction [14].

- Leverage GPU Acceleration: For both classical pre- and post-processing and quantum circuit simulations, use accelerated computing. High-fidelity simulations of quantum systems have seen performance boosts of up to 4,000x using tools like NVIDIA's cuQuantum [28].

Experimental Protocol: Demonstrating a Quantum Hardware Workflow

This protocol outlines a generalized methodology for executing and validating a quantum chemistry calculation on contemporary hardware, incorporating error mitigation.

The Scientist's Toolkit: Key Research Reagents & Solutions

This table details essential software and hardware solutions that form the modern toolkit for quantum computational chemistry research.

| Item / Solution | Function / Role | Relevance to Quantum Chemistry |

|---|---|---|

| Qiskit SDK [14] | An open-source quantum software development kit (SDK). | Used to build, optimize, and transpile quantum circuits. Essential for implementing algorithms like VQE for molecular energy calculations. |

| CUDA-Q [28] | A platform for integrating and accelerating quantum workflows with GPUs. | Dramatically speeds up classical components like error correction decoding and quantum circuit simulations, reducing overall research time. |

| samplomatic package [14] | A Qiskit add-on for advanced error mitigation. | Allows researchers to apply techniques like PEC to specific circuit regions, crucial for obtaining accurate, noise-free expectation values from chemistry simulations. |

| Quantum Processing Unit (QPU) | The physical quantum hardware that executes circuits. | Used for the quantum-native part of hybrid algorithms. Access is often via cloud (QaaS) from providers like IBM, Pasqal, and others [11] [27]. |

| High-Performance Computing (HPC) Cluster | Classical computing infrastructure for large-scale numerical tasks. | Runs demanding classical computations, including quantum circuit simulators and the classical optimizer in hybrid variational algorithms [14] [27]. |

A Practical Toolkit: Quantum Algorithms and Deep Learning for Faster Results

This technical support center provides troubleshooting guides and FAQs for researchers using variational quantum algorithms to optimize computational time in quantum chemical calculations.

Frequently Asked Questions (FAQs)

Q1: What are the primary use cases for VQE, QAOA, and Quantum Annealing in chemistry research?

- VQE (Variational Quantum Eigensolver): Primarily used to find the ground-state energy of molecular systems, a central task in quantum chemistry for drug discovery and materials design [31] [32].

- QAOA (Quantum Approximate Optimization Algorithm): Applied to combinatorial optimization problems. In chemistry, it can be mapped to problems like molecular conformation analysis [33] [32].

- Quantum Annealing: Used to find the lowest energy state of a system. It is applied to chemistry problems formulated as Quadratic Unconstrained Binary Optimization (QUBO) problems, such as protein folding and molecular similarity searches [34].

Q2: Which algorithm is best suited for Noisy Intermediate-Scale Quantum (NISQ) hardware? VQE and QAOA are specifically designed as hybrid quantum-classical algorithms for the NISQ era. They use short-depth quantum circuits and leverage classical optimizers to handle noise and limited qubit coherence [32]. Quantum Annealing is also considered a heuristic for NISQ devices [31].

Q3: A common issue is the "barren plateau" phenomenon, where the cost function gradient vanishes. How can I mitigate this? Barren plateaus, where the optimization landscape becomes flat, are an active research area. Current strategies include:

- Using problem-inspired initial parameter guesses instead of random initialization.

- Designing structured, hardware-efficient ansatzes that limit excessive entanglement.

- Implementing layer-wise training protocols to gradually increase circuit complexity [32].

Q4: My results from the quantum processor are noisy and inconsistent. What are the best practices?

- Error Mitigation: Employ techniques like zero-noise extrapolation to estimate the noiseless value from results obtained at different noise levels.

- Resampling: Run the same quantum circuit thousands of times to gather statistical data and identify the most probable (lowest-energy) solution [34].

- Parameter Tuning: Work closely with the classical optimizer to find robust parameters that are less sensitive to hardware noise [32].
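Zero-noise extrapolation can be sketched with a straight-line fit. The example below assumes the measured energy varies roughly linearly with an artificially amplified noise scale, which is only one of several extrapolation models. The scale factors and energies are invented numbers, and `zero_noise_extrapolate` is a hypothetical helper rather than a library function.

```python
def zero_noise_extrapolate(scales, values):
    """Least-squares straight-line fit E(s) = e0 + k*s; returns e0 = E(0)."""
    n = len(scales)
    mean_s = sum(scales) / n
    mean_v = sum(values) / n
    sxx = sum((s - mean_s) ** 2 for s in scales)
    sxv = sum((s - mean_s) * (v - mean_v) for s, v in zip(scales, values))
    slope = sxv / sxx
    return mean_v - slope * mean_s

# Hypothetical energies measured at noise scale factors 1, 2, 3
scales = [1.0, 2.0, 3.0]
noisy = [-1.10, -1.05, -1.00]
estimate = zero_noise_extrapolate(scales, noisy)
print(round(estimate, 6))   # -1.15
```

The extrapolated value is only as good as the assumed noise model, so it is worth checking that the fit residuals are small before trusting the estimate.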

Q5: How do I encode my classical chemistry problem into a quantum algorithm?

- For VQE: The molecular Hamiltonian is mapped to qubit operators using transformations like Jordan-Wigner or Bravyi-Kitaev. The ansatz (parameterized quantum circuit) is then designed to prepare trial wavefunctions [31] [32].

- For QAOA and Annealing: The chemistry problem must be formulated as a QUBO or Ising model. The solution to this model corresponds to the solution of your original problem [34].
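As a small illustration of the Jordan-Wigner mapping mentioned for VQE, the sketch below writes a fermionic creation operator and a number operator as weighted Pauli strings. It assumes one common convention (|1⟩ means the orbital is occupied, and qubit 0 is the leftmost character). The function names are hypothetical, and chemistry packages provide full implementations for complete Hamiltonians.

```python
def jw_creation(j, n):
    """Pauli expansion of a_j^dagger on n qubits (qubit 0 is the leftmost character).
    Convention assumed here: |1> means occupied, so a^dagger = (X - iY) / 2."""
    prefix = "Z" * j                 # parity string on qubits 0..j-1
    suffix = "I" * (n - j - 1)
    return {prefix + "X" + suffix: 0.5, prefix + "Y" + suffix: -0.5j}

def number_operator(j, n):
    """n_j = a_j^dagger a_j = (I - Z_j) / 2 under the same convention."""
    z = "I" * j + "Z" + "I" * (n - j - 1)
    return {"I" * n: 0.5, z: -0.5}

print(jw_creation(2, 4))
print(number_operator(1, 3))
```

The string of Z operators in front of the X and Y terms is what preserves fermionic antisymmetry. Its length grows with the orbital index, which is one reason alternative mappings such as Bravyi-Kitaev are considered.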

Troubleshooting Guides

Issue 1: Poor Convergence in VQE/QAOA Optimization Loop

Problem: The classical optimizer fails to converge to a minimum energy value, or convergence is excessively slow.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Poor initial parameters | Check if the cost function starts in a flat region. | Use a classical heuristic (like Hartree-Fock) to generate informed initial parameters instead of random ones. |

| Hardware noise | Compare results from a simulator vs. real hardware. | Increase the number of measurement shots and employ error mitigation techniques [32]. |

| Inadequate optimizer | Test different classical optimizers (e.g., COBYLA, SPSA). | Use optimizers designed for noisy environments, such as SPSA [32]. |

| Weak ansatz | Verify the ansatz's expressibility. | Switch to a more expressive, problem-inspired ansatz if possible [31]. |
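For readers unfamiliar with SPSA, the following is a minimal pure-Python sketch of the update rule. It estimates the gradient from two cost evaluations per step, regardless of the number of parameters. The gain exponents 0.602 and 0.101 are commonly used defaults. The quadratic `toy_energy` stands in for a measured VQE cost function, and the step sizes are assumptions that would need tuning for a real problem.

```python
import random

def spsa_minimize(f, x0, a=0.2, c=0.1, steps=300, seed=0):
    """Minimize f with SPSA: two evaluations of f per step, whatever the dimension."""
    rng = random.Random(seed)
    x = list(x0)
    for k in range(steps):
        ak = a / (k + 1) ** 0.602            # decaying step size
        ck = c / (k + 1) ** 0.101            # decaying perturbation size
        delta = [rng.choice((-1.0, 1.0)) for _ in x]
        plus = f([xi + ck * d for xi, d in zip(x, delta)])
        minus = f([xi - ck * d for xi, d in zip(x, delta)])
        diff = (plus - minus) / (2.0 * ck)
        x = [xi - ak * diff / d for xi, d in zip(x, delta)]
    return x

def toy_energy(params):
    """Stand-in for a measured cost function (noise-free here)."""
    return (params[0] - 1.0) ** 2 + (params[1] + 0.5) ** 2

start = [0.0, 0.0]
best = spsa_minimize(toy_energy, start)
print(toy_energy(start), toy_energy(best))
```

Because every parameter is perturbed at once, the per-step cost does not grow with the number of circuit parameters, which is the main reason SPSA is attractive when each evaluation requires many shots on hardware.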

Issue 2: Sub-Optimal Results from Quantum Annealing

Problem: The annealer returns a solution that is not the global minimum or the solution quality is poor.

| Possible Cause | Diagnostic Steps | Solution |

|---|---|---|

| Inefficient minor-embedding | Check the chain breaks in the embedded problem. | Use different embedding algorithms or adjust chain strength to ensure qubit chains behave as a single logical qubit [34]. |

| Sub-optimal annealing schedule | Analyze the success probability for different run times. | For some problems, using a reverse annealing schedule, which starts from a known classical state, can improve results [34]. |

| Insufficient sampling | Look at the distribution of returned solutions. | Drastically increase the number of reads/samples (from 1,000 to 10,000 or more) to improve the probability of observing the ground state [34]. |
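The effect of the number of reads can be estimated with elementary probability. If each read independently returns the ground state with probability p (an idealized assumption), the chance of seeing it at least once in N reads is 1 - (1 - p)^N. The helper below, with the hypothetical name `reads_needed`, inverts that expression.

```python
import math

def reads_needed(p_success, confidence=0.99):
    """Smallest N with 1 - (1 - p_success)**N >= confidence."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - p_success))

n_reads = reads_needed(0.001)
print(n_reads, 1 - (1 - 0.001) ** n_reads)
```

Estimating p from a pilot run and plugging it in gives a rough budget for the number of reads before committing to a large sampling campaign.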

Experimental Protocols & Performance Data

Detailed Methodology: Running a VQE Experiment

The following workflow outlines the standard protocol for a VQE calculation, from problem formulation to result analysis.

Algorithm Performance Benchmarking

The table below summarizes key performance characteristics of the core algorithms, based on current research and hardware capabilities.

| Algorithm | Primary Use in Chemistry | Key Hardware Consideration | Reported Performance vs. Classical |

|---|---|---|---|

| VQE | Ground-state energy calculation for molecules [31] [32] | NISQ-friendly; short circuits [32] | Accurate for small molecules; larger systems remain a challenge [35]. |

| QAOA | Combinatorial problems (e.g., molecular conformation) [33] | NISQ-friendly; hybrid approach [32] | Can be faster but with reduced solution quality vs. classical algorithms like NSGA-II [35]. |

| Quantum Annealing | Global optimization for problems like protein folding [34] | Requires specialized annealer; sensitive to embedding [34] | Shows potential speedup on tailored problems; practical advantage on real-world chemistry problems is still under investigation [36] [34]. |

Table Note: Performance is highly dependent on the specific problem instance, hardware used, and implementation details. The field is rapidly evolving.

The Scientist's Toolkit: Research Reagent Solutions

This table details the essential "reagents" or components needed to conduct experiments with these quantum algorithms.

| Tool / Component | Function | Examples / Notes |

|---|---|---|

| Parameterized Quantum Circuit (Ansatz) | Generates trial wavefunctions for VQE or trial states for QAOA. | "Hardware-efficient" (for NISQ) or "problem-inspired" (e.g., UCCSD for chemistry) [32]. |

| Classical Optimizer | Adjusts circuit parameters to minimize the cost function. | COBYLA, SPSA, BFGS. Choice depends on noise tolerance and convergence speed [32]. |

| Qubit Hamiltonian | Encodes the chemistry problem (e.g., molecular energy) into a quantum-mechanical operator. | Generated via Jordan-Wigner or Bravyi-Kitaev transformation of the electronic structure Hamiltonian [31]. |

| Quantum Processor | Executes the quantum circuit or annealing schedule. | Gate-based processors (for VQE/QAOA) from IBM, Rigetti; Quantum annealers from D-Wave [31] [34]. |

| Cost Function | Defines the target of the optimization (the "energy" to minimize). | For VQE, it is the expectation value of the Hamiltonian [32]. |

Troubleshooting Guides and FAQs

This guide addresses common challenges researchers face when implementing the Variational Quantum Eigensolver (VQE) for molecular ground-state energy calculations, framed within research on optimizing computational time.

Optimization and Convergence Issues

Problem: The VQE optimization is stuck in a local minimum or converges very slowly.

- Cause: The energy landscape of molecular Hamiltonians is complex and high-dimensional, leading to many local minima that trap classical optimizers [37]. Standard gradient-based or gradient-free black-box optimizers often struggle with this complexity.

- Solutions:

- Use quantum-aware optimizers: Implement specialized optimizers like ExcitationSolve that leverage the analytical form of the energy landscape for excitation-based ansätze. This optimizer is globally-informed, gradient-free, and hyperparameter-free, and it determines the global optimum for each parameter using the same quantum resources as a single gradient-based update step [37].

- Restrict the parameter search space: For ansätze composed of excitation operators (e.g., UCCSD), the energy with respect to a single parameter θ_j is a second-order Fourier series: f_θ(θ_j) = a₁cos(θ_j) + a₂cos(2θ_j) + b₁sin(θ_j) + b₂sin(2θ_j) + c. Use this known form to reconstruct and minimize the landscape classically with only five energy evaluations per parameter [37].

- Employ adaptive ansätze: Use adaptive algorithms like ADAPT-VQE, which build the ansatz iteratively, adding operators that most reduce the energy at each step, often leading to shallower circuits and faster convergence [37] [38].
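The reconstruction idea behind the second-order Fourier form can be sketched with NumPy. The code below fits the five coefficients from energy samples by least squares (exactly determined with five points, overdetermined with more) and then minimizes the fitted curve on a dense grid. The grid search is a simplification chosen for this sketch and is not the minimization procedure of ExcitationSolve [37]. The coefficient values and function names are hypothetical.

```python
import numpy as np

def design(thetas):
    """Columns: cos(t), cos(2t), sin(t), sin(2t), 1 for coefficients a1, a2, b1, b2, c."""
    t = np.asarray(thetas, dtype=float)
    return np.column_stack([np.cos(t), np.cos(2 * t), np.sin(t), np.sin(2 * t), np.ones_like(t)])

def fit_and_minimize(thetas, energies, grid=4001):
    """Fit the five coefficients (least squares) and minimize on a dense grid."""
    coeffs, *_ = np.linalg.lstsq(design(thetas), np.asarray(energies, dtype=float), rcond=None)
    scan = np.linspace(-np.pi, np.pi, grid)
    values = design(scan) @ coeffs
    best = int(np.argmin(values))
    return coeffs, scan[best], values[best]

# Hypothetical landscape standing in for energies measured on a device.
true = np.array([0.3, -0.2, 0.5, 0.1, -1.0])
samples = 2 * np.pi * np.arange(5) / 5        # five equally spaced shifts
measured = design(samples) @ true

coeffs, theta_min, e_min = fit_and_minimize(samples, measured)
print(np.allclose(coeffs, true), theta_min, e_min)
```

Passing more than five sample points to the same function turns the fit into an overdetermined least-squares problem, which averages out some measurement noise.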

Problem: The optimization is noisy and unstable on real hardware.

- Cause: Readout errors, gate infidelities, and decoherence on NISQ devices corrupt energy evaluations.

- Solutions:

- Leverage robust landscape reconstruction: When using an optimizer like ExcitationSolve, using more than five energy evaluations per parameter (via the least squares method) can improve noise robustness [37].

- Utilize error mitigation techniques: Apply techniques like Zero-Noise Extrapolation (ZNE) to obtain more reliable energy estimates from noisy runs.

Measurement and Resource Overhead

Problem: The number of measurements required to estimate the energy is prohibitively high.

- Cause: The molecular Hamiltonian is a sum of many Pauli terms, each requiring separate measurement. This is a major bottleneck for VQE [38] [39].

- Solutions:

- Use informationally complete measurements (IC-POVMs): Algorithms like AIM-ADAPT-VQE use IC-POVMs to get an unbiased estimate of the quantum state itself. This allows for the subsequent pool selection in adaptive algorithms to be performed classically, drastically reducing the number of quantum circuit executions [39].

- Exploit parallelization: Use high-performance computing (HPC) resources to perform independent circuit measurements in parallel. Virtualize quantum processing units (QPUs) mapped to classical HPC nodes to run many quantum circuit executions simultaneously [40].

- Employ classical boosting: The Classically-Boosted VQE (CB-VQE) method reduces quantum measurements by solving a generalized eigenvalue problem in a subspace spanned by both a classically tractable state (e.g., Hartree-Fock) and a quantum state. This hybrid approach lowers the quantum resource burden [41].
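The generalized eigenvalue problem at the heart of classical boosting can be illustrated with plain linear algebra. The sketch below is a toy under explicit assumptions: both states are stored as dense vectors and the Hamiltonian is a random 8x8 symmetric matrix, whereas in CB-VQE [41] the matrix elements involving the quantum state would be estimated on hardware. The function name `boosted_energy` is hypothetical.

```python
import numpy as np

def boosted_energy(h, states):
    """Lowest E solving H c = E S c in the span of the given (non-orthogonal) states."""
    v = np.column_stack(states)
    h_sub = v.conj().T @ h @ v           # matrix elements <i|H|j>
    s_sub = v.conj().T @ v               # overlaps <i|j>
    l = np.linalg.cholesky(s_sub)        # S = L L^dagger
    l_inv = np.linalg.inv(l)
    return np.linalg.eigvalsh(l_inv @ h_sub @ l_inv.conj().T)[0]

rng = np.random.default_rng(2)
m = rng.normal(size=(8, 8))
h = (m + m.T) / 2                              # toy Hamiltonian
classical = np.eye(8)[0]                       # e.g. a Hartree-Fock-like basis state
quantum = rng.normal(size=8)
quantum /= np.linalg.norm(quantum)             # stand-in for a VQE state

e_c = classical @ h @ classical
e_q = quantum @ h @ quantum
e_b = boosted_energy(h, [classical, quantum])
print(e_c, e_q, e_b)
```

The combined energy can never be higher than that of either state on its own, so a cheap classical state can only help the quantum estimate in this construction.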

Problem: The quantum circuit (ansatz) is too deep to run reliably on available hardware.

- Cause: Fixed ansätze like UCCSD can result in deep circuits that exceed the coherence time of NISQ devices.

- Solutions:

- Switch to adaptive ansätze: ADAPT-VQE typically constructs shorter, more problem-tailored circuits than fixed UCCSD ansätze [38].

- Use more hardware-efficient operator pools: Replace fermionic excitation pools with more compact ones. The Coupled Exchange Operator (CEO) pool, for example, has been shown to reduce CNOT counts and circuit depths significantly [38].

- Optimize fermion-to-qubit mapping: Use advanced mappings like the PPTT (Bonsai algorithm) family, which are optimized for the hardware connectivity graph. This can reduce the number of qubits involved in excitations and decrease the number of two-qubit gates required [39].

Ansatz and Initialization

Problem: How to choose a good initial state and ansatz for an arbitrary molecule?

- Cause: A poor initial state or ansatz can lead to slow convergence or failure to reach the ground state.

- Solutions:

- Start from the Hartree-Fock (HF) state: The HF state is a mean-field approximation of the ground state and is a standard, chemically motivated starting point. It is a product state that is easy to prepare on a quantum computer [41] [42].

- Use physically-motivated ansätze: Ansätze based on fermionic excitation operators (e.g., UCCSD) conserve physical symmetries like particle number, ensuring physically plausible states [37].

- For complex systems, use an adaptive approach: When the relevant excitations are not known in advance, ADAPT-VQE is a natural choice. It builds the ansatz iteratively from a pool of operators (e.g., singles and doubles), appending at each step the operator with the largest energy-gradient magnitude [38].
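As a rough illustration of the starting point described above, this sketch builds the Hartree-Fock occupation vector and enumerates spin-conserving single and double excitations out of it. It assumes an interleaved spin ordering (even index = spin up, odd index = spin down); the helper names are hypothetical and not taken from any library.

```python
from itertools import combinations


def hf_occupation(n_electrons, n_spin_orbitals):
    """Hartree-Fock occupation vector: the lowest n_electrons spin orbitals are filled."""
    return [1] * n_electrons + [0] * (n_spin_orbitals - n_electrons)


def excitation_pool(n_electrons, n_spin_orbitals):
    """Spin-conserving singles and doubles (assumes even = spin up, odd = spin down)."""
    occupied = range(n_electrons)
    virtual = range(n_electrons, n_spin_orbitals)
    singles = [(p, r) for p in occupied for r in virtual if p % 2 == r % 2]
    doubles = [
        (p, q, r, s)
        for p, q in combinations(occupied, 2)
        for r, s in combinations(virtual, 2)
        if (p % 2) + (q % 2) == (r % 2) + (s % 2)
    ]
    return singles, doubles


# H2 in a minimal basis: 2 electrons in 4 spin orbitals.
hf_state = hf_occupation(2, 4)
singles, doubles = excitation_pool(2, 4)
print(hf_state, singles, doubles)
```

For this H₂ case the pool holds two singles and a single double excitation, the one connecting |1100⟩ and |0011⟩.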

Execution and Compilation

Problem: The compiled quantum circuit has a high number of CNOT gates, increasing noise.

- Cause: Native gate decomposition of complex excitation operations can be inefficient.

- Solutions:

- Apply circuit optimization and compilation: Use tools that optimize the quantum circuit at the gate level.

- Use mapping-aware compilation: Techniques like "Treespilation" do not just transpile a given circuit but also iteratively optimize the underlying fermion-to-qubit mapping itself to find a more compact representation of the entire state, greatly reducing the number of two-qubit gates [39].

The following table summarizes the resource reduction achieved by a state-of-the-art adaptive algorithm compared to its original version:

Table 1: Resource Reduction in State-of-the-Art ADAPT-VQE (CEO-ADAPT-VQE*) [38]

| Molecule (Qubits) | CNOT Count Reduction | CNOT Depth Reduction | Measurement Cost Reduction |
|---|---|---|---|
| LiH (12) | 88% | 96% | 99.6% |
| H6 (12) | Up to 88% | Up to 96% | Up to 99.6% |
| BeH2 (14) | Up to 88% | Up to 96% | Up to 99.6% |

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a VQE Experiment in Quantum Chemistry

| Item | Function | Key Examples & Notes |
|---|---|---|
| Molecular Hamiltonian | Encodes the electronic energy of the molecule; the operator whose ground state is sought. | Generated via classical quantum chemistry packages (e.g., PySCF [43]) and mapped to qubits using Jordan-Wigner, Bravyi-Kitaev, or PPTT [39]. |
| Initial Reference State | A simple-to-prepare starting state for the variational circuit. | The Hartree-Fock (HF) state is most common [41] [42]. |
| Variational Ansatz | A parameterized quantum circuit that prepares the trial wavefunction. | UCCSD: Standard, physically-motivated fixed ansatz [43]. ADAPT-VQE: Dynamically constructed ansatz, shallower and more accurate [38]. |
| Optimizer | A classical algorithm that updates the variational parameters to minimize the energy. | Gradient-based: Adam, BFGS [37]. Quantum-aware: ExcitationSolve for excitation-based ansätze [37], Rotosolve for rotation gates [37]. |
| Measurement Strategy | A method for estimating the expectation value of the Hamiltonian. | Term-by-Term: Standard but costly [38]. IC-POVMs: Used in AIM-ADAPT-VQE to reduce measurement overhead [39]. Classical Boosting: CB-VQE uses a classical subspace to reduce quantum measurements [41]. |
| Fermion-to-Qubit Mapping | Translates the fermionic Hamiltonian and operations into the qubit space. | Jordan-Wigner: Standard but can lead to long strings of gates [42]. PPTT (Bonsai): Can be tailored to hardware connectivity for more compact circuits [39]. |

Experimental Protocols

Protocol 1: Running a Basic VQE for H₂ with a Fixed Ansatz

This protocol outlines the steps to compute the ground state energy of an H₂ molecule using a fixed UCC-type ansatz, as demonstrated in PennyLane [42].

- Define the Molecule and Generate Hamiltonian: Specify the molecule's geometry (atom symbols and coordinates) and a basis set (e.g., STO-3G). Use a quantum chemistry library (e.g., PennyLane's `qchem` module) to generate the electronic Hamiltonian in the qubit basis (e.g., via Jordan-Wigner transformation) [42].

- Prepare the Initial State: Initialize the qubit register to the Hartree-Fock state using `qml.BasisState` [42].

- Construct the Ansatz Circuit: Apply a parameterized quantum circuit. For H₂, a single `DoubleExcitation` gate (a Givens rotation) is sufficient to couple the Hartree-Fock state |1100⟩ with the doubly-excited state |0011⟩ [42].

- Define the Cost Function: Create a QNode that returns the expectation value of the molecular Hamiltonian with respect to the ansatz state.

- Optimize the Parameters: Select a classical optimizer (e.g., Stochastic Gradient Descent from `optax`). Iteratively evaluate the cost function and update the parameter until convergence to a minimum energy is reached [42].
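The logic of this protocol can be sketched without any quantum library by working directly in the two-dimensional subspace spanned by |1100⟩ and |0011⟩. This is a toy stand-in for the PennyLane workflow, not a reproduction of it. The matrix elements are illustrative placeholders rather than real H₂ integrals, and the sign convention for the rotation is an assumption.

```python
import math

# Placeholder matrix elements (Hartree) of H in the basis {|1100>, |0011>}.
# These are illustrative numbers, NOT computed from real H2 integrals.
H00, H11, H01 = -1.117, 0.235, 0.181


def energy(theta):
    """<psi|H|psi> for psi(theta) = cos(theta/2)|1100> - sin(theta/2)|0011>."""
    c, s = math.cos(theta / 2.0), math.sin(theta / 2.0)
    return c * c * H00 + s * s * H11 - 2.0 * c * s * H01


def parameter_shift_gradient(theta):
    """Two-term parameter-shift rule; exact here since E(theta) has a single frequency."""
    return 0.5 * (energy(theta + math.pi / 2.0) - energy(theta - math.pi / 2.0))


def run_vqe(theta=0.0, stepsize=0.4, max_steps=500, tol=1e-10):
    """Plain gradient descent starting from the Hartree-Fock state (theta = 0)."""
    for _ in range(max_steps):
        grad = parameter_shift_gradient(theta)
        if abs(grad) < tol:
            break
        theta -= stepsize * grad
    return theta, energy(theta)


theta_opt, e_min = run_vqe()
# Exact lowest eigenvalue of the 2x2 matrix, for comparison.
e_exact = 0.5 * (H00 + H11) - math.sqrt((0.5 * (H00 - H11)) ** 2 + H01 ** 2)
print(f"theta = {theta_opt:.4f}, E_vqe = {e_min:.6f}, E_exact = {e_exact:.6f}")
```

Because a single rotation angle spans every normalized real state in this subspace, the optimized energy coincides with the exact lowest eigenvalue of the 2x2 block.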

Protocol 2: Optimizing a Fixed Ansatz with ExcitationSolve

This protocol details the use of the ExcitationSolve optimizer for a fixed ansatz composed of excitation operators [37].

- Prerequisite: Ensure the ansatz is a product of unitaries `U(θ_j) = exp(-iθ_j G_j)` where the generators `G_j` satisfy `G_j³ = G_j` (this includes single and double excitation operators).

- Iterative Parameter Sweep: For each parameter `θ_j` in the ansatz (sweeping through all `N` parameters):

a. Energy Evaluation: Hold all other parameters fixed. Evaluate the energy at (at least) five different values of `θ_j`.

b. Landscape Reconstruction: Classically, solve for the five coefficients (a₁, a₂, b₁, b₂, c) of the 2nd-order Fourier series that fits these energy points.

c. Global Minimization: Using a classical companion-matrix method, find the global minimum of the reconstructed 1D energy landscape and update `θ_j` to this optimal value [37].

- Check for Convergence: Repeat the full parameter sweep until the energy reduction between sweeps falls below a defined threshold.

The workflow for this optimizer is visualized below.

Figure 1: ExcitationSolve optimization workflow. This gradient-free, quantum-aware optimizer efficiently finds global minima for excitation-based ansätze [37].
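The per-parameter step of this protocol can be sketched as follows. The code reconstructs the five coefficients of a second-order Fourier series from five equally spaced energy evaluations, then locates the minimum. It uses a dense grid search as a simple stand-in for the companion-matrix method, and `toy_energy` is a made-up function standing in for a circuit energy evaluated with all other parameters held fixed.

```python
import math


def fit_second_order_fourier(energy_fn):
    """Recover (a1, a2, b1, b2, c) from five equally spaced energy evaluations."""
    n = 5
    thetas = [2.0 * math.pi * k / n for k in range(n)]
    values = [energy_fn(t) for t in thetas]
    pairs = list(zip(values, thetas))
    # Discrete orthogonality of sines and cosines on five equally spaced points.
    c = sum(values) / n
    a1 = 2.0 / n * sum(v * math.cos(t) for v, t in pairs)
    a2 = 2.0 / n * sum(v * math.cos(2.0 * t) for v, t in pairs)
    b1 = 2.0 / n * sum(v * math.sin(t) for v, t in pairs)
    b2 = 2.0 / n * sum(v * math.sin(2.0 * t) for v, t in pairs)
    return a1, a2, b1, b2, c


def reconstructed(theta, coeffs):
    """Evaluate the fitted 1D energy landscape at theta."""
    a1, a2, b1, b2, c = coeffs
    return (a1 * math.cos(theta) + a2 * math.cos(2.0 * theta)
            + b1 * math.sin(theta) + b2 * math.sin(2.0 * theta) + c)


def global_minimum(coeffs, grid_points=3600):
    """Dense grid search over [-pi, pi); a stand-in for the companion-matrix method."""
    grid = [2.0 * math.pi * k / grid_points - math.pi for k in range(grid_points)]
    best = min(grid, key=lambda t: reconstructed(t, coeffs))
    return best, reconstructed(best, coeffs)


def toy_energy(theta):
    """Hypothetical single-parameter energy with known Fourier coefficients."""
    return (0.3 * math.cos(theta) - 0.2 * math.cos(2.0 * theta)
            + 0.1 * math.sin(theta) + 0.05 * math.sin(2.0 * theta) - 1.0)


coeffs = fit_second_order_fourier(toy_energy)
theta_min, e_min = global_minimum(coeffs)
print(f"theta* = {theta_min:.4f}, E(theta*) = {e_min:.6f}")
```

Only five energy evaluations touch the (here simulated) quantum device; the minimization itself runs entirely on the classically reconstructed curve.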

Protocol 3: Running CEO-ADAPT-VQE* for Resource-Efficient Calculations

This protocol describes a state-of-the-art adaptive algorithm that minimizes quantum resource requirements [38].

- Initialization: Start with a simple reference state, typically the Hartree-Fock state, and an empty ansatz circuit.

- Operator Pool Selection: Use the Coupled Exchange Operator (CEO) pool, which is more hardware-efficient and compact than traditional fermionic pools [38].

- Iterative Ansatz Growth: At each iteration:

a. Gradient Calculation: For all operators in the CEO pool, compute the energy gradient (or an approximation thereof) with respect to adding that operator to the current ansatz.

b. Operator Selection: Append the parameterized unitary corresponding to the operator with the largest gradient magnitude to the ansatz.

c. Parameter Optimization: Optimize all parameters in the newly grown ansatz. Optimizers like `ExcitationSolve` are suitable here.

- Termination: The algorithm stops when the energy gradient falls below a threshold, indicating convergence to the ground state.
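The selection and termination logic of the growth loop can be sketched in a few lines. The gradients below are placeholder numbers for a hypothetical three-operator pool. Applying the threshold to the largest gradient magnitude is one possible convergence criterion and is an assumption here; implementations may use the gradient norm instead.

```python
def select_operator(pool_gradients, threshold=1e-3):
    """Return the operator with the largest |gradient|, or None once below threshold."""
    name, grad = max(pool_gradients.items(), key=lambda item: abs(item[1]))
    if abs(grad) < threshold:
        return None  # converged: no operator would lower the energy appreciably
    return name


# Placeholder gradients (assumption) for a hypothetical pool of three operators.
gradients = {"op_A": 0.012, "op_B": -0.087, "op_C": 0.0004}
ansatz = []
chosen = select_operator(gradients)
if chosen is not None:
    ansatz.append(chosen)  # next step would re-optimize all ansatz parameters
print(ansatz)
```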

Table 3: Comparison of Key VQE Optimizers

| Optimizer | Type | Key Principle | Best For |
|---|---|---|---|
| Gradient Descent / Adam [37] | Gradient-based | Uses first-order gradients to descend the energy landscape. | General-purpose optimization. |
| ExcitationSolve [37] | Gradient-free, Quantum-aware | Reconstructs 1D energy landscape for excitation operators to find global optimum per parameter. | Fixed or adaptive ansätze with fermionic/qubit excitation operators. |
| Rotosolve [37] | Gradient-free, Quantum-aware | Similar to ExcitationSolve, but for gates with self-inverse generators (e.g., Pauli rotations). | Hardware-efficient ansätze with parameterized qubit rotations. |

Advanced Methodologies

Parallelization of Quantum Circuit Simulations

For large-scale problems, the measurement and simulation of many quantum circuits can be parallelized on classical HPC systems to drastically reduce computation time [40].

- Virtualization Model: Model classical compute nodes (CPUs/GPUs) as virtual Quantum Processing Units (vQPUs).

- Task Distribution: Use a message passing interface (MPI) to orchestrate the distribution of independent quantum circuit simulations (e.g., for different parameter shifts or Hamiltonian terms) across the array of vQPUs.

- Execution: Each vQPU runs its assigned circuit simulation(s) using a chosen backend (e.g., a state vector simulator accelerated by the cuQuantum SDK on GPUs).

- Result Aggregation: The classical results from all vQPUs are collected to compute the total energy or gradient, which is then used by the classical optimizer.

Figure 2: Parallel simulation via virtual QPUs. This HPC approach accelerates VQE by running circuit simulations concurrently [40].
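The distribute-and-aggregate pattern can be sketched on a single machine with Python's standard thread pool, used here as a simple stand-in for MPI-based orchestration across nodes. `simulate_term` is a hypothetical placeholder for one independent circuit simulation, and the task values are made up.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def simulate_term(task):
    """Placeholder for one independent circuit simulation: coefficient * <P>."""
    coefficient, theta = task
    return coefficient * math.cos(theta)  # stand-in expectation value


def parallel_energy(tasks, n_workers=4):
    """Distribute independent tasks across workers (the 'vQPUs') and sum the results."""
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        partial_results = list(pool.map(simulate_term, tasks))
    return sum(partial_results)


# Made-up (coefficient, parameter) pairs, one per Hamiltonian term.
tasks = [(0.5, 0.1), (-0.3, 0.7), (0.2, 1.3), (-0.1, 2.0)]
total = parallel_energy(tasks)
serial = sum(simulate_term(t) for t in tasks)
print(f"parallel = {total:.6f}, serial = {serial:.6f}")
```

For CPU-bound simulations in Python, separate processes or MPI ranks would normally replace threads; the aggregation step stays the same.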

Troubleshooting Guide

Common Error Resolution

Q: The optimization is stuck in a local minimum or exhibits slow convergence. What can I do?

- Check Parameter Initialization: Poor initial parameter values are a common cause. Avoid random initialization. For Variational Quantum Eigensolver (VQE) experiments, initializing all parameters to zero has been shown to lead to faster and more stable convergence [7].

- Adjust Hyperparameters: The default ADAM parameters (e.g., `stepsize=0.001`, `beta1=0.9`, `beta2=0.999`, `eps=1e-8`) are a good starting point [44] [45]. If convergence is slow, consider tuning the step size. A smaller step size can improve stability but may slow down learning, while a larger one can speed up initial learning at the risk of instability [45] [46].

- Verify Gradient Calculations: Ensure that the gradient of your objective function is computed correctly. For quantum circuits, use appropriate methods provided by your quantum machine learning library (e.g., parameter-shift rules) [44].

Q: The optimization process is unstable or produces NaN values.

- Inspect the Epsilon (`eps`) Value: The `eps` (or `epsilon`) hyperparameter prevents division by zero. If your gradients or second-moment estimates are very small, a default `eps` value like `1e-8` might be too small, leading to numerical instability. For some applications, like training Inception networks on ImageNet, values of `1.0` or `0.1` have been used. Experiment with increasing `eps` [45].

- Review Learning Rate and Betas: An excessively large learning rate can cause overshooting and instability. Try reducing the learning rate. Also, ensure the `beta1` and `beta2` parameters are set close to their recommended values (0.9 and 0.999, respectively) to ensure stable moment estimates [44] [46].

- Check for Invalid Inputs/Operations: In quantum chemistry workflows, ensure that the molecular data or the output from the quantum circuit (e.g., expectation values) is valid and does not contain invalid numbers that could propagate through the optimization.

Q: My hybrid quantum-classical model is not generalizing well or is hitting a "barren plateau".

- Evaluate Ansatz Choice: The choice of parameterized quantum circuit (ansatz) is critical. Chemically inspired ansätze, such as UCCSD (Unitary Coupled Cluster Singles and Doubles), often yield superior convergence and precision when combined with adaptive optimizers like ADAM [7].

- Leverage Quantum Advantages: Consider incorporating a quantum prior from a model like a Quantum Circuit Born Machine (QCBM). This has been shown to improve the quality and success rate of generated molecular structures in drug discovery applications, as quantum effects like entanglement can help explore complex distributions more efficiently [47].

- Consider Alternative Optimizers: For specific quantum problems, especially those with physically motivated ansätze based on excitation operators (e.g., UCCSD), quantum-aware optimizers like ExcitationSolve may be more suitable. These are globally-informed, gradient-free, and can be more robust against noise on real hardware [37].

Frequently Asked Questions (FAQs)

Q: Why is ADAM a good default choice for optimizing quantum workflows?

ADAM is effective because it combines the advantages of two other optimization methods: Momentum and RMSProp [45] [46].

- It uses the first moment (the mean of gradients) to accelerate the search in consistent directions, similar to momentum.

- It uses the second moment (the uncentered variance of gradients) to adapt the learning rate for each parameter individually. This means parameters with frequent large updates get a smaller effective learning rate, while parameters with infrequent updates get a larger one, leading to more stable and efficient convergence [45] [46]. This is particularly beneficial for the noisy and high-dimensional landscapes often encountered in quantum chemistry and variational quantum algorithms [7].
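The two moment estimates described above can be made concrete with a minimal, library-free sketch of the Adam update for a single scalar parameter. The function name is hypothetical, the toy objective E(θ) = θ² is an assumption chosen for illustration, and the hyperparameter defaults follow the commonly cited values.

```python
import math


def adam_minimize(grad_fn, theta, steps=1, lr=0.001, beta1=0.9, beta2=0.999, eps=1e-8):
    """Adam for one scalar parameter, with bias-corrected moment estimates."""
    m, v = 0.0, 0.0
    for t in range(1, steps + 1):
        g = grad_fn(theta)
        m = beta1 * m + (1.0 - beta1) * g        # first moment: running mean of gradients
        v = beta2 * v + (1.0 - beta2) * g * g    # second moment: running mean of g^2
        m_hat = m / (1.0 - beta1 ** t)           # bias correction for zero initialization
        v_hat = v / (1.0 - beta2 ** t)
        theta -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return theta


def toy_gradient(theta):
    """Gradient of the toy objective E(theta) = theta**2."""
    return 2.0 * theta


one_step = adam_minimize(toy_gradient, 1.0, steps=1)
print(f"theta after one step: {one_step:.6f}")
```

On the first step the bias-corrected ratio reduces to g/|g|, so the parameter moves by roughly `lr` regardless of the gradient's scale. This is the per-parameter normalization the second moment provides.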

Q: What are the default hyperparameters for the ADAM optimizer, and when should I tune them?

The widely used default parameters are [44] [45] [46]:

- Learning rate (`stepsize` or `lr`): 0.001

- beta1: 0.9

- beta2: 0.999

- epsilon (`eps`): 1e-8

You should consider tuning these when:

- You have deep domain knowledge that suggests a different setting.